- The paper demonstrates that AI-assessed transcript clarity robustly predicts TED Talk engagement, explaining an additional 9–10% of variance beyond baseline factors.

- The authors used an AI-based pipeline, with 50 LLM runs per transcript, to quantify clarity and structure; the resulting scores predicted engagement better than classical readability scores.

- The study finds that clarity's effect is consistent across topics and that clarity itself has increased over time with professionalization, underscoring its domain-general impact in digital communication.

Computational Analysis of Linguistic Clarity as a Predictor of Audience Engagement in TED Talks

Introduction

This paper presents a comprehensive computational study of how speech clarity, as assessed by large language models (LLMs), predicts large-scale audience engagement with TED Talks on YouTube (2604.04583). The central hypothesis is that linguistic clarity drives collective audience response, as measured by likes and views, more robustly than speaker, topical, or surface-level textual features. The analysis uses modern LLMs for reliable discourse evaluation at scale and examines both the domain generality of the effect and the longitudinal standardization of clarity within the TED platform.

Methods

The authors implement an AI-based pipeline to quantify the clarity and structure of TED Talk transcripts. For over 1,200 talks, each transcript was independently assessed via 50 LLM runs on two dimensions: clarity of explanation and structural organization. These linguistic metrics were then linked with log-transformed engagement variables (likes and views) and enriched with topical category, scientificness, duration, and Google's global search interest trends as covariates.

Figure 1: Overview of the AI-based transcript evaluation pipeline for TED Talks.

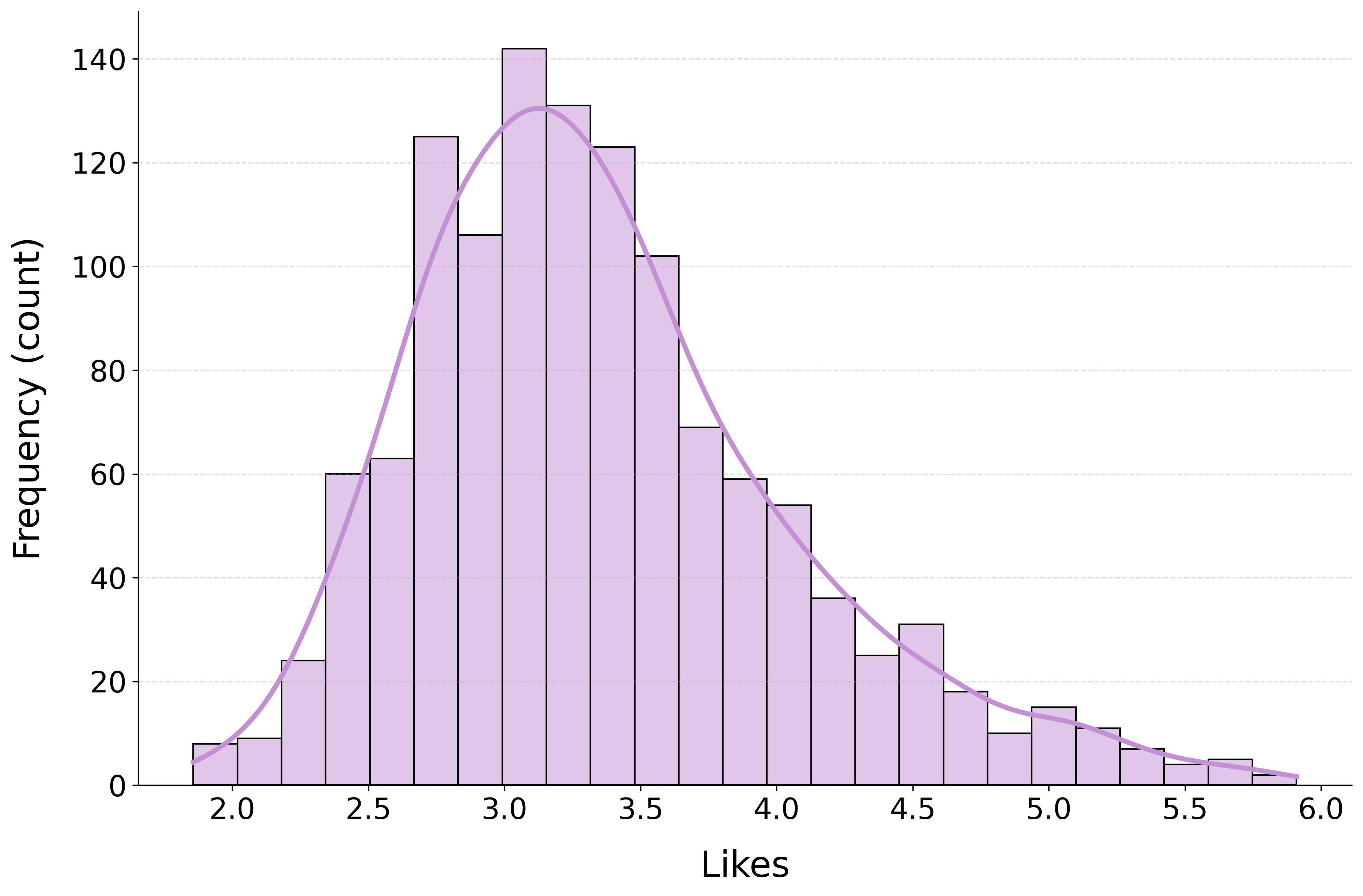

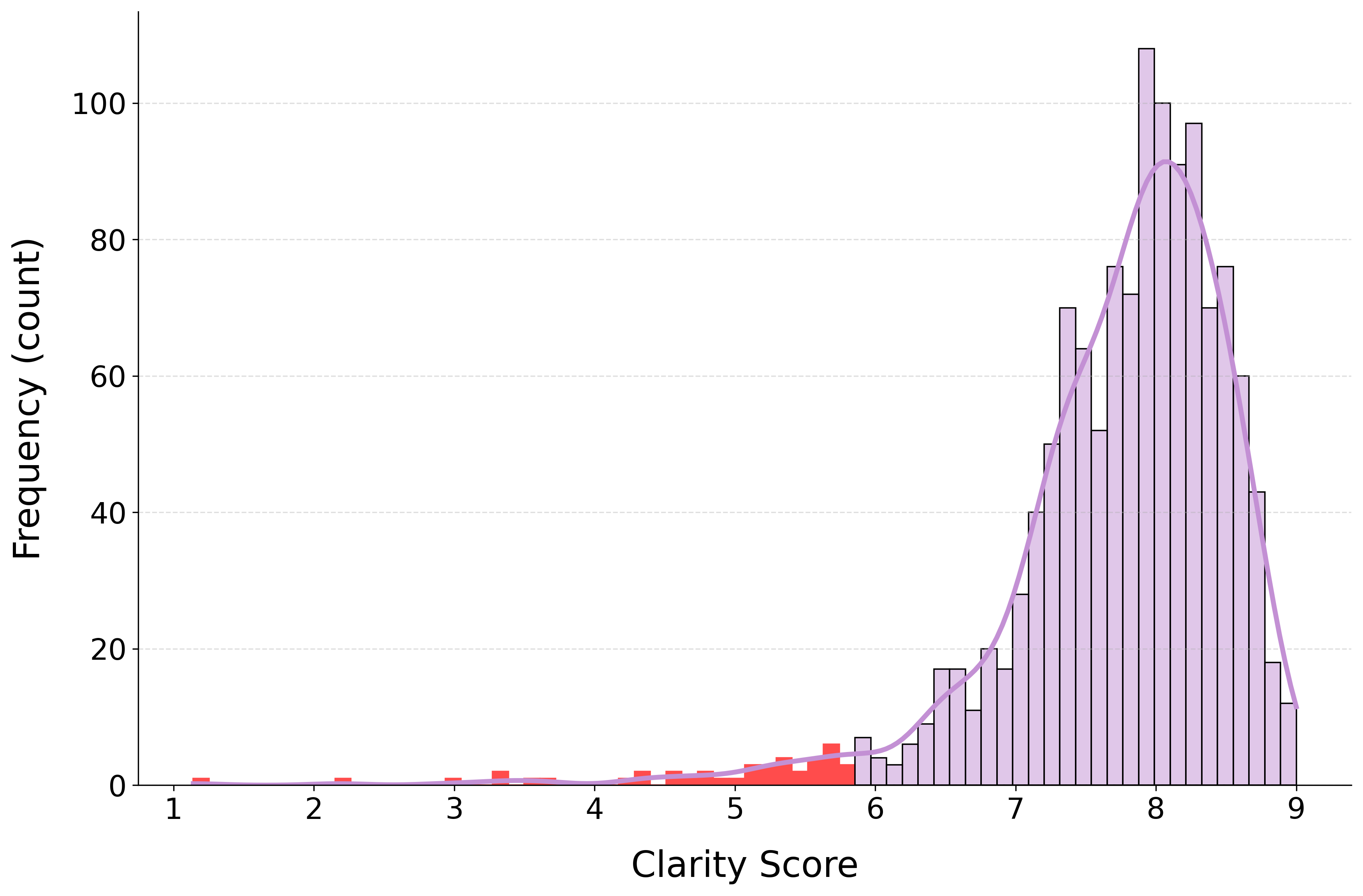

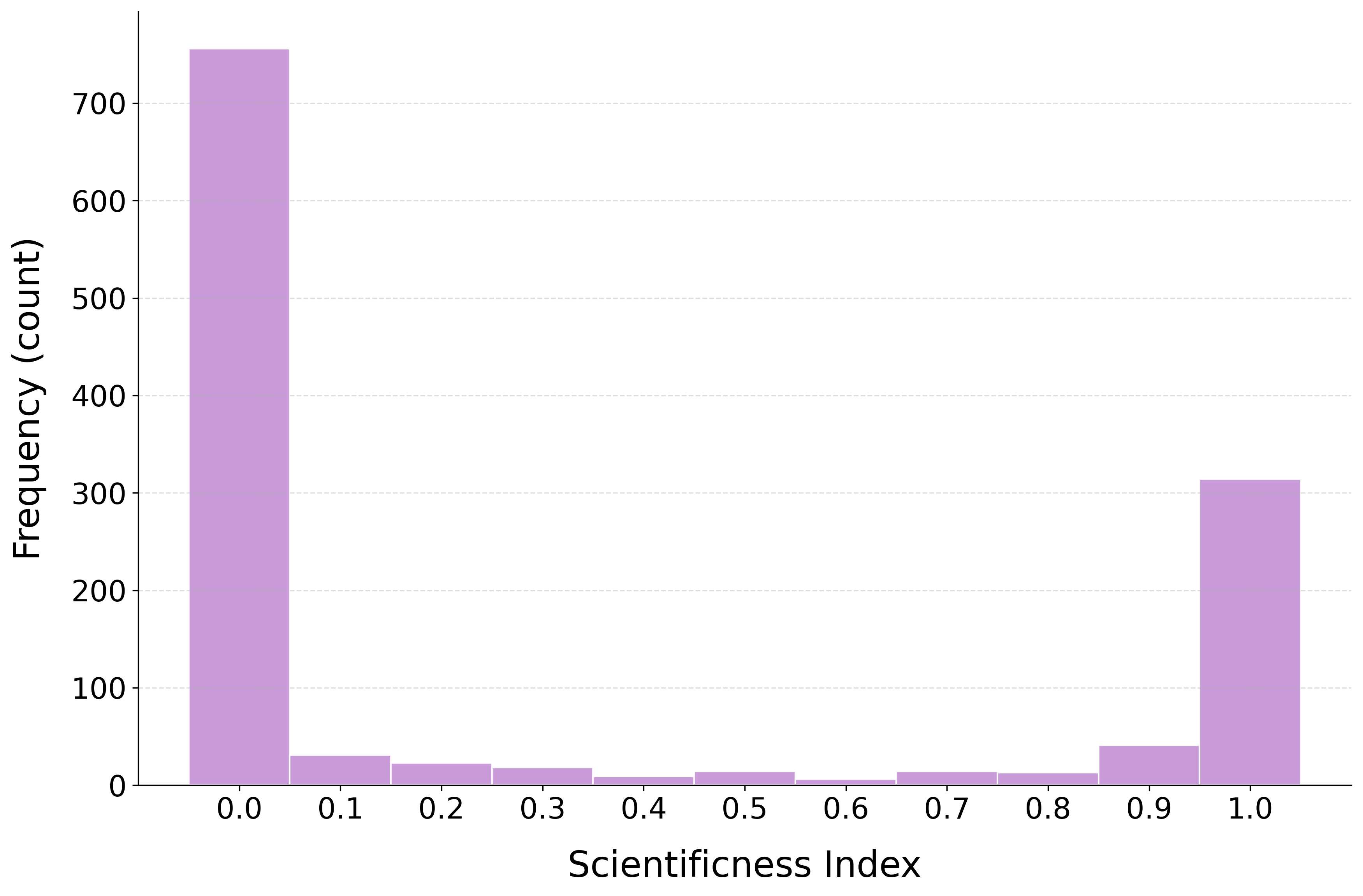

Non-lecture and multimodal-performative talks were excluded by thresholding low-clarity outliers, and the exclusions were corroborated by post-hoc human evaluation. Scientific and topical classification of transcripts also used repeated prompting, which produced highly stable, bimodal category assignments.
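
The repeated-run scoring described in this section can be sketched as follows. This is a minimal illustration, not the authors' implementation: `aggregate_runs`, `fake_scorer`, and the 1 to 10 rating scale are assumptions introduced here, and the stand-in scorer returns seeded random numbers where a real pipeline would call an LLM.

```python
import random
import statistics
from typing import Callable

def aggregate_runs(transcript: str,
                   score_once: Callable[[str], float],
                   n_runs: int = 50) -> dict:
    """Score one transcript n_runs times and summarize the ratings."""
    scores = [score_once(transcript) for _ in range(n_runs)]
    return {
        "mean": statistics.fmean(scores),    # aggregated clarity score
        "stdev": statistics.stdev(scores),   # run-to-run spread
        "n_runs": n_runs,
    }

# Seeded generator so the illustration is reproducible.
_rng = random.Random(0)

def fake_scorer(transcript: str) -> float:
    """Hypothetical stand-in for a single LLM rating.

    The 1-10 scale is an assumption; the source does not state the scale.
    """
    return min(10.0, max(1.0, _rng.gauss(8.0, 0.5)))

summary = aggregate_runs("example transcript text", fake_scorer)
print(summary)
```

Averaging over many runs reduces the influence of any single stochastic LLM response, and the spread across runs gives a rough indication of how stable the rating is for a given transcript.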

Distributional Analysis and Reliability

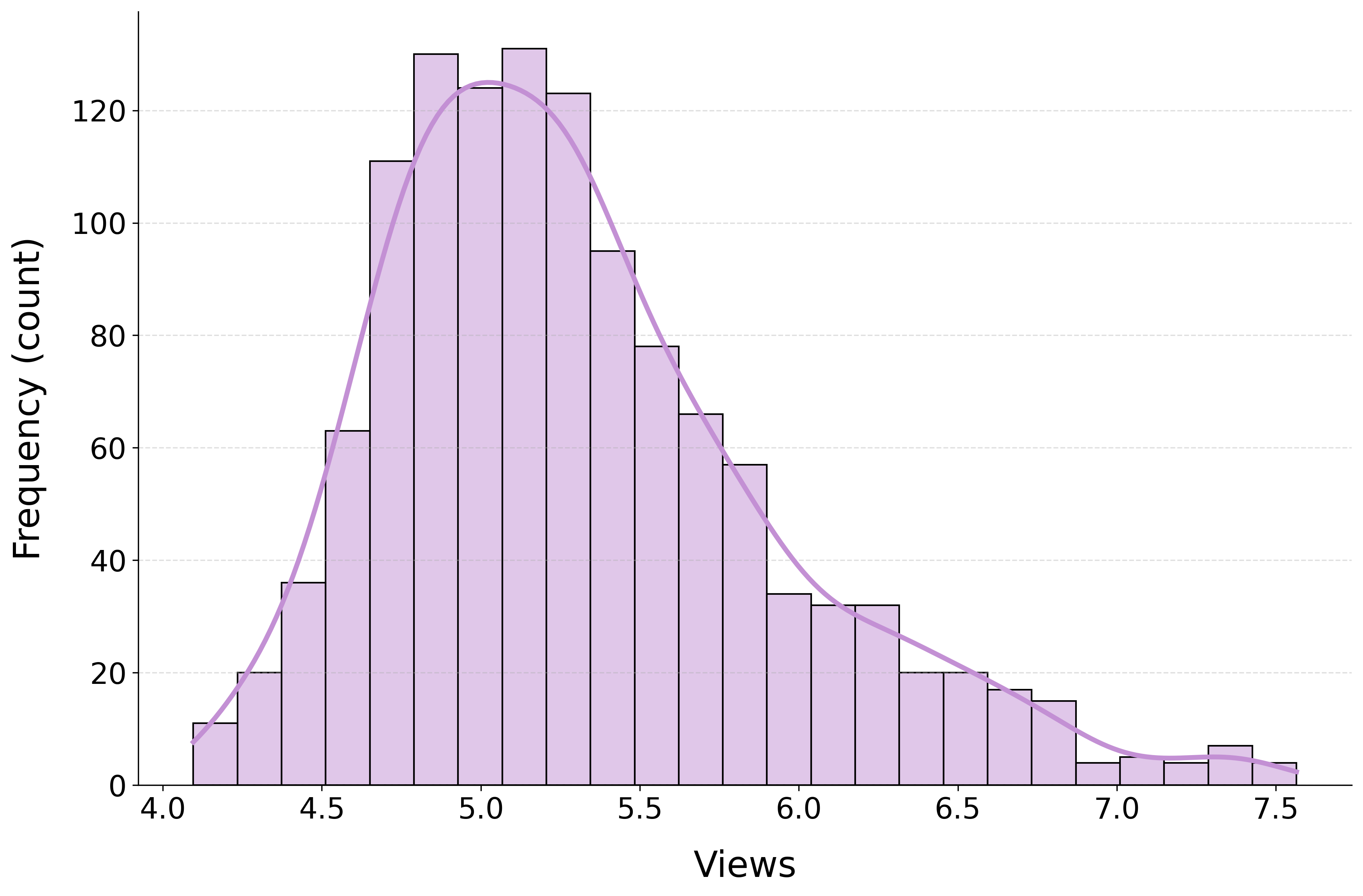

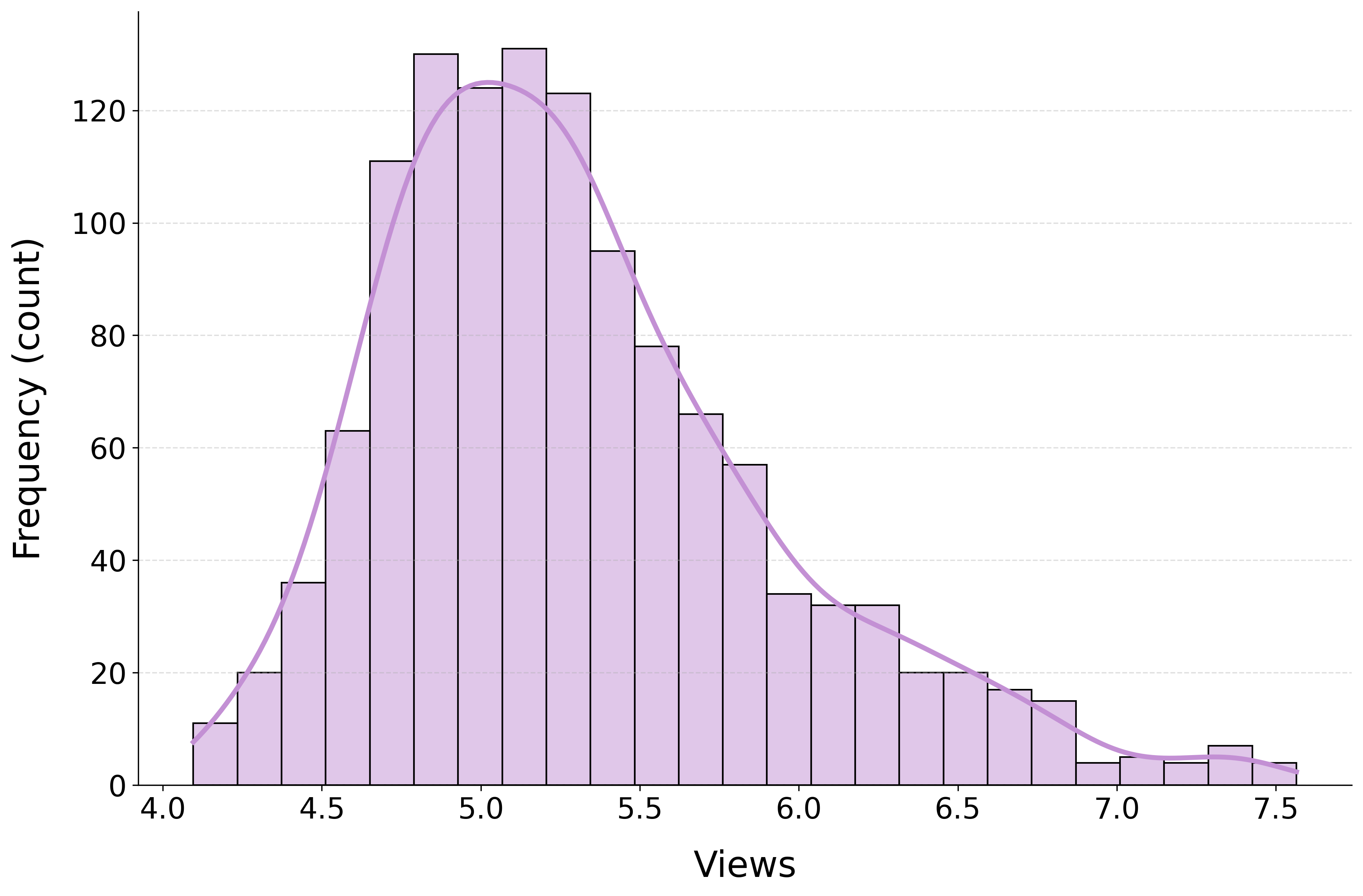

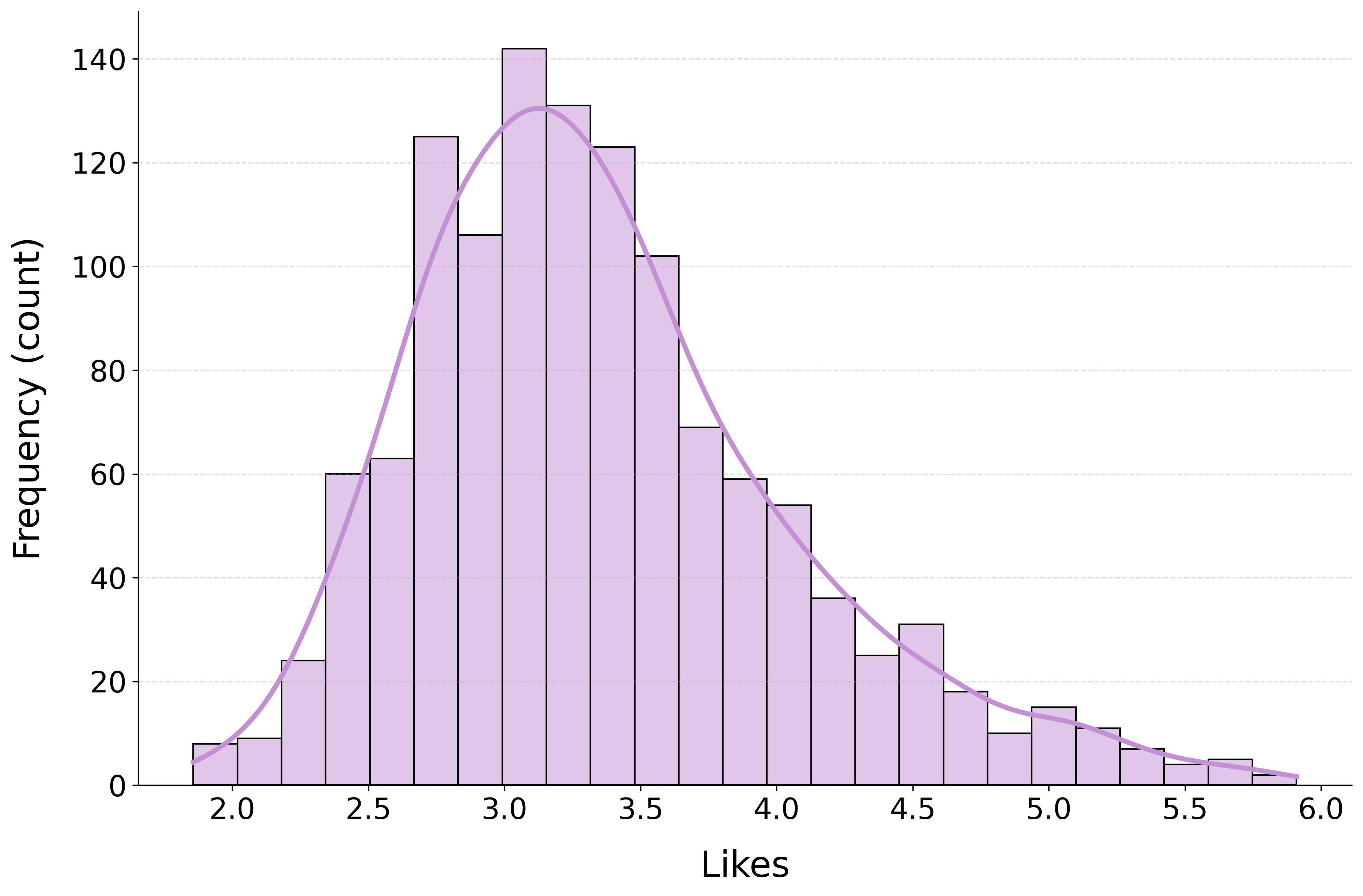

Engagement metrics on YouTube exhibited strong positive skew, necessitating log transformation for statistical modeling.

Figure 2: Histograms of the log-transformed TED Talk engagement metrics.
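
To illustrate why a log transformation helps with positively skewed counts, here is a small sketch on made-up view counts. The numbers are invented for illustration, and the use of `log1p` (natural log of count plus one) is an assumption; the source only states that engagement variables were log-transformed.

```python
import math
import statistics

def log_transform(counts):
    """Natural log of (count + 1), so zero counts stay defined."""
    return [math.log1p(c) for c in counts]

def skewness(values):
    """Population skewness: the mean of cubed z-scores."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return statistics.fmean(((v - mean) / sd) ** 3 for v in values)

# Invented view counts with one viral outlier (not data from the paper).
views = [1_200, 3_500, 8_000, 15_000, 40_000, 120_000, 900_000, 12_000_000]
log_views = log_transform(views)

print("raw skewness:", round(skewness(views), 2))
print("log skewness:", round(skewness(log_views), 2))
```

On data like this, the raw counts are dominated by the single largest value, while the log-transformed values are spread far more evenly, which is what makes them better suited to linear regression.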

AI-derived clarity scores were heavily concentrated in the upper range with a small left tail, reflecting the standardized nature of transcript-based communication on the platform.

Figure 3: Distribution of AI-derived clarity scores across all TED Talks in the dataset (N = 1,280).

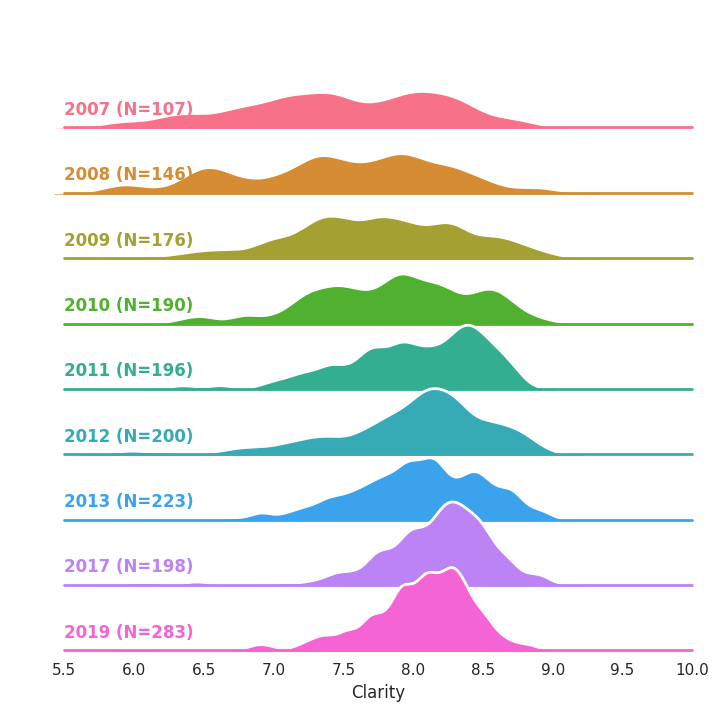

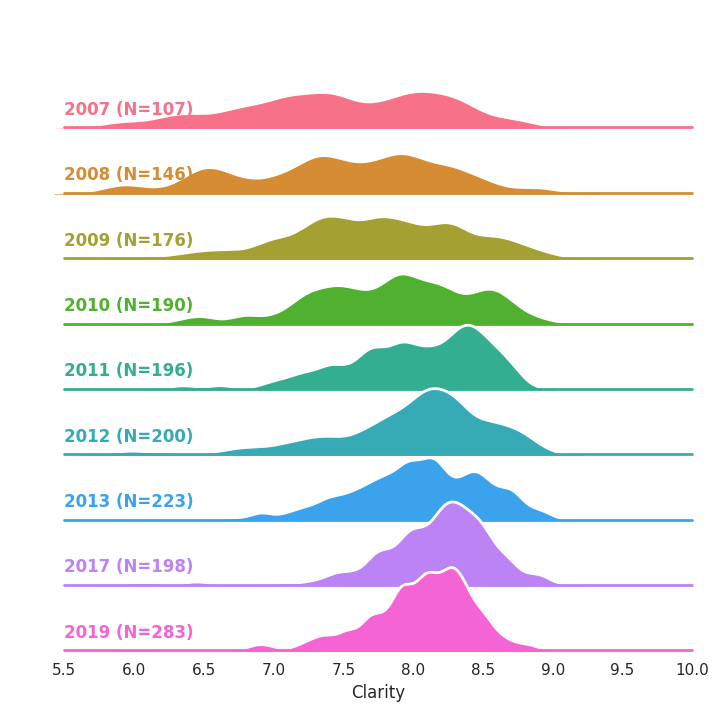

Clarity scores increased over time, with decreased inter-talk variance, evidencing TED’s longitudinal professionalization and genre convergence.

Figure 4: Ridgeline density plots of clarity scores by year; mean clarity increases and variance contracts, indicating progressive standardization within TED.

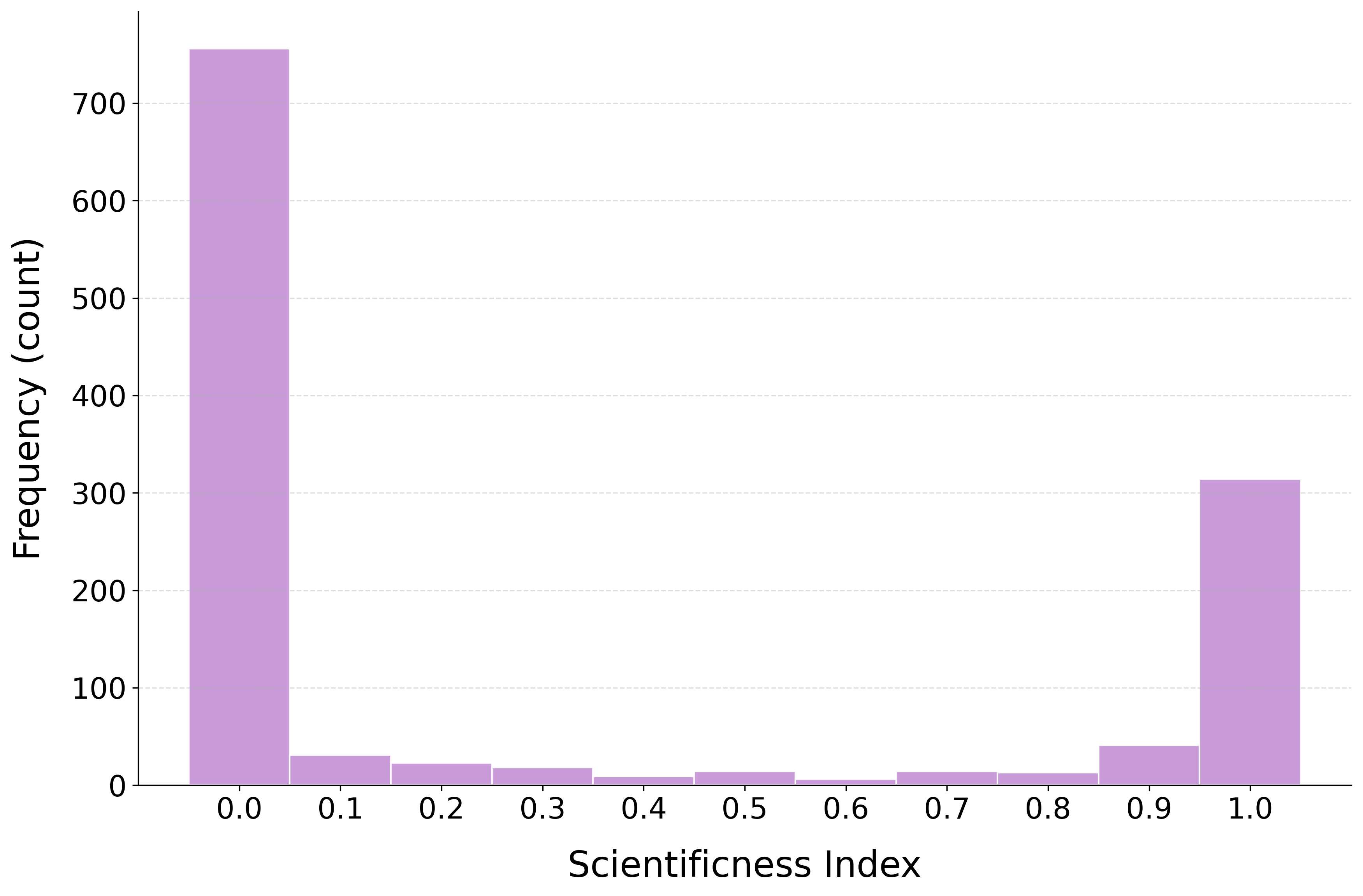

The scientificness classification revealed a bimodal distribution, confirming labeling reliability for science-vs-non-science content.

Figure 5: Most talks are robustly classified as clearly scientific or non-scientific, validating the transcript-based approach.

Main Results

Clarity as a Predictor

The most robust and conceptually novel result is the strength and consistency of transcript-level clarity as the dominant predictor of TED Talk engagement. In hierarchical regressions, clarity produced the largest standardized effect (β = .339 for likes; β = .314 for views), explaining an additional 9–10% of variance over baseline factors such as duration, topic, and scientific status. Total R² reached 29% for likes and 22.5% for views.
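
The idea of "additional variance explained" in a hierarchical regression can be sketched as the difference in R² between a baseline model and a model that adds clarity. The example below uses synthetic data and a single baseline predictor, so its numbers are not the paper's; the helper names `solve` and `r_squared` and all coefficients are assumptions made for illustration.

```python
import random

def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x

def r_squared(predictors, y):
    """R^2 of an OLS fit of y on the predictor columns plus an intercept."""
    rows = [[1.0] + list(vals) for vals in zip(*predictors)]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    beta = solve(xtx, xty)
    fitted = [sum(b * v for b, v in zip(beta, r)) for r in rows]
    y_mean = sum(y) / len(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - y_mean) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot

# Synthetic, standardized data only; these are not the paper's values.
rng = random.Random(42)
n = 500
duration = [rng.gauss(0, 1) for _ in range(n)]
clarity = [rng.gauss(0, 1) for _ in range(n)]
log_likes = [0.3 * d + 0.35 * c + rng.gauss(0, 1)
             for d, c in zip(duration, clarity)]

r2_base = r_squared([duration], log_likes)           # step 1: baseline only
r2_full = r_squared([duration, clarity], log_likes)  # step 2: add clarity
delta_r2 = r2_full - r2_base
print(f"baseline R^2={r2_base:.3f}, full R^2={r2_full:.3f}, delta={delta_r2:.3f}")
```

Because the baseline model is nested inside the full model, the full model's R² can never be lower; the size of the increase is what the 9–10% figure in the text refers to.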

Notably, the clarity effect was invariant across topical categories (e.g., Cosmos, Tech, Society, Mind) and between scientific and non-scientific talks, with negligible category × clarity interactions. The implication is that, for global digital audiences, clarity’s effect is domain-general.

Superiority over Readability Metrics

AI-derived clarity showed superior predictive power relative to classical readability scores such as Flesch Reading Ease, which were only weakly associated with engagement. The clarity metric correlated more strongly with both likes and views than readability did, and it correlated only weakly and negatively with readability itself. This suggests that the clarity metric is largely independent of readability and captures higher-order discourse features rather than mere linguistic simplicity.
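
For context, Flesch Reading Ease depends only on average sentence length and average syllables per word, which helps explain why it cannot capture discourse-level properties. The sketch below applies the standard formula to pre-computed counts; the function name and the example counts are illustrative, and how the paper tokenized words or counted syllables is not stated in the source.

```python
def flesch_reading_ease(n_words: int, n_sentences: int, n_syllables: int) -> float:
    """Standard Flesch Reading Ease score from surface counts.

    Higher scores indicate text that is easier to read.
    """
    words_per_sentence = n_words / n_sentences
    syllables_per_word = n_syllables / n_words
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word

# Illustrative counts: 100 words, 5 sentences, 150 syllables.
score = flesch_reading_ease(n_words=100, n_sentences=5, n_syllables=150)
print(round(score, 3))  # 59.635
```

Two transcripts with identical counts receive the same score regardless of how well their arguments are organized, which is the kind of information an LLM-based clarity rating is intended to add.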

Longitudinal Trends

Longitudinal analysis revealed increased clarity and reduced variance over time at TED, driven by institutional professionalization and speaker coaching. However, the attenuation of clarity–engagement correlations in the standardized late phase suggests a ceiling effect: when clarity is uniformly high, incremental changes exert less behavioral impact.
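
One statistical mechanism that can produce this kind of attenuation is range restriction: if the underlying relationship stays the same but clarity scores cluster in a narrow high band, the observed correlation shrinks. The simulation below is a sketch under that assumption, using synthetic data; it is not the authors' analysis, and the means, spreads, and slope are invented.

```python
import math
import random

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def simulate_period(rng, mean_clarity, sd_clarity, n=400):
    """Synthetic talks; engagement has the same slope on clarity in every period."""
    clarity = [rng.gauss(mean_clarity, sd_clarity) for _ in range(n)]
    engagement = [0.5 * c + rng.gauss(0, 1) for c in clarity]
    return clarity, engagement

rng = random.Random(7)
# Early period: lower mean clarity, wide spread between talks.
r_early = pearson(*simulate_period(rng, mean_clarity=7.0, sd_clarity=1.5))
# Late period: higher mean clarity, narrow spread (standardized talks).
r_late = pearson(*simulate_period(rng, mean_clarity=9.0, sd_clarity=0.3))
print(f"early r={r_early:.2f}, late r={r_late:.2f}")
```

In this simulation the slope is identical in both periods, so the drop in correlation comes entirely from the reduced variance in clarity. A true ceiling effect, in which additional clarity yields diminishing returns, would be a separate mechanism that this sketch does not model.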

Prompt and Model Dependence

Prompt adaptation to the TED genre significantly increased the clarity–engagement correlation relative to generic academic prompts, highlighting the need for genre-specific evaluation frameworks. Cross-model validation (GPT-4o, Gemini, Claude) yielded consistent positive correlations, demonstrating the robustness of LLM-based clarity assessment.

Theoretical and Practical Implications

Cognitive and Communicative Mechanisms

The findings substantiate processing fluency theory in mass communication: higher clarity corresponds with reduced cognitive load, lowering processing effort and enhancing engagement propensity. These results extend processing fluency from experimental and lab contexts to aggregated real-world digital behavior, supporting the centrality of clarity in optimizing audience response at scale.

AI and Communication Evaluation

The use of LLMs as clarity evaluators signals a new paradigm for scalable, holistic text analysis. The demonstrated reliability and external validity (relative to human and behavioral ground-truth) suggest LLM-based feedback systems could be instrumental for improving public science and educational communication. This methodology could be generalized to aid educators, public speakers, and media producers in real-time content optimization.

Genre and Context Sensitivity

The results make clear that communicative quality is not context-independent. LLM prompts and evaluation frameworks must be adapted to the genre’s rhetorical norms (e.g., TED vs. academic lectures), because what constitutes "clarity" is genre-specific. This has implications for automatic speech assessment pipelines and for any attempt to apply a single universal rubric.

Limitations and Future Directions

The current approach is transcript-centric and excludes analysis of multimodal features (gesture, intonation, visuals) present in video. While clarity exerts a robust effect in transcript-dominated modalities, future integration with vision-LLMs could elucidate cross-modal interactions and refine predictors for hybrid communicative environments.

Additionally, the focus on TED—a highly curated, professionalized domain—raises questions about generalization to more heterogeneous platforms (e.g., TikTok, open online debates, MOOCs). Applying this analytic framework beyond TED will help determine if clarity’s predictive premium persists in less standardized, more diverse digital genres.

Conclusion

This paper demonstrates, with strong quantitative evidence, that linguistic clarity, measured using AI-based transcript evaluation, is a primary, domain-general predictor of aggregate audience engagement with TED Talks. The effect holds across topic and content type, persists over time (though it attenuates once clarity becomes uniformly high), and clearly exceeds that of traditional text readability indices. The research establishes transcript-level clarity as a core, scalable variable linking communicative strategy to behavioral outcomes in digital mass communication. It also outlines a methodological blueprint for using LLMs to improve clarity and, consequently, public engagement in educational, scientific, and popular discourse.