- The paper shows that aggressive unlearning achieves near-complete nudity suppression but severely degrades compositional fidelity in text-to-image (T2I) models.

- The methodology employs systematic evaluation on Stable Diffusion 1.4 using benchmarks like T2I-CompBench++ and GenEval to quantify object loss, attribute leakage, and mode collapse.

- The study concludes that unlearning strategies must balance erasure efficacy with semantic and compositional integrity to maintain overall generative utility.

Evaluating Compositional Degradation in Unlearned Text-To-Image Diffusion Models

Introduction

This work analyzes the impact of post-hoc concept unlearning in text-to-image (T2I) diffusion models on compositional generation capabilities, focusing on the trade-off between effective content erasure and the preservation of generative utility. Using a systematic empirical approach, the analysis examines whether state-of-the-art unlearning techniques designed to remove undesirable content (specifically nudity) from models such as Stable Diffusion 1.4 inadvertently degrade the model’s ability to perform compositional synthesis on neutral, semantically distant prompts. The central claim is that prioritizing erasure efficacy in isolation is insufficient and that robust unlearning must also maintain compositional and semantic alignment.

Background

Compositionality in T2I models refers to the synthesis of scenes that accurately encode multiple entities, attributes, and relational constraints from natural language prompts. Models based on diffusion architectures (DDPMs, latent diffusion, or Transformer-based backbones) possess distributed, entangled semantic representations, making them susceptible to collateral damage in the feature space during any parameter manipulation. Unlearning methods—whether based on global parameter updates, localized interventions, or adversarial regularization—are typically evaluated for targeted erasure capability. However, the entanglement of semantics means that even localized or “surgical” unlearning can disrupt compositional logic, including attribute binding, spatial relationships, and multi-object understanding.

Experimental Design

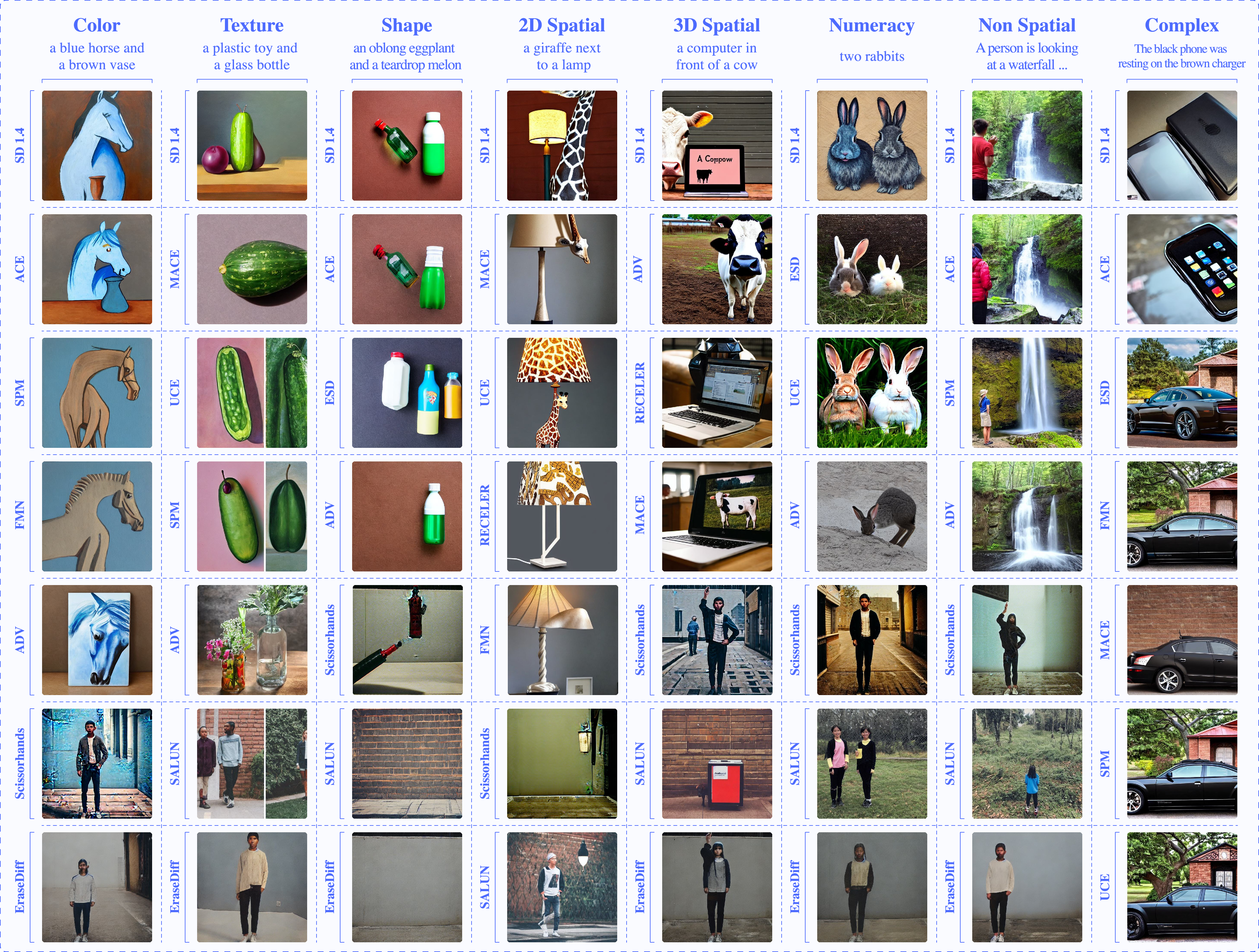

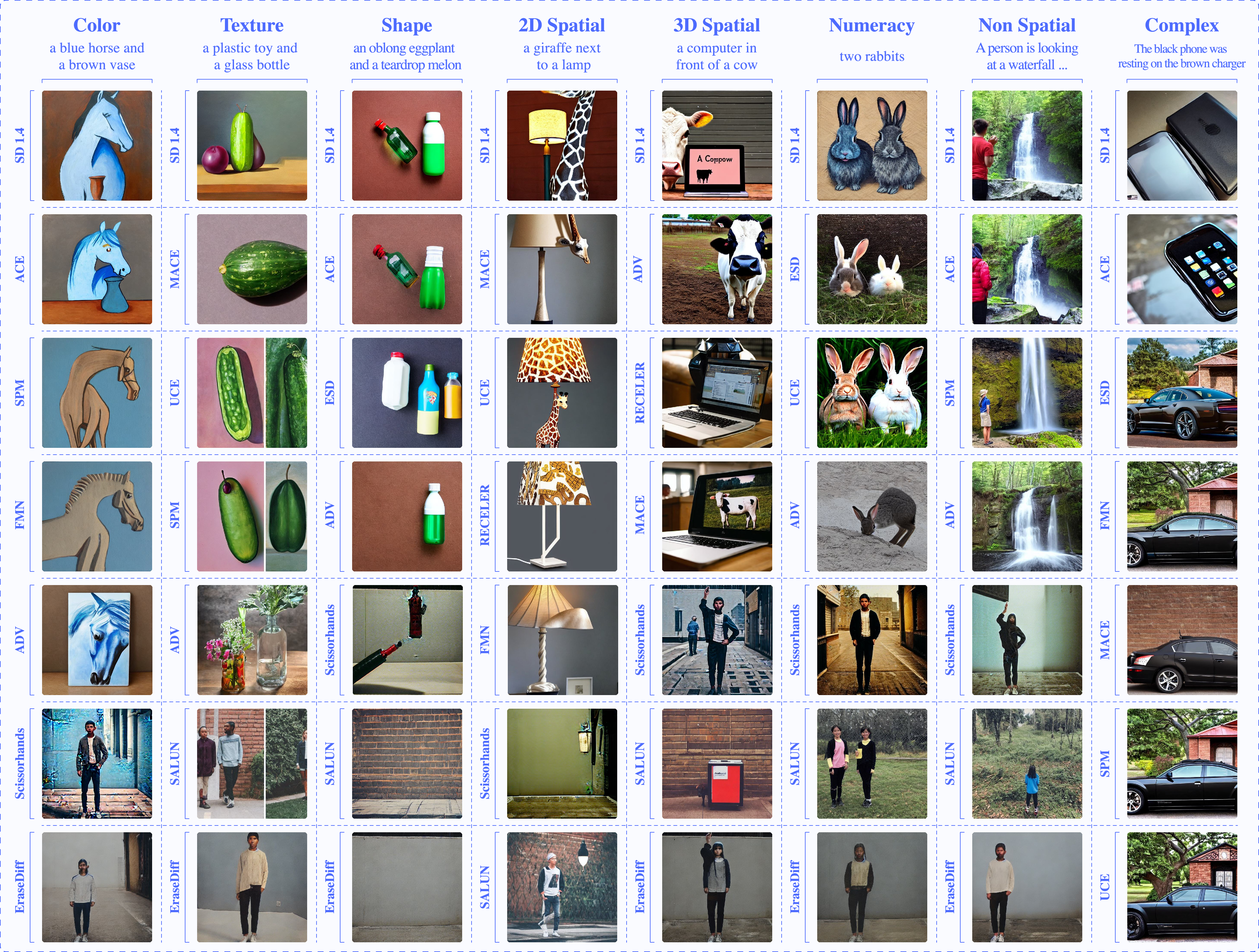

The study evaluated a diverse range of unlearning algorithms (ACE, UCE, ESD, EraseDiff, Salun, SPM, Scissorhands, MACE, ADV, and others) on a fixed Stable Diffusion 1.4 backbone, so that differences in outcome could be attributed to the unlearning strategy alone. Safety (erasure) performance was measured on the nudity-related prompts of the I2P dataset, while compositional utility was assessed with T2I-CompBench++ and GenEval, two standardized benchmarks that probe attribute binding, relational reasoning, spatial composition, numeracy, and multi-object generation. Additional metrics included BVQA and CLIP-score alignment on semantically neutral prompts (i.e., prompt variants with explicit attributes removed) and Fréchet Inception Distance (FID) for overall image quality. The quantitative results were complemented by visual inspection of generated images.
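For reference, FID is the Fréchet distance between Gaussian fits to Inception-v3 features of a reference image set and a generated image set. The definition below is the standard, general one; it does not encode any study-specific settings such as the feature layer, sample count, or reference set:

```latex
\mathrm{FID} = \left\lVert \mu_r - \mu_g \right\rVert_2^2
  + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2 \left( \Sigma_r \Sigma_g \right)^{1/2} \right)
```

Here $\mu_r, \Sigma_r$ and $\mu_g, \Sigma_g$ are the feature means and covariances of the reference and generated sets. Lower is better, so a rise in FID indicates that generated images have drifted away from the reference distribution.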

Results: The Compositionality–Safety Trade-off

A consistent inverse relationship was found between unlearning strength and compositional fidelity. Methods that delivered near-perfect suppression of nudity (EraseDiff, Salun, ADV, Scissorhands) did so at the cost of severe structural degradation, including object loss, attribute leakage, and mode collapse.

Quantitatively, the most aggressive erasure methods inflated FID (up to 73.11) and collapsed GenEval and T2I-CompBench++ scores, in some cases with relative drops of more than 80% in compositional categories such as 2D-Spatial, alongside across-the-board losses in color and texture. In contrast, methods that prioritize semantic preservation (e.g., ACE, SPM, UCE) achieved erasure with substantially less compositional damage, remaining comparable to the baseline on shape and sometimes improving on single-object recognition. However, these methods erased the target concept less completely, indicating that the trade-off is inherent to current approaches.
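A relative drop is the fraction of the baseline (original Stable Diffusion 1.4) score that is lost after unlearning. The sketch below shows that arithmetic plus a hypothetical helper that flags benchmark categories exceeding an 80% drop. The helper names, category keys, and scores are illustrative placeholders, not results from the study:

```python
def relative_drop(baseline: float, unlearned: float) -> float:
    """Fraction of the baseline score lost after unlearning (0.8 means an 80% drop)."""
    if baseline <= 0:
        raise ValueError("baseline score must be positive")
    return (baseline - unlearned) / baseline


def flag_collapsed_categories(baseline: dict[str, float],
                              unlearned: dict[str, float],
                              threshold: float = 0.8) -> list[str]:
    """Return (sorted) categories whose relative drop exceeds `threshold`."""
    return sorted(
        cat for cat, base in baseline.items()
        if cat in unlearned and relative_drop(base, unlearned[cat]) > threshold
    )


if __name__ == "__main__":
    # Hypothetical placeholder scores, NOT values reported in the study.
    base = {"2d_spatial": 0.20, "color": 0.40, "shape": 0.35}
    erased = {"2d_spatial": 0.03, "color": 0.06, "shape": 0.30}
    for cat in base:
        print(cat, round(relative_drop(base[cat], erased[cat]), 3))
    print(flag_collapsed_categories(base, erased))  # prints ['2d_spatial', 'color']
```

Working with ratios rather than absolute score differences keeps categories with very different score ranges comparable.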

Figure 2: Aggressive unlearning methods produce mode collapse or object loss, whereas ACE and SPM retain scene structure and compositional binding.

Qualitative analysis confirmed that the most destructive methods (EraseDiff, Salun, Scissorhands) induced manifold collapse, reducing the model's output space to repetitive or minimalist scenes regardless of the input prompt. This is evidence that over-sanitization amounts to a loss of generative capacity rather than precise concept removal.
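One simple way to quantify prompt-independent collapse, offered as an illustrative diagnostic rather than the procedure used in the study, is to embed images generated from many different prompts (for example with a CLIP image encoder, which is an assumption here) and compute their mean pairwise cosine similarity. A healthy model yields diverse embeddings, while a collapsed one yields near-identical ones. The toy vectors below stand in for real embeddings:

```python
import itertools
import math


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("zero-length embedding")
    return dot / (norm_u * norm_v)


def mean_pairwise_similarity(embeddings: list[list[float]]) -> float:
    """Average cosine similarity over all unordered pairs of embeddings.

    Values near 1.0 mean outputs look alike regardless of prompt (collapse);
    lower values mean the outputs are more diverse.
    """
    pairs = list(itertools.combinations(embeddings, 2))
    if not pairs:
        raise ValueError("need at least two embeddings")
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)


if __name__ == "__main__":
    # Toy stand-ins for image embeddings of outputs from three different prompts.
    healthy = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    collapsed = [[1.0, 0.0, 0.0], [0.99, 0.1, 0.0], [1.0, 0.05, 0.0]]
    print(mean_pairwise_similarity(healthy))    # prints 0.0
    print(mean_pairwise_similarity(collapsed))  # close to 1.0
```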

Implications and Theoretical Significance

This work highlights the limitations of contemporary evaluation protocols emphasizing only safety metrics. The major implication is that practical unlearning in T2I diffusion models requires the formulation of objectives that regularize for semantic and compositional integrity, not just erasure success. Failure to do so undermines trust, as nominally “safe” models become semantically unstable, jeopardizing their utility in real-world creative and design workflows where compositional reasoning is critical.
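One generic way to write such a regularized objective, offered as an illustrative assumption rather than the formulation used in the paper, adds a preservation penalty on compositional prompts to the erasure loss:

```latex
\mathcal{L}(\theta) =
  \mathcal{L}_{\text{erase}}(\theta; c)
  + \lambda \, \mathbb{E}_{p \sim \mathcal{P}_{\text{comp}},\, t,\, x_t}
    \left[ \left\lVert \epsilon_\theta(x_t, t, p) - \epsilon_{\theta_0}(x_t, t, p) \right\rVert_2^2 \right]
```

Here $c$ is the target concept (nudity), $\theta_0$ are the frozen original weights, $\epsilon_\theta$ is the noise predictor, $\mathcal{P}_{\text{comp}}$ is a hypothetical distribution of compositional prompts unrelated to $c$, and $\lambda \ge 0$ trades erasure against preservation. Setting $\lambda = 0$ recovers a pure erasure objective.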

From a theoretical standpoint, the findings underscore the challenges posed by the entangled, distributed semantic subspace of diffusion T2I models. The degradation is clearly non-uniform, with spatial relations and attribute binding the most fragile, which suggests that future methods should pursue concept disentanglement and localization in parameter space, perhaps leveraging continual learning or structured pruning. It may also be necessary to integrate explicit compositional regularization or multi-object constraint alignment during fine-tuning, or to design hybrid architectures that separate safety-critical and generative features at the representational level.

Speculation on Future Directions

Future research should develop compositionality-aware unlearning objectives, possibly drawing on disentangled representation learning or modular neural design, to mitigate cross-concept interference. The empirical evidence in this work indicates that integrated compositional benchmarks are essential for the holistic evaluation of safety mechanisms. Meta-learning or reinforcement learning frameworks could also adaptively optimize the erasure-utility Pareto front rather than relying on static hyperparameter schedules.
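An erasure-utility Pareto front is the set of candidates (methods or hyperparameter settings) that no other candidate beats on both axes at once. A minimal sketch, assuming each candidate is summarized by one erasure score and one compositional utility score, both higher-is-better; the method names and numbers are hypothetical placeholders:

```python
from typing import NamedTuple


class Result(NamedTuple):
    name: str
    erasure: float  # higher = more complete removal of the target concept
    utility: float  # higher = better compositional benchmark score


def dominates(a: Result, b: Result) -> bool:
    """True if `a` is at least as good as `b` on both axes and strictly better on one."""
    return (a.erasure >= b.erasure and a.utility >= b.utility
            and (a.erasure > b.erasure or a.utility > b.utility))


def pareto_front(results: list[Result]) -> list[Result]:
    """Keep only candidates that no other candidate dominates (input order preserved)."""
    return [r for r in results
            if not any(dominates(other, r) for other in results if other is not r)]


if __name__ == "__main__":
    # Hypothetical candidates, NOT measurements from the study.
    candidates = [
        Result("method_a", erasure=0.99, utility=0.20),
        Result("method_b", erasure=0.80, utility=0.60),
        Result("method_c", erasure=0.70, utility=0.55),  # dominated by method_b
        Result("method_d", erasure=0.60, utility=0.65),
    ]
    print([r.name for r in pareto_front(candidates)])  # prints ['method_a', 'method_b', 'method_d']
```

An adaptive optimizer would search for settings that extend this front, whereas a static schedule yields a single point that may lie well inside it.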

Model auditors and practitioners should not rely exclusively on suppression accuracy; they should also incorporate compositional generalization metrics and visual diagnostics into the safety pipeline. Ultimately, the field must move toward unlearning algorithms that guarantee both semantic precision and compositional integrity, thereby achieving utility as well as compliance.
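As a hypothetical illustration of folding compositional metrics into a safety pipeline, the gate below passes a model only if it clears both an erasure threshold and a compositional-retention threshold. The `AuditReport` fields and the 0.95/0.90 thresholds are assumptions chosen for illustration, not values from the study:

```python
from dataclasses import dataclass


@dataclass
class AuditReport:
    erasure_rate: float             # fraction of unsafe prompts whose outputs are judged clean
    compositional_retention: float  # unlearned compositional score divided by baseline score


def passes_audit(report: AuditReport,
                 min_erasure: float = 0.95,
                 min_retention: float = 0.90) -> tuple[bool, list[str]]:
    """Hypothetical gate: pass only if the model is both safe and compositionally intact.

    Returns (passed, reasons), where `reasons` lists every failed check.
    """
    reasons = []
    if report.erasure_rate < min_erasure:
        reasons.append(f"erasure rate {report.erasure_rate:.2f} < {min_erasure:.2f}")
    if report.compositional_retention < min_retention:
        reasons.append(
            f"compositional retention {report.compositional_retention:.2f} < {min_retention:.2f}"
        )
    return (not reasons, reasons)


if __name__ == "__main__":
    # Placeholder numbers: an over-sanitized model that erases well but loses composition.
    ok, why = passes_audit(AuditReport(erasure_rate=0.99, compositional_retention=0.35))
    print(ok, why)
```

Requiring both conditions means an over-sanitized model with near-perfect erasure but collapsed composition fails the audit instead of being reported as safe.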

Conclusion

The systematic evaluation presented demonstrates that concept unlearning in T2I diffusion models is a dual-natured process: aggressive erasure undermines compositional generation, while structural preservation limits the completeness of suppression. The findings underscore the necessity of compositional alignment as a central objective in unlearning research. Safety-centric interventions that ignore this consideration are insufficient, as they risk producing models that are technically compliant but fundamentally broken in their generative syntax and scene understanding. The implications for both the deployment and further evolution of generative AI are substantial, motivating continued research into compositionality-preserving unlearning methodologies (2604.04575).