- The paper presents SIFT, a spectral subspace control framework that orthogonalizes gradients to resolve conflicts between primary and constraint objectives.

- It employs localized interventions via SVD-based subspace orthogonalization to reduce interference in multi-objective model steering tasks.

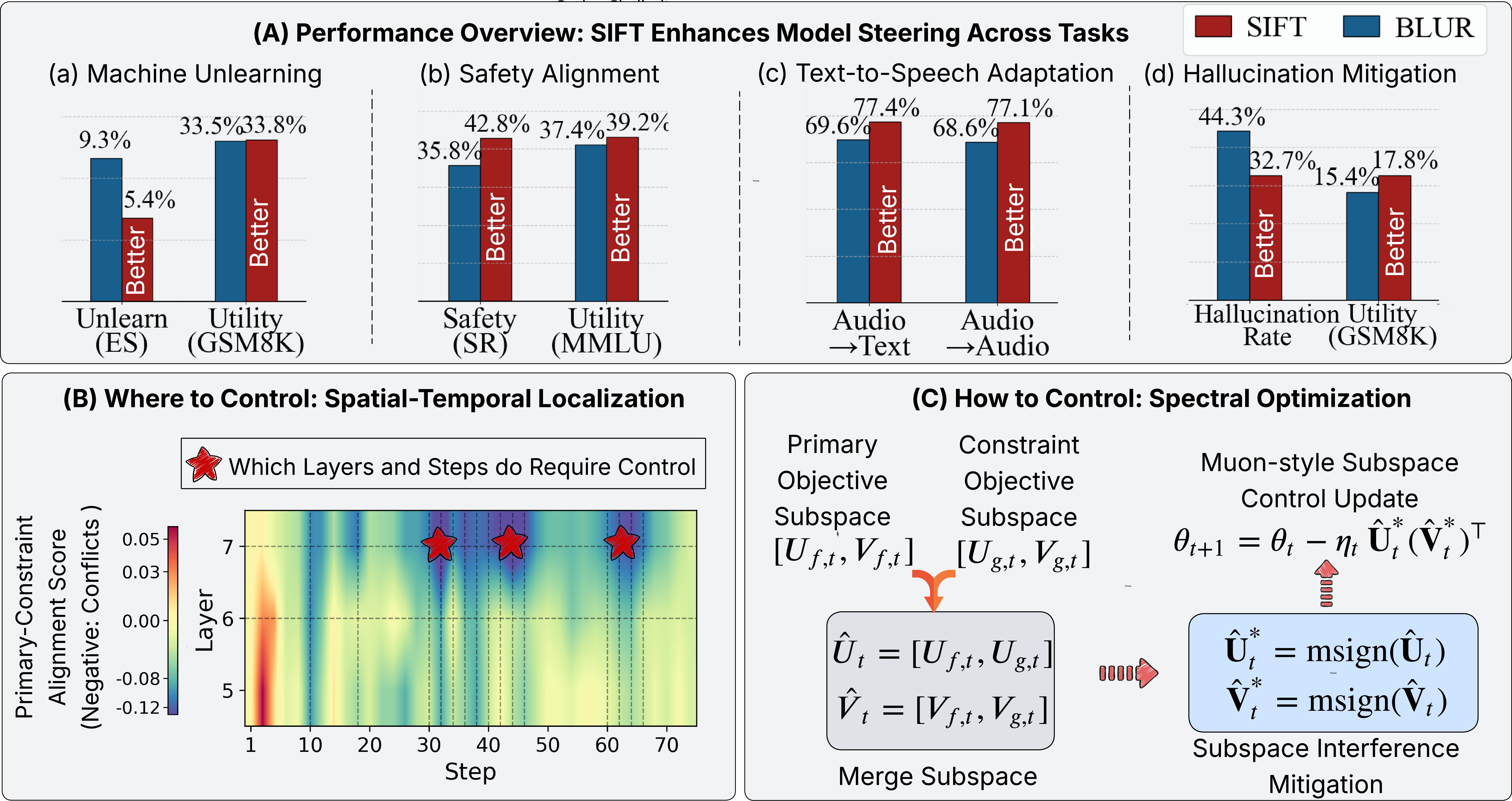

- Empirical results on unlearning, safety alignment, and cross-modal adaptation show SIFT outperforms traditional methods while preserving overall model utility.

Subspace Control and Controllable Spectral Optimization for Constrained Model Steering

Introduction and Motivation

The paper "Subspace Control: Turning Constrained Model Steering into Controllable Spectral Optimization" (2604.04231) addresses optimization pathologies that arise when multiple objectives interfere during post-hoc steering of foundation models. In real deployments, model customization is rarely unconstrained: requirements such as safety, privacy, unlearning, and cross-modal adaptation must be balanced against general utility and other desirable properties. Steering therefore becomes a constrained optimization problem in which the primary and constraint objectives often conflict severely.

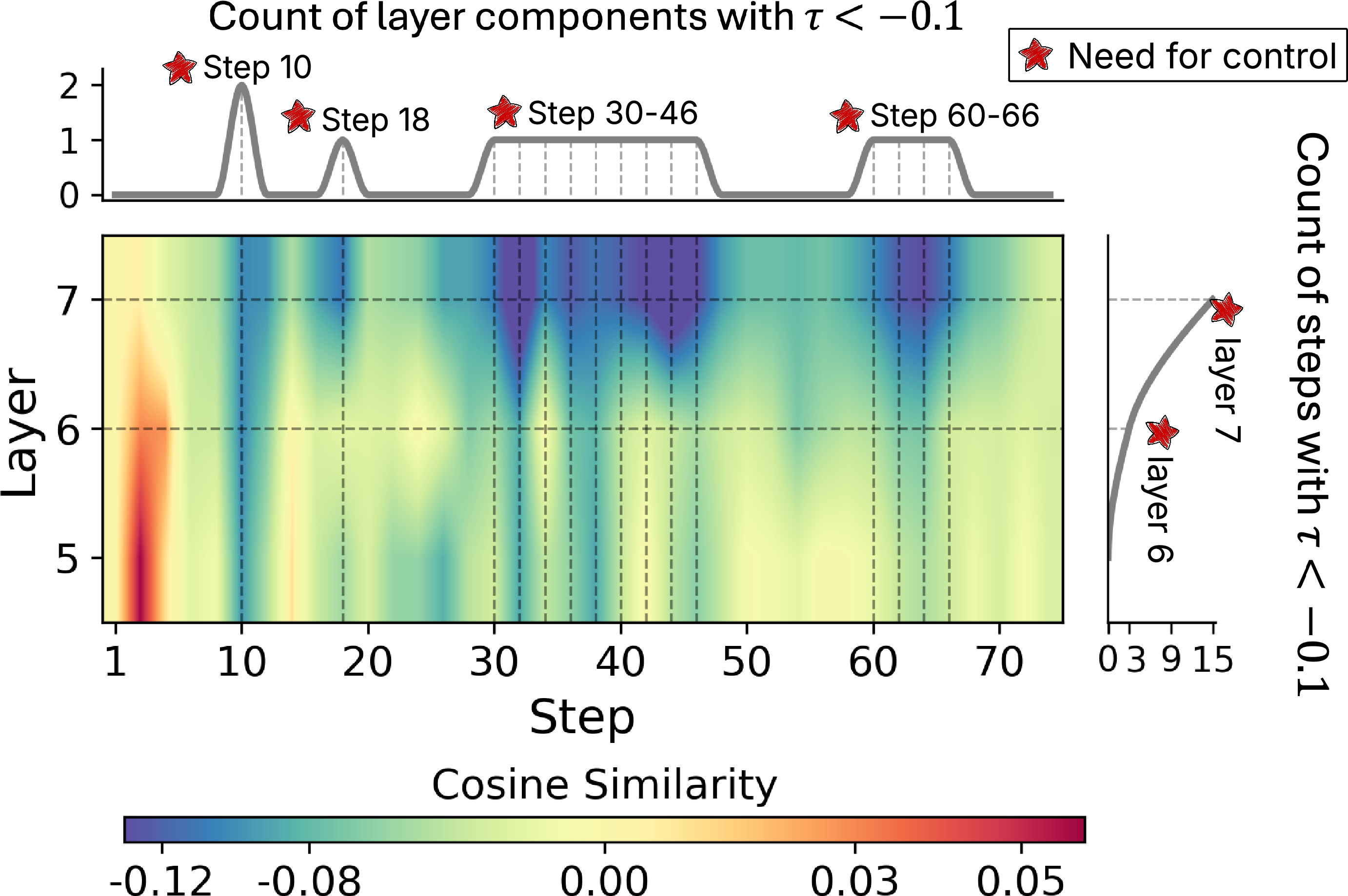

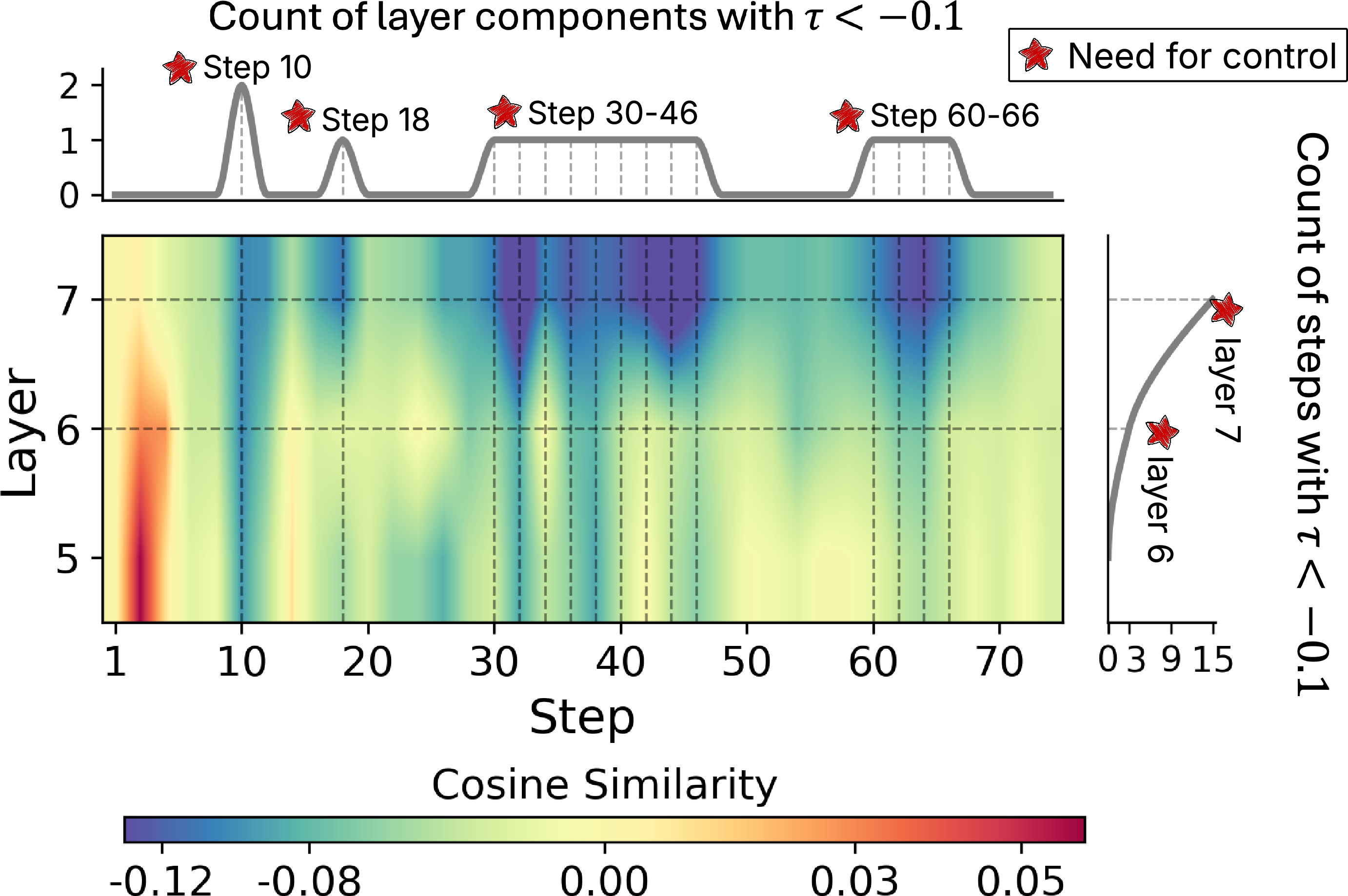

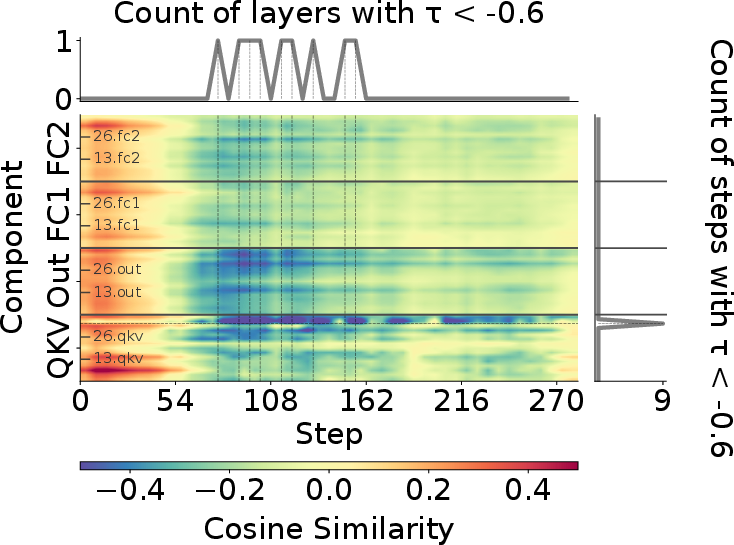

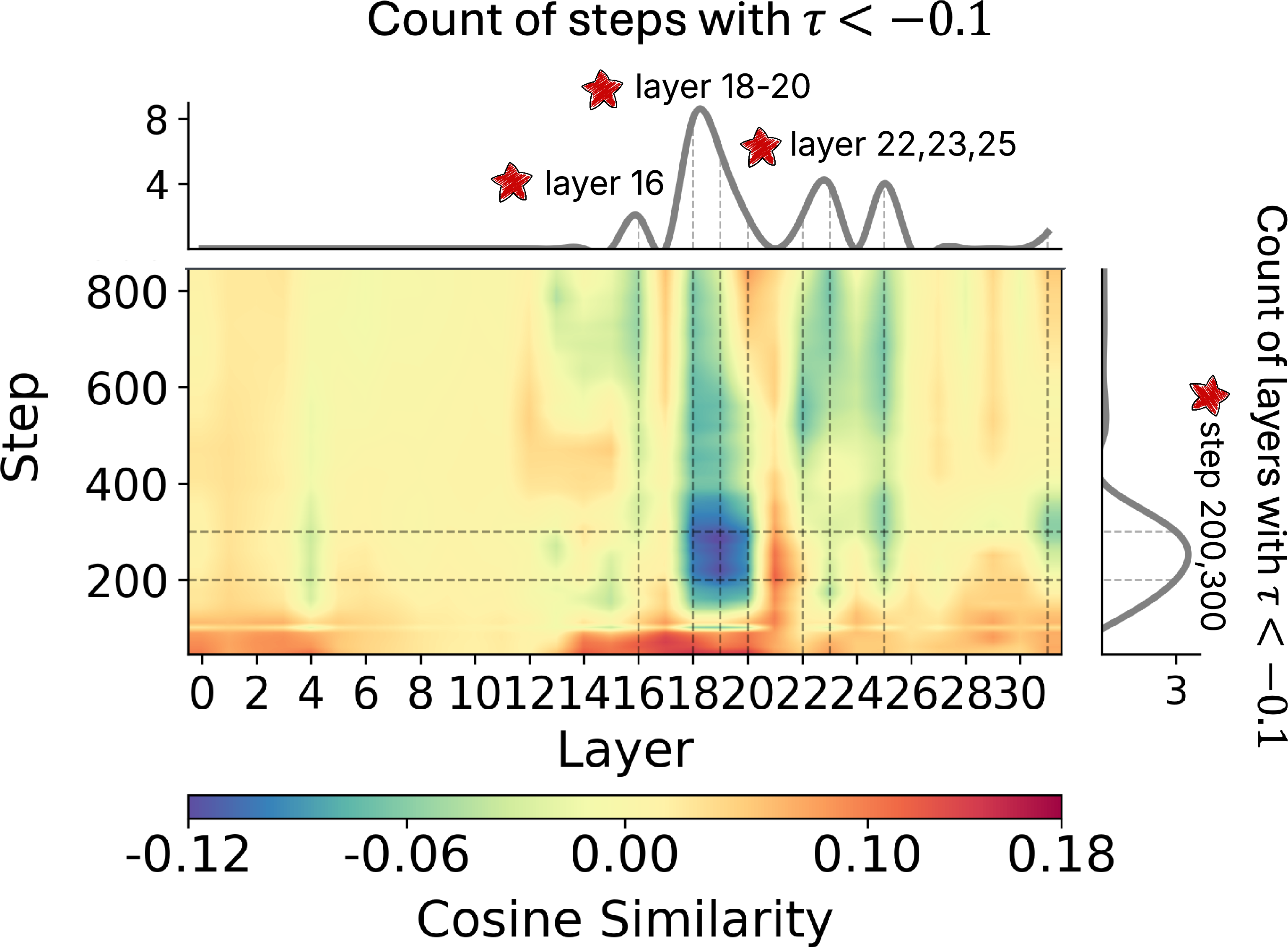

Diagnosis of the optimization process reveals that the conflict between objectives is neither uniform nor global but highly localized across both the spatial (layer-wise/module-wise) and temporal (stepwise) dimensions of training. Empirical gradient analyses indicate persistent gradient misalignment (negative cosine similarity) confined to specific model blocks and training steps, motivating the need for precise, selective intervention rather than monolithic control strategies.
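The diagnostic described above can be illustrated with a minimal sketch. It assumes gradients are stored per block in dictionaries keyed by hypothetical block names, and it uses a threshold of 0 purely for illustration; none of these names or values come from the paper.

```python
import numpy as np

def block_cosine(g_primary, g_constraint, eps=1e-12):
    """Cosine similarity between two gradient blocks, flattened to vectors."""
    a = np.ravel(g_primary)
    b = np.ravel(g_constraint)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + eps))

def scan_conflicts(grads_primary, grads_constraint, threshold=0.0):
    """Names of blocks whose two gradients have cosine similarity below threshold."""
    return [name for name in grads_primary
            if block_cosine(grads_primary[name], grads_constraint[name]) < threshold]

rng = np.random.default_rng(0)
g = rng.standard_normal((4, 4))
# Hypothetical block names: one block with opposed gradients, one with aligned gradients.
grads_primary = {"layer0.attn": g, "layer1.mlp": g}
grads_constraint = {"layer0.attn": -g, "layer1.mlp": 2.0 * g}
conflicts = scan_conflicts(grads_primary, grads_constraint)
print(conflicts)  # ['layer0.attn']
```

Running the scan at every step and for every block yields the layer-by-step misalignment map that the localization argument relies on.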

Spectral Subspace Interference: Theory and Motivation

The foundational insight of the paper is the identification of "spectral interference" as the structural root cause of objective conflicts in constrained model steering. From the perspective of task vector arithmetic and model merging, combining parameter updates for divergent tasks (primary versus constraint) in parameter space typically leads to interference due to non-orthogonality of their respective spectral subspaces. A direct SVD-based merging yields non-orthogonal bases, causing descent directions to destructively interact—a phenomenon previously observed in the context of multi-task learning and model merging.

The authors formalize mitigation of this interference as a subspace orthogonalization problem. Specifically, via a whitening/Procrustes step, the expanded task subspace can be transformed to an orthonormal basis, ensuring that updates in the combined subspace respect mutual independence of constituent tasks.
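One standard way to write such an orthogonalization step is given below, as an assumption about its general form rather than the paper's exact notation. Let $B \in \mathbb{R}^{m \times r}$ (a hypothetical symbol) stack the basis vectors of the combined task subspace as columns, with full column rank and thin SVD $B = U \Sigma V^\top$. Whitening $B$ then gives the closest matrix with orthonormal columns, which is also the orthogonal Procrustes solution:

$$
B_\perp \;=\; B\,(B^\top B)^{-1/2} \;=\; U V^\top \;=\; \arg\min_{Q^\top Q = I_r} \lVert Q - B \rVert_F,
\qquad B_\perp^\top B_\perp = I_r .
$$

The middle equality follows from $(B^\top B)^{-1/2} = V \Sigma^{-1} V^\top$, so that $U \Sigma V^\top V \Sigma^{-1} V^\top = U V^\top$.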

This insight is tightly linked to recent developments in spectral optimizers, notably Muon, which leverage the matrix sign function (msign) to orthogonalize update directions. By drawing this explicit connection between "one-shot" merging solutions and iterative spectral descent with gradient orthogonalization, the authors place the challenge of constraint-aware steering within the algorithmic reach of spectral control.
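To make the msign operation concrete, here is a small sketch that compares an SVD-based reference with the classical cubic Newton-Schulz iteration. The cubic variant and the step count are choices made for this illustration; Muon implementations commonly use a tuned higher-order polynomial with only a few steps, and the paper's exact routine is not reproduced here.

```python
import numpy as np

def msign_svd(a):
    """Reference matrix sign: replace every singular value of `a` with 1."""
    u, _, vt = np.linalg.svd(a, full_matrices=False)
    return u @ vt

def msign_newton_schulz(a, steps=40):
    """Approximate msign(a) with the classical cubic Newton-Schulz iteration."""
    # Frobenius scaling puts all singular values in (0, 1], inside the convergence region.
    x = a / (np.linalg.norm(a) + 1e-12)
    for _ in range(steps):
        # Each singular value s is mapped to 1.5*s - 0.5*s**3, which drives it toward 1.
        x = 1.5 * x - 0.5 * x @ x.T @ x
    return x

rng = np.random.default_rng(0)
a = rng.standard_normal((6, 4))
q = msign_newton_schulz(a)
max_err = np.max(np.abs(q - msign_svd(a)))       # agreement with the SVD reference
gram_err = np.max(np.abs(q.T @ q - np.eye(4)))   # columns of q are orthonormal
```

The iteration needs only matrix multiplications, which is why it is attractive on accelerators compared with a full SVD.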

The SIFT Framework: Localized Interference-Free Spectral Training

Building on this subspace control formalism, the authors introduce SIFT (Spectral Interference-Free Training), a localized spectral update method for constraint-aware steering.

The core SIFT pipeline consists of:

- Gradient Alignment Scanning: For each parameter block at each optimization step, the cosine similarity between the gradients from the primary and constraint objectives is computed. Blocks and steps with strong negative alignment (below a fixed threshold ϵ) are identified as targets for corrective intervention.

- Momentum Subspace Construction: For identified blocks/steps, the top-K spectral components of the momentum matrices for each objective are extracted (via SVD) and assembled into an expanded momentum subspace. In practice the momentum matrices are strongly low-rank, so K≪D suffices (with D the native parameter dimension).

- Spectral Orthogonalization and Update: Using the msign function (computed efficiently via Newton–Schulz iterations), the concatenated objective subspaces are orthogonalized to produce interference-free bases. The update direction is then reconstructed via these orthogonalized components, replacing the naive momentum update in the intercepted block at the flagged step.

- Localization: All other blocks/timesteps are updated using the standard spectral optimizer (Muon), so the extra cost of SVD and orthogonalization is incurred only for flagged blocks and steps.
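The pipeline above can be sketched for a single parameter block as follows. This is an illustration under stated assumptions, not the authors' implementation: the function names are hypothetical, msign is computed by SVD for brevity, the flagged path recombines the orthogonalized bases with unit singular values, and the default path applies msign to the summed momentum. The source does not specify the reconstruction formula or how the two momenta are combined.

```python
import numpy as np

def msign(a):
    """Matrix sign via SVD (a Newton-Schulz routine would replace this in practice)."""
    u, _, vt = np.linalg.svd(a, full_matrices=False)
    return u @ vt

def top_k_factors(momentum, k):
    """Top-k left and right singular vectors of a momentum matrix."""
    u, _, vt = np.linalg.svd(momentum, full_matrices=False)
    return u[:, :k], vt[:k].T

def localized_direction(m_primary, m_constraint, k, flagged):
    """Update direction for one block; `flagged` comes from the alignment scan."""
    if not flagged:
        # Assumption: the default path is a Muon-style msign of the summed momentum.
        return msign(m_primary + m_constraint)
    u1, v1 = top_k_factors(m_primary, k)
    u2, v2 = top_k_factors(m_constraint, k)
    u = np.concatenate([u1, u2], axis=1)  # expanded left subspace, shape (m, 2k)
    v = np.concatenate([v1, v2], axis=1)  # expanded right subspace, shape (n, 2k)
    # Assumption: orthogonalize both bases, then recombine with unit singular values.
    return msign(u) @ msign(v).T

rng = np.random.default_rng(0)
m_p = rng.standard_normal((8, 6))
m_c = rng.standard_normal((8, 6))
d = localized_direction(m_p, m_c, k=2, flagged=True)
sv = np.linalg.svd(d, compute_uv=False)  # 2k singular values near 1, the rest near 0
```

With k=2 the flagged update has rank 4 and weights every retained direction equally, so neither objective's subspace dominates the step.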

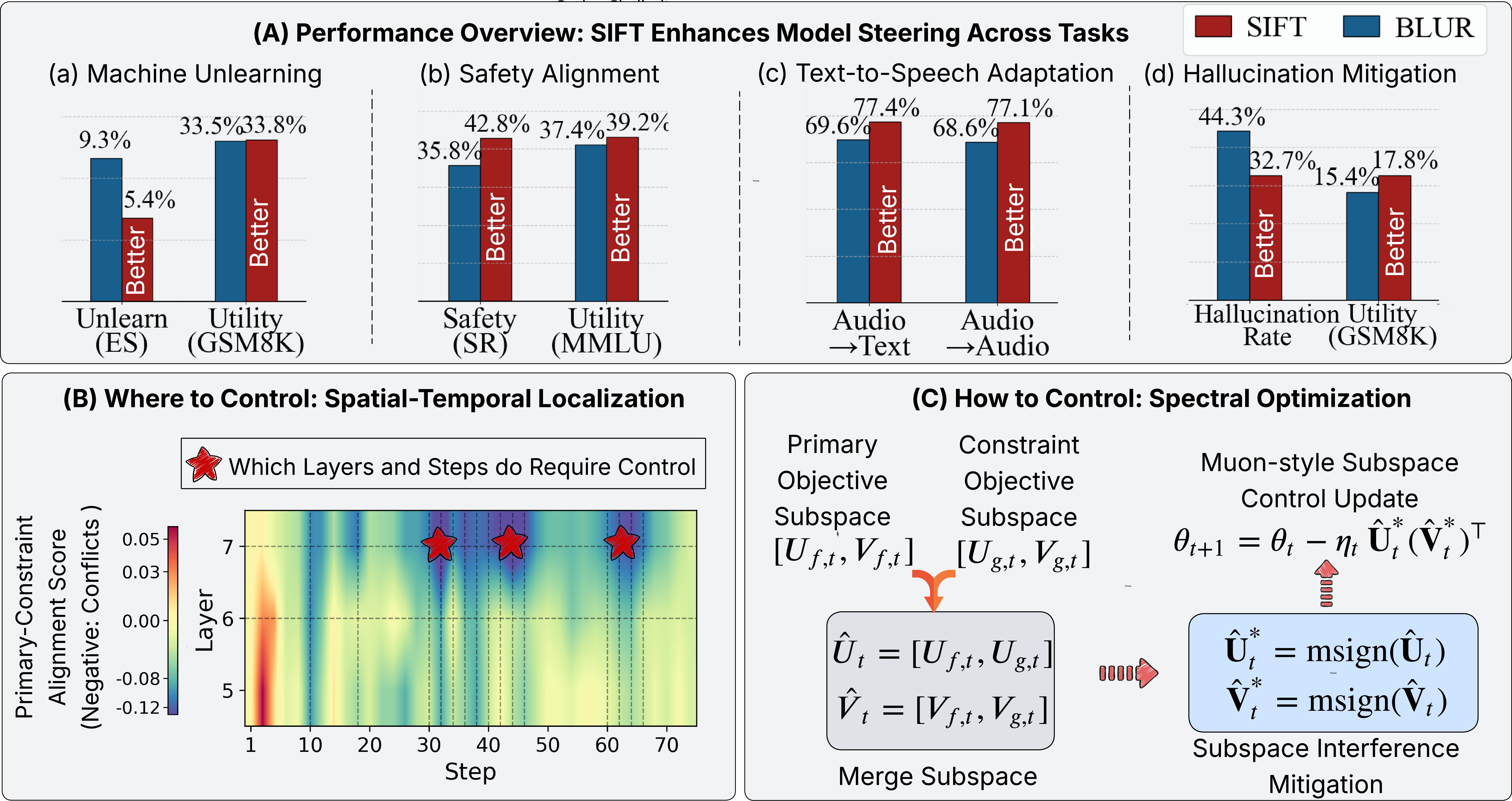

Figure 1: Schematic overview of the SIFT framework, highlighting performance across four model steering tasks, spatial-temporal localization of interventions, and the interference-free update via gradient orthogonalization.

Empirical Analysis: Diagnostics, Localization, and Subspace Sensitivity

The empirical analysis covers:

- Gradient Misalignment Visualization: In the context of LLM unlearning, SIFT's diagnostic metrics reveal spatial-temporal localization where interference is concentrated in upper layers and specific training windows.

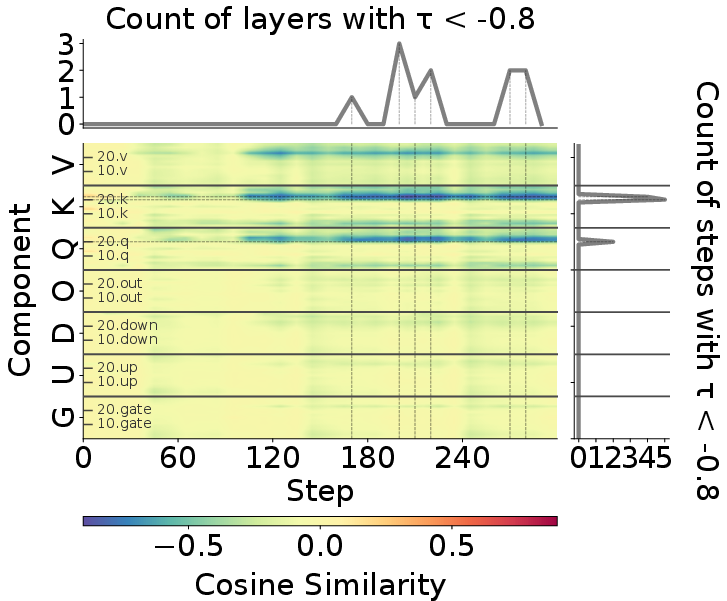

Figure 2: Visualization of layer- and time-wise gradient misalignment (τ) in LLM unlearning, showing persistent conflicts that motivate localized control.

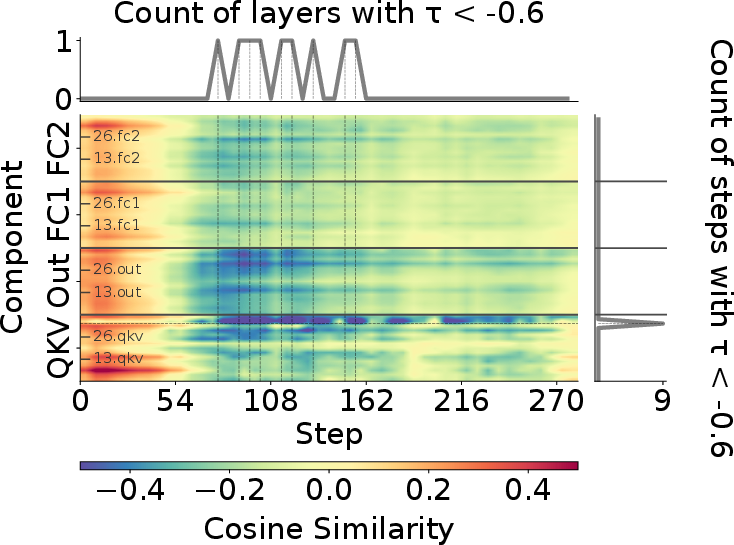

- Intervention Patterns: For text-to-speech adaptation, interference localizes specifically to the self-attention (QKV) matrices of target transformer layers, supporting the fine-grained block-wise nature of SIFT's intervention.

Figure 3: Layer-wise localization of interference in ESNLI-based speech adaptation—conflicts are highly concentrated in Q, K, V modules.

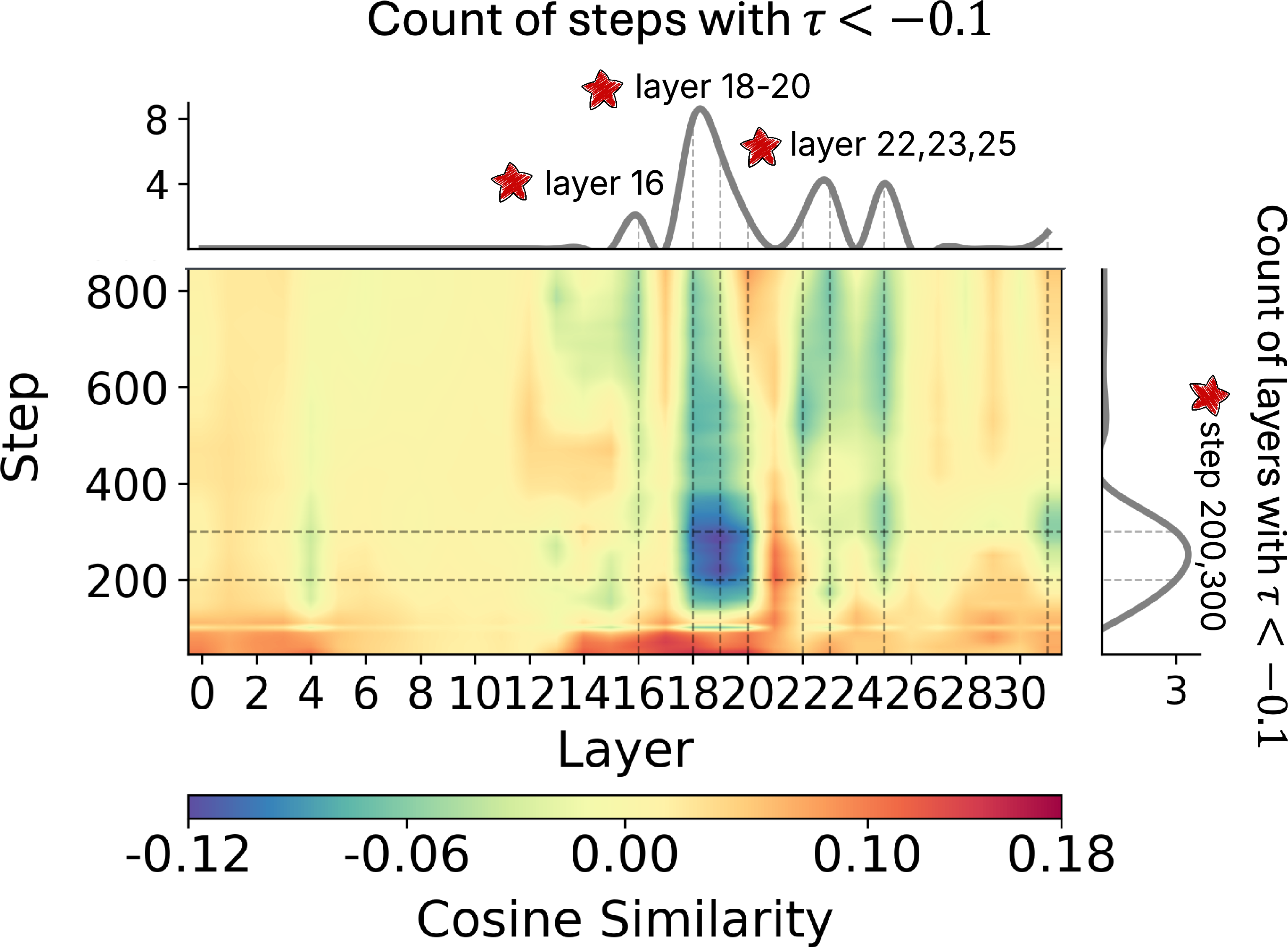

- Safety Alignment Intervention: For alignment, conflicts are concentrated in mid-level transformer layers and early steps, again supporting the sparse-localized activation mechanism.

Figure 4: Localization patterns in SIFT during safety alignment, indicating sparse conflicts primarily in the model's middle layers at early optimization steps.

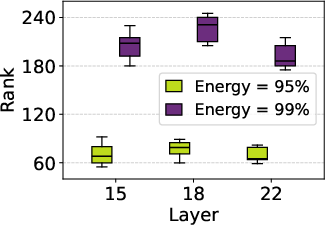

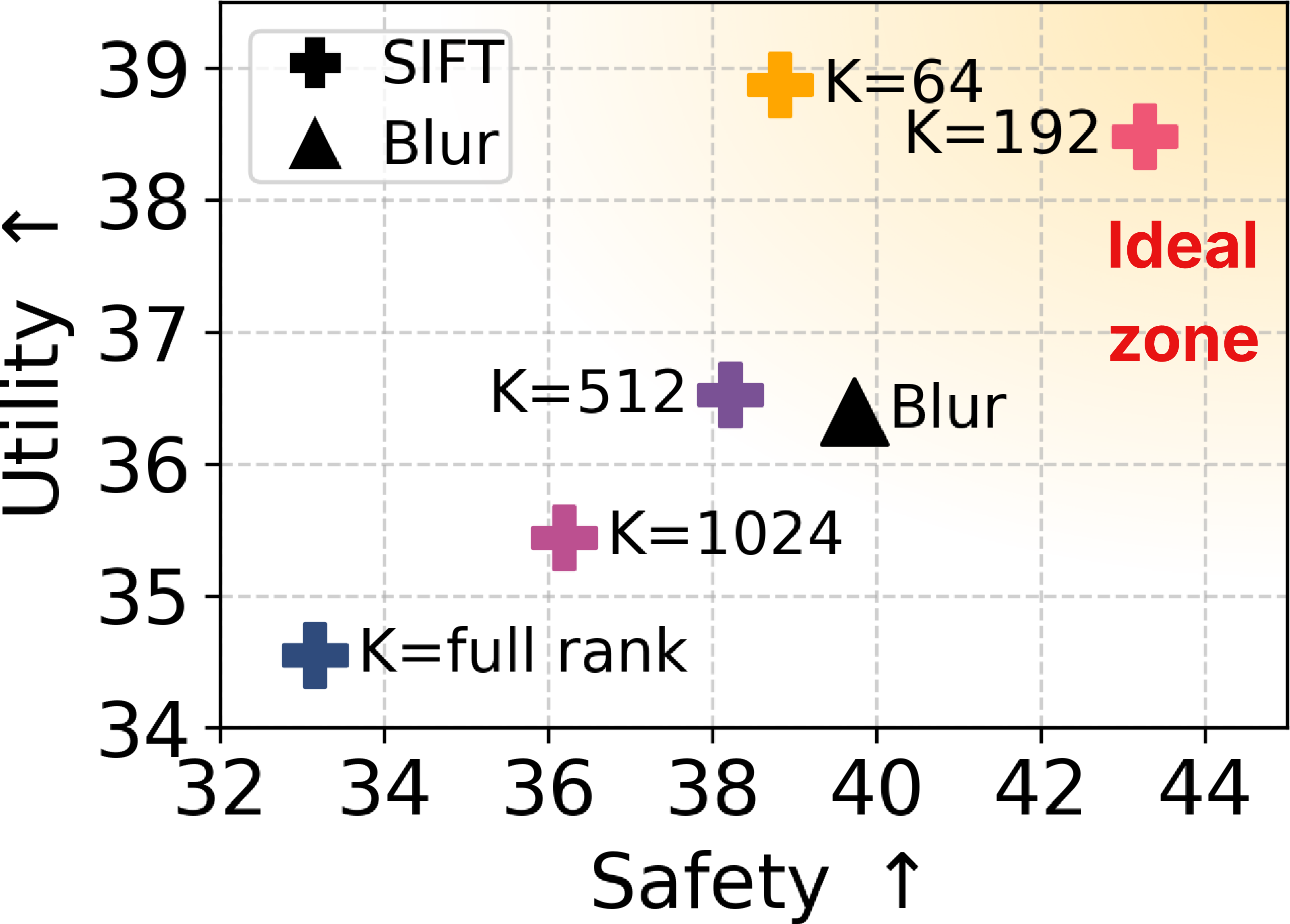

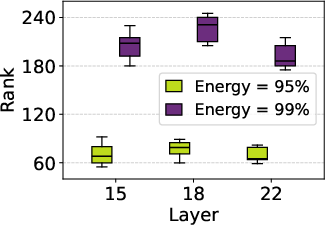

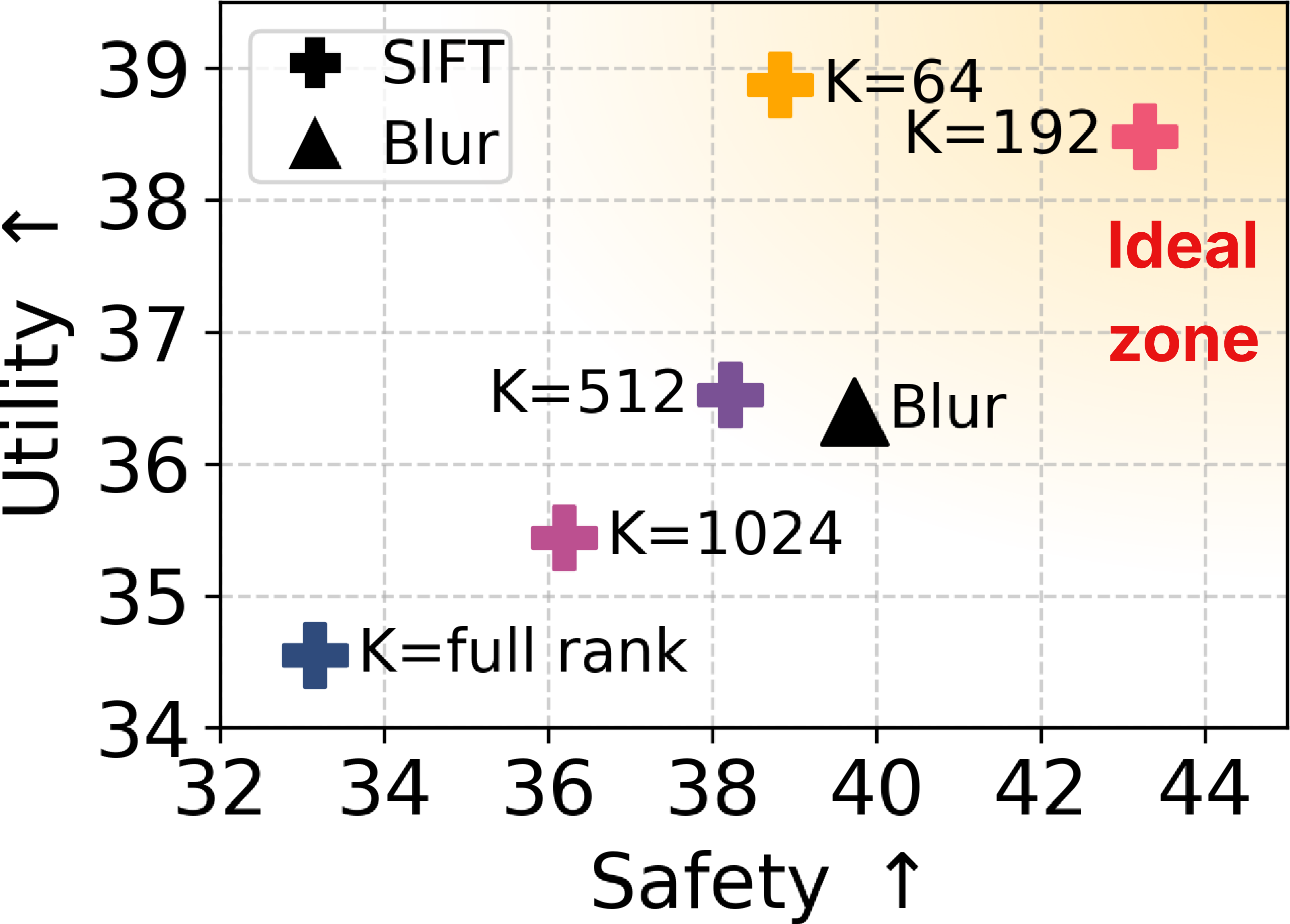

- Subspace Rank Sensitivity: SIFT-induced subspaces are verified empirically to be highly low-rank. Performance tradeoff curves show that moderate K (e.g., K<200 for 7B LLMs) produces the optimal safety–utility Pareto front, while overexpansion (K≫200) degrades both metrics.

Figure 5: Sensitivity analysis of SIFT to subspace dimension K—the effective rank of momentum matrices is low, and moderate K yields optimal tradeoff between safety and utility.
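One way to check whether a momentum matrix is low-rank is to count how many singular values are needed to capture most of its spectral energy. The sketch below uses an energy-based definition of effective rank and a synthetic matrix with a hand-picked spectrum; both are assumptions for illustration, since the source does not state which rank measure the authors use.

```python
import numpy as np

def effective_rank(matrix, energy=0.99):
    """Smallest K whose top-K singular values hold `energy` of the squared spectrum."""
    s = np.linalg.svd(matrix, compute_uv=False)
    cumulative = np.cumsum(s**2) / np.sum(s**2)
    return int(min(np.searchsorted(cumulative, energy) + 1, len(s)))

rng = np.random.default_rng(0)
# Synthetic "momentum" with a known, rapidly decaying spectrum (illustrative values).
u, _ = np.linalg.qr(rng.standard_normal((32, 6)))
v, _ = np.linalg.qr(rng.standard_normal((16, 6)))
spectrum = np.array([10.0, 5.0, 1.0, 0.01, 0.01, 0.01])
momentum = u @ np.diag(spectrum) @ v.T

k_99 = effective_rank(momentum, energy=0.99)    # 2
k_999 = effective_rank(momentum, energy=0.999)  # 3
```

A small effective rank relative to the matrix dimensions is what makes a modest K sufficient for the subspace construction.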

Experimental Results

SIFT is benchmarked against control-based methods (BLUR, POME) and standard optimizers (AdamW, Muon) on four model steering tasks: LLM unlearning, safety alignment, hallucination mitigation, and text-to-speech adaptation. It is reported to perform strongly on all four:

- LLM Unlearning: SIFT achieves substantial reductions in both entailment score and multiple-choice question accuracy for erased capabilities (K0 ES-Bio, K1 ES-Cyber), outperforming BLUR and POME by large margins, while maintaining competitive general utility.

- Safety Alignment: SIFT attains the highest safety scores while minimizing utility losses, far exceeding standard control-based approaches.

- Text-to-Speech Adaptation: On GLM-4-Voice, SIFT enables simultaneous boosting of accuracy across all cross-modal generation directions, most notably in audio input cases where naive or control-based methods fail due to cross-task interference.

- Hallucination Mitigation: SIFT yields the lowest hallucination rate, again retaining general language utility.

A consistent pattern is that simple gradient projection (as used in BLUR) discards significant portions of the useful descent direction and underperforms interference-free subspace orthogonalization. SIFT's localized, low-rank interventions give the best reported trade-off on all four tasks.
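A toy example shows why projection can be lossy. The sketch uses a generic PCGrad-style conflict projection, which is an assumption chosen for illustration and not necessarily the rule BLUR applies; the two-dimensional gradients are made up.

```python
import numpy as np

def project_out(g_primary, g_constraint):
    """Remove from g_primary its component along g_constraint, only when they conflict."""
    dot = float(np.vdot(g_primary, g_constraint))
    if dot >= 0.0:
        return g_primary.copy()
    return g_primary - dot / float(np.vdot(g_constraint, g_constraint)) * g_constraint

g_p = np.array([1.0, 0.0])    # primary gradient
g_c = np.array([-1.0, 1.0])   # constraint gradient, conflicting with g_p
g_proj = project_out(g_p, g_c)                           # [0.5, 0.5]
retained = np.linalg.norm(g_proj) / np.linalg.norm(g_p)  # about 0.707
```

Here the projected gradient no longer opposes the constraint, but it keeps only about 71% of the primary gradient's norm; the stronger the misalignment, the more of the primary direction is thrown away.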

Theoretical and Practical Implications

The explicit unification of one-shot model merging, spectral optimizer orthogonalization, and localized block-wise intervention points toward structured spectral control as a promising paradigm for post-hoc steering of foundation models. Several implications follow for future work:

- Scalability and Efficiency: Subspace-based control is affordable when restricted to low-rank subspaces at the flagged blocks and steps, which makes SIFT practical for large-scale models. Computational cost can be further amortized by smarter localization heuristics.

- Extensibility: The SIFT pattern can be adapted to multi-objective steering, domain adaptation, and constrained pretraining. Its applicability to continual learning and privacy-centric erasure is a vital direction for further study.

- Interpretability: The emergence of QKV modules as the locus of cross-task interference in the speech adaptation setting suggests a mechanistic hypothesis about knowledge localization and transfer in large models.

- Algorithmic Generalization: The framework may extend naturally beyond language to vision and multimodal foundation models, subject to empirical validation.

Conclusion

SIFT represents a structure-aware, interference-minimizing control schema for constrained model adaptation, rooted in a robust spectral subspace control framework. By capturing and orthogonalizing the critical subspaces responsible for cross-objective conflicts, SIFT produces improvements across a wide array of tasks—machine unlearning, safety alignment, cross-modal adaptation, and hallucination mitigation—while preserving, or in many cases improving, generalization and task utility.

The theoretical synthesis and empirical performance underscore the necessity of moving beyond naive parameter or gradient projection to algorithmically rich, localized spectral control. Further directions involve the extension of these principles to multi-objective, online, and pretraining settings, and the optimization of the SVD computation pipelines that form the heart of the SIFT methodology.

Reference:

"Subspace Control: Turning Constrained Model Steering into Controllable Spectral Optimization" (2604.04231)