- The paper demonstrates that open-world games expose the limits of closed-world testing by revealing unbounded behavior spaces and non-deterministic execution outcomes.

- It shows that traditional metrics fail due to elusive behavioral boundaries and unstable test oracles, urging a shift to distribution-based evaluations.

- It advocates adaptive, evidence-driven testing approaches that prioritize behavior diversity, systematic risk assessment, and iterative empirical characterization.

Software Testing Beyond Closed Worlds: Open-World Games as an Extreme Case

Introduction and Motivation

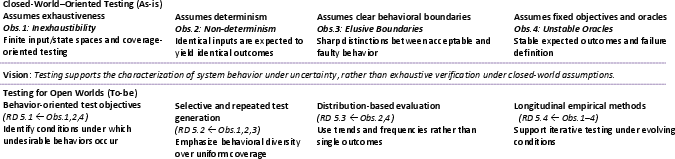

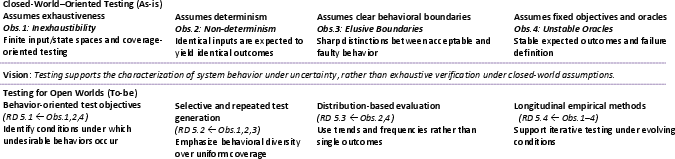

The study "Software Testing Beyond Closed Worlds: Open-World Games as an Extreme Case" (2604.04047) elucidates the limitations of traditional software testing paradigms when confronted with software systems that operate under significant uncertainty and dynamic conditions. The authors position open-world video games as an archetypal domain that exposes these limitations in their most acute form. Under classical closed-world assumptions, software testing has focused on systems with finite, stable state spaces and deterministically reproducible execution. The emergence of complex, open-world environments—characterized by inexhaustible behavior spaces, non-determinism, elusive behavioral boundaries, and unstable oracles—demands a fundamental reconceptualization of testing objectives, methodologies, and evaluation metrics.

Figure 1: Overview of the paper—a depiction of how open-world games expose the insufficiency of closed-world testing assumptions (top), and a mapping to research directions for software testing under uncertainty (bottom).

Problem Statement: The Limits of Closed-World Testing

Closed-world testing relies on premises such as:

- Finite and enumerable state spaces

- Stable and well-defined test oracles

- Test executions yielding consistent and reproducible outcomes

Such premises underpin well-established concepts such as branch/path coverage and fault detection effectiveness but are manifestly violated in modern, dynamic software systems, notably open-world games. These games foreground structural properties—such as multi-agent autonomy, emergent behavior, environment-driven variability, and extensive history-dependence—that fundamentally challenge the tractability and meaningfulness of exhaustive or deterministic testing.

Empirical Observations from Open-World Games

The paper synthesizes empirical observations and insights from a comprehensive analysis of automated game testing literature, interactive systems, and related domains:

1. Inexhaustibility of Behavior Spaces

Open-world games yield technically unbounded and history-dependent behavior spaces due to multi-agent autonomy and environmental complexity. Empirical studies consistently demonstrate that exhaustive exploration via fuzzing or search-based techniques produces diminishing returns as input and state space cardinality grows rapidly [kato2025, Politowski2021, Politowski2022]. Attempts to cover meaningful behavioral diversity are frustrated by the combinatorial explosion inherent to these domains. This undercuts the value of completeness-based adequacy and highlights the practical infeasibility of classical coverage metrics.

2. Non-deterministic Execution Outcomes

Identical test stimuli routinely induce different execution paths and results due to stochasticity (e.g., physics engines), concurrent agent actions, or randomness in the simulation [Politowski2021, Wuji, Inspector, kato2025]. Empirical studies on interactive and adaptive systems further corroborate that non-determinism is intrinsic, rather than pathological. This non-repeatability challenges the validity of single-run verdicts and necessitates multi-run, distributional evaluation strategies.

3. Elusive Behavioral Boundaries

The distinction between correct, buggy, or undesirable behaviors is blurred by sensitive dependence on initial conditions, continuous parameter spaces, and emergent effects. Automated playtesting and player-modeling research show that even minor changes in action sequences or configuration can yield disproportionate behavioral shifts, making the delineation of "correct" boundaries ill-posed [Fraser2013, Xiao2023, Politowski2022].

4. Unstable and Imprecise Oracles

Defining precise, stable test oracles is unrealizable for open-world environments where expectations are context-dependent, evolve over time, or are determined by emergent community standards. The instability of testing oracles is evidenced in both the literature on flaky tests in ML applications [Dutta2020] and automated game testing [Politowski2021, kato2025]. Methods reliant on fixed assertions oracles are unsuitable, necessitating alternatives such as metamorphic testing, probabilistic assertions, or statistical evaluation [Patel2018, Barr2015].

Reconceptualizing Software Testing under Uncertainty

The authors recommend a paradigm shift from exhaustive verification towards systematic characterization and empirical interpretation of system behaviors under uncertainty. The crux of this vision is that testing must no longer aim for definitive correctness certification, but rather serve as a mechanism for collecting empirical evidence about behavioral tendencies, risks, and failure profiles.

Testing activities should be:

- Empirically driven, emphasizing repeatability and trend detection over single-run pass/fail outcomes

- Focused on mapping and understanding the distributional properties of behaviors

- Designed to support iterative investigation, prioritization, and improvement within a context of incomplete knowledge and evolving requirements

Research Directions

The paper delineates four primary research trajectories informed by the open-world lens:

Test Objectives

Test objectives must decouple from exhaustive coverage and binary oracular judgments. They should prioritize:

- Behavioral diversity and the systematic sampling of operational regions associated with high risk or value

- Detection and profiling of failure-prone scenarios or under-specified behavior

- Support for risk-based triage and investigation, not just aggregate coverage

Automated Test Generation

Automated test generation must:

- Abandon uniform exploration strategies in favor of selective, cost-aware, and behaviorally-targeted search, potentially guided by user models, reinforcement learning, or historical analyses [MIMIC_YIFEI_ASE_2025].

- Explicitly treat non-determinism and execution variability as signal for further exploration and understanding, rather than noise to be suppressed.

- Leverage general mechanisms applicable across fuzzing, search-based algorithms, and LLM-driven approaches.

Evaluation and Metrics

Evaluation protocols must:

- Shift from scalar, deterministic metrics (e.g., coverage, fail counts) to distribution-based, probabilistic, or diversity-oriented assessment

- Employ risk-based or statistical oracles, where conclusiveness derives from repeated, trend-aligned violations or outlier behaviors

- Consider notions of reproducibility redefined around observable statistical patterns, rather than deterministic outcomes

Empirical Studies and Benchmarks

Empirical research must:

- Embrace longitudinal and repeated experimentation to capture evolving system dynamics and oracle drift

- Develop benchmarks and datasets that support evaluation under varied and temporally extended conditions

- Provide foundations for comparative assessment of techniques in dynamic and uncertain domains

Implications and Future Outlook

Practical implications extend far beyond gaming. Autonomous vehicles, metaverse platforms, and self-adaptive systems similarly operate in open, non-deterministic environments. The work compels a foundational rethinking of automated software testing, advocating for robust, evidence-oriented methodologies suitable for dynamic, complex, and interactive systems. Theoretically, this shift underscores the need for hybrid evaluation paradigms combining empirical science, statistical modeling, and adaptive test strategies.

For AI and LLM-driven systems embedded as agents or testers in such environments, this framework implies the necessity of self-improving and uncertainty-aware testing mechanisms capable of both assessing and adapting to evolving software landscapes.

Conclusion

The paper provides a formal and rigorous analysis of the limits of closed-world software testing assumptions as exposed by open-world game environments. Its synthesis of key structural challenges—combinatorial explosion, non-determinism, boundary ambiguity, and oracle instability—lays the groundwork for a broad methodological transition in software testing research and practice. The outlined research directions establish a blueprint for empirically grounded, uncertainty-conscious, and distribution-oriented software testing approaches that will be necessary as software systems increasingly operate beyond the confines of closed, deterministic worlds.