- The paper presents an autoencoder technique that jointly denoises, reconstructs, and estimates physical parameters (frequency, phase, decay, amplitude) from noisy, superposed damped sinusoids.

- It employs a nine-layer deep architecture with a structured latent space and decoupled encoder-decoder training, using dropout to improve generalization.

- The method achieves high match scores (>0.98) even under high noise and component overlap, outperforming traditional analytical approaches.

Autoencoder-Based Parameter Estimation for Superposed Multi-Component Damped Sinusoidal Signals

Introduction and Problem Motivation

Accurate parameter estimation for superposed, noisy, rapidly decaying damped sinusoids remains a fundamental challenge across domains including gravitational-wave physics, NMR, structural monitoring, and communications. Traditional analytical methods (e.g., matrix pencil, Prony, MUSIC, ESPRIT) degrade significantly under strong damping, noise, overlapping signal components, or model-order uncertainty. These limitations motivate robust, data-driven approaches that extract the underlying parameter structure directly from noisy observations.

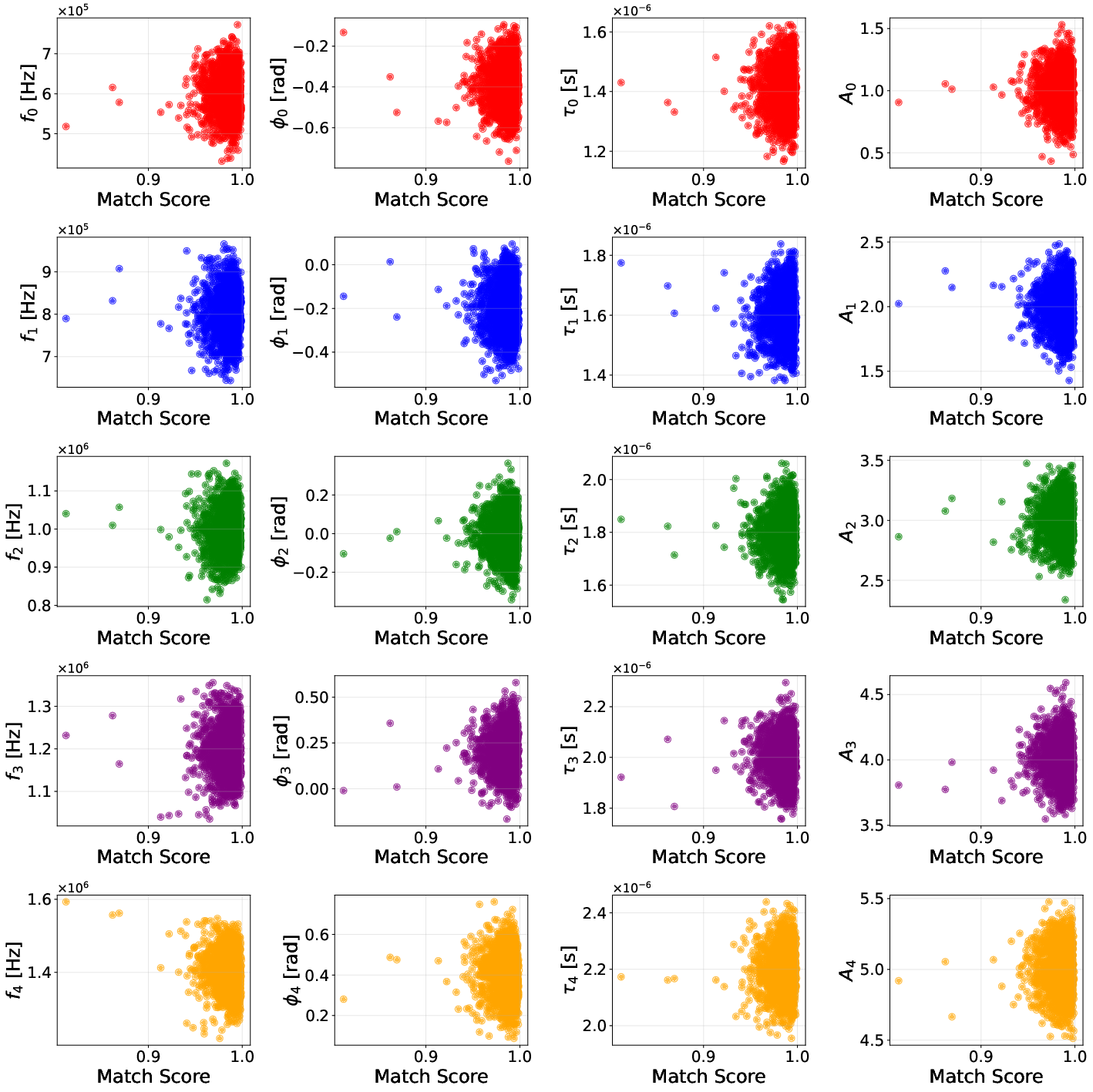

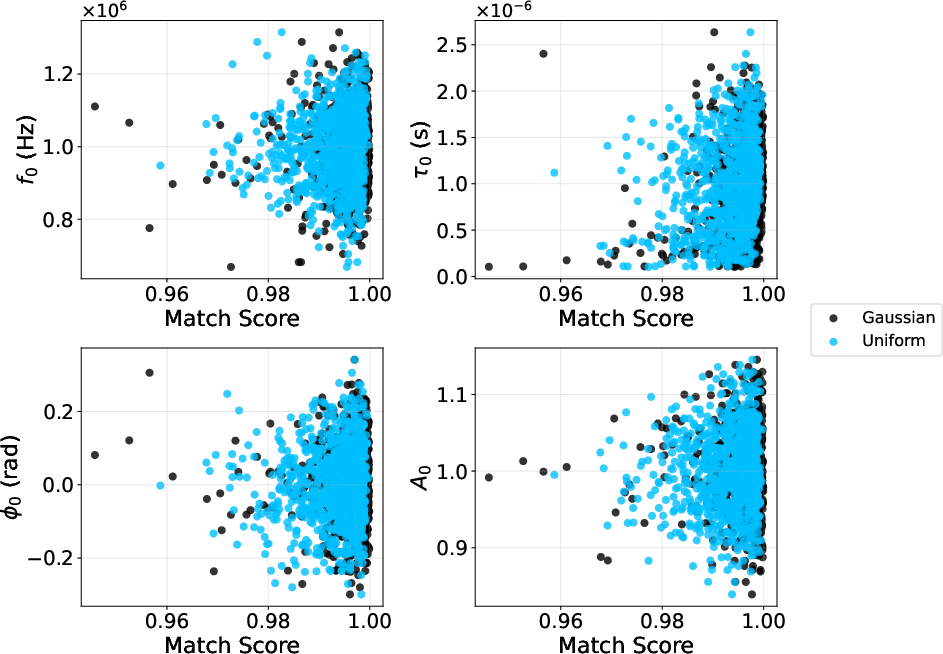

The paper "Autoencoder-Based Parameter Estimation for Superposed Multi-Component Damped Sinusoidal Signals" (2604.03985) presents an autoencoder-based framework for joint denoising, waveform reconstruction, and parameter estimation of signals composed of multiple superposed damped sinusoidal components. The method uses a structured latent representation that is constrained to correspond directly to the physical parameters of each component (frequency, phase, decay time, amplitude). The approach is evaluated across several complexity regimes, including superpositions of up to five components and different training-data distributions.

Methodology

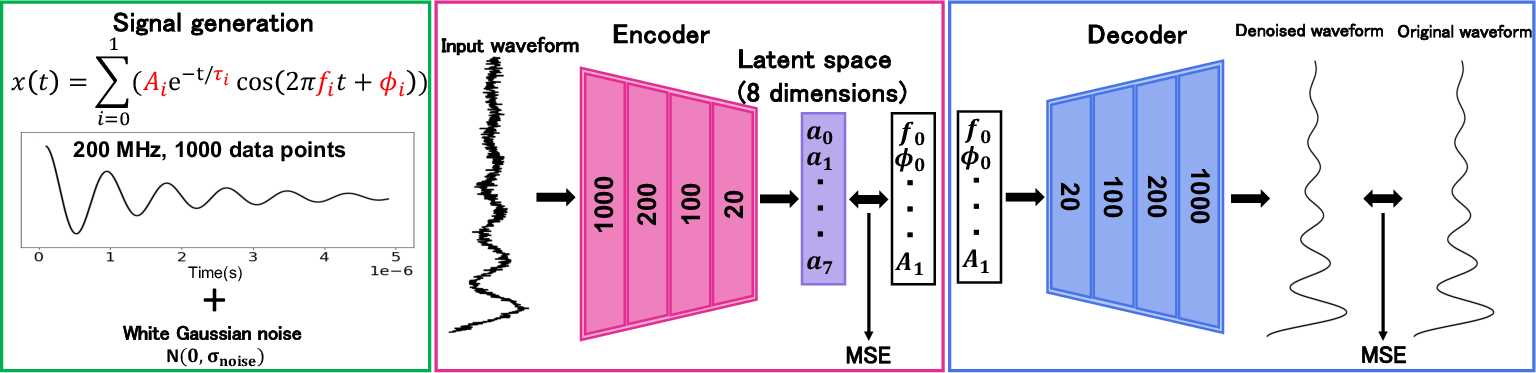

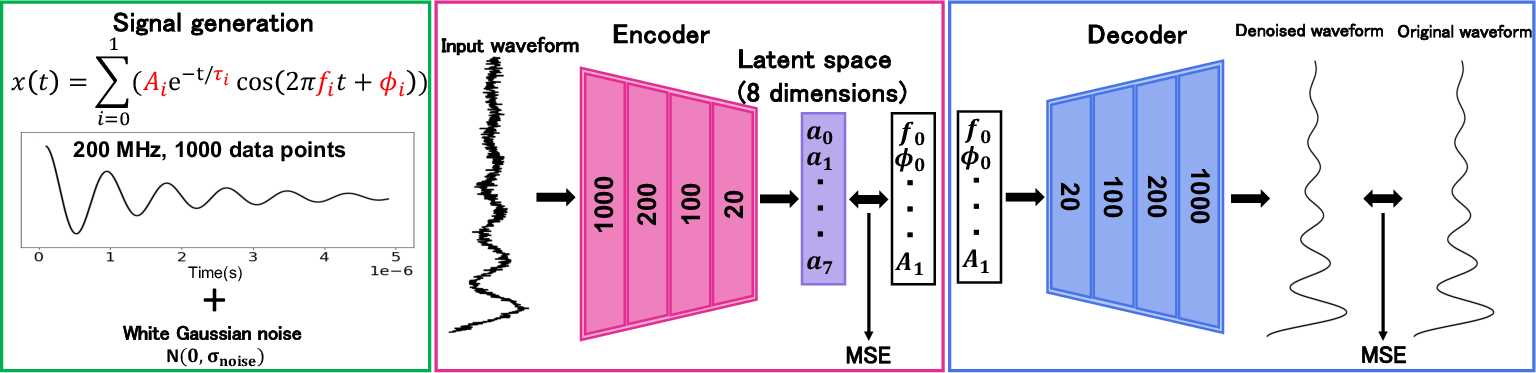

Structured Autoencoder Architecture

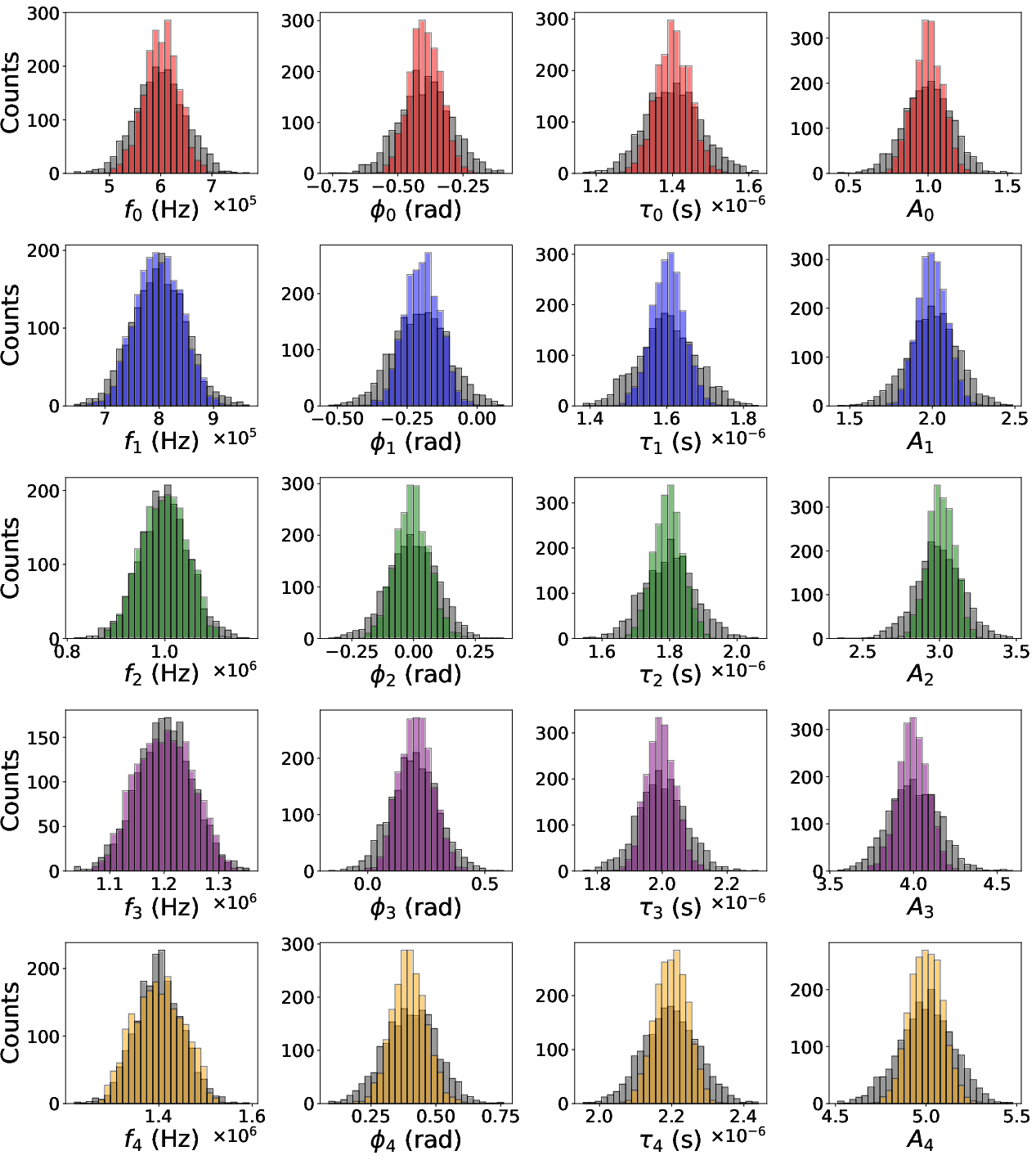

The architecture is a nine-layer deep autoencoder in which the dimensionality of the central latent space equals the total number of physical parameters (four per signal component). The latent layer is linear, with no non-linear activation, so the learned latent codes map directly onto the desired physical parameters.
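To make the latent-space sizing concrete, the following is a minimal NumPy sketch of a forward pass through such a network. Only the layer count, the latent size (four values per component), and the linear latent layer come from the description above. The layer widths, the ReLU activations, the weight initialization, and the names `WIDTHS`, `init_layers`, and `forward` are assumptions made for illustration, and dropout is omitted for brevity.

```python
import numpy as np

N_COMPONENTS = 2                    # two-component case
LATENT_DIM = 4 * N_COMPONENTS       # four physical parameters per component
# Hypothetical widths: nine node layers with the latent layer in the middle.
WIDTHS = [512, 256, 128, 64, LATENT_DIM, 64, 128, 256, 512]
LATENT_MAP = WIDTHS.index(LATENT_DIM) - 1   # affine map that outputs the latent code


def init_layers(widths, rng):
    """Create one (weights, bias) pair per pair of consecutive layers."""
    return [
        (rng.normal(0.0, np.sqrt(2.0 / m), size=(m, k)), np.zeros(k))
        for m, k in zip(widths[:-1], widths[1:])
    ]


def forward(x, layers, latent_map=LATENT_MAP):
    """Return (latent code, reconstruction) for a batch of signals."""
    h, latent = x, None
    last = len(layers) - 1
    for i, (weights, bias) in enumerate(layers):
        h = h @ weights + bias
        if i == latent_map:
            latent = h               # linear latent layer: no activation applied
        elif i != last:
            h = np.maximum(h, 0.0)   # ReLU on hidden layers (assumed activation)
    return latent, h


rng = np.random.default_rng(0)
layers = init_layers(WIDTHS, rng)
batch = rng.normal(size=(3, WIDTHS[0]))
latent, recon = forward(batch, layers)
print(latent.shape, recon.shape)
```

Because the latent layer is left linear, each latent coordinate can take any real value and can be compared directly with a (normalized) physical parameter.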

The autoencoder is trained in two decoupled phases:

- Encoder training: The network learns to map noisy input signals to the associated physical parameters using a mean squared error (MSE) loss in parameter space, so that each latent code corresponds one-to-one to a physical parameter.

- Decoder training: The network reconstructs denoised waveforms from the normalized physical parameters, using MSE in data space as the loss.

Dropout is applied at all layers to mitigate overfitting. Both the encoder and the decoder are optimized with Adam for faster convergence.
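The two training phases differ only in which quantity is predicted and which is used as the target. The sketch below spells out both losses and a dropout helper in NumPy. It is an illustration under assumptions, not the paper's code: the min-max normalization, the inverted-dropout formulation, the parameter ranges, and all function names are hypothetical, and the Adam update step is left out.

```python
import numpy as np


def normalize(params, lo, hi):
    """Min-max scale physical parameters to [0, 1] (assumed normalization)."""
    return (params - lo) / (hi - lo)


def mse(pred, target):
    return float(np.mean((pred - target) ** 2))


def dropout(h, rate, rng, training=True):
    """Inverted dropout: zero a fraction `rate` of units and rescale the rest."""
    if not training or rate == 0.0:
        return h
    keep = rng.random(h.shape) >= rate
    return h * keep / (1.0 - rate)


def encoder_loss(encoder, noisy_signal, params_norm):
    """Phase 1: MSE in parameter space (noisy signal -> parameters)."""
    return mse(encoder(noisy_signal), params_norm)


def decoder_loss(decoder, params_norm, clean_signal):
    """Phase 2: MSE in data space (parameters -> denoised waveform)."""
    return mse(decoder(params_norm), clean_signal)


# Hypothetical (frequency, decay time) ranges used only for this example.
lo, hi = np.array([10.0, 0.05]), np.array([50.0, 0.5])
params = np.array([[30.0, 0.275]])
params_norm = normalize(params, lo, hi)   # approximately [[0.5, 0.5]]
```

In this arrangement the decoder never sees the encoder's output during training, which is what makes the two phases decoupled.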

Figure 1: Schematic overview of the pipeline, from data generation (left) to autoencoder architecture (center and right); illustrated for two-component signals.

Simulation Data and Noise Model

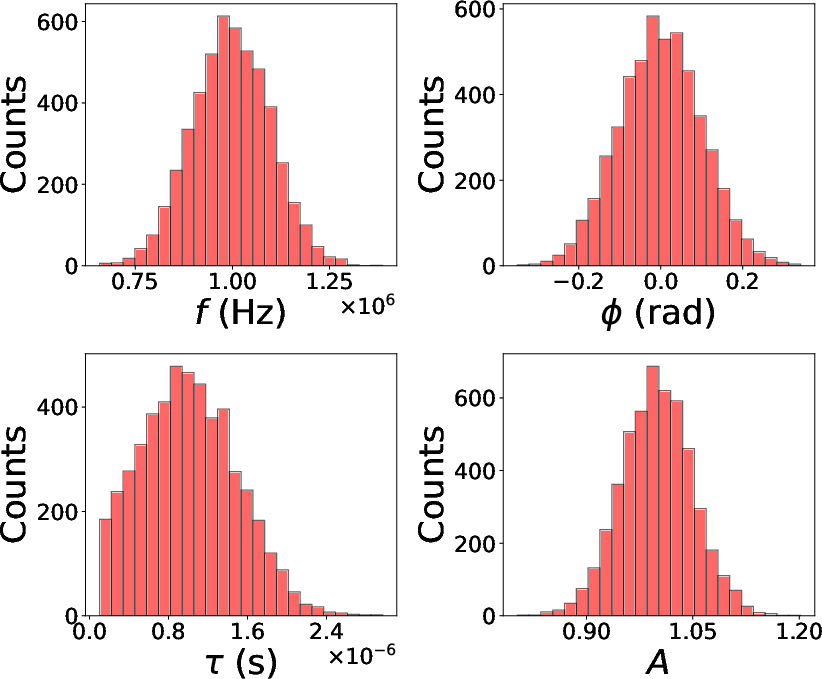

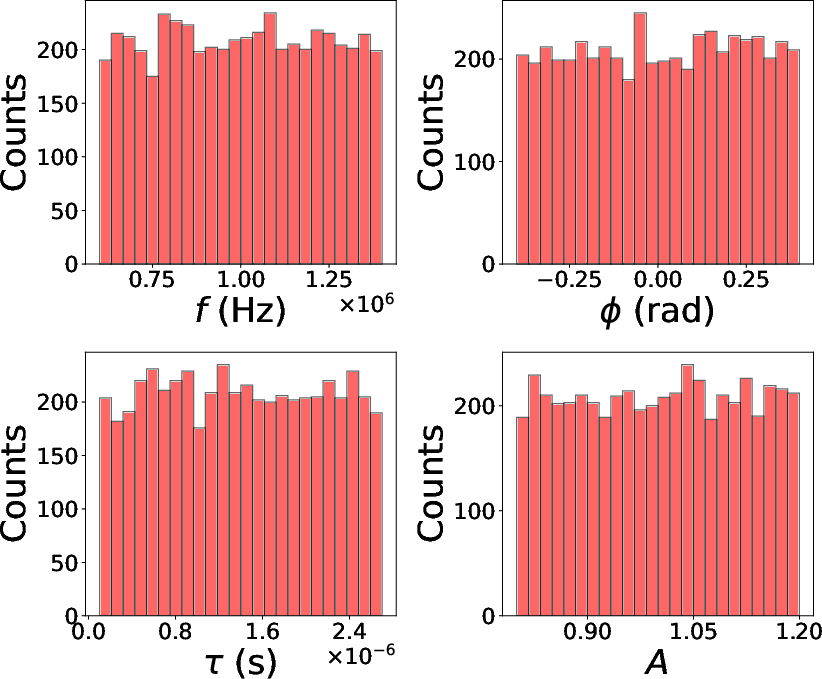

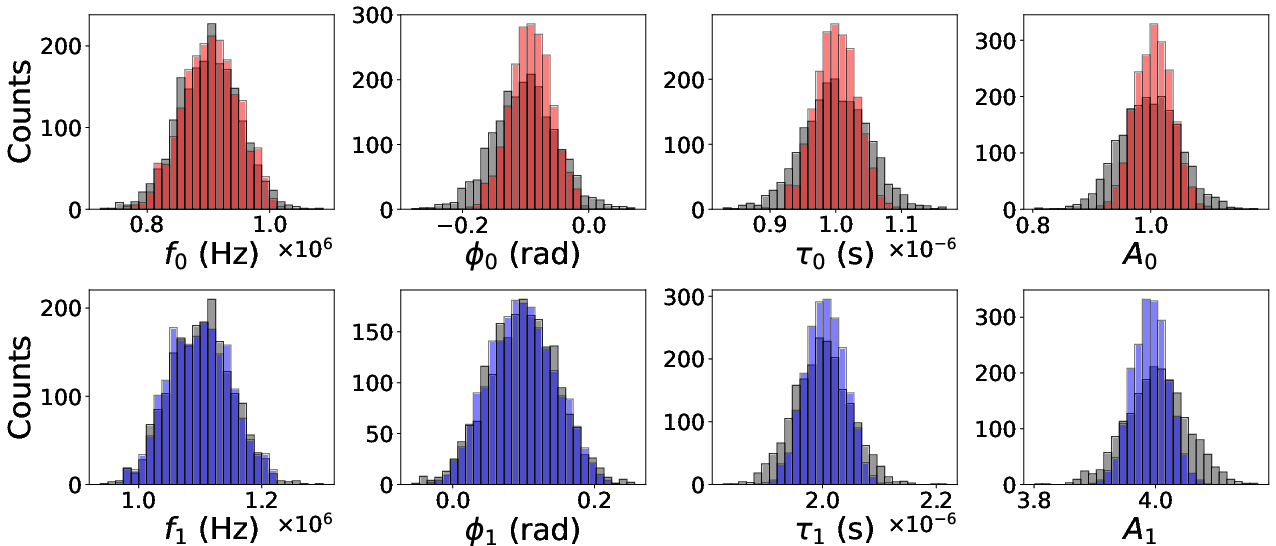

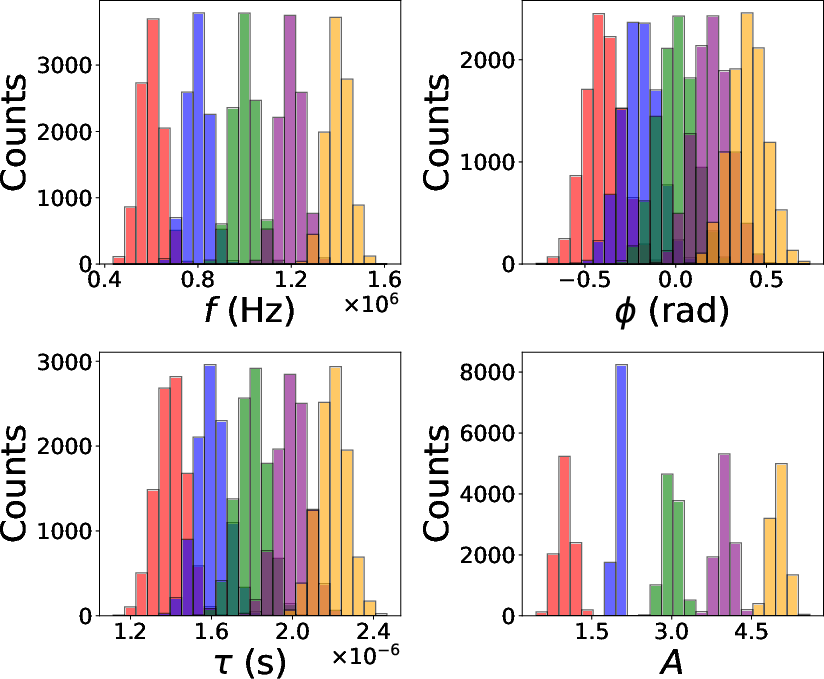

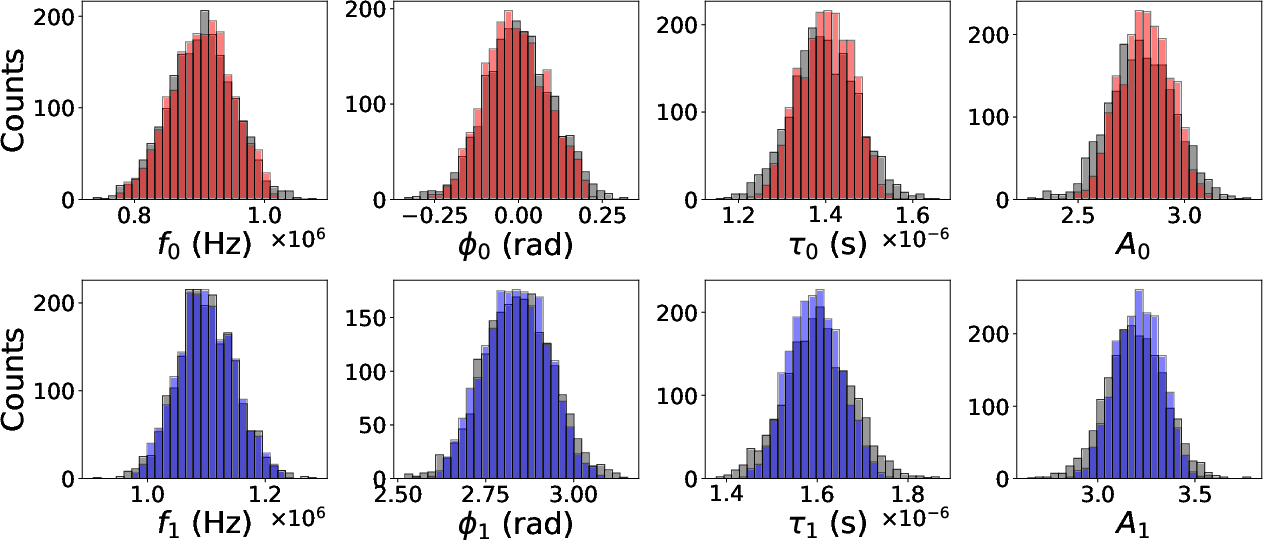

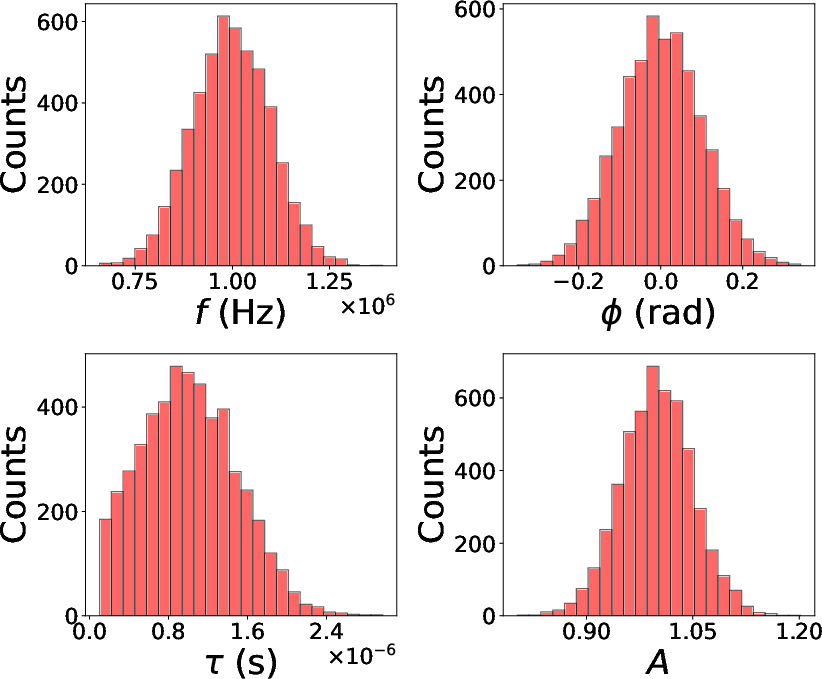

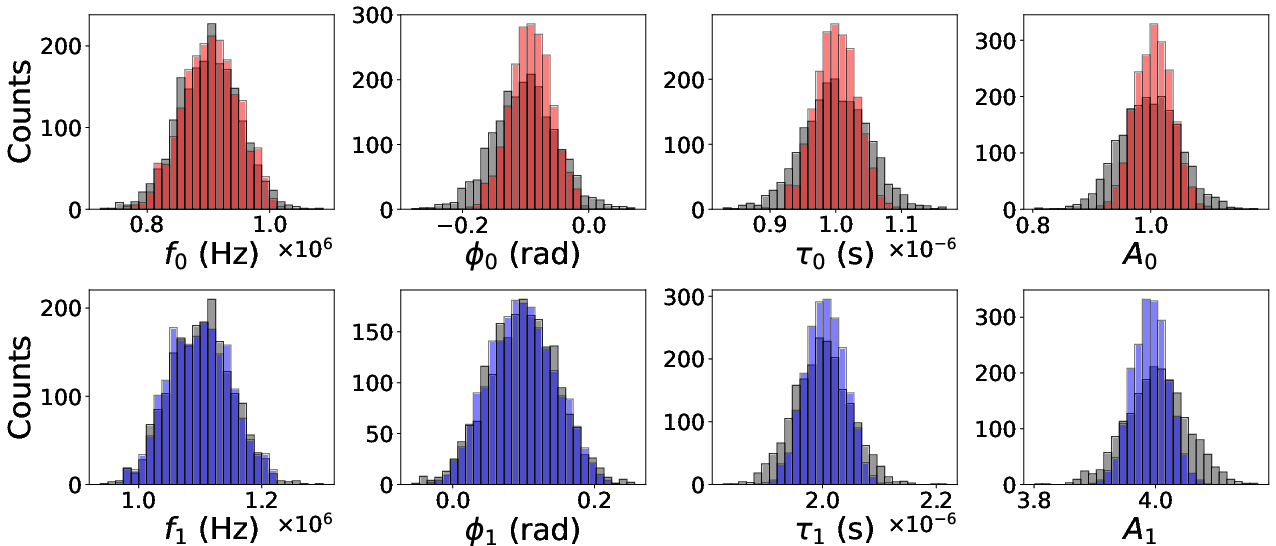

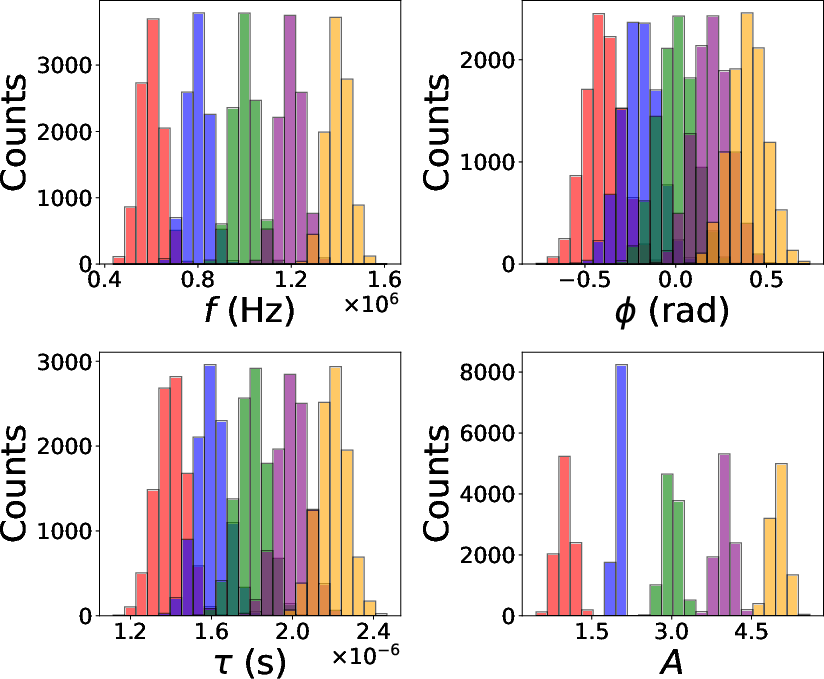

Synthetic datasets are generated as a sum of N+1 exponentially damped sinusoids, each parameterized by its amplitude $A_i$, decay time $\tau_i$, frequency $f_i$, and phase $\phi_i$. Parameter settings (means and standard deviations in the Gaussian scenarios, min-max ranges in the uniform ones) vary by experimental case to control component dominance, phase relations, and mutual overlap. White Gaussian noise is added at tunable levels, with noise settings scaled to represent both moderate and high SNR regimes.
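A minimal sketch of this data model is shown below. The functional form $A_i e^{-t/\tau_i}\cos(2\pi f_i t + \phi_i)$ is an assumption (the summary does not state whether a cosine or sine convention is used), and the sampling grid, parameter values, and noise level are hypothetical.

```python
import numpy as np


def damped_sinusoids(t, amps, taus, freqs, phases):
    """Sum of components A_i * exp(-t / tau_i) * cos(2*pi*f_i*t + phi_i)."""
    amps, taus, freqs, phases = (
        np.asarray(p, dtype=float)[:, None] for p in (amps, taus, freqs, phases)
    )
    components = amps * np.exp(-t[None, :] / taus) * np.cos(
        2.0 * np.pi * freqs * t[None, :] + phases
    )
    return components.sum(axis=0)


def add_white_noise(signal, sigma, rng):
    """Add zero-mean white Gaussian noise with standard deviation sigma."""
    return signal + rng.normal(0.0, sigma, size=signal.shape)


rng = np.random.default_rng(1)
t = np.linspace(0.0, 1.0, 512, endpoint=False)   # hypothetical time grid
clean = damped_sinusoids(
    t,
    amps=[1.0, 0.5],
    taus=[0.30, 0.10],
    freqs=[20.0, 35.0],
    phases=[0.0, np.pi / 2],
)
noisy = add_white_noise(clean, sigma=0.2, rng=rng)
```

The pair (`noisy`, parameters) would serve as an encoder training example and the pair (parameters, `clean`) as a decoder training example.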

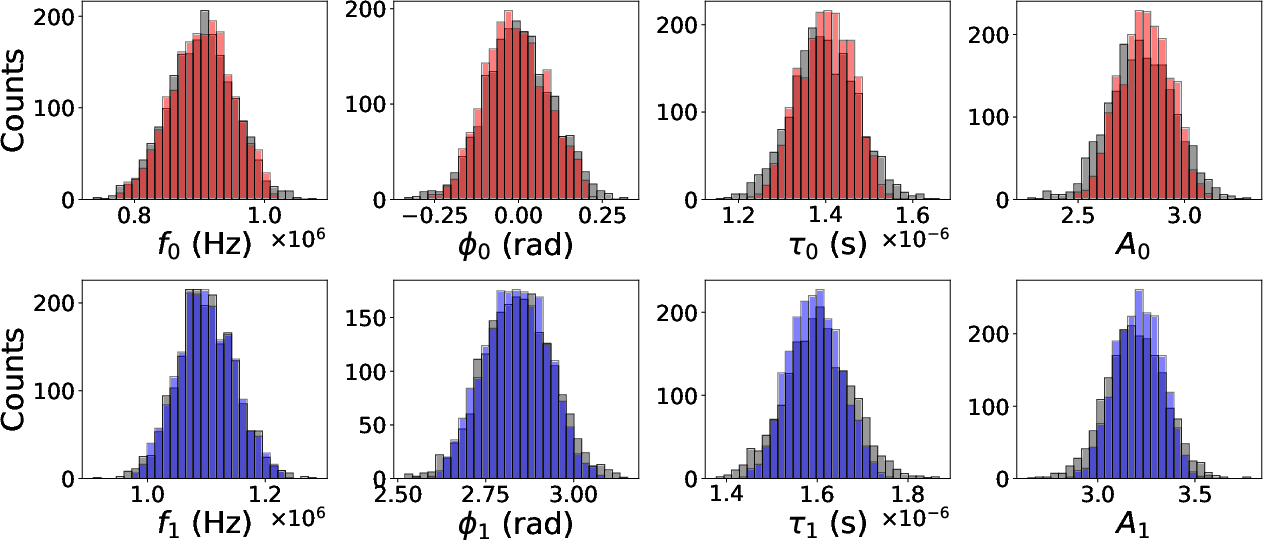

Figure 2: Example training parameter distributions for single-component Gaussian case.

Evaluation Metrics

Three quantitative axes are used for evaluation:

- Waveform reconstruction: Match score as defined in [ref:Pycbc], maximized over time and phase shifts and computed assuming a unit (white) noise spectral density.

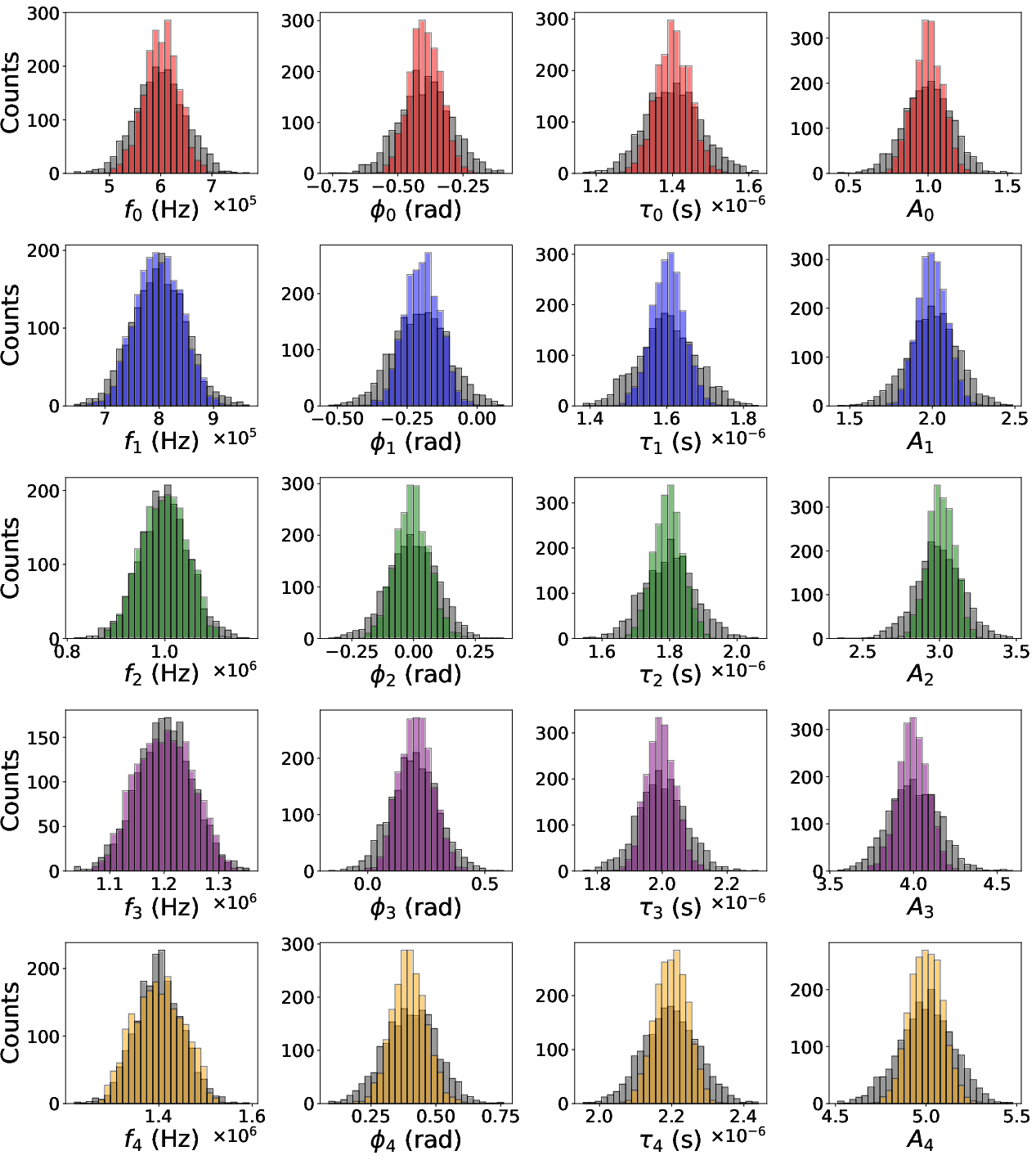

- Distributional accuracy: Comparison of estimated parameter histograms to true parameter distributions.

- Parameter-wise errors: Relative errors for $f_i$, $\tau_i$, $A_i$; absolute error for the phase $\phi_i$.
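The metrics listed above can be sketched in NumPy as follows. This is an illustrative stand-in, not the PyCBC implementation: `match_white` assumes a flat noise spectrum and treats time shifts as circular, and wrapping the phase difference into $[-\pi, \pi)$ before taking its absolute value is an assumption about how the phase error is defined.

```python
import numpy as np


def match_white(a, b):
    """Normalized overlap of two real signals, maximized over circular time
    shifts and a constant phase shift, for a flat (white) noise spectrum."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    n = len(a)
    cross = np.fft.fft(a) * np.conj(np.fft.fft(b))
    # Keep only non-negative frequencies (analytic-signal weights) so that the
    # magnitude of the inverse FFT is the phase-maximized correlation.
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[1:n // 2] = 2.0
        weights[n // 2] = 1.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    z = np.fft.ifft(cross * weights)
    norm = np.sqrt(np.sum(a ** 2) * np.sum(b ** 2))
    return float(np.max(np.abs(z)) / norm)


def relative_error(estimate, truth):
    """Relative error, used here for frequency, decay time, and amplitude."""
    return np.abs(estimate - truth) / np.abs(truth)


def phase_error(estimate, truth):
    """Absolute phase error after wrapping the difference into [-pi, pi)."""
    diff = (estimate - truth + np.pi) % (2.0 * np.pi) - np.pi
    return np.abs(diff)


n = 256
t = np.arange(n) / n
x = np.exp(-t / 0.3) * np.cos(2.0 * np.pi * 12.0 * t)
print(match_white(x, np.roll(x, 17)))   # a pure time shift leaves the match at 1
```

A match of 1 means the two waveforms agree up to a time shift, a phase shift, and an overall scale; it says nothing about amplitude, which is why the parameter-wise errors are reported separately.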

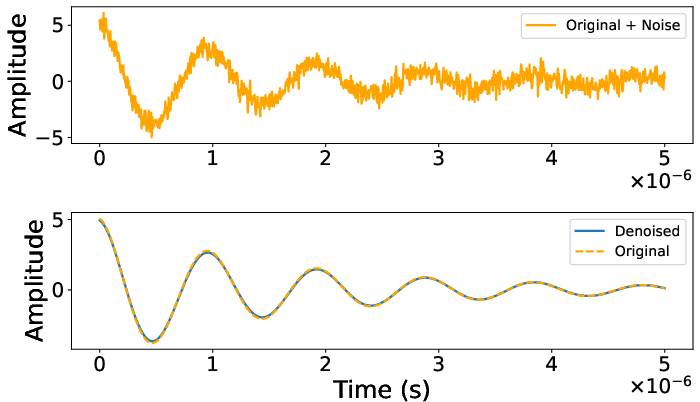

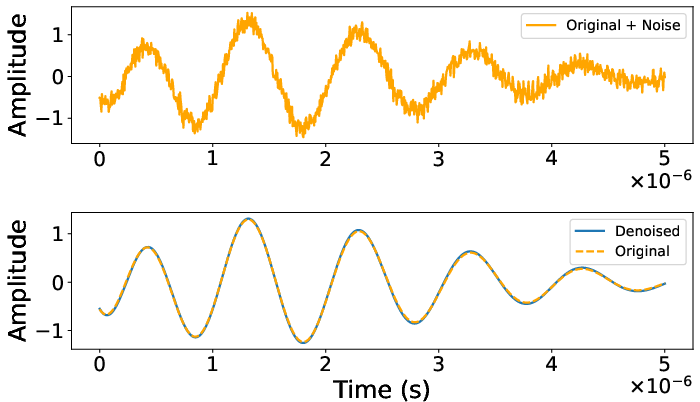

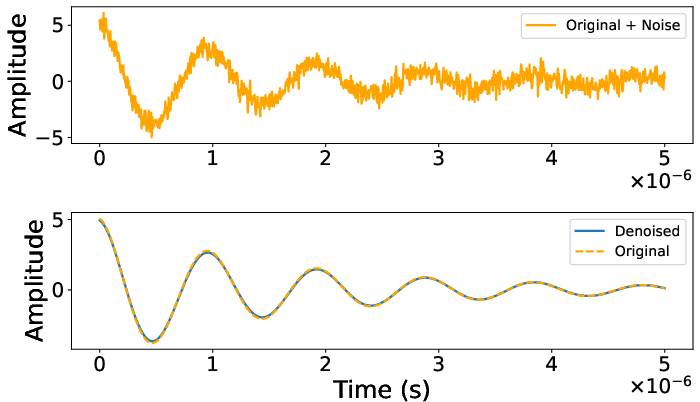

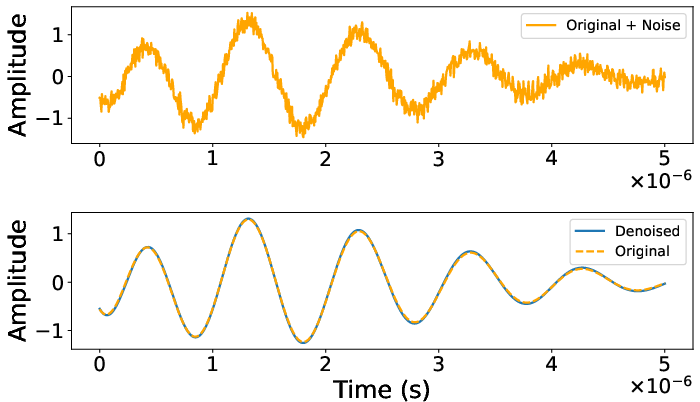

Figure 3: Example denoising output, showing excellent signal recovery (Match score: 1.000).

Results

Two-Component Challenging Scenarios

The method achieves high fidelity in reconstructing and parameterizing signals in regimes traditionally considered problematic:

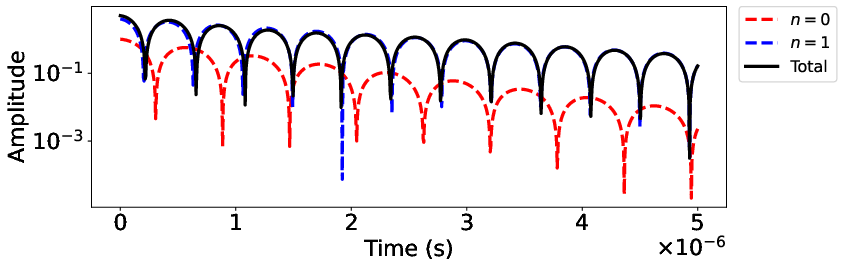

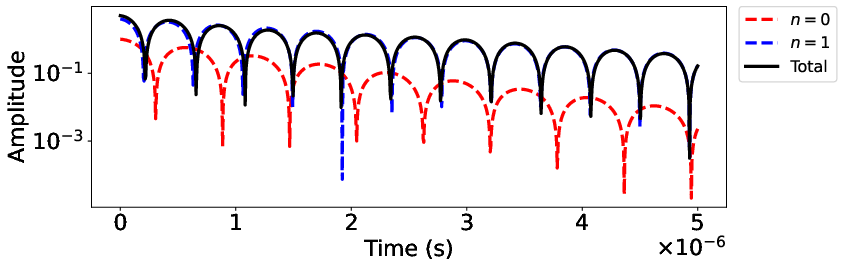

- Subdominant component (Case 1): A low-amplitude, rapidly decaying component is correctly extracted, with mean match score 0.999±0.006 and mean relative frequency error […] for the difficult component.

- Nearly opposite phases (Case 2): Superpositions exhibiting destructive interference are also handled robustly, with a match score of […] even in the high-noise, small-net-amplitude regime.
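To see why the nearly-opposite-phase case is hard, the short sketch below superposes two components with the same frequency and decay time and a phase difference close to $\pi$. All parameter values are hypothetical and chosen only to show how small the net amplitude becomes relative to either component.

```python
import numpy as np


def damped(t, amp, tau, freq, phase):
    """Single damped sinusoid (cosine convention assumed)."""
    return amp * np.exp(-t / tau) * np.cos(2.0 * np.pi * freq * t + phase)


t = np.linspace(0.0, 1.0, 1000, endpoint=False)
first = damped(t, amp=1.0, tau=0.3, freq=20.0, phase=0.0)
second = damped(t, amp=0.9, tau=0.3, freq=20.0, phase=np.pi - 0.05)
total = first + second

# Ratio of the peak of the superposition to the peak of the stronger component.
peak_ratio = np.max(np.abs(total)) / np.max(np.abs(first))
print(f"net peak amplitude relative to first component: {peak_ratio:.3f}")
```

For a fixed noise level, the superposition therefore has a much lower effective SNR than either component alone, even though both components must still be recovered individually.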

Figure 4: Logarithmic amplitude plot for a representative subdominant two-component waveform.

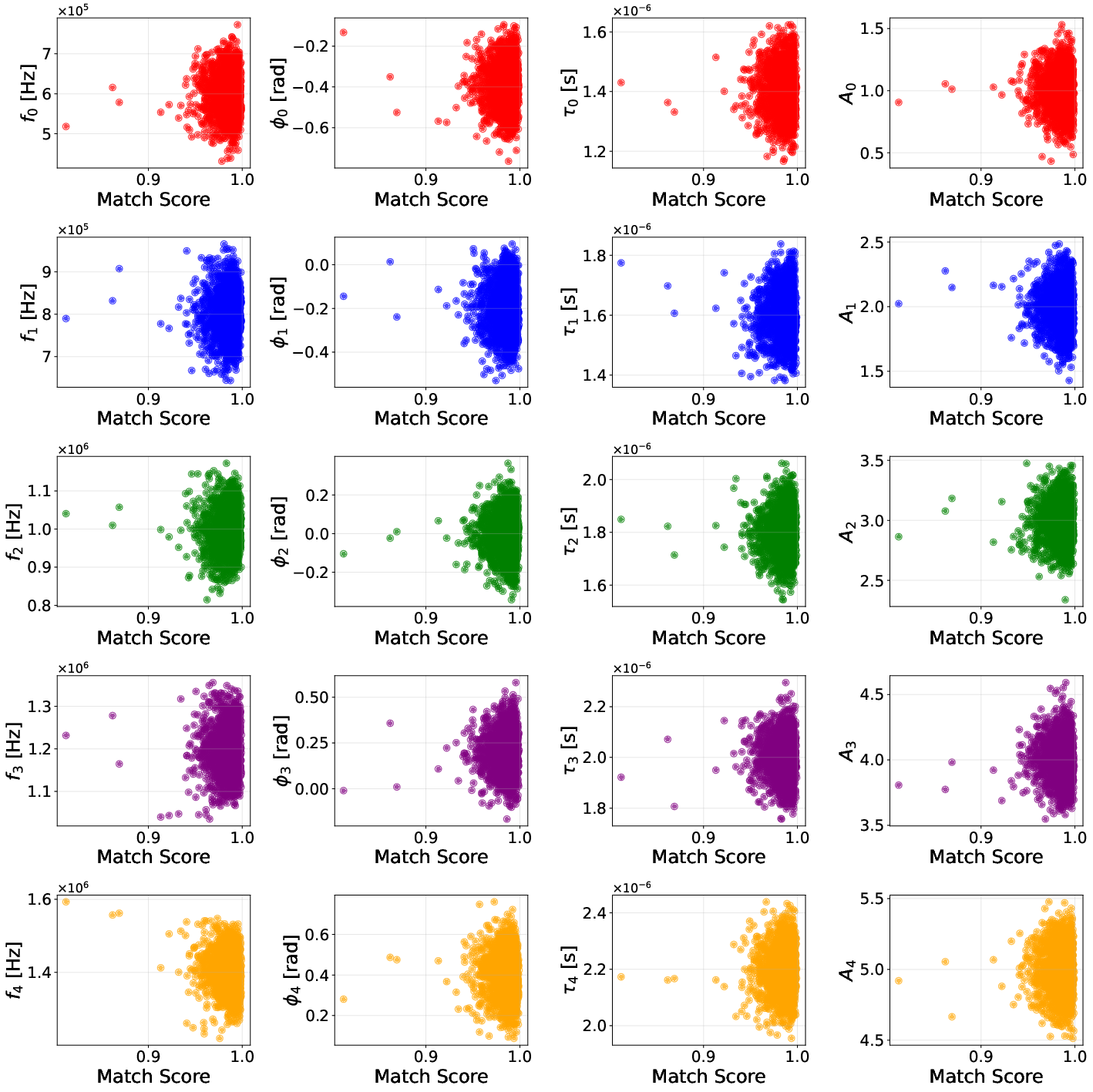

High-Component-Count and Overlap

The approach remains stable and accurate as the number of superposed components increases. For the five-component configuration (Case 3) at substantial noise ([…]), the match score is […], with frequency estimation RMSE for the weakest component below […].

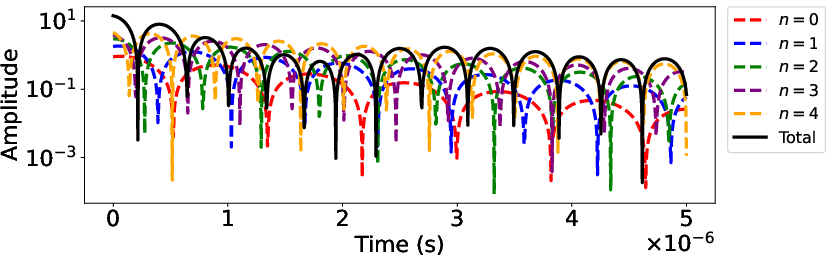

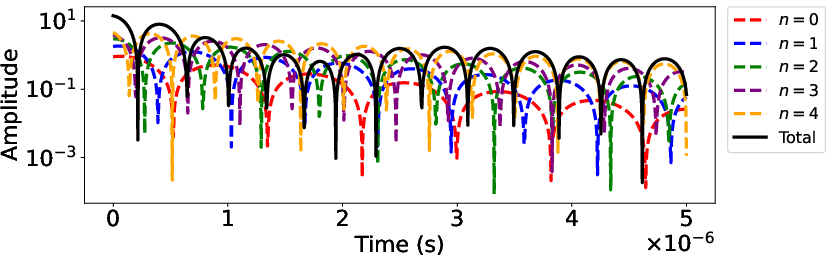

Figure 5: Logarithmic amplitude for a five-component waveform with overlapping components.

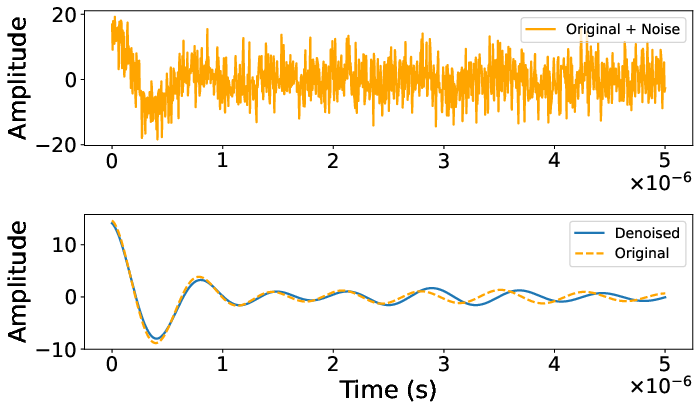

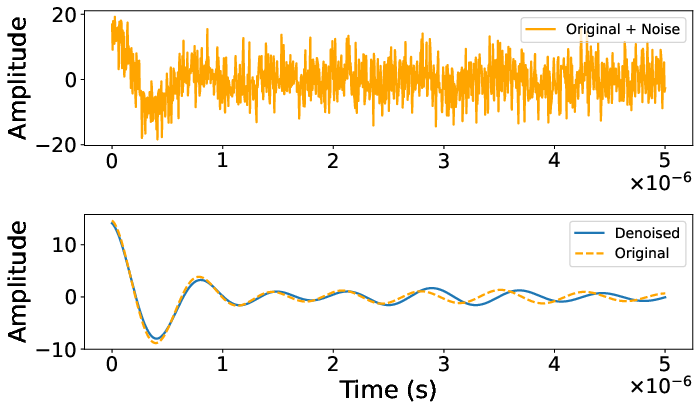

Figure 6: Denoising of a five-component signal (Match score: 0.973).

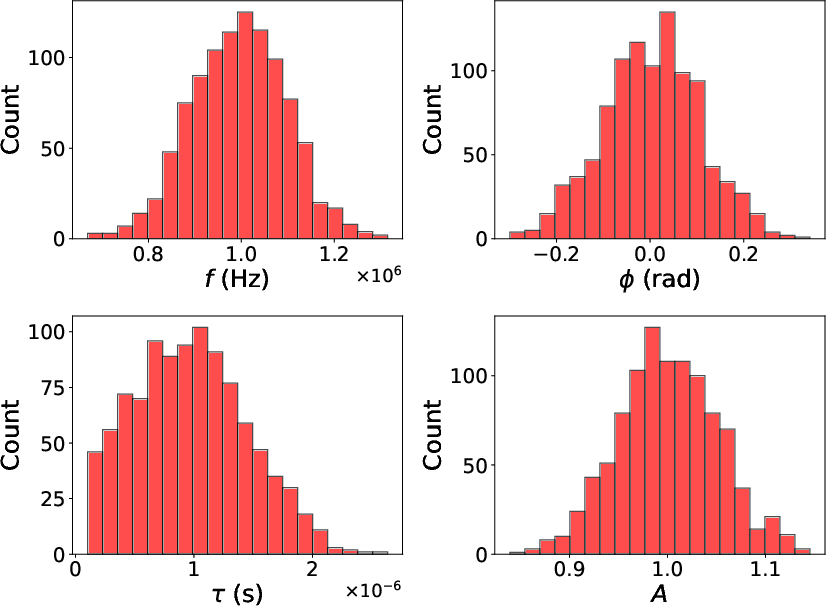

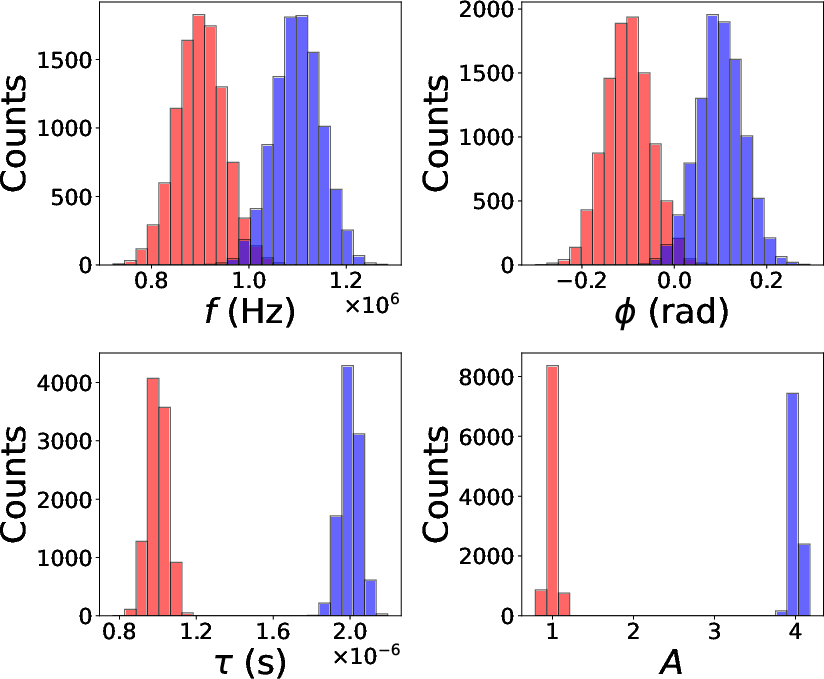

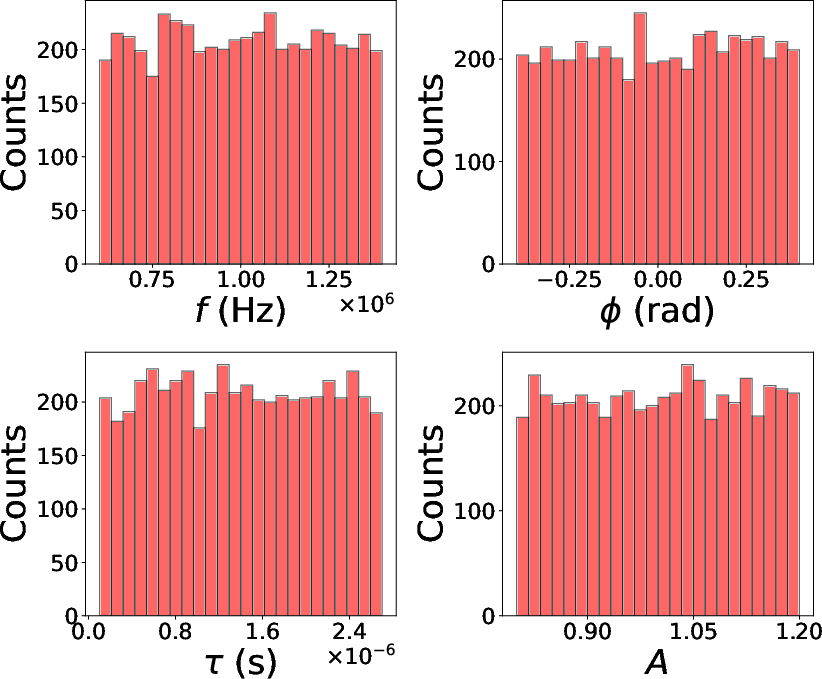

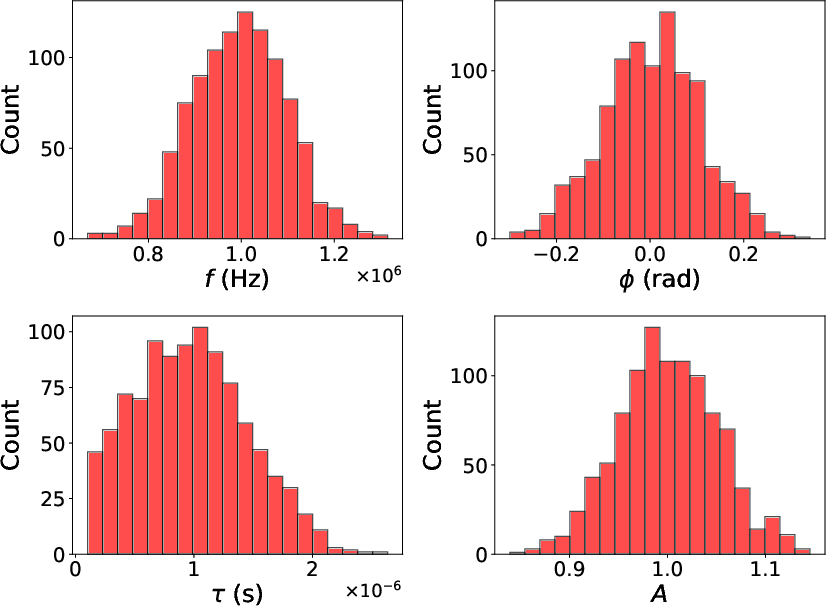

Training Distribution Effects

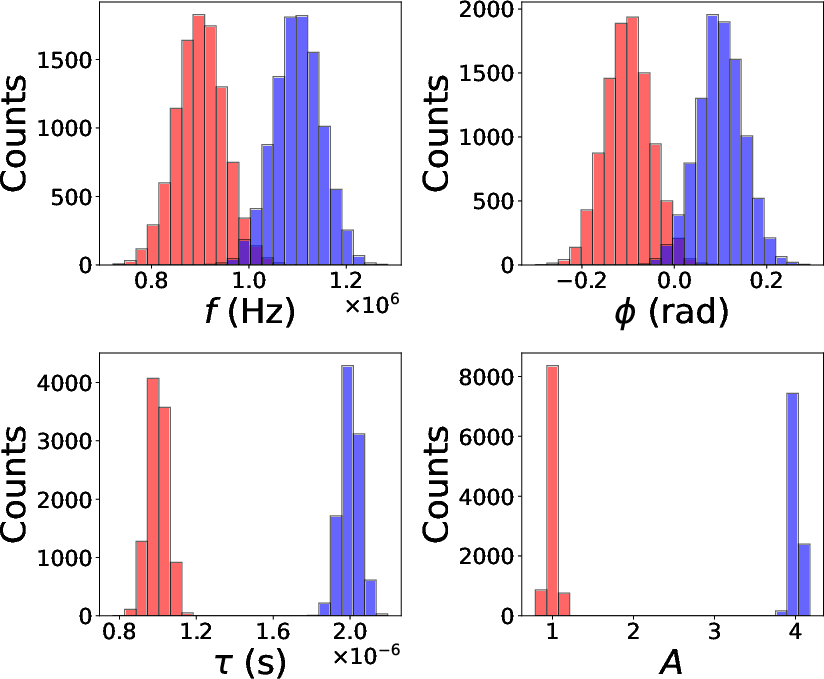

Generalization to non-Gaussian parameter distributions is demonstrated by training on uniformly distributed parameters (see Figure 8).
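The difference between the two kinds of training distribution can be sketched as follows: Gaussian scenarios are specified by a mean and standard deviation per parameter, uniform scenarios by a min-max range. The `sample_params` helper, the spec format, and all numerical values are hypothetical. Note that an unbounded Gaussian can produce unphysical draws (for example a negative decay time), which a real pipeline would need to handle.

```python
import numpy as np


def sample_params(n, spec, rng):
    """Draw n samples per parameter.

    spec maps a parameter name to ("gaussian", mean, std)
    or ("uniform", low, high).
    """
    samples = {}
    for name, (kind, a, b) in spec.items():
        if kind == "gaussian":
            samples[name] = rng.normal(a, b, size=n)
        elif kind == "uniform":
            samples[name] = rng.uniform(a, b, size=n)
        else:
            raise ValueError(f"unknown distribution kind: {kind}")
    return samples


rng = np.random.default_rng(2)
gaussian_spec = {"freq": ("gaussian", 30.0, 3.0), "tau": ("gaussian", 0.3, 0.03)}
uniform_spec = {"freq": ("uniform", 20.0, 40.0), "tau": ("uniform", 0.2, 0.4)}

gaussian_set = sample_params(1000, gaussian_spec, rng)
uniform_set = sample_params(1000, uniform_spec, rng)
```

A uniform training set covers the edges of the parameter range as densely as its center, whereas a Gaussian set concentrates examples near the mean.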

Robust Denoising and Parameter Recovery

Across all cases, the autoencoder achieves near-optimal reconstruction and denoising for most test signals, with match scores generally above 0.98 and parameter-wise errors kept under control, even in aggressively noisy environments.

Figure 8: Example denoising output under non-Gaussian (uniform) training (Match score: 0.997).

Theoretical and Practical Implications

The results indicate that deep-autoencoder-based latent-space representations can serve as effective surrogates for explicit physical models in the parameter estimation of superposed, noisy, damped oscillatory signals. The method offers several notable advantages:

- Scalability: Can handle increasing component counts, facilitating application in multiphysics systems.

- Robustness to training distribution: Effective even when prior information about parameter distributions is weak (uniform priors).

- Resilience to noise and component interference: Handles edge cases such as destructive interference or subdominant/overlapping components with high accuracy.

In practice, this enables applications in domains such as spectroscopy, structural monitoring, and gravitational-wave analysis, where classical methods struggle because of noise or signal complexity. The method is particularly promising for short-duration, low-SNR signals and for settings with limited prior knowledge about signal composition.

Limitations and Future Directions

Estimation accuracy degrades somewhat near the boundaries of the parameter space and under strong overlap (e.g., similar decay rates or frequencies). The method becomes more sensitive as component overlap increases, which underscores the importance of careful training-set design.

Future research should address:

- Comparison to alternative ML-based methods (e.g., Xie et al. [ref:DataDrivenDamped])

- Application to colored noise and mismatched training/test distributions

- Incorporation of domain-specific regularization (e.g., physics-informed constraints)

- Direct application to gravitational wave ringdown analysis (black hole spectroscopy)

- Mitigating limitations observed at distribution extremes and under severe component overlap

Conclusion

The paper presents a comprehensive, rigorous evaluation of a structured autoencoder framework for denoising and parameter estimation in multi-component damped sinusoidal signals embedded in noise (2604.03985). The method achieves high accuracy and robustness in parameter recovery across a wide variety of settings, outperforming traditional analytical estimators especially in complex, noisy, or ambiguous signal regimes, and revealing a promising direction for next-generation data-driven signal analysis in physical sciences.