Real-time Neural Six-way Lightmaps

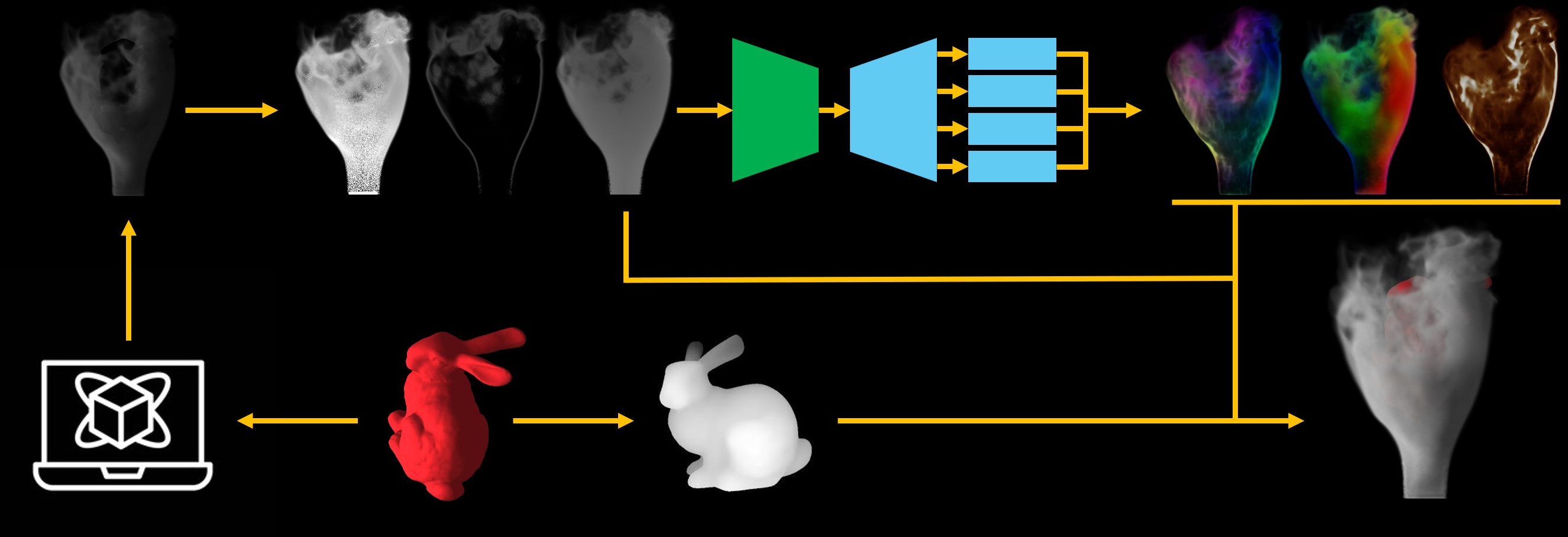

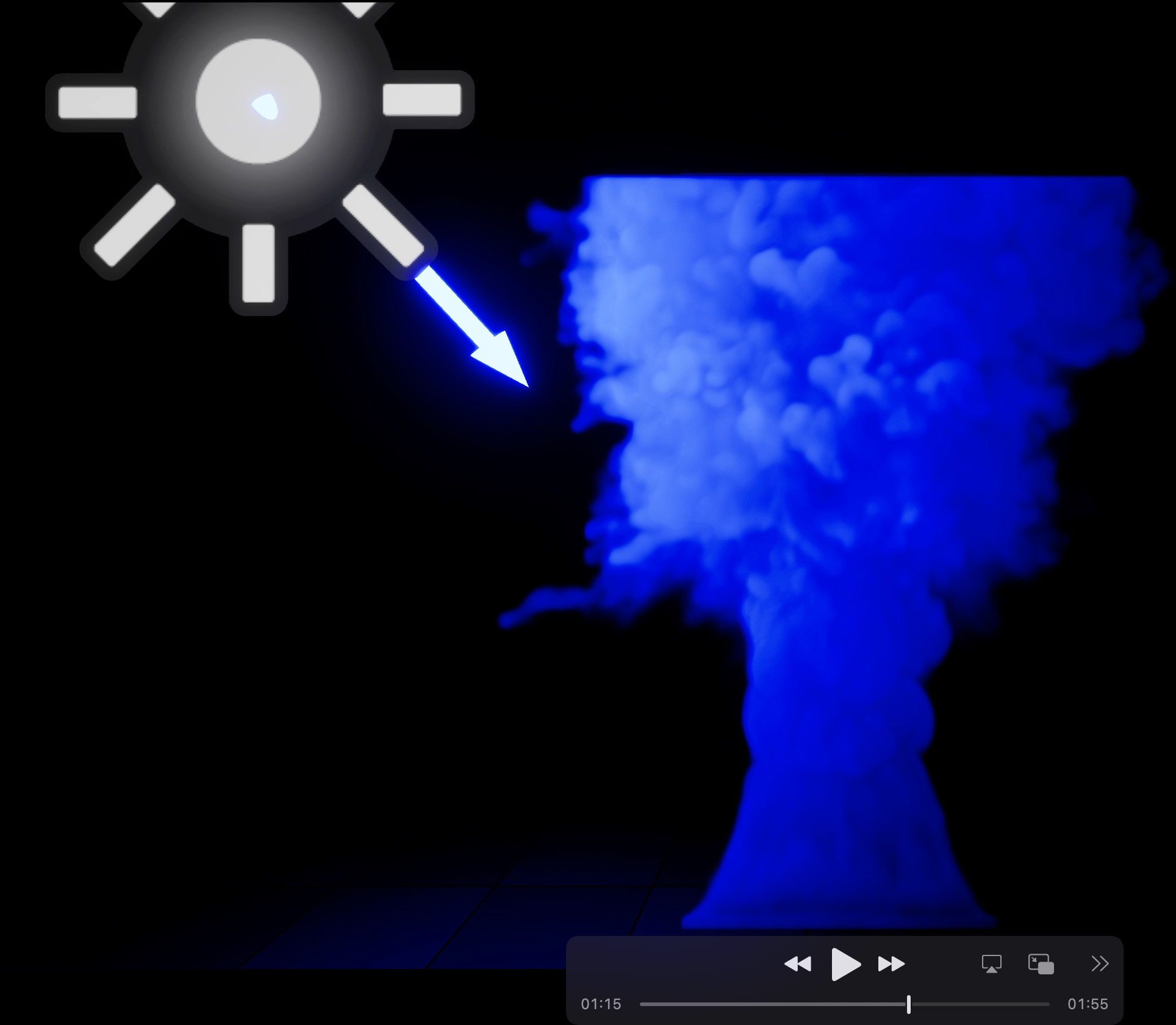

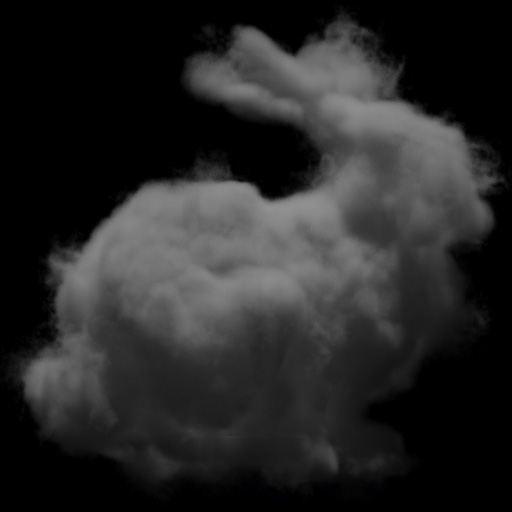

Abstract: Participating media are a pervasive and intriguing visual effect in virtual environments. Unfortunately, rendering such phenomena in real-time is notoriously difficult due to the computational expense of estimating the volume rendering equation. While the six-way lightmaps technique has been widely used in video games to render smoke with a camera-oriented billboard and approximate lighting effects using six precomputed lightmaps, achieving a balance between realism and efficiency, it is limited to pre-simulated animation sequences and is ignorant of camera movement. In this work, we propose a neural six-way lightmaps method to strike a long-sought balance between dynamics and visual realism. Our approach first generates a guiding map from the camera view using ray marching with a large sampling distance to approximate smoke scattering and silhouette. Then, given a guiding map, we train a neural network to predict the corresponding six-way lightmaps. The resulting lightmaps can be seamlessly used in existing game engine pipelines. This approach supports visually appealing rendering effects while enabling real-time user interactivity, including smoke-obstacle interaction, camera movement, and light change. By conducting a series of comprehensive benchmarks, we demonstrate that our method is well-suited for real-time applications, such as games and VR/AR.

Explain it Like I'm 14

Simple Explanation of “Real-time Neural Six-way Lightmaps”

What this paper is about (big picture)

This paper is about making smoke, fog, and explosions in video games look realistic while still running in real time. These effects are hard to draw because light bounces around inside them in complicated ways. The authors present a new method that uses a small neural network to quickly create believable lighting for smoke that you can interact with—move the camera, change the lights, or let smoke flow around objects—without waiting a long time.

The main goals, in plain words

- Make smoke and fog look realistic in real time (fast enough for games and VR).

- Allow the camera and lights to move freely without breaking the effect.

- Let smoke interact with objects (like smoke flowing around a statue and receiving its shadow).

- Keep the method compatible with existing game engines.

How the method works (using everyday ideas)

First, a quick reminder of an older trick:

- Six-way lightmaps: Imagine taking six photos of how light hits smoke from the right, left, top, bottom, front, and back. During gameplay, you blend those six “photos” depending on where the light comes from. This is fast, but traditionally it only works for a fixed camera and pre-baked smoke.
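A minimal sketch of that blending step for a single pixel is shown below. It is an illustration, not the paper's shader: the function names are hypothetical, the light direction is assumed to point from the smoke toward the light in billboard space, and the weights use the squared components of the normalized direction (one common choice, which makes the weights sum to 1).

```python
import math

# Lightmap names per axis: (positive side, negative side).
AXES = (("right", "left"), ("top", "bottom"), ("front", "back"))


def six_way_weights(light_dir):
    """Return one blend weight per lightmap for a light direction (x, y, z)."""
    x, y, z = light_dir
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        raise ValueError("light direction must be non-zero")
    weights = {}
    unit = (x / length, y / length, z / length)
    for component, (pos_name, neg_name) in zip(unit, AXES):
        w = component * component  # assumption: squared-component weighting
        # Only the map on the side the light comes from gets the weight.
        weights[pos_name] = w if component >= 0.0 else 0.0
        weights[neg_name] = w if component < 0.0 else 0.0
    return weights


def shade_pixel(lightmap_values, light_dir, light_intensity=1.0):
    """Blend the six per-pixel lightmap values for one light."""
    weights = six_way_weights(light_dir)
    blended = sum(weights[name] * lightmap_values[name] for name in weights)
    return light_intensity * blended


# Example: a light halfway between "right" and "top".
pixel = {"right": 0.8, "left": 0.1, "top": 0.4,
         "bottom": 0.2, "front": 0.5, "back": 0.3}
print(shade_pixel(pixel, (1.0, 1.0, 0.0)))
```

With several lights, the same function would be called once per light and the results added together.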

The new method keeps the speed of six-way lightmaps but makes them dynamic with a neural network. Here’s the pipeline:

- Simulate the smoke

- A fast physics simulator creates a 3D “density” of smoke (where it’s thick and where it’s thin). Think of it like a 3D cloud map that changes over time.

- Make a guiding map (a rough sketch)

- From the camera’s point of view, they do a quick “ray march,” which means they look along lines through the smoke to estimate what it generally looks like (its silhouette and overall brightness). They use three simple “helper lights” (front, top, bottom) to help show shape. This step is like drawing a rough sketch of the smoke very quickly.

- The guiding map has three channels:

- Rough scattered light (how bright the smoke looks overall)

- Transparency (how much background you can see through it)

- Depth (how far away the “front” of the smoke appears)

- Predict six lightmaps with a neural network

- A compact U-Net (a type of image-to-image neural network) takes the guiding map and predicts:

- Six directional lightmaps (right, left, top, bottom, front, back)

- A transparency map

- An optional emissive map (for glowing effects like fire in explosions)

- The network uses “channel adapters” to better handle groups of directions (front/back, left/right, up/down). Think of these as small specialists that fine-tune different pairs of directions.

- Handle shadows from objects (fast approximation)

- The method compares a “smoke depth map” to a typical shadow map from lights. This quickly estimates whether parts of the smoke are in shadow from nearby objects, without expensive extra calculations.

- Render in the game engine

- The predicted six lightmaps are blended depending on the light direction(s), just like traditional six-way lightmaps. Because the lightmaps are produced on the fly from the camera’s view, they work even when you move the camera or change lights.
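The guiding-map step can be sketched for one pixel as a coarse ray march. This is a simplified illustration under stated assumptions, not the authors' implementation: `density` is a hypothetical function returning smoke density at a 3D point, the helper lights are given as directions toward each light, the light attenuation uses only a few short steps, and `sigma_t` (extinction scale) and `jitter` are made-up parameter names.

```python
import math


def optical_depth(density, start, direction, step, n_steps):
    """Sum density * step along a short ray, sampling at step midpoints."""
    total = 0.0
    for i in range(n_steps):
        t = (i + 0.5) * step
        p = tuple(s + t * d for s, d in zip(start, direction))
        total += density(p) * step
    return total


def guiding_pixel(density, origin, direction, t_max, step,
                  light_dirs, sigma_t=1.0, jitter=0.5):
    """Coarse ray march for one pixel.

    Returns (scatter, transparency, depth). depth is None if the ray
    never enters the smoke.
    """
    transmittance = 1.0
    scatter = 0.0
    depth = None
    t = jitter * step  # jitter in [0, 1) offsets the first sample
    while t < t_max:
        p = tuple(o + t * d for o, d in zip(origin, direction))
        rho = density(p)
        if rho > 0.0:
            if depth is None:
                depth = t  # first sample that lands inside the smoke
            # Average light arriving from the helper lights, dimmed by smoke
            # (assumption: only 4 short steps toward each light).
            light = 0.0
            for ld in light_dirs:
                light += math.exp(-sigma_t * optical_depth(density, p, ld, step, 4))
            light /= len(light_dirs)
            absorbed = 1.0 - math.exp(-sigma_t * rho * step)
            scatter += transmittance * absorbed * light
            transmittance *= 1.0 - absorbed
        t += step
    return scatter, transmittance, depth


def sphere(p):
    """Toy smoke: a unit ball of constant density at the origin."""
    return 1.0 if sum(c * c for c in p) < 1.0 else 0.0


# Camera looks along +z; helper lights: front (camera side), top, bottom.
HELPERS = ((0.0, 0.0, -1.0), (0.0, 1.0, 0.0), (0.0, -1.0, 0.0))
print(guiding_pixel(sphere, (0.0, 0.0, -3.0), (0.0, 0.0, 1.0), 6.0, 0.25, HELPERS))
```

Running this for every pixel of the billboard would give the three channels described above. The large step is what keeps it cheap, and the neural network is then responsible for turning this rough sketch into clean lightmaps.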

What they found (results) and why it matters

- It’s fast: The guiding map plus neural network takes around 3 milliseconds; final shading is under 1 millisecond. That’s well within real-time budgets.

- It’s interactive: You can move the camera, change the lights, and let smoke interact with objects—unlike traditional pre-baked six-way lightmaps that often break when you move the camera around.

- It looks good: Compared to advanced methods that simulate lighting inside smoke more exactly (but slowly), this method gives clean, stable images without noisy flicker—and still runs in real time.

- It works in real engines: They integrated it into Unreal Engine and showed examples like chimney smoke, jet flows, and explosions, with better realism than simple particle-based smoke.
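To put the timing figures in context, the short calculation below compares them with the time available per frame at a few refresh rates. It is a back-of-the-envelope sketch: the 4 ms total is an assumed upper bound built from the "around 3 ms" and "under 1 ms" figures above, and the function names are hypothetical.

```python
def frame_budget_ms(refresh_hz):
    """Time available per frame, in milliseconds."""
    return 1000.0 / refresh_hz


def budget_share(cost_ms, refresh_hz):
    """Fraction of the frame budget used by a pass that costs cost_ms."""
    return cost_ms / frame_budget_ms(refresh_hz)


# Assumed upper bound from the quoted figures: ~3 ms + <1 ms.
SMOKE_COST_MS = 3.0 + 1.0

for hz in (60, 90, 120):
    print(f"{hz} Hz: budget {frame_budget_ms(hz):.2f} ms, "
          f"smoke uses {budget_share(SMOKE_COST_MS, hz):.0%}")
```

Under these assumptions the smoke takes roughly a quarter of a 60 Hz frame but close to half of a 120 Hz frame, which is why higher VR frame rates may need further optimization.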

Why this is important (impact and future possibilities)

- Better visuals in games and VR/AR: You get convincing smoke, fog, and explosions that react to the scene and the player.

- Practical for developers: It plugs into existing pipelines (lightmaps) and runs fast enough for real use.

- Fewer limits: Unlike the old approach, you’re not stuck with a fixed camera or pre-baked sequences.

- Room to grow: The same idea—quick “sketch,” then neural network—could be adapted to other volumetric effects like clouds, dust, or magical effects. Future work could make shadows even more accurate or support more complex lights.

In short, this paper shows how to blend a quick physical hint (the guiding map) with a smart neural network to create realistic, fast, and flexible smoke lighting—perfect for modern interactive applications.

Knowledge Gaps

Unresolved Knowledge Gaps, Limitations, and Open Questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper.

- Physical fidelity beyond single-scattering: Training data is generated with single-bounce scattering and an isotropic Henyey–Greenstein (HG) phase function (g = 0); the method’s accuracy under multi-bounce scattering and anisotropic phase functions (g ≠ 0) is untested. Evaluate and extend to multi-scattering and to varying g and albedo.

- Environment/area lighting: Six-way interpolation targets directional lighting; support and accuracy for extended lights (area/spot), soft shadows, and HDR environment maps remain unaddressed. Investigate basis expansions (e.g., SH) or learned light bases.

- Full bidirectional object–smoke light transport: Current screen-space “outer shell” only approximates obstacle-to-smoke shadows. Smoke casting shadows onto geometry, internal self-shadowing within the volume, and multi-layer occlusions are not modeled. Explore deep shadow maps, deep opacity volumes, or volumetric shadow mapping.

- Near-field parallax and billboard limitations: Despite dynamic view handling, a 2D lightmap on a billboard cannot reproduce true volumetric parallax, thick-volume depth ordering, or close-up occlusions. Assess multi-plane impostors, layered lightmaps, or sparse volumetric proxies.

- Overlapping volumes and compositing: Interaction and correct blending between multiple smoke emitters/layers and other transparent effects (order-independent transparency, depth peeling) are not addressed. Develop compositing strategies compatible with predicted lightmaps.

- Generalization scope: Training uses 14 LBM sequences and limited camera angles; out-of-distribution (OOD) testing is minimal. Systematic evaluation across simulators (e.g., FLIP/PIC, gridless), turbulence regimes, and third-party assets is missing. Build broader, parameterized datasets and report generalization metrics.

- Scaling with resolution, scene complexity, and instance count: All results are at 512² lightmaps on a single instance. Behavior at 1K–4K, wide FOVs, many simultaneous smoke assets, and large screens is unknown. Profile quality/performance/memory scaling.

- Multiple lights at scale: While summation across lights is suggested, accuracy and cost with many lights (dozens) or varied types (point/spot/area) are not quantified. Benchmark quality and amortized costs for multiple-lights scenarios.

- Guiding map design sensitivity: The method fixes three guiding directions and a coarse step size (h=10Δx) with jitter. The impact of step size, jitter amplitude, number/orientation of guiding lights, and alternative screen-space features (e.g., multiscale integrals, normals/curvature) is not studied. Provide sensitivity analyses and optimal configurations.

- Temporal stability under stress: Although a flow loss is used, there is no quantitative analysis (e.g., temporal SSIM, flicker metrics) under rapid camera/light changes or simulator noise. Define and evaluate temporal stability metrics and worst cases.

- Colored/spectral media: The pipeline appears to treat scattering as largely gray, with an RGB emissive add-on; wavelength-dependent scattering/absorption, chromatic phase functions, and spectrally colored lights/media are not supported. Extend to spectral or trichromatic coefficients and validate.

- Emissive modeling generality: Emission training relies on a Houdini-specific remap function. Robustness to other fire/combustion shading models and physically-based emission parametrizations is unknown. Condition the network on emissive parameters and test across models.

- Dynamic/deforming occluders: Only rigid-body obstacles are demonstrated. Behavior with skinned meshes, deformables, cloth, and fast-moving occluders (including motion blur) is unexplored. Evaluate and, if needed, adapt shadowing and guiding inputs.

- Scene integration and post-processing: Interactions with HDR pipelines, exposure changes, tone mapping, TAA, motion blur, and temporal upscaling (DLSS/FSR) can introduce artifacts, but are not analyzed. Provide guidelines and compatibility evaluation.

- Energy and radiometric correctness: Training in sRGB with perceptual losses may bias radiance. No validation of energy conservation or radiometric accuracy vs. linear HDR reference is provided. Assess in linear space and report radiometric error.

- Mobile/console/VR readiness: Results are on a high-end GPU; feasibility on consoles, mobile, and standalone VR (lower power, tight latency) is unknown. Explore model compression, quantization, half/INT inference, and latency budgets.

- Stereo consistency for VR: Screen-space conditioning may cause inter-eye inconsistencies. Stereo/simultaneous dual-view training or view-consistent features are not explored. Measure and mitigate stereo mismatch.

- Failure mode characterization: The paper mentions blockiness in guiding maps but lacks systematic analysis of failure cases (e.g., ultra-thin wisps, very dense fog, extreme lighting contrast, grazing angles). Curate stress tests and publish failure taxonomies.

- Training data cost and iteration speed: Generating >25k GT lightmaps takes hours; strategies for frequent content iteration (incremental training, fine-tuning, distillation, synthetic augmentation) are not discussed. Develop faster update pipelines for production.

- Theoretical/empirical error bounds: No error bounds or convergence analysis exists for the learned six-way prediction vs. path-traced ground truth across scene parameters. Define benchmarks, confidence intervals, and error predictors for content creators.

Practical Applications

Immediate Applications

The following applications can be deployed now with standard game/VFX pipelines and a modern GPU. Each item summarizes target sectors, concrete use cases, likely tools/workflows, and feasibility caveats.

- Real-time volumetric VFX in game engines

- Sectors: Software, Gaming, VR/AR

- Use cases: Dynamic smoke, fog, and explosions with moving lights/cameras; smoke–object interactions; replacement for billboards/flipbooks to improve realism without Monte Carlo noise.

- Tools/workflows: Unreal Engine or Unity plugin; shader/material using neural six-way lightmaps; 512×512 textures; support for multiple directional/point lights via weighted interpolation; artist-driven smoke authored with a lightweight LBM simulator or imported density fields.

- Assumptions/dependencies: Requires GPU inference (CUDA/TensorRT-class or engine-native ML runtime); current network trained largely on isotropic scattering and 512×512 lightmaps; approximated object→smoke shadows (screen-space), no accurate smoke→object shadows; performance tuned for PC/console-class hardware.

- VR/AR experiences with volumetric effects

- Sectors: VR/AR, Entertainment, Education

- Use cases: Immersive scenes (e.g., tunnels, factories, outdoors) with dynamic smoke reacting to user interactions and lighting in real time.

- Tools/workflows: Integrate into XR rendering passes; expose runtime controls for light color/position and obstacle motion; tie into interaction systems.

- Assumptions/dependencies: VR framerates (80–120 Hz) may require further optimization (e.g., lower resolution, foveated rendering, network pruning/quantization); mobile XR requires device-optimized inference.

- Virtual production and previsualization

- Sectors: Media & Entertainment

- Use cases: On-set previs of smoke/explosions inside LED volumes; fast iteration for lighting and camera blocking without heavy path tracing.

- Tools/workflows: Unreal-based virtual production pipelines; real-time relighting and camera moves; art-directable smoke via quick sims or asset libraries.

- Assumptions/dependencies: Visual plausibility-oriented; final shots may still use offline volume path tracing; screen-space shadowing may differ from ground truth.

- Interactive training and safety drills

- Sectors: Enterprise Training, Public Safety, Education

- Use cases: Fire safety drills, plant operations training, emergency response scenarios where believable smoke obscuration and lighting are needed.

- Tools/workflows: Training platforms (Unreal/Unity); scenario authoring with dynamic obstacles and lights; configurable density and emissive channels for explosions.

- Assumptions/dependencies: Not a substitute for validated CFD in engineering decision-making; meant for visualization and behavioral training.

- Level design and VFX authoring productivity

- Sectors: Software, Creative Tools

- Use cases: Rapid look-dev of volumetric effects; reduced reliance on pre-baked flipbooks; real-time iteration of light setups.

- Tools/workflows: Editor tools to spawn smoke volumes and predict lightmaps on the fly; sliders for density/emissive intensity; version control of neural-lightmap assets.

- Assumptions/dependencies: Artists may need updated workflows (guiding map generation, neural lightmap preview); requires engine-side ML integration.

- Cloud gaming and performance optimization on PC/console

- Sectors: Cloud Gaming, Game Platforms

- Use cases: Maintain high-quality volumetrics at low frame budgets by replacing expensive volumetric path tracing or heavy particle systems.

- Tools/workflows: Deployment via engine plugins; inference on datacenter GPUs or client GPUs; dynamic light additions via six-way interpolation.

- Assumptions/dependencies: Performance depends on GPU ML throughput; CPU-only paths are unlikely to meet real-time budgets.

- Robotics/AV simulation: camera-based visual domain randomization

- Sectors: Robotics, Autonomous Vehicles

- Use cases: Generate camera-rendered training data in smoky/foggy conditions to test robustness of vision models in simulators.

- Tools/workflows: Integrate into robotics simulators (e.g., Unreal/Unity-based); randomize density, lighting, and camera trajectories.

- Assumptions/dependencies: Visual realism-focused; not validated for physically accurate sensor modeling (e.g., LiDAR/radar returns); use for vision-only augmentation.

- Digital twins and operator dashboards (visual briefing)

- Sectors: Industrial Operations, Smart Buildings

- Use cases: Visual overlays of smoke propagation for training, briefings, and communications; interactive demos during safety planning.

- Tools/workflows: Embed into 3D dashboards; real-time adjustment of vents/obstacles/lights for scenario walkthroughs.

- Assumptions/dependencies: Qualitative visualization; not suitable for regulatory compliance or quantitative prediction.

- Education and outreach

- Sectors: Education, Science Communication

- Use cases: Interactive demonstrations of volumetric scattering and fluid behavior in classrooms/museums.

- Tools/workflows: Lightweight LBM-based sims; live relighting; viewport overlays explaining transmittance and phase effects.

- Assumptions/dependencies: Pedagogical focus; simplified physics (e.g., isotropy) acceptable.

- Asset marketplaces and middleware

- Sectors: Content Platforms, Middleware

- Use cases: Distribute neural-lightmap-ready smoke assets; turnkey plugins offering dynamic volumetric effects.

- Tools/workflows: Package pretrained weights, materials, and sample scenes; versioned SDK for engines.

- Assumptions/dependencies: Ecosystem/engine support for on-device inference and six-way-lightmap shading.

Long-Term Applications

These applications require additional research, scaling, validation, or platform support before broad deployment.

- Mobile XR and WebGPU deployment

- Sectors: Mobile, XR, Web

- Use cases: High-fidelity smoke in mobile games/AR and web-based 3D experiences.

- Tools/workflows: Network compression (pruning, quantization), ONNX/WebGPU backends, foveated/tiling strategies.

- Assumptions/dependencies: Tight memory/thermal budgets; mobile NPUs/GPUs and web runtimes must support efficient inference.

- Physically grounded planning and policy tools (with CFD coupling)

- Sectors: Urban Planning, Building Safety, Policy

- Use cases: Public consultations and scenario planning augmented with validated physics for evacuation/smoke control design.

- Tools/workflows: Couple the neural renderer to certified CFD solvers for accurate density fields; validated pipelines for compliance workflows.

- Assumptions/dependencies: Requires rigorous validation, uncertainty quantification, and regulatory acceptance; current method is not a design-grade solver.

- Multi-sensor adverse-weather simulation for robotics/AV

- Sectors: Robotics, Automotive

- Use cases: Joint simulation of camera, LiDAR, and radar responses in smoke/fog with real-time performance.

- Tools/workflows: Extend guiding maps to simulate wavelength-dependent scattering, backscatter, and transmittance; multi-modal neural components.

- Assumptions/dependencies: Substantial physics extensions (anisotropy, spectral effects), sensor-specific validation, and new training datasets.

- Generalization to broader participating media and lighting

- Sectors: Software, Gaming, VFX

- Use cases: Clouds, dust storms, mist, and complex phase functions; high-order multiple scattering and area lights.

- Tools/workflows: Expanded datasets (varying g-parameters, spectral behavior), network architecture upgrades, hybrid Monte Carlo guidance.

- Assumptions/dependencies: Increased model capacity and training cost; may need 3D-aware priors for highly anisotropic media.

- Post-production relighting for 2D footage

- Sectors: Media & Entertainment, Creative Tools

- Use cases: Relight smoke in plates during compositing without full 3D reconstruction.

- Tools/workflows: Nuke/After Effects plugin; monocular depth/segmentation to estimate guiding maps from footage; neural six-way lightmaps for relighting.

- Assumptions/dependencies: Reliable monocular depth/alpha estimation in real scenes; domain adaptation for real-to-synthetic transfer.

- Differentiable, inverse-designed volumetrics for creators

- Sectors: Creative Tools, Software

- Use cases: Artist-driven objectives (e.g., silhouette, rim lighting) solved by optimizing density/emission under neural lightmap constraints.

- Tools/workflows: Differentiable pipeline exposing gradients through guiding map and network; constraint-based authoring UI.

- Assumptions/dependencies: Requires stable, end-to-end differentiable implementation and regularization to avoid artifacts.

- Procedural/generative asset creation

- Sectors: AI/Content Creation

- Use cases: AI-assisted generation of dynamic smoke assets with consistent neural lightmap behavior.

- Tools/workflows: Combine diffusion/NeRF-style priors with the six-way lightmap predictor; auto-rigging of emissive channels for explosions.

- Assumptions/dependencies: Large curated datasets of volumetric effects; controls for temporal coherence and editability.

- Engine-level global volumetric lighting integration

- Sectors: Game Engines, Rendering Middleware

- Use cases: Hybrid pipelines that blend neural lightmaps with ReSTIR or voxel GI for unified scene lighting.

- Tools/workflows: Scheduling and resource sharing between ray-based and neural passes; dynamic importance sampling guided by predicted lightmaps.

- Assumptions/dependencies: Careful budget partitioning; APIs for interop between denoisers, ray tracing, and neural passes.

- Standards and best practices for training-based volumetric VFX

- Sectors: Industry Consortia, Standards Bodies

- Use cases: Authoring and interchange guidelines (datasets, phase-function settings, QA metrics) for neural volumetric effects.

- Tools/workflows: Open benchmarks (PSNR, MSE, temporal consistency), asset metadata for lightmap networks.

- Assumptions/dependencies: Cross-vendor alignment; shared reference implementations and datasets.

- Domain-specific training (e.g., industrial plants, tunnels)

- Sectors: Energy, Transportation, Mining

- Use cases: Operational training and visualization tailored to typical geometry and lighting in specific industries.

- Tools/workflows: Curated datasets reflecting domain geometry/materials; scenario editors; integration with digital twin platforms.

- Assumptions/dependencies: Domain adaptation effort; alignment with site policies and IT/security constraints.

Glossary

- Advection: Transport of a quantity (like smoke density) by a flow field over time. "grid-based smoke advection"

- Alpha channel: An image/texture channel used to store transparency or related per-pixel scalar data. "the alpha channel for the first texture contains the transparency"

- Billboard: A flat polygon that always faces the camera to approximate volumetric or complex geometry. "camera-facing billboard"

- Delta tracking: A stochastic free-path sampling technique for simulating light transport in heterogeneous media. "delta tracking~\cite{Novak2014}"

- Emissive: Describing self-emitted light from a medium or surface, often stored as an emission/color term. "transparency and emissive color"

- Extinction coefficient: The sum of absorption and scattering coefficients that governs attenuation along a ray. "the extinction coefficient"

- Henyey–Greenstein phase function: A common analytic phase function parameterizing the anisotropy of scattering. "Henyey-Greenstein phase function"

- In-scattered radiance: Light added at a point within a medium due to scattering of incoming light from all directions. "the in-scattered radiance term:"

- Lattice Boltzmann method (LBM): A numerical fluid simulation method that models flow using particle distributions on a lattice. "based on the lattice Boltzmann method (LBM)~\cite{li2023high}"

- Mipmap hierarchy: A multi-resolution pyramid of textures/volumes used to accelerate sampling and filtering. "multi-level 3D mipmap hierarchy"

- Monte Carlo (MC) path sampling: Stochastic sampling of light paths to estimate rendering integrals in complex scenes. "relying on Monte Carlo (MC) path sampling"

- NAFBlocks: Neural network building blocks designed for efficient image restoration without nonlinear activations. "implemented as NAFBlocks~\cite{chen2022simple}"

- Neural Radiance Fields (NeRF): A neural representation that maps 3D coordinates and view direction to color and density for view synthesis. "Neural Radiance Fields (NeRF)~\cite{Mildenhall2021NeRF}"

- Null-collision techniques: Sampling strategies that introduce fictitious interactions to enable unbiased traversal in heterogeneous media. "null-collision techniques~\cite{Butcher1958, Georgiev2013, Kutz2017}"

- Optical flow: A per-pixel motion field between frames that captures apparent image-space motion. "optical flow fields"

- Participating media: Volumetric materials (e.g., smoke, fog) that absorb, emit, and scatter light. "Participating media are a pervasive and intriguing visual effect"

- Phase function: The angular distribution governing how light is scattered by particles in a medium. "the media phase function."

- Path tracing: A rendering algorithm that stochastically traces light paths to simulate global illumination. "Path tracing and Monte Carlo sampling offer a general framework"

- Ray marching: Numerical integration along a ray using discrete steps to approximate volume rendering. "ray marching~\cite{Raab2008}"

- ReSTIR: A spatiotemporal importance resampling method to efficiently reuse samples for real-time rendering. "ReSTIR~\cite{Lin2021}"

- Screen-space: The image-plane coordinate space after projection, used for view-dependent computations. "screen-space guiding map"

- Shadow map: A depth map rendered from the light’s viewpoint to determine shadowed regions. "compared against a shadow map"

- Six-way lightmaps: A set of six precomputed directional lightmaps (±X, ±Y, ±Z) for fast volumetric lighting. "six-way lightmaps technique"

- Sprite-based particle effects: Particle systems rendered as textured quads (sprites) to approximate volumetric phenomena. "sprite-based particle effects"

- Transmittance: The fraction of light that remains unattenuated along a path through a medium. "The transmittance function"

- U-Net: An encoder–decoder convolutional network with skip connections for image-to-image tasks. "A U-Net with specialized channel adapters"

- Virtual Point Lights (VPLs): Point light proxies sampled from light transport to approximate indirect illumination. "Virtual Point Lights (VPLs)~\cite{keller1997instant}"

- Virtual Ray Lights (VRLs): Line/beam light proxies that extend VPLs for more accurate volumetric lighting. "Virtual Ray Lights (VRLs)~\cite{Novak2012vrl}"

- Volume path tracing: Path tracing specialized for volumes to account for absorption, scattering, and emission. "with volume path tracing."

- Volume rendering equation: The integral equation describing radiance transport through participating media. "The volume rendering equation represents incident radiance"

- Voxel: A 3D pixel (grid cell) representing volumetric data in a discretized field. "voxel width"
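For readers who want the formulas behind the Transmittance and Henyey–Greenstein entries above, the standard textbook forms are sketched below. The symbols are conventional notation and are assumptions here, since the quoted excerpts do not show the paper's own symbols: σ_t is the extinction coefficient, g is the anisotropy parameter, and θ is the angle between the incoming and scattered directions.

```latex
% Transmittance between points a and b along a ray
T(a, b) = \exp\!\left( -\int_{a}^{b} \sigma_t(x)\, \mathrm{d}x \right)

% Henyey-Greenstein phase function, normalized over the sphere
p_{\mathrm{HG}}(\cos\theta) = \frac{1}{4\pi}\,
    \frac{1 - g^{2}}{\left(1 + g^{2} - 2 g \cos\theta\right)^{3/2}},
    \qquad -1 < g < 1

% Isotropic special case: light scatters equally in all directions
g = 0 \;\Rightarrow\; p_{\mathrm{HG}}(\cos\theta) = \frac{1}{4\pi}
```

Positive g favors forward scattering and negative g favors backward scattering; g = 0 is the isotropic case.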
