- The paper presents a neural network method that generates high-quality hard and soft shadows in real time using a modified UNet.

- It incorporates bilinear interpolation, algebraic sum layers, and a VGG-19 perceptual loss to improve performance and visual fidelity.

- Experimental results show competitive performance, improved temporal stability, and good generalization to unseen objects, even on low-power hardware.

Neural Shadow Mapping

Introduction

"Neural Shadow Mapping" presents a machine learning approach that efficiently produces high-quality hard and soft shadows with a neural network architecture tailored for real-time applications. The technique aims to improve on current shadow mapping methods by delivering results comparable to ray tracing without its computational cost. It does so by feeding rasterized buffers to a neural network optimized for deployment on low-end GPU hardware.

Methodology

Feature Engineering

The network inputs are screen-space buffers produced by a G-buffer pass and a shadow mapping pass. These include view-space depths, emitter-to-occluder depths, pixel-to-emitter distances, and dot products that capture the spatial relationship between surfaces and the emitter. The network is trained with ray-traced shadows as targets. A novel aspect of the approach is that the emitter size is encoded as a scalar that modulates the inputs, which lets the network predict how soft each shadow should be.
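To make the feature list concrete, the sketch below assembles one pixel's input vector from quantities a G-buffer and a shadow map would provide. The function and parameter names are hypothetical, and the choice of dot product (surface normal against the direction to the emitter) is an assumption. As a simplified stand-in for the paper's modulation scheme, which this summary does not spell out, the emitter size r_e is simply appended as an extra channel.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def normalize(a: Vec3) -> Vec3:
    n = math.sqrt(dot(a, a))
    return (a[0] / n, a[1] / n, a[2] / n)


def pixel_features(
    world_pos: Vec3,        # from the G-buffer
    normal: Vec3,           # from the G-buffer
    view_depth: float,      # view-space depth from the G-buffer
    occluder_depth: float,  # emitter-to-occluder depth sampled from the shadow map
    emitter_pos: Vec3,
    emitter_radius: float,  # r_e; 0 would correspond to a point light
) -> List[float]:
    """Hypothetical per-pixel input vector (not the paper's exact channel set)."""
    to_emitter = sub(emitter_pos, world_pos)
    emitter_dist = math.sqrt(dot(to_emitter, to_emitter))  # pixel-to-emitter distance
    n_dot_l = max(0.0, dot(normalize(normal), normalize(to_emitter)))
    # Assumption: r_e is appended as its own channel rather than multiplied in.
    return [view_depth, occluder_depth, emitter_dist, n_dot_l, emitter_radius]


print(pixel_features((0.0, 0.0, 0.0), (0.0, 1.0, 0.0), 2.0, 3.0, (0.0, 4.0, 0.0), 0.5))
# prints [2.0, 3.0, 4.0, 1.0, 0.5]
```

In a renderer these values would be computed for every pixel in a shader and written to screen-space buffers; the per-pixel function only shows which quantities go into each channel.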

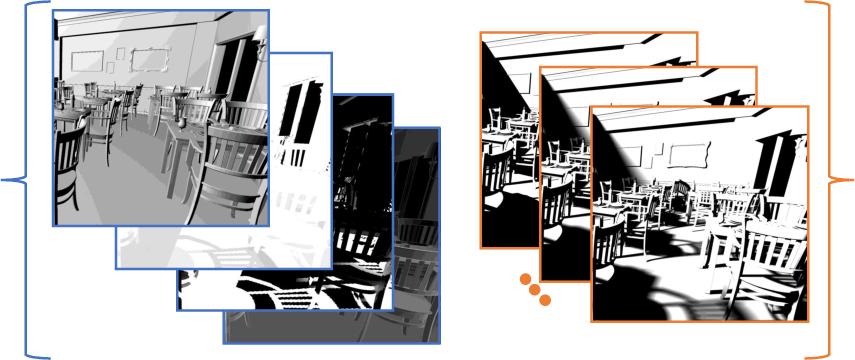

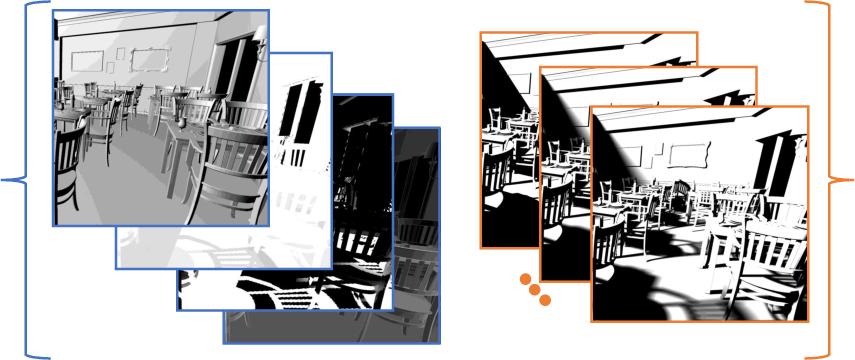

Figure 1: Visualizing supervised learning pairs. The network inputs are the rasterization buffers modulated by the emitter size (r_e). The targets are ray traced with the corresponding emitter size.

Network Architecture

The network is a modified UNet architecture optimized to reduce memory bandwidth and improve runtime performance. Key modifications include:

- Bilinear interpolation instead of transposed convolutions for upsampling.

- Algebraic sum layers instead of concatenation for skip connections.

- Conversion to half precision to reduce model size.
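The three modifications can be illustrated on tiny feature maps stored as nested lists. This is a hypothetical, framework-free sketch, not the paper's implementation: a bilinear 2x upsample (using half-pixel sample centers, one common convention) replaces a transposed convolution and has no learned weights; an element-wise sum skip connection keeps the channel count at C, whereas concatenation doubles it to 2C and so doubles the data the next layer must read; and Python's `struct` half-precision format (`'e'`) shows how FP16 storage rounds each weight.

```python
import struct
from typing import List

Channel = List[List[float]]   # H x W
FeatureMap = List[Channel]    # C x H x W


def upsample_bilinear_2x(img: Channel) -> Channel:
    """Bilinear 2x upsampling with half-pixel centers and edge clamping."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(2 * h):
        sy = min(max((y + 0.5) / 2 - 0.5, 0.0), h - 1)  # source row coordinate
        y0 = int(sy)
        y1 = min(y0 + 1, h - 1)
        fy = sy - y0
        row = []
        for x in range(2 * w):
            sx = min(max((x + 0.5) / 2 - 0.5, 0.0), w - 1)  # source column coordinate
            x0 = int(sx)
            x1 = min(x0 + 1, w - 1)
            fx = sx - x0
            top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
            bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


def skip_sum(decoder: FeatureMap, encoder: FeatureMap) -> FeatureMap:
    """Algebraic-sum skip connection: output keeps C channels."""
    return [
        [[d + e for d, e in zip(d_row, e_row)] for d_row, e_row in zip(d_ch, e_ch)]
        for d_ch, e_ch in zip(decoder, encoder)
    ]


def skip_concat(decoder: FeatureMap, encoder: FeatureMap) -> FeatureMap:
    """Standard UNet concatenation: output has 2C channels."""
    return decoder + encoder


def to_half(x: float) -> float:
    """Round a float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


print(upsample_bilinear_2x([[0.0, 4.0]]))  # [[0.0, 1.0, 3.0, 4.0], [0.0, 1.0, 3.0, 4.0]]
print(to_half(0.1))                        # 0.0999755859375
```

Each half-precision value occupies 2 bytes instead of the 4 bytes of a 32-bit float, which is where the size reduction comes from; the rounding error shown for 0.1 is the price paid.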

Additionally, the first layer of the network is often a bottleneck because it is dominated by memory operations. Rearranging the input channels to maximize cache efficiency eliminates most of this first-layer cost and reduces the runtime to approximately 5.8 ms.
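The summary does not say exactly how the input channels are rearranged. One common cache-friendly layout, shown here as an assumption rather than the paper's method, interleaves the planar buffers so that all channels of a pixel sit next to each other (CHW to HWC), and pads the channel count to a multiple of four to match 4-wide vector loads or RGBA textures. The function name and the padding width are hypothetical.

```python
from typing import List, Tuple

Plane = List[List[float]]  # one H x W input buffer


def interleave_channels(planes: List[Plane], group: int = 4) -> Tuple[List[float], int]:
    """Pack C planar (CHW) buffers into one interleaved (HWC) array.

    The channel count is padded with zeros up to a multiple of `group`, so each
    pixel's channels start on an aligned boundary and can be read together.
    """
    c = len(planes)
    h, w = len(planes[0]), len(planes[0][0])
    padded_c = -(-c // group) * group  # round c up to a multiple of group
    packed: List[float] = []
    for y in range(h):
        for x in range(w):
            for ch in range(padded_c):
                packed.append(planes[ch][y][x] if ch < c else 0.0)
    return packed, padded_c


depth = [[1.0, 2.0]]
dist = [[3.0, 4.0]]
n_dot_l = [[0.5, 0.25]]
print(interleave_channels([depth, dist, n_dot_l]))
# ([1.0, 3.0, 0.5, 0.0, 2.0, 4.0, 0.25, 0.0], 4)
```

With this layout, a convolution that reads a pixel's neighborhood fetches each neighbor's channels from one contiguous, aligned run of memory instead of from C separate buffers.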

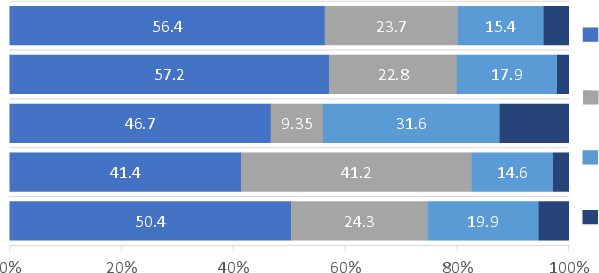

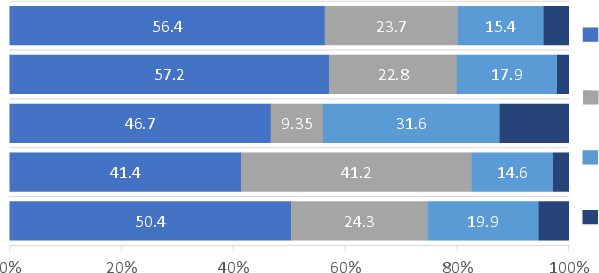

Figure 2: Effect of VGG loss on the final output. VGG loss produces sharper edges for geometry and shadow silhouettes.

Loss Function and Temporal Stability

The loss function combines a per-pixel loss with a VGG-19 perceptual loss; the perceptual term sharpens geometry and shadow edges in the output. A perturbation-based temporal stability loss improves temporal coherence without requiring history buffers from previous frames, which avoids extra computational cost at runtime. During training, small perturbations are applied to the camera or emitter position, and the network learns to keep its output stable under such variations.
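The combined objective can be sketched as a weighted sum of three terms. This is a minimal, hypothetical illustration: `model` is any callable from input image to shadow image, single-channel images stand in for the multi-channel input buffers, `edge_features` is a crude finite-difference stand-in for VGG-19 activations (a real implementation would compare feature maps of a pretrained VGG-19), the perturbed input represents the same frame rendered with a slightly moved camera or emitter, and the weights are placeholders, not the paper's values. The temporal term penalizes the difference between the two outputs directly, which is one plausible form of a perturbation loss.

```python
from typing import Callable, List

Image = List[List[float]]
Model = Callable[[Image], Image]


def l1(a: Image, b: Image) -> float:
    """Mean absolute difference between two equally sized images."""
    n = sum(len(row) for row in a)
    return sum(abs(p - q) for ra, rb in zip(a, b) for p, q in zip(ra, rb)) / n


def edge_features(img: Image) -> Image:
    """Stand-in for VGG-19 activations: horizontal finite differences."""
    return [[row[x + 1] - row[x] for x in range(len(row) - 1)] for row in img]


def total_loss(
    model: Model,
    x: Image,            # rasterized inputs for the current frame
    x_perturbed: Image,  # same frame with camera/emitter slightly moved (hypothetical)
    target: Image,       # ray-traced reference shadows
    w_pixel: float = 1.0,       # placeholder weights, not from the paper
    w_perceptual: float = 0.1,
    w_temporal: float = 0.5,
) -> float:
    pred = model(x)
    pred_perturbed = model(x_perturbed)
    pixel = l1(pred, target)
    perceptual = l1(edge_features(pred), edge_features(target))
    temporal = l1(pred, pred_perturbed)  # penalize output change under perturbation
    return w_pixel * pixel + w_perceptual * perceptual + w_temporal * temporal


identity = lambda img: img
print(total_loss(identity, [[0.0, 1.0]], [[0.0, 0.0]], [[0.0, 1.0]]))  # 0.25
```

Because the temporal term only needs a second forward pass during training, no history buffer is required at inference time. Penalizing output differences this way also pushes the network toward smoother outputs, which is consistent with the extra spatial blur reported in Figure 3.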

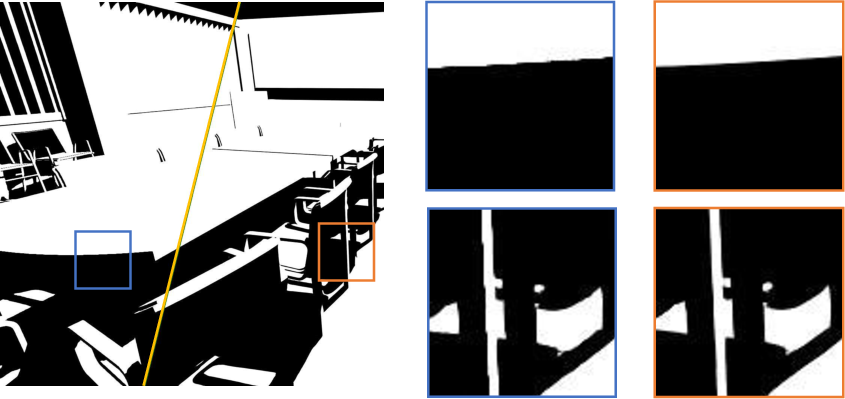

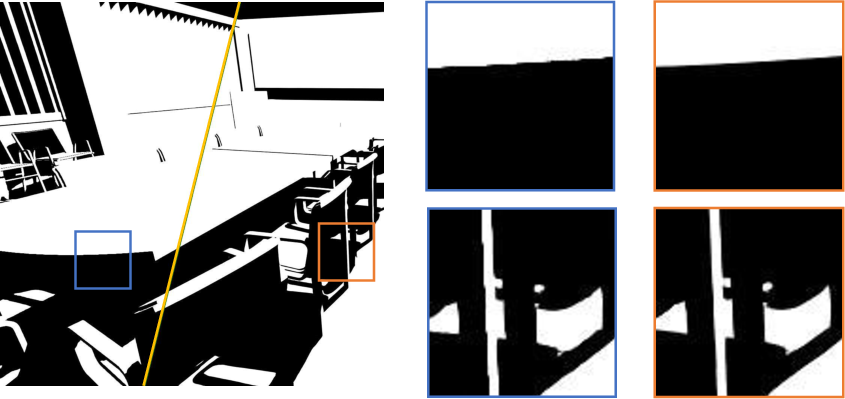

Figure 3: Comparing the temporal and spatial effects of the perturbation loss. Applying the perturbation loss reduces temporal instability but increases spatial blur, as shown in the cutouts.

Results

The neural shadow mapping technique performs competitively against traditional methods such as percentage-closer soft shadows (PCSS) and moment shadow mapping (MSM). It yields visually superior results while keeping a favorable trade-off between performance and accuracy. Even when objects not seen during training are introduced into a scene, the network generalizes well and renders shadows that closely approximate the reference results.

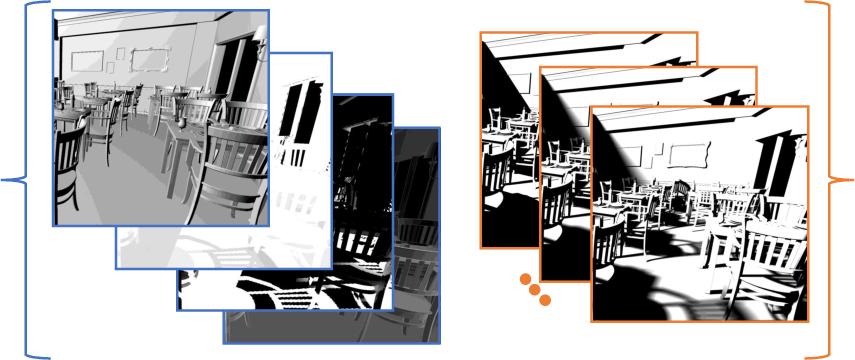

Figure 4: Comparing our network's ability to generalize to unseen objects (buddha, bunny, dragon) with other competing techniques (MSM, PCSS, Raytracing+Denoising) for hard and soft shadows.

Limitations and Future Work

The method has limitations in highly complex scenes and in scenes with multiple lights, where it may not generalize as well. Future work could extend the architecture to handle multiple light sources and further optimize it with techniques such as sparsification or automated architecture design.

Conclusion

This paper advances real-time shadow rendering with neural networks tailored for both speed and quality. The proposed method offers a practical way to deploy high-quality shadow rendering on low-power systems without extensive post-processing. As AI techniques continue to evolve, this approach points toward integrating more complex computational models into real-time graphics applications.