- The paper presents the SCRAT framework, which integrates fast feedback control, structured episodic memory, and in-loop verification, drawing on squirrel locomotion and caching behaviors.

- It introduces a hierarchical, partially observed stochastic control model that treats control latency, retrieval cost, and verification error jointly, and it specifies explicit benchmark protocols for testing the resulting hypotheses.

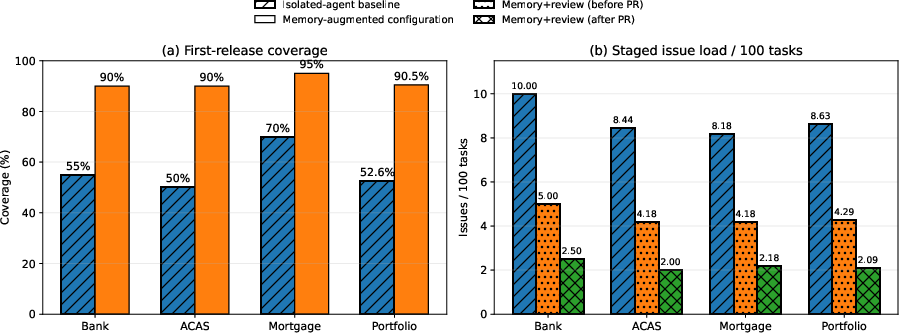

- Preliminary evidence from the Chiron software-delivery system shows large improvements in project duration, first-release coverage, and issue load, underscoring the advantages of memory-augmented, staged validation.

Coupled Control, Structured Memory, and Verifiable Action in Agentic AI: The SCRAT Framework

Introduction

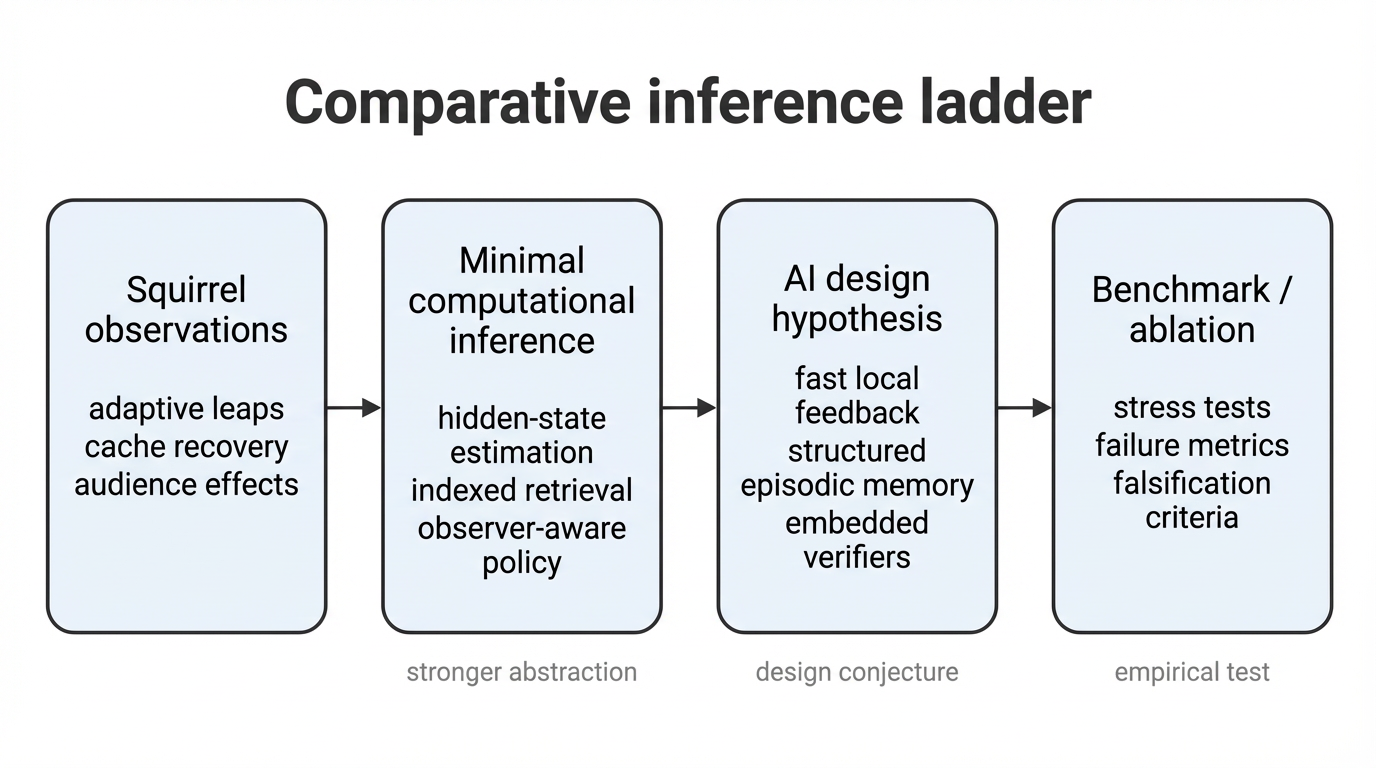

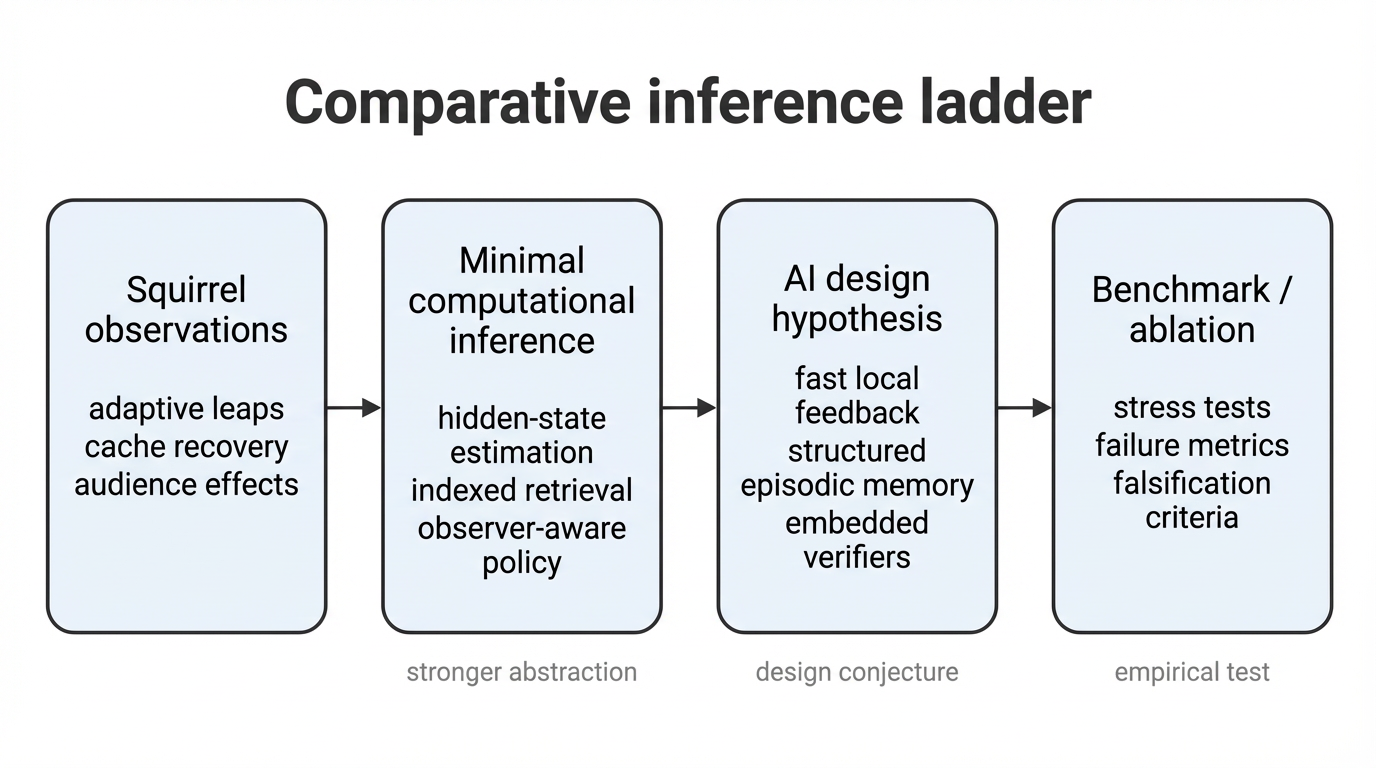

This paper investigates the intersection of control, episodic memory, and verification in agentic AI from a biologically inspired comparative perspective anchored in squirrel locomotion and scatter-hoarding ecology. Much of the contemporary literature treats partial observability, delayed feedback, and assurance as modular side constraints. The authors argue instead that these demands are fundamentally coupled and should be addressed as a joint engineering problem. The core thesis is advanced through an explicit formal decomposition, sharply stated hypotheses, and a benchmark agenda designed to test them. The emphasis is on substrate independence and falsifiability: rather than relying on generic or metaphorical analogies, the comparison targets measurable joint demands.

Figure 1: Comparative inference ladder mapping the ascent from behavioral observation to engineering claim, with abstraction and experimental burden increasing at each step.

Biological Case Study: Integrated Demands in Squirrel Behavior

Squirrel ecology provides an unusually clear natural experiment for agentic AI, with three interlocked domains:

- Arboreal Locomotion: Fox squirrels exhibit adaptive error correction and online dynamics estimation when leaping onto compliant branches. Key behaviors include rapid launch-point adaptation and agile landing correction under hidden dynamics and delayed sensory feedback. This directly parallels the requirements for robust feedback control in robot manipulation and embodied agents under model misspecification and stochasticity.

- Scatter-hoarding: Gray and red squirrels possess one-shot spatial episodic memory, enabling successful recovery of individually cached food, even after long delays and under cue conflict. Cache organization is policy-dependent, with evidence for chunking and value/effort calibration, supporting structured, non-archival memory that directly influences future control actions.

- Audience-sensitive Caching: Gray squirrels modulate their caching behavior (spatial separation, orientation) when observed by conspecifics, which limits pilferage. This amounts to an observer-aware policy with implicit leakage management, a capability crucial for adversarial and assurance-centered AI applications.

Through these three behaviors, the authors justify a strict computational interpretation: the demands are not isolated but form a coupled computation loop involving fast feedback, structured memory, and in situ verification.

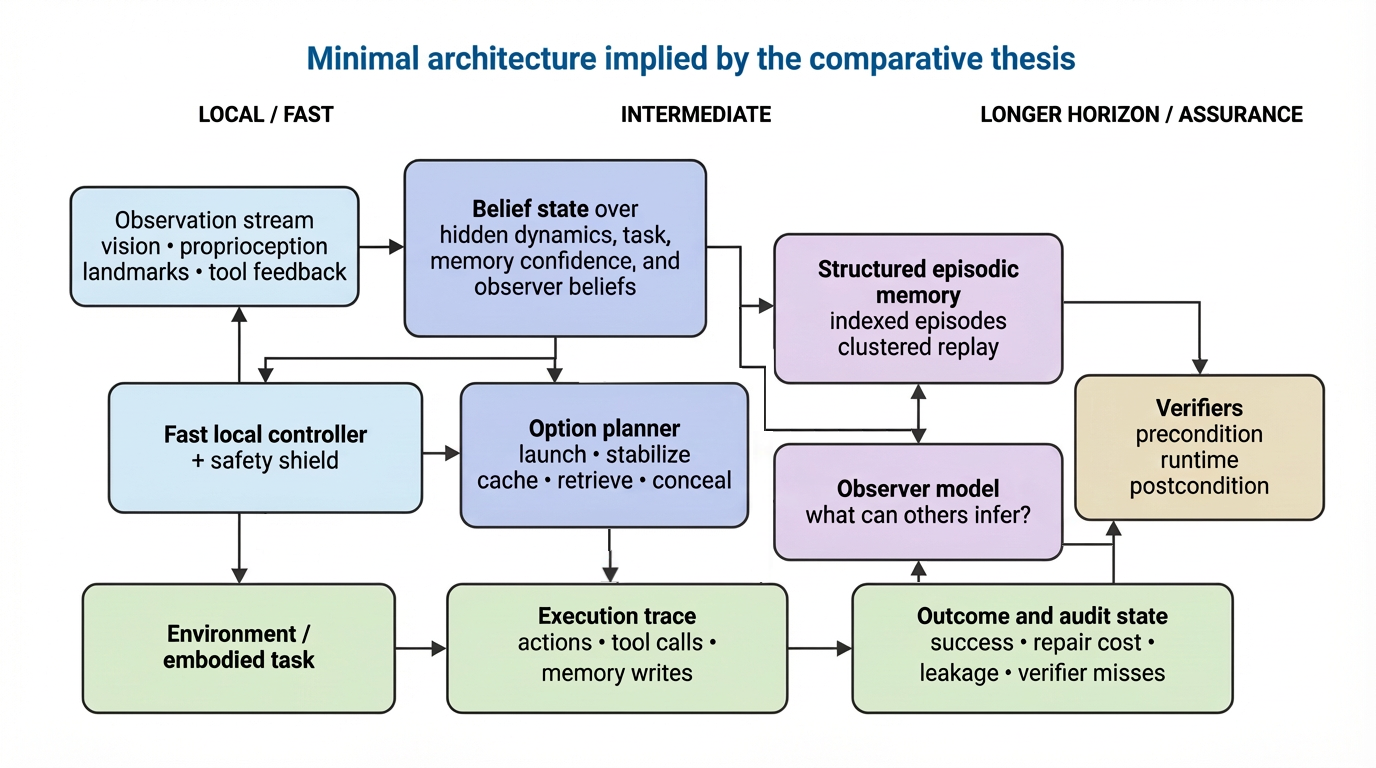

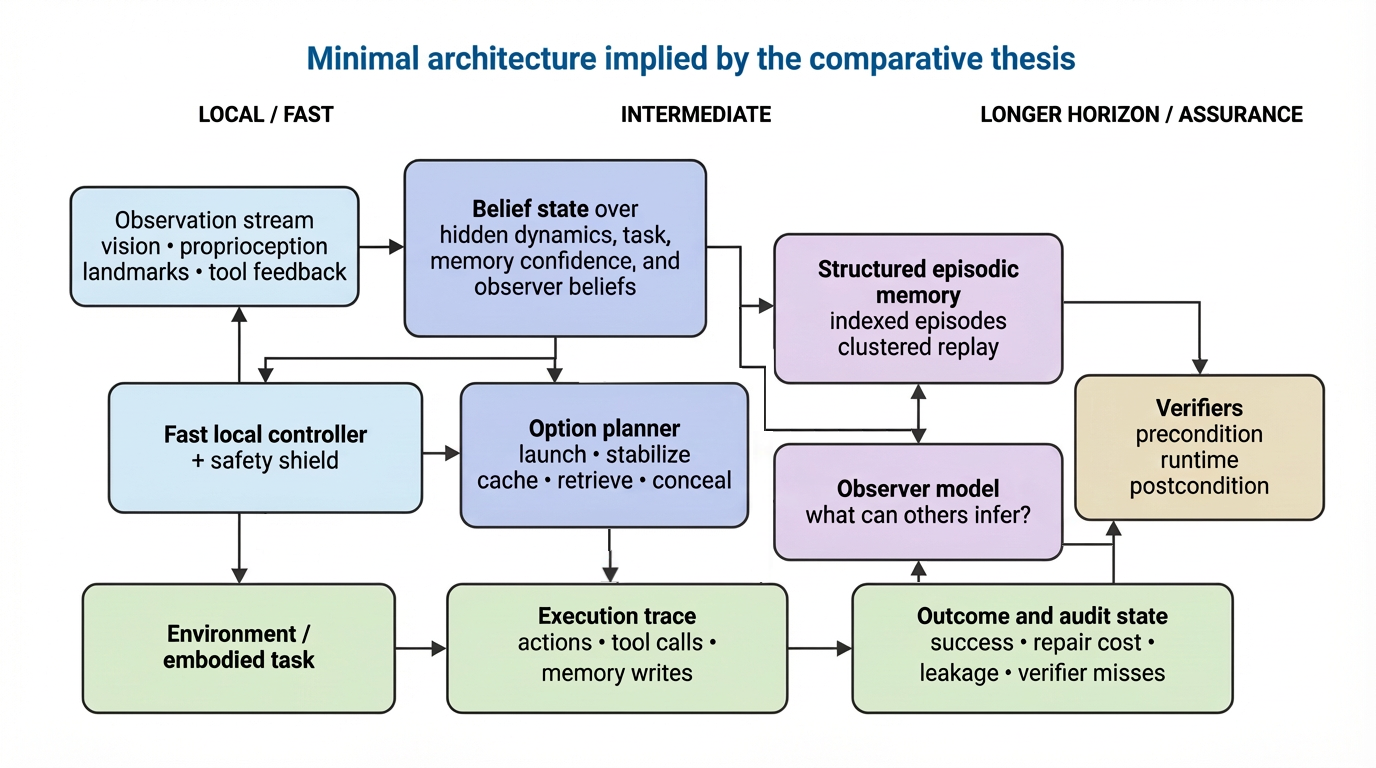

The authors formalize the joint problem as a hierarchical, partially observed stochastic control system—SCRAT (Stochastic Control with Retrieval and Auditable Trajectories). The state is augmented to include:

- Embodied state ($x_t$)

- Latent task/environment variable ($z_t$) (e.g., support compliance)

- Structured episodic memory ($m_t$)

- Observer belief/estimates ($b_t$)

- Task/resource/permission state ($e_t$)
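
The augmented state can be pictured as a plain record. The sketch below assumes illustrative field types and hypothetical names (`ScratState`, `EpisodicEntry`, the `value` score); none of these come from the paper. Because $z_t$ is latent, the sketch stores the agent's belief over $z_t$ rather than $z_t$ itself.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class EpisodicEntry:
    """One cached episode: a retrieval cue and the outcome it predicts (hypothetical layout)."""
    cue: str
    payload: Any
    value: float = 0.0  # hypothetical value/effort score used to rank retrieval


@dataclass
class ScratState:
    """Augmented state s_t = (x_t, z_t, m_t, b_t, e_t); all field types are illustrative assumptions."""
    x: list[float]              # embodied state x_t, e.g. position and velocity
    z_belief: dict[str, float]  # agent's belief over the latent variable z_t (z_t itself is unobserved)
    m: list[EpisodicEntry] = field(default_factory=list)  # structured episodic memory m_t
    b: dict[str, float] = field(default_factory=dict)     # observer belief/estimates b_t
    e: dict[str, Any] = field(default_factory=dict)       # task/resource/permission state e_t


# Example: uncertain branch compliance, one cached item, one permission flag.
s = ScratState(x=[0.0, 0.0], z_belief={"stiff": 0.5, "compliant": 0.5})
s.m.append(EpisodicEntry(cue="oak-north", payload=(3.0, 4.0), value=0.8))
s.e["may_enter_region"] = True
```

Separating the components in this way lets each be ablated or logged independently. The paper's decomposition is aimed at exactly that kind of independent ablation and logging.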

The control architecture is temporally abstracted, featuring option-level planning, dynamic belief updates, structured episodic memory queries, and in-loop verification. Critically, verifiers and observer models are embedded as first-class components in the control-memory-verification loop, supporting both runtime and post-hoc checking.

Figure 2: Minimal SCRAT architectural decomposition, partitioning agent state into control, memory, observer model, and verification pathways, enabling benchmarkable isolation of H1–H3 and the downstream role-division conjecture.
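
The loop described above can be sketched as a single option execution with pluggable pathways. Under this framing, swapping one pathway for a stub is an ablation. The names (`Pathways`, `run_option`), the one-dimensional dynamics, and the gains are hypothetical. The observer-model pathway is omitted for brevity. The sketch shows only the ordering: memory query, then fast feedback steps, each gated by an in-loop check.

```python
from dataclasses import dataclass, replace
from typing import Callable, Optional


@dataclass
class Pathways:
    """Hypothetical pluggable pathways; replacing one with a stub is an ablation."""
    retrieve: Callable[[str], Optional[float]]   # memory: cue -> cached target, or None
    control: Callable[[float, float], float]     # fast feedback: (state, target) -> action
    step: Callable[[float, float], float]        # environment: (state, action) -> next state
    verify: Callable[[float], bool]              # verifier: may the next state be committed?


def run_option(p: Pathways, cue: str, x0: float, horizon: int = 10) -> tuple[float, str]:
    """Execute one option: query memory once, then run the inner loop with a per-step check."""
    target = p.retrieve(cue)
    if target is None:
        return x0, "retrieval_miss"
    x = x0
    for _ in range(horizon):
        x_next = p.step(x, p.control(x, target))
        if not p.verify(x_next):   # in-loop verification before the step is committed
            return x, "verifier_block"
        x = x_next
    return x, "ok"


memory = {"cache-A": 4.0}
full = Pathways(
    retrieve=memory.get,
    control=lambda x, target: 0.5 * (target - x),
    step=lambda x, u: x + u,
    verify=lambda x: 0.0 <= x <= 5.0,
)
x_end, status = run_option(full, "cache-A", 0.0)
no_memory = replace(full, retrieve=lambda cue: None)   # memory ablation
strict = replace(full, verify=lambda x: x <= 2.0)      # tighter permission set
```

With the full pathways, the state converges toward the cached target. The memory ablation fails before any action is taken. The stricter verifier halts the option at the last permitted state.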

The objective in this system is multi-faceted. It includes not only raw task reward but also control latency, information-leakage cost, and the cost of repairs after late failures, a direct transposition of the foraging–pilferage–retrieval loop in squirrels. Constraints are imposed on the probability of success, the compute budget, and the allowable verification error.
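
The summary does not reproduce the paper's exact objective. One way to write a constrained objective consistent with the description is given below. The state variables are from the text above. Every other symbol is an assumption introduced here: the policy $\pi$ over actions $a_t$, the nonnegative weights $\lambda$, the per-step latency $\ell_t$, a leakage cost $c_{\mathrm{leak}}$ that depends on the observer belief $b_t$, and a repair cost $c_{\mathrm{rep},t}$ incurred after late failures.

$$
\max_{\pi}\;\mathbb{E}_{\pi}\!\left[\sum_{t=0}^{T}\Big(r(x_t, z_t, e_t, a_t) - \lambda_{\mathrm{lat}}\,\ell_t - \lambda_{\mathrm{leak}}\,c_{\mathrm{leak}}(b_t) - \lambda_{\mathrm{rep}}\,c_{\mathrm{rep},t}\Big)\right]
$$

$$
\text{s.t.}\quad \Pr_{\pi}(\text{success}) \ge 1-\delta,\qquad \mathbb{E}_{\pi}\big[C_{\mathrm{comp}}\big] \le B,\qquad \Pr_{\pi}(\text{verifier error}) \le \varepsilon .
$$

Here $\delta$, $B$, and $\varepsilon$ are the success, compute, and verification-error budgets that correspond to the three constraints named in the text.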

Main Hypotheses

Three principal, benchmarkable hypotheses are advanced:

H1 (Fast Feedback + Predictive Compensation):

Placing a short-horizon, feedback-driven controller close to execution and coupling it with predictive modeling yields robustness to latent or shifting dynamics that open-loop planning alone cannot match. The testable signature is not merely lower mean success when fast feedback is ablated. It is also greater intervention and repair requirements and longer recovery latency after a disturbance.
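
H1's recovery-latency signature can be illustrated with a toy one-dimensional plant whose gain is latent to the agent. The setup is an assumption, not the paper's benchmark: true gain 1.6, modeled gain 1.0, and a one-off disturbance at step 10. Inverting the modeled gain stands in for predictive compensation; a fuller sketch would also estimate the gain online. The function `recovery_steps` is hypothetical.

```python
from typing import Optional


def recovery_steps(feedback: bool, target: float = 10.0, true_gain: float = 1.6,
                   assumed_gain: float = 1.0, k: float = 0.5, steps: int = 20,
                   t_dist: int = 10, dist: float = 2.0, tol: float = 0.05) -> Optional[int]:
    """Steps needed after a one-off disturbance to get back within tol of target (None = never)."""
    # Open-loop baseline: the whole action sequence is planned up front with the nominal model.
    plan, x_nom = [], 0.0
    for _ in range(steps):
        u = k * (target - x_nom) / assumed_gain
        plan.append(u)
        x_nom += assumed_gain * u
    x = 0.0
    for t in range(steps):
        # Feedback: recompute the action from the measured state, inverting the modeled gain.
        u = k * (target - x) / assumed_gain if feedback else plan[t]
        x += true_gain * u       # true plant: the gain is latent to the agent
        if t == t_dist:
            x += dist            # unmodelled disturbance
        if t > t_dist and abs(target - x) < tol:
            return t - t_dist
    return None


print(recovery_steps(feedback=True), recovery_steps(feedback=False))
```

With feedback, each step shrinks the error by a factor of 0.2, so the disturbance is absorbed within a few steps. The open-loop plan never recovers, because it cannot see either the gain mismatch or the disturbance.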

H2 (Structured Control-Oriented Episodic Memory):

Memory modules organized for action relevance—indexed, clustered, value-aware—yield reduced retrieval latency and cue-conflict failure relative to undifferentiated archives, especially under scale and interference. This aligns with observed chunked cache organization and task-salient memory retrieval in squirrels.
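
The contrast in H2 can be sketched by counting cue comparisons, used here as an assumed proxy for retrieval latency. One store is a flat archive; the other chunks episodes by region and checks high-value entries first. The class names, region keys, and value scores are hypothetical illustrations, not the paper's memory design.

```python
from collections import defaultdict
from typing import Any, Optional


class FlatArchive:
    """Undifferentiated archive: a query scans stored episodes one by one."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Any]] = []
        self.comparisons = 0  # proxy for retrieval latency

    def store(self, cue: str, payload: Any) -> None:
        self.items.append((cue, payload))

    def recall(self, cue: str) -> Optional[Any]:
        for stored_cue, payload in reversed(self.items):  # newest first
            self.comparisons += 1
            if stored_cue == cue:
                return payload
        return None


class ChunkedMemory:
    """Control-oriented memory: episodes chunked by region, high-value entries checked first."""

    def __init__(self) -> None:
        self.chunks: dict[str, list[tuple[float, str, Any]]] = defaultdict(list)
        self.comparisons = 0

    def store(self, region: str, cue: str, payload: Any, value: float) -> None:
        chunk = self.chunks[region]
        chunk.append((value, cue, payload))
        chunk.sort(key=lambda entry: entry[0], reverse=True)  # stable: ties keep insertion order

    def recall(self, region: str, cue: str) -> Optional[Any]:
        for _, stored_cue, payload in self.chunks.get(region, []):
            self.comparisons += 1
            if stored_cue == cue:
                return payload
        return None


flat, chunked = FlatArchive(), ChunkedMemory()
for i in range(1000):
    cue = f"cache-{i}"
    flat.store(cue, i)
    chunked.store(region=f"r{i % 10}", cue=cue, payload=i, value=float(i % 7))

print(flat.recall("cache-6"), chunked.recall("r6", "cache-6"))  # prints: 6 6
print(flat.comparisons, chunked.comparisons)                      # prints: 994 1
```

Both stores return the same item. The chunked, value-ordered store touches far fewer entries, and this gap widens as the archive grows, which is the scaling regime H2 targets.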

H3 (In-loop Verification and Observer Modeling):

Verifiers and observer models embedded within the action-memory loop improve robustness, lower silent-failure and information-leakage rates, and promote correction. The authors explicitly note, however, that this architecture is vulnerable to checker misspecification and adversarial gaming. They illustrate the trade-off with animal behaviors that balance local success against global leakage or posterior risk.
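
Three cases from H3 can be separated with a toy check over recorded trajectories: in-loop verification, end-only verification, and a misspecified checker. The trajectories, the true limit of 1.0, and the function names are assumptions made for illustration. The observer model is not modeled here.

```python
from typing import Callable, Optional


def detection_step(trajectory: list[float], check: Callable[[float], bool],
                   in_loop: bool) -> Optional[int]:
    """Step at which a violation is caught; None means it went unnoticed (silent failure)."""
    steps = range(len(trajectory)) if in_loop else [len(trajectory) - 1]
    for t in steps:
        if not check(trajectory[t]):
            return t
    return None


def within_limit(x: float) -> bool:   # correctly specified check (true limit 1.0)
    return x <= 1.0


def loose_limit(x: float) -> bool:    # misspecified check (limit set too high)
    return x <= 1.5


transient = [0.0, 0.4, 0.9, 1.3, 0.8, 0.7]  # violates at t=3, then recovers
drift = [0.0, 0.6, 1.2, 1.4, 1.6]           # violates from t=2 onward

for name, traj in [("transient", transient), ("drift", drift)]:
    print(name,
          detection_step(traj, within_limit, in_loop=True),
          detection_step(traj, within_limit, in_loop=False),
          detection_step(traj, loose_limit, in_loop=True))
```

End-only checking misses the transient violation entirely. On the drifting trajectory it catches the violation two steps late, and those extra steps are a stand-in for repair cost. The loose checker reproduces both failure modes even though it runs in the loop, which illustrates the misspecification caveat.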

A weaker, fourth conjecture (C1) is that differentiated role allocation (proposer, executor, checker, adversary) can further reduce error correlations and enhance auditability, but this is not directly warranted by the biological data.

Benchmark and Evaluation Agenda

The paper delineates four targeted benchmark families:

- Hidden-Dynamics Control: Stress latent or variable dynamics, perturbation timing, and observation delays; ablate the fast-feedback loop or the latent-state adapter.

- Cache-like Episodic Retrieval at Scale: Test under increasing memory load, retrieval delay, and cue conflict; ablate the index structure.

- Observer-aware Action: Vary observer visibility, adversary competence, and constraints; ablate the observer model or restrict checking to the end of the episode.

- Role-Differentiated Pipeline: Examine the impact of distinct role incentives and coverage; ablate role separation.

The evaluation protocol prioritizes latency, repair cost, silent-failure/leakage profile, and ablation sensitivity over mere aggregate task accuracy.
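
The agenda above can be expanded into an explicit ablation grid so that every stress level is run against the full system and each single ablation. In this sketch the stress axes and ablations paraphrase the four families. The level values, identifiers, and metric names are placeholders, not the paper's settings.

```python
from itertools import product
from typing import Any, Iterator

# Hypothetical encoding of the four benchmark families; level values are placeholders.
FAMILIES: dict[str, dict[str, Any]] = {
    "hidden_dynamics": {"stress": {"obs_delay_steps": [0, 2, 5]},
                        "ablations": ["no_fast_feedback", "no_latent_adapter"]},
    "cache_retrieval": {"stress": {"memory_items": [1_000, 100_000]},
                        "ablations": ["flat_index"]},
    "observer_aware": {"stress": {"observer_visibility": [0.0, 0.5, 1.0]},
                       "ablations": ["no_observer_model", "end_only_checking"]},
    "role_pipeline": {"stress": {"role_overlap": [0.0, 1.0]},
                      "ablations": ["shared_roles"]},
}

# Metrics in the protocol's priority order; aggregate accuracy is deliberately last.
METRICS = ["latency", "repair_cost", "silent_failure_rate", "leakage",
           "ablation_sensitivity", "task_accuracy"]


def conditions(family: str) -> Iterator[dict[str, Any]]:
    """Cross every stress level with the full system and each single ablation."""
    spec = FAMILIES[family]
    names = list(spec["stress"])
    variants = ["full"] + spec["ablations"]
    for levels in product(*spec["stress"].values()):
        for variant in variants:
            yield {"family": family, "variant": variant, **dict(zip(names, levels))}


def empty_record(condition: dict[str, Any]) -> dict[str, Any]:
    """Result row with one slot per metric, to be filled by a benchmark harness."""
    return {**condition, **{metric: None for metric in METRICS}}


grid = [cond for fam in FAMILIES for cond in conditions(fam)]
print(len(grid), grid[0])
```

Pairing every ablation with the same stress levels as the full system lets ablation sensitivity be read directly as a per-condition difference, rather than inferred from aggregate accuracy.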

Preliminary Numerical Evidence

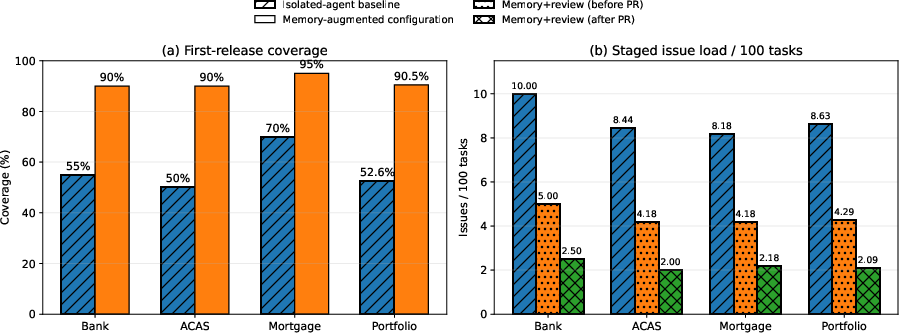

A companion benchmarking effort in software delivery (the Chiron system) provides preliminary empirical support for H2. Chiron integrates project-scale, graph-structured, semantically indexed memory modules, queried dynamically during task decomposition, code synthesis, and validation. Compared with isolated single-agent baselines, this integration shortens project duration, raises first-release coverage, and materially lowers pre- and post-review issue loads.

Figure 3: Project-level improvements in coverage and staged issue load, isolating the contributions of memory-augmented, staged validation in the Chiron system.

Specifically, project duration falls 3.08-fold (from 28.6 to 9.3 weeks), first-release coverage rises from 52.6% to 90.5%, and validation-stage issue load drops from 8.63 to 2.09 per 100 tasks. The staged counts further isolate memory's effect before downstream human and agent review.
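
The reported ratios can be rechecked directly from the before/after figures quoted above. This is only arithmetic on those numbers and adds no new data.

```python
before = {"duration_weeks": 28.6, "coverage_pct": 52.6, "issues_per_100": 8.63}
after = {"duration_weeks": 9.3, "coverage_pct": 90.5, "issues_per_100": 2.09}

speedup = before["duration_weeks"] / after["duration_weeks"]          # duration ratio
coverage_gain = after["coverage_pct"] - before["coverage_pct"]        # percentage points
issue_reduction = before["issues_per_100"] / after["issues_per_100"]  # issue-load ratio

print(f"{speedup:.2f}x shorter, +{coverage_gain:.1f} pp coverage, {issue_reduction:.2f}x fewer issues")
# prints: 3.08x shorter, +37.9 pp coverage, 4.13x fewer issues
```

The 3.08× figure is therefore the ratio 28.6 / 9.3. The issue-load drop corresponds to roughly a 4.1-fold reduction.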

Theoretical and Practical Implications

The SCRAT framework shifts the emphasis from decomposed, independently tested modules toward architectures in which rapid feedback, operationally relevant memory, and context-sensitive verification are co-optimized. On the biological side, the comparative method emphasizes substrate-independent demands rather than mechanistic mimicry or moral analogy. Theoretically, it strengthens the case for explicit ablation studies and diversified benchmarks centered on error recovery, interference resistance, and adversarial observability. These qualities are typically neglected in static accuracy evaluations.

Practically, the immediate implication is in architecture and benchmark design for high-reliability agentic systems, particularly in high-stakes or adversarial environments (e.g., autonomous robotics, software agents, and complex workflow orchestration). The evidence also cautions against naive translation of biological architectures and underlines the potential fragility of monolithic, end-to-end optimization in systematically coupled problem domains.

Limitations and Directions for Future Work

The argument is explicitly limited in three directions:

- The evidence base is inferential, not mechanistic or prescriptive; computational demands are inferred at a high level of abstraction.

- Ecological fitness does not equate to human design goals, and strategic behaviors observed in animals may introduce ethical or policy risks in AI unless bounded by external governance.

- The framework’s case would be undermined if monolithic approaches, compared at parity of resources and supervision, proved non-inferior on the proposed composite benchmarks.

Validation of the full thesis depends on future comprehensive, coupled benchmarks that expose and stress the integration points hypothesized here. Further theoretical development may clarify sufficiency and necessity conditions for decomposed architectures, and more extensive field deployments can robustly isolate the impact of memory structuring and verification integration at scale.

Conclusion

This work refocuses agentic AI research on the inseparable demands of robust control, operationally structured memory, and context-sensitive verification, motivated by natural experiments in squirrel behavior and formalized in the SCRAT framework. If validated under explicit benchmarking and ablation, the core claims imply architectural constraints substantially stronger and more discriminating than those imposed by static accuracy alone. Future work should test these constraints. It should clarify both whether coupled design is architecturally necessary and how much room remains for alternative, monolithic solutions.