- The paper presents a novel framework for swarm-based inertial methods integrating momentum and gradient-Hessian coupling to accelerate convergence.

- It establishes an energy dissipation law, uses structure-preserving discretization schemes that retain it, and reports empirical convergence faster than the theoretical rate bounds.

- Empirical results demonstrate that the methods outperform classical gradient approaches through enhanced exploration, coordinated dynamics, and robust energy stability.

Swarm-Based Inertial Methods for Optimization: A Technical Analysis

Introduction and Theoretical Foundations

This paper introduces a comprehensive framework for swarm-based inertial methods (SBIMs) for global optimization, leveraging coupled dissipative inertial dynamical systems and the generalized Onsager principle (2604.03124). The SBIMs are characterized by a momentum-driven relaxation mechanism, where friction and potential energy scaling govern the dissipative dynamics across the energy landscape. The formulated continuous-time dynamics incorporate both gradient and Hessian information, yielding adaptive damping and acceleration depending on local curvature and agent trajectories.

A new underdamped ODE is proposed for the agents $x_i$, with coupled dynamics that include mass, gradient, Hessian, and nonlinear cross terms, summarized as:

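$$\ddot{x}(t) + \left[\frac{\alpha}{t} I - \frac{\alpha}{t}\mathbf{1}\nabla F(x(t))^{\top} + \gamma(t)\nabla^2 F(x(t))\right]\dot{x}(t) + \beta(t)\nabla F(x(t)) = 0$$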

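where $\alpha > 0$ is a constant, $\beta(t)$ and $\gamma(t)$ are time-dependent functions reflecting agent mass and friction, and $\mathbf{1}$ is the all-ones vector. The nonlinear disturbing term $-\frac{\alpha}{t}\big(\nabla F(x(t))\cdot\dot{x}(t)\big)\mathbf{1}$ produces either coordinated frictional deceleration or directed acceleration, depending on the sign of $\nabla F(x(t))\cdot\dot{x}(t)$, that is, on whether the trajectory moves uphill or downhill.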
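To make the equation concrete, the sketch below evaluates the acceleration $\ddot{x}(t)$ it implies for one agent. It assumes the cross term reads $\frac{\alpha}{t}\mathbf{1}\nabla F(x)^{\top}\dot{x}$ with $\mathbf{1}$ the all-ones vector, and it holds $\beta$ and $\gamma$ constant for simplicity. The names `acceleration`, `grad` and `hess` are hypothetical, and this is an illustration rather than the authors' code.

```python
import numpy as np


def acceleration(x, v, t, grad, hess, alpha=3.0, beta=1.0, gamma=0.1):
    """Return x''(t) solved from
    x'' + [alpha/t * I - alpha/t * 1 grad^T + gamma * hess] x' + beta * grad = 0.
    beta and gamma are constants here (assumption; the paper lets them vary in t)."""
    g = grad(x)                                  # gradient of F at x, shape (n,)
    H = hess(x)                                  # Hessian of F at x, shape (n, n)
    ones = np.ones_like(x)
    # alpha/t * (I - 1 g^T) v  equals  alpha/t * (v - (g . v) * 1)
    friction = (alpha / t) * (v - np.dot(g, v) * ones)
    hessian_damping = gamma * (H @ v)            # curvature-dependent damping
    return -(friction + hessian_damping) - beta * g


# Example on F(x) = ||x||^2 / 2, whose gradient is x and Hessian is I.
a = acceleration(np.array([1.0, 2.0]), np.array([1.0, 0.0]), t=1.0,
                 grad=lambda x: x, hess=lambda x: np.eye(x.size))
print(a)
```

In this example the second component of $\dot{x}$ is zero, yet the cross term still pushes that component because it acts along $\mathbf{1}$ with magnitude $\frac{\alpha}{t}\nabla F\cdot\dot{x}$.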

The energy dissipation law is rigorously established and yields function-value convergence rates of $O(1/\delta(t))$, where $\delta(t) \to \infty$ as $t \to \infty$. Discrete algorithms derived via structure-preserving time integration, including fully discretized and IMEX schemes, retain this dissipative structure and achieve $O(1/\delta_k)$ rates.
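The dissipation mechanism can be checked by hand in a deliberately simplified setting. Assume constant $\beta(t)\equiv\beta>0$ and $\gamma(t)\equiv\gamma\ge 0$, a convex $C^2$ function $F$ with minimum value $F^\ast$, and drop the cross term $\frac{\alpha}{t}\mathbf{1}\nabla F^{\top}\dot{x}$. This is an illustrative computation, not the paper's theorem.

$$
\begin{aligned}
E(t) &= \tfrac{1}{2}\|\dot{x}(t)\|^2 + \beta\big(F(x(t)) - F^\ast\big),\\
\dot{E}(t) &= \langle \dot{x}, \ddot{x} \rangle + \beta \langle \nabla F(x), \dot{x} \rangle \\
&= \Big\langle \dot{x},\, -\tfrac{\alpha}{t}\dot{x} - \gamma \nabla^2 F(x)\dot{x} - \beta \nabla F(x) \Big\rangle + \beta \langle \nabla F(x), \dot{x} \rangle \\
&= -\tfrac{\alpha}{t}\|\dot{x}\|^2 - \gamma\, \dot{x}^{\top} \nabla^2 F(x)\, \dot{x} \;\le\; 0 .
\end{aligned}
$$

Convexity makes the Hessian term nonnegative, so $E$ is nonincreasing. An explicit $O(1/\delta(t))$ rate typically needs a time-weighted Lyapunov function and the full time-dependent coefficients, which this sketch does not attempt.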

Relaxation Dynamics and Swarm-Based Algorithm Construction

The mechanical energy of agent $i$ is $E_i = \tfrac{1}{2} m(x_i,t)\|\dot{x}_i(t)\|^2 + a_i F(x_i)$. A Lyapunov-based analysis shows that the kinetic energy vanishes and the potential energy is driven to its global minimum. The SBIMs are derived as compressible or incompressible dynamical systems in which mass transport and communication among agents foster exploration and concentrate mass near minima.
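The per-agent energy and a mass-transfer step can be sketched as follows. The energy function follows the formula for $E_i$, with $m(x_i,t)$ passed in as precomputed per-agent masses. The transfer rule is a hypothetical stand-in in the spirit of swarm-based gradient descent: agents higher on the landscape shed mass to the current best agent, and total mass is conserved. The paper's actual mass-transport and communication dynamics may differ. `transfer_mass` and its `rate` parameter are illustrative names.

```python
import numpy as np


def agent_energies(masses, velocities, heights, weights):
    """E_i = 1/2 * m_i * ||v_i||^2 + a_i * F(x_i) for every agent.

    masses: m(x_i, t) evaluated per agent, shape (N,)
    velocities: dx_i/dt, shape (N, n)
    heights: F(x_i), shape (N,)
    weights: a_i, shape (N,)
    """
    kinetic = 0.5 * masses * np.sum(velocities**2, axis=1)
    potential = weights * heights
    return kinetic + potential


def transfer_mass(masses, heights, rate=0.2):
    """Hypothetical mass-transfer rule (assumption, not the paper's scheme):
    each agent sheds rate * eta_i of its mass, where eta_i in [0, 1] is its
    relative height, and the lowest agent collects everything shed."""
    f_min, f_max = heights.min(), heights.max()
    if f_max == f_min:                             # all agents level: nothing to transfer
        return masses.copy()
    eta = (heights - f_min) / (f_max - f_min)      # 0 for the best agent, 1 for the worst
    shed = rate * eta * masses
    new_masses = masses - shed
    new_masses[np.argmin(heights)] += shed.sum()   # total mass is conserved
    return new_masses


energies = agent_energies(np.array([1.0, 2.0]), np.array([[1.0, 0.0], [0.0, 1.0]]),
                          heights=np.array([1.0, 2.0]), weights=np.array([1.0, 1.0]))
masses = transfer_mass(np.full(4, 0.25), heights=np.array([3.0, 1.0, 2.0, 0.0]))
```

Repeating `transfer_mass` each iteration concentrates mass on low agents. With heavy masses near minima and light masses elsewhere, the light agents can take larger exploratory moves.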

The proposed SBIMs generalize beyond classical swarm-based gradient descent by allowing for inertia, thereby improving exploration in nonconvex landscapes. Nesterov-like accelerated dynamics are embedded into the SBIM formulation, allowing analytical comparison and integration of classical momentum-based optimization within a swarm framework.
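As a baseline for the momentum ingredient, the sketch below runs Nesterov's accelerated gradient independently for each agent, one row per agent. It uses the $(k-1)/(k+2)$ momentum that Su, Boyd and Candès showed to be a discretization of $\ddot{x} + \frac{3}{t}\dot{x} + \nabla F = 0$. The sketch models no communication or mass transfer between agents, so it covers only the inertial part and is not an SBIM. `nesterov_swarm` is a hypothetical name.

```python
import numpy as np


def nesterov_swarm(grad, X0, step=0.1, iters=200):
    """Nesterov's accelerated gradient applied row-wise to a swarm X0 of shape (N, n).

    grad maps an (N, n) array of positions to the (N, n) array of gradients.
    step should satisfy step <= 1/L for an L-smooth convex F.
    """
    X_prev = X0.copy()
    X = X0.copy()
    for k in range(1, iters + 1):
        Y = X + (k - 1) / (k + 2) * (X - X_prev)   # momentum extrapolation
        X_prev, X = X, Y - step * grad(Y)          # gradient step at the look-ahead point
    return X


# Three agents on F(x) = ||x||^2 / 2 (gradient = x), minimizer at the origin.
X0 = np.array([[1.0, 2.0], [-3.0, 0.5], [0.2, -1.0]])
X_final = nesterov_swarm(lambda X: X, X0)
```

Vectorizing over rows keeps the per-agent cost identical to the single-agent method. Any SBIM-style coupling, such as mass-dependent step sizes, would enter through `step` or an extra term in the update.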

Underdamped Inertial Dynamics: Gradient and Hessian Damping

The new inertial ODE introduces a mechanism for coordinated adaptation, accelerating when traversing uphill and decelerating when descending, by exploiting gradient-Hessian coupling. The energy dissipation theorem shows that the function-value gap is bounded above by $O(1/\delta(t))$, and this property is retained under structure-preserving discretization. The discrete schemes developed are fully backward, semi-discretized, forward-backward (FB), and IMEX-RB. Each preserves monotonic energy dissipation (theoretically or empirically) along with robust convergence rates.
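A 1D quadratic $F(x) = \frac{\lambda}{2}x^2$, with the cross term dropped for clarity (an assumption), shows most easily why a fully backward scheme can inherit the dissipation law. The ODE then becomes the linear system $y' = A(t)y$ with $y = (x, \dot{x})$. For any step size, implicit Euler never increases $E = \frac12\dot{x}^2 + \frac{\beta\lambda}{2}x^2$, because the symmetric part of $A$ in this energy norm is negative semidefinite. Forward Euler can increase $E$. The function names are hypothetical, and this is not the paper's scheme.

```python
import numpy as np


def system_matrix(t, lam, alpha, beta, gamma):
    """First-order form y' = A y, y = (x, v), of the simplified 1D ODE
    x'' + (alpha/t + gamma*lam) x' + beta*lam*x = 0 for F(x) = lam*x^2/2.
    The 1 grad^T cross term is dropped (assumption)."""
    c = alpha / t + gamma * lam                  # time-dependent plus Hessian damping
    return np.array([[0.0, 1.0], [-beta * lam, -c]])


def energy(y, lam, beta):
    x, v = y
    return 0.5 * v**2 + 0.5 * beta * lam * x**2


def integrate(y0, h, steps, implicit, lam=1.0, alpha=3.0, beta=1.0, gamma=0.1, t0=1.0):
    y, t = np.asarray(y0, dtype=float), t0
    energies = [energy(y, lam, beta)]
    for _ in range(steps):
        if implicit:
            # fully backward (implicit Euler): (I - h A(t+h)) y_new = y
            A = system_matrix(t + h, lam, alpha, beta, gamma)
            y = np.linalg.solve(np.eye(2) - h * A, y)
        else:
            # forward Euler: y_new = (I + h A(t)) y
            A = system_matrix(t, lam, alpha, beta, gamma)
            y = y + h * (A @ y)
        t += h
        energies.append(energy(y, lam, beta))
    return y, np.array(energies)


_, e_backward = integrate([1.0, 0.0], h=0.5, steps=50, implicit=True)
_, e_forward = integrate([1.0, 0.0], h=0.5, steps=50, implicit=False)
```

With this fairly large step, `e_backward` decreases monotonically. `e_forward` already rises on the first step, which illustrates why explicit schemes lack an unconditional dissipation guarantee.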

Numerical Results: Convergence Properties and Swarm-Based Exploration

Extensive numerical experiments validate the theoretical predictions on both convex and nonconvex landscapes. For convex test functions (e.g., Rotated Hyper-Ellipsoid, Sphere, Sum Squares, Modified Sphere), the inertial methods consistently converge significantly faster than their theoretical bounds guarantee. The average local convergence exponents are defined from ratios of successive function-value decrements measured against the theoretical rate scaling. They typically exceed the theoretical exponent, which signifies decay faster than the worst-case bound.
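The exact exponent formula is not spelled out here. One plausible definition, assumed for illustration, fits $g_k = F(x_k) - F^\ast \approx C k^{-p}$ between consecutive iterates, giving $p_k = \log(g_k/g_{k+1}) / \log\big((k+1)/k\big)$. Comparing the average of $p_k$ with the exponent implied by $\delta_k$ (for example $q$ if $\delta_k \propto k^q$) then indicates whether the observed decay beats the theoretical rate. `local_exponents` is a hypothetical helper.

```python
import numpy as np


def local_exponents(gaps, ks):
    """Local exponents p_k assuming gap_k ~ C * k**(-p) between neighbours:
    p_k = log(gap_k / gap_{k+1}) / log(k_{k+1} / k_k).
    gaps must be positive; ks must be strictly increasing and positive."""
    gaps = np.asarray(gaps, dtype=float)
    ks = np.asarray(ks, dtype=float)
    return np.log(gaps[:-1] / gaps[1:]) / np.log(ks[1:] / ks[:-1])


# Synthetic check: an exact O(1/k^2) sequence has local exponent 2 everywhere.
ks = np.arange(1, 50)
p = local_exponents(5.0 / ks**2, ks)
print(p.mean())
```

On real optimizer traces the gaps must be strictly positive, so iterates that reach machine precision should be truncated before calling the helper.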

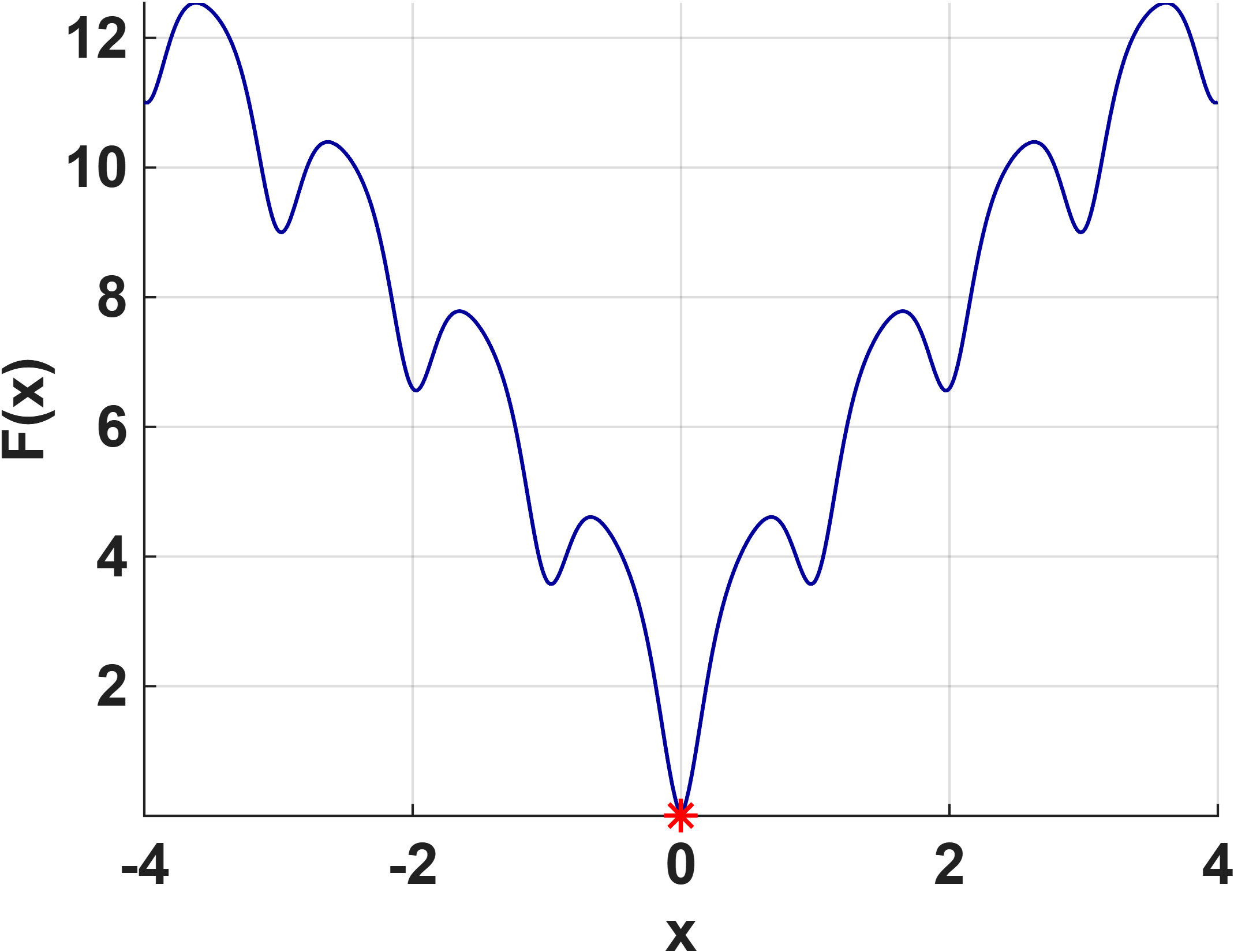

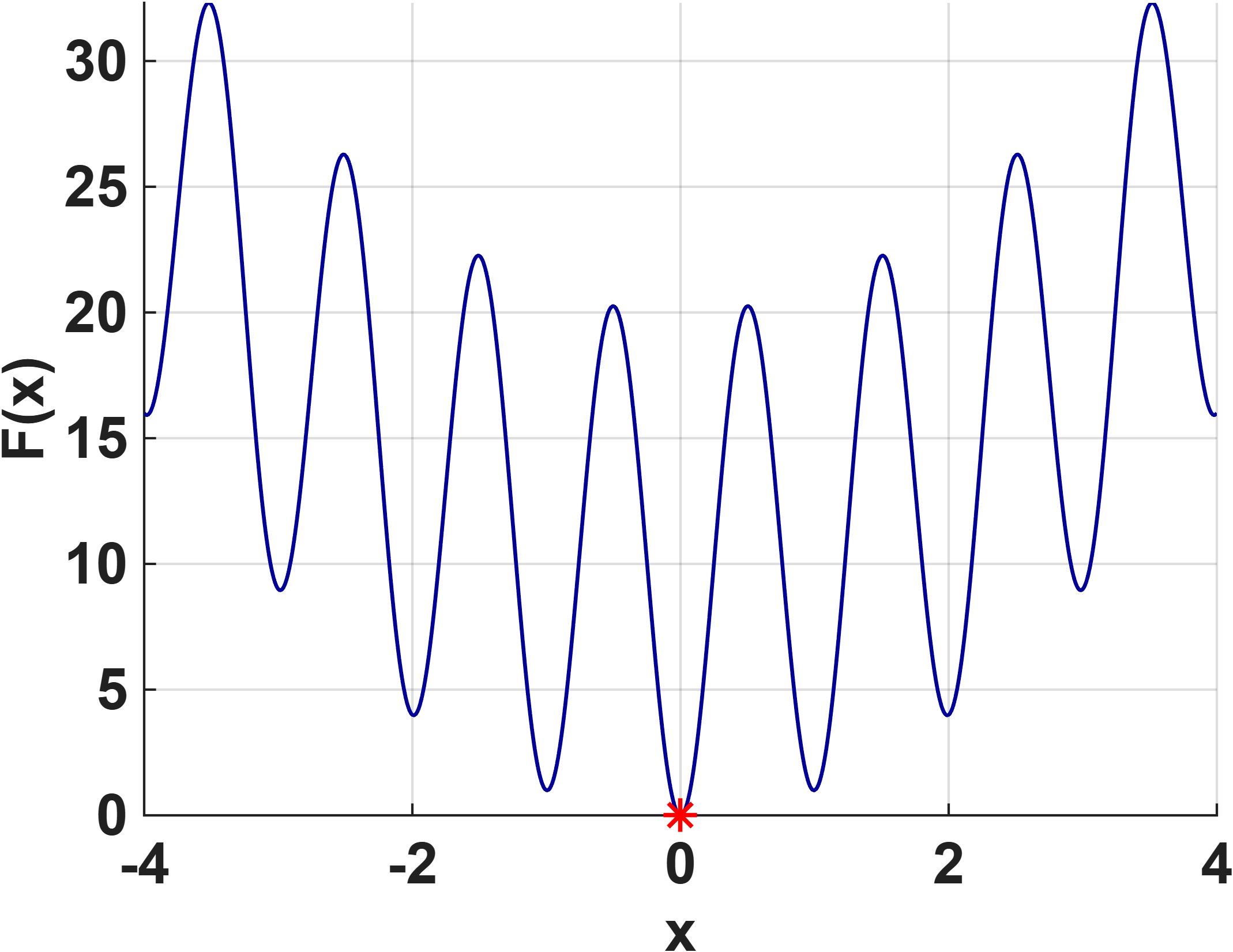

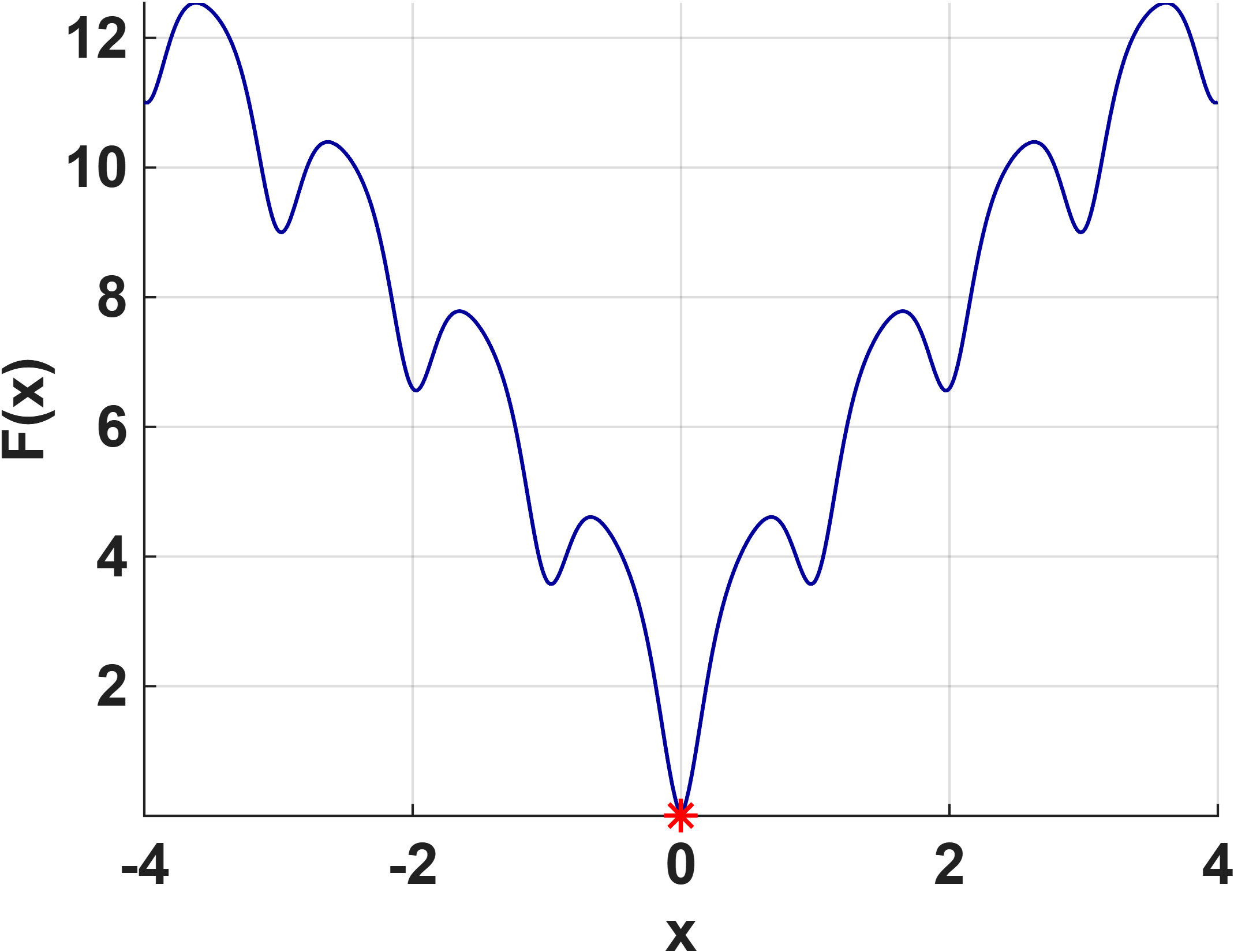

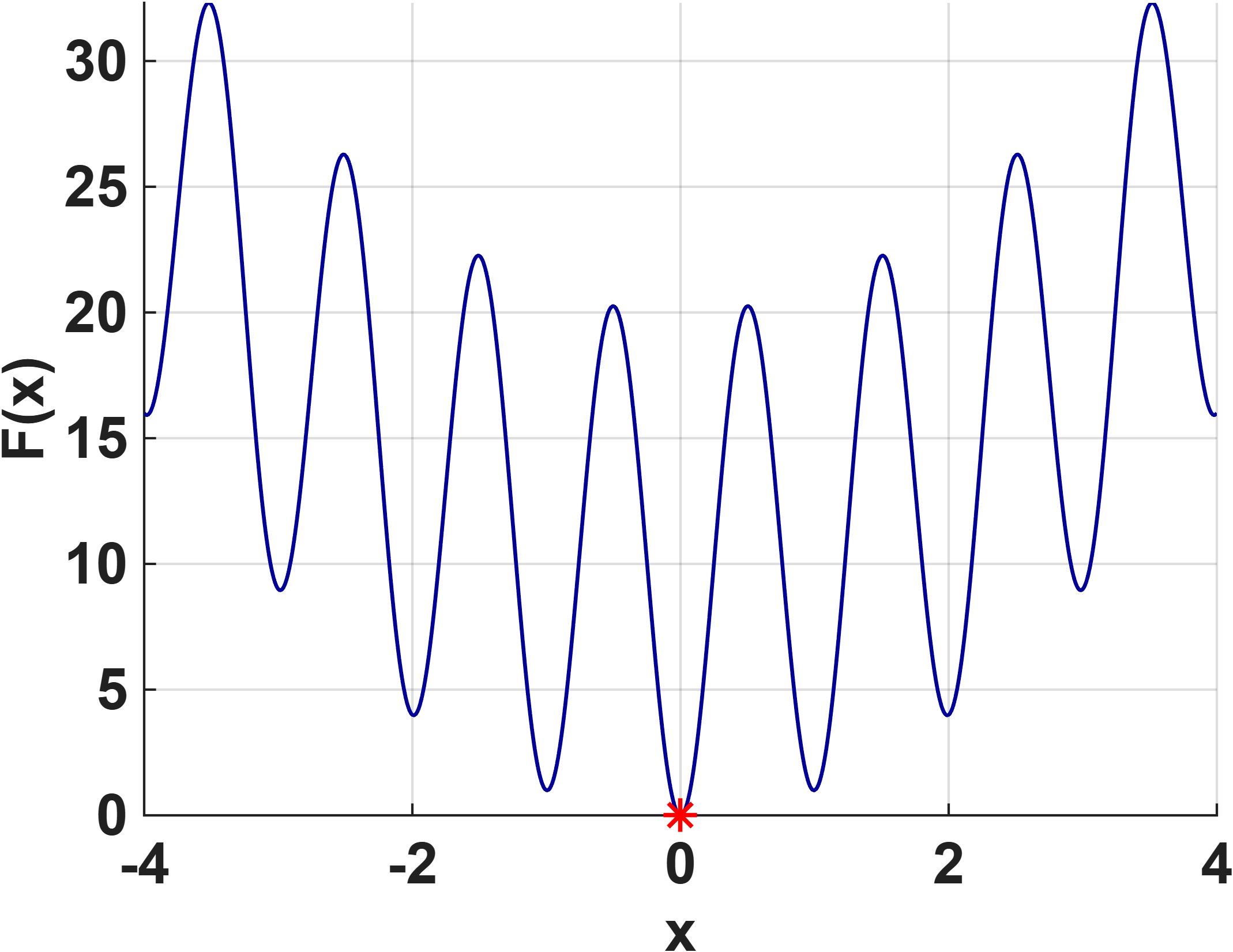

Nonconvex benchmark testing (Ackley, Rastrigin) highlights the superior exploration and stability that SBIMs gain from mass transport and agent merging. Methods that integrate Hessian-driven damping achieve high success rates and markedly better energy stability than classical Nesterov acceleration or gradient descent, which often show oscillatory energy profiles or get trapped in local minima.
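The nonconvex tests can be reproduced with the sketch below. It uses the common textbook forms of Ackley ($a=20$, $b=0.2$, $c=2\pi$) and Rastrigin ($A=10$), both minimized at the origin with value 0; the paper may use shifted or scaled variants. The success-rate harness runs plain gradient descent, the baseline the text reports getting trapped, and counts runs that finish near the global minimizer. Helper names, step size and tolerance are illustrative assumptions.

```python
import numpy as np


def ackley(x, a=20.0, b=0.2, c=2 * np.pi):
    """Standard Ackley function; global minimum F(0) = 0."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    n = x.size
    return (-a * np.exp(-b * np.sqrt(np.sum(x**2) / n))
            - np.exp(np.sum(np.cos(c * x)) / n) + a + np.e)


def rastrigin(x, A=10.0):
    """Standard Rastrigin function; global minimum F(0) = 0."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    return A * x.size + np.sum(x**2 - A * np.cos(2 * np.pi * x))


def rastrigin_grad(x, A=10.0):
    """Analytic gradient of rastrigin: 2x + 2*pi*A*sin(2*pi*x)."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    return 2.0 * x + 2.0 * np.pi * A * np.sin(2 * np.pi * x)


def success_rate(starts, step=1e-3, iters=2000, tol=1e-2):
    """Fraction of plain gradient-descent runs on Rastrigin that end within
    tol of the global minimizer 0 (a baseline, not an SBIM)."""
    hits = 0
    for x0 in starts:
        x = np.atleast_1d(np.asarray(x0, dtype=float))
        for _ in range(iters):
            x = x - step * rastrigin_grad(x)
        hits += int(np.linalg.norm(x) < tol)
    return hits / len(starts)


starts = np.linspace(-5.12, 5.12, 41)[:, None]   # 41 one-dimensional starting points
print(success_rate(starts))
```

Gradient descent succeeds only from starts already inside the central basin, so its success rate stays low. A swarm method with inertia and mass transfer is designed to improve on this baseline.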

Figure 1: Performance of SBIMs on the 1D Ackley function, showing robust monotonic energy decay and successful convergence to the global minimum.

Empirical Highlights and Method Comparison

SBIMs built on the new inertial dynamics, together with the fully discretized and IMEX-RB algorithms and the acceleration schemes, consistently outperform classical methods. Notably, the observed convergence rates are substantially better than the worst-case theoretical guarantees. For highly oscillatory functions such as Rastrigin, Hessian-informed SBIMs achieve the highest rates of successful global minimization and the most stable dissipation.

Explicit schemes (FB, Semi) are computationally cheaper and preserve most energy-stable features. Although they lack full theoretical dissipation guarantees, they deliver strong empirical results. Methods based solely on first-order information (gradient descent) lack robustness in high-dimensional or highly nonconvex settings, as evidenced by frequent convergence to non-global minima and erratic energy trajectories.
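The cost trade-off of explicit schemes can be illustrated with a linearly implicit step. Only the $\frac{\alpha}{t}\dot{x}$ friction is treated implicitly, as a scalar division, and everything else is explicit. Each step therefore needs one gradient and one Hessian-vector product and no linear solve. This is a generic semi-implicit Euler variant chosen for illustration, not the paper's FB or Semi scheme, and like them it carries no unconditional energy guarantee. `semi_implicit_run`, `hess_vec` and the `cross` flag are hypothetical.

```python
import numpy as np


def semi_implicit_run(grad, hess_vec, x0, h=0.05, steps=2000,
                      alpha=3.0, beta=1.0, gamma=0.1, t0=1.0, cross=False):
    """Integrate x'' + [alpha/t (I - 1 grad^T) + gamma*hess] x' + beta*grad = 0.

    Friction alpha/t * v is implicit (evaluated at the new velocity and time);
    the gradient, the Hessian damping and, if cross=True, the
    (alpha/t)(grad . v) 1 term are explicit. Returns the final position.
    """
    x = np.asarray(x0, dtype=float)
    v = np.zeros_like(x)
    t = t0
    for _ in range(steps):
        g = grad(x)
        force = -beta * g - gamma * hess_vec(x, v)            # explicit part
        if cross:
            force = force + (alpha / t) * np.dot(g, v) * np.ones_like(v)
        t_new = t + h
        v = (v + h * force) / (1.0 + h * alpha / t_new)      # implicit friction
        x = x + h * v                                         # position uses the new velocity
        t = t_new
    return x


# Ill-conditioned quadratic F(x) = x^T D x / 2 with D = diag(1, 10).
D = np.array([1.0, 10.0])
x_final = semi_implicit_run(lambda x: D * x, lambda x, v: D * v, [1.0, 1.0])
F_final = 0.5 * np.sum(D * x_final**2)
```

The implicit friction costs only a division, which is why such schemes stay cheap. Their stability still depends on the step size relative to the curvature of $F$.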

Theoretical and Practical Implications

The energy-dissipative SBIM framework extends classical optimization theory to multi-agent dynamical systems with adaptive inertia and friction. The results establish strong theoretical guarantees for convergence rates and energy monotonicity, supported by rigorous Lyapunov analyses and structure-preserving discretization. In practice, the methods improve robustness in nonconvex global optimization, and their demonstrated stability and exploration properties benefit high-dimensional applications.

Future work may explore adaptive parameter selection, computationally efficient Hessian approximations, stochastic variants, and deeper integration of communication and mass-transfer dynamics. The implications extend to scalable optimization, landscape exploration in AI, and the analysis of swarming and population-based algorithms under inertial and dissipative constraints.

Conclusion

The paper presents a mathematically rigorous framework for swarm-based inertial optimization methods, establishing both theoretical and empirical superiority over classical first-order and momentum-based schemes in challenging convex and nonconvex landscapes. SBIMs achieve enhanced exploration, robust convergence rates, and stable energy dissipation, providing a systematic foundation for future developments in swarm-based and large-scale optimization. The underlying energy-dissipative principles and structure-preserving discretizations may inform advances in AI optimization, high-dimensional scientific computing, and dynamical systems analysis.