- The paper shows that feedback learners believe comes from a human significantly increases engagement, with medium effect sizes (d = 0.61 for time on task and d = 0.56 for focus).

- It isolates attribution effects from timing effects, revealing that delayed feedback raises code complexity (d = 0.55, p = .008) without increasing behavioral engagement measures.

- Findings indicate that non-credible human attribution undermines intrinsic motivation and performance, highlighting the importance of authenticity in feedback.

Attribution and Credibility Effects in AI-Generated Feedback for Computing Education

Experimental Framework and Methodology

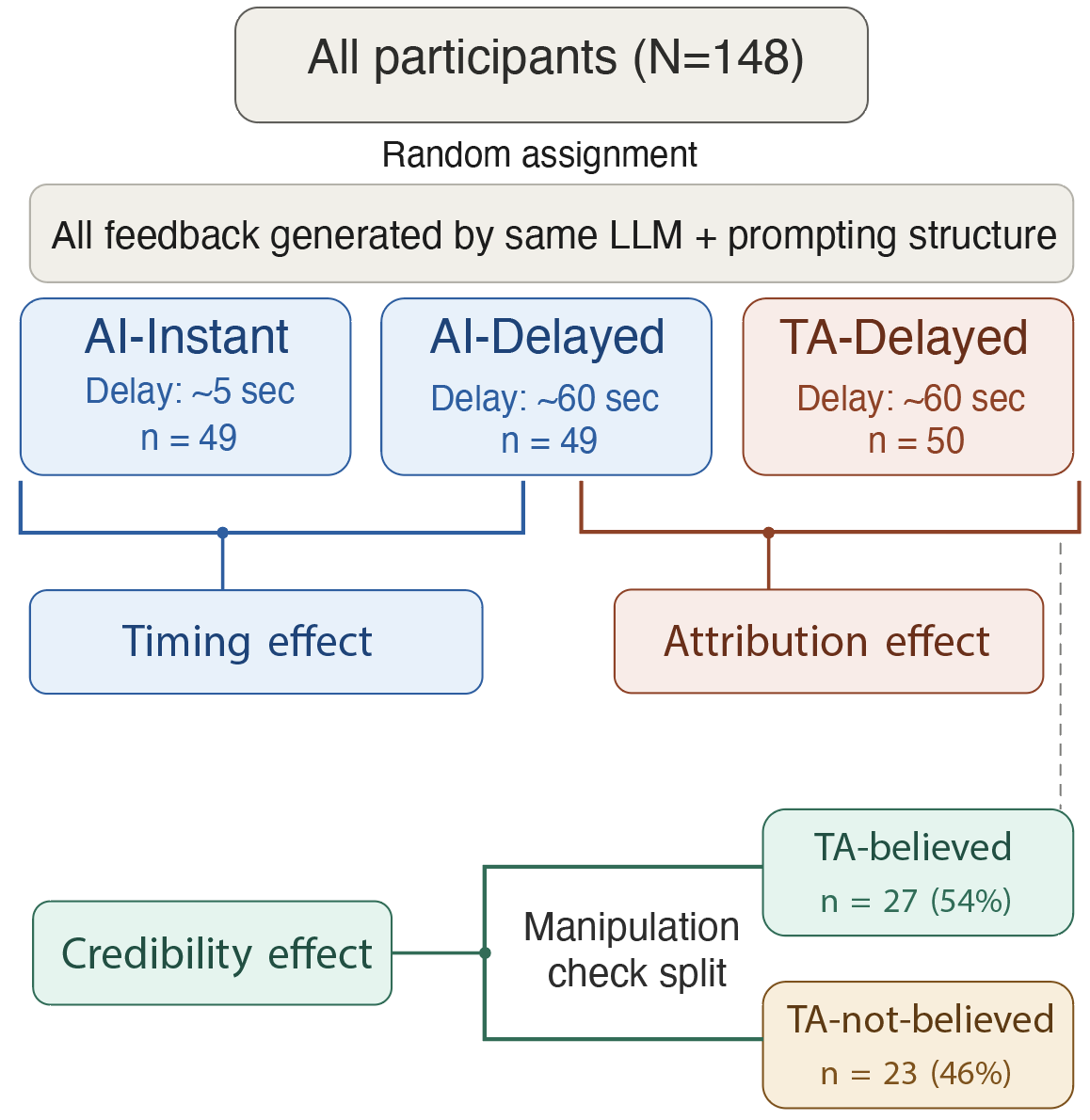

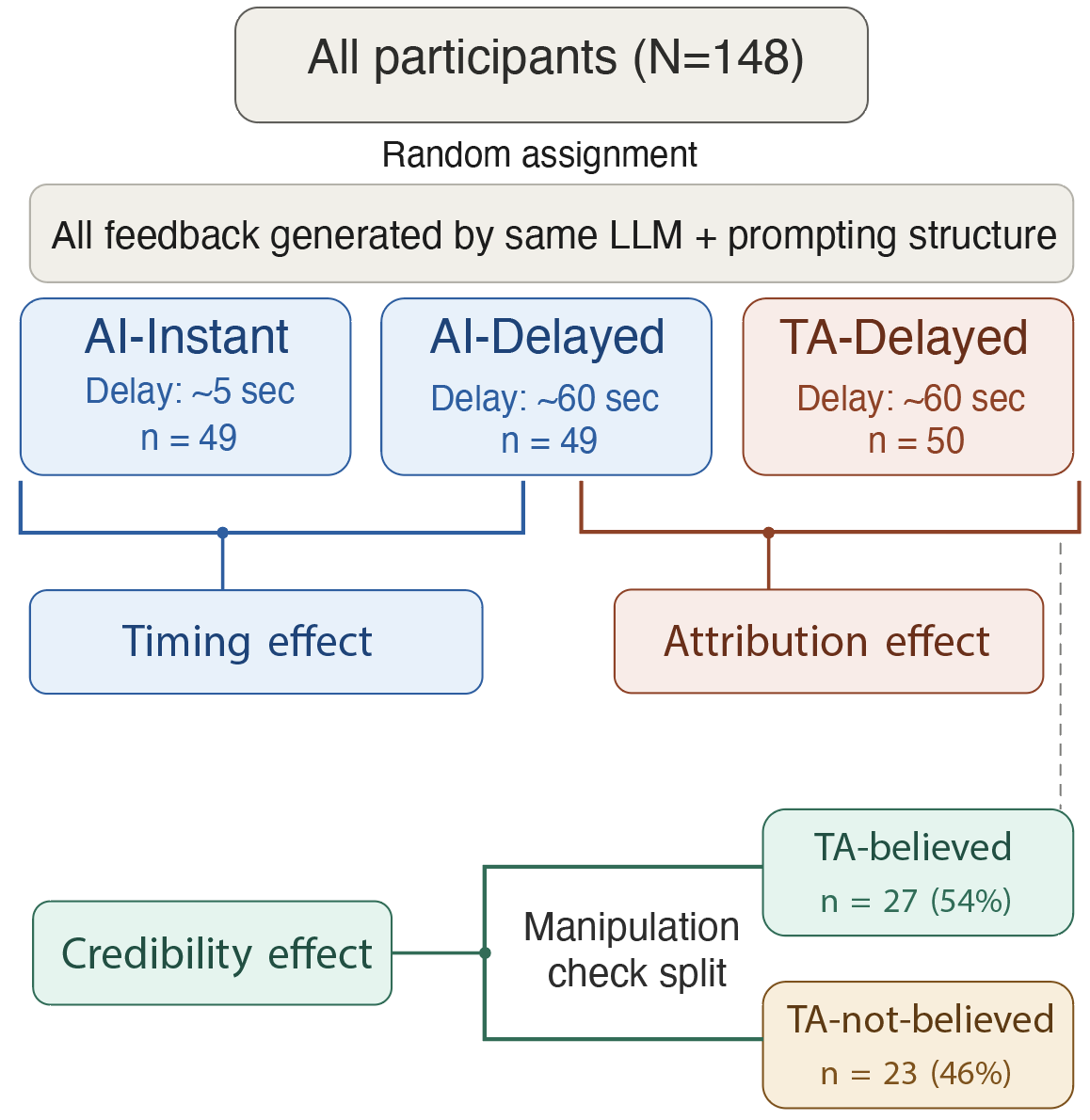

This study investigates how the attributed source of feedback (AI versus human) influences learner engagement and outcomes in computing education. The experiment uses a three-condition design that separates attribution effects from delivery timing: instant AI feedback (AI-Instant), delayed AI feedback (AI-Delayed), and delayed feedback attributed to a human teaching assistant (TA-Delayed). Critically, all feedback is generated by an LLM (Claude Sonnet 4) following identical prompting protocols, so content and tone are equivalent across attributed sources. Because AI-Delayed and TA-Delayed differ only in attribution, and AI-Instant and AI-Delayed differ only in timing, the design allows attribution effects to be assessed independently of timing, addressing a major methodological limitation of prior work.

Figure 1: Experiment design: AI-Instant, AI-Delayed, and TA-Delayed conditions separate attribution and timing.
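The logic of the three-condition design can be made explicit with a small sketch. This is an illustration only, not code from the study: the `Condition` class, its field names, and the `differing_factors` helper are hypothetical names introduced here. It shows which pairwise comparison isolates which factor, and why comparing AI-Instant with TA-Delayed directly would confound the two.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    """One experimental arm (field names are assumptions for illustration)."""
    name: str
    attributed_source: str  # what participants are told about the source
    timing: str             # "instant" or "delayed"
    generator: str = "LLM"  # identical in every arm, per the study design


CONDITIONS = {
    "AI-Instant": Condition("AI-Instant", "AI", "instant"),
    "AI-Delayed": Condition("AI-Delayed", "AI", "delayed"),
    "TA-Delayed": Condition("TA-Delayed", "human TA", "delayed"),
}

FACTORS = ("attributed_source", "timing", "generator")


def differing_factors(a: str, b: str) -> set:
    """Return the design factors on which two conditions differ."""
    ca, cb = CONDITIONS[a], CONDITIONS[b]
    return {f for f in FACTORS if getattr(ca, f) != getattr(cb, f)}


# Each clean contrast differs on exactly one factor.
timing_contrast = differing_factors("AI-Instant", "AI-Delayed")
attribution_contrast = differing_factors("AI-Delayed", "TA-Delayed")
# This pair differs on both factors, so it cannot isolate either one.
confounded_contrast = differing_factors("AI-Instant", "TA-Delayed")

print(timing_contrast, attribution_contrast, confounded_contrast)
```

The generator never appears in any contrast, which mirrors the study's choice to hold the feedback content constant and vary only the label and the delay.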

Behavioral, output, and perception metrics are logged throughout four self-paced creative coding modules in p5.js. These include time on task, code execution frequency, code complexity, and self-reported motivation and engagement. A manipulation check assesses credibility, that is, whether participants believe the feedback actually came from the attributed source.

Distinct Attribution and Timing Effects

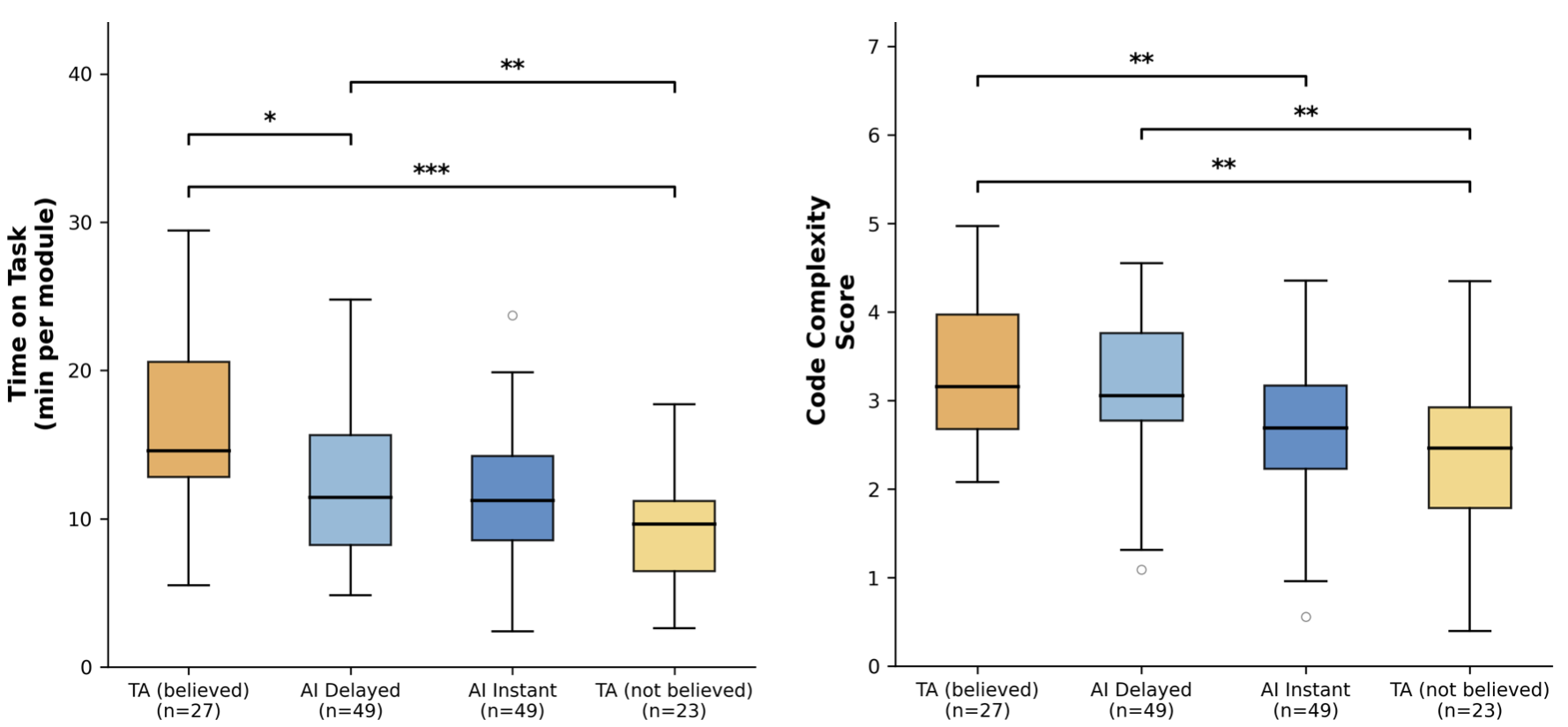

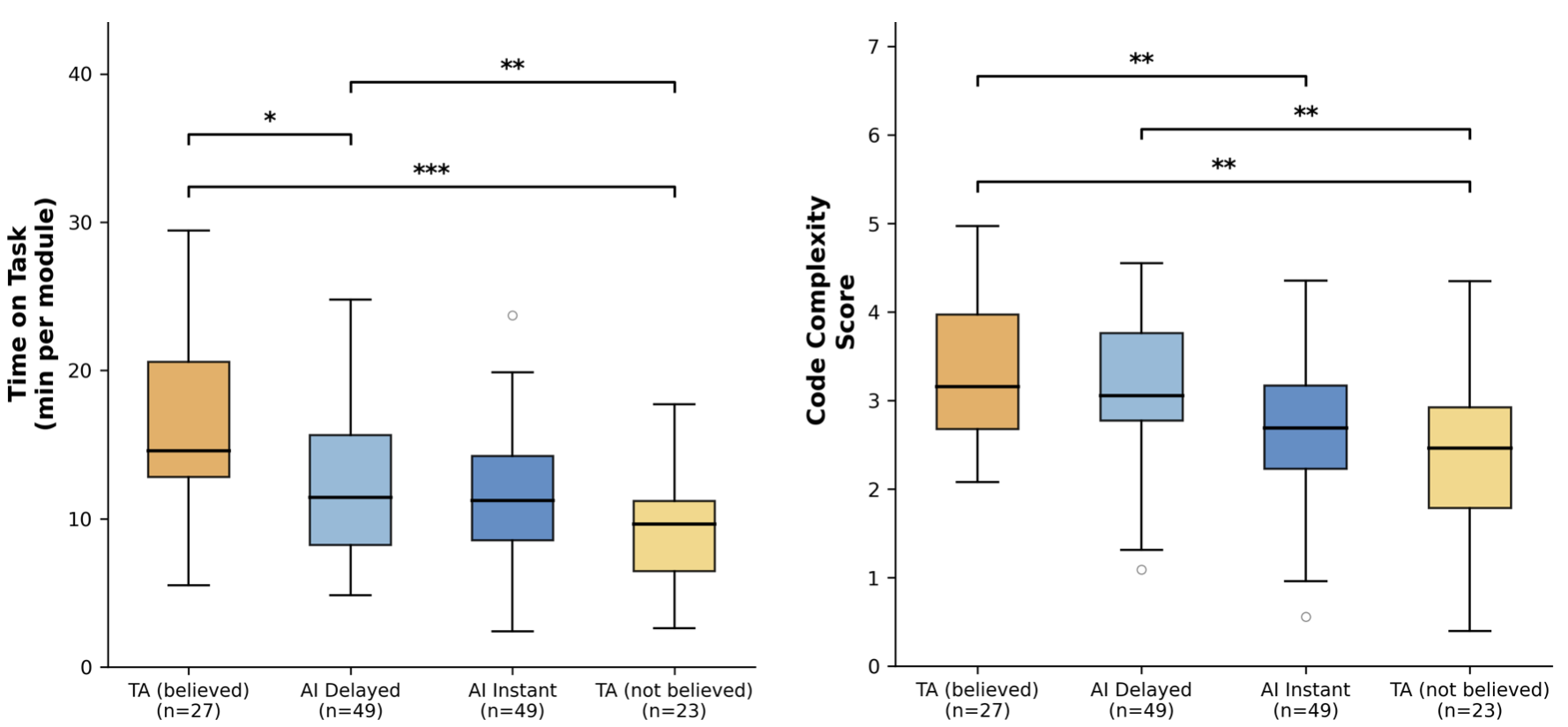

The study demonstrates that source attribution and delivery timing exert separable influences on learner behavior. Human attribution that participants believe (the TA-believed subgroup of TA-Delayed) produces statistically significant increases in time on task (d=0.61) and active focus (d=0.56) relative to the AI-Delayed condition, both medium effects. Delivery delay alone (AI-Instant vs. AI-Delayed) does not increase process measures, but it is associated with greater code complexity (d=0.55, p=.008), which suggests that temporal spacing fosters more reflective code production without raising engagement itself.

Figure 2: Behavioral measures (L: Time on task per module; R: Experience-adjusted code complexity score) by condition.
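For readers less familiar with the d values quoted in this summary, the following is a minimal sketch of Cohen's d using a pooled standard deviation, together with Cohen's conventional thresholds (0.2 small, 0.5 medium, 0.8 large). The summary does not say which variant of d the authors computed, so the pooled-SD form is an assumption, and the function names `cohens_d` and `effect_label` are hypothetical.

```python
import math
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample SD (assumed variant)."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / math.sqrt(pooled_var)


def effect_label(d):
    """Map |d| onto Cohen's conventional size labels."""
    size = abs(d)
    if size >= 0.8:
        return "large"
    if size >= 0.5:
        return "medium"
    if size >= 0.2:
        return "small"
    return "negligible"


# Toy numbers chosen for easy arithmetic; they are not data from the study.
toy_d = cohens_d([2, 4, 6], [1, 3, 5])
print(toy_d, effect_label(toy_d))

# Labels for the effect sizes reported in the summary.
print(effect_label(0.61), effect_label(0.56), effect_label(0.55))
```

Under these conventions the reported values of 0.61, 0.56, and 0.55 all fall in the medium band, which matches how the summary describes them.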

Helpfulness ratings do not differ significantly across conditions, indicating a dissociation between perceived helpfulness and actual behavioral impact, consistent with the feedback gap literature. The attribution effect emerges primarily in behavioral process indicators, and the timing effect in output measures.

Impact of Attribution Credibility

An exploratory analysis suggests that the credibility of the attribution is a key moderator. Nearly half of TA-Delayed participants (46%) do not believe the feedback is genuinely human-sourced. These non-believers exhibit the lowest behavioral and output metrics: significantly lower code complexity (d=0.77, p=.003), shorter programs (d=0.82, p=.002), and reduced focus (d=0.68, p=.009) compared with participants who received transparently attributed AI feedback. Conversely, TA-believers consistently outperform both AI groups across all measures.
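The exploratory split described above amounts to re-grouping TA-Delayed participants by their manipulation-check answer before comparing groups. A minimal sketch follows, assuming each participant has a condition label and a yes/no belief response. The record layout, the participant IDs, and the `analysis_group` helper are hypothetical, and the records are made up for illustration rather than taken from the study.

```python
from collections import defaultdict
from typing import Optional


def analysis_group(condition: str, believed_human: Optional[bool]) -> str:
    """Map a participant to an exploratory analysis group.

    Only TA-Delayed is split; the AI conditions keep their original label
    because their attribution is transparent.
    """
    if condition != "TA-Delayed":
        return condition
    if believed_human is None:
        raise ValueError("TA-Delayed participants need a manipulation-check answer")
    return "TA-believed" if believed_human else "TA-not-believed"


# Hypothetical records: (participant id, condition, manipulation-check answer).
records = [
    ("p01", "AI-Instant", None),
    ("p02", "AI-Delayed", None),
    ("p03", "TA-Delayed", True),
    ("p04", "TA-Delayed", False),
    ("p05", "TA-Delayed", True),
]

groups = defaultdict(list)
for pid, condition, believed in records:
    groups[analysis_group(condition, believed)].append(pid)

for name, members in sorted(groups.items()):
    print(name, members)
```

Because participants sort themselves into the believed and not-believed groups rather than being randomly assigned, comparisons involving these subgroups are correlational, which is why the analysis is labeled exploratory.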

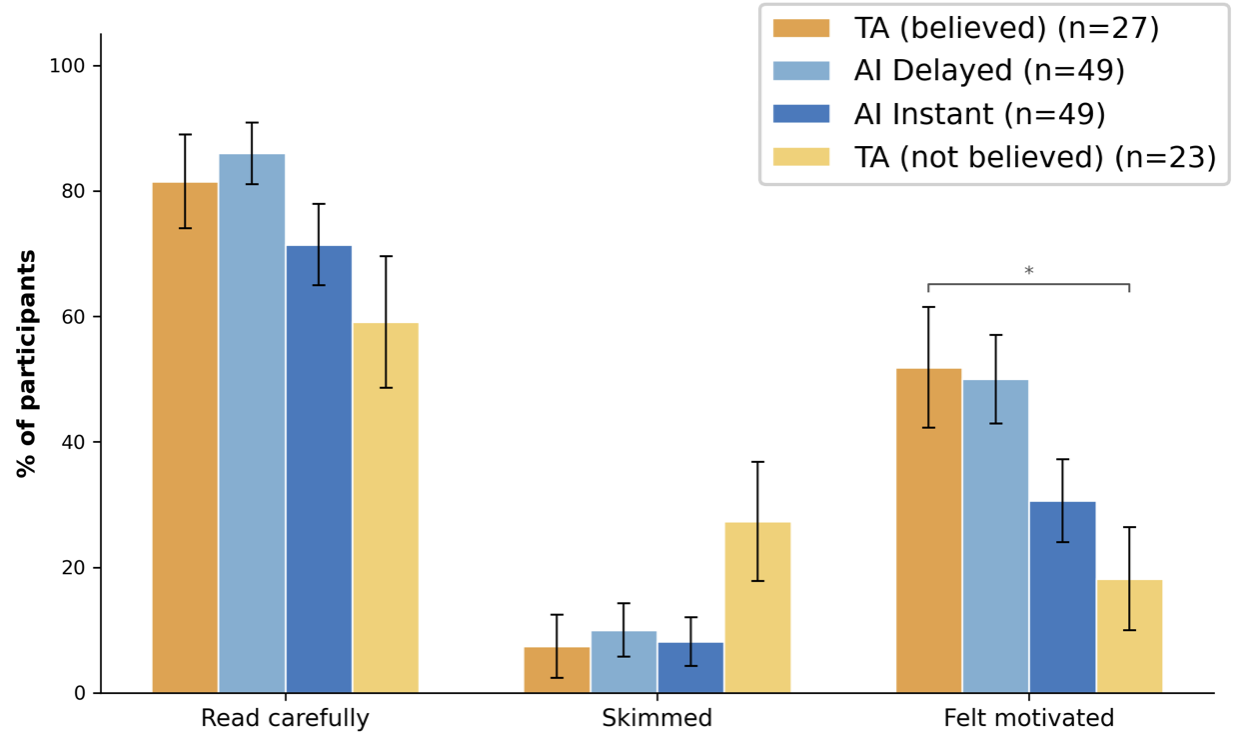

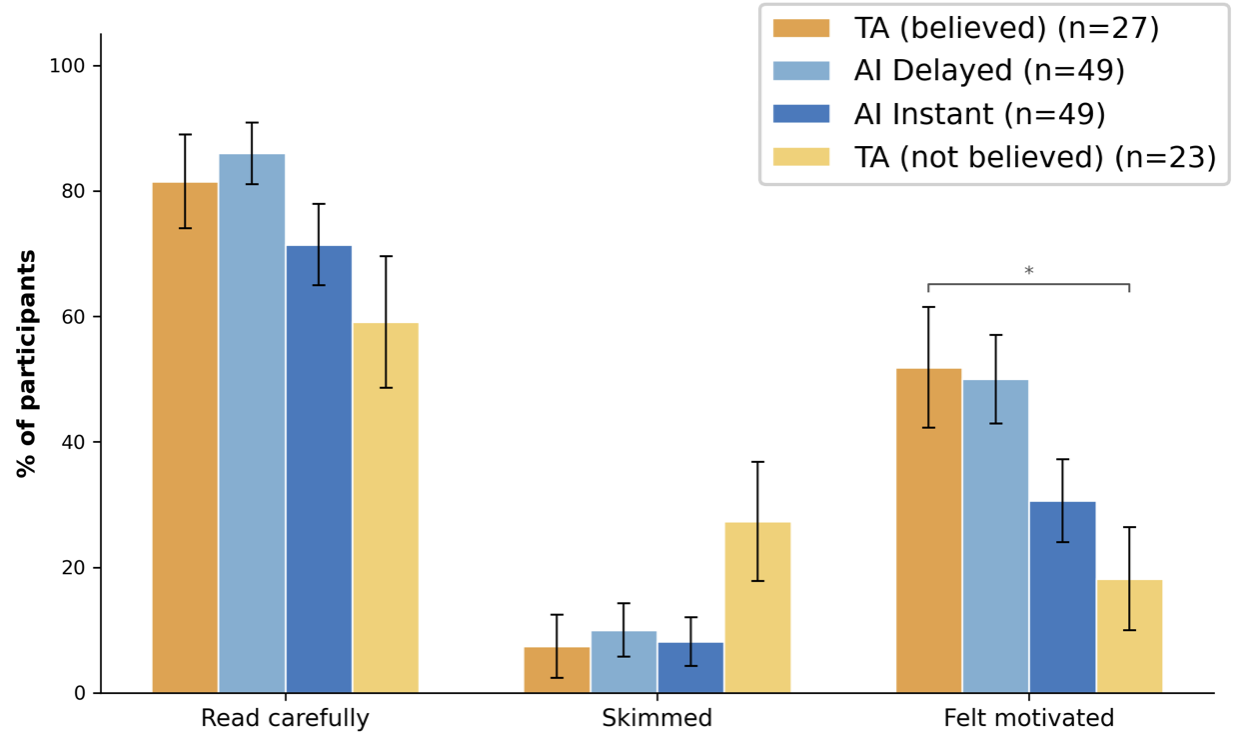

Figure 3: Self-reported feedback behavior by condition—TA-not-believed group skims feedback and reports lowest motivation.

Self-reported behavior in post-task surveys aligns with these outcomes: TA-not-believed participants skim feedback more frequently (26% vs. 7-10% in other groups) and report the lowest motivation. The behavioral withdrawal observed in the non-credible attribution group is substantial, with effect sizes exceeding 1.0 for several metrics between believers and non-believers.

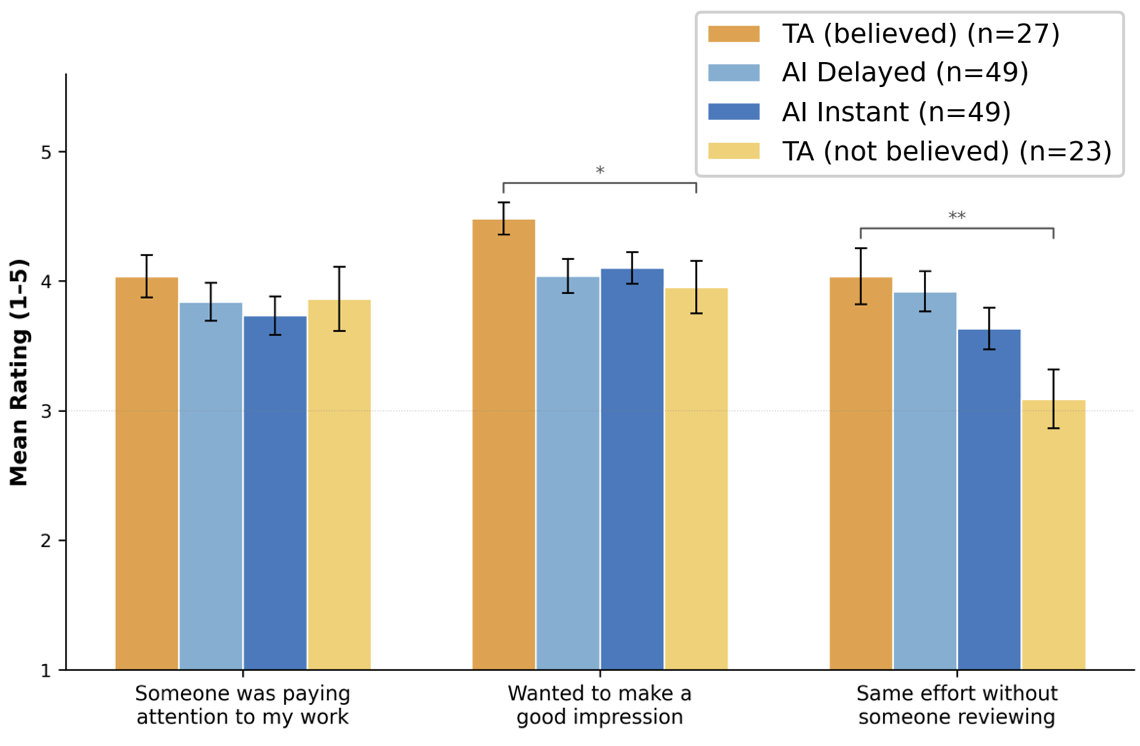

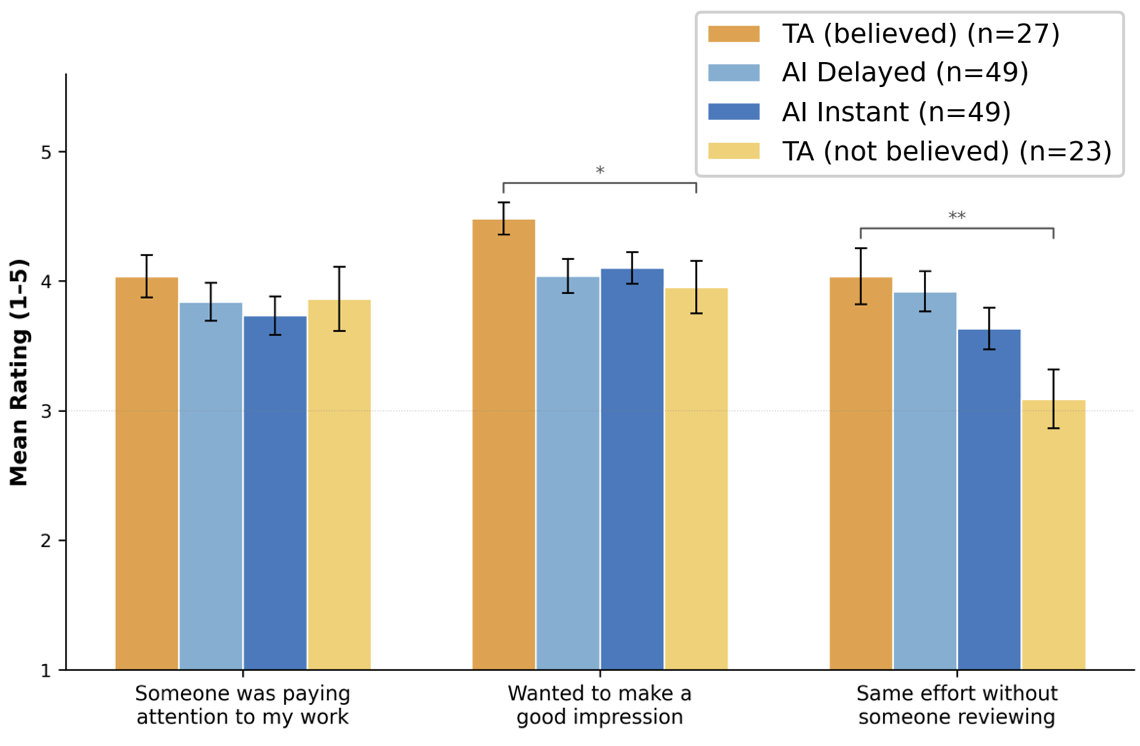

Mechanistic Insights: Motivation versus Social Presence

Survey data provide an initial test of mechanism. No significant differences are found in social presence measures (feeling watched, evaluated, desire to impress reviewer) across conditions, which argues against the hypothesis that surveillance or accountability drives attribution effects. Instead, intrinsic motivation and impression motivation show strong differentiation: TA-believed participants express the highest autonomous effort and enjoyment, while TA-not-believed participants report the lowest (d=1.01 for autonomous effort).

Figure 4: Selected motivation survey items by condition—TA-believed participants report highest autonomous effort and intrinsic interest.

These patterns are consistent with self-determination theory and trust violation models, which emphasize perceived authenticity and relational value over surveillance. The benefit of human attribution depends on credibility; non-credible claims undermine intrinsic motivation and behavioral investment more than transparent AI attribution does.

Practical and Theoretical Implications

The findings imply that genuine, credible human attribution in feedback offers measurable motivational benefits in computing education, as evidenced by increased engagement and higher quality code production. However, non-credible attribution (e.g., suspected impersonation or AI-authored feedback relabeled as human) reduces investment below the level seen with transparent AI, a risk that grows as learners become more AI-literate and skeptical. Transparent AI attribution is therefore recommended as a lower-risk default in settings where human attribution cannot be established as credible.

These results also inform design and policy for hybrid educational environments: as instructors increasingly use LLMs to draft or augment feedback, maintaining authenticity and transparency is critical. The motivational value of human presence is contingent; a failed credibility claim costs more than simply labeling feedback as AI-generated. This dynamic is likely to intensify in contexts with high AI literacy, calling for further research on attribution manipulation, credibility effects, and hybrid framing strategies (for example, "AI-assisted and human-reviewed" feedback).

Conclusion

Source attribution and delivery timing independently shape learning behavior in AI-augmented feedback contexts. Believed human feedback attribution increases engagement and performance, but only when credibility is maintained. Non-credible human attribution induces behavioral withdrawal, with outcomes worse than transparent AI feedback. The mechanistic analysis points to authenticity and intrinsic motivation—not social presence—as primary drivers. As AI systems take on instructional roles, educators must recognize the conditional value of human attribution and default to transparency where credibility is uncertain. These insights extend to broader contexts of human-AI content integration and warrant further investigation into attribution framing and trust dynamics in educational technology.