- The paper introduces a two-step diffusion framework that leverages reweighted empirical sampling followed by DDPM training to generate samples from exponentially tilted distributions.

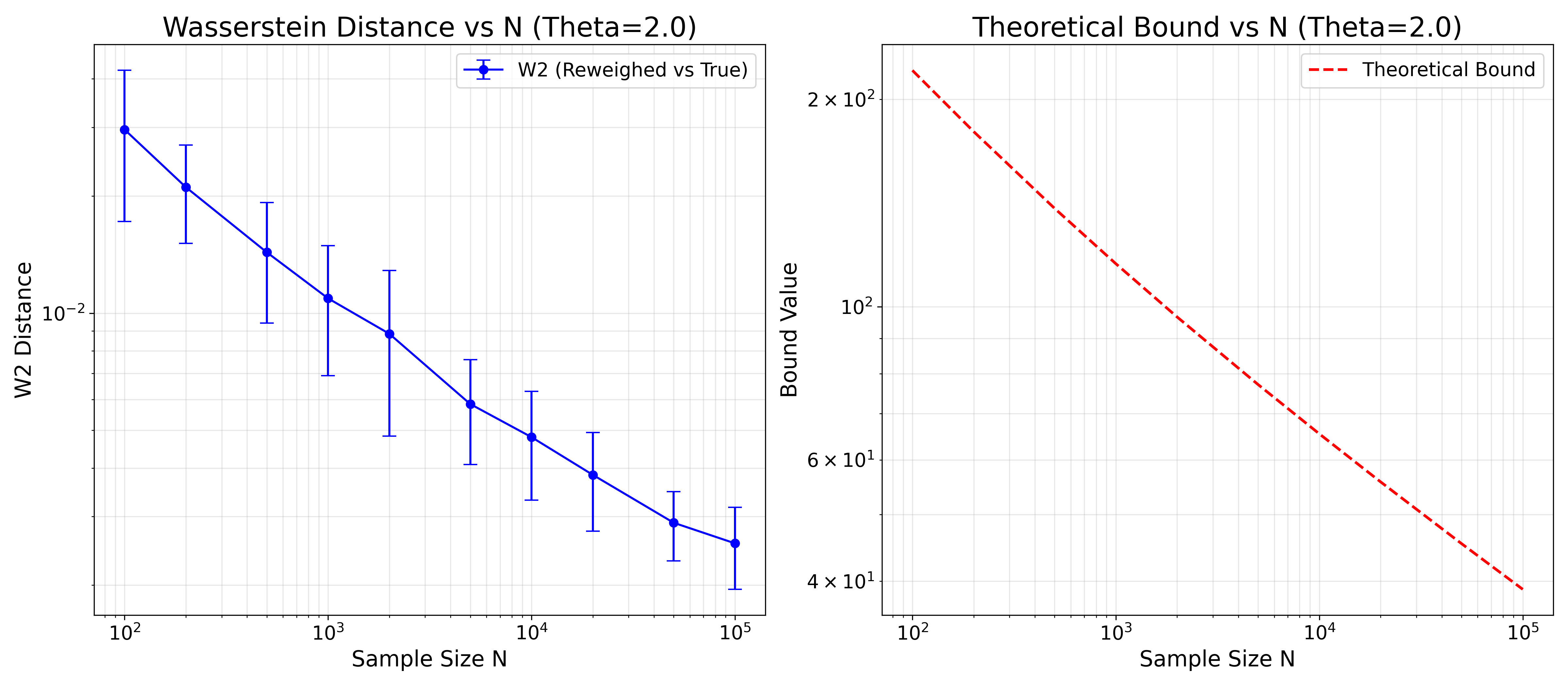

- It provides provable guarantees in Wasserstein and total variation metrics by quantifying error propagation from the reweighted estimator to the diffusion model output.

- Empirical validations, including high-dimensional tests and climate data simulations, show that the method is more robust than heuristic score-guidance methods for rare-event generation.

Generating DDPM-based Samples from Tilted Distributions: A Technical Exploration

Problem Statement and Context

The paper "Generating DDPM-based Samples from Tilted Distributions" (2604.03015) systematically addresses the challenge of sample generation from exponential tilts of an unknown high-dimensional distribution $\mu$. The task is, given $n$ i.i.d. samples from $\mu$, to generate samples from a related distribution $\nu(x) \propto \exp(\theta^\top g(x))\,\mu(x)$, where $\theta \in \mathbb{R}^d$ and $g(\cdot)$ is a tilting function, possibly the identity. This problem appears in critical applications including financial risk modeling, rare-event simulation, and climate science, where one must simulate from distributions that are subject to moment or risk constraints.
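For reference, the proportionality above can be written with its normalizing constant made explicit. The symbol $Z(\theta)$ is notation introduced here for illustration and is not taken from the paper; the gradient identity is the standard exponential-family fact, valid when the expectations are finite, that links the tilt to a moment constraint on $g$.

```latex
\nu(dx) = \frac{\exp\!\big(\theta^\top g(x)\big)}{Z(\theta)}\,\mu(dx),
\qquad
Z(\theta) = \mathbb{E}_{X\sim\mu}\!\left[\exp\!\big(\theta^\top g(X)\big)\right],
\qquad
\nabla_\theta \log Z(\theta) = \mathbb{E}_{X\sim\nu}\!\left[g(X)\right].
```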

Whilst prior literature mainly leverages (self-normalized) importance sampling or heuristic score-guidance in diffusion models for such tilting, little is known about precise statistical guarantees—especially in high-dimensional settings or for non-differentiable tilting functions. This paper bridges that gap by proposing a two-step diffusion-based methodology with provable guarantees in the Wasserstein and total variation (TV) metrics.

Algorithmic Framework

The proposed method consists of two stages:

- Reweighted Empirical Sampling: Given samples $X_1, \dots, X_n \sim \mu$, construct a weighted empirical measure $\mu_{n,\theta}$ by assigning each sample an importance weight $w_i = \exp(\theta^\top g(X_i))$. New samples are generated by drawing from the original samples with replacement, selecting $X_i$ with probability proportional to $w_i$.

- Diffusion Model Training and Sampling: Use samples from $\mu_{n,\theta}$ to train a denoising diffusion probabilistic model (DDPM), which then generates new samples via the reverse diffusion process.
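The first stage can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the helper names `tilt_weights` and `resample`, the 2D Gaussian base law, and the choice of `theta` are all hypothetical, and `g` defaults to the identity.

```python
import math
import random

def tilt_weights(samples, theta, g=lambda x: x):
    """Self-normalized weights w_i proportional to exp(theta^T g(X_i))."""
    scores = [sum(t * gi for t, gi in zip(theta, g(x))) for x in samples]
    shift = max(scores)  # subtracting the max avoids overflow in exp
    raw = [math.exp(s - shift) for s in scores]
    total = sum(raw)
    return [r / total for r in raw]

def resample(samples, weights, m, rng):
    """Draw m points with replacement, picking X_i with probability w_i."""
    return rng.choices(samples, weights=weights, k=m)

rng = random.Random(0)
# Toy base law mu: standard Gaussian in 2D (an assumption for illustration).
base = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(20000)]
theta = (1.0, 0.0)
weights = tilt_weights(base, theta)
tilted = resample(base, weights, 20000, rng)

# For this Gaussian toy case the tilted law is N(theta, I), so the
# resampled mean should land near (1, 0).
mean_x = sum(p[0] for p in tilted) / len(tilted)
mean_y = sum(p[1] for p in tilted) / len(tilted)
```

The resampled points in `tilted` would then serve as the training set for the DDPM in the second stage. Shifting the scores by their maximum changes nothing after normalization but keeps `exp` from overflowing for large tilts.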

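The central technical contributions are quantitative analyses of the statistical error of the reweighted estimator $\mu_{n,\theta}$ and of how that error propagates through the DDPM to the generated samples.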

Theoretical Analysis

Minimaxity of the Plug-in Estimator

A core result is the asymptotic minimaxity of the plug-in estimator (the reweighted empirical distribution) for the tilted measure in the Kolmogorov–Smirnov sense. Specifically, it is shown that, for bounded-support distributions and suitable moment conditions, no estimator can achieve a strictly better asymptotic rate in sup-norm deviation from the true (unknown) tilted CDF.
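To make the sup-norm criterion concrete, the sketch below measures the Kolmogorov–Smirnov gap between a weighted empirical CDF and a known tilted CDF. The setting is a toy chosen for illustration and is not from the paper: the base law is Uniform(0,1) with identity tilting function, for which the tilted CDF has the closed form $(e^{\theta x}-1)/(e^{\theta}-1)$. The function names are hypothetical.

```python
import math
import random

def weighted_ks(xs, weights, cdf):
    """Sup-norm gap between a weighted empirical CDF and a reference CDF."""
    pairs = sorted(zip(xs, weights))
    total = sum(w for _, w in pairs)
    gap, cum = 0.0, 0.0
    for x, w in pairs:
        below = cum / total   # weighted ECDF just before the jump at x
        cum += w
        at = cum / total      # weighted ECDF at x
        ref = cdf(x)
        gap = max(gap, abs(below - ref), abs(at - ref))
    return gap

def tilted_uniform_cdf(theta):
    """CDF of Uniform(0,1) tilted by exp(theta * x), in closed form."""
    return lambda x: math.expm1(theta * x) / math.expm1(theta)

rng = random.Random(1)
theta = 2.0
ks = {}
for n in (500, 5000, 50000):
    xs = [rng.random() for _ in range(n)]
    ws = [math.exp(theta * x) for x in xs]  # unnormalized tilt weights
    ks[n] = weighted_ks(xs, ws, tilted_uniform_cdf(theta))
```

Inspecting `ks` for growing `n` gives a rough empirical view of how quickly the plug-in estimator approaches the tilted CDF in this toy case.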

Wasserstein Bounds

The paper derives non-asymptotic bounds on the Wasserstein distance between the reweighted empirical measure $\mu_{n,\theta}$ and the tilted target $\nu$ as a function of the sample size $n$, the dimension $d$, and moments of the tilted measure, generalizing the classic rates for empirical measures [Fournier and Guillin, 2013] to the reweighted case. Two key theorems address measures with bounded support and unbounded but moment-constrained measures, establishing rates that essentially match those of the i.i.d. empirical estimator, up to multiplicative constants that depend polynomially or, in some regimes, exponentially on the tilt norm $\|\theta\|$. The exponential dependence reflects the well-known instability of importance sampling in large-deviation regimes.
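The instability mentioned above can be seen through the Kish effective sample size, a standard importance-sampling diagnostic that is used here for illustration and is not claimed to appear in the paper. The sketch assumes a 1D standard Gaussian base law with identity tilting function, where the population value of the effective fraction is $e^{-\theta^2}$.

```python
import math
import random

def effective_sample_size(log_weights):
    """Kish effective sample size (sum w)^2 / sum w^2, from log-weights."""
    shift = max(log_weights)  # stabilizes exp without changing the ratio
    w = [math.exp(lw - shift) for lw in log_weights]
    return sum(w) ** 2 / sum(v * v for v in w)

rng = random.Random(2)
n = 50000
xs = [rng.gauss(0, 1) for _ in range(n)]

# Fraction of the n samples that effectively contributes at each tilt.
ess_fraction = {}
for theta in (0.0, 0.5, 1.0, 1.5, 2.0):
    ess_fraction[theta] = effective_sample_size([theta * x for x in xs]) / n
```

The fraction equals one at `theta = 0` and shrinks as the tilt grows, so the number of base samples needed for a fixed accuracy rises quickly with the tilt strength.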

Diffusion Process Error Propagation

The paper rigorously analyzes how the error in the input distribution (Wasserstein distance between the plug-in estimator and the true tilt) translates into error in the output of the DDPM. The error accumulation is quantified assuming a Lipschitz condition on score approximation, and invoking recent advances on the accuracy of diffusion sampling under perturbed input distributions (Chen et al., 2022). Explicit upper bounds in total variation are derived as a function of Wasserstein error and score-matching loss.
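One standard ingredient behind statements of this kind is that the DDPM forward noising map does not expand Wasserstein distance. The derivation below is a sketch of that coupling argument only, not the paper's theorem, which also involves the score-matching loss. The notation $\bar\alpha_t$ is the usual DDPM cumulative noise schedule and is an assumption here.

```latex
% Forward noising of two inputs driven by the same Gaussian noise \varepsilon:
x_t = \sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\varepsilon,
\qquad
y_t = \sqrt{\bar\alpha_t}\,y_0 + \sqrt{1-\bar\alpha_t}\,\varepsilon
\quad\Longrightarrow\quad
\|x_t - y_t\| = \sqrt{\bar\alpha_t}\,\|x_0 - y_0\| .

% Choosing (x_0, y_0) as an optimal coupling of the two input laws gives
W_p\big(\mathrm{Law}(x_t), \mathrm{Law}(y_t)\big)
\;\le\; \sqrt{\bar\alpha_t}\; W_p\big(\mathrm{Law}(x_0), \mathrm{Law}(y_0)\big),
\qquad 0 < \bar\alpha_t \le 1 .
```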

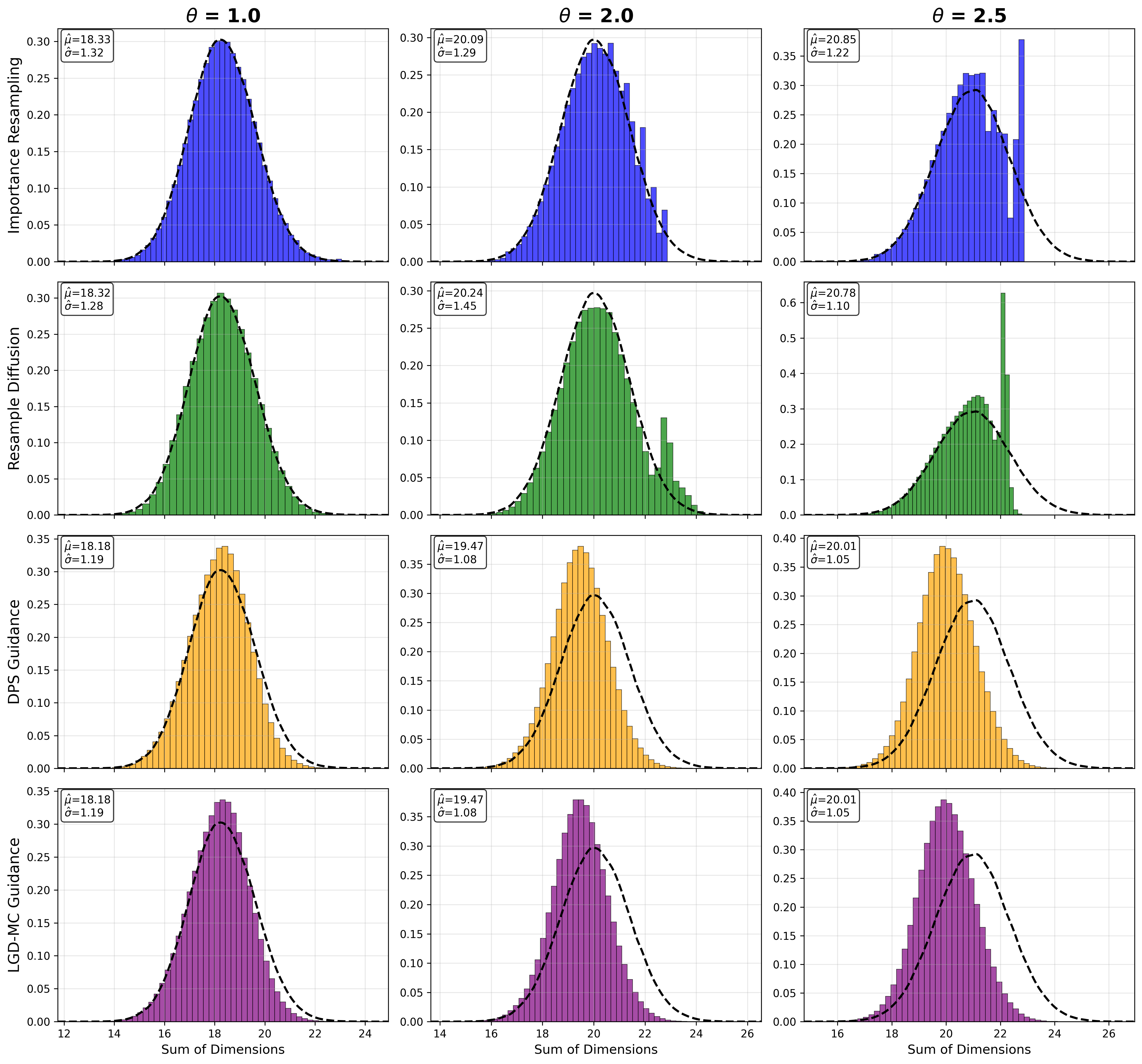

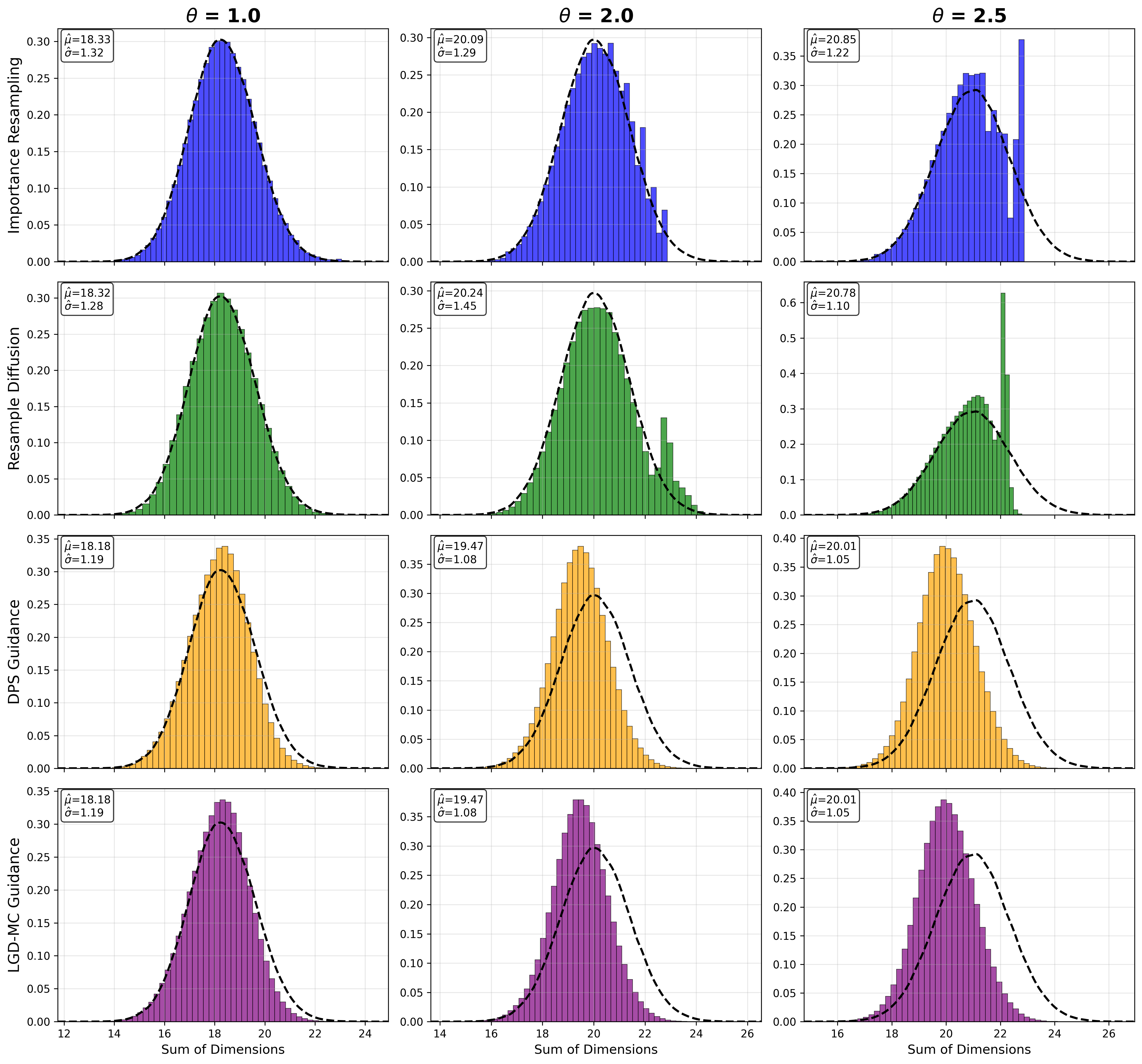

Figure 2: Samples generated by tilting a bounded distribution in 50D with different values of $\theta$, comparing reweighted sampling, diffusion, DPS, and LGD-MC; the proposed method tracks the empirical samples closely, outperforming guidance-based approaches.

Empirical Validation

The theoretical rates are verified through comprehensive simulations:

- In moderate dimensions and for various tilt strengths, the decrease of the empirical sliced Wasserstein distance conforms closely to the predicted rates as the sample size $n$ increases.

- The proposed approach is benchmarked against diffusion posterior sampling (DPS) and loss-guided diffusion (LGD-MC) on high-dimensional structured, non-Gaussian targets. The DDPM trained on reweighted samples matches the actual tilted empirical law, while heuristic guidance methods degrade rapidly for large $\|\theta\|$.

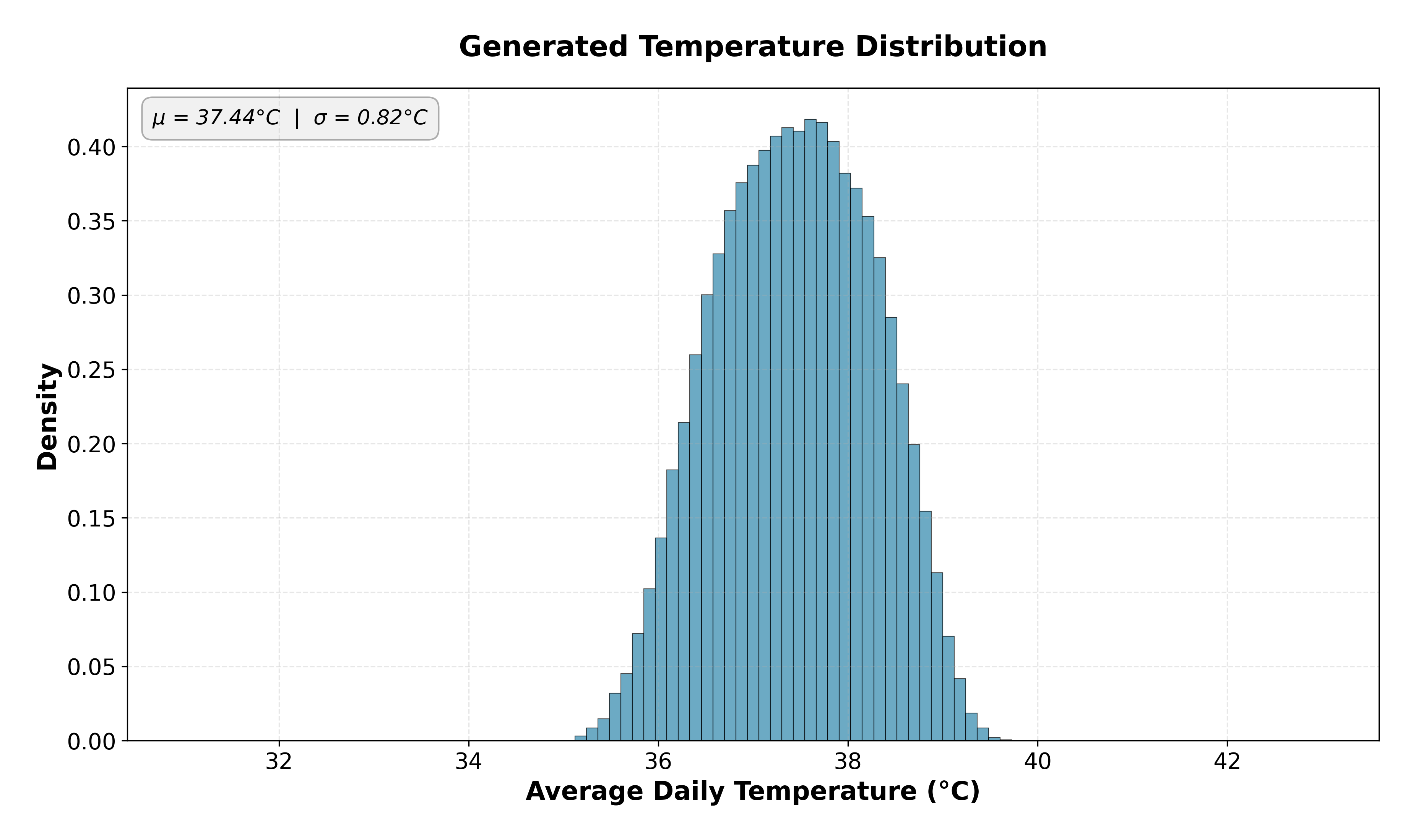

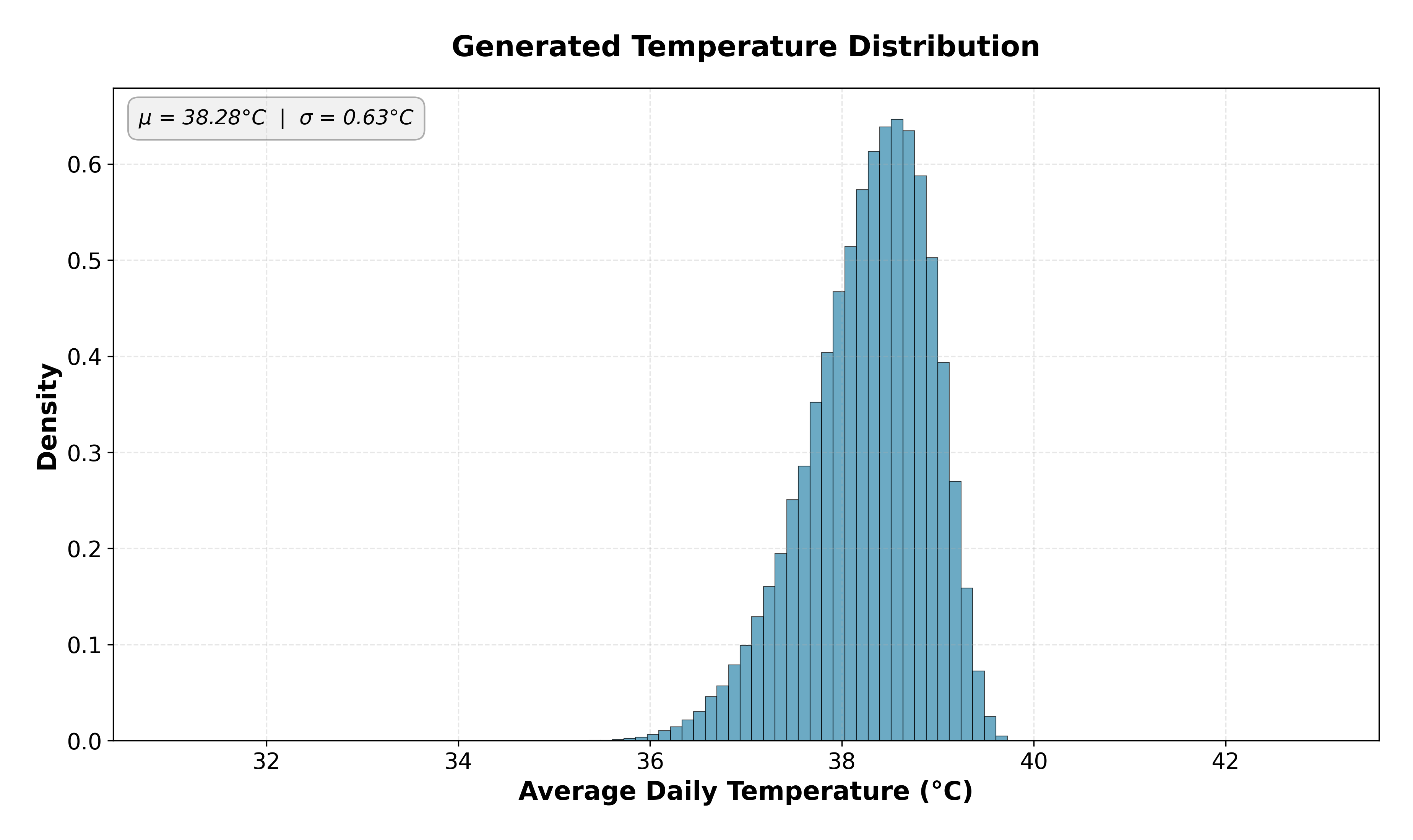

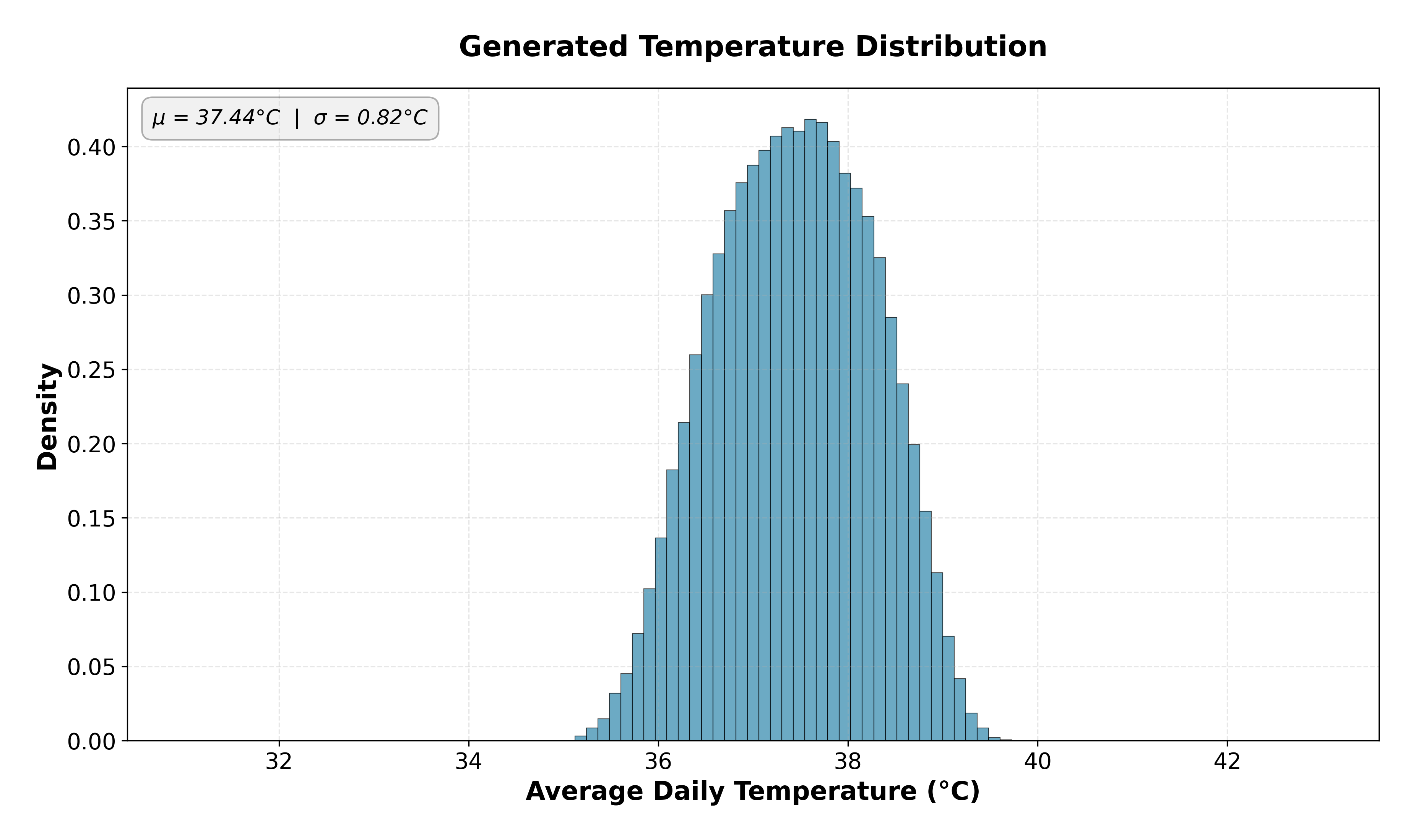

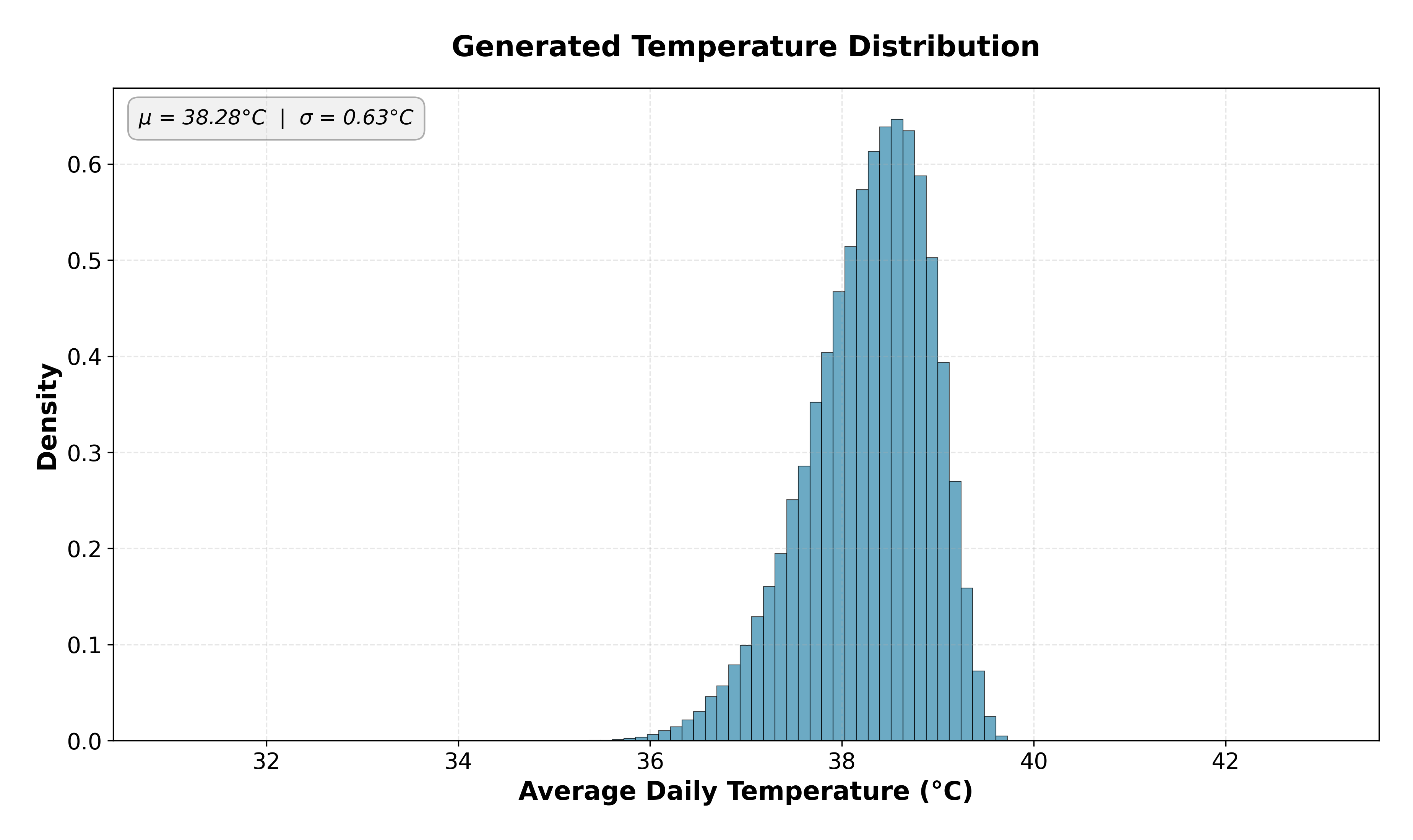

- A practical application is demonstrated in climate data: by tilting the temperature distribution over India to enforce higher mean, the DDPM can reproduce rare, hotter scenarios accurately via reweighted training.

Figure 3: DDPM samples from the baseline (untilted) climate distribution, showing realistic daily temperature fields.

Figure 4: DDPM samples from the exponentially tilted distribution, with the model targeting the hotter, rarer slice and achieving the specified moment constraint.
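The sliced Wasserstein distance used in the simulations above can be estimated by averaging one-dimensional Wasserstein distances over random projections. The sketch below is a plain-Python illustration for weighted point clouds, with order 1 chosen as an assumption. It is not the paper's evaluation code, and the function names and the 3D Gaussian toy data are hypothetical.

```python
import math
import random

def w1_weighted_1d(xs, wx, ys, wy):
    """W1 between two weighted 1D empirical measures: integral of |F_x - F_y|."""
    sx, sy = sum(wx), sum(wy)
    # Signed mass events: +w/sx for the first measure, -w/sy for the second.
    events = sorted([(x, w / sx) for x, w in zip(xs, wx)] +
                    [(y, -w / sy) for y, w in zip(ys, wy)])
    dist, diff, prev = 0.0, 0.0, events[0][0]
    for pos, mass in events:
        dist += abs(diff) * (pos - prev)  # F_x - F_y is constant on [prev, pos)
        diff += mass
        prev = pos
    return dist

def sliced_w1(X, wx, Y, wy, n_proj, rng):
    """Monte Carlo average of 1D W1 over random unit directions."""
    d = len(X[0])
    total = 0.0
    for _ in range(n_proj):
        v = [rng.gauss(0, 1) for _ in range(d)]
        norm = math.sqrt(sum(c * c for c in v))
        v = [c / norm for c in v]  # uniform direction on the unit sphere
        px = [sum(a * b for a, b in zip(x, v)) for x in X]
        py = [sum(a * b for a, b in zip(y, v)) for y in Y]
        total += w1_weighted_1d(px, wx, py, wy)
    return total / n_proj

rng = random.Random(3)
d, n, theta = 3, 2000, (1.0, 0.0, 0.0)
base = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(n)]
w = [math.exp(sum(t * c for t, c in zip(theta, x))) for x in base]
# In this Gaussian toy case the tilted law is N(theta, I), sampled directly here.
target = [[rng.gauss(t, 1) for t in theta] for _ in range(n)]
ones = [1.0] * n
sw_tilted = sliced_w1(base, w, target, ones, 50, rng)
sw_untilted = sliced_w1(base, ones, target, ones, 50, rng)
```

Comparing `sw_tilted` with `sw_untilted` shows how much closer the reweighted cloud is to the tilted target than the raw base samples are.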

Implications for Practice and Theory

The presented framework enables principled simulation from complex, high-dimensional, exponentially tilted distributions using only samples from the base distribution, circumventing the need for explicit density access or differentiability assumptions on $g$. This is critical in operational risk, stress-testing, rare climate event modeling, and robust scenario generation, where moment constraints dictate the desired output distribution.
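When the target is specified through a moment constraint, the tilt has to be chosen so that the reweighted mean hits the desired value. The summary does not say how the paper selects the tilt, so the following is only one plausible approach for a scalar statistic: bisection on the reweighted mean, which is non-decreasing in the tilt because its derivative is the weighted variance. The function names and the Gaussian stand-in data are hypothetical.

```python
import math
import random

def tilted_mean(xs, theta):
    """Mean of the reweighted empirical measure with weights exp(theta * x)."""
    shift = max(theta * x for x in xs)  # stabilizes exp
    w = [math.exp(theta * x - shift) for x in xs]
    return sum(wi * x for wi, x in zip(w, xs)) / sum(w)

def solve_tilt(xs, target, lo=0.0, hi=10.0, tol=1e-8):
    """Bisection for theta such that tilted_mean(xs, theta) matches target."""
    if not tilted_mean(xs, lo) <= target <= tilted_mean(xs, hi):
        raise ValueError("target mean is not bracketed by [lo, hi]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if tilted_mean(xs, mid) < target:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

rng = random.Random(4)
# Hypothetical stand-in for a scalar summary such as a regional mean temperature.
xs = [rng.gauss(30.0, 2.0) for _ in range(20000)]
theta_hat = solve_tilt(xs, target=32.0)
achieved = tilted_mean(xs, theta_hat)
```

For a Gaussian base law with variance 4, the population tilted mean is $30 + 4\theta$, so `theta_hat` should come out close to 0.5 in this toy case. In higher dimensions the same idea would need a multivariate root-finder instead of bisection.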

From a theoretical perspective, the work closes several open questions:

- It justifies the plug-in estimator's optimality for weighted empirical measures in the minimax sense for exponential tilts.

- It quantifies precisely how input distribution error propagates through the DDPM, offering data-driven guidance on sample size versus tilting strength trade-offs.

- It elucidates the limitations of existing diffusion guidance heuristics, advocating for reweighted augmentation as a robust alternative.

Future research directions include tightening the dependence of sample complexity on $\|\theta\|$ (currently exponential), extending the analysis to alternative diffusion mechanisms (variance-exploding, non-VP, or rectified flows), and exploring lower bounds and risk-specific metrics beyond Wasserstein or TV.

Conclusion

This work provides a unified, theoretically grounded strategy for generating samples from exponentially tilted distributions using diffusion models. Through minimax-optimal plug-in weighting and rigorous error propagation analysis, the approach achieves strong statistical guarantees, outperforms heuristic guidance methods for large deviations, and enables robust applications in simulation-driven sciences and engineering.
