- The paper presents S-Researcher, a platform that leverages LLM agents for automated, scalable social science simulation.

- It integrates auto-programming from natural language with simulation frameworks to support inductive, deductive, and abductive research paradigms.

- Empirical validations demonstrate high-quality behavior modeling and rapid scenario execution, paving the way for efficient human-AI research collaboration.

LLM Agents as Social Scientists: S-Researcher for Comprehensive Social Science Automation

Introduction

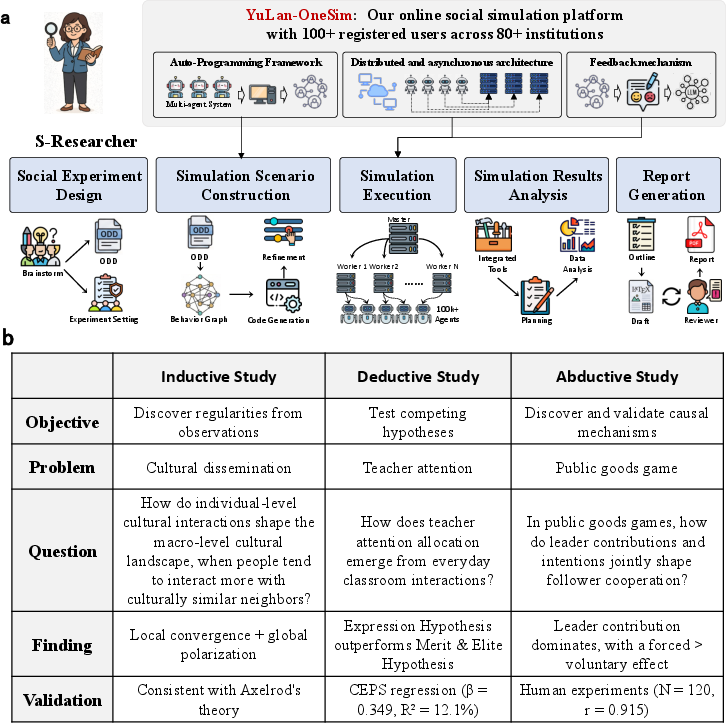

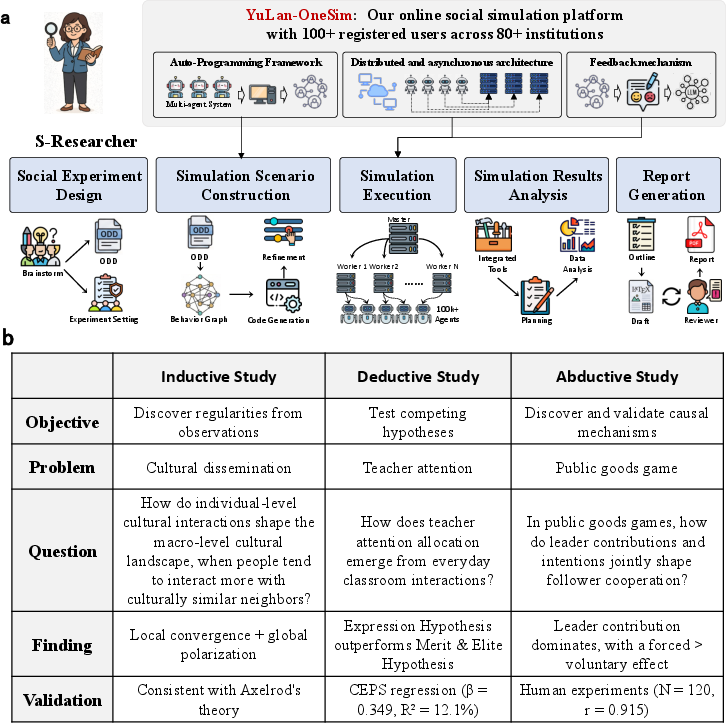

"LLM Agents as Social Scientists: A Human-AI Collaborative Platform for Social Science Automation" (2604.01520) introduces S-Researcher, an agentic framework that operationalizes LLMs as both "silicon scientists" and synthetic participants, enabling scalable, rigorous, and researcher-controllable simulation-based social science. The system integrates the YuLan-OneSim simulation engine with a structured research pipeline, supporting autonomous experiment design, agent-based behavioral simulation, automated data analysis, and report generation, with continuous human oversight.

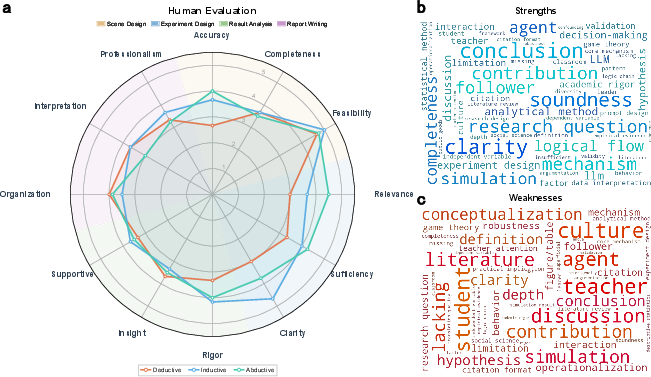

Figure 1: Overview of S-Researcher, including user interaction, automated scenario construction and execution, and three exemplifying case studies corresponding to inductive, deductive, and abductive research paradigms.

This essay synthesizes the technical advances of S-Researcher, analyzes its empirical results across canonical social science paradigms, and critically evaluates its contributions and limitations.

System Architecture and Key Innovations

General, Scalable, Reliable Social Simulation via YuLan-OneSim

S-Researcher's core simulation backend, YuLan-OneSim, addresses three requirements:

- Generality: An auto-programming pipeline converts free-form natural language scenario descriptions directly into ODD-protocol-valid executable code using behavior graphs, supporting arbitrary agent interaction and experimental logic.

- Scalability: A distributed, event-driven architecture supports up to 100,000 concurrent LLM agents, with topology-aware orchestration and gRPC-based, batched inter-process communication.

- Reliability: The Verifier–Reasoner–Refiner–Tuner (VR2T) feedback module integrates automated multi-agent critique and fine-tuning (using SFT or DPO), facilitating domain-specialization and error correction over repeated simulation runs.
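The event-driven execution model described above can be illustrated with a small single-process sketch. It assumes that a behavior graph can be represented as a mapping from event types to handler functions, and that "batching" means grouping all pending events by receiving agent once per tick. `Event`, `run_simulation`, and `on_greet` are hypothetical names; gRPC transport and topology-aware placement are omitted entirely, and this is not YuLan-OneSim's actual API.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Event:
    kind: str  # event type, i.e. an edge label in the behavior graph
    target: int  # id of the receiving agent
    payload: dict = field(default_factory=dict)


# Hypothetical behavior-graph encoding: event type -> handler that updates the
# receiving agent's state and returns the events it emits in response.
Handler = Callable[[dict, Event], List[Event]]


def run_simulation(graph: Dict[str, Handler], states: Dict[int, dict],
                   initial: List[Event], max_ticks: int = 10) -> int:
    """Process events tick by tick and return how many were handled."""
    queue = deque(initial)
    handled = 0
    for _ in range(max_ticks):
        if not queue:
            break
        # Drain the queue and batch events by target agent; in a distributed
        # setup each batch would become one message to the hosting worker.
        batches: Dict[int, List[Event]] = defaultdict(list)
        while queue:
            ev = queue.popleft()
            batches[ev.target].append(ev)
        for agent_id, events in batches.items():
            for ev in events:
                handler = graph.get(ev.kind)
                if handler is None:
                    continue  # no node for this event type: drop it
                # Emitted events are queued and processed on the next tick.
                queue.extend(handler(states[agent_id], ev))
                handled += 1
    return handled


def on_greet(state: dict, ev: Event) -> List[Event]:
    state["greetings"] = state.get("greetings", 0) + 1
    hops = ev.payload["hops"]
    if hops < 3:  # reply to the sender for a bounded number of hops
        return [Event("greet", ev.payload["sender"],
                      {"sender": ev.target, "hops": hops + 1})]
    return []


states = {0: {}, 1: {}}
handled = run_simulation({"greet": on_greet}, states,
                         [Event("greet", 1, {"sender": 0, "hops": 0})])
```

Separating "collect this tick's events" from "run handlers" keeps each tick deterministic in ordering, which is one simple way to make batched delivery reproducible.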

Figure 2: Evaluation of YuLan-OneSim: a) Expert ratings on code and behavior graph quality, b) error analysis, c–d) ablation analysis of graph/code refinements, e–f) scalability and deployment efficiency, g) reliability gains from feedback-driven fine-tuning in backbone LLMs (Qwen2.5-1.5B, Llama-3.2-1B).

Empirical validation across 50 diverse scenarios shows expert-rated behavior graphs near the quality ceiling (mean ≈ 5.0/5.0), strong generated code (mean ≈ 4.2/5.0), rapid scenario compilation, and efficient runtime scaling.

Operationalizing Scientific Reasoning Paradigms

S-Researcher integrates social science methodology through the formalization of three canonical reasoning paradigms:

- Induction: Pattern identification from simulated micro–macro dynamics without a priori theory.

- Deduction: Systematic hypothesis competition via parallel modeling.

- Abduction: Mechanistic inference via counterfactual decomposition and targeted manipulation.

Researchers can intervene at every stage—experiment design, simulation, analysis, and reporting—or decouple and use submodules independently.

Figure 1 (lower panel): Case study mapping by reasoning paradigm; workflows autonomously operationalized by S-Researcher.

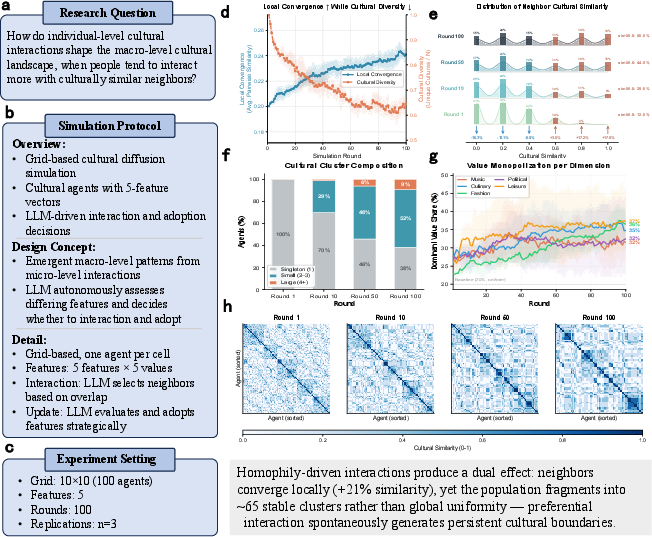

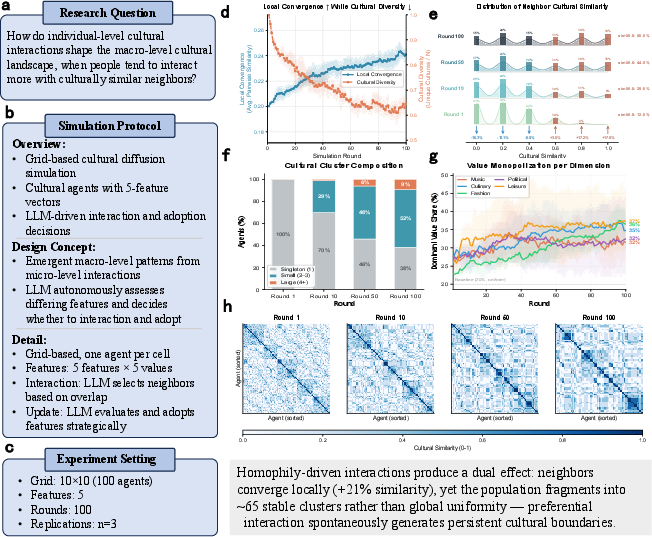

Inductive Case: Reproduction of Classical Cultural Dynamics

S-Researcher autonomously designs and executes an experiment replicating Axelrod's model of cultural dissemination. From only a high-level prompt, it generates a 10×10 grid world, five-dimensional agent culture vectors, and homophily-driven interaction protocols. The emergent results show local cultural convergence (average similarity +21%) alongside persistent global polarization, closely mirroring classical simulations and theory; crucially, these patterns arise from LLM-based rather than rule-based microdynamics.
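For reference, the classical rule-based Axelrod dynamics that the LLM agents replace can be sketched as follows, using the same 10×10 grid and five cultural features. The number of traits per feature (10), the non-wrapping von Neumann neighborhood, the random seed, and the step count are assumptions chosen for illustration; in S-Researcher the interaction decision is made by an LLM rather than by this probabilistic rule.

```python
import random
from typing import List, Tuple

Culture = List[int]
Grid = List[List[Culture]]


def make_grid(size: int, features: int, traits: int, rng: random.Random) -> Grid:
    """Random initial cultures: each site gets `features` traits in [0, traits)."""
    return [[[rng.randrange(traits) for _ in range(features)]
             for _ in range(size)] for _ in range(size)]


def neighbors(i: int, j: int, size: int) -> List[Tuple[int, int]]:
    """Von Neumann neighborhood without wrap-around (an assumption)."""
    cand = [(i - 1, j), (i + 1, j), (i, j - 1), (i, j + 1)]
    return [(a, b) for a, b in cand if 0 <= a < size and 0 <= b < size]


def similarity(x: Culture, y: Culture) -> float:
    """Share of features on which two cultures agree."""
    return sum(a == b for a, b in zip(x, y)) / len(x)


def step(grid: Grid, rng: random.Random) -> None:
    size = len(grid)
    i, j = rng.randrange(size), rng.randrange(size)
    a, b = rng.choice(neighbors(i, j, size))
    me, other = grid[i][j], grid[a][b]
    s = similarity(me, other)
    # Homophily: interact with probability equal to similarity; identical or
    # completely different pairs never change.
    if 0 < s < 1 and rng.random() < s:
        k = rng.choice([f for f in range(len(me)) if me[f] != other[f]])
        me[k] = other[k]  # adopt one trait the neighbor does not yet share


def mean_neighbor_similarity(grid: Grid) -> float:
    size = len(grid)
    pairs = [(grid[i][j], grid[a][b]) for i in range(size) for j in range(size)
             for a, b in neighbors(i, j, size)]
    return sum(similarity(x, y) for x, y in pairs) / len(pairs)


def count_regions(grid: Grid) -> int:
    """Number of connected regions of culturally identical sites."""
    size = len(grid)
    seen = set()
    regions = 0
    for i in range(size):
        for j in range(size):
            if (i, j) in seen:
                continue
            regions += 1
            seen.add((i, j))
            stack = [(i, j)]
            while stack:
                x, y = stack.pop()
                for a, b in neighbors(x, y, size):
                    if (a, b) not in seen and grid[a][b] == grid[x][y]:
                        seen.add((a, b))
                        stack.append((a, b))
    return regions


rng = random.Random(0)
grid = make_grid(size=10, features=5, traits=10, rng=rng)
before = mean_neighbor_similarity(grid)
for _ in range(100_000):
    step(grid, rng)
after = mean_neighbor_similarity(grid)
regions = count_regions(grid)
```

Here `mean_neighbor_similarity` tracks local convergence, while `count_regions` gives the number of distinct cultural regions, the classical measure of global polarization. Because neighbors that share nothing never interact, separate regions can persist alongside local convergence.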

Figure 3: Inductive simulation output—emergence of macro-level cultural clusters, convergence/diversity time series, and visualization of evolving similarity structure.

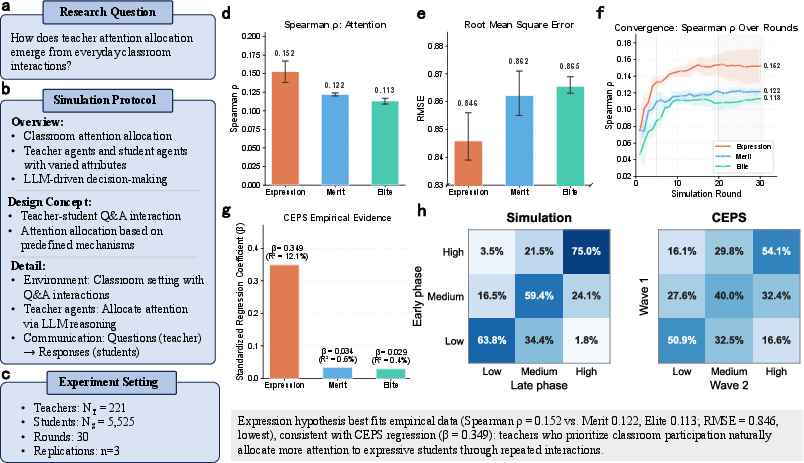

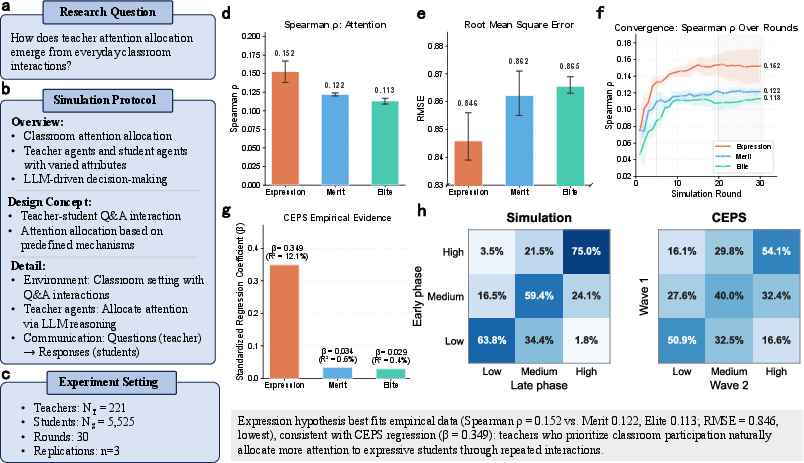

Deductive Case: Competing Hypotheses in Teacher Attention

The system encodes three competing hypotheses supplied in the input (the expression, merit, and elite models) about what drives teacher attention, runs large-scale parallel simulations using student profile data from CEPS, and aligns simulation outcomes with empirical survey results. The communicative (expression) hypothesis achieves the highest consistency with CEPS attention rankings (ρ = 0.152), and supporting analyses of effect ordering and error metrics agree with the real-world data.
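The hypothesis-comparison step can be sketched as scoring each hypothesis by the rank correlation between its simulated attention and surveyed attention, then selecting the best-scoring one. The student scores below are invented for illustration and are not CEPS data; treating ρ as a Spearman rank correlation is an assumption inferred from the reference to rankings.

```python
from math import sqrt
from statistics import mean
from typing import Dict, List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(vx * vy)


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    # Spearman's rho is the Pearson correlation of the (tie-averaged) ranks.
    return pearson(average_ranks(x), average_ranks(y))


# Invented attention scores for six students (not CEPS data).
survey = [5, 3, 4, 1, 2, 6]
simulated: Dict[str, List[float]] = {
    "expression": [4, 3, 5, 1, 2, 6],
    "merit": [1, 2, 3, 4, 5, 6],
    "elite": [6, 5, 4, 3, 2, 1],
}
scores = {h: spearman(sim, survey) for h, sim in simulated.items()}
best = max(scores, key=scores.get)
```

Because the comparison is relative, a hypothesis can "win" with a modest correlation, as with the reported ρ = 0.152; the absolute value still bounds how much of the real ranking any hypothesis explains.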

Figure 4: Deductive simulation—hypotheses, protocol, fit metrics, and validation against human survey data, confirming dominance of communicative ability in attention allocation.

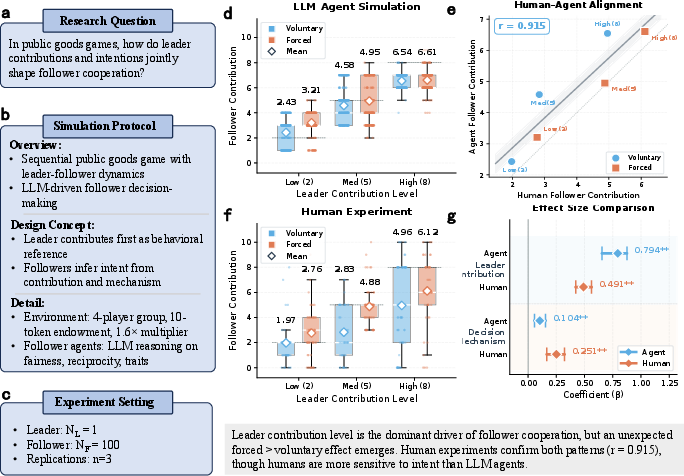

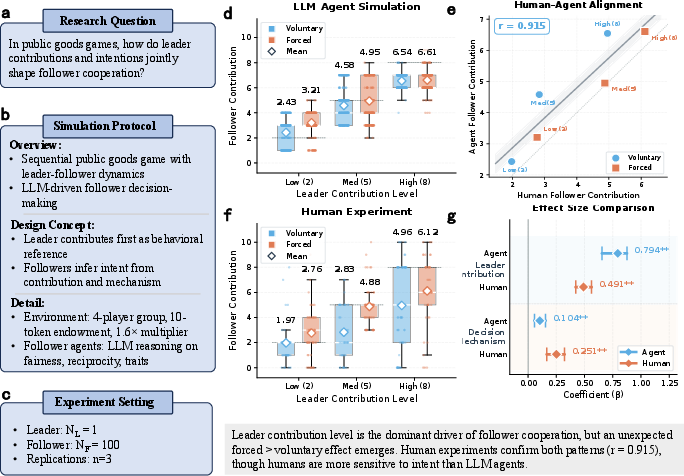

Abductive Case: Mechanism Discovery in Public Goods Games

S-Researcher translates an open-ended causal question into a factorial design manipulating the leader’s contribution intent (voluntary/forced) and contribution magnitude. It identifies behavioral anchoring on the leader’s visible actions as the dominant driver of follower cooperation, with a robust (and empirically validated) secondary effect of the decision mechanism (forced > voluntary). Results are cross-validated in parallel human experiments (Pearson r = 0.915 between simulated and human contributions), and an effect-size decomposition reveals both where simulated and human effects correspond and a residual gap in sensitivity to intent.
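A minimal sketch of this kind of factorial analysis, assuming a 2×2 design (decision mechanism × a two-level leader contribution magnitude) and invented cell means for simulated and human follower contributions. Main effects are computed as differences of marginal means, and simulation-human agreement as the Pearson correlation across cells; the paper's actual design levels and effect-size decomposition may differ.

```python
from itertools import product
from math import sqrt
from statistics import mean
from typing import Dict, Sequence, Tuple

Cell = Tuple[str, str]  # (decision mechanism, leader contribution magnitude)
MECHANISMS = ("voluntary", "forced")
MAGNITUDES = ("low", "high")  # assumed two levels for illustration
CONDITIONS = list(product(MECHANISMS, MAGNITUDES))  # full 2x2 factorial


def main_effect(cells: Dict[Cell, float], factor: int, base: str, other: str) -> float:
    """Marginal mean at `other` minus marginal mean at `base` for one factor."""
    at_other = [v for c, v in cells.items() if c[factor] == other]
    at_base = [v for c, v in cells.items() if c[factor] == base]
    return mean(at_other) - mean(at_base)


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(vx * vy)


# Invented mean follower contributions per condition (not the paper's data).
sim = {("voluntary", "low"): 4.0, ("voluntary", "high"): 12.0,
       ("forced", "low"): 5.0, ("forced", "high"): 13.0}
human = {("voluntary", "low"): 5.0, ("voluntary", "high"): 10.0,
         ("forced", "low"): 7.0, ("forced", "high"): 12.0}

effects = {
    "sim_magnitude": main_effect(sim, 1, "low", "high"),
    "sim_mechanism": main_effect(sim, 0, "voluntary", "forced"),
    "human_magnitude": main_effect(human, 1, "low", "high"),
    "human_mechanism": main_effect(human, 0, "voluntary", "forced"),
}
# Agreement across the four cells, in a fixed condition order.
r = pearson([sim[c] for c in CONDITIONS], [human[c] for c in CONDITIONS])
```

In these made-up numbers, magnitude dominates in both populations while the mechanism effect is smaller in simulation than in humans, so a high cell-level r can coexist with a gap in one specific effect.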

Figure 5: Abductive simulation and validation—counterfactual experiment structure, LLM-human alignment, and comparative statistical effect sizes.

Evaluation and Principal Findings

Three blinded expert reviewers per paradigm provided multidimensional assessments:

Implications, Limitations, and Future Directions

Practical and Theoretical Implications

S-Researcher demonstrates that LLM-agent-based social simulation can operationalize and accelerate the complete empirical research workflow, not only as synthetic participants but as collaborative scientific agents. Empirically, LLM agents reliably replicate central tendencies and primary effects in controlled social scenarios, enabling scalable exploration of design spaces that are otherwise intractable with human subjects.

However, several limitations are evident:

- Reduced Heterogeneity: LLM agents show lower within-group variance than humans (up to 300× lower) and fewer behavioral extremes, which calls for caution in studies of tail behavior or strongly culture-dependent phenomena.

- Intent Sensitivity: Simulated agents underweight latent intent versus observable actions, limiting their ecological validity in domains where social inference is paramount.

- Reporting Depth: Automated reports fall short in integrating external theoretical context and in providing robust sensitivity analysis, reflecting current LLM limitations in literature synthesis and theory-driven critique.
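The heterogeneity gap in the first limitation can be quantified with a variance ratio and the share of responses at the ends of the response scale. The contribution values and the 0 to 20 scale below are hypothetical; the paper's 300× figure comes from its own data and is not reproduced here.

```python
from statistics import variance
from typing import Sequence


def extreme_share(values: Sequence[float], low: float, high: float) -> float:
    """Fraction of responses at or beyond the ends of the scale."""
    return sum(v <= low or v >= high for v in values) / len(values)


# Hypothetical contributions on an assumed 0-20 scale.
human = [0, 2, 10, 20, 5, 15, 0, 20]
simulated = [9, 10, 10, 11, 10, 10, 9, 11]

# Sample variance ratio: how many times more dispersed humans are.
variance_ratio = variance(human) / variance(simulated)
human_tails = extreme_share(human, 0, 20)
sim_tails = extreme_share(simulated, 0, 20)
```

Reporting both numbers matters: two groups can share a mean while differing sharply in spread and in how often they produce extreme choices, which is exactly what tail-focused studies depend on.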

Future Developments

Advances may target improved integration of automated literature retrieval/citation, deeper operationalization pipelines, scenario libraries encompassing affectively rich or culture-specific behaviors, and direct calibration of agent heterogeneity from real-world human data. Techniques for embedding human oversight and parameter tuning at key bottlenecks (e.g., mechanism validation, hypothesis pruning) could further hybridize human-AI research paradigms.

Conclusion

S-Researcher (2604.01520) establishes a comprehensive, modular platform supporting human–AI collaborative social science, from fully automated experiment design to scalable agent-based simulation and high-level report generation. Organized around induction, deduction, and abduction, it demonstrates strong empirical alignment with benchmark phenomena and robust cross-paradigm expert validation. Its main limitations concern agent heterogeneity, social-inference fidelity, and literature integration, but these also define the clearest axes for ongoing progress in computational social science. S-Researcher thus provides both a functional baseline and a reference architecture for future research seeking to augment social inquiry via LLM-agent-based simulation.