Functional Force-Aware Retargeting from Virtual Human Demos to Soft Robot Policies

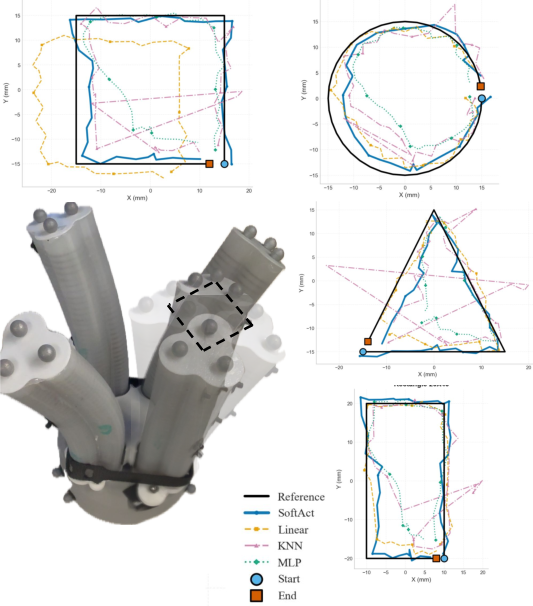

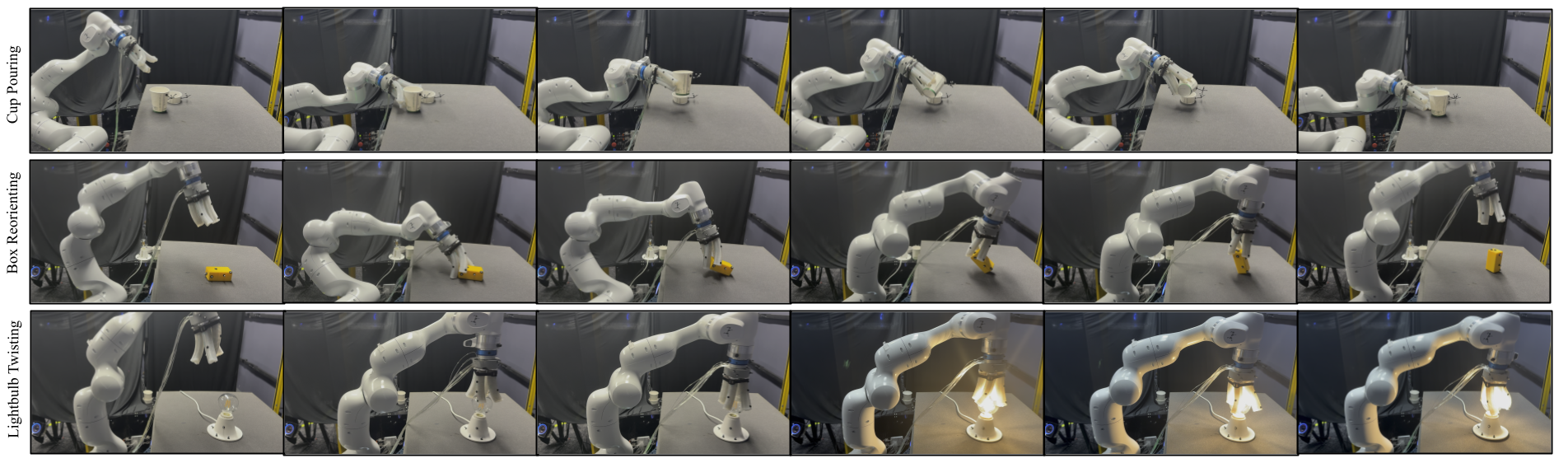

Abstract: We introduce SoftAct, a framework for teaching soft robot hands to perform human-like manipulation skills by explicitly reasoning about contact forces. Leveraging immersive virtual reality, our system captures rich human demonstrations, including hand kinematics, object motion, dense contact patches, and detailed contact force information. Unlike conventional approaches that retarget human joint trajectories, SoftAct employs a two-stage, force-aware retargeting algorithm. The first stage attributes demonstrated contact forces to individual human fingers and allocates robot fingers proportionally, establishing a force-balanced mapping between human and robot hands. The second stage performs online retargeting by combining baseline end-effector pose tracking with geodesic-weighted contact refinements, using contact geometry and force magnitude to adjust robot fingertip targets in real time. This formulation enables soft robotic hands to reproduce the functional intent of human demonstrations while naturally accommodating extreme embodiment mismatch and nonlinear compliance. We evaluate SoftAct on a suite of contact-rich manipulation tasks using a custom non-anthropomorphic pneumatic soft robot hand. SoftAct's controller reduces fingertip trajectory tracking RMSE by up to 55 percent and reduces tracking variance by up to 69 percent compared to kinematic and learning-based baselines. At the policy level, SoftAct achieves consistently higher success in zero-shot real-world deployment and in simulation. These results demonstrate that explicitly modeling contact geometry and force distribution is essential for effective skill transfer to soft robotic hands, and cannot be recovered through kinematic imitation alone. Project videos and additional details are available at https://soft-act.github.io/.

Explain it Like I'm 14

What this paper is about

This paper introduces SoftAct, a new way to teach soft robot hands how to use objects like humans do. Instead of trying to copy the exact shape and motion of a human hand, it focuses on what really makes manipulation work: where the hand touches an object and how hard it pushes. The team records human demonstrations in virtual reality (VR), figures out the important contact forces, and then transfers those “force-based skills” to a soft, air-powered robot hand that doesn’t look or move like a human hand.

The big questions the paper asks

- How can we teach a soft robot hand that’s very different from a human hand to do human-like tasks?

- Is focusing on contact forces (where and how hard you press) better than just copying hand positions and joint angles?

- Can this approach work both in simulation and in real-world tests without extra fine-tuning?

How they did it, in simple terms

Think of learning to open a bottle or pour from a cup. The exact shape of your hand matters less than how you press, push, or twist the object. SoftAct turns that idea into a method robots can use.

Here’s the approach:

- Collect human demonstrations in VR

- People perform tasks in a virtual world (like pouring from a cup or screwing in a light bulb).

- The system records:

- Hand motion and object motion

- Where the hand touches the object (contact patches)

- How hard and in what direction the hand pushes (contact forces)

- In VR, the system can measure contact forces precisely, which is hard to do in the real world.

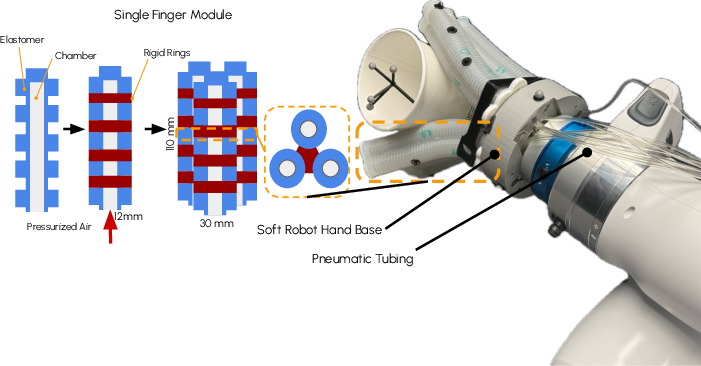

- Use a soft, air-powered robot hand

- The robot has soft fingers with air chambers. Adding or releasing air bends the fingers.

- This kind of hand is safe and squishy, but it’s harder to control than a rigid robot because it bends in complicated ways.

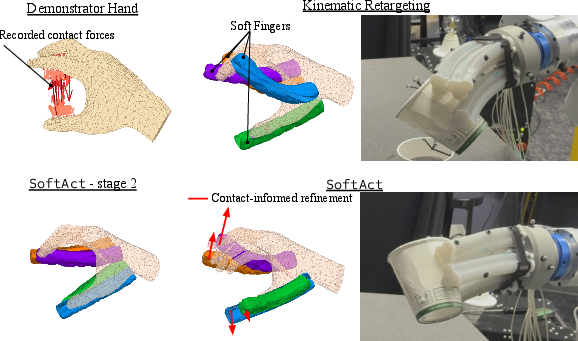

- Two-stage “force-aware” retargeting (mapping human skills to the robot)

- Stage 1: Force-balanced finger assignment

- The system looks at which human fingers did most of the pushing and how the forces were spread out.

- It then assigns the robot’s fingers to take over those roles, not necessarily one-to-one. For example, two robot fingers might share the “job” of a strong human thumb if that’s what the forces suggest.

- Stage 2: Contact-informed refinement in real time

- As the robot moves, it slightly adjusts where its fingertips aim based on two things: nearby contact points from the demo and how strong those forces were.

- To decide what’s “nearby,” the system measures distance along the hand’s surface—like walking along the skin rather than cutting straight through the hand. This helps align the robot’s touches with the human’s in a meaningful way.

- These small corrections help the robot keep good contact, even though its hand is shaped differently.

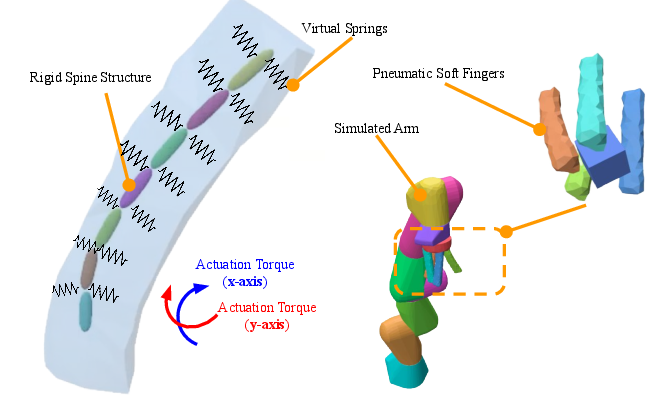
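Stage 1 can be illustrated with a small apportionment sketch. The source says only that robot fingers are allocated in proportion to the force attributed to each human finger. It does not describe the solver. Everything concrete below is therefore an assumption:

- The largest-remainder (Hamilton) rule is a swapped-in stand-in for the paper's allocation step.
- `allocate_robot_fingers` is a hypothetical name.
- The per-finger force totals are made-up inputs.

```python
import math

def allocate_robot_fingers(finger_forces, n_robot_fingers):
    """Hypothetical sketch: split n_robot_fingers among human fingers in
    proportion to each finger's attributed contact force, using the
    largest-remainder rule (an assumed stand-in for the paper's solver)."""
    active = {f: F for f, F in finger_forces.items() if F > 0.0}
    total = sum(active.values())
    if total == 0.0:
        return {f: 0 for f in finger_forces}
    # Ideal fractional share of robot fingers for each loaded human finger.
    quotas = {f: n_robot_fingers * F / total for f, F in active.items()}
    alloc = {f: math.floor(q) for f, q in quotas.items()}
    leftover = n_robot_fingers - sum(alloc.values())
    # Give the remaining robot fingers to the largest fractional remainders.
    ranked = sorted(active, key=lambda name: quotas[name] - alloc[name], reverse=True)
    for f in ranked[:leftover]:
        alloc[f] += 1
    return {f: alloc.get(f, 0) for f in finger_forces}

# Hypothetical per-finger force totals (N) attributed from one demonstration.
forces = {"thumb": 5.5, "index": 3.0, "middle": 1.5, "ring": 0.0, "pinky": 0.0}
print(allocate_robot_fingers(forces, 4))
# → {'thumb': 2, 'index': 1, 'middle': 1, 'ring': 0, 'pinky': 0}
```

With four robot fingers, the thumb's 55% share earns it two robot fingers. This is the "two fingers share the thumb's job" case described above. The largest-remainder rule was chosen here only because it always hands out exactly `n_robot_fingers`. An integer program that also penalizes finger crowding would be a natural alternative.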

- Low-level control for soft fingers

- Controlling squishy fingers with air is tricky. The team trained a small neural network to learn how air pressure changes the fingertip’s position.

- Then, when the robot needs a fingertip to move to a spot, it solves for the right pressures to make that happen.

- This makes the movements more precise and consistent.

- Learning the policy

- After retargeting human demos to robot motion, the robot learns an overall “policy” (a decision-making program) by imitation.

- You can think of this as learning the next small moves to make by studying lots of examples.
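The low-level control step (learn how pressure moves the fingertip, then solve for the pressures that reach a target) can be sketched as inverting a small network by gradient descent. The source reports a learned MLP inverted per waypoint with Adam for planar fingertip motion. Everything concrete below is an assumption:

- The finger has two chamber pressures, normalized to [0, 1].
- A one-hidden-layer tanh network with hand-picked placeholder weights stands in for the trained model.
- Clipping enforces the pressure limits.
- A 1/sqrt(t) step-size decay is added so the iterate settles.
- `forward`, `grad_loss`, and `solve_pressures` are hypothetical names.

```python
import math

# Placeholder weights standing in for a trained pressure -> displacement MLP
# (2 normalized chamber pressures -> 3 tanh hidden units -> 2D fingertip offset).
W1 = [[1.0, 0.2], [0.3, 1.0], [0.5, 0.5]]
B1 = [0.0, 0.0, 0.0]
W2 = [[1.0, -0.5, 0.3], [0.2, 1.0, 0.4]]

def forward(p):
    """Predicted planar fingertip displacement (and hidden activations) for pressures p."""
    h = [math.tanh(sum(w * x for w, x in zip(row, p)) + b) for row, b in zip(W1, B1)]
    y = [sum(w * hj for w, hj in zip(row, h)) for row in W2]
    return y, h

def grad_loss(p, target):
    """Gradient of 0.5 * ||forward(p) - target||^2 with respect to p (manual backprop)."""
    y, h = forward(p)
    r = [yi - ti for yi, ti in zip(y, target)]
    # dL/dz_j = (1 - h_j^2) * sum_i W2[i][j] * r_i
    dz = [(1.0 - h[j] ** 2) * sum(W2[i][j] * r[i] for i in range(len(r)))
          for j in range(len(h))]
    return [sum(W1[j][k] * dz[j] for j in range(len(dz))) for k in range(len(p))]

def solve_pressures(target, p0=(0.5, 0.5), lr=0.02, steps=3000,
                    beta1=0.9, beta2=0.999, eps=1e-8):
    """Invert the forward model with Adam, projecting pressures onto [0, 1]."""
    p = list(p0)
    m = [0.0] * len(p)
    v = [0.0] * len(p)
    for t in range(1, steps + 1):
        g = grad_loss(p, target)
        step = lr / math.sqrt(t)  # assumed decay so the iterate settles
        for k in range(len(p)):
            m[k] = beta1 * m[k] + (1 - beta1) * g[k]
            v[k] = beta2 * v[k] + (1 - beta2) * g[k] ** 2
            m_hat = m[k] / (1 - beta1 ** t)
            v_hat = v[k] / (1 - beta2 ** t)
            p[k] -= step * m_hat / (math.sqrt(v_hat) + eps)
            p[k] = min(1.0, max(0.0, p[k]))  # respect pressure limits
    return p

target, _ = forward([0.3, 0.7])   # a reachable fingertip offset
p = solve_pressures(target)       # should land near [0.3, 0.7]
```

Pure Python keeps the sketch dependency-free. A real controller would use an autodiff framework and the trained model. Its per-waypoint solve would also need profiling against the 200 Hz pressure-control loop.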

What they found and why it matters

- Better accuracy and steadiness

- The robot’s fingertips followed target paths much more accurately than with standard methods, with up to about 55% less error and up to about 69% less variability.

- Higher task success

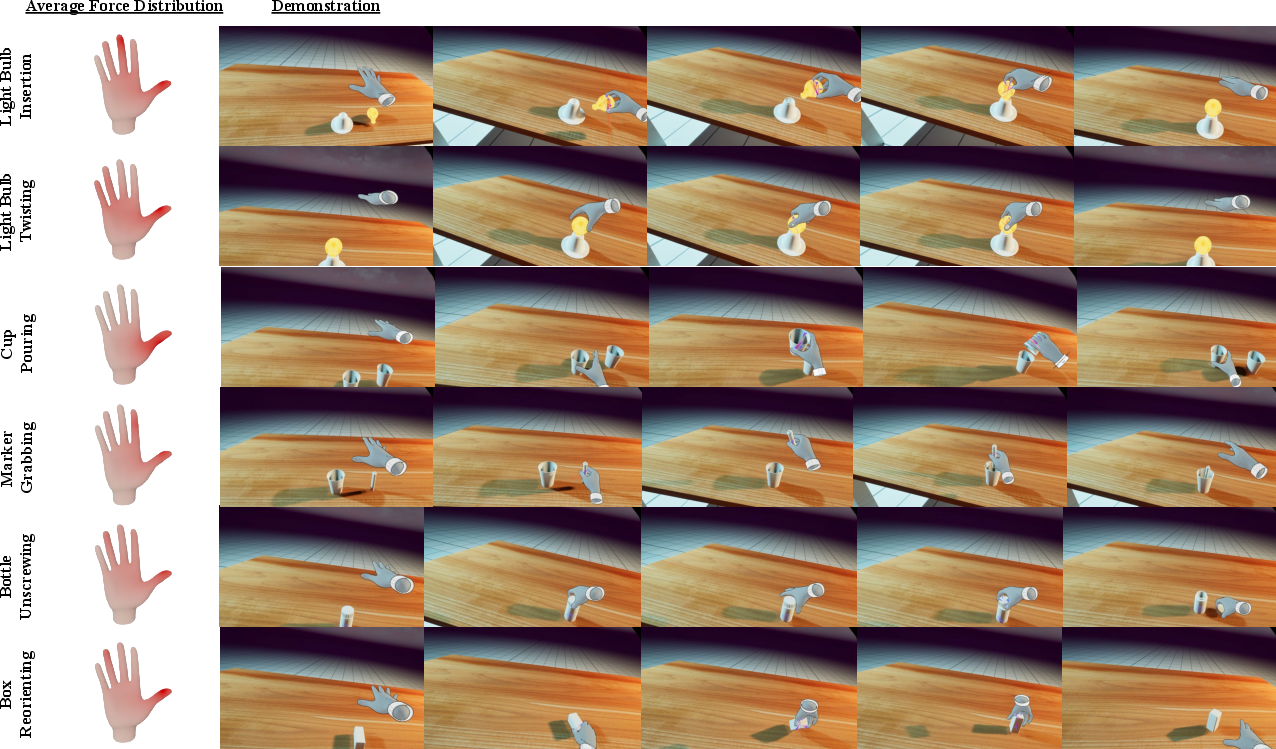

- Across tasks like cup pouring, light bulb insertion/twisting, marker grabbing, bottle unscrewing, and box reorienting, SoftAct’s success rates were consistently higher than a “copy the hand kinematics” baseline.

- Example: in simulation, cup pouring succeeded 90% of the time with SoftAct vs 40% for the baseline; light bulb twisting succeeded 100% vs 77%.

- In real-world tests (without extra training), SoftAct also outperformed the baseline, for example 85% vs 35% for cup pouring.

- Key insight

- Focusing on contact geometry and forces—rather than just trying to match human finger positions—made the soft robot much better at maintaining the right kind of touch. That’s crucial for contact-rich tasks like twisting caps or inserting parts.
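The tracking claims above are relative reductions in an error metric. The sketch below shows the arithmetic for RMSE on a planar fingertip trajectory. The trajectories are toy values chosen to reproduce a 55% reduction, not data from the paper. The same `percent_reduction` formula applies to a variance figure. Both function names are hypothetical.

```python
import math

def rmse(actual, target):
    """Root-mean-square Euclidean error between two equal-length 2D trajectories."""
    sq = [(ax - tx) ** 2 + (ay - ty) ** 2 for (ax, ay), (tx, ty) in zip(actual, target)]
    return math.sqrt(sum(sq) / len(sq))

def percent_reduction(baseline_error, method_error):
    """Relative reduction of an error metric, in percent."""
    return 100.0 * (baseline_error - method_error) / baseline_error

# Toy trajectories (not the paper's data): constant offsets from the target path.
target = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
baseline = [(x, y + 0.2) for x, y in target]
improved = [(x, y + 0.09) for x, y in target]
print(round(percent_reduction(rmse(baseline, target), rmse(improved, target)), 6))
# → 55.0
```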

Why this is important

- Bridges the “mismatch” between humans and robots

- Many robots (especially soft ones) don’t look or move like human hands. By teaching the “why” of the action (how and where to apply force) instead of the “exact pose,” this method transfers skills more reliably.

- Safer and more adaptable robots

- Soft hands are good for handling fragile objects and working near people. Making them better at everyday tasks could help in homes, healthcare, and factories.

- Practical teaching via VR

- Collecting high-quality force information in real life is hard. VR lets researchers record clean, detailed demonstrations to train robots more easily.

- Future directions

- Estimate contact forces from cameras instead of relying on VR/simulation data.

- Handle longer, multi-step tasks where the way you touch objects changes over time.

- Move away from motion-capture markers so robots can work from normal vision sensors.

In short, SoftAct shows that teaching robots “how to press and where” beats “copy my hand shape,” especially for soft, squishy hands that don’t move like ours. This makes robots better at real-world, touch-heavy tasks.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

Below is a single, concrete list of what remains missing, uncertain, or unexplored in the paper. Each point is phrased to guide immediate follow‑up research.

- Reliance on ground‑truth contact forces from VR: no evaluation of how errors in visually or tactually estimated forces affect Stage 1/Stage 2 retargeting or downstream policy performance.

- Unknown generality of VR‑generated contact forces: no calibration to real human–object interaction parameters (friction, compliance), leaving the physical fidelity of “precise and noise‑free” VR contact labels unvalidated.

- Surface geodesic ambiguity across embodiments: Stage 2 uses geodesic distances with robot fingertip positions and human contact points, but the paper does not specify how geodesics are computed when the human and robot hand meshes differ (no cross‑surface mapping or manifold alignment procedure is described).

- Geodesic computation on deforming geometries: no method for handling mesh geodesics when the robot’s soft surface deforms during manipulation (static geodesics may be inconsistent with time‑varying contact geometry).

- Sensitivity to geodesic heat‑kernel hyperparameters: no ablations on λ (kernel bandwidth), ε (stability term), or δ_max (adjustment cap) to quantify trade‑offs between stability and contact alignment.

- Force attribution granularity: contact diffusion and per‑finger load assignment ignore tangential/normal decomposition and moments; it remains unknown whether using directional components or torque attribution improves mapping.

- Force‑balanced finger assignment is static: assignments are fixed for the entire task; no mechanism for dynamic reallocation when contact roles change mid‑trajectory (common in multi‑stage tasks).

- Integer allocation solver details missing: the discrete optimization for allocating robot fingers per human finger (n_r) lacks algorithmic specifics or complexity analysis; no study of suboptimal or greedy solutions vs optimality.

- Collision and interference during many‑to‑one mapping: assigning multiple robot fingers to a single human finger may cause self‑contact or object interference; no collision handling or spacing strategy is described or evaluated.

- No tactile or force feedback at runtime: the policy and online refinement do not use on‑hand sensing to close the loop on contact state; the impact of adding tactile sensors or pressure/strain feedback remains unexplored.

- Robustness to contact misalignment: no experiments introducing perturbations (contact location noise, friction changes, unexpected slips) to test whether contact‑informed refinement recovers or destabilizes under errors.

- Low‑level controller is quasi‑static and planar: the learned pressure→displacement model omits dynamics (hysteresis, rate effects, latency) and restricts control to planar motion; performance on tasks requiring out‑of‑plane or dynamic fingertip behavior is untested.

- Closed‑loop low‑level control is missing: optimization inverts a static MLP without using fingertip state feedback during execution; benefits of adding real‑time fingertip pose/force feedback to compensate model errors are not evaluated.

- Real‑time performance and scalability: per‑waypoint optimization (Adam) and contact‑informed updates lack runtime measurements, leaving feasibility at 200 Hz and scaling to more fingers, contacts, or longer horizons unclear.

- Simulation actuation abstraction: pneumatic pressure is approximated with internal torques; no quantitative validation against measured pressure–deformation trajectories or contact mechanics from the physical hand.

- Contact/friction modeling and sim‑to‑real gap: friction coefficients, surface roughness, and compliance in simulation are not specified or randomized; the sensitivity of learned policies and retargeting to these parameters remains unknown.

- Dataset composition and human variability: the number of subjects, demonstrations per task, and variability (hand sizes, strategies) are not reported; generalization across demonstrators and demonstration diversity is untested.

- Comparison breadth at the policy level: only kinematic retargeting is compared; no baselines using contact‑aware retargeting with explicit surface correspondences, object‑centric retargeting, or RL fine‑tuning from demonstrations.

- Task distribution and generalization: success is reported on six tasks, but transfer to unseen objects, varying mass/inertia, textures, or widely varying initial poses is not characterized.

- Longer‑horizon, multi‑stage manipulation: while noted as future work, there is no proposed mechanism for handling evolving contact roles and goals (e.g., automatic sub‑task segmentation or dynamic finger re‑assignment).

- Observation design excludes contact state at deployment: the policy uses proprioception and object pose, not contact/tactile signals; resilience to unseen contact conditions and switching events is unverified.

- Dependence on motion capture for object pose: no assessment of markerless perception’s impact (latency, noise, occlusions) or training a vision‑conditioned policy and its sim‑to‑real implications.

- Policy stability and safety guarantees: there is no stability analysis of the online δ‑adjustments and no bound on contact forces to protect fragile objects; passivity or safety constraints are not incorporated.

- Failure mode analysis is missing: the paper reports aggregate success and tracking errors but does not categorize failures (e.g., slip, missed contact, over‑compression) or link them to algorithmic components.

- Data efficiency and coverage for low‑level model: the amount of pressure–displacement data, workspace coverage, and strategies for active data collection to improve model fidelity are not described.

- Adaptation to hardware drift and wear: no methods for online re‑calibration or continual learning to counter elastomer aging, valve dynamics changes, or sensor drift.

- Bimanual or tool‑use scenarios: extension to tasks requiring two hands, coordinated contact planning, or tool affordances remains unexplored.

- Hardware generality: results are shown on one custom pneumatically actuated design; transferability to different soft hand morphologies (fiber‑reinforced, tendon‑driven, different DOFs) is not demonstrated.

- Guaranteeing reproducibility: key implementation details (mesh resolutions, contact sampling, geodesic computation libraries, optimization hyperparameters, and code for Stage 1/Stage 2) are not provided, making replication difficult.
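The Stage 2 hyperparameters named in the list above (λ, ε, δ_max) can be made concrete with a sketch of a geodesic-weighted fingertip correction. This is an assumed form, not the paper's equation:

- Each demonstrated contact pulls the baseline target with weight w_i = F_i·exp(−d_i²/λ).
- ε stabilizes the normalization when all weights vanish.
- The correction's norm is capped at δ_max.

The geodesic distances d_i are taken as precomputed inputs, because how they are measured across the human and robot meshes is one of the open questions listed above. `refine_fingertip_target` is a hypothetical name, and the default values assume meters.

```python
import math

def refine_fingertip_target(base_target, contacts, lam=1e-3, eps=1e-6, delta_max=0.01):
    """Hypothetical Stage 2 sketch (assumed form, not the paper's equation).

    base_target: (x, y, z) fingertip target from baseline pose tracking, in meters.
    contacts: list of (point, force_magnitude, geodesic_distance) tuples, where
        point is a demonstrated contact location in the same frame and
        geodesic_distance is assumed to be precomputed on the hand surface.
    """
    num = [0.0, 0.0, 0.0]
    den = eps  # eps keeps the division stable when all weights vanish
    for point, force, dist in contacts:
        w = force * math.exp(-dist ** 2 / lam)  # force-scaled heat-kernel-style falloff
        for k in range(3):
            num[k] += w * (point[k] - base_target[k])
        den += w
    delta = [n / den for n in num]
    norm = math.sqrt(sum(d * d for d in delta))
    if norm > delta_max:  # cap the correction at delta_max
        delta = [d * delta_max / norm for d in delta]
    return tuple(b + d for b, d in zip(base_target, delta))

# One demonstrated 2 N contact 4 mm from the baseline target, zero geodesic offset.
print(refine_fingertip_target((0.0, 0.0, 0.0), [((0.004, 0.0, 0.0), 2.0, 0.0)]))
```

Sweeping `lam`, `eps`, and `delta_max` in a function like this is one way to run the missing ablation. `lam` sets how far along the surface a contact still pulls the target. `delta_max` bounds how far any single update can move it.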

Practical Applications

Summary of Practical Applications

SoftAct introduces a force-aware, contact-centric retargeting pipeline that transfers human manipulation skills from VR demonstrations to non-anthropomorphic soft robot hands. Key innovations include: (1) two-stage retargeting that balances demonstrated contact forces across robot fingers and refines fingertip targets using geodesic-weighted contact influence; (2) a learned low-level inverse model for controlling pneumatic soft fingers; and (3) an end-to-end system validated in simulation and zero-shot real-world tasks (e.g., pouring, screwing, insertion). These enable safer, more robust contact-rich manipulation with soft hands despite extreme embodiment mismatch.

Below are actionable applications, organized by deployment horizon.

Immediate Applications

The following can be deployed with today’s hardware/software stack (soft hand end-effectors, VR demo collection, FEM simulation, diffusion policy training, 200 Hz pressure control, and motion capture for object pose):

- Industry (Manufacturing/Assembly; Robotics)

- Gentle assembly and finishing for fragile/irregular objects (e.g., screwing caps, inserting threaded parts like light bulbs, manipulating deformable packaging).

- Potential products/workflows: retrofittable soft-hand end-effectors for 6–7 DoF arms; a “SoftAct Flow” pipeline (VR demo capture → force-aware retargeting → policy training → on-robot deployment).

- Dependencies/assumptions: motion capture or equivalent object pose tracking; pressure-regulated soft actuators; task-specific calibration; safe workspace design.

- Packaging and kitting for consumer goods that deform or scuff easily (cosmetics, small electronics, food containers).

- Potential tools: contact-aware retargeting scripts to quickly adapt workflows to new SKUs; operator-in-the-loop VR demo capture for rapid task onboarding.

- Dependencies/assumptions: consistent object geometry; access to a VR data collection setup; integration with factory robot arms.

- Test/QA operations requiring controlled contact (e.g., twist/torque validation for closures, friction tests, position/force consistency checks).

- Potential tools: force-distribution-aware test stations with reproducible contact paths.

- Dependencies/assumptions: calibration for torque/force thresholds; simulation-grounded force targets.

- Healthcare and Assistive Robotics

- Safe, compliant manipulation for activities of daily living (e.g., pouring liquids, opening bottles, repositioning small boxes/containers).

- Potential products/workflows: assistive mobile manipulators with soft hands for eldercare and rehabilitation settings; care facility pilots focusing on low-risk tasks (pouring, opening).

- Dependencies/assumptions: reliable perception (currently motion capture; near-term replacement with fiducials or depth-based perception); caregiver oversight; infection control for soft materials.

- Laboratory support tasks involving delicate handling (e.g., gentle tube capping, sample transfer).

- Potential tools: bench-top soft-hand stations trained with VR demos for lab-specific procedures.

- Dependencies/assumptions: standard lab safety; sterility requirements; task-specific training data.

- Warehousing and Logistics

- Handling of deformable or fragile items (e.g., bagged goods, lightweight consumer items), where rigid grippers cause damage or slips.

- Potential tools: warehouse picking cells using soft grippers trained via VR demonstrations on representative items.

- Dependencies/assumptions: item catalog coverage in training; sensing for object poses; throughput aligned to soft actuator dynamics.

- Academia and R&D (Robotics; Software)

- Rapid prototyping of dexterous soft-hand skills for new tasks/embodiments, without anthropomorphic assumptions.

- Potential tools: open-source retargeting modules (geodesic contact modeling, force-balanced finger assignment), low-level pneumatic controller templates, Unreal-based VR capture packages.

- Dependencies/assumptions: accurate hand mesh/geodesic computation; soft-hand hardware availability; FEM or equivalent simulators.

- Benchmarking contact-aware imitation learning.

- Potential workflows: publish datasets of VR demonstrations with dense contact patches/forces; standardized tasks (insertion, twisting, pouring) for cross-embodiment evaluation.

- Dependencies/assumptions: shared data formats; reproducible simulation setups.

- Daily Life (Home Robotics)

- Household tasks requiring care and contact stability (e.g., screwing light bulbs, pouring drinks, opening jars/bottles).

- Potential products: pilot home-assistant prototypes using soft hands for safer interaction near people.

- Dependencies/assumptions: structured environments; marker-based or robust vision for object pose; simple failure recovery strategies.

- Policy and Standards

- Pilot safety assessments and sandbox evaluations for soft-hand manipulation in shared spaces.

- Potential actions: guidelines for contact-rich data collection via VR; best practices for deploying soft end-effectors in hospitals and factories (pressure limits, surface hygiene, pinch-point avoidance).

- Dependencies/assumptions: cross-institution collaboration; risk assessments tailored to soft materials and pneumatic actuation.

Long-Term Applications

These require further research, scaling, or new components (e.g., vision-based contact/pose, markerless perception, broader generalization) before broad deployment:

- Industry (Manufacturing/Assembly; Robotics)

- Markerless, vision-only perception for object/hand state and contact forces, enabling more flexible shop-floor deployments without motion capture.

- Potential tools/products: vision models (learning-based or physics-informed) that estimate contact patches/forces for retargeting; plug-and-play perception stacks.

- Dependencies/assumptions: robust lighting- and texture-invariant perception; validated contact-force estimation from RGB-D or tactile proxies.

- General-purpose soft-hand cobots performing multi-stage workflows (e.g., pick-adjust-insert-twist sequences) with dynamic contact role changes.

- Potential tools: hierarchical policies combining contact-mode inference with force-aware retargeting; task graph planners.

- Dependencies/assumptions: longer-horizon training; contact-mode switching robustness; safety certifications.

- Healthcare and Assistive Robotics

- Broad ADL assistance with vision-only perception and in-the-wild learning (e.g., meal prep, grooming, personal care) using soft, compliant hands.

- Potential products: caregiver-augmented training workflows (VR + on-device refinement), home-deployable assistive robots.

- Dependencies/assumptions: privacy-preserving perception; sterilizable soft materials; human-in-the-loop oversight; regulatory approval.

- Soft prosthetics and orthotics that leverage contact-intent mapping (retargeting functional force patterns rather than joint angles).

- Potential tools: prosthetic control interfaces that infer user intent and translate it into safe, functional contact strategies for non-anthropomorphic sockets.

- Dependencies/assumptions: robust intent detection (EMG, vision, or wearable sensing); personalized calibration; clinical trials.

- Agriculture and Food Handling

- Delicate harvesting and post-harvest manipulation (sorting, packing) of produce with contact-aware retargeting across varied crop geometries.

- Potential products: field-deployable soft end-effectors trained with synthetic/VR demos and refined in situ.

- Dependencies/assumptions: outdoor-robust perception; contamination control; seasonal variability; rapid adaptation to new cultivars.

- Service and Domestic Robotics

- Versatile household manipulation with contact-aware foundation models that generalize across objects, tasks, and embodiments.

- Potential tools/products: contact-centric manipulation foundation models integrated into service robots; cloud-based “retargeting as a service.”

- Dependencies/assumptions: large-scale datasets with contact labels; compute infrastructure; standardized APIs across hardware vendors.

- Academia and R&D (Robotics; Software)

- Unified frameworks for cross-embodiment retargeting (rigid, soft, anthropomorphic, non-anthropomorphic) leveraging contact/force primitives and geodesics.

- Potential tools: libraries for geodesic-based contact influence, differentiable simulators for soft bodies, and benchmark suites for functional manipulation transfer.

- Dependencies/assumptions: community standards for contact data; reproducible interfaces for various soft actuators (pneumatic, tendon-driven, electroactive).

- Bimanual and whole-arm soft manipulation with coordinated force distribution across multiple compliant effectors.

- Potential workflows: multi-effector force allocation algorithms; coordinated low-level controllers for heterogeneous soft morphologies.

- Dependencies/assumptions: high-bandwidth pressure/tendon control; synchronized perception and planning.

- Policy and Standards

- Standards for contact-rich robot learning: data schemas for contact force/patch labels, safety thresholds for soft contact, and evaluation protocols for contact-rich tasks.

- Potential actions: NIST-like benchmarks for soft manipulation; certification pathways for compliant contact in proximity to humans.

- Dependencies/assumptions: consensus across academia, industry, and regulators; transparent reporting of sim-to-real performance and failure modes.

- Cross-Sector Tooling and Platforms

- End-to-end commercial platforms for VR-to-robot skill transfer in contact-rich tasks (from data capture to deployment across many robot models).

- Potential products: enterprise “SoftAct Studio” (Unreal plugin + retargeting engine + low-level controller pack), with cloud training and fleet updates.

- Dependencies/assumptions: vendor-agnostic drivers; secure data handling; automated calibration and self-checks.

Notes on feasibility common to many applications:

- Perception: Current real-world pipeline relies on motion-capture object pose; moving to robust, markerless vision is a key dependency.

- Demonstration data: Ground-truth contact forces are currently obtained in VR/simulation; real-world deployment will benefit from vision- or tactile-based contact estimation.

- Hardware: Requires precise pressure regulation (≈200 Hz), reliable soft-hand fabrication, and calibration per device.

- Generalization: Task/domain shifts may require new VR demos or on-site fine-tuning; long-horizon, multi-stage tasks need additional policy structure.

- Safety and hygiene: Soft materials introduce cleaning/sterility concerns in healthcare/food; pressure limits and failure recovery must be engineered for shared spaces.

Glossary

- Affordance: The action possibilities that an environment or object offers to an agent, given its morphology and capabilities. "differences in workspaces, affordances, and controllability."

- Anthropomorphic: Having a human-like form or structure; in robotics, resembling the human body or hand. "Unlike rigid and anthropomorphic robot hands, soft robotic hands are typically underactuated and driven with nonlinear actuators such as artificial muscles,"

- Behavior cloning: A supervised imitation-learning approach that maps observations to actions using expert demonstrations. "These demonstrations are typically used to supervise imitation learning or behavior cloning policies,"

- Contact patch: A contiguous area of surface contact between two bodies where forces are exchanged. "Specifically, we extract contact patches between the hand and the object,"

- Diffusion policy: A control policy that leverages diffusion models to generate actions by denoising from noise. "We train a diffusion policy~\cite{chi2023diffusion} to imitate retargeted trajectories."

- Embodiment mismatch: A discrepancy between the bodies or morphologies of the demonstrator and the robot that complicates skill transfer. "This formulation enables soft robotic hands to reproduce the functional intent of human demonstrations while naturally accommodating extreme embodiment mismatch and nonlinear compliance."

- End-effector: The terminal element of a robot manipulator that interacts with the environment (e.g., hand, gripper). "The soft fingers are mounted on a rigid end-effector base attached to a 7-DoF robotic arm,"

- Finite element method (FEM): A numerical technique for simulating continuum mechanics by discretizing objects into elements. "Retargeting and evaluation are performed in a custom finite-element-based (FEM) physics engine that supports coupled rigid and soft body dynamics."

- Force-balanced mapping: An allocation or correspondence that proportionally shares contact force loads across effectors. "establishing a force-balanced mapping between human and robot hands."

- Force-closure: A grasp condition where available contacts can resist arbitrary external wrenches, fully constraining the object. "Some methods incorporate contact states or force-closure constraints to improve robustness,"

- Geodesic distance: The shortest path length between two points along a surface or manifold, respecting its geometry. "their geodesic distance is computed over the hand mesh graph,"

- Geodesic heat kernel: A kernel that diffuses influence over a surface according to geodesic distances, akin to heat propagation. "Contact forces are diffused over the hand surface using a geodesic heat kernel."

- Inverse kinematics: Computing joint configurations that achieve a desired end-effector pose. "mapped to robot configurations via inverse kinematics, heuristic rules, or learned pose-to-pose mappings"

- Jacobian controller: A controller that uses the manipulator Jacobian to map task-space errors or velocities to actuator commands via local linearization. "a linear Jacobian controller derived from local kinematic linearization"

- Kinematic correspondence: A structural relationship that aligns the kinematic variables (e.g., joints, links) between two embodiments. "Common retargeting methods rely on kinematic correspondence between human and robot hands,"

- Learning from demonstration (LfD): A paradigm where robots learn behaviors directly from expert demonstrations rather than explicit programming. "Learning from demonstration (LfD) provides a powerful paradigm for transferring complex manipulation skills from humans to robots"

- Motion capture: A sensing setup that records precise motion using tracked markers and cameras. "Hand motion is captured using an OptiTrack motion capture system"

- Motion retargeting: Mapping motions from a source agent (e.g., human) to a target agent (e.g., robot) while respecting constraints. "Motion retargeting aims to map human demonstrations to robot actions,"

- Non-anthropomorphic: Not resembling human form; in hands, differing notably from human hand morphology. "a custom non-anthropomorphic pneumatic soft robot hand."

- Pneumatic actuation: Driving movement using pressurized air in chambers or muscles. "pneumatic actuation is approximated in simulation through applied torques that induce articulated bending of the fingers."

- Proportional pressure regulator: A device that precisely controls output pressure in proportion to an input command signal. "The internal pressure in the soft robot chambers is controlled precisely at 200 Hz with a proportional pressure regulator."

- Proprioceptive state: Internal sensing of a robot’s own configuration and dynamics (e.g., joint positions, velocities). "The observation space is defined by robot proprioceptive state and object poses"

- Rigid-soft hybrid architecture: A design that integrates rigid structures with soft materials to balance compliance and controllability. "we adopt a rigid-soft hybrid architecture that combines compliant elastomeric materials with embedded rigid elements to improve controllability and repeatability."

- Sim-to-real transfer: Deploying policies learned in simulation on real hardware with minimal performance loss. "This suggests that explicitly reasoning about contact geometry and force distribution improves sim-to-real transfer for soft robotic manipulation."

- Strain-limiting structure: Internal reinforcement that restricts undesired deformations to promote predictable motion. "Internally, each finger contains rigid strain-limiting structures that constrain undesired deformation modes such as ballooning and promote repeatable bending behavior."

- Task-space retargeting: Retargeting that prioritizes object/task outcomes (e.g., poses, contacts) rather than joint-level matching. "recent work has explored task-space retargeting, object-centric alignment, and contact-aware formulations"

- Teleoperation: Real-time human control of a robot via an interface, often over distance or through a mapping. "Unlike conventional VR-based teleoperation systems~\cite{qin2023anyteleop}, which use VR interfaces to directly control a physical robot in real time,"

- Underactuated: Having fewer independent actuators than mechanical degrees of freedom, limiting direct control of all motions. "soft robotic hands are typically underactuated and driven with nonlinear actuators such as artificial muscles,"

- Virtual spring constraints: Simulation constraints that emulate springs to shape deformations or contacts without explicit hardware. "which deforms the surrounding soft finger through distributed virtual spring constraints."

- Zero-shot: Performing a task in a target setting without additional training or fine-tuning for that setting. "At the policy level, SoftAct achieves consistently higher success in zero-shot real-world deployment and in simulation."
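The Geodesic distance and Geodesic heat kernel entries can be made concrete with a small sketch. It also shows one way Stage 1 could attribute a contact force to fingers. Everything concrete below is an assumption:

- Distances are shortest paths along mesh edges (Dijkstra), which upper-bound the true surface geodesic.
- The kernel uses the short-time heat-kernel form exp(−d²/λ), with λ playing the role of 4t, instead of solving the heat equation on the mesh.
- Every mesh vertex carries a finger label.
- `graph_geodesic` and `attribute_force` are hypothetical names, not the paper's code.

```python
import heapq
import math

def graph_geodesic(vertices, edges, source):
    """Approximate geodesic distances from `source` via Dijkstra over mesh edges
    (edge weight = Euclidean edge length)."""
    adj = {i: [] for i in range(len(vertices))}
    for a, b in edges:
        length = math.dist(vertices[a], vertices[b])
        adj[a].append((b, length))
        adj[b].append((a, length))
    dist = [math.inf] * len(vertices)
    dist[source] = 0.0
    heap = [(0.0, source)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue  # stale queue entry
        for v, w in adj[u]:
            if d + w < dist[v]:
                dist[v] = d + w
                heapq.heappush(heap, (dist[v], v))
    return dist

def attribute_force(vertices, edges, finger_of, contact_vertex, force, lam):
    """Spread one contact force over the mesh with exp(-d^2 / lam) weights,
    then sum the shares per finger label (unreachable vertices get weight 0)."""
    d = graph_geodesic(vertices, edges, contact_vertex)
    weights = [math.exp(-di ** 2 / lam) for di in d]
    total = sum(weights)
    per_finger = {}
    for i, w in enumerate(weights):
        per_finger[finger_of[i]] = per_finger.get(finger_of[i], 0.0) + force * w / total
    return per_finger

# A U-shaped strip: vertices 0 and 3 are 1 apart in space but 3 apart along the surface.
verts = [(0.0, 0.0, 0.0), (0.0, 1.0, 0.0), (1.0, 1.0, 0.0), (1.0, 0.0, 0.0)]
edges = [(0, 1), (1, 2), (2, 3)]
print(graph_geodesic(verts, edges, 0))  # → [0.0, 1.0, 2.0, 3.0]
```

In the U-shaped strip, vertex 3 receives weight exp(−9) instead of the exp(−1) a straight-line distance would give. This is the "walking along the skin rather than cutting straight through the hand" behavior described earlier.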
