- The paper introduces a three-primitive model (diffuse, reflection, transmittance) that overcomes the limitations of standard Gaussian Splatting in capturing high-frequency specular and transparent effects.

- It utilizes a full microfacet BSDF and mesh-guided differentiable ray tracing to accurately separate and blend reflective and transmitted light components.

- Experimental results demonstrate improved PSNR, SSIM, and LPIPS metrics on challenging datasets, validating the framework's efficacy.

RT-GS: Gaussian Splatting with Reflection and Transmittance Primitives

Introduction

The RT-GS framework proposes a unified approach to physically realistic novel view synthesis with Gaussian Splatting (GS) that handles specular reflection and transmittance effects simultaneously. It differs from prior GS-based and implicit neural field methods by explicitly modeling specular and transmittance paths with dedicated Gaussian primitives, using a microfacet-based BSDF and differentiable ray tracing. This makes it possible to reconstruct complex scenes containing challenging specular highlights and transparent objects, improving both qualitative appearance and standard image quality metrics.

Methodology

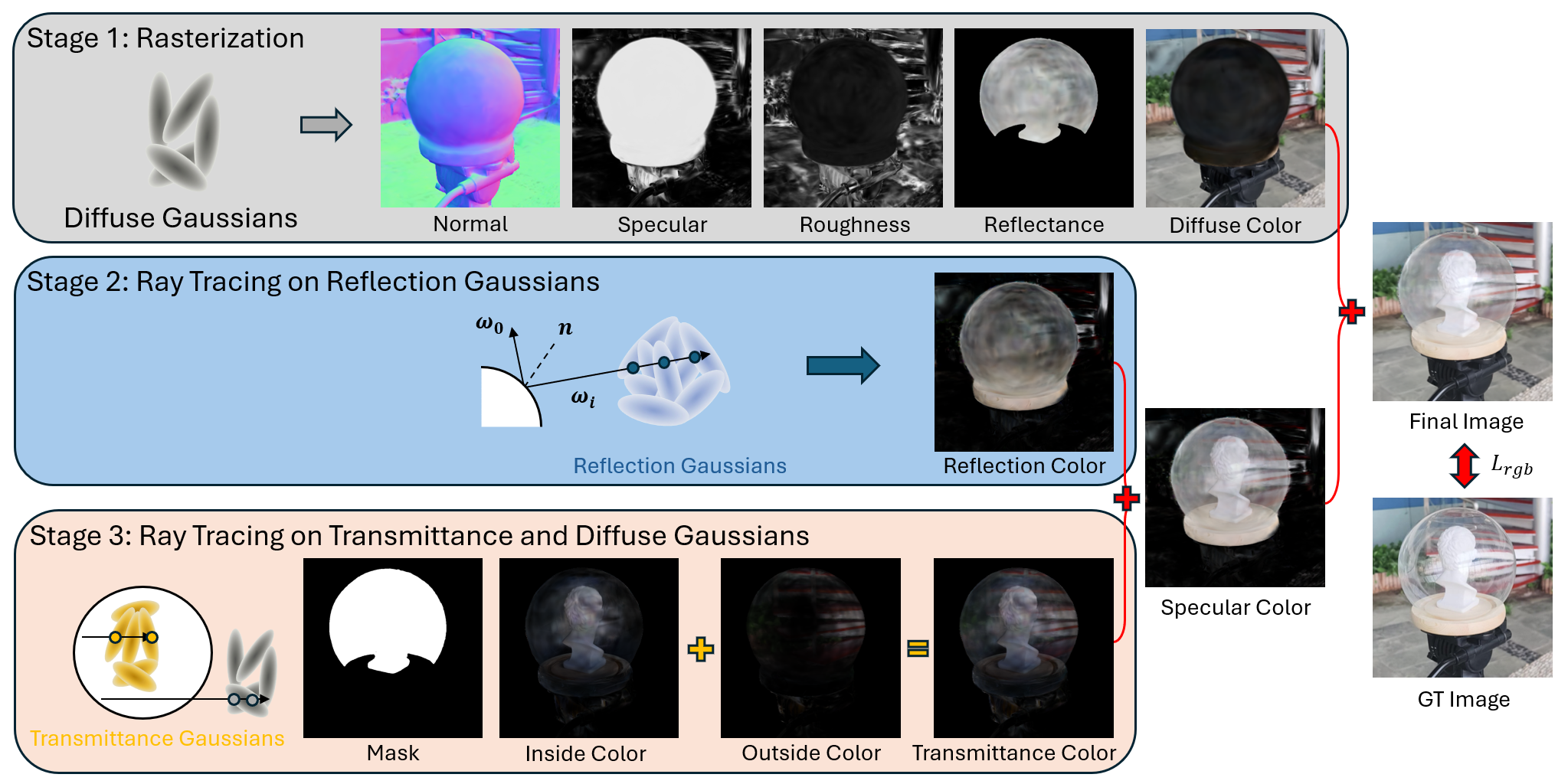

RT-GS augments standard GS scene representations with three decoupled types of Gaussian primitives: diffuse, reflection, and transmittance. Training is guided by multi-stage optimization, integrating physically-based material representations and mesh-guided ray tracing for transparent geometry.

Figure 1: High-level overview of the RT-GS method, illustrating the separation of diffuse, reflection, and transmittance components and their joint optimization pipeline.

Scene Representation

Diffuse Gaussians capture base color and most geometry attributes and are initialized via SfM point clouds, while reflection and transmittance Gaussians are randomly initialized within the scene bounds. All primitives are parameterized by depth, normals, roughness, base reflectance, and specular blending weights, with diffuse Gaussians producing per-pixel material property maps via rasterization.

Microfacet Material Model

The RT-GS pipeline integrates a non-approximated microfacet BSDF without the split-sum simplification, providing a more accurate separation and blending of diffuse, reflection, and transmittance terms. The Torrance-Sparrow model is used for the BRDF, and specular transmittance is modeled for the BTDF.
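To make the structure of a Torrance-Sparrow style specular term concrete, the following is a minimal sketch of evaluating D·G·F / (4 (n·v)(n·l)) for a single light direction. The GGX normal distribution, the separable Smith masking term, the Schlick Fresnel form, and the `roughness ** 2` remapping are assumptions chosen for illustration; the summary does not state which distribution, masking, or Fresnel terms RT-GS uses, and all function names here are hypothetical.

```python
import numpy as np

def normalize(v):
    """Return v scaled to unit length."""
    return v / np.linalg.norm(v)

def ggx_ndf(n_dot_h, alpha):
    """GGX normal distribution D(h) (assumed choice of distribution)."""
    a2 = alpha * alpha
    denom = n_dot_h * n_dot_h * (a2 - 1.0) + 1.0
    return a2 / (np.pi * denom * denom)

def smith_g1(n_dot_x, alpha):
    """Smith masking term for one direction under GGX (assumed)."""
    a2 = alpha * alpha
    return 2.0 * n_dot_x / (n_dot_x + np.sqrt(a2 + (1.0 - a2) * n_dot_x * n_dot_x))

def schlick_fresnel(v_dot_h, f0):
    """Schlick's form of the Fresnel term (assumed; f0 is base reflectance)."""
    return f0 + (1.0 - f0) * (1.0 - v_dot_h) ** 5

def torrance_sparrow_brdf(n, v, l, roughness, f0):
    """Specular BRDF value D*G*F / (4 (n.v)(n.l)) for one view/light pair.

    n, v, l are 3-vectors pointing away from the surface point.
    Returns 0.0 when either direction lies below the surface.
    """
    n, v, l = normalize(n), normalize(v), normalize(l)
    n_dot_v = float(np.dot(n, v))
    n_dot_l = float(np.dot(n, l))
    if n_dot_v <= 0.0 or n_dot_l <= 0.0:
        return 0.0
    h = normalize(v + l)                      # half vector
    n_dot_h = float(np.clip(np.dot(n, h), 0.0, 1.0))
    v_dot_h = float(np.clip(np.dot(v, h), 0.0, 1.0))
    alpha = max(roughness ** 2, 1e-4)         # common roughness remapping (assumed)
    d = ggx_ndf(n_dot_h, alpha)
    g = smith_g1(n_dot_v, alpha) * smith_g1(n_dot_l, alpha)
    f = schlick_fresnel(v_dot_h, f0)
    return d * g * f / (4.0 * n_dot_v * n_dot_l)
```

Evaluating the term per ray like this, rather than looking up a pre-integrated table as the split-sum approximation does, is what keeps the dependence on view direction and roughness explicit; the cost is one evaluation per traced direction.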

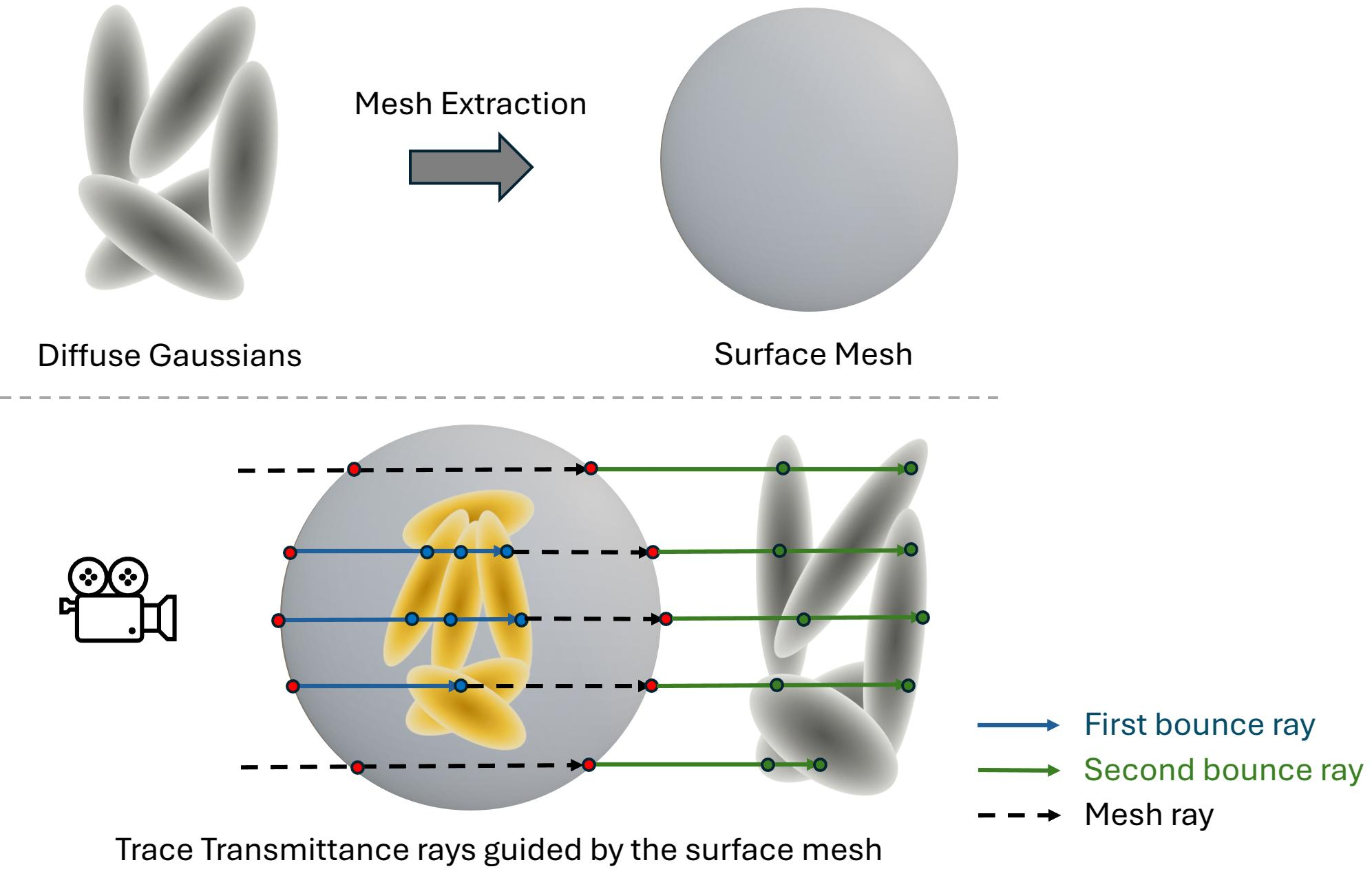

Differentiable Ray Tracing

After computing geometry and material properties, reflection and transmittance rays are spawned using mesh guidance. Reflection rays are traced for all surfaces, while transmittance paths are only traced at transparent regions (identified via SAM2+GroundingDINO). Mesh extraction is used to enable two-stage (front and back face) tracing through transparent geometry.

Figure 2: Mesh-guided ray tracing for transparent geometry supports reconstruction of objects both inside and behind semi-transparent materials.
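The ray spawning step can be illustrated with the textbook mirror-reflection and Snell refraction formulas. This is only a sketch under stated assumptions: the summary does not specify how RT-GS bends rays at the front and back faces, so the index of refraction (`ior = 1.5`), the function names, and the use of Snell's law at both faces are hypothetical choices for illustration.

```python
import numpy as np

def reflect(d, n):
    """Mirror direction of incoming unit direction d about unit normal n."""
    return d - 2.0 * np.dot(d, n) * n

def refract(d, n, eta):
    """Refract unit direction d at a surface with unit normal n (Snell's law).

    n must point against d (dot(d, n) < 0).
    eta is n_incident / n_transmitted.
    Returns None on total internal reflection.
    """
    cos_i = -np.dot(d, n)
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)
    if sin2_t > 1.0:
        return None
    cos_t = np.sqrt(1.0 - sin2_t)
    return eta * d + (eta * cos_i - cos_t) * n

# Two-stage example: a ray enters and leaves a slab with parallel faces.
ior = 1.5                                         # assumed glass-like index
d_in = np.array([1.0, 0.0, -1.0]) / np.sqrt(2.0)  # travelling downward
n_front = np.array([0.0, 0.0, 1.0])               # front face normal, opposes d_in
d_inside = refract(d_in, n_front, 1.0 / ior)      # air -> medium
n_back = np.array([0.0, 0.0, 1.0])                # back face normal flipped to oppose the ray
d_out = refract(d_inside, n_back, ior)            # medium -> air
d_refl = reflect(d_in, n_front)                   # reflection ray at the front face
```

For parallel faces the exit direction equals the entry direction, which is a convenient sanity check. On a real extracted mesh the front and back normals differ, so the two-stage trace generally changes the ray direction.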

Regularization and Training

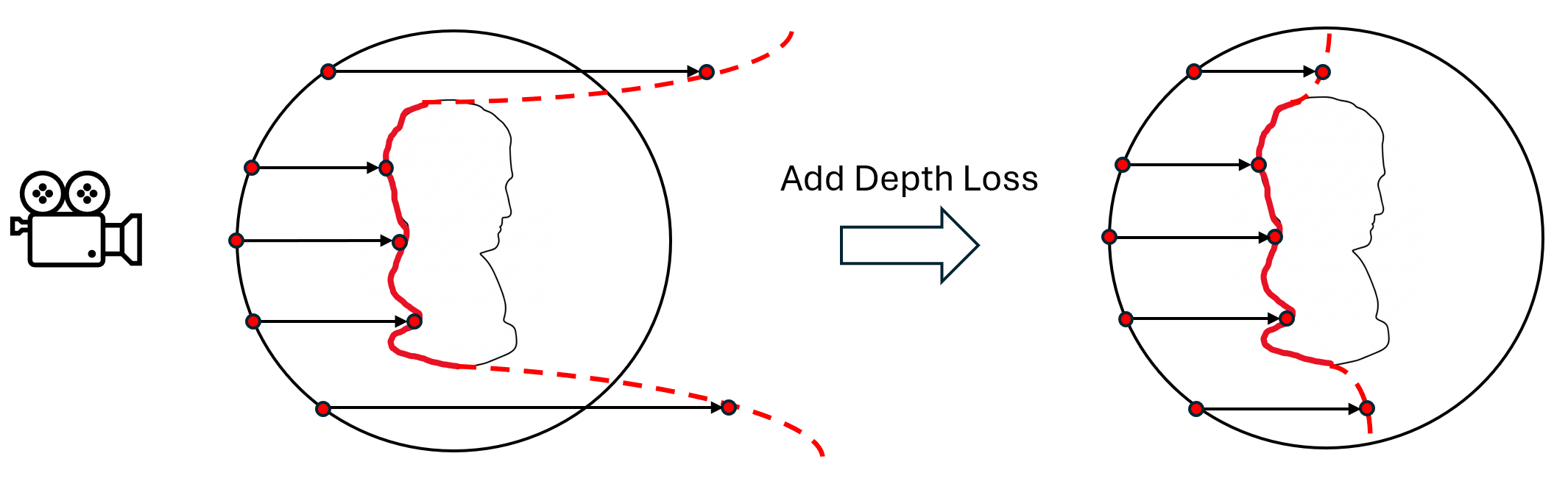

RT-GS is optimized with joint supervision over all three primitive types (diffuse, reflection, transmittance), using a mixture of photometric, perceptual, and material loss terms. Key constraints guide specular strength in transparent regions and restrict transmittance Gaussians to physically plausible regions. Regularization on normal alignment and transmittance ray depth helps avoid degenerate solutions.

Figure 3: Depth regularization ensures the reconstructed geometry of transmitted rays remains consistent with the physical extent of transparent objects.
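As a rough illustration of how such terms might be combined, the sketch below sums a photometric L1 loss, a normal-alignment penalty, and a hinge-style depth penalty on transmitted rays. The exact loss forms, the weights, and every function name here are assumptions; the summary only names the categories of losses and does not give their definitions. In particular, the depth term shown (penalizing transmitted hits that land in front of the surface they were seen through) is one plausible reading, not the paper's formulation.

```python
import numpy as np

def photometric_l1(pred, target):
    """Mean absolute error between rendered and ground-truth images."""
    return float(np.mean(np.abs(pred - target)))

def normal_alignment(n_render, n_ref):
    """Mean (1 - cosine) between two (H, W, 3) maps of unit normals."""
    cos = np.sum(n_render * n_ref, axis=-1)
    return float(np.mean(1.0 - cos))

def transmit_depth_hinge(t_depth, front_depth, mask):
    """Penalize transmitted-ray depths that fall in front of the entry surface.

    t_depth, front_depth, mask are (H, W); mask is 1 inside transparent regions.
    """
    violation = np.maximum(front_depth - t_depth, 0.0) * mask
    return float(violation.sum() / max(float(mask.sum()), 1.0))

def total_loss(pred, target, n_render, n_ref, t_depth, front_depth, mask,
               w_normal=0.05, w_depth=0.1):
    """Weighted sum of the three terms; the weights are placeholder values."""
    return (photometric_l1(pred, target)
            + w_normal * normal_alignment(n_render, n_ref)
            + w_depth * transmit_depth_hinge(t_depth, front_depth, mask))
```

A perceptual term and the specular-strength constraints mentioned above would be added to the same weighted sum; they are omitted here because their form is not described in the summary.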

Experimental Results

Quantitative and Qualitative Comparisons

RT-GS was validated on challenging real-world datasets capturing both near-field reflections (Ref-Real) and transparent objects (NU-NeRF). Across all metrics (PSNR, SSIM, LPIPS), RT-GS either matches or exceeds the strongest baselines, especially in scenarios with co-occurring reflection and transmittance effects.
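For reference, PSNR is defined as 10·log10(MAX² / MSE), where MAX is the largest possible pixel value and MSE is the mean squared error between the rendered and ground-truth images. A minimal sketch, assuming images stored as floating-point arrays in [0, 1] (the function name and the `max_val` parameter are illustrative):

```python
import numpy as np

def psnr(pred, target, max_val=1.0):
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    mse = np.mean((pred - target) ** 2)
    if mse == 0.0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_val ** 2 / mse))
```

Higher PSNR and SSIM are better, while lower LPIPS is better. SSIM and LPIPS are not sketched here because they depend on windowed statistics and a pretrained network, respectively.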

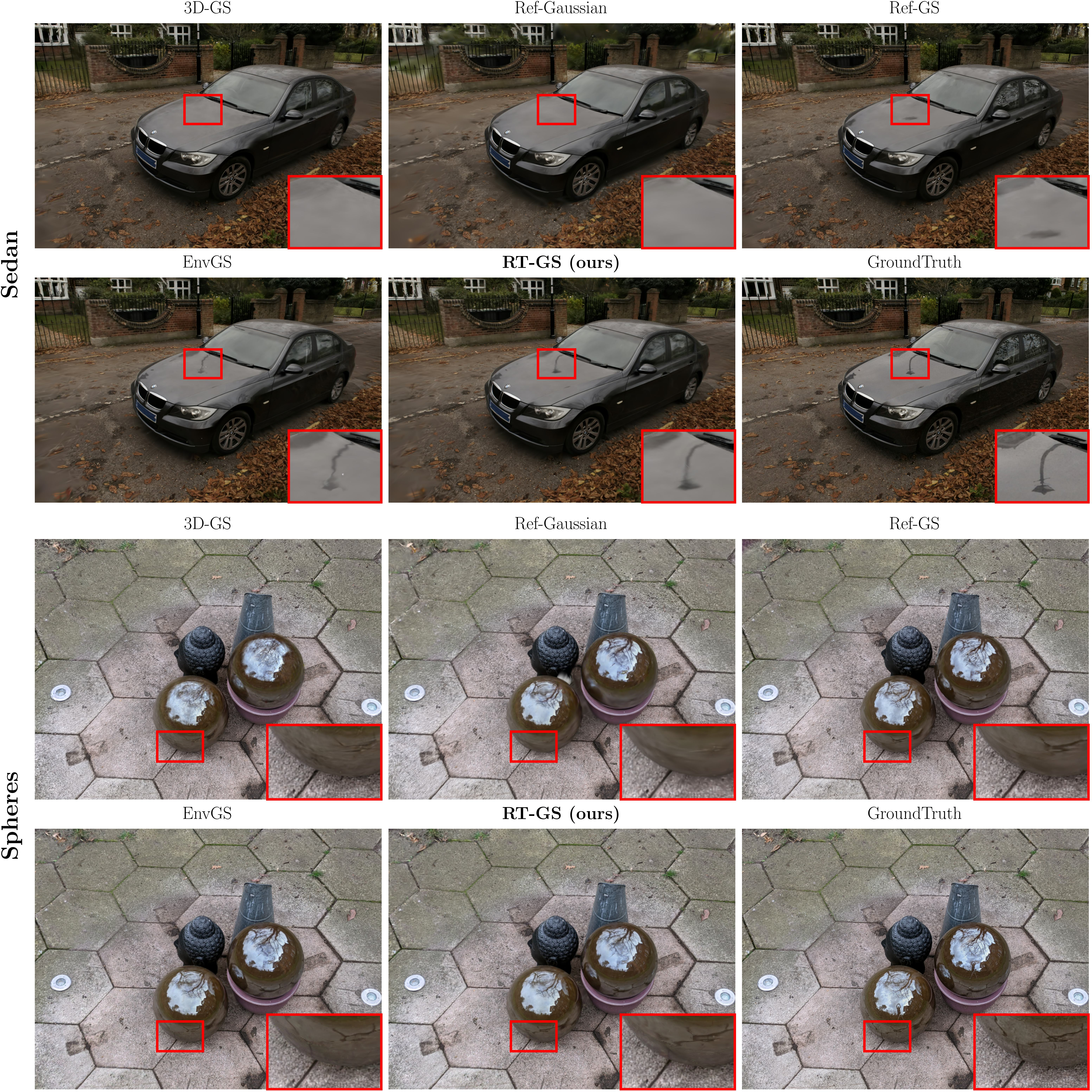

Figure 4: Qualitative comparison (Ref-Real): RT-GS delivers superior specular reflection detail on real reflective surfaces compared to prior methods.

Figure 5: Qualitative comparison (NU-NeRF): RT-GS reconstructs both transmittance appearance and specular details in complex transparent scenes.

Notably, while GS baselines like 3D-GS can capture diffuse and some transmittance effects, they fail to resolve high-frequency reflections. Reflection-augmented methods (e.g., EnvGS) cannot model the interiors of transparent objects. RT-GS handles both cases, as shown in the quantitative and qualitative comparisons.

Ablation Analysis

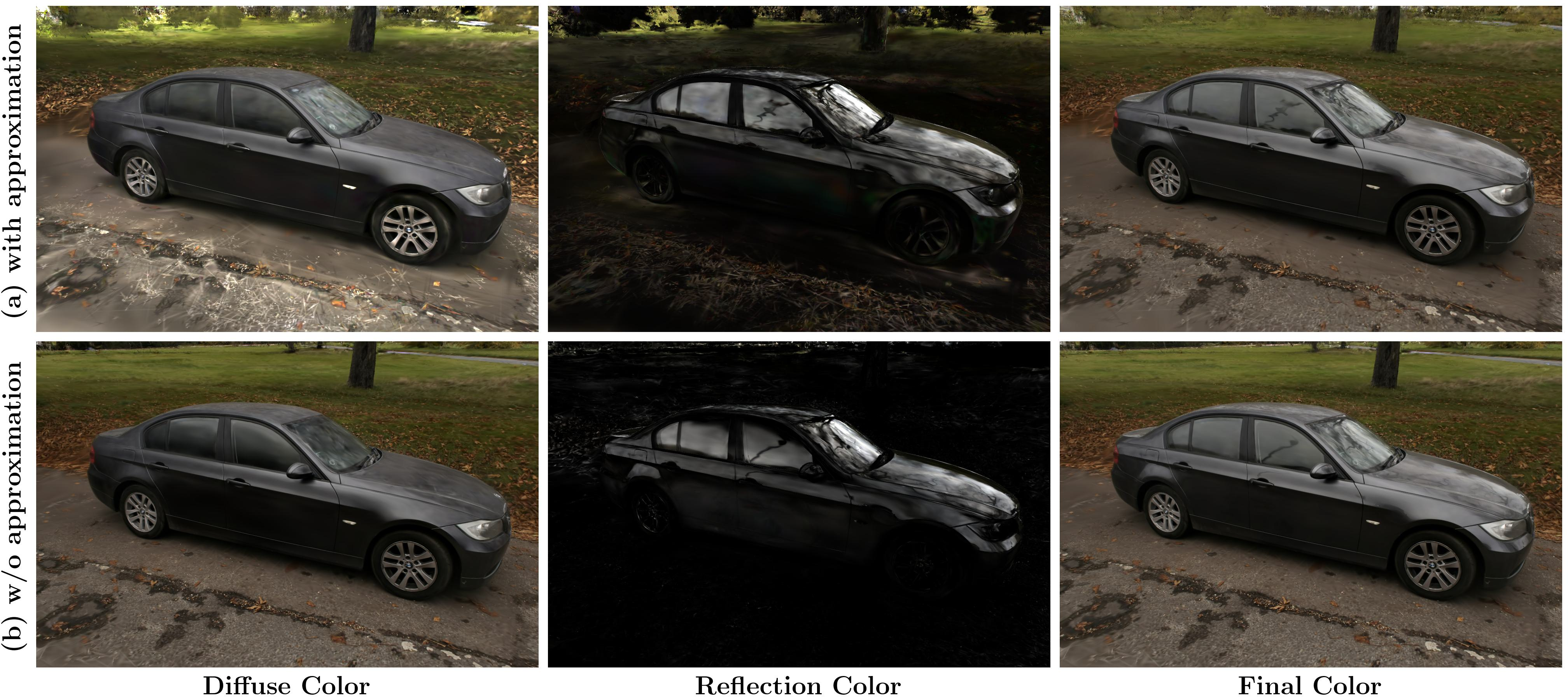

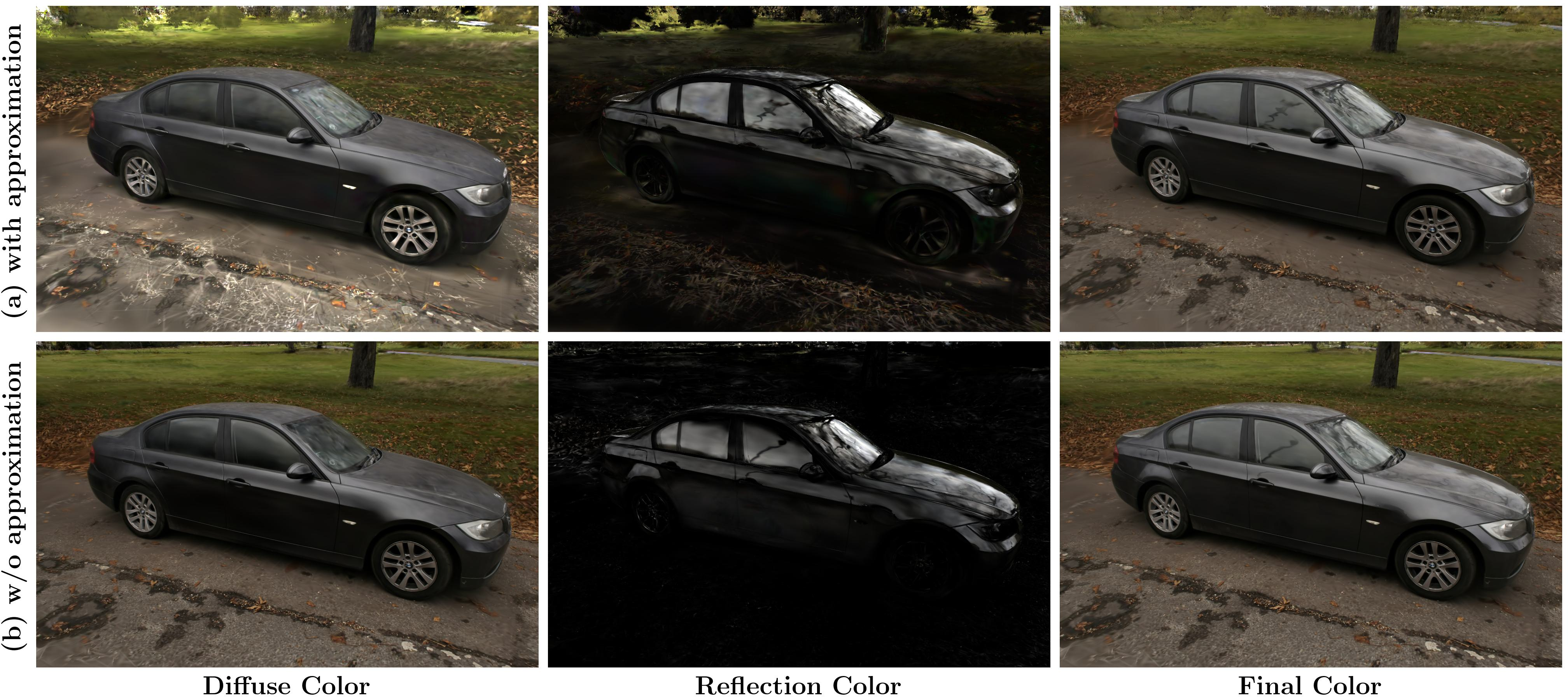

A suite of ablations indicates that each RT-GS component contributes to the final result. Removing the transmittance primitive eliminates interior reconstruction of transparent objects. Omitting the specular-constraining regularizers causes reflection and transmission to be misallocated among the Gaussians. Dropping mesh guidance degrades the accuracy of transmittance rays. Using the split-sum approximation in the BSDF degrades the separation of diffuse and specular terms, harming overall realism.

Figure 6: Ablation study on BallStatue scene: Each RT-GS component is necessary to avoid loss of reconstructed detail or introduction of artifacts.

Figure 7: Ablation study on Sedan: Use of full microfacet model is necessary to correctly separate and blend diffuse and specular signals.

Implications and Future Work

RT-GS is a methodological advance in explicit, physically constrained neural rendering, demonstrating that structured material and light-transport models can be combined effectively with Gaussian-based scene representations. Practically, this enables real-time, photorealistic rendering even in the presence of complex reflective and transparent geometry, bridging the gap between speed-oriented rasterization and physically realistic ray tracing.

A current limitation is the assumption of infinitesimal thickness for transparent object boundaries, which precludes accurate modeling of multi-bounce refractions or caustics in thick glass. Future work could relax this assumption, for example by introducing refractive path modeling or extending the parameterization of the transmittance primitive. Extending RT-GS to dynamic scenes or general participating media remains an open research direction.

Conclusion

RT-GS generalizes Gaussian Splatting for physically plausible rendering, introducing a three-primitive model, a microfacet BSDF, and mesh-guided differentiable ray tracing to render scenes with plausible specular reflection and transmitted-light effects. Experiments demonstrate the contribution of each modeling and optimization component, yielding state-of-the-art results for material reconstruction and view synthesis in highly challenging scenarios (2604.00509).