MRReP: Mixed Reality-based Hand-drawn Reference Path Editing Interface for Mobile Robot Navigation

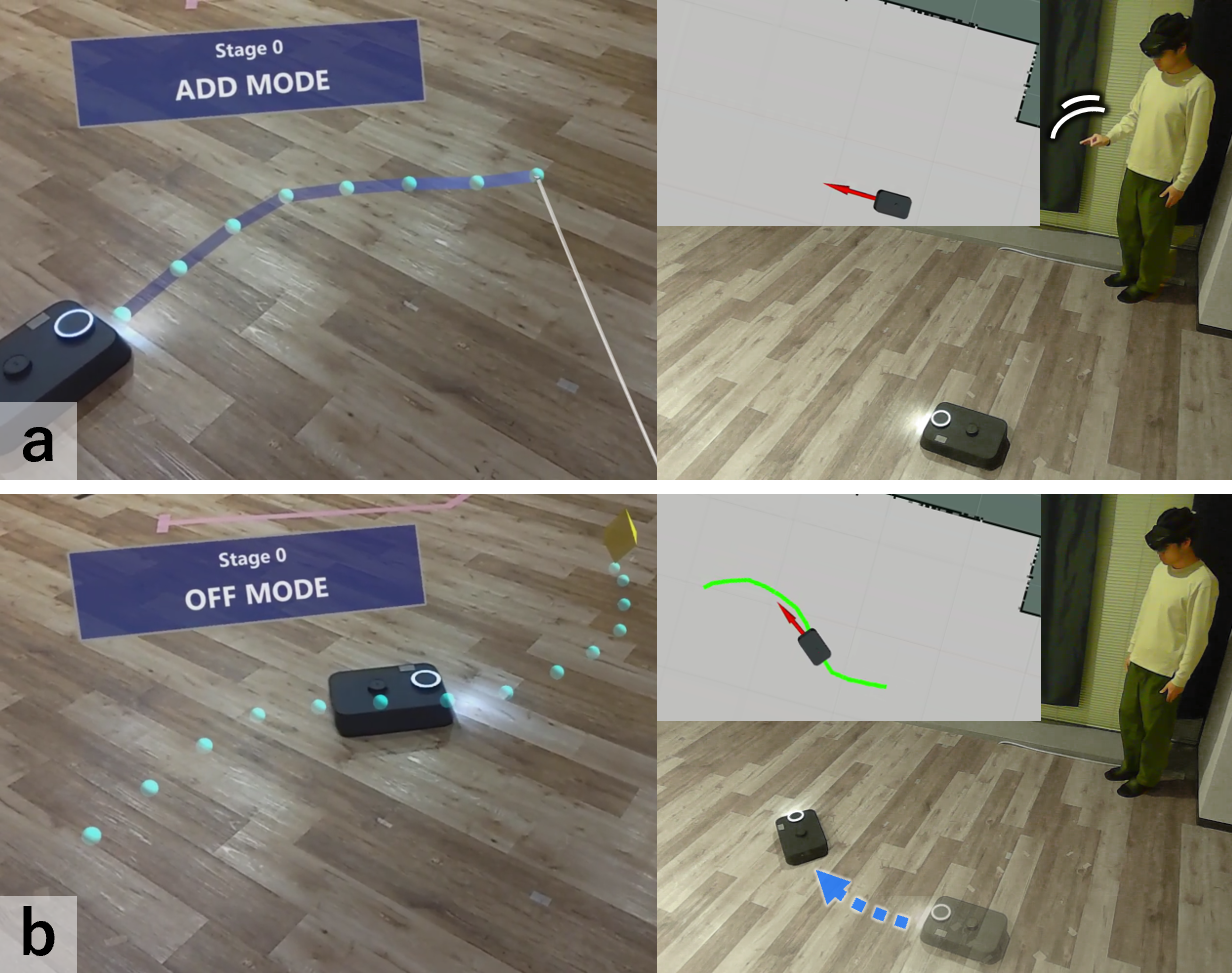

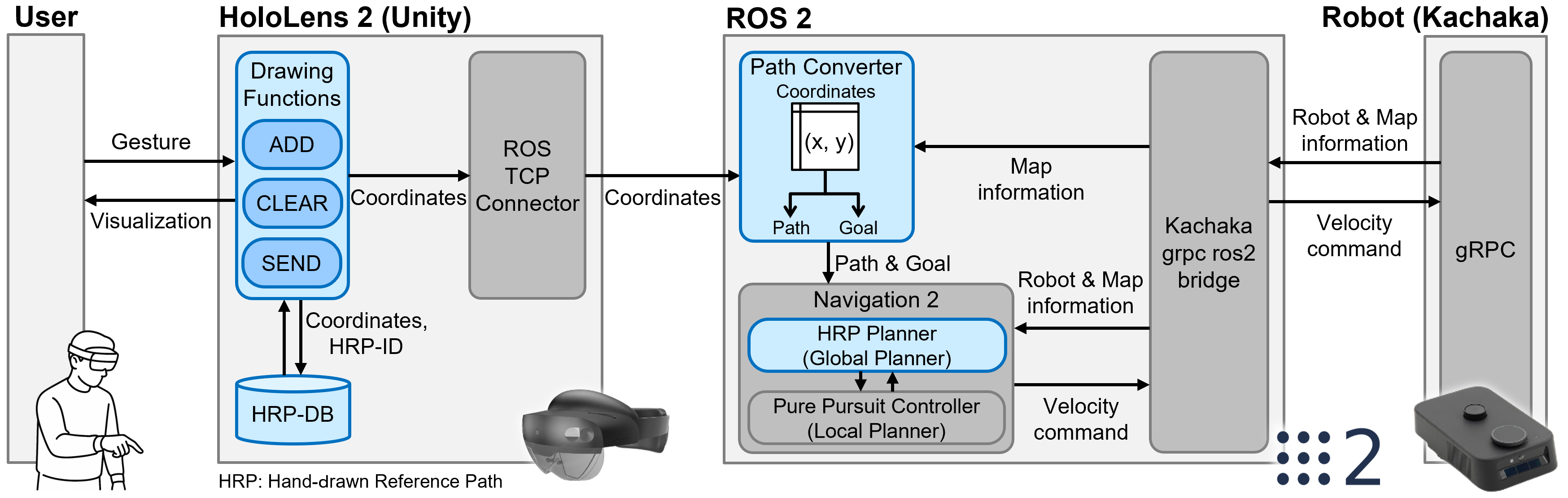

Abstract: Autonomous mobile robots operating in human-shared indoor environments often require paths that reflect human spatial intentions, such as avoiding interference with pedestrian flow or maintaining comfortable clearance. However, conventional path planners primarily optimize geometric costs and provide limited support for explicit route specification by human operators. This paper presents MRReP, a Mixed Reality-based interface that enables users to draw a Hand-drawn Reference Path (HRP) directly on the physical floor using hand gestures. The drawn HRP is integrated into the robot navigation stack through a custom Hand-drawn Reference Path Planner, which converts the user-specified point sequence into a global path for autonomous navigation. We evaluated MRReP in a within-subject experiment against a conventional 2D baseline interface. The results demonstrated that MRReP improved path specification accuracy and usability and reduced perceived workload, while enabling more stable path specification in the physical environment. These findings suggest that direct path specification in MR is an effective approach for incorporating human spatial intention into mobile robot navigation. Additional material is available at https://mertcookimg.github.io/mrrep

Explain it Like I'm 14

What is this paper about?

This paper introduces a new way to tell a mobile robot exactly where to go by “drawing” a route directly on the real floor. The system is called MRReP. It uses a mixed reality headset (think special glasses that can show virtual lines on top of the real world) so a person can sketch a path with hand gestures. The robot then follows that path on its own.

What were the researchers trying to find out?

They wanted to know if letting people draw a path directly in the real world (using mixed reality) would:

- Make it easier to tell the robot the exact route they have in mind

- Lead to more accurate paths than drawing on a flat 2D map on a laptop

- Feel easier and more usable for people

How did they do it?

First, here are a few simple explanations of terms you’ll see:

- Mixed Reality (MR): Like wearing special glasses that let you see both the real world and computer-made graphics at the same time. Imagine drawing a glowing chalk line that only you can see through the glasses.

- Reference path: The exact route you want the robot to take, like a line on the ground.

- Navigation stack: The robot’s “driving brain” that takes a path and figures out how to move safely along it.

What they built:

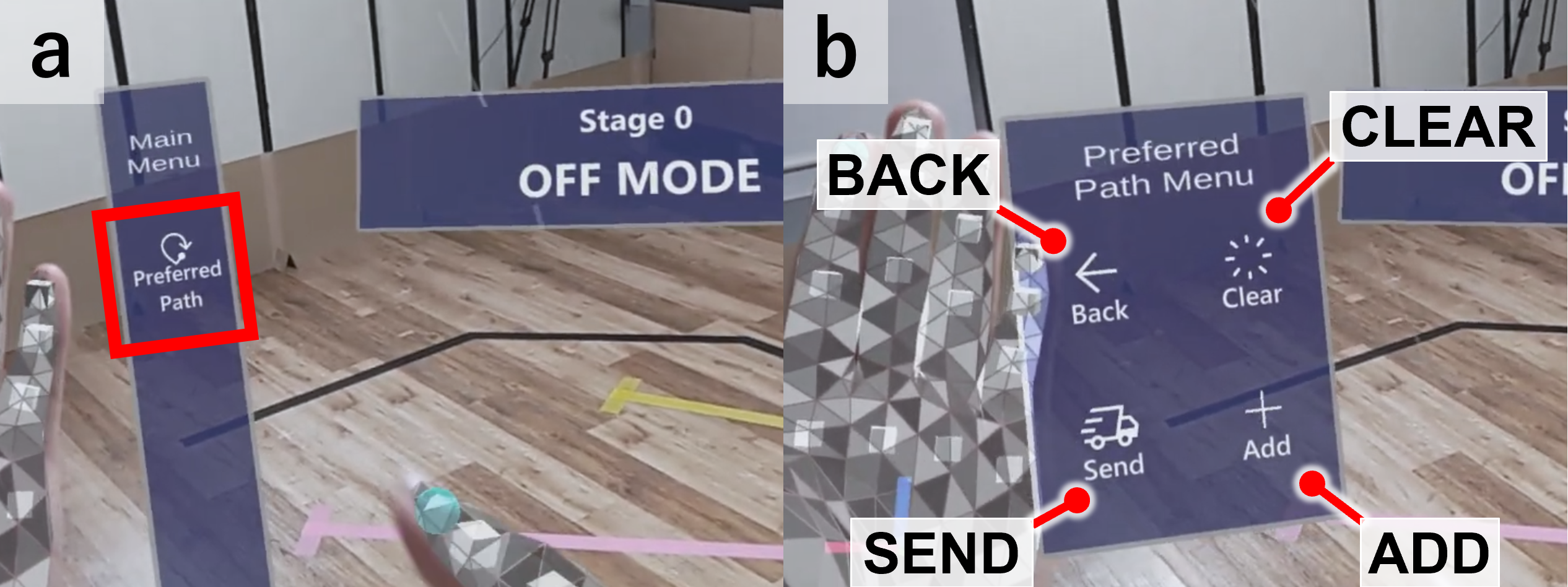

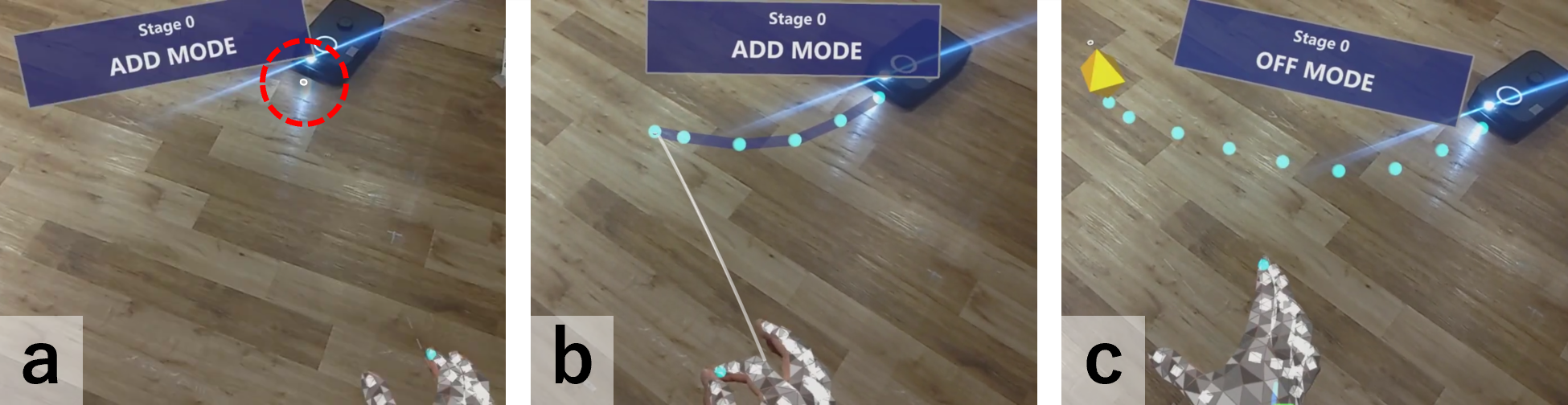

- A person wears a HoloLens 2 headset and uses hand gestures (like pinching) to “draw” a line on the real floor. The line shows up as a virtual path.

- When the person presses “Send,” the system converts that hand-drawn path into a route the robot understands, then the robot starts following it.

- Behind the scenes, a standard robot software system (ROS 2 with Navigation2) handles the path and makes the robot move smoothly. It uses a simple idea called “look-ahead steering” (like how you ride a bike by looking a little ahead along the path), so the robot keeps following the drawn line.
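The paper describes the Hand-drawn Reference Path Planner only as converting the user-specified point sequence into a global path; elsewhere it is characterised as producing piecewise-linear paths with naïve orientation assignment. The Python sketch below is one plausible reading of that step, not the authors' code: it assumes the points are already in the map frame (metres) and gives each pose the heading of the segment leading to the next point. `PathPose`, `yaw_to_quaternion`, and `hrp_to_global_path` are hypothetical names; a real Nav2 planner plugin would emit a `nav_msgs/Path` of `PoseStamped` messages instead.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class PathPose:
    x: float
    y: float
    qz: float  # quaternion z for a rotation about the vertical axis
    qw: float  # quaternion w


def yaw_to_quaternion(yaw: float) -> Tuple[float, float]:
    """Planar yaw (radians) -> (z, w) components of a unit quaternion."""
    return math.sin(yaw / 2.0), math.cos(yaw / 2.0)


def hrp_to_global_path(points: List[Tuple[float, float]]) -> List[PathPose]:
    """Convert an HRP point sequence into oriented path poses (hypothetical sketch).

    Each pose faces the next point; the final pose keeps the heading of the
    last segment. No smoothing or feasibility check is applied.
    """
    if len(points) < 2:
        raise ValueError("an HRP needs at least two points")
    poses: List[PathPose] = []
    yaw = 0.0
    for i, (x, y) in enumerate(points):
        if i + 1 < len(points):
            nx, ny = points[i + 1]
            yaw = math.atan2(ny - y, nx - x)
        # for the last point, `yaw` still holds the previous segment's heading
        qz, qw = yaw_to_quaternion(yaw)
        poses.append(PathPose(x, y, qz, qw))
    return poses
```

Because the heading jumps at each vertex, a controller tracking this path will tend to cut corners; smoothing is deliberately left out to match the piecewise-linear description.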

How they tested it:

- 16 people took part in a study.

- Each person tried two ways to draw paths for the robot: (1) using MRReP to draw on the real floor with hand gestures, and (2) using a regular 2D laptop interface to draw on a map with a mouse.

- The target routes were taped on the real floor (one straight path and one with several turns), so participants tried to match those routes as closely as possible.

- The robot then followed whatever path the person sent.

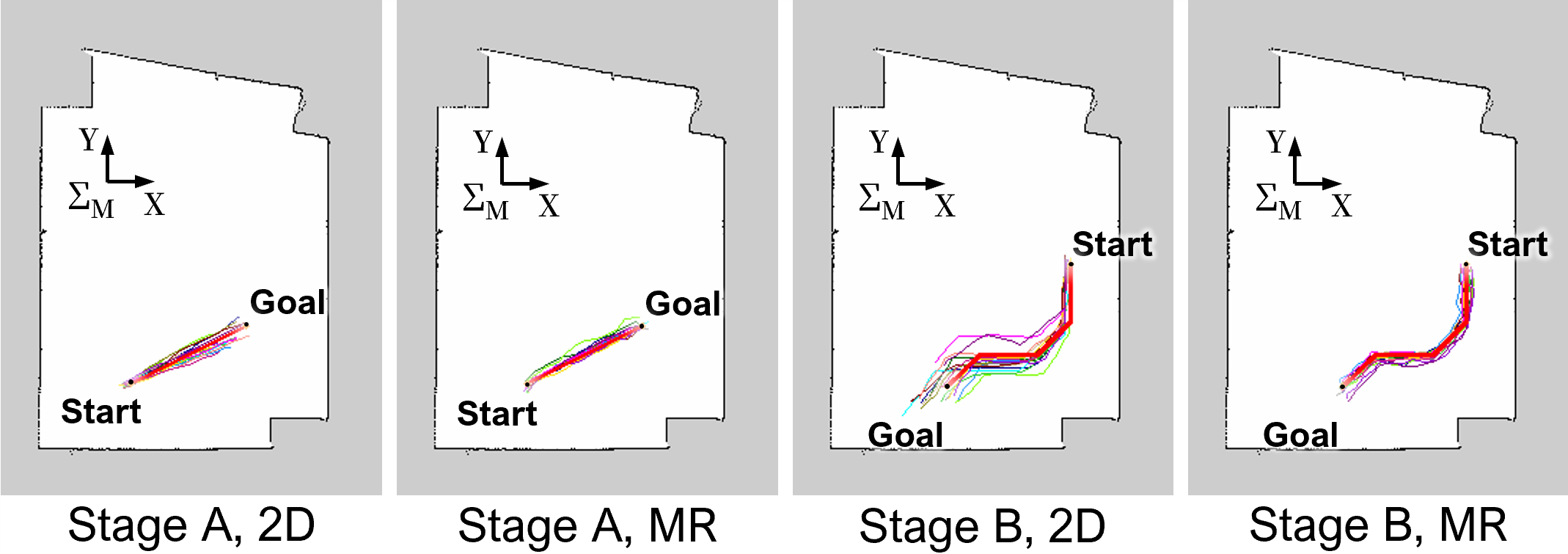

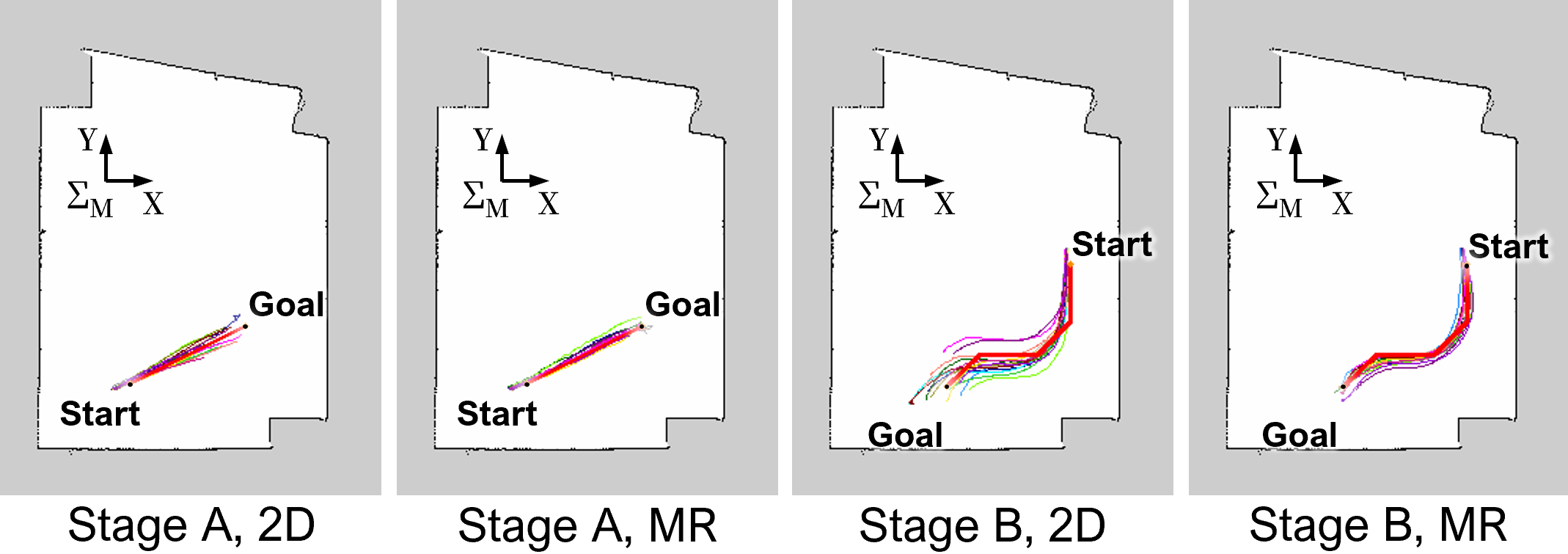

- They measured path accuracy, number of drawing attempts, time to finish, how stable the robot’s route was, and how people felt about the tools (usability and mental effort).
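Path accuracy is defined cell by cell on a grid (see the True/False Positive/Negative, Precision, Recall, Specificity, and F1-score entries in the Glossary), with the drawn path widened to a band for analysis. A minimal Python sketch, assuming a square grid of resolution `res` metres with its origin at (0, 0), and counting a cell as covered when its centre lies within `width / 2` of the polyline. The paper's actual grid resolution, path width, and rasterisation rule are not given here, so these are illustrative choices, and all function names are hypothetical.

```python
import math
from typing import Dict, List, Set, Tuple

Cell = Tuple[int, int]       # (column, row) index in the evaluation grid
Point = Tuple[float, float]  # (x, y) in metres


def _dist_to_segment(p: Point, a: Point, b: Point) -> float:
    """Euclidean distance from point p to the segment a-b."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    seg_len2 = dx * dx + dy * dy
    if seg_len2 == 0.0:
        t = 0.0
    else:
        t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / seg_len2
        t = max(0.0, min(1.0, t))
    return math.hypot(p[0] - (a[0] + t * dx), p[1] - (a[1] + t * dy))


def rasterize(path: List[Point], width: float, res: float,
              cols: int, rows: int) -> Set[Cell]:
    """Cells whose centre lies within width/2 of the polyline (path widened to a band)."""
    cells: Set[Cell] = set()
    for i in range(cols):
        for j in range(rows):
            centre = ((i + 0.5) * res, (j + 0.5) * res)
            if any(_dist_to_segment(centre, path[k], path[k + 1]) <= width / 2.0
                   for k in range(len(path) - 1)):
                cells.add((i, j))
    return cells


def path_metrics(drawn: Set[Cell], gt: Set[Cell], total_cells: int) -> Dict[str, float]:
    """Cell-wise confusion counts (TP/FP/FN/TN) and the derived scores."""
    tp = len(drawn & gt)
    fp = len(drawn - gt)
    fn = len(gt - drawn)
    tn = total_cells - tp - fp - fn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall,
            "specificity": specificity, "f1": f1}
```

In use, both the ground-truth tape path and the drawn HRP would be passed through `rasterize` with the same grid before calling `path_metrics`.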

What did they find, and why is it important?

Here are the main results from comparing MRReP (mixed reality) with the 2D laptop interface:

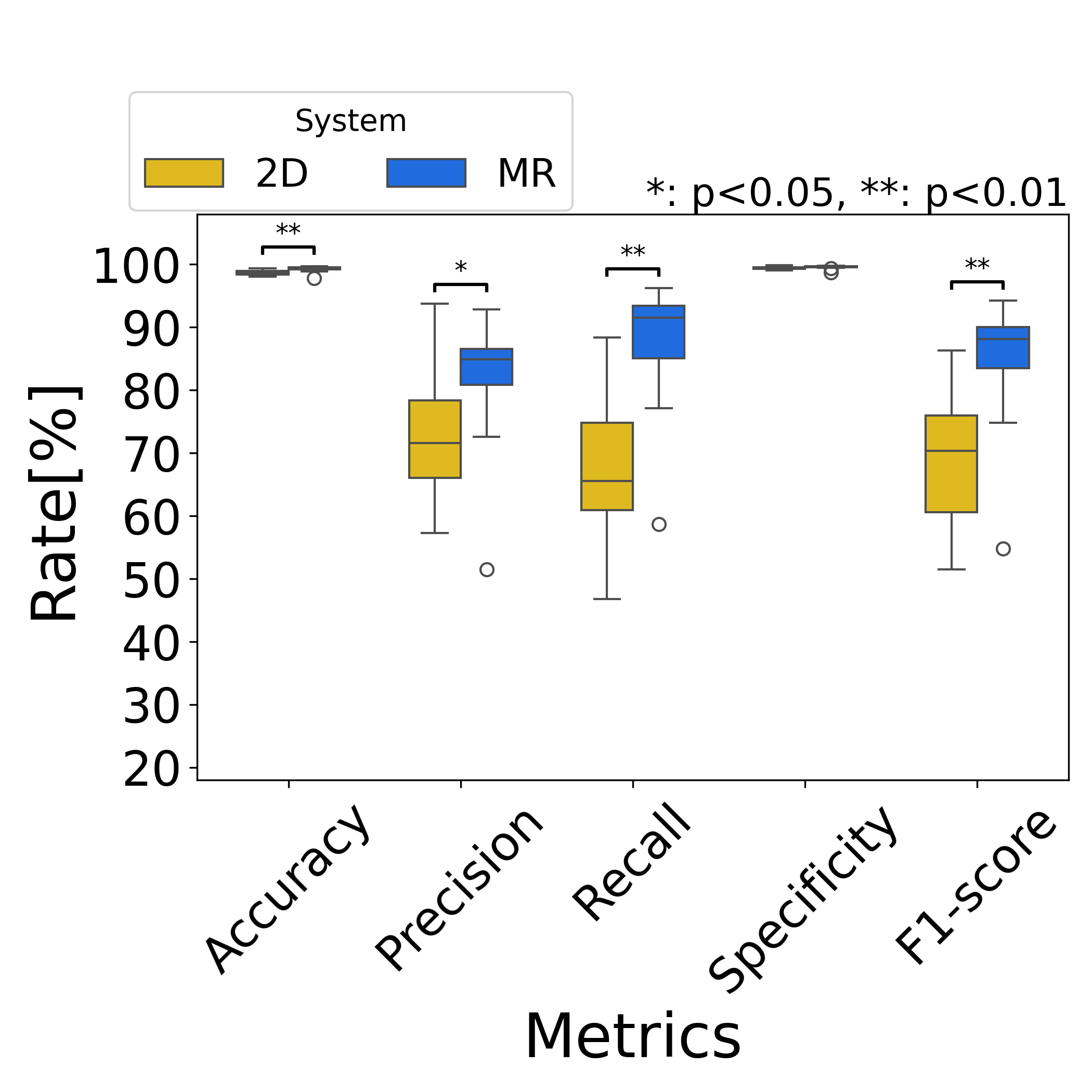

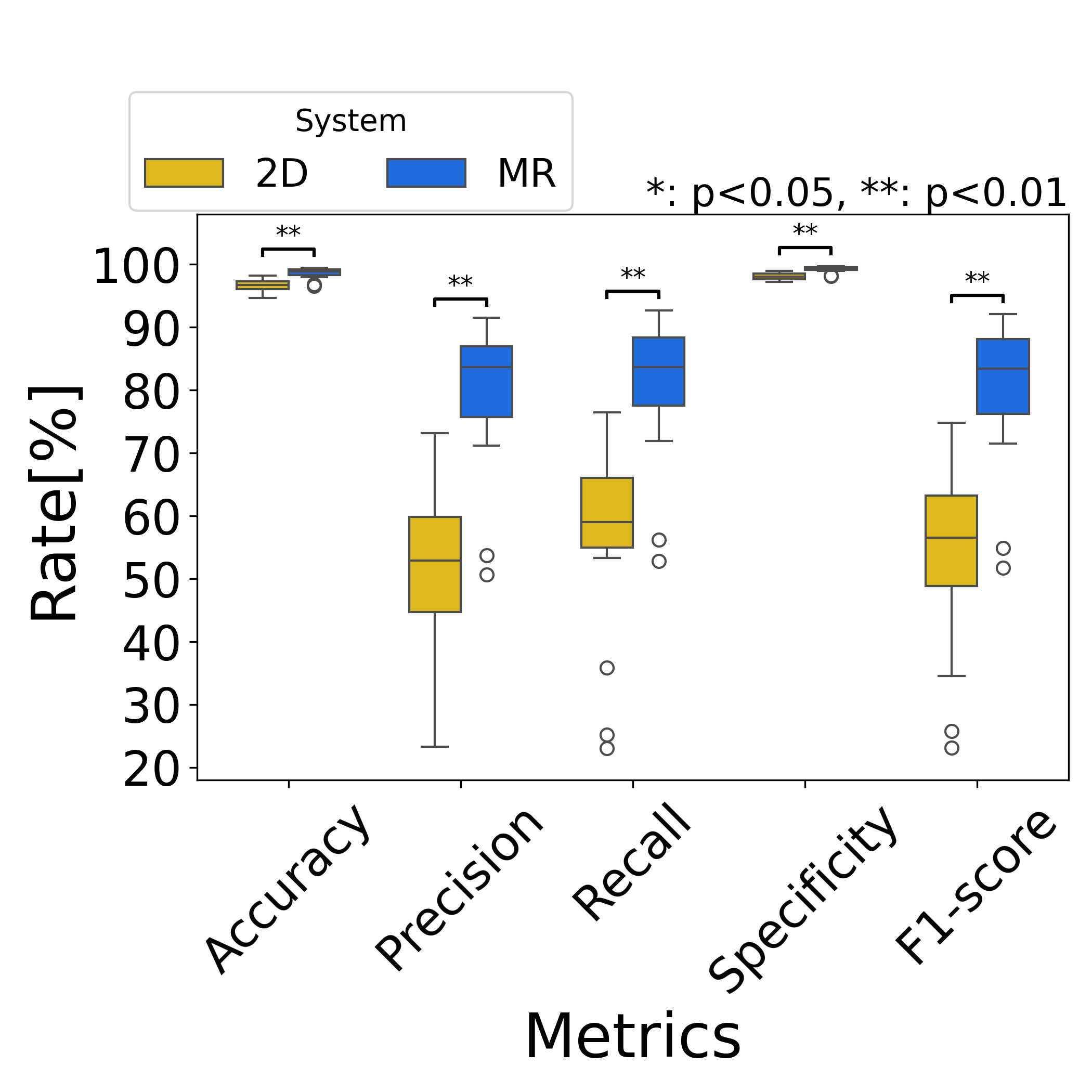

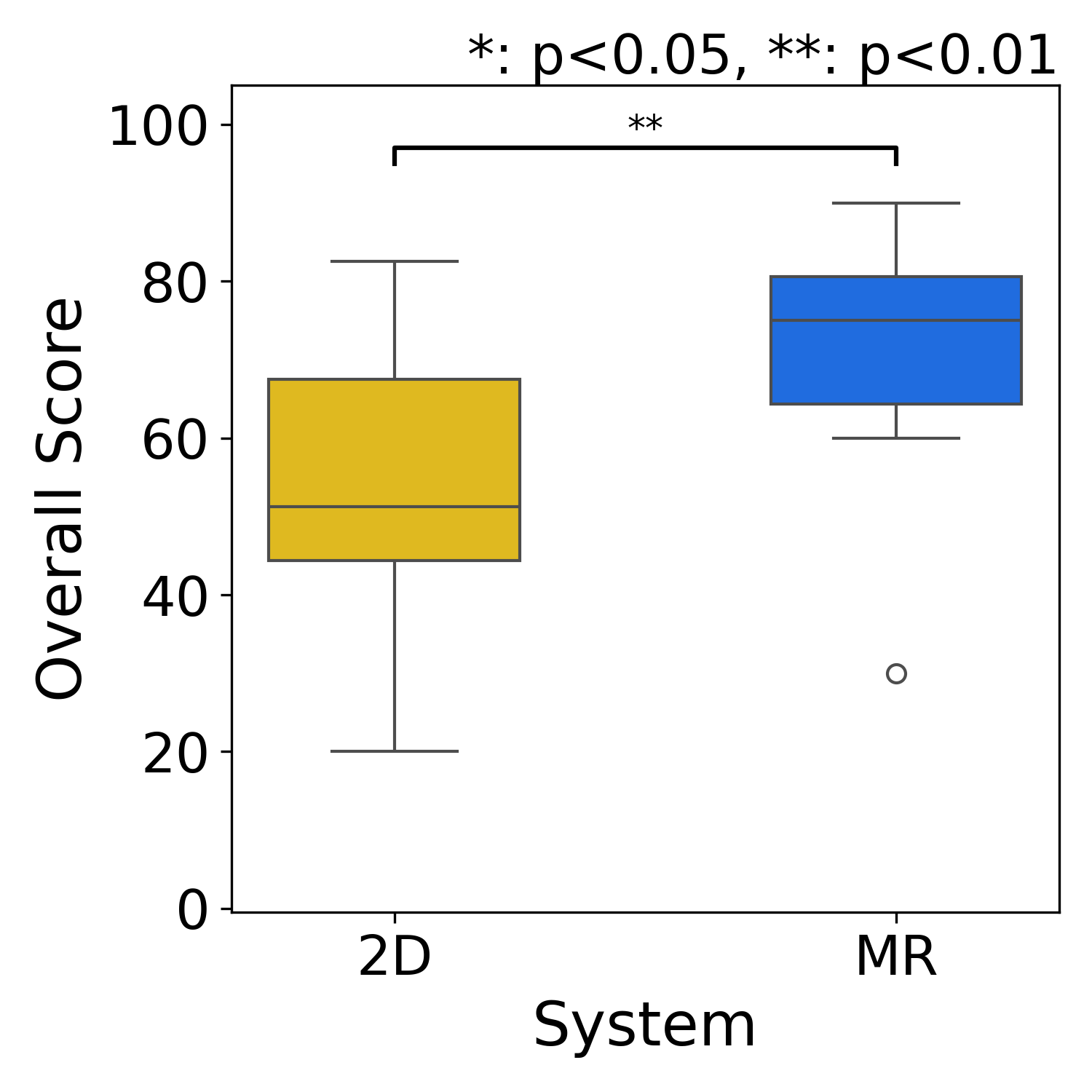

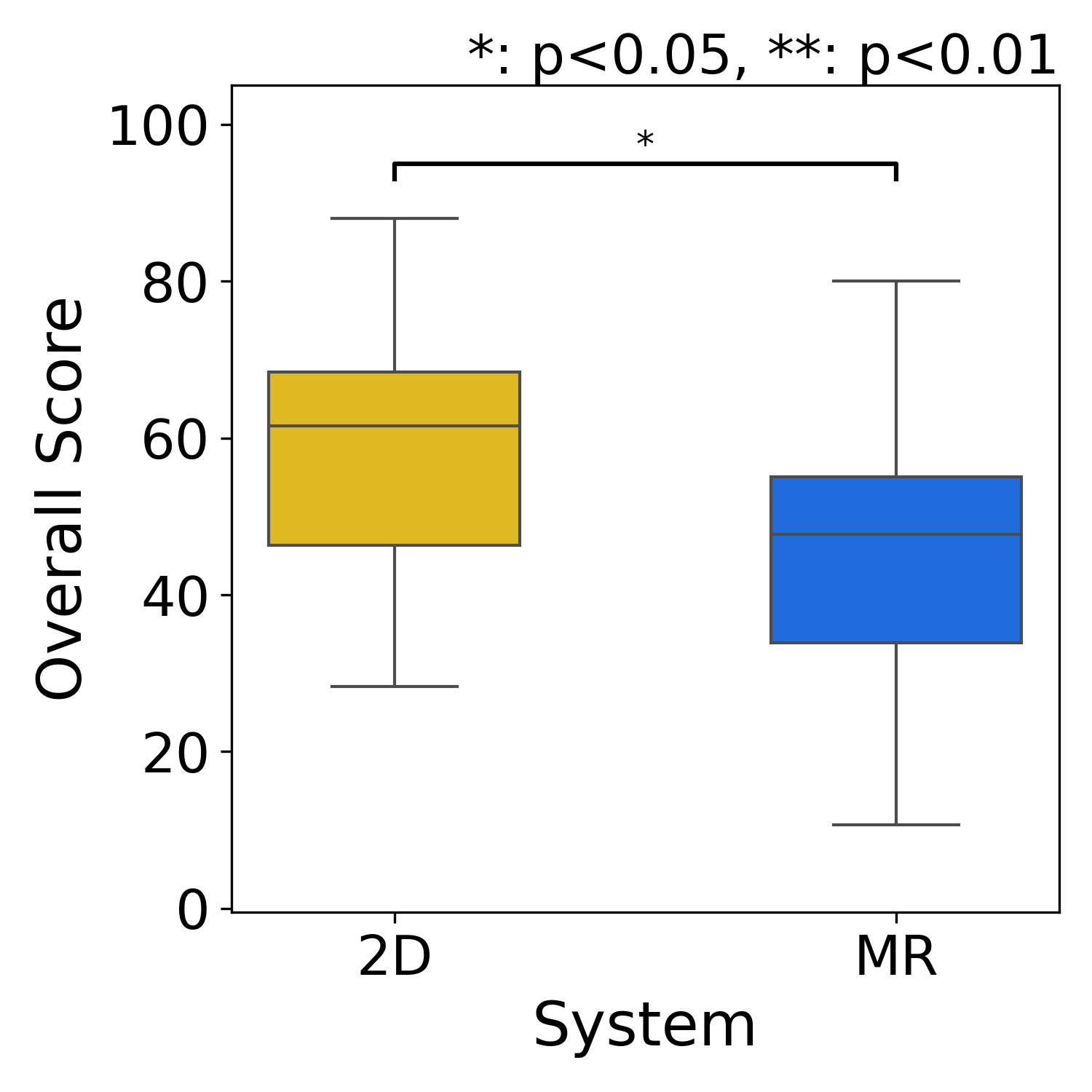

- Better path accuracy: People who used MRReP drew paths that matched the taped routes more closely. This was true for both the straight path and the one with many turns. In everyday terms, the “glowing chalk line” drawn in the real space helped people trace exactly what they meant.

- More complete coverage of the target route: MRReP paths stayed inside the intended area more often and missed fewer parts of the route.

- More consistent results: People’s paths were more similar to each other with MRReP, especially on the trickier path. That means the tool helped reduce guesswork.

- Higher usability and lower mental workload: Participants said MRReP felt easier to use and less mentally tiring overall.

- Time trade-off: Drawing in MR took a bit longer on average. Likely reasons: slightly fussier hand gestures and the fact that moving your arms in space takes more effort than a quick mouse drag.

- Similar number of drawing attempts: People redrew or tweaked their path about the same number of times in both systems, but for different reasons. With MRReP, fine adjustments happened because small errors were easy to see in the real world; with 2D maps, people sometimes had to fix things because it’s hard to translate a flat map into the real space in your head.
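Usability was measured with the System Usability Scale (SUS). The standard SUS scoring rule is compact enough to show directly: ten items are rated 1 to 5, odd-numbered (positively worded) items contribute `score - 1`, even-numbered (negatively worded) items contribute `5 - score`, and the sum is multiplied by 2.5 to give a score from 0 to 100. The sketch below applies that rule to one participant's answers; `sus_score` is a hypothetical helper, and any inputs you feed it here are illustrative, not study data.

```python
from typing import Sequence


def sus_score(responses: Sequence[int]) -> float:
    """Standard SUS score for one participant's 10 responses on a 1-5 scale."""
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("responses must be on a 1-5 scale")
    total = 0
    for item_number, r in enumerate(responses, start=1):
        # odd items are positively worded, even items negatively worded
        total += (r - 1) if item_number % 2 == 1 else (5 - r)
    return total * 2.5
```

For example, `sus_score([3] * 10)` returns 50.0, the midpoint of the scale.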

Why this matters:

- In real places like hospitals, warehouses, or offices, we often want robots to move in very human-friendly ways—like keeping to one side of a hallway or avoiding busy spots. Being able to draw a path directly on the floor makes it easier to communicate those intentions clearly.

- More accurate and consistent paths mean robots can better follow exactly what people want, which can improve safety and teamwork with humans.

What does this mean for the future?

This research suggests that letting people “draw where you want the robot to go” in the real world is a powerful idea. It can:

- Make robot setup faster and more intuitive for non-experts

- Help robots fit better into human spaces by capturing subtle preferences (like giving people more space)

- Reduce errors that come from translating between 2D screens and the 3D world

There are still practical issues to improve, like reducing arm fatigue and making hand-gesture recognition even more reliable. But overall, MRReP shows that mixed reality is a promising way to give robots clearer, more human-friendly instructions.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following list synthesizes what remains missing, uncertain, or unexplored in the paper and can guide concrete next steps for future research:

- External validity and task diversity: Results are based on N=16 young participants in a single indoor setting with two simple path types (straight and piecewise linear with 45° turns). Generalization to complex, cluttered, curved, non-Manhattan layouts, larger spaces, and varied user populations (e.g., professional operators, older adults) is unknown.

- Baseline comparability: The baseline is a mouse-based 2D laptop interface; there is no comparison against stronger alternatives (e.g., stylus/tablet drawing, touch screens, AR-on-tablet, 2D interfaces with perspective camera overlays) that might narrow the gap with MR.

- Learning effects and long-term use: The study captures single-session performance. It does not measure learning curves, retention, or performance after extended practice, nor whether MR time penalties decrease over time.

- MR–ROS map registration accuracy: There is no quantitative evaluation of the HoloLens-to-ROS map alignment (initial error, drift over time and distance, sensitivity to QR-code placement/occlusion), nor its effect on path accuracy and robot tracking.

- Multi-anchor calibration and robustness: Reliance on a single QR code and Vuforia is fragile for large areas and line-of-sight occlusions. Whether multi-anchor schemes (e.g., spatial anchors, fiducial networks) reduce drift and improve large-scale consistency is untested.

- End-to-end latency and reliability: Communication delays (Unity↔ROS 2↔robot), packet loss, and failure modes (e.g., disconnects during operation) are neither characterized nor mitigated.

- Gesture recognition quality: Hand jitter, misrecognition, and arm fatigue are reported qualitatively but not quantified. There is no evaluation of filters, smoothing, or alternative input modalities (controller, pen, voice) to reduce noise and fatigue.

- Sampling threshold sensitivity: The waypoint distance threshold is fixed (0.2 m) with no ablation to study its impact on accuracy, workload, path smoothness, and controller performance.

- Path geometry and feasibility: The planner uses piecewise-linear paths with naïve orientation assignment. There is no curvature/smoothness optimization, kinodynamic feasibility check, or enforcement of robot-specific limits (e.g., minimum turning radius).

- Path–costmap conflict handling: It is unclear whether the HRP is treated as a hard or soft constraint when obstacles are present in the costmap. The system’s behavior when the HRP intersects static or newly detected obstacles (e.g., detours, halts, user prompts) is not defined or evaluated.

- Dynamic environments and social navigation: Despite the motivation of reflecting human spatial intentions, the system is not tested with moving pedestrians, crowd flows, or social comfort constraints; how the HRP adapts to dynamic obstacles remains open.

- Online editing and incremental correction: The interface only supports ADD, CLEAR, and SEND. There is no ability to edit, undo, drag control points, or locally refine segments, nor to update the path while the robot is moving.

- Corridor/width and tolerance specification: Users cannot specify a desired path corridor, clearance, or tolerance band; the evaluation expands path width offline for analysis, but the interface lacks a native concept of path width or comfort buffers.

- Multi-user collaboration: The system has no mechanism for multiple operators to co-edit, review, or approve HRPs, resolve conflicts, or manage roles/permissions.

- Multi-robot coordination: How HRPs are allocated, prioritized, or deconflicted across multiple robots is not addressed.

- Scalability and field-of-view constraints: The feasibility of drawing long paths beyond the HoloLens field of view, across rooms, or around occlusions is untested; drift and usability over larger scales are unknown.

- Environmental robustness: Performance across different floor textures, lighting conditions, reflective surfaces, or poor plane detection is not evaluated.

- Safety and situational awareness: Wearing an HMD around moving robots may impair awareness; the safety impact and mitigation (e.g., proxemic warnings, shared awareness cues) are not studied.

- Quantitative tracking performance: Robot path-following quality (cross-track error, overshoot, max deviation, success/failure rates) is presented qualitatively; there is no rigorous quantitative tracking evaluation or parameter sensitivity analysis of the controller.

- Planner–controller interplay: Only a regulated pure pursuit controller is used; comparisons with alternative controllers (e.g., MPC, DWA variants) and their sensitivity to HRP point density and smoothness are missing.

- Platform generality: Integration and performance are demonstrated with a specific platform (Kachaka via gRPC). Portability to other robots (differential, omnidirectional, Ackermann) and to different Nav2 configurations is not evaluated.

- Persistence and robustness of path storage: JSON-based storage is simple but lacks concurrency control, versioning, conflict resolution, and recovery procedures after crashes or partial transmissions.

- UX affordances for precision: The interface lacks snapping to map features (walls, centerlines), constraint-aware suggestions, or visual feedback on feasibility (e.g., curvature/clearance warnings) that could prevent drawing infeasible paths.

- Combining semantics with geometry: While the motivation mentions human spatial intentions, the system does not capture higher-level semantics (e.g., “keep right,” “avoid doorways”) or integrate language constraints with HRP drawing.

- Parameterization along the path: Users cannot specify speed profiles, stop points, or task annotations along the path; the effect of such parameters on usability and execution is unexplored.

- Comparative ergonomics: Alternatives that may reduce fatigue (e.g., handheld pointer, wrist-rest stands, seated operation, mixed input modalities) are not compared, nor are task-dependent ergonomic recommendations provided.
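The sampling-threshold item above refers to how a drawn stroke becomes waypoints: per the paper, a new waypoint is generated when the cursor is farther than a fixed threshold (0.2 m) from the previous waypoint. A minimal Python sketch of that rule, assuming cursor positions arrive as (x, y, z) tuples in metres; `sample_waypoints` is a hypothetical name, and the threshold is exposed as a parameter because its effect is exactly what such an ablation would vary.

```python
import math
from typing import Iterable, List, Tuple

Point3 = Tuple[float, float, float]  # cursor position in metres (assumed format)


def sample_waypoints(cursor_positions: Iterable[Point3],
                     threshold: float = 0.2) -> List[Point3]:
    """Accept a cursor sample as a waypoint once it is more than `threshold`
    metres from the previously accepted waypoint (the first sample is always kept)."""
    waypoints: List[Point3] = []
    for p in cursor_positions:
        if not waypoints or math.dist(p, waypoints[-1]) > threshold:
            waypoints.append(p)
    return waypoints
```

As written, the last cursor sample is dropped if it lies within the threshold of the previous waypoint; an implementation might also append the release point so the path ends where the hand stopped.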

Practical Applications

Immediate Applications

These use cases can be deployed with today’s MR headsets (e.g., HoloLens 2), ROS 2/Navigation2-compatible mobile robots, and the paper’s MRReP workflow (ADD/CLEAR/SEND) plus the custom HRP planner.
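The ADD/CLEAR/SEND workflow and the JSON-based path storage noted in the Knowledge Gaps can be pictured as a small buffer on the headset side. The exact semantics of each command are not spelled out here, so the Python sketch below assumes that ADD appends a finished stroke's waypoints, CLEAR discards the pending path, and SEND serialises it to JSON for the ROS 2 side. `HRPSession` and the `frame_id`/`points` schema are hypothetical.

```python
import json
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) in metres


class HRPSession:
    """Hypothetical buffer mirroring an ADD / CLEAR / SEND workflow."""

    def __init__(self, frame_id: str = "map") -> None:
        self.frame_id = frame_id
        self.points: List[Point] = []

    def add(self, stroke: List[Point]) -> None:
        """ADD (assumed): append a drawn stroke's waypoints to the pending HRP."""
        self.points.extend(stroke)

    def clear(self) -> None:
        """CLEAR: discard the pending HRP."""
        self.points.clear()

    def send(self) -> str:
        """SEND (assumed): serialise the pending HRP as JSON for the planner side."""
        if len(self.points) < 2:
            raise ValueError("need at least two points to send a path")
        payload = {
            "frame_id": self.frame_id,
            "points": [{"x": x, "y": y} for x, y in self.points],
        }
        return json.dumps(payload)
```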

- Healthcare (hospitals, clinics)

  - Human-aware routing for delivery/escort robots

    - What: Clinicians draw preferred corridors/clearances on the actual floor to avoid patient flow, sensitive zones, or infection-control areas.

    - Potential tools/products/workflows: MRReP app + ROS 2 Nav2 plugin; “clinical route templates” library; shift-based route adjustments; audit logs of HRPs.

    - Assumptions/dependencies: Indoor localization alignment (QR anchors); stable network; staff training; facility approval for MR use; robots operating on ROS 2 or with a bridge.

  - Bedtime/silent-hours routes

    - What: Draw paths that minimize noise/exposure by hugging walls or bypassing wards.

    - Tools/workflows: Time-of-day route switching via fleet manager; MRReP for quick edits at handover.

    - Dependencies: Policy alignment; consistent anchor persistence across shifts.

- Warehousing and Logistics

  - Lane discipline and aisle-side preferences for AMRs

    - What: Supervisors draw “keep-right/keep-left” or wide-aisle preferences on the floor to reduce interference with humans/forklifts.

    - Tools/workflows: MRReP integrated with WMS/FMS; pre-shift path validation; QR/Vuforia anchors at aisle starts.

    - Dependencies: Accurate map–world registration; throughput analysis to avoid bottlenecks.

  - Commissioning and route tuning

    - What: Rapid path definition for new routes during layout changes without re-tuning global planner costmaps.

    - Tools/workflows: “Commissioning mode” in MRReP; JSON path versioning; rollback to previous HRPs.

    - Dependencies: Safe stopping distances; Nav2 pure pursuit parameters set for loaded carts.

- Offices and Corporate Facilities

  - Socially compliant telepresence or courier routes

    - What: Admins draw routes that avoid busy corridors, meeting rooms, or narrow passages at peak times.

    - Tools/workflows: Calendar-linked route sets; MRReP quick edits during events.

    - Dependencies: Consistent spatial anchors; privacy policy for MR headset use.

- Retail and Hospitality

  - Guest-friendly delivery and cleaning paths

    - What: Staff draw visual-comfort routes around dining areas and queues to reduce guest disruption.

    - Tools/workflows: MRReP + “service mode” HRPs; per-venue route sharing.

    - Dependencies: Lighting adequate for tracking; staff familiarity with gesture input.

- Public-sector and Campus Environments

  - Event/temporary routing

    - What: Quickly author temporary detours during maintenance, exhibitions, or emergencies.

    - Tools/workflows: MRReP on a loaner headset; central repository of HRPs with effective dates.

    - Dependencies: Rapid anchor setup; incident command approval; signage coordination.

- Robotics/Software Vendors and System Integrators

  - On-site path configuration and acceptance testing

    - What: Engineers capture client-intended paths in MR to validate “what the customer meant” without extensive costmap tweaking.

    - Tools/products: MR path-editing module for Nav2; packaged “MR-to-ROS bridge” with QA checklists; path-difference reports (precision/recall/F1) as acceptance artifacts.

    - Dependencies: ROS 2 compatibility; client IT/network access; security hardening for MR devices.

- Academia and Education

  - HRI and social navigation experiments

    - What: Researchers draw experimental paths in situ to study human comfort zones and path fidelity without bespoke GUIs.

    - Tools/workflows: MRReP + dataset logging (HRPs, robot trajectories); reproducible anchor setups; classroom demos in intro robotics.

    - Dependencies: IRB approvals for human studies; stable lab anchors.

- Daily Life (tech-savvy users, facilities teams)

  - Custom cleaning/patrol routes for prosumer robots

    - What: Define “preferred” vacuum or patrol paths in offices or shared spaces.

    - Tools/workflows: MRReP-like app on headset; JSON-to-vendor format converter.

    - Dependencies: Vendor API/ROS 2 bridges; headset access; safety constraints.

Long-Term Applications

These require further research, productization, or ecosystem maturation (e.g., broader MR hardware, semantic perception, fleet-scale coordination, or standards).

- Healthcare

  - Intent-aware, compliance-checked navigation

    - What: Use MR-drawn HRPs as priors to learn hospital-wide semantic costmaps (comfort, privacy, infection zones).

    - Potential tools/products/workflows: Learning pipeline that distills repeated HRPs into dynamic cost layers; compliance checker for ISO 3691-4/IEC 80601; automatic alerts for paths near restricted zones.

    - Assumptions/dependencies: Data governance; long-term anchor stability; integration with hospital information systems.

- Warehousing/Manufacturing

  - Fleet-level lane planning and congestion control

    - What: Operators sketch “virtual lanes” and passing bays in MR; a fleet manager allocates lanes, resolves conflicts, and updates AMRs.

    - Tools/workflows: MR “lane composer” + fleet orchestration; capacity modeling; live heatmaps to refine lanes.

    - Dependencies: Centralized FMS; precise multi-robot localization; standardized lane semantics in planners.

- Smart Buildings and Digital Twins

  - MR-authored navigation layers in a building twin

    - What: Persist HRPs as a navigable layer within the BIM/digital twin; synchronize with maintenance schedules and access control.

    - Tools/workflows: APIs between MRReP, BIM, and Nav2; version-controlled “route policies” with rollback.

    - Dependencies: Cross-platform spatial anchors; BIM integration; change management.

- Retail/Hospitality

  - Customer experience optimization via learned intent

    - What: Aggregate staff-drawn HRPs and customer flow data to compute “comfort zones,” automatically shaping robot routes during peak hours.

    - Tools/workflows: Analytics backend; A/B testing; MR visualizations of predicted disruption.

    - Dependencies: Privacy-preserving flow sensing; real-time re-planning guarantees.

- Education and Workforce Training

  - Standard curricula and certification for MR-based robot commissioning

    - What: Train technicians on MR path authoring, safety overlays, and verification.

    - Tools/workflows: Simulator + MR hybrid labs; standardized HRP acceptance tests.

    - Dependencies: Industry-aligned competency frameworks; device availability.

- Policy and Safety

  - MR as a regulatory and audit interface

    - What: Inspectors visualize and approve robot routes on-site, annotate hazards, and certify clearance in MR.

    - Tools/workflows: Digital signatures on HRPs; immutable route logs; automated checks (minimum clearances, speed zones).

    - Dependencies: Standards for MR route records; cybersecurity; legal acceptance of digital audits.

- Consumer and Assistive Robotics

  - Phone-based AR path authoring for home robots

    - What: Port MRReP to ARCore/ARKit so users draw “preferred” vacuum/mower paths without a headset.

    - Tools/workflows: SLAM-anchored floor grids; vendor-neutral export format for paths.

    - Dependencies: Robust mobile AR tracking; vendor openness/APIs; UI for small screens.

- Learning and Autonomy

  - From hand-drawn paths to intent-conditioned planners

    - What: Use HRPs as demonstrations to train planners that internalize human-preferred geometry and social norms.

    - Tools/workflows: Dataset pipeline (HRP, costmaps, outcomes); offline RL/imitation learning; evaluation on fidelity and comfort metrics.

    - Dependencies: Scalable data collection; generalization across spaces; safety envelopes during learning.

- Multi-Modal Interaction

  - Language + MR hybrid path editing

    - What: Combine verbal constraints (“keep 1 m from doors, avoid reception”) with MR sketching for fast, precise authoring.

    - Tools/workflows: LLM-based constraint parser to augment HRPs with semantic cost layers; interactive MR feedback.

    - Dependencies: Reliable grounding of language to geometry; conflict-resolution logic between sketches and rules.

- Cross-Vendor Ecosystem

  - Standardized “HRP” exchange format and APIs

    - What: Open schema for hand-authored paths, anchors, and metadata usable across robot vendors and planners.

    - Tools/workflows: ROS 2 REP for HRP messages; adapters in commercial FMS; conformance test suites.

    - Dependencies: Industry consortium participation; IP/licensing agreements.

- Evaluation and Verification

  - Automated path validation and robustness testing

    - What: Stress-test MR-authored paths against dynamic obstacles and map drift; certify acceptable tracking error and comfort thresholds.

    - Tools/workflows: Digital twin simulation linked to MR; scenario generators; pass/fail scorecards.

    - Dependencies: High-fidelity simulators; standardized metrics for social comfort and fidelity.

Notes on feasibility across applications:

- Core dependencies: ROS 2/Navigation2 (or equivalent), MR headset or AR device, reliable world–map alignment (e.g., QR/spatial anchors), stable networking, and safety policies for human-shared spaces.

- Key assumptions: Indoor environments with consistent lighting and minimal drift; operator training for MR gestures; robots capable of following externally provided global paths; acceptance of MR devices in regulated settings.

- Risks/limitations: Gesture fatigue and misrecognition; anchor drift over long horizons; pure-pursuit limits on tight turns unless path smoothing or alternative controllers are used; dynamic obstacle handling may diverge from HRP without additional constraints or replanning logic.
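World–map alignment is the dependency that nearly all of these applications share. The paper performs it with a QR code recognised through the Vuforia Engine, but the transform chain is not spelled out here, so the Python sketch below states its assumptions explicitly: each drawn point is already expressed relative to the detected marker in Unity's axis convention, the marker lies flat on the floor with a surveyed pose (x, y, yaw) in the ROS map, and Unity axes are converted to ROS (REP 103) axes with the usual mapping ROS (x, y, z) = Unity (z, -x, y). All function names are hypothetical, and nothing here corrects the anchor drift listed among the risks.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]
Pose2D = Tuple[float, float, float]  # (x, y, yaw) of the QR marker in the ROS map frame


def unity_to_ros(p: Vec3) -> Vec3:
    """Unity axes (x right, y up, z forward; left-handed) to ROS REP 103 axes
    (x forward, y left, z up; right-handed)."""
    ux, uy, uz = p
    return (uz, -ux, uy)


def marker_to_map(p: Tuple[float, float], marker: Pose2D) -> Tuple[float, float]:
    """Apply the marker's planar rigid transform: rotate by yaw, then translate."""
    mx, my, yaw = marker
    c, s = math.cos(yaw), math.sin(yaw)
    return (mx + c * p[0] - s * p[1], my + s * p[0] + c * p[1])


def hrp_point_to_map(p_rel_marker_unity: Vec3, marker: Pose2D) -> Tuple[float, float]:
    """Map-frame (x, y) of a drawn point given relative to the marker in Unity axes."""
    # height is dropped because the HRP is drawn on the floor plane
    x, y, _height = unity_to_ros(p_rel_marker_unity)
    return marker_to_map((x, y), marker)
```

With a single marker, any error in its surveyed yaw grows linearly with distance from the marker, which is one reason the multi-anchor schemes mentioned in the Knowledge Gaps are attractive.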

In sum, MRReP’s validated gains in path fidelity and usability over 2D map drawing make immediate deployments attractive for supervised indoor mobile robots, while its data and interaction paradigm open longer-term directions in intent-aware autonomy, fleet orchestration, and standards for MR-authored robot navigation.

Glossary

- A*: A graph search algorithm used by global planners to find cost-optimal paths on grids or graphs. "using global planners such as A*, which generate paths mainly by optimizing geometric criteria such as path length and obstacle-related costs."

- cost tuning: Adjusting planner cost parameters to indirectly influence the robot’s route. "operators often have to rely on indirect methods such as cost tuning, repeated replanning, or discrete goal and waypoint input"

- costmap: A grid-based map encoding traversal costs (e.g., obstacles) for planning. "(b) Generated global path and costmap."

- counterbalanced: An experimental design technique that rotates condition order to reduce order effects. "they were evenly divided into two groups, and the order of system use was counterbalanced."

- effect size: A quantitative measure of the magnitude of a result or difference. "Effect sizes were also calculated to quantify the magnitude of the differences."

- False Negative (FN): A ground-truth cell not covered by the drawn region in the evaluation. "False Negative (FN): Cells belonging to the GT but not the drawn region."

- False Positive (FP): A drawn-region cell that does not belong to the ground truth in the evaluation. "False Positive (FP): Cells belonging to the drawn region but not the GT."

- F1-score: The harmonic mean of precision and recall used to assess path fidelity. "F1-score: Harmonic mean of Precision and Recall."

- gRPC: A high-performance RPC framework used here for robot-API communication. "Communication between ROS 2 and Kachaka is handled through the gRPC-based kachaka-api, which provides robot control and state retrieval functions"

- Ground Truth (GT): The reference, correct path region used for accuracy comparisons. "The spatial accuracy of the HRP was evaluated by comparing it with the Ground Truth (GT) derived from the target path indicated by tape on the floor."

- Hand-drawn Reference Path (HRP): A user-sketched trajectory intended to guide robot navigation. "The user draws a Hand-drawn Reference Path (HRP) directly on the physical floor while observing the real environment through an MR head-mounted display."

- Hand-drawn Reference Path Planner: A planner that converts the HRP point sequence into a global path. "we develop a custom Hand-drawn Reference Path Planner that converts the drawn HRP into a global path within the robot navigation stack"

- lookahead distance: The forward distance used by a tracking controller to choose a target point on the path. "a target point selected with a predefined lookahead distance."

- Mixed Reality (MR): Technology blending digital content with the physical environment for in-situ interaction. "Recent mixed reality (MR) interfaces have enabled more intuitive spatial interaction in the physical environment."

- MR-HMD: A mixed reality head-mounted display for visualizing and authoring spatial content. "(a) A user draws a Hand-drawn Reference Path (HRP) directly on the physical floor using hand gestures and an MR-HMD."

- Navigation2 (Nav2) stack: The ROS 2 navigation framework providing planning, control, and behavior execution. "path following along the HRP is executed using the Nav2 stack in ROS 2."

- negative constraints: Explicitly specified regions the robot should avoid during planning. "these approaches mainly support discrete destination specification or negative constraints, rather than direct specification of a continuous path."

- occupancy grid maps: Discretized maps where each cell represents occupancy probability for navigation. "navigation is commonly performed on occupancy grid maps using global planners such as A*"

- Pure Pursuit controller: A geometric path-tracking controller that steers toward a lookahead point on the path. "A Pure Pursuit controller computes translational and angular velocities from a locally transformed path and a target point selected with a predefined lookahead distance."

- QR code: A fiducial marker used here for cross-system coordinate alignment. "HoloLens 2-based QR code recognition using the Vuforia Engine."

- Recall: The fraction of ground-truth path cells covered by the drawn region. "Recall: Proportion of GT cells covered by the drawn region."

- ROS 2: A middleware framework for robotics used for messaging, navigation, and control. "Unity and ROS 2 communicate via ROS-TCP-Connector and ROS-TCP-Endpoint."

- ROS-TCP-Connector: The Unity-side component that connects to ROS 2 over TCP for topic exchange. "Unity uses a ROS-TCP-Connector configured with the IP address and port of the ROS 2 host to exchange topic messages."

- ROS-TCP-Endpoint: The ROS 2-side TCP server that interfaces with Unity for ROS messaging. "ROS-TCP-Endpoint runs on the ROS 2 side as a TCP server"

- Specificity: The fraction of non-ground-truth cells correctly left undrawn. "Specificity: Proportion of non-GT cells where no path was drawn."

- System Usability Scale (SUS): A standardized questionnaire for assessing perceived usability. "Usability and mental workload were evaluated using the System Usability Scale (SUS) and the NASA Task Load Index (NASA-TLX), respectively."

- True Negative (TN): A cell correctly identified as not part of the path in the evaluation. "True Negative (TN): Cells belonging to neither the GT nor the drawn region."

- True Positive (TP): A cell correctly identified as part of the path in the evaluation. "True Positive (TP): Cells belonging to both the GT and the drawn region."

- Vuforia Engine: A computer-vision SDK used for marker detection and spatial registration. "HoloLens 2-based QR code recognition using the Vuforia Engine."

- waypoint: A discrete point along a path used to define or follow a trajectory. "a new waypoint is generated when the distance between the current cursor position and the previous waypoint exceeds a predefined threshold"

- Wilcoxon signed-rank test: A nonparametric statistical test for paired comparisons. "a Wilcoxon signed-rank test was used to compare the MR and 2D conditions."

- within-subject experiment: A study design where each participant experiences all conditions for direct comparison. "We evaluated MRReP in a within-subject experiment against a conventional 2D baseline interface."
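The Pure Pursuit controller and lookahead distance entries describe how the robot tracks the HRP. A minimal Python sketch of classic (unregulated) Pure Pursuit, assuming the path is a list of map-frame points and the robot pose is (x, y, yaw): it transforms the path into the robot frame, takes the first point ahead of the robot that is at least `lookahead` metres away, and steers along the circular arc through that point, with curvature `2 * y / (x**2 + y**2)`. Nav2's Regulated Pure Pursuit additionally scales speed down on high curvature and near obstacles, which this sketch omits; all names are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Pose = Tuple[float, float, float]  # (x, y, yaw) in the map frame


def to_robot_frame(p: Point, robot: Pose) -> Point:
    """Express a map-frame point in the robot frame (x forward, y left)."""
    dx, dy = p[0] - robot[0], p[1] - robot[1]
    c, s = math.cos(robot[2]), math.sin(robot[2])
    return (c * dx + s * dy, -s * dx + c * dy)


def pure_pursuit(path: List[Point], robot: Pose, lookahead: float,
                 v: float) -> Tuple[float, float]:
    """Return (linear, angular) velocity steering toward the lookahead point."""
    if not path:
        raise ValueError("empty path")
    local = [to_robot_frame(p, robot) for p in path]
    # first point ahead of the robot that is at least `lookahead` away;
    # near the end of the path, fall back to the final point
    target = next((p for p in local
                   if p[0] > 0.0 and math.hypot(p[0], p[1]) >= lookahead),
                  local[-1])
    dist2 = target[0] ** 2 + target[1] ** 2
    if dist2 < 1e-9:
        return 0.0, 0.0  # already at the target point
    curvature = 2.0 * target[1] / dist2  # arc through the robot origin and the target
    return v, v * curvature
```

A positive angular velocity turns the robot left (counter-clockwise), toward targets with positive robot-frame `y`; a longer lookahead gives smoother but more corner-cutting tracking of the piecewise-linear HRP.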
