- The paper introduces C-TRAIL, which integrates LLM-derived commonsense with a dual trust mechanism to enhance trajectory planning in autonomous driving.

- It employs a closed-loop Recall–Plan–Update cycle combined with a Dirichlet trust-guided policy within MCTS to dynamically adapt to novel, safety-critical situations.

- Empirical evaluations show significant trajectory accuracy improvements and robust performance in unseen environments, with notable gains in ADE, FDE, and success rates.

C-TRAIL: Trust-Aware Commonsense Trajectory Planning with LLMs for Autonomous Driving

Problem Motivation and Conceptual Framework

Trajectory planning in autonomous driving is challenged by dynamic, previously unseen environments where traditional world models require extensive observational data and struggle with generalization and robustness due to dataset bias and overfitting. Inspired by the cognitive capability of humans to apply abstract commonsense reasoning—such as understanding road structure and traffic conventions—when planning routes in unfamiliar scenarios, this paper introduces C-TRAIL, a planning framework that systematically integrates LLM-derived commonsense with reliable trust mechanisms.

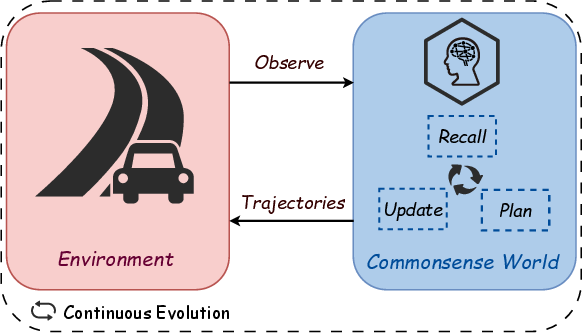

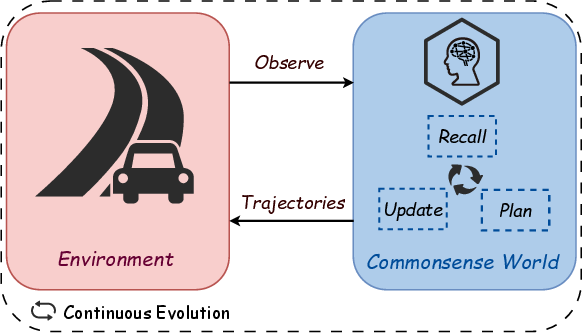

C-TRAIL operationalizes trajectory planning through a human-inspired closed-loop paradigm: Recall, Plan, and Update. Here, commonsense knowledge is dynamically recalled from LLMs, judiciously filtered and weighted via dual trust scoring, fed into MCTS planning via a Dirichlet trust-guided policy, and adaptively recalibrated with environmental feedback. The resulting system produces robust, adaptive policies capable of generalizing to novel and safety-critical situations (Figure 1).

Figure 1: The commonsense world paradigm for trajectory planning in dynamic environments.

Architecture: Trust-Aware Closed-Loop Cycle

The C-TRAIL architecture consists of three tightly coupled modules:

Trust-Aware Commonsense Recall

Vehicle and environment states are encoded as kinematic feature vectors and structured spatial relations. LLMs are queried for semantic relations and high-level action proposals using a standardized prompt, which is independently sampled multiple times. Trust scores are computed along two axes:

- Commonsense trust: entropy-based assessment of consistency across LLM outputs.

- Kinematic trust: verification of physical feasibility of proposed actions.

Unreliable or inconsistent commonsense (high-entropy or physically infeasible actions) is filtered using a gating mechanism. The resulting trust-weighted commonsense graph is encoded via a transformer for downstream planning.
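The dual trust scoring and gating described above can be illustrated with a minimal sketch. It is not the paper's implementation: the action set, the acceleration values, the speed limits, the product used to combine the two scores, and the threshold `tau` are all assumptions chosen for illustration.

```python
import math
from collections import Counter

# Hypothetical action set and per-action accelerations (m/s^2); not from the paper.
ACTION_ACCEL = {"keep": 0.0, "accelerate": 2.0, "brake": -4.0,
                "lane_left": 0.0, "lane_right": 0.0}

def commonsense_trust(samples, num_actions=len(ACTION_ACCEL)):
    """Return 1 - normalized Shannon entropy of the sampled answers, in [0, 1]."""
    n = len(samples)
    counts = Counter(samples)
    entropy = -sum((c / n) * math.log(c / n) for c in counts.values())
    return min(1.0, max(0.0, 1.0 - entropy / math.log(num_actions)))

def kinematic_trust(action, speed, dt=1.0, v_max=30.0, a_max=5.0):
    """Return 1.0 if the action keeps acceleration and next speed within the assumed limits."""
    accel = ACTION_ACCEL[action]
    next_speed = speed + accel * dt
    feasible = abs(accel) <= a_max and 0.0 <= next_speed <= v_max
    return 1.0 if feasible else 0.0

def gated_proposal(samples, speed, tau=0.5):
    """Return (majority action or None, combined trust); None means the proposal is filtered."""
    majority = Counter(samples).most_common(1)[0][0]
    trust = commonsense_trust(samples) * kinematic_trust(majority, speed)
    return (majority, trust) if trust >= tau else (None, trust)
```

With five identical samples the entropy is zero and the proposal passes the gate if it is feasible; fully inconsistent samples, or a feasibility violation, drive the combined trust to zero and the proposal is dropped.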

Trust-Guided Planning

LLM-derived action priors are transformed into a Dirichlet policy whose concentration parameters are dynamically modulated by the global trust score. This Dirichlet prior is injected into a modified PUCT selection rule in MCTS, so that planning is adaptively guided: it exploits LLM commonsense under high trust and explores alternatives under low trust. LLM query results are cached and reused across multiple simulations, which improves both efficiency and robustness.
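A rough sketch of how a trust-modulated Dirichlet prior could steer PUCT selection is given below. The summary does not specify the exact parameterization or the modified PUCT rule, so the concentration formula `1 + kappa * trust * p`, the use of the Dirichlet mean as the prior, and the constants `kappa` and `c_puct` are assumptions; the selection score follows the standard AlphaZero-style PUCT form.

```python
import math

def dirichlet_concentration(llm_prior, trust, kappa=10.0):
    """Assumed form: alpha_a = 1 + kappa * trust * p_a (uniform when trust is 0)."""
    return {a: 1.0 + kappa * trust * p for a, p in llm_prior.items()}

def dirichlet_mean(alpha):
    """Mean of a Dirichlet distribution: alpha_a / sum(alpha)."""
    total = sum(alpha.values())
    return {a: v / total for a, v in alpha.items()}

def puct_select(q_values, visits, prior, c_puct=1.5):
    """Pick the action maximizing Q(a) + c_puct * P(a) * sqrt(N + 1) / (1 + N(a))."""
    total_visits = sum(visits.values())

    def score(action):
        bonus = c_puct * prior[action] * math.sqrt(total_visits + 1) / (1 + visits[action])
        return q_values[action] + bonus

    return max(prior, key=score)

llm_prior = {"keep": 0.7, "brake": 0.2, "accelerate": 0.1}  # illustrative values
high_trust_prior = dirichlet_mean(dirichlet_concentration(llm_prior, trust=1.0))
low_trust_prior = dirichlet_mean(dirichlet_concentration(llm_prior, trust=0.0))
```

Under this parameterization a trust of 0 collapses the prior to uniform, so the exploration bonus no longer favors the LLM's preferred action, while a trust of 1 concentrates the prior on it.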

Trust Calibration Update

Upon execution, environmental feedback (new state, reward) is used to recalibrate trust scores and Dirichlet concentration parameters. Masked blending and reward-adaptive decay distinguish knowledge reliability from environmental stochasticity. An exponential moving average keeps policy updates temporally coherent and prevents abrupt adaptation.
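The update step can be pictured as a masked exponential moving average. The sketch below is only one plausible reading: the per-relation trust dictionary, the binary agreement signal, and in particular the reward-scaled rate (a stand-in for the reward-adaptive decay, whose exact form is not given here) are hypothetical.

```python
def calibrate_trust(trust, outcome_ok, observed, reward, beta=0.2):
    """Blend each observed relation's trust toward 1 (confirmed) or 0 (contradicted).

    trust:      dict mapping relation name -> current trust in [0, 1]
    outcome_ok: dict mapping relation name -> True if feedback confirmed it
    observed:   set of relations that the new state allowed us to check (the mask)
    reward:     scalar environment reward for the executed step
    """
    updated = dict(trust)
    # Hypothetical reward-adaptive rate: a negative reward enlarges the step, capped at 1.
    rate = min(1.0, beta * (1.0 + max(0.0, -reward)))
    for key in observed:  # masked blending: unobserved relations keep their old trust
        target = 1.0 if outcome_ok[key] else 0.0
        updated[key] = (1.0 - rate) * trust[key] + rate * target
    return updated
```

Because only observed relations are updated and each update moves a fraction `rate` of the way toward the target, a single contradictory observation lowers trust gradually rather than resetting it.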

Numerical Evaluation and Ablations

Experiments were performed on four Highway-env scenarios (highway, merge, roundabout, intersection) and two real-world datasets (highD, rounD) comprising 105 step-level samples per scenario. Baselines spanned classical planners, fine-tuned LLM trajectory predictors, and LLM-guided planners without explicit trust calibration.

Key Results:

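- Average displacement error (ADE) is reduced by 40.2%, final displacement error (FDE) by 51.7%, and success rate (SR) improves by 16.9 percentage points (pp) over state-of-the-art methods, all statistically significant at p < 0.05.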

- SR degradation in unseen environments averaged only 1.7 pp; classical and non-trust-aware LLM planners experienced much heavier performance drops.

- Dual-trust mechanism raised relation prediction accuracy (RPA) from 63.4% (raw LLM) to 86.1%, directly impacting planning success rate.
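For readers unfamiliar with the metrics, ADE and FDE are standard trajectory errors: the mean Euclidean distance between predicted and ground-truth positions over all time steps, and the distance at the final step. A small sketch with made-up 2D trajectories (the coordinates are illustrative, not data from the paper):

```python
import math

def ade(pred, truth):
    """Average displacement error: mean point-wise Euclidean distance."""
    return sum(math.dist(p, t) for p, t in zip(pred, truth)) / len(pred)

def fde(pred, truth):
    """Final displacement error: Euclidean distance at the last time step."""
    return math.dist(pred[-1], truth[-1])

pred = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]   # illustrative predicted positions
truth = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)]  # illustrative ground-truth positions
print(ade(pred, truth), fde(pred, truth))
```

Note that the ADE and FDE figures above are relative reductions in percent, whereas the SR figures are absolute differences in percentage points.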

Ablation Findings:

- Removing the Dirichlet prior caused the largest SR drop (−28.9 pp in the intersection scenario), confirming the criticality of trust-calibrated priors.

- Removing dual-trust filtering or the adaptive update also degraded performance, highlighting the necessity of reliability quantification and temporal smoothing.

Robustness and Safety

The trust mechanism reliably detects and attenuates LLM errors—when random incorrect outputs are injected, trust scores drop sharply, automatically triggering exploratory MCTS policies instead of following unreliable guidance. C-TRAIL maintains stable SR under aggressive NPC behaviors, with substantially smaller performance drops compared to non-trust-aware LLM planners.

Computational and Practical Considerations

While classical planners are computationally faster, LLM-based approaches including C-TRAIL provide substantially higher planning accuracy. LLM latency dominates the C-TRAIL inference pipeline (over 74% of the total), while the MCTS and trust update modules are lightweight. Modular integration supports deployment in AV stacks, and the planner degrades gracefully under high LLM latency or low trust scores.

Failure Modes and Mitigations

Failure analysis reveals a predominance of policy errors due to conflicting action priors in high-complexity settings, followed by model errors (incomplete commonsense recall) and mapping errors (incorrect action grounding). The distribution of failure types remains similar between simulated and real-world domains, motivating ensemble querying, conservative trust thresholds, and improved action grounding for mitigation.

Existing literature addresses isolated modules (recall, planning, or adaptation) but lacks comprehensive integration of trust-aware commonsense and dynamic real-time calibration. The unified Recall–Plan–Update cycle of C-TRAIL is unique in establishing continuous reliability assessment and closed-loop adaptation in trajectory planning.

Conclusion

C-TRAIL introduces a principled integration of LLM-derived commonsense into trajectory planning for autonomous driving, grounded in trust-weighted reasoning and adaptive closed-loop calibration. Empirical results substantiate significant gains in trajectory accuracy and planning robustness. The trust mechanism is essential for generalization and safety, especially in complex or unseen environments.

Future work can extend C-TRAIL to multi-agent settings, leverage multimodal grounding, and validate on high-fidelity simulators with continuous vehicle dynamics for enhanced realism and safety verifiability.