- The paper reveals that Trusted Flaggers operate under severe funding and resource constraints, limiting their effectiveness in reporting illegal content.

- The study uses semi-structured interviews and validation workshops to document challenges in accreditation, technical hurdles, and inconsistent reporting practices.

- The findings call for policy reforms including harmonized reporting protocols, standardized processes, and dedicated funding to support TF operations.

"There is literally zero funding": The Role, Challenges, and Accountability of Trusted Flaggers under the EU Digital Services Act

Introduction and Policy Context

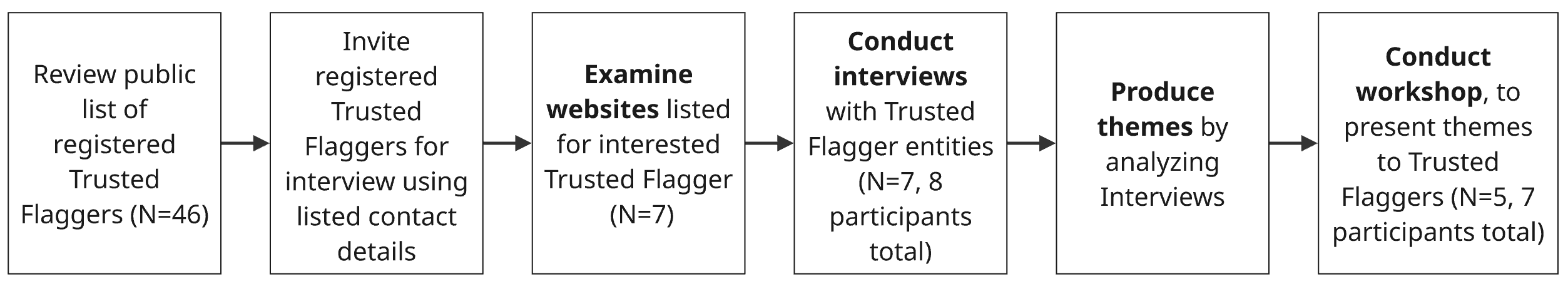

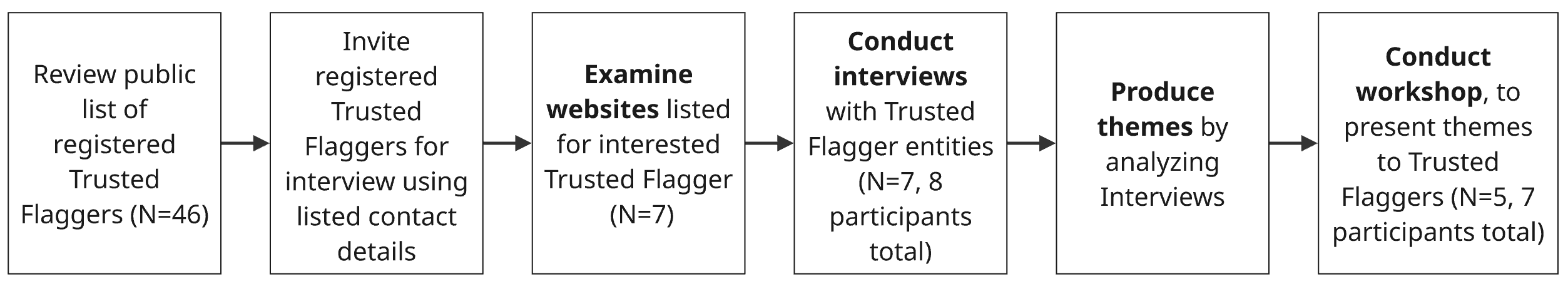

The Digital Services Act (DSA) introduces a formally accredited "Trusted Flagger" (TF) mechanism aimed at accelerating reporting and removal of illegal content from online platforms. TFs are independent entities granted platform-prioritized status by national Digital Services Coordinators (DSCs), expected to deploy domain-specific expertise in identifying, documenting, and reporting illicit material. This paper interrogates the lived experience of seven TF organizations, through semi-structured interviews and a peer validation workshop, to surface practical, resource, and coordination challenges encountered during the DSA's initial year of implementation (2603.29874).

Accreditation, Mandate Setting, and Operational Processes

TF accreditation is procedurally burdensome, often demanding hundreds of hours per entity. Applicants must submit granular financial, organizational, and competence evidence, including proof of platform independence and legal expertise, to DSCs. The mandate is strictly scoped, bounded by the designated illegal content categories that an organization is permitted to flag. Organizations resist mission drift for two reasons: pressure to expand comes without resources, and notice preparation is subject to legal requirements for impartiality.

TFs devote substantial labor to monitoring, documenting, compiling evidence, producing structured reports, submitting through platform interfaces, tracking response latencies, and generating annual audit artifacts. This process often involves constructing internal monitoring tools leveraging both algorithmic and manual workflows, including AI-based scanning for candidate content and post hoc human validation.

Figure 1: Steps in engagement with Trusted Flagger entities, outlining multi-stage accreditation, reporting, liaison, and feedback cycles.

Resource Constraints and Volunteer Labor

Despite critical escalation responsibilities, TFs report an unequivocal absence of additional funding streams, echoing the titular claim of "literally zero funding." Staff tasked with TF work operate in a volunteer capacity or add reporting to an already overloaded schedule, typically without dedicated budget or staffing allocation. Membership fees, donations, and government grants supply modest support, yet no systemic financing has materialized for DSA-generated TF workloads. This dynamic induces vulnerability, especially when platforms contest TF evidence in legal proceedings, and causes organizations to narrow their activities strictly within their accredited mandate.

User Interaction, Visibility, and Representational Gaps

TFs exhibit divergent models of user engagement. Some serve an indirect societal benefit by proactively surfacing violations; others interface reactively with users, NGOs, or community groups submitting incident reports. TF visibility is not standardized: website disclosures are inconsistent and cross-linguistic accessibility is limited. This confuses end users about the recourse available to them and reduces opportunities for under-represented causes to gain TF representation.

Participating TFs recurrently confront friction from platforms, especially in the operationalization of reporting prioritization. Technical implementations vary idiosyncratically: submission channels, URL caps, account verification procedures, and feedback mechanisms are neither standardized nor uniformly compliant. Some platforms cap submission volumes, force convoluted account registration, or selectively automate report handling. Smaller platforms often fall outside DSA obligations, further fragmenting the TF impact surface.

Platform incentives frequently diverge from TF priorities, with evidence suggesting platform policies both buttress and undermine TF reporting. The lack of harmonized reporting APIs and workflows across platforms not only slows incident response but also creates opaque, labor-intensive moderation feedback loops.
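To make the tooling overhead concrete, the following is a minimal sketch of how differing URL caps can force a TF to split one notice into several submissions. The `Notice` fields, the platform names, the cap values in `URL_CAPS`, and the helper functions are all hypothetical illustrations; none of them comes from the paper or from any platform's actual interface.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Notice:
    """One platform-neutral notice (field names are illustrative assumptions)."""
    category: str            # accredited illegal-content category
    urls: Tuple[str, ...]    # locations of the flagged content
    justification: str       # short legal reasoning for the notice


# Hypothetical per-platform limits on URLs per submission; None means no cap.
URL_CAPS: Dict[str, Optional[int]] = {
    "platform_a": 10,
    "platform_b": 3,
    "platform_c": None,
}


def split_notice(notice: Notice, cap: Optional[int]) -> List[Notice]:
    """Split a notice into submissions that each respect the URL cap."""
    if cap is None:
        return [notice]
    if cap < 1:
        raise ValueError("cap must be a positive integer or None")
    return [
        Notice(notice.category, notice.urls[i:i + cap], notice.justification)
        for i in range(0, len(notice.urls), cap)
    ]


def submissions_needed(notice: Notice, caps: Dict[str, Optional[int]]) -> Dict[str, int]:
    """Count how many separate submissions the same notice requires per platform."""
    return {platform: len(split_notice(notice, cap)) for platform, cap in caps.items()}
```

Under these assumed caps, a single notice covering seven URLs would need one submission on two of the platforms and three on the third. A harmonized protocol would remove the need for this kind of per-platform adapter.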

Legal and Operational Ambiguity

What qualifies as "illegal" content in practice is frequently ambiguous. TFs report high takedown success for clear-cut intellectual property violations but struggle with edge cases in political or context-sensitive material. Automated moderation tools deployed by platforms occasionally misclassify or ignore TF reports, further complicating evidentiary substantiation and downstream transparency.

Accountability and Transparency Artifacts

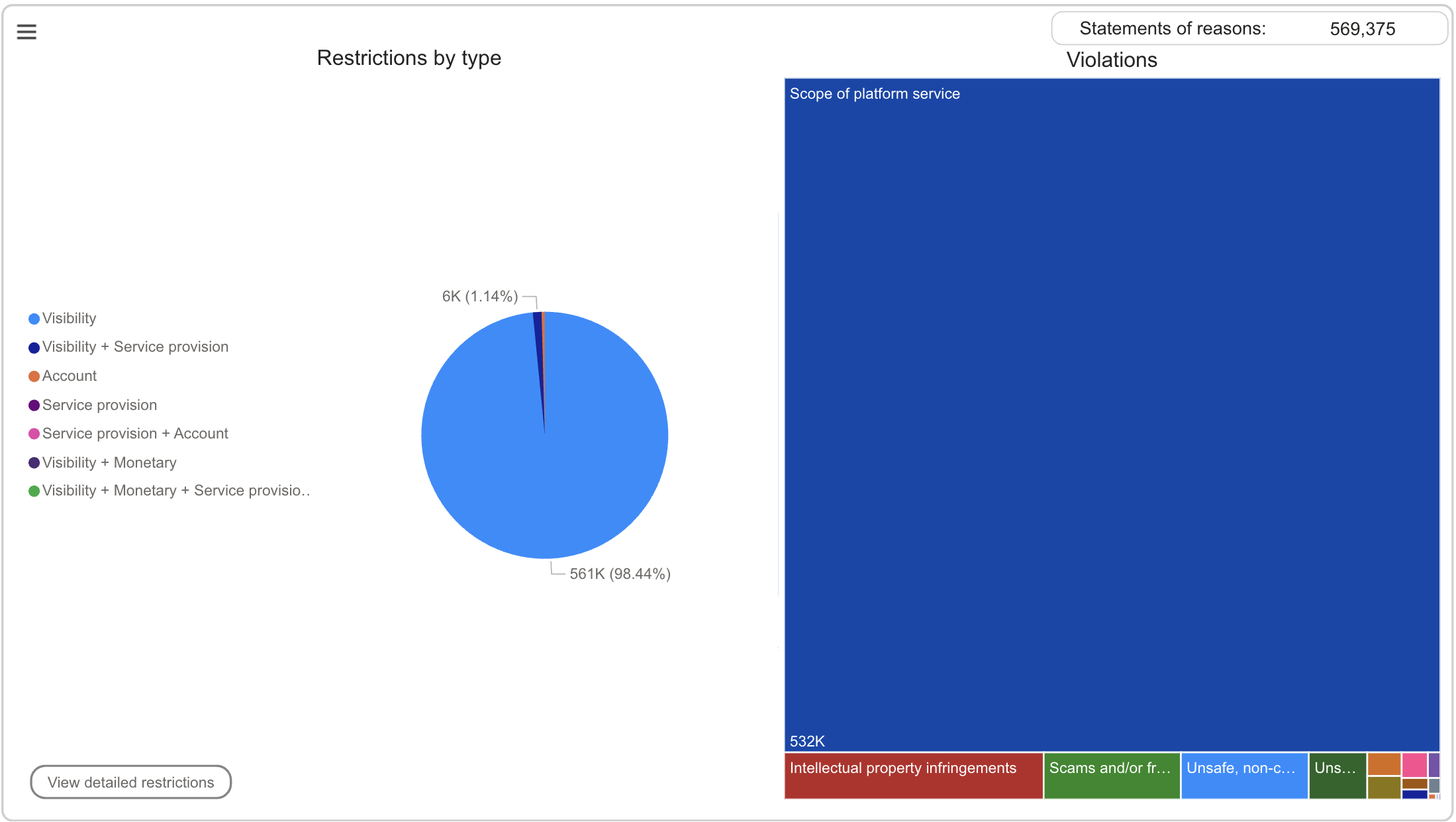

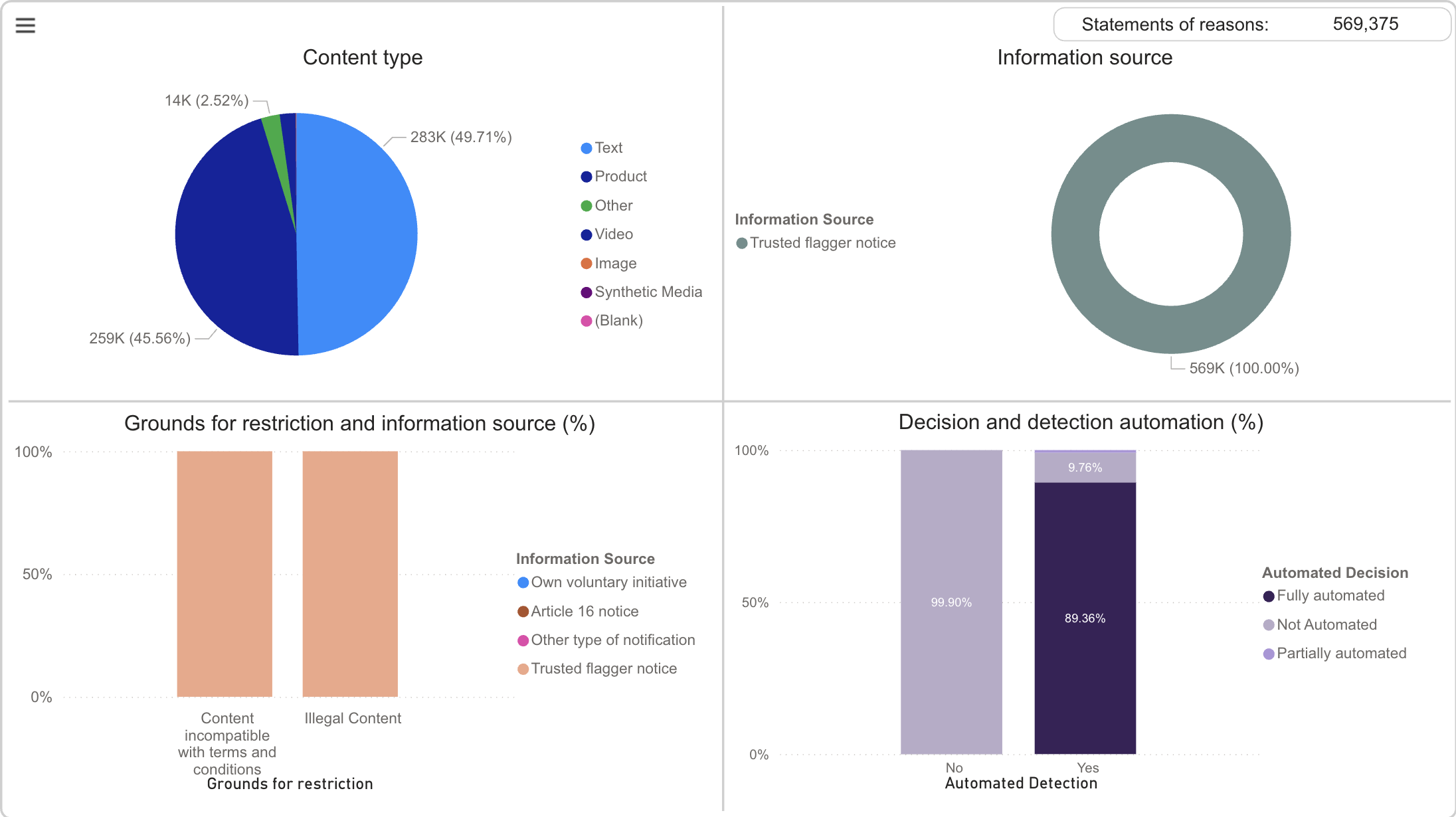

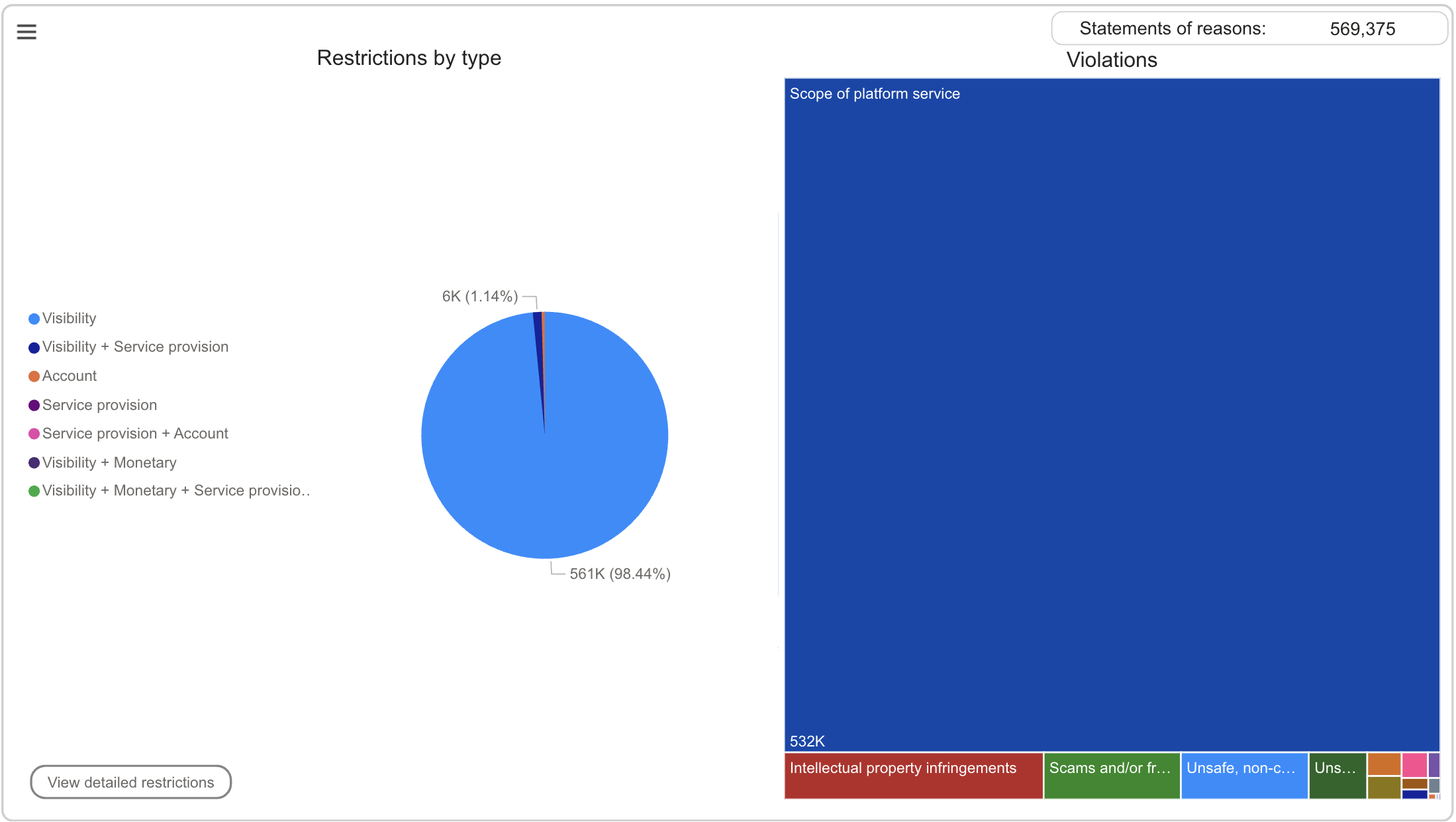

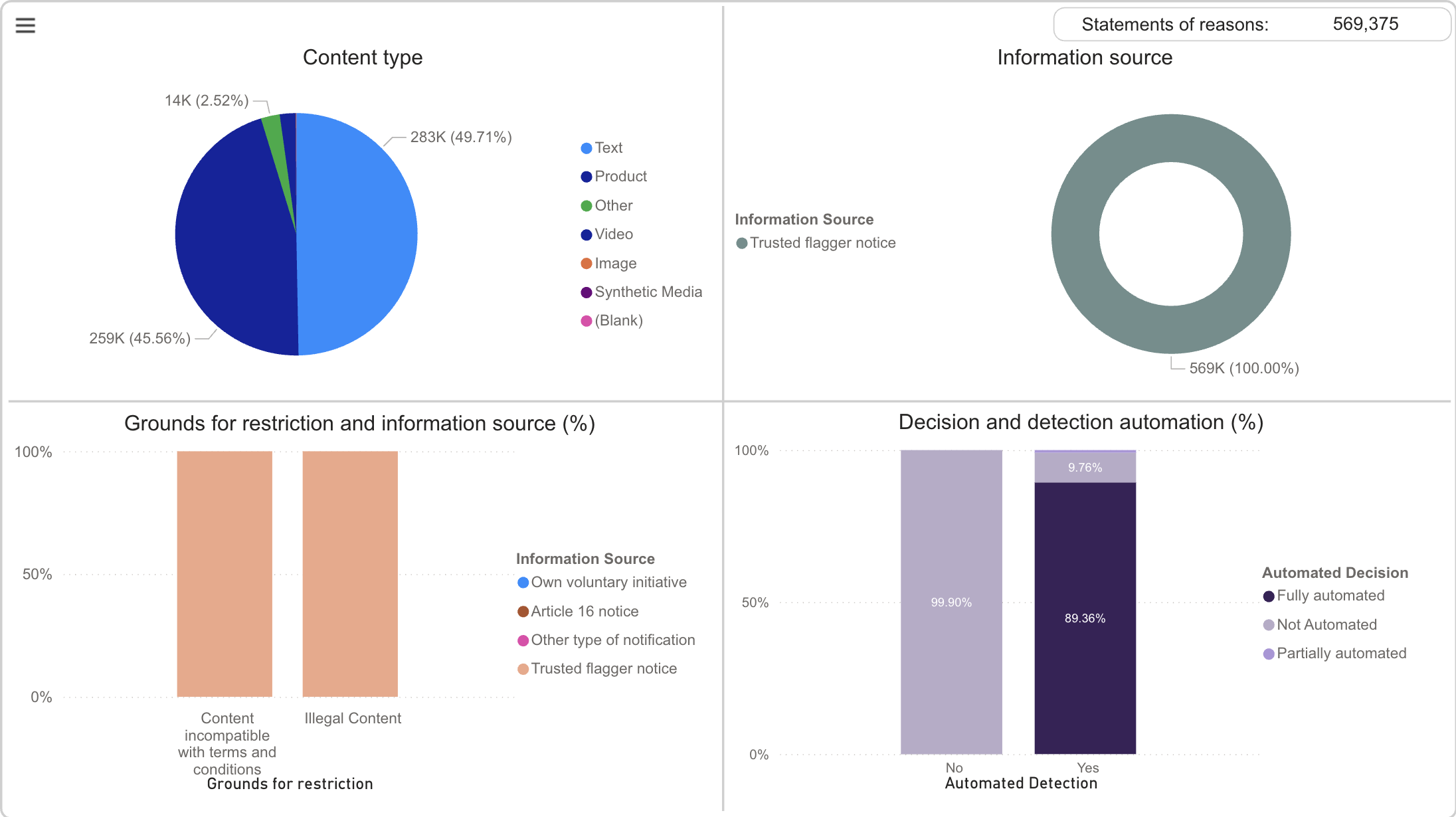

Analysis of the DSA Statement of Reasons (SOR) database exposes substantive gaps in transparency and accountability. TFs note discrepancies between the volume of reports they actually flag and the volume documented in SOR artifacts, suggesting that platform discretion or technical infrastructure fails to register TF activity adequately. As a result, the dominant restriction categories and violation types in database analytics often misrepresent the scope and impact of TF labor.
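The discrepancy described above can be illustrated with a small reconciliation sketch that compares a TF's own submission log against per-platform counts of SOR entries attributed to Trusted Flaggers. The data shapes and the `reconcile` helper are assumptions for illustration only. The sketch also assumes a one-to-one mapping between notices and SOR entries, which may not hold in practice because statements of reasons record platform decisions rather than incoming notices.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def reconcile(
    tf_log: Iterable[Tuple[str, str]],
    sor_counts: Dict[str, int],
) -> Dict[str, Dict[str, int]]:
    """Compare notices a TF logged per platform with SOR entries credited to TFs.

    tf_log:     (platform, notice_id) pairs from the TF's own records (hypothetical).
    sor_counts: platform -> number of SOR entries attributed to Trusted Flaggers.
    """
    submitted = Counter(platform for platform, _notice_id in tf_log)
    report: Dict[str, Dict[str, int]] = {}
    for platform in sorted(set(submitted) | set(sor_counts)):
        sent = submitted.get(platform, 0)
        recorded = sor_counts.get(platform, 0)
        # A positive gap means the TF logged more notices than the SOR data shows.
        report[platform] = {"submitted": sent, "in_sor": recorded, "gap": sent - recorded}
    return report
```

A persistent positive gap for a platform would be one possible signal that TF activity is under-registered there, although it cannot by itself distinguish platform discretion from technical limitations.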

Figure 2: SOR database dashboard capturing restriction types and violation categories for Trusted Flagger notices, indicating severe reporting distortion.

Figure 3: SOR database graphic detailing content type and moderation measure frequencies, highlighting limited automation and content diversity in TF notices.

Coordination Challenges and Workshop Findings

TFs describe inter-organizational and cross-platform coordination as challenging, with early standardization efforts gaining little practical traction. Accreditation criteria vary across member states, leading to differing onboarding and operational practices. The workshop validated that technical constraints, platform channel fragmentation, and methodological underspecification in measuring prioritization (e.g., takedown latency computation) are shared pain points.
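Why the underspecified latency measurement matters can be shown with a short sketch: the same set of notices yields very different median latencies depending on how unanswered notices are treated. Both conventions below, and the function names, are illustrative assumptions rather than methods defined by the paper or the DSA.

```python
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional, Tuple

# Each notice is (submitted_at, actioned_at); actioned_at is None if still unanswered.
NoticeTimes = Tuple[datetime, Optional[datetime]]


def _hours(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def median_latency_resolved_only(notices: List[NoticeTimes]) -> Optional[float]:
    """Convention A: ignore notices the platform has not acted on yet."""
    values = [_hours(s, a) for s, a in notices if a is not None]
    return median(values) if values else None


def median_latency_with_open(
    notices: List[NoticeTimes], observed_until: datetime
) -> Optional[float]:
    """Convention B: count open notices as waiting until the end of observation.

    For open notices this is only a lower bound on the true latency.
    """
    values = [_hours(s, a if a is not None else observed_until) for s, a in notices]
    return median(values) if values else None


# Illustrative data: two notices actioned quickly, two still open after 72 hours.
t0 = datetime(2025, 1, 1)
sample = [
    (t0, t0 + timedelta(hours=2)),
    (t0, t0 + timedelta(hours=4)),
    (t0, None),
    (t0, None),
]
print(median_latency_resolved_only(sample))                       # 3.0
print(median_latency_with_open(sample, t0 + timedelta(hours=72)))  # 38.0
```

With this made-up sample, ignoring open notices reports a median of 3 hours, while counting them reports 38 hours. Without an agreed convention, figures from different TFs or platforms are not directly comparable.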

The lack of systematic onboarding by regulators (e.g., DSCs), of contact point maintenance, and of template sharing exacerbates coordination costs. TFs call for centralized guidelines and best-practice forums, noting that workshop-style exchanges facilitate practical learning and operational harmonization.

Implications, Recommendations, and Future Directions

Practical Implications

The findings directly challenge normative assumptions underlying the DSA's Trusted Flagger regime:

- Resource deficit: The absence of funding is systematic, risking burnout, mission contraction, and unequal cause representation.

- Platform fragmentation: Platform-driven heterogeneity in reporting processes undermines procedural efficiency as well as regulatory objectives.

- Accountability gap: Transparency artifacts (e.g., SOR) do not adequately document TF contributions, providing a poor basis for evaluation, audit, and user redress.

- Representation bias: Causes lacking established, resourced organizations remain effectively excluded, a limitation compounded by the TF mandate-centric mechanism.

Recommendations

- Standardization: Accelerate regulatory and industry adoption of harmonized reporting protocols and legal compliance APIs, as envisaged under DSA Art. 44(1)(c), to reduce coordination and tooling overhead.

- Community and knowledge commons: Institutionalize regular TF workshops and cross-national forums, led by DSCs and supported by academic actors, to share templates, metrics, and lessons learned.

- Inclusive outreach: Proactively identify under-represented user groups and causes, leveraging privacy clinic models and GSE outreach to address reporting and support gaps.

- Public guidance: Develop educational materials for end users to clarify what constitutes harmful or illegal content and enumerate pathways for engaging TF organizations.

Theoretical and Policy Implications

From a regulatory theory perspective, TFs embody a hybrid "expert crowd-sourced" enforcement mechanism, but structural resource constraints and opaque platform interaction risk entrenching exclusionary dynamics, reducing the efficacy of formalized reporting speed and prioritization. In future DSA iterations and related platform regulation, explicit funding allocation, onboarding channel centralization, and outcome-sensitive transparency metrics are vital for corrective adaptation.

Future Research

There is a need for studies that:

- Quantify the resource burden on TFs and model optimal financing schemes for sustainable enforcement.

- Analyze platform compliance behavior and technical implementation of TF prioritization in practice.

- Develop audits and metrics that capture lived TF effort and platform response beyond what is exposed in the SOR database.

- Explore TF impact on the digital safety of marginalized or at-risk populations, with special attention to representation and efficacy in non-English, non-mainstream contexts.

Conclusion

Trusted Flaggers under the DSA accelerate platform action and provide a conduit for expert-driven reporting of illegal content but do so under acute resource constraints, procedural fragmentation, and accountability gaps. Transparency artifacts insufficiently reflect actual labor, while platform incentives and technical implementations thwart procedural uniformity. Addressing these shortcomings demands regulatory standardization, funding reform, coordination infrastructure, and inclusive outreach strategies. Without these, the TF mechanism risks reinforcing existing representational deficits and procedural inefficiencies, undermining its intended role as a cornerstone in European online safety governance.