- The paper reveals that prompt entry now serves as the primary onboarding method, bypassing traditional tutorials and documentation.

- It highlights distinct iterative practices: professionals employ detailed, efficient prompts while casual users rely on external AI chaining for refinements.

- The study shows that credit constraints and varying output evaluation criteria shape user interactions, suggesting a need for dual-mode interface designs.

Study Context and Methodological Overview

This paper presents an empirical investigation of workflow adaptation, onboarding, and help-seeking strategies among casual and professional users of prompt-driven, generative 3D modeling tools. Using Meshy AI and Spline AI as representative commercial platforms, the study examines how learnability is changing in creative toolchains mediated by generative models. The authors ran an observational study with 26 participants (14 casual, 12 professional), combining in-situ task completion with semi-structured interviews across three generative tasks: text-to-3D, image-to-3D, and open-ended model generation. The analysis uses reflexive thematic analysis to trace onboarding and learning trajectories, explicitly comparing behaviors across levels of expertise and under resource (credit) constraints.

Collapse of Traditional Onboarding: The Primacy of the Prompt

The results reveal that prompt entry has emerged as the de facto onboarding gateway, superseding engagement with tutorials, manuals, or even built-in contextual help. Most participants—regardless of prior 3D experience—began with immediate prompt formulation, collapsing the deliberative space of onboarding into direct system interaction via language instructions, rather than traditional exploratory feature discovery.

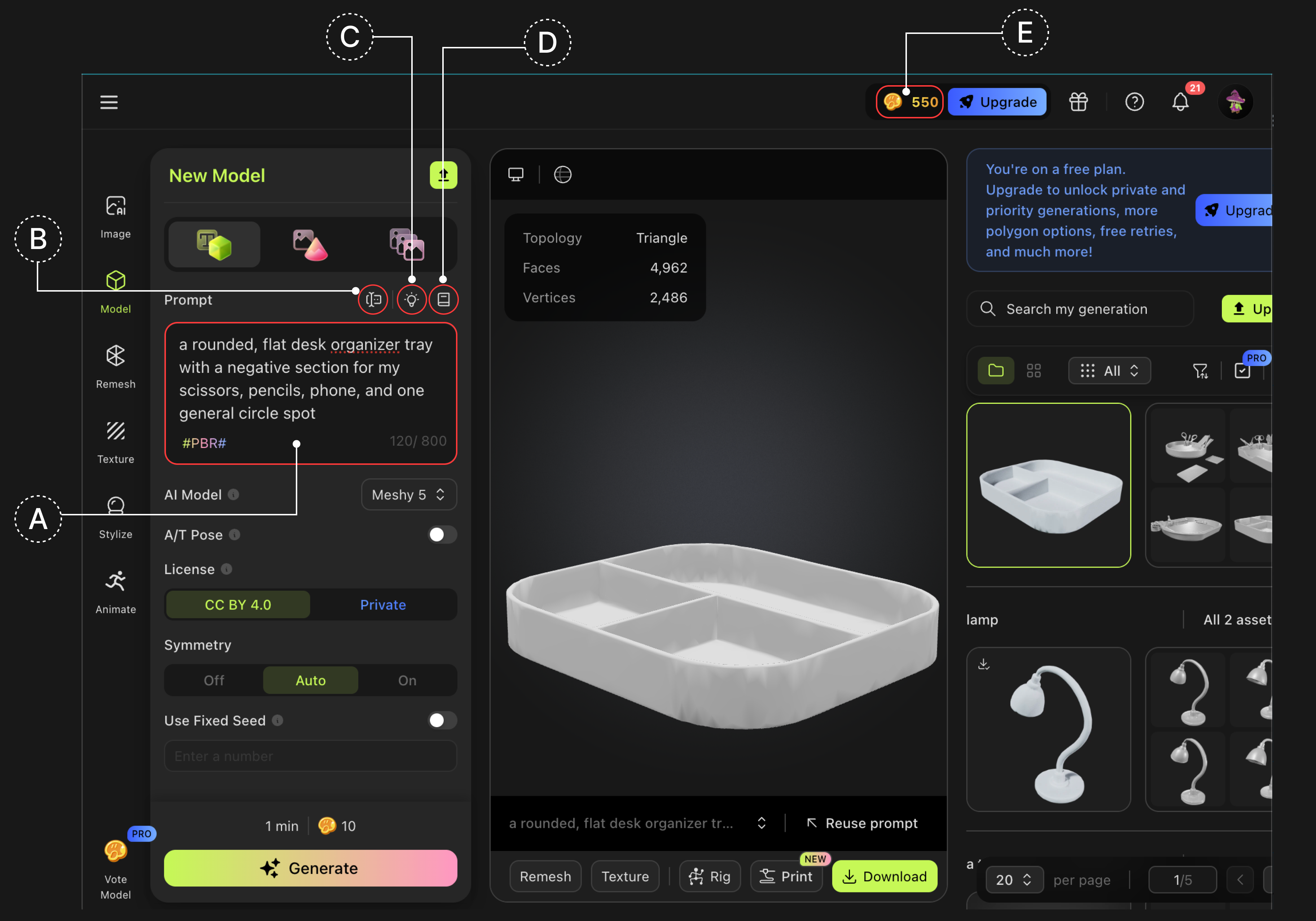

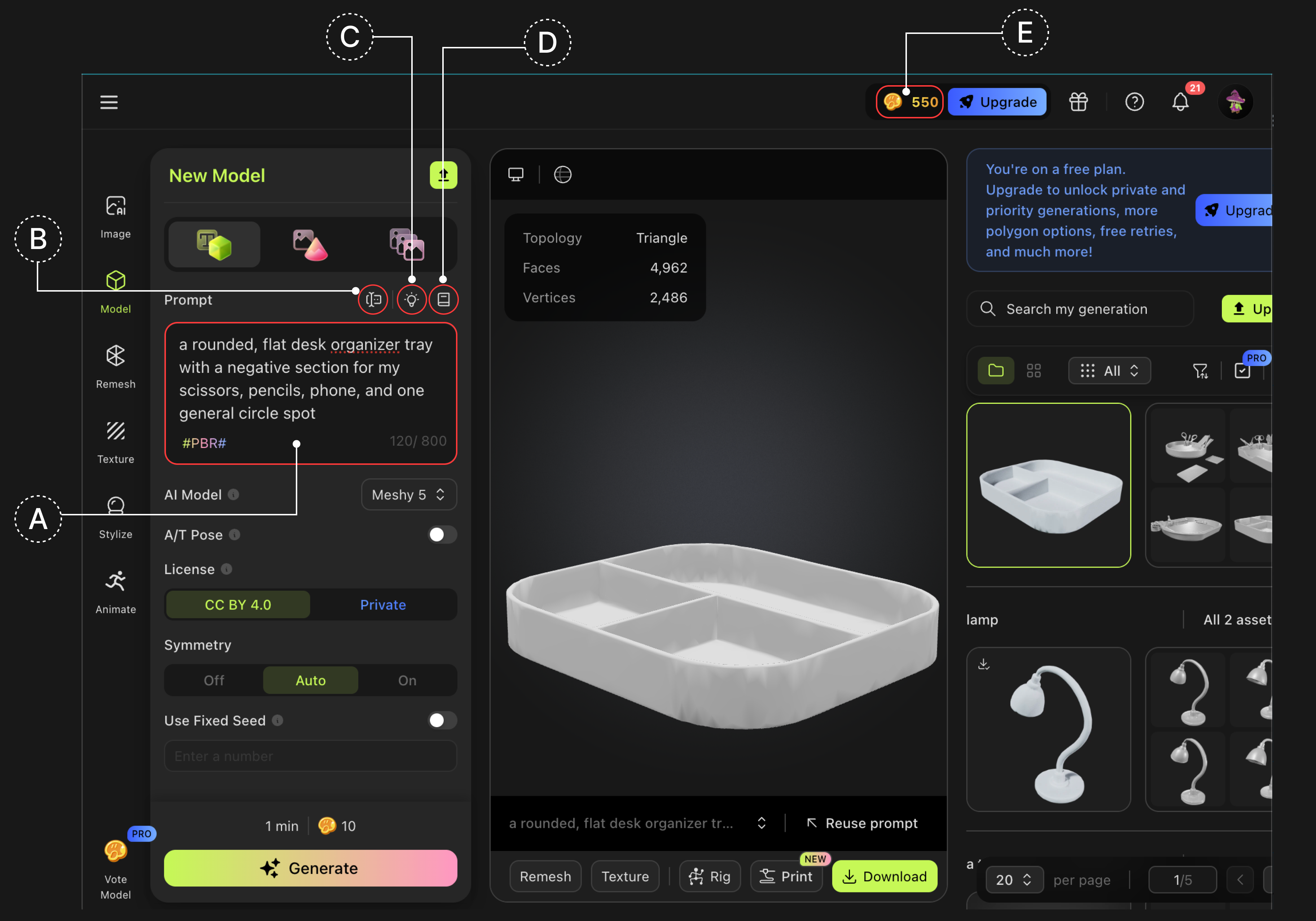

Figure 1: Meshy AI interface illustrating the centrality of the prompt input; built-in help options were largely ignored in practice.

This "prompt-first" orientation inverts the traditional paradigm: onboarding becomes an integrated process of trial and error that occurs primarily within the generative dialog loop rather than through consulting external documentation. Casual users, in particular, ignored built-in resources and turned to ChatGPT or similar external LLMs to scaffold their prompt writing, effectively constructing recursive AI-for-AI support chains.

Divergent Iterative and Help-Seeking Patterns by Expertise

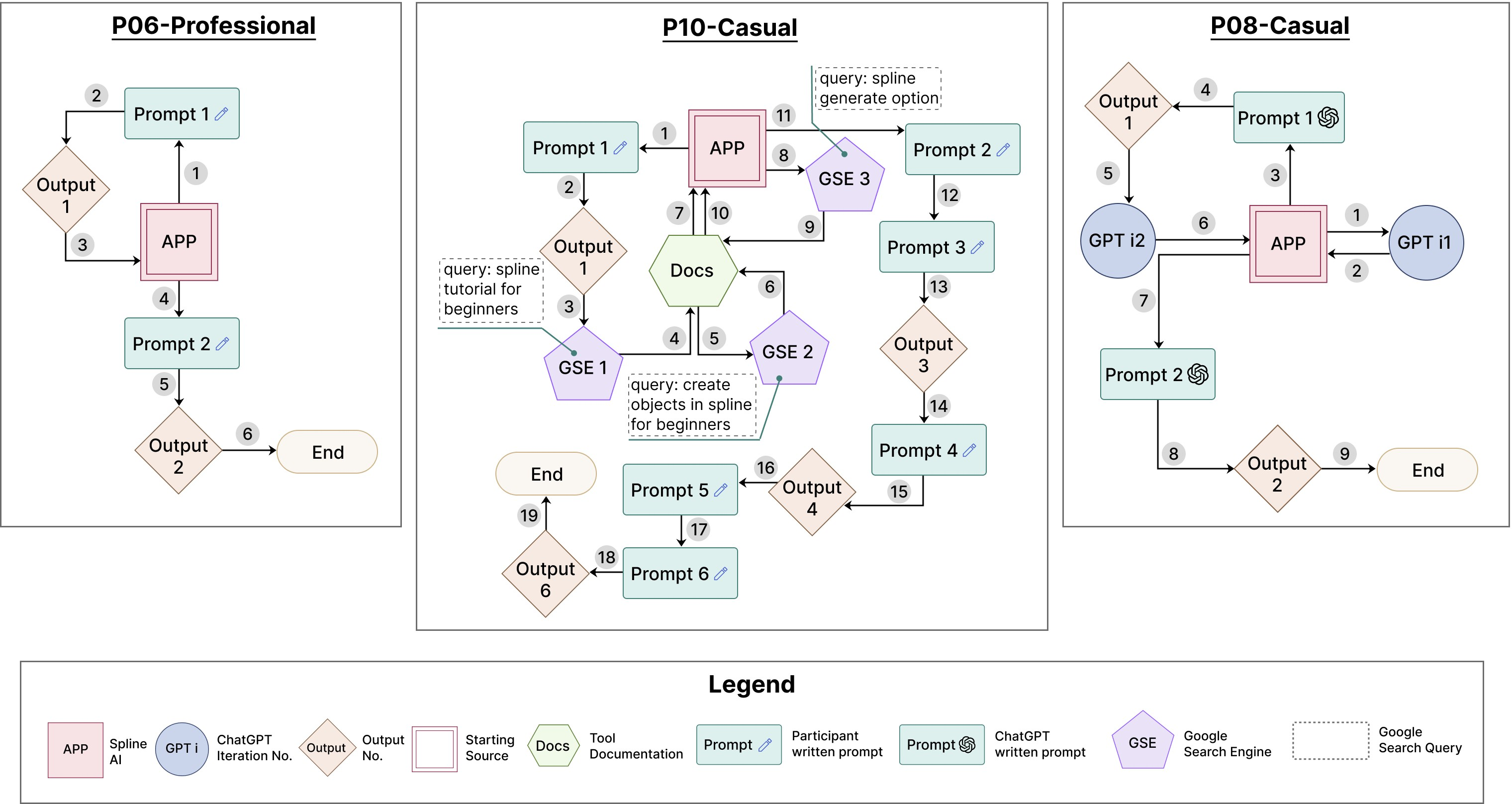

Detailed workflow analyses indicate substantial stratification by expertise. Professionals formulated longer, structurally explicit prompts (average: 83 words) and iterated only when output defects signaled substantive geometric or semantic misalignment, discarding unpromising outputs rapidly. Their interaction was characterized by resource-rationality, with iteration contingent not merely on feedback but on an a priori ceiling of expected model quality. In contrast, casual users engaged in high-frequency, shallow prompt tweaks, layering visual adjectives or switching stylistic descriptors, with low concern for geometric fidelity.

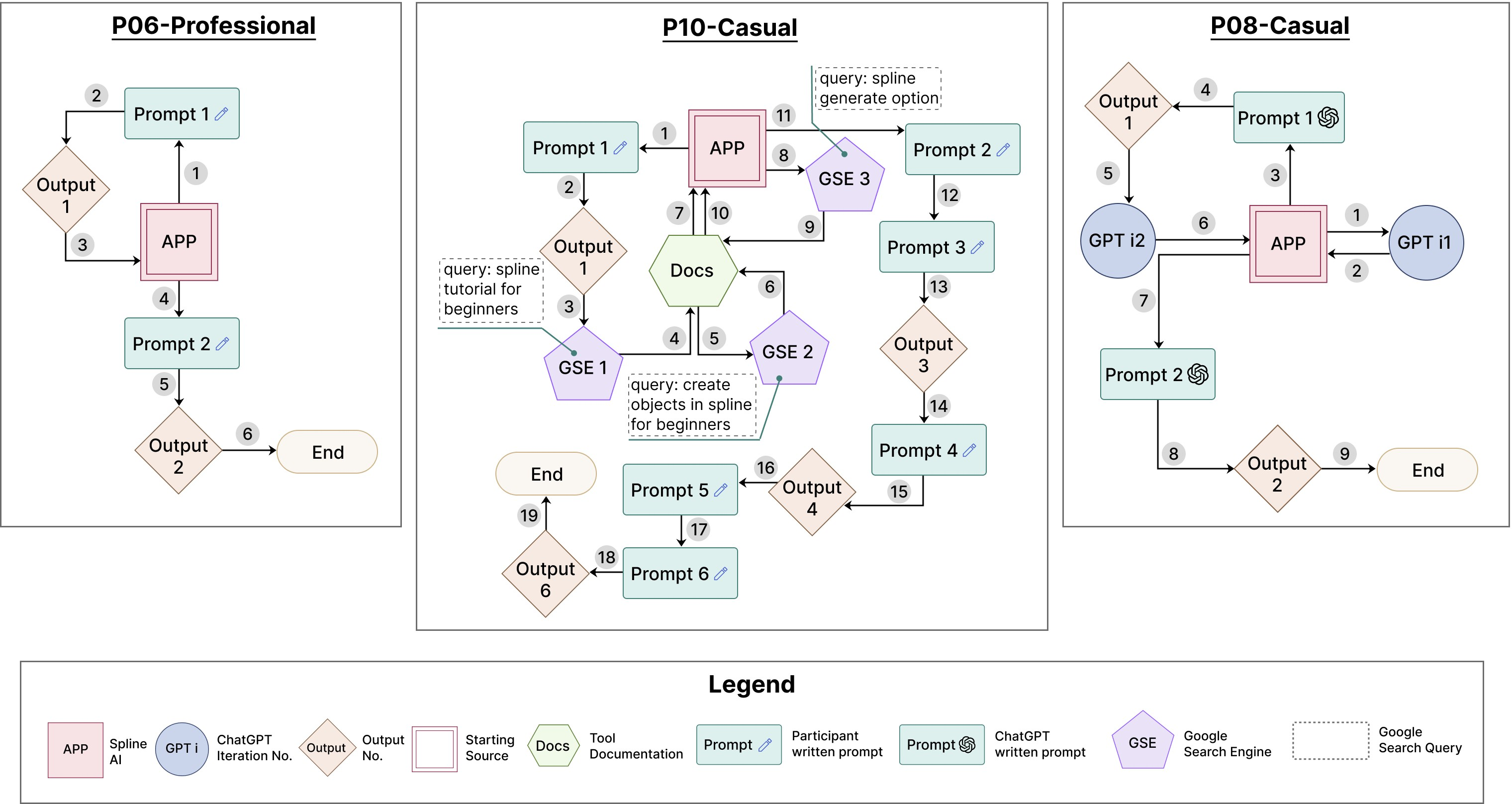

Figure 2: Three onboarding and iteration trajectories—(left) expert prompt-first minimalism, (center) casual multi-source help exploration, (right) casual AI-chaining for prompt construction.

Despite explicit encouragement to use embedded documentation and tutorial features, participants rarely relied on them. Instead, external LLMs (notably ChatGPT) emerged as the dominant aid for prompt specification among casual users, especially when credit/token constraints discouraged riskier in-tool iteration. The result is a distinctive form of "AI chaining," in which the prompt for one generative system is constructed or refined by another AI, offloading both the linguistic and conceptual burden from the user.

Workflow Integration and Output Evaluation: "Good Enough" vs "Production Ready"

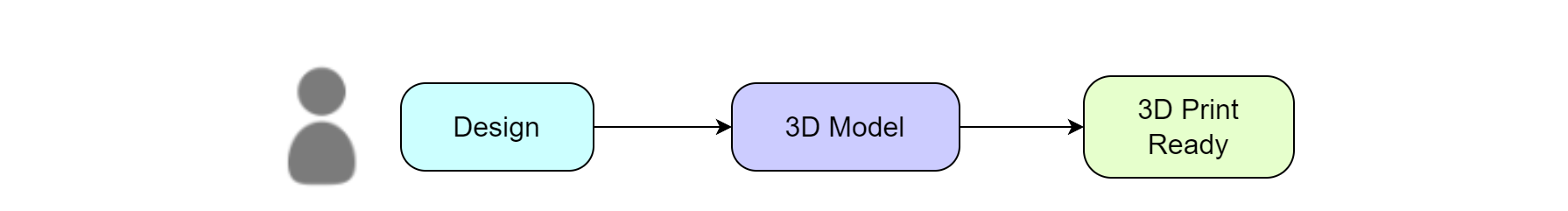

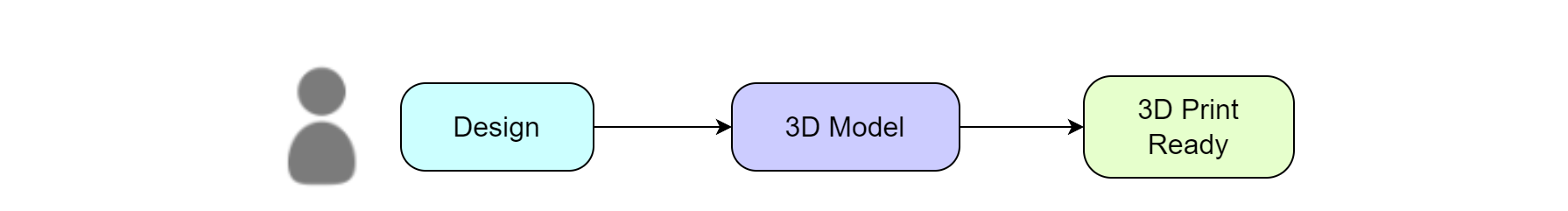

Output evaluation diverges sharply between user classes. Casual users overwhelmingly accepted visual plausibility as a sufficient criterion ("good enough" for 3D printing), disregarding non-manifold geometry, topological defects, and other mesh-level issues. For casual users, the generative tool becomes a walk-up pipeline: prompt in, print out.

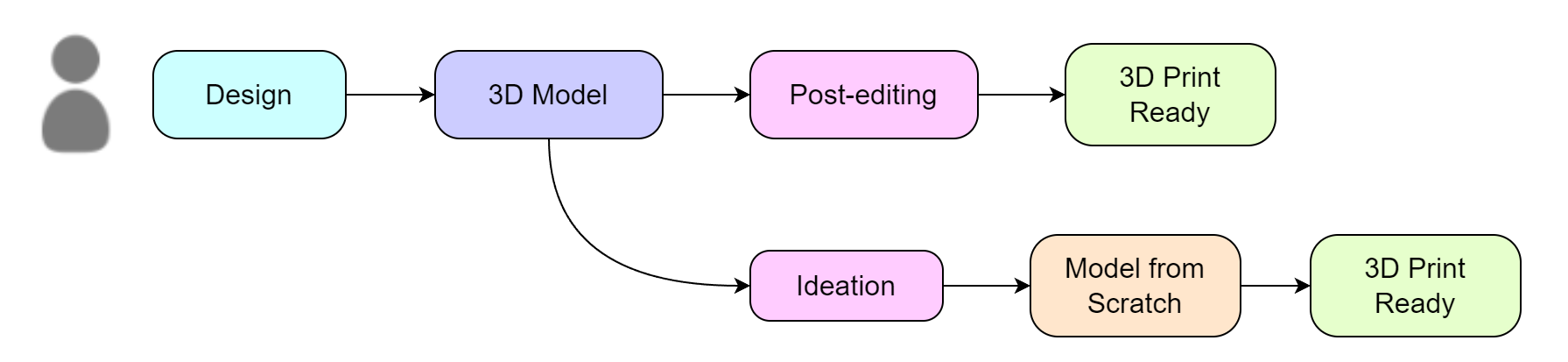

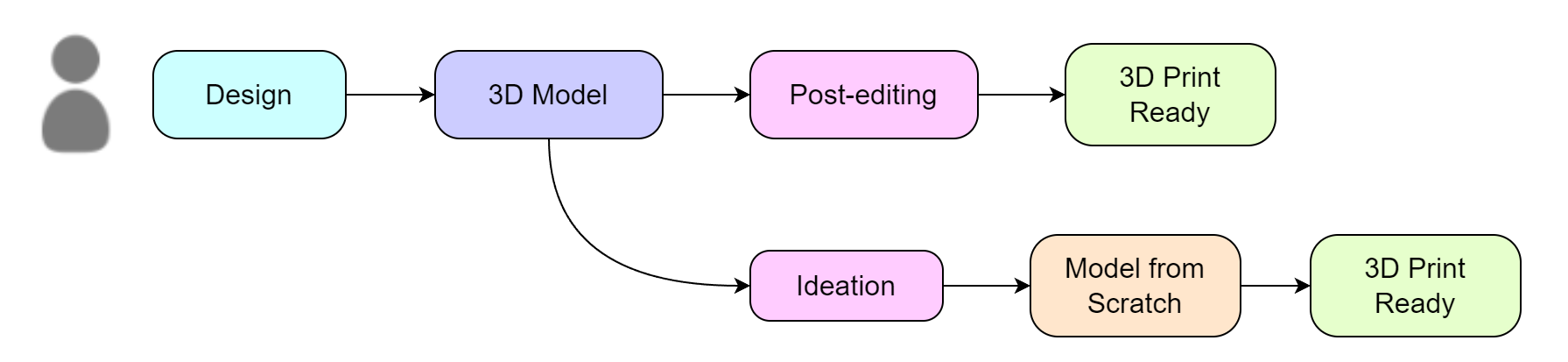

Figure 3: (a) Generalized workflow diagram for casuals—direct output acceptance; (b) For professionals, outputs bifurcate into post-editing or full rejection in favor of traditional workflows.

Conversely, professionals systematically checked outputs against manufacturing and mesh-readiness criteria, including structural stability, non-manifold errors, and the post-editing effort required. Most professionals relegated generative models to the ideation phase (rapid generation of sketches or references) rather than using them in direct-to-production workflows, citing persistent limitations in output reliability and precision.
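To make the "non-manifold errors" criterion concrete, the following is a minimal sketch of one common mesh-readiness check: counting how many triangles share each edge. It is an illustration only, not the procedure used by participants or by either tool. The function names and the face-list representation are assumptions introduced here, and the check covers edge-manifoldness only (it does not detect self-intersections, inconsistent face orientation, or non-manifold vertices).

```python
from collections import Counter


def edge_manifold_report(faces):
    """Classify edges of a triangle mesh by how many faces use them.

    faces: iterable of (i, j, k) vertex-index triples (hypothetical input format).
    Returns (boundary_edges, non_manifold_edges) as sorted lists of
    undirected edges, each written as (low_index, high_index).
    """
    edge_use = Counter()
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            # Store edges undirected so (u, v) and (v, u) count together.
            edge_use[(min(u, v), max(u, v))] += 1

    # An edge used by one face lies on an open boundary (a hole).
    boundary = sorted(e for e, n in edge_use.items() if n == 1)
    # An edge used by more than two faces is non-manifold.
    non_manifold = sorted(e for e, n in edge_use.items() if n > 2)
    return boundary, non_manifold


def is_watertight(faces):
    """True when every edge is shared by exactly two faces."""
    boundary, non_manifold = edge_manifold_report(faces)
    return not boundary and not non_manifold


# A closed tetrahedron: every edge is shared by exactly two triangles.
TETRA = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]

if __name__ == "__main__":
    print(is_watertight(TETRA))                       # closed mesh
    print(edge_manifold_report(TETRA[:3]))            # one face removed
    print(edge_manifold_report(TETRA + [(0, 1, 4)]))  # extra "fin" triangle
```

A model can look visually plausible while failing this check, which is consistent with the gap the study describes between "good enough" and "production ready" evaluation.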

Impact of Credit Constraints

The study finds pronounced behavioral changes under credit constraints. Casual users, in particular, developed a form of risk-averse exploration, making fewer iterative refinements as their available credits depleted. Iteration counts dropped sharply once users became aware of credit consumption, and users settled prematurely on suboptimal artifacts to conserve credits. Professionals, while mindful of credits, were more often deterred by a perceived ceiling on output quality than by credit exhaustion itself.

Theoretical and Practical Implications

Redefining Help-Seeking as Distributed, Multi-AI Ecosystems

The findings reconceptualize help-seeking as no longer anchored in manuals or direct peer assistance but coordinated across a distributed ecosystem of generative and conversational AI. User onboarding and troubleshooting increasingly take the form of AI-to-AI or user-AI-to-AI interactions, with prompt engineering as a central skill and locus of system understanding (2603.29118). This ecosystem-level reframing calls for a new focus in HCI: supporting coordination, handoff, and ambiguity resolution across multiple agentic interfaces, rather than optimizing the learnability of isolated tools.

The "prompt loop," an iterative, self-corrective dialog with the generative model, replaces both procedural training and feature exploration as the dominant onboarding mode. For novices, the reduced interface complexity lowers entry barriers. For experts, however, the opacity of the text-to-model mapping introduces new frictions: lack of reproducibility, poor parametric control, and unpredictability undermine the transfer of domain expertise.

Design Implications

- Workflow-level multi-model guidance: Interfaces should be transparent about their multi-stage pipelines, surfacing both stochastic output uncertainty and intermediate artifacts. Making these stages visible can reduce reliance on black-box trial and error and enable both guided progression for novices and targeted intervention for experts.

- Prompt-local scaffolding ("micro-scaffolds"): Instead of static tooltips, in-situ suggestion mechanisms should adapt dynamically to failed iterations, nudging the user toward actionable vocabulary or functional constraints in their prompt loop.

- Dual-mode interfaces: The observed divide between ideation- and production-level sufficiency supports explicit "concept" vs. "production" operational modes, each with tailored diagnostics, feedback, and mesh-validity tools. This accommodates both rapid ideation for casuals and manufacturability audits for professionals.

- Credit transparency: Predictive cost-benefit indicators and warnings regarding resource/risk trade-offs should precede generation steps, given their direct impact on user willingness to iterate and explore tool capabilities.
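As one possible reading of the credit-transparency recommendation, the sketch below computes a pre-generation indicator: what a run would cost, what balance would remain, and how many further runs that balance allows. Everything here is hypothetical. The class and function names, the flat per-run cost, and the warning threshold are assumptions for illustration and do not reflect the pricing or API of Meshy AI, Spline AI, or the paper's proposals in any detail.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CreditEstimate:
    cost: int                 # credits this generation would consume
    remaining_after: int      # balance if the user proceeds
    runs_left_after: int      # further runs affordable afterwards
    warning: Optional[str]    # message to show before generating, if any


def estimate_before_generation(balance, cost_per_run, low_runs_threshold=3):
    """Build a pre-generation indicator (hypothetical flat-cost model)."""
    if cost_per_run <= 0:
        raise ValueError("cost_per_run must be positive")

    if balance < cost_per_run:
        # The run cannot start, so the balance is left unchanged.
        return CreditEstimate(cost_per_run, balance, 0,
                              "Insufficient credits for this generation.")

    remaining = balance - cost_per_run
    runs_left = remaining // cost_per_run
    warning = None
    if runs_left < low_runs_threshold:
        warning = f"Only {runs_left} further run(s) possible after this one."
    return CreditEstimate(cost_per_run, remaining, runs_left, warning)


if __name__ == "__main__":
    print(estimate_before_generation(balance=100, cost_per_run=20))
    print(estimate_before_generation(balance=50, cost_per_run=20))
```

Showing the number of remaining runs rather than only a raw balance is a design choice made for this sketch. It targets the behavior reported in the study, where users stopped iterating early once they became aware of credit consumption.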

Broader Implications and Future Directions

The implication for creative toolchain design is that as generative models mediate more of the onboarding and creation process, traditional learning and help-seeking models become less salient, and the skill profile shifts toward prompt engineering and AI literacy. For researchers, comparative studies in other generative domains (e.g., text-to-image, AI code generation) are warranted to probe the generalization of these onboarding and help-seeking dynamics. Additionally, the recursive and ecosystemic character of AI-mediated assistance points toward new forms of distributed cognition and collaborative agency—an area ripe for further empirical and design-centered exploration.

Conclusion

This paper demonstrates a fundamental shift in how users interact with and learn generative 3D modeling tools: onboarding is now dynamically co-constructed across distributed, language-mediated prompts and recursive AI assistance, with expertise-specific workflow integration and evaluation criteria. These patterns signal that generative AI does not simply lower barriers to creative production but reconfigures the nature of tool learnability, help-seeking, and workflow integration. Tool designers and HCI researchers must recognize and adapt to a future in which AI tools are both collaborator and instructor, and where the boundaries between software use, learning, and creative collaboration are increasingly indistinct.