"Oops! ChatGPT is Temporarily Unavailable!": A Diary Study on Knowledge Workers' Experiences of LLM Withdrawal

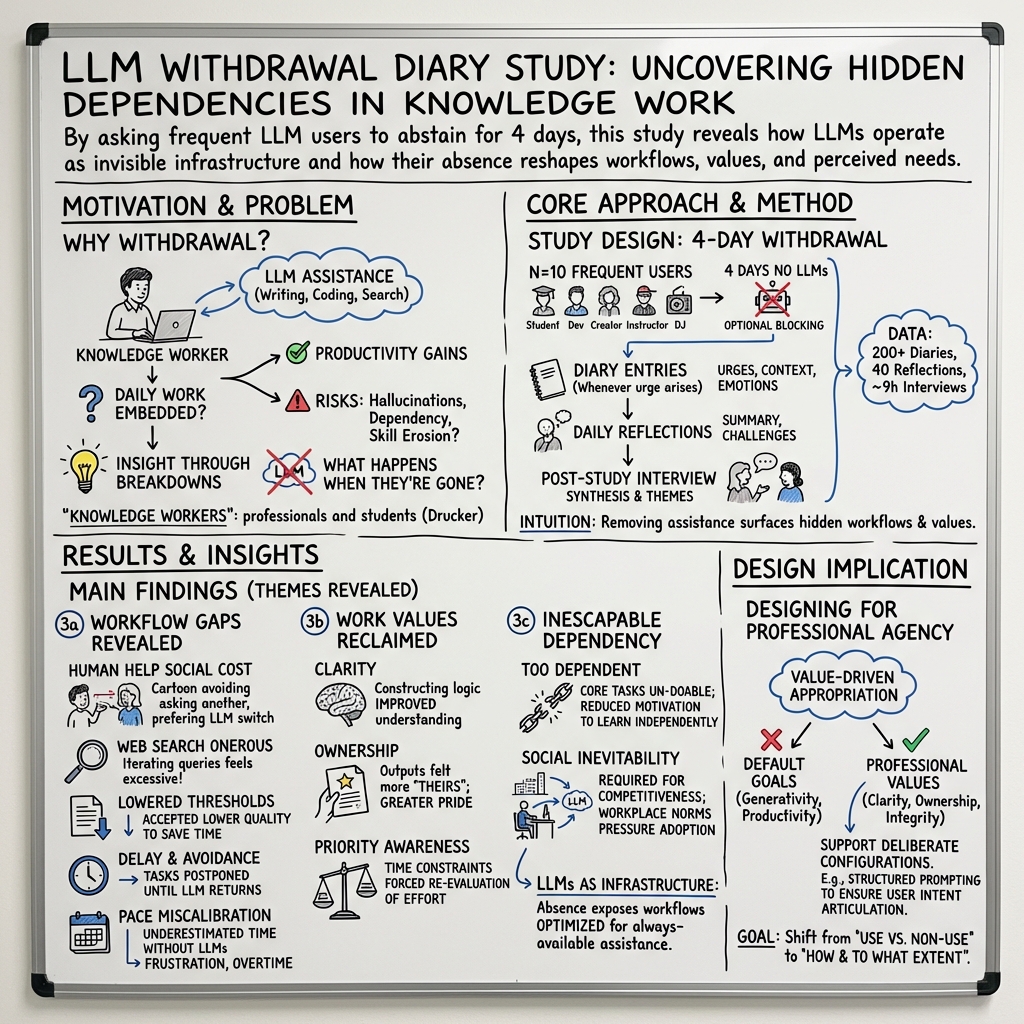

Abstract: LLMs have become deeply embedded in knowledge work, raising concerns about growing dependency and the potential undermining of human skills. To investigate the pervasiveness of LLMs in work practices, we conducted a four-day diary study with frequent LLM users (N=10), observing how knowledge workers responded to a temporary withdrawal of LLMs. Our findings show how LLM withdrawal disrupted participants' workflows by identifying gaps in task execution, how self-directed work led participants to reclaim professional values, and how everyday practices revealed the extent to which LLM use had become inescapably normative. Conceptualizing LLMs as infrastructural to contemporary knowledge work, this research contributes empirical insights into the often invisible role of LLMs and proposes value-driven appropriation as an approach to supporting professional values in the current LLM-pervasive work environment.

Explain it Like I'm 14

What this paper is about

This paper looks at what happens when people who usually rely on AI chatbots like ChatGPT suddenly can’t use them for a few days at work. The authors wanted to see how deeply these tools are woven into everyday tasks—and what people learn about their own skills and habits when the AI is “turned off.”

The main questions

The researchers kept their goals simple:

- How common and important are AI tools (like ChatGPT) in day‑to‑day work?

- What breaks down—or changes—when those tools are taken away for a short time?

- Do people notice any positives from working without AI, like feeling more confident or clearer about their work?

- Is using AI now seen as optional, or as something you “have” to do to keep up?

How the study worked

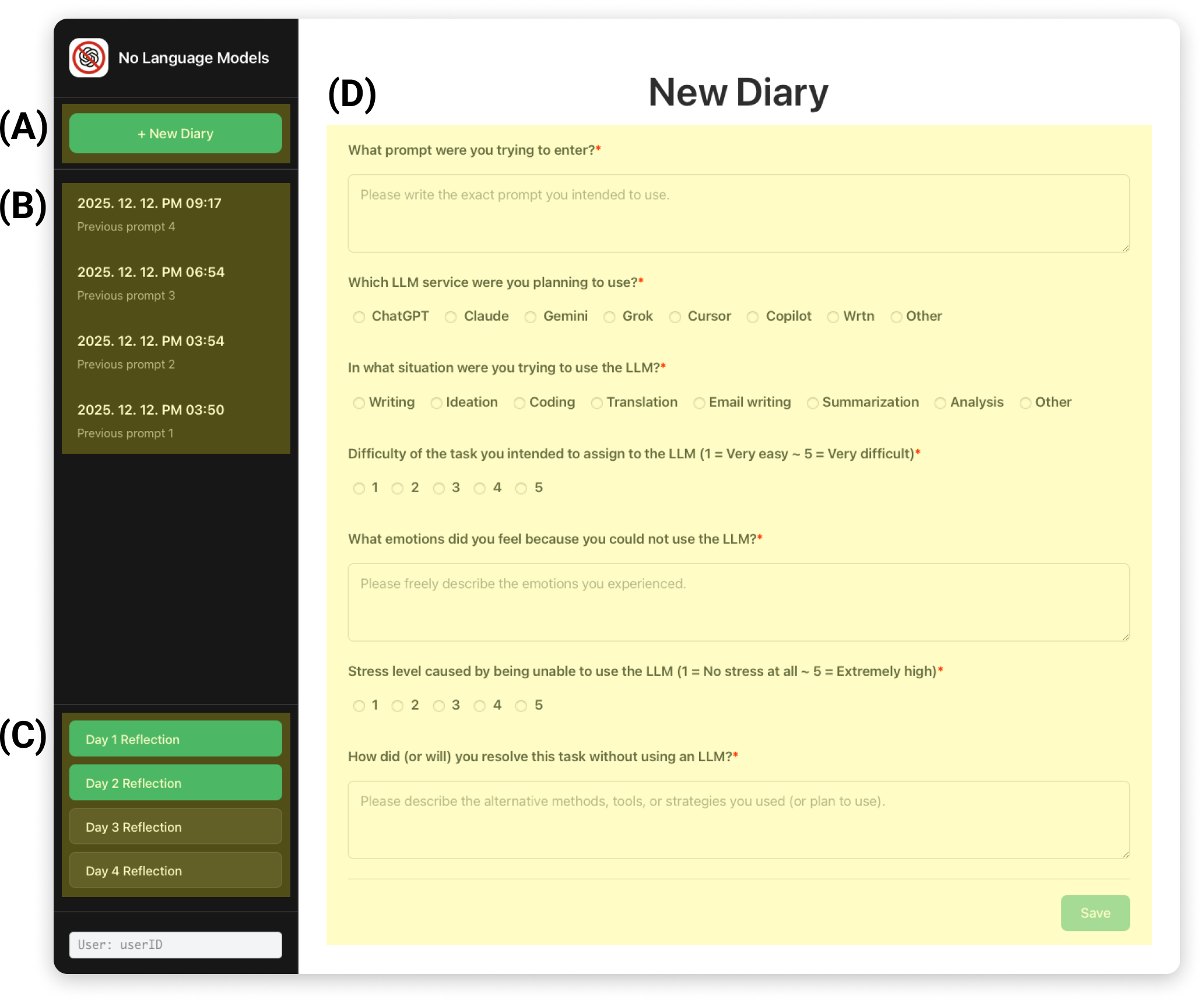

The team ran a “diary study,” which is like a short, guided journal experiment:

- They recruited 10 frequent AI users in South Korea, including students and professionals whose work involves thinking, writing, coding, and researching (often called “knowledge workers”).

- For four workdays, participants tried not to use any AI chat tools. If that was hard, they could install website blockers to help.

- Every time they felt the urge to use an AI tool, they wrote a short diary note about the situation and their feelings. They also wrote a daily reflection at the end of each day.

- After the four days, each person did an interview to talk through their experience.

- The researchers analyzed all the notes and interview transcripts using “thematic analysis,” which means they looked for common patterns and themes across people’s stories.

Think of it like this: If AI chatbots are as normal as electricity at work, what happens when the power goes out? What parts of work slow down, stop, or change?

What they found

The main results fell into three big areas.

1) Gaps in daily work showed up without AI

When AI was removed, people ran into problems they hadn’t noticed before:

- Asking humans felt awkward: People who were used to asking AI for help felt shy or worried about bothering colleagues with questions.

- Searching took more effort: Without AI to summarize or explain, people spent more time guessing the right keywords and piecing together information from many pages.

- Reading and writing standards slipped: Without quick AI edits or summaries, some were okay with “good enough” emails or drafts, rather than polishing them.

- Tasks got delayed: Some chose to postpone certain jobs until they could use AI again.

- They misjudged time: People realized they didn’t know how long tasks would take without AI help and felt frustrated by the slower pace.

In short, AI had quietly become the “default helper,” and taking it away made everyday work feel clunky and slow.

2) Some personal and professional values came back

Working without AI wasn’t all bad. People also noticed positives:

- More clarity: Doing the steps themselves helped them understand problems more deeply, instead of just accepting an AI’s reasoning.

- Stronger ownership: When they completed tasks on their own, they felt more proud and felt the work was truly theirs.

- Clearer priorities: With less “speed boost” from AI, people had to choose what mattered most. Some realized they’d been outsourcing even core, identity‑defining tasks to AI and reconsidered which skills they truly wanted to maintain.

3) AI use felt “inevitable”—both personally and socially

- Personal dependence: Many felt they could no longer do certain tasks without AI because they had gotten used to leaning on it. Some even avoided learning to work without it because AI was so convenient.

- Social pressure: Using AI wasn’t just a personal choice—it felt like a new norm. People worried they’d fall behind if they didn’t use it, and some said teachers or bosses expected AI use to save time.

Overall, AI tools weren’t just handy gadgets; they acted like work infrastructure—like Wi‑Fi or email—always there and quietly shaping how people work.

Why this matters

- The big takeaway is not “use AI” versus “don’t use AI.” It’s about deciding how to use AI in a way that matches your values and your job’s goals.

- The authors suggest “value‑driven appropriation.” That means shaping AI use to support what you care about—like learning, honesty, or ownership—instead of letting the tool’s defaults push you toward only speed and output.

- For example: Brainstorm by yourself first, then ask AI for alternatives; or ask AI to explain sources instead of just giving final answers; or set rules like “AI can help rewrite after I draft my own first version.”

Limitations and what’s next

- All participants were in South Korea and were already heavy AI users, so results may differ in other countries or among people who use AI less.

- The study lasted only four workdays; longer studies could reveal more.

- Future research could help people plan their AI use more intentionally and see how different strategies affect learning, speed, and confidence.

Bottom line

Turning off AI for a few days showed just how much today’s work depends on it—and what we might lose or gain because of that. AI can make us faster, but it can also quietly change our habits and skills. The challenge now is to keep the benefits while protecting the things that make our work feel clear, valuable, and truly ours.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, focused list of what remains missing, uncertain, or unexplored in the paper, phrased to guide future research:

- External validity: replication beyond a small (N=10), single-country (South Korea), high-dependency sample; include varied cultures, industries, and users with low-to-moderate LLM reliance.

- Duration effects: extend beyond a four-day abstinence to observe adaptation, relapse, coping strategy evolution, and potential skill decay or recovery over weeks/months.

- Compliance verification: objectively verify LLM abstinence (e.g., telemetry, app logs) and quantify substitution to embedded AI features or alternative tools during withdrawal.

- Tool-ecosystem scope: clarify and map the boundary of “LLM-based services” (e.g., Copilot, Grammarly, search AI overviews, IDE integrations) to understand partial vs. full withdrawal effects.

- Task-level outcomes: measure objective productivity, quality, error rates, and time-on-task across task types to identify where withdrawal harms or helps performance.

- Heterogeneity of effects: analyze differences across subgroups (students vs. professionals, domain expertise, programming vs. writing vs. research tasks, English vs. Korean tasks).

- Organizational context: examine how team norms, policies, managerial expectations, and performance metrics create or mitigate social pressure to use LLMs.

- Infrastructural embedding: operationalize and measure the “LLM as infrastructure” claim (e.g., dependency networks, process mapping, outage impact metrics) rather than rely on self-reports.

- Social norm mechanisms: study how normative pressure to use LLMs emerges and diffuses (e.g., social network analyses within teams/departments; cross-organizational comparisons).

- Intervention testing: move from the proposed “value-driven appropriation” concept to concrete, testable designs (prompts, UI constraints, friction, scaffolds) evaluated via experiments or field trials.

- Learning trajectories: longitudinally assess impacts on skill acquisition, retention, and transfer (e.g., coding, writing, information synthesis) with and without LLM support.

- Cognitive and affective states: include psychometric and physiological measures (stress, cognitive load, self-efficacy, anxiety) during withdrawal to triangulate self-reports.

- Planning and pacing: quantify changes in time estimation, planning fallacy, and scheduling behaviors when LLMs are absent; evaluate planning aids that improve calibration.

- Collaboration patterns: examine how LLM reliance reshapes help-seeking, mentorship, peer discussion, and knowledge sharing networks; identify conditions where human interaction increases or decreases.

- Equity implications: investigate how differential access to LLMs, abstinence policies, or outages affect inclusion, job security, and performance evaluations across roles and demographics.

- Domain boundaries: test generalizability in regulated or safety-critical domains (healthcare, law, finance) where errors and accountability stakes differ.

- Model/tool variance: compare dependency and withdrawal experiences across different LLMs, modalities (text, code, multimodal), and quality levels to see if effects generalize.

- Creativity and originality: objectively evaluate whether withdrawal influences originality, depth of reasoning, or creative output (beyond reported “ownership” and “clarity”).

- Mixed-method triangulation: complement interviews/diaries with behavioral logs, artifact audits, and outcome reviews to reduce self-report and demand-characteristic biases.

- Natural experiments: leverage real-world outages or policy changes to observe organizational resilience, continuity plans, and emergent workarounds in situ.

- Healthy dependency thresholds: develop and validate metrics (building on LLM-D12, including relational dimensions) to identify “healthy” vs. “harmful” reliance levels for different tasks.

- Privacy and security practices: study how withdrawal alters handling of sensitive data and whether dependency shapes risk perceptions and data governance behaviors.

- Training and resilience playbooks: identify and evaluate non-LLM workflows, training protocols, and contingency plans that maintain performance during unavailability.

- Career identity and progression: assess how sustained LLM use or intermittent withdrawal affects professional identity, perceived competence, and long-term career development.

Practical Applications

Immediate Applications

The study’s findings on LLMs as “work infrastructure,” the disruptions observed during withdrawal, and the proposed “value-driven appropriation” approach translate into the following deployable use cases:

- AI-outage continuity planning (industry, software, finance, healthcare)
  - What: Create LLM outage playbooks (task triage, handoffs, SLAs, escalation) and run “AI failure drills.”
  - Tools/workflows: Multi-vendor redundancy; documented failover to search/manual procedures; internal subject-matter expert rosters; offline documentation caches.
  - Assumptions/dependencies: Vendor status APIs; trained staff; clear data-access alternatives; security approval for backups.

- “Value-driven appropriation” modes in AI tools (software, productivity suites, education)
  - What: Ship assistant modes that defer answers until user intent/reasoning is articulated (explain-first), or require user-generated steps before revealing completions.
  - Tools/workflows: Prompt templates; “slow/scaffolded” modes; friction toggles; per-task policies.
  - Assumptions/dependencies: Vendor support for UI changes; user acceptance; minimal productivity penalty on routine tasks.

- Task-mapping and delegation guidelines (industry, academia, healthcare)
  - What: Define which tasks must remain human-led (core/professional identity) vs. safely delegable to LLMs.
  - Tools/workflows: Team charters and RACI matrices; checklists in project management tools; code-review and writing rubrics emphasizing human rationale.
  - Assumptions/dependencies: Leadership buy-in; alignment with compliance and accreditation standards.

- LLM-dependency assessment in organizations (industry HR/L&D, academia)
  - What: Screen teams with validated instruments (e.g., instrumental items of LLM-D12) to identify high-risk dependencies.
  - Tools/workflows: HRIS-integrated pulse surveys; team coaching; targeted training plans.
  - Assumptions/dependencies: Access to instruments; privacy safeguards; willingness to act on results.

- “AI fasting” micro-interventions for individuals (daily life, education, software teams)
  - What: Scheduled LLM abstinence intervals to reclaim clarity/ownership and recalibrate effort expectations.
  - Tools/workflows: Browser extension blockers (e.g., BlockSite) with calendars; journaling prompts capturing intent, effort, and learning.
  - Assumptions/dependencies: Voluntary adherence; low-risk task windows; cultural support for reflective practice.

- Search and knowledge-management strengthening (industry, software, research)
  - What: Improve internal search, wikis, and curated FAQ so workers rely less on LLM summarization for routine retrieval.
  - Tools/workflows: Enterprise search tuning; content governance; “source-of-truth” pages; tagging and ownership.
  - Assumptions/dependencies: Content maintenance capacity; adoption incentives.

- Human-help workflows to counter social cost of asking (industry, academia)
  - What: Normalize human consultation via office hours, rotating “on-call” internal experts, and lightweight Q&A channels.
  - Tools/workflows: Slack/Teams routing; mentorship rotations; peer-review clinics.
  - Assumptions/dependencies: Management support; time allocation; recognition/reward systems.

- Education: Structured prompting and articulation-first assignments (education)
  - What: Require students to articulate goals, plans, and evaluation criteria before LLM usage; include LLM-withdrawal days.
  - Tools/workflows: LMS templates; rubrics grading reasoning and ownership; logs of pre-LLM articulation.
  - Assumptions/dependencies: Faculty training; clear academic integrity policies; accessibility accommodations.

- Software engineering safeguards (software)
  - What: Require “design doc first” and debugging diaries before LLM suggestions; flag high AI-origin code for deeper review.
  - Tools/workflows: IDE plugins marking AI-sourced snippets; mandatory tests/specs prior to code-generation; pair reviews.
  - Assumptions/dependencies: Tooling integration; developer buy-in; performance metrics that value quality.

- Compliance- and risk-aware LLM controls (finance, healthcare, legal)
  - What: Task- and data-sensitive gating (DLP integrations, model restrictions) to reduce routine over-delegation and privacy risks.
  - Tools/workflows: Policy-driven access control; redaction pipelines; audit logs of AI-assisted decisions.
  - Assumptions/dependencies: Regulator-aligned policies; IT/security resources.

- Procurement requirements for AI resilience (industry, government)
  - What: Contractual SLAs requiring outage notifications, failover support, and exportable logs; evaluation of on-prem/edge backups.
  - Tools/workflows: Vendor scorecards; resilience drills as acceptance criteria.
  - Assumptions/dependencies: Market alternatives; procurement authority; budget.

- UX research via “LLM abstinence” probes (industry, HCI practitioners)
  - What: Use short withdrawal studies to reveal hidden dependencies and surface process improvements.
  - Tools/workflows: 3–5 day diary+interview protocols; ethics review; synthesis workshops.
  - Assumptions/dependencies: Participant consent; non-critical work windows.
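
As a rough illustration of the “AI fasting” idea in the list above (scheduled abstinence intervals enforced by a blocker with a calendar), the following minimal sketch decides whether LLM sites should be blocked at a given moment. The `FastingWindow` class, the `is_blocked` function, and the example schedule are hypothetical names and values chosen for this sketch; they are not taken from the paper or from any specific blocker extension.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass(frozen=True)
class FastingWindow:
    """A recurring weekly interval with no LLM access (hypothetical structure)."""
    weekday: int  # 0 = Monday ... 6 = Sunday, matching datetime.weekday()
    start: time   # inclusive
    end: time     # exclusive


def is_blocked(now: datetime, windows: list[FastingWindow]) -> bool:
    """Return True if `now` falls inside any fasting window."""
    return any(
        w.weekday == now.weekday() and w.start <= now.time() < w.end
        for w in windows
    )


# Illustrative schedule (an assumption): Tuesday and Thursday mornings.
SCHEDULE = [
    FastingWindow(weekday=1, start=time(9, 0), end=time(12, 0)),
    FastingWindow(weekday=3, start=time(9, 0), end=time(12, 0)),
]

if __name__ == "__main__":
    print(is_blocked(datetime.now(), SCHEDULE))
```

A real blocker would call a check like this before loading a listed site; keeping the schedule as plain data makes it easy to restrict fasting to the low-risk task windows that the list names as a dependency.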

Long-Term Applications

The paper also suggests design and policy directions that require additional research, scaling, or product development:

- Autonomy-aware assistants that modulate help (software, education, healthcare)
  - What: Adaptive systems that adjust assistance to preserve user skill (e.g., more scaffolding for novices, withholding answers to maintain challenge).
  - Dependencies: Reliable proficiency models; user control; evidence on skill retention vs. productivity trade-offs.

- “Ownership meters” and effort analytics (software)
  - What: Product features that quantify human contribution vs. AI generation to encourage ownership and clarity.
  - Dependencies: Attribution standards; privacy-preserving telemetry; UX validation.

- Standards for AI-dependency assessment and organizational resilience (industry consortia, policy)
  - What: Sector-level benchmarks and certifications for AI-resilient operations and balanced AI use.
  - Dependencies: Multi-stakeholder consensus; audit frameworks; regulator recognition.

- Regulatory guidelines on AI reliance in critical sectors (policy: healthcare, finance, energy, public services)
  - What: Mandate AI outage drills, non-AI alternatives, and documentation of human rationale for high-stakes decisions.
  - Dependencies: Impact assessments; stakeholder engagement; enforcement mechanisms.

- Enterprise “LLM infrastructure” architecture (industry, software)
  - What: Observability (usage dashboards, circuit breakers), graceful degradation, on-prem/edge backups, and knowledge-graph augmentation to reduce brittle dependence.
  - Dependencies: MLOps maturity; cost/benefit alignment; data governance.

- Curricula focused on AI-withdrawal literacy and skill preservation (education)
  - What: Courses that integrate abstinence modules, articulation-first practices, and longitudinal assessments of learning outcomes.
  - Dependencies: Curriculum redesign; faculty development; assessment research.

- Sector-specific “appropriation modes” (healthcare, finance, legal)
  - What: Task templates that encode professional values (e.g., clinical reasoning-first, compliance-first) before AI assistance.
  - Dependencies: Domain ontologies; human-factors validation; liability considerations.

- Workforce planning with “AI reliance” metrics (industry, HR analytics)
  - What: Incorporate AI-dependency measures into job design and career development to protect core competencies.
  - Dependencies: Validated metrics; fairness and anti-bias safeguards; labor relations.

- IDEs and authoring tools with reflection-first workflows (software, publishing)
  - What: Editors that prompt problem framing and success criteria prior to code/text generation; require tests/outlines first.
  - Dependencies: Tool vendor integration; user acceptance; performance impact studies.

- Safety cases for AI-assisted operations (energy, transportation, robotics)
  - What: Formal methods to demonstrate acceptable risk under AI outages; fallback human–machine interfaces for degraded modes.
  - Dependencies: Standards bodies; simulation infrastructure; certification pathways.

- Clinical decision support with competence retention (healthcare)
  - What: Systems that preserve clinician reasoning (e.g., differential-first) and limit over-reliance; periodic “AI-off” drills.
  - Dependencies: Trials on patient safety and outcomes; EHR integration; ethics oversight.

- Cross-cultural, longitudinal deprivation research and repositories (academia, HCI)
  - What: Open protocols, datasets, and pattern libraries of “LLM failover” solutions across cultures and professions.
  - Dependencies: Funding; IRB approvals; global partnerships.

- Personal digital ergonomics for cognitive offloading (daily life, occupational health)
  - What: Evidence-based guidelines and tools balancing AI convenience with cognitive engagement to sustain skills and well-being.
  - Dependencies: Longitudinal behavioral studies; clinical validation.

These applications assume organization- and vendor-level willingness to embed “value-driven appropriation,” availability of multi-provider or on-prem LLM options where resilience is needed, and cultural/sectoral alignment with professional standards. Feasibility will vary with regulatory context, risk profile of tasks, and maturity of MLOps and knowledge management practices.
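
The “circuit breakers” and “graceful degradation” mentioned under enterprise LLM infrastructure can be made concrete with a small sketch. The version below is one common way to write a circuit breaker, not a design from the paper: after a configurable number of consecutive failures it stops calling the LLM provider for a cool-down period and routes requests to a fallback (for example, a documented manual procedure). The class name, parameters, and the `primary`/`fallback` callables are all assumptions made for illustration.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Routes calls to a fallback while the primary service looks unhealthy."""

    def __init__(
        self,
        max_failures: int = 3,
        reset_after: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_failures = max_failures  # consecutive failures before opening
        self.reset_after = reset_after    # cool-down in seconds
        self.clock = clock                # injectable for testing
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(
        self,
        primary: Callable[[str], str],
        fallback: Callable[[str], str],
        prompt: str,
    ) -> str:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                # Circuit is open: degrade gracefully without touching primary.
                return fallback(prompt)
            # Cool-down elapsed: allow one trial call; a single failure reopens.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = primary(prompt)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback(prompt)
        self.failures = 0
        return result
```

In use, `primary` would wrap a call to whichever LLM provider an organization relies on, and `fallback` would return a pointer to the non-LLM workflow. Counting how often the breaker opens is one possible input to the usage dashboards listed alongside it.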

Glossary

- Abstinence (technology): The intentional and temporary refraining from using a technology to examine its effects on behavior or well-being. "smartphone and social media abstinence"

- Appropriation (technological): The process by which users adopt and adapt a technology beyond its intended use to fit their practices and contexts. "Building on the concept of technological appropriation"

- Codebook (qualitative research): A structured set of codes and their definitions used to guide consistent coding and analysis in qualitative studies. "the three authors met to collaboratively develop a codebook."

- Deprivation: A research condition in which access to a technology is removed to observe impacts on users’ experiences and behaviors. "to study technology non-use and deprivation"

- Diary study: A method where participants log activities, experiences, or reflections over time to capture in-situ behaviors and contexts. "we conducted a four-day diary study with frequent LLM users (N=10)"

- Generativity: The capacity of a system to enable the creation of new content, uses, or innovations beyond initial designs. "such as generativity, productivity, or proactivity"

- Institutional Review Board (IRB): A committee that reviews and oversees research involving human participants to ensure ethical standards are met. "Institutional Review Board (IRB)"

- Instrumental dependency: Reliance on a tool or system for accomplishing tasks and functions in a practical, task-oriented way. "We used only the instrumental dependency items from the LLM-D12, as relational dependency was out of scope."

- Infrastructural: Describing a technology that functions as underlying, taken-for-granted support for everyday activities or systems. "Conceptualizing LLMs as infrastructural to contemporary knowledge work"

- Knowledge collapse: A hypothesized degradation or homogenization of knowledge and creativity due to over-reliance on generative AI outputs. "potentially contribute to knowledge collapse in creative works"

- Knowledge worker: A person whose primary work centers on creating, using, or managing information and knowledge. "We use “knowledge workers” to refer to both employed knowledge workers and university students."

- LLM-D12: A psychometric scale designed to assess levels and facets of dependency on LLMs. "measured utilizing LLM-D12 scale"

- LLM withdrawal: A period during which users are asked or required to refrain from using LLMs to study behavioral and experiential effects. "a four-day period of LLM withdrawal"

- Mediation (statistical): A relationship where the effect of one variable on an outcome operates through an intermediate variable. "mediated by self-efficacy"

- Normative: Pertaining to what is socially accepted as standard or expected behavior within a context or community. "inescapably normative"

- Proactivity: An AI system property where the system initiates actions or suggestions without explicit user prompts. "such as generativity, productivity, or proactivity"

- Professional agency: The capacity of individuals to act autonomously and uphold professional standards and values in their work. "human collaboration and professional agency"

- Relational dependency: Dependence characterized by emotional, social, or relational attachment to a technology, beyond instrumental use. "as relational dependency was out of scope."

- Semi-structured interview: An interview technique using a flexible guide of topics or questions, allowing open-ended follow-ups. "a semi-structured interview"

- Student agency: Learners’ capacity to direct their own learning, make choices, and exercise control over tasks. "to support student agency"

- Technology non-use: The intentional practice or study of not engaging with a technology, often to understand its role by contrast. "to study technology non-use and deprivation"

- Thematic analysis: A qualitative method for identifying, analyzing, and interpreting patterns (themes) within data. "We conducted a thematic analysis"

- Value-driven appropriation: Shaping the use of a technology according to users’ professional or personal values rather than default or provider-defined uses. "value-driven appropriation as an approach to supporting professional values"
