Confidence-Based Mesh Extraction from 3D Gaussians

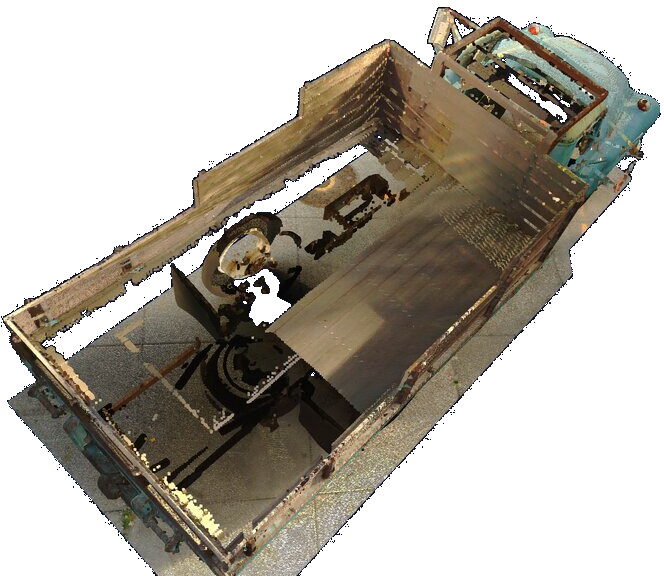

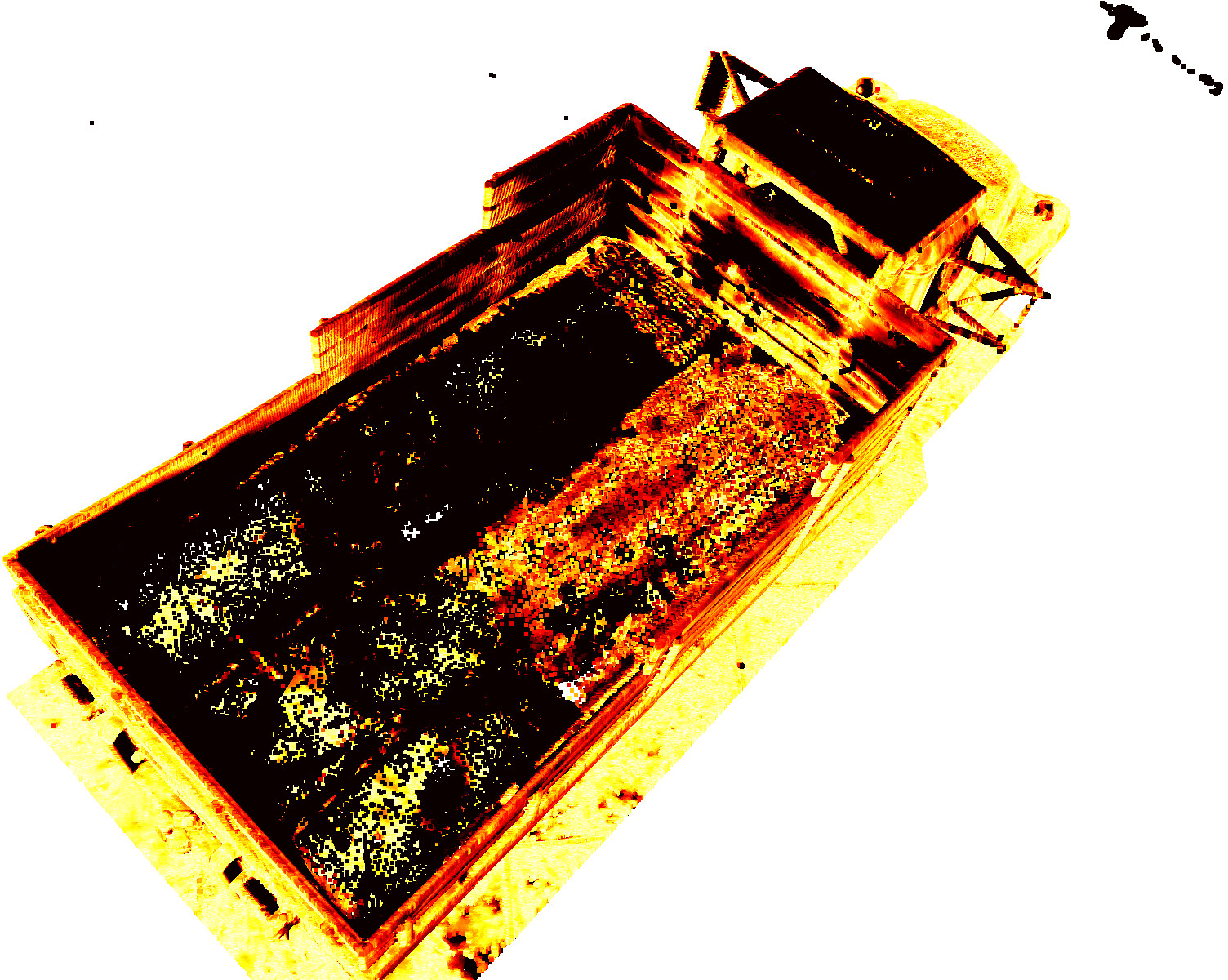

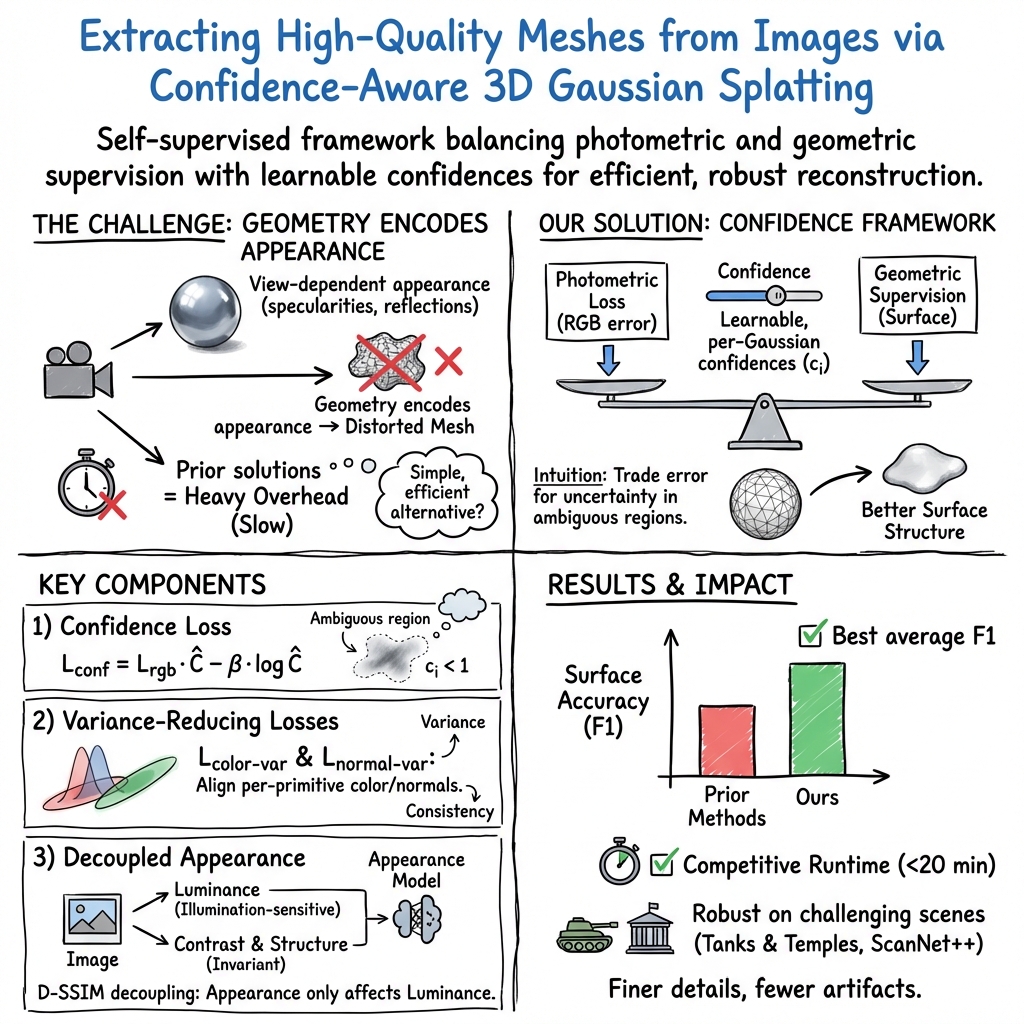

Abstract: Recently, 3D Gaussian Splatting (3DGS) greatly accelerated mesh extraction from posed images due to its explicit representation and fast software rasterization. While the addition of geometric losses and other priors has improved the accuracy of extracted surfaces, mesh extraction remains difficult in scenes with abundant view-dependent effects. To resolve the resulting ambiguities, prior works rely on multi-view techniques, iterative mesh extraction, or large pre-trained models, sacrificing the inherent efficiency of 3DGS. In this work, we present a simple and efficient alternative by introducing a self-supervised confidence framework to 3DGS: within this framework, learnable confidence values dynamically balance photometric and geometric supervision. Extending our confidence-driven formulation, we introduce losses which penalize per-primitive color and normal variance and demonstrate their benefits to surface extraction. Finally, we complement the above with an improved appearance model, by decoupling the individual terms of the D-SSIM loss. Our final approach delivers state-of-the-art results for unbounded meshes while remaining highly efficient.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

Overview

This paper is about turning a bunch of photos of a scene into a clean 3D model (a “mesh”) quickly and accurately. The authors build on a fast technique called 3D Gaussian Splatting (think of it as painting a scene with lots of tiny soft 3D blobs) and add a smart way to decide when to trust the colors in the photos versus the shape of objects. Their goal: get detailed, low‑error 3D surfaces without slow, complicated extra systems.

What questions are they trying to answer?

In simple terms:

- How can we get a clean, detailed 3D surface from many photos without using slow tricks or giant pre‑trained AI models?

- Can we teach the system to “know” when photos are unreliable (like shiny reflections or changing lighting) and rely more on shape instead?

- Can we reduce confusion when many blobs overlap in the same pixel by making their colors and directions more consistent?

- Can we better handle different lighting and camera settings so the 3D model stays consistent?

How did they do it?

3D Gaussian Splatting, in plain words

- Imagine reconstructing a scene with many tiny, soft blobs (Gaussians) in 3D. Each blob has a position, size, direction, color, and opacity.

- When you render an image from a camera, you “look” through the blobs and blend their colors from front to back, like layering semi‑transparent stickers.

- A mesh is like a 3D “skin” or net that wraps the surfaces in the scene. The system learns the blobs first, then extracts the mesh.

Adding “trust meters” (confidence) to balance color vs. shape

- Problem: Shiny spots, glass, or changing lighting can trick the model into changing geometry just to match colors. That makes messy surfaces.

- Their idea: give each blob a learnable “confidence” (a trust meter). When the photos are unreliable, the system can lower confidence and pay less attention to color differences, and more attention to geometry (shape) rules.

- They render a “confidence map” for each image, so the system learns which areas are trustworthy and which are not, all by itself (self‑supervised—no labels needed).

Preventing “over‑growth” of blobs (confidence‑aware densification)

- During training, blobs split into smaller blobs to capture detail (like zooming in).

- In tricky areas (like reflections), standard methods split too much, flooding the scene with tiny blobs and causing artifacts.

- The fix: use the blob’s confidence to raise or lower the threshold for splitting. Low‑confidence areas split less, so the model doesn’t overreact.

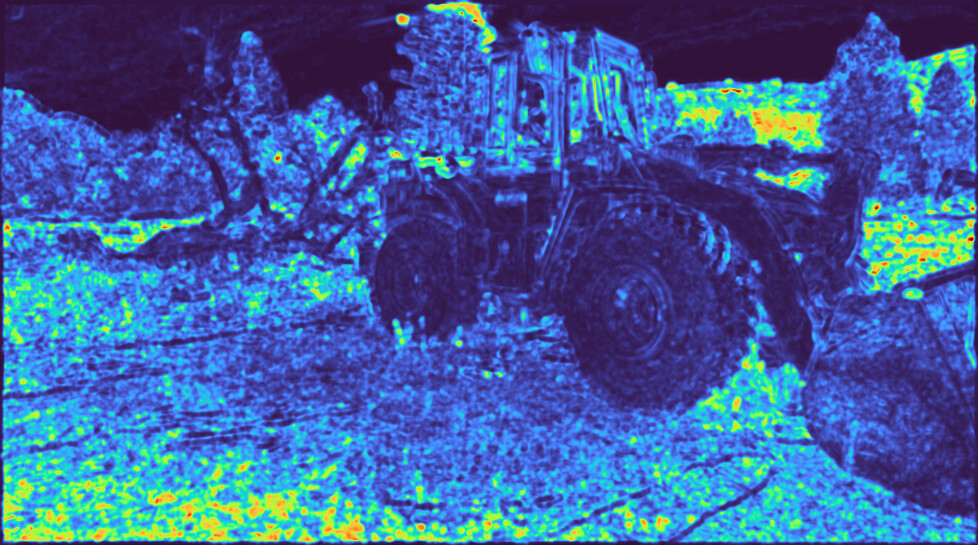

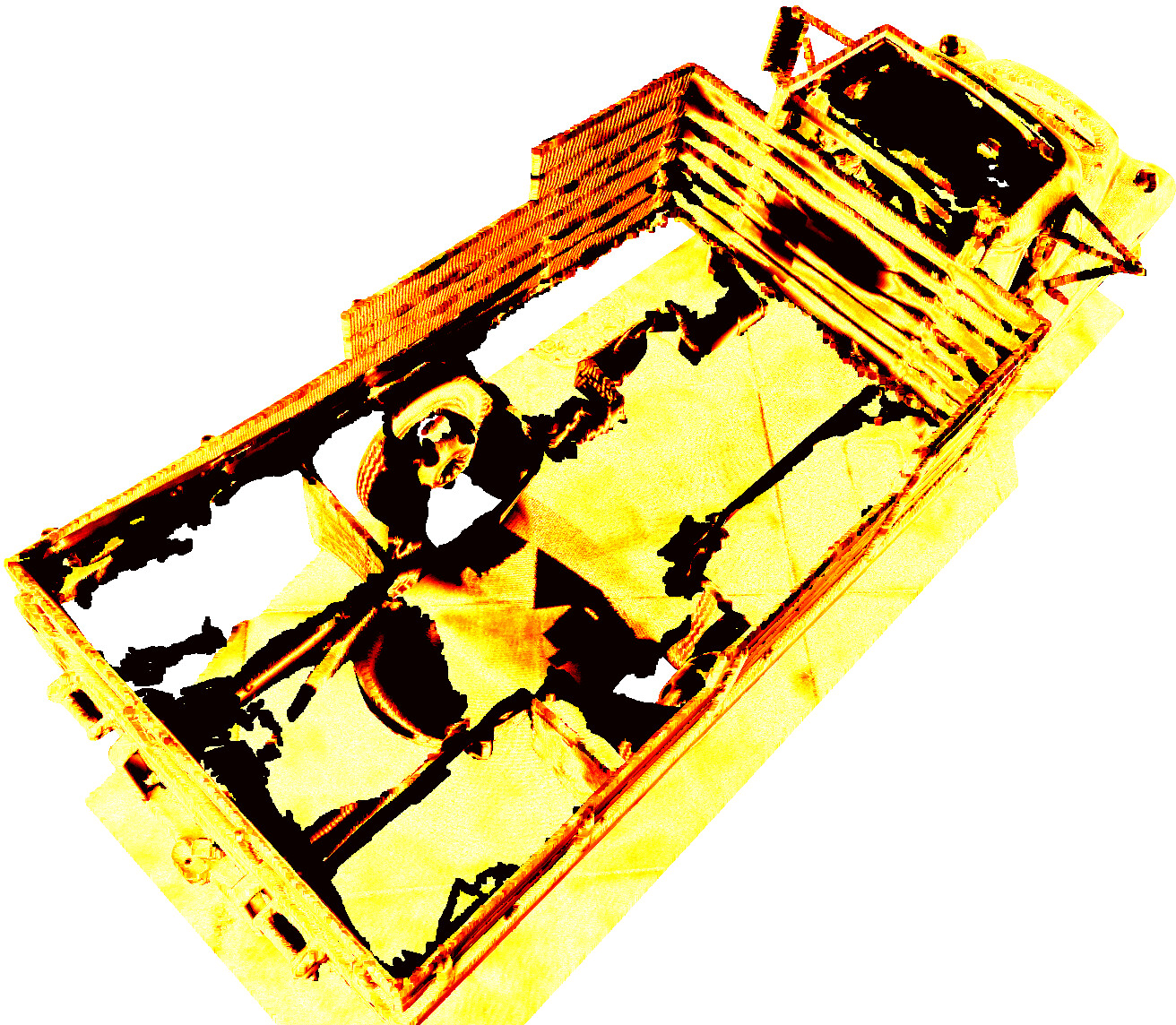

Reducing “mixing confusion” with variance losses

- When multiple blobs blend to make a pixel’s color, the system only sees the final mix, not what each blob contributed. That can hide mistakes.

- Two extra nudges help:

- Color variance loss: encourages each contributing blob’s color to be close to the final pixel color, reducing disagreement between blobs.

- Normal variance loss: makes each blob’s surface direction (normal) agree with the combined direction, keeping surfaces smooth and aligned.

- Think of it like a choir: not just sounding good together, but each singer staying on the right note.

Better handling of changing camera appearance (decoupled appearance)

- Different photos can have different brightness or contrast because of camera settings or lighting.

- They add a small per‑image correction (a lightweight neural “filter”) to adjust the rendered image.

- Important detail: they only use this correction for the brightness part of a common image comparison metric (D‑SSIM), and keep the structure/contrast parts unmodified. This keeps the 3D geometry honest while still allowing brightness fixes.

What did they find?

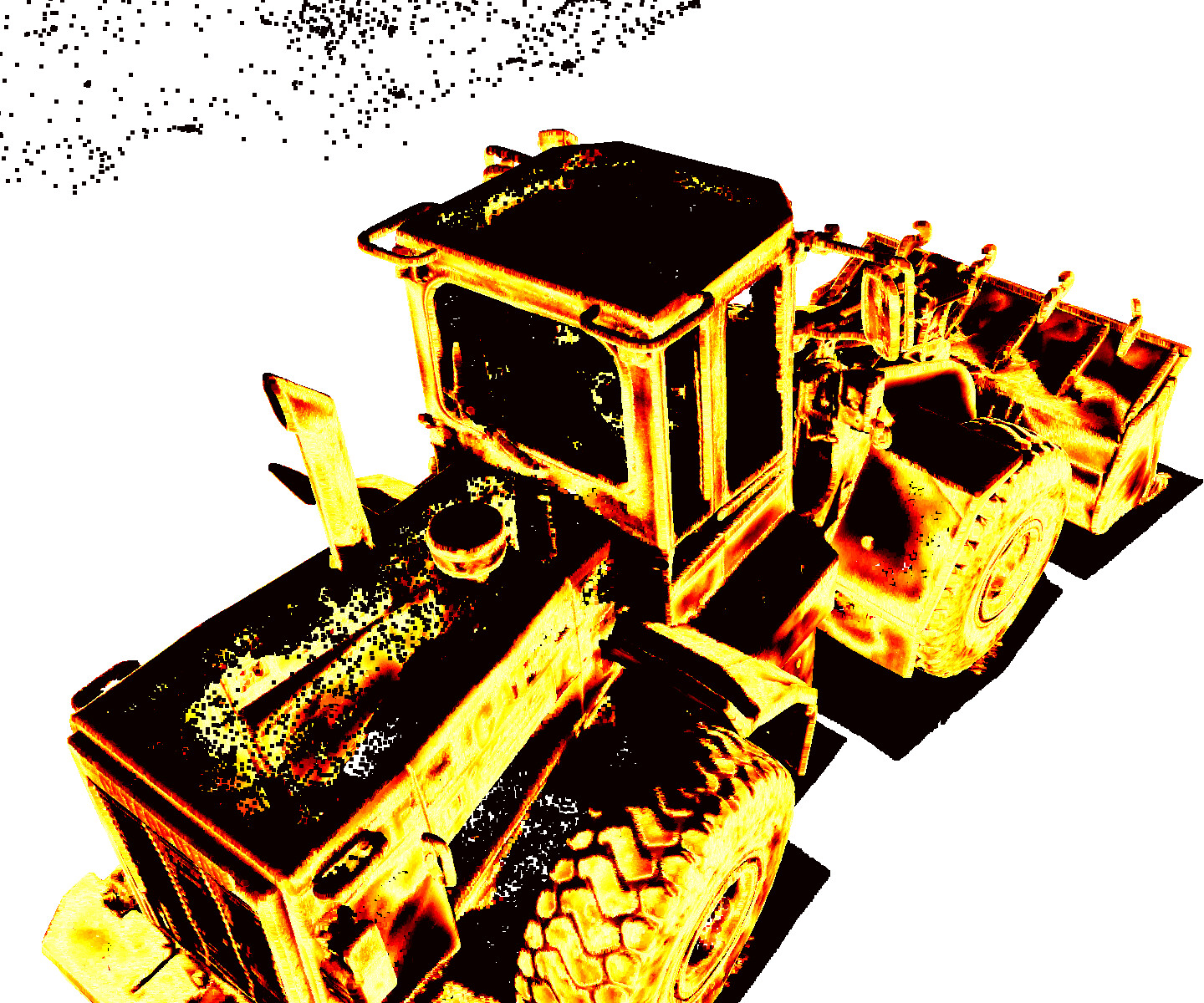

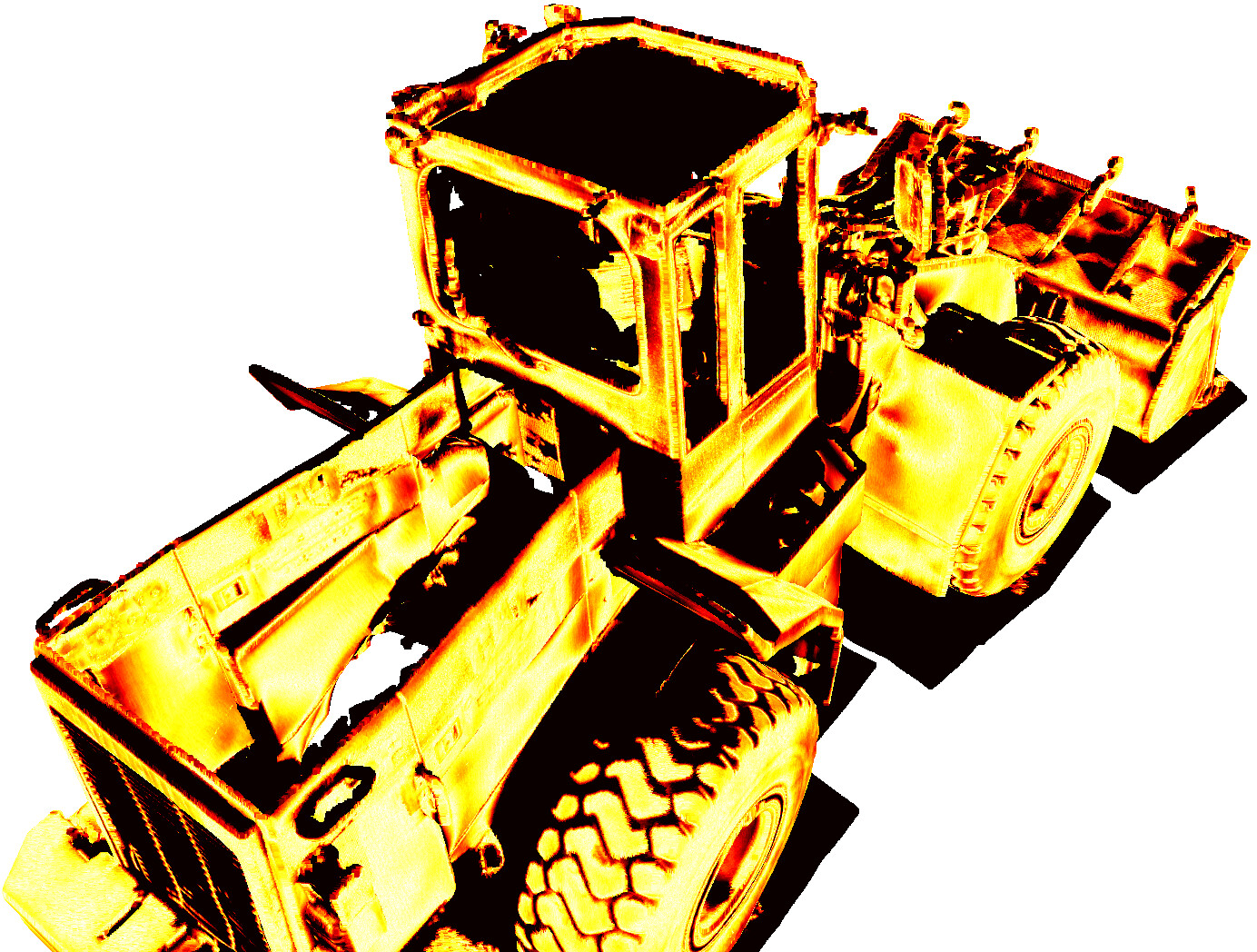

- Their method produces cleaner, more detailed meshes with fewer artifacts than other fast, self‑contained methods.

- It works especially well in hard scenes with reflections, thin structures (like leaves), and lighting changes.

- On popular benchmarks:

- Tanks and Temples (outdoor/object scenes): they reached the best overall F1 score among methods that avoid heavy extra constraints, while training in under 20 minutes on a high‑end GPU.

- ScanNet++ (indoor, real‑world scenes with tricky lighting): they also achieved the best scores, showing strong real‑world reliability.

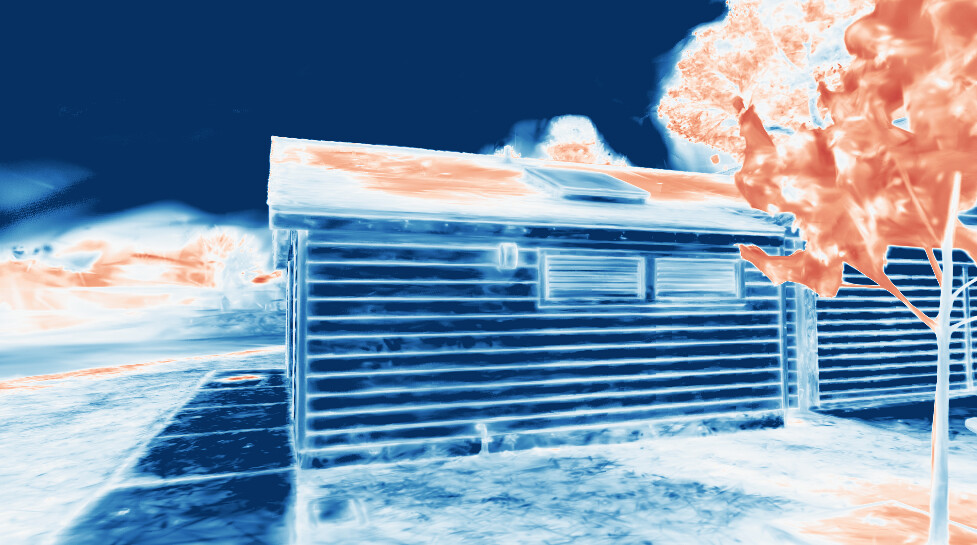

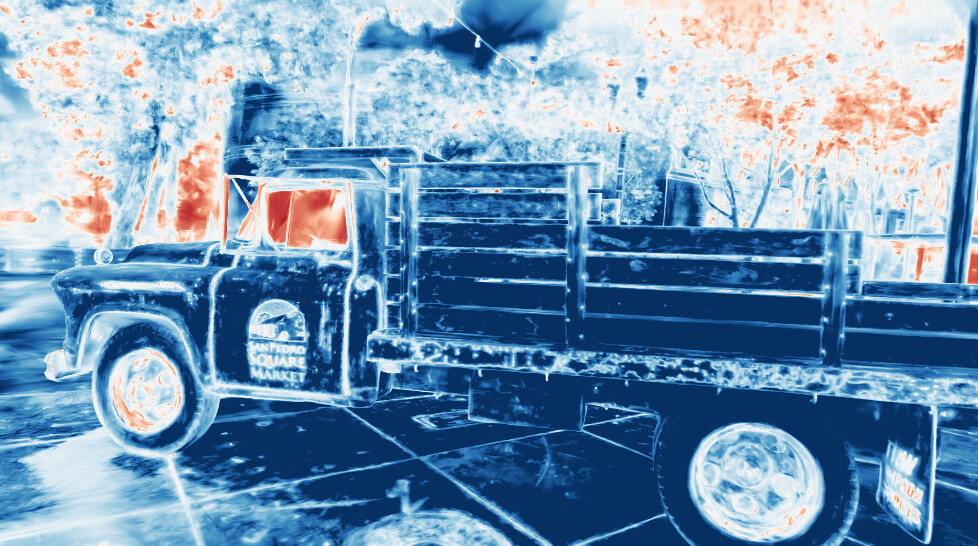

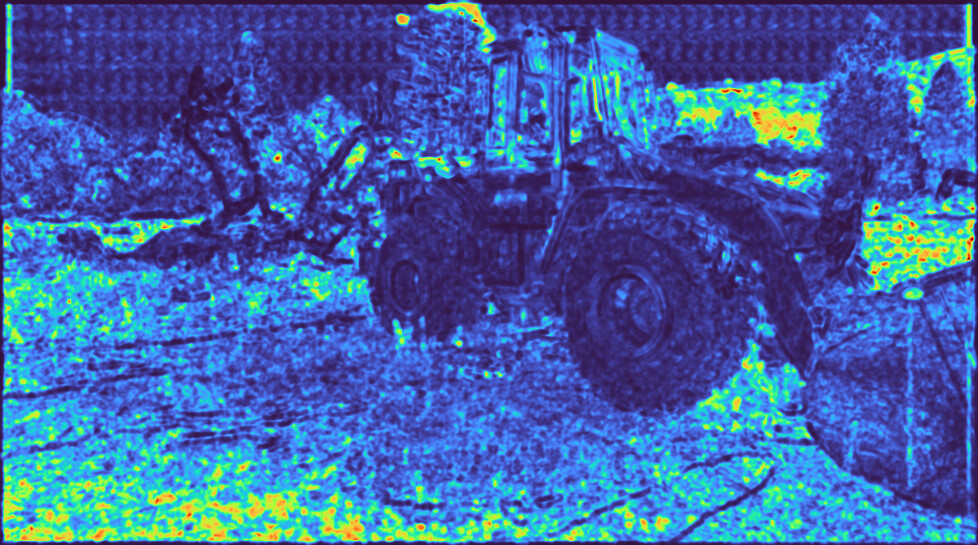

- The confidence maps learned to highlight challenging areas (like shiny surfaces or rarely seen spots), and the final geometry in those places improved notably.

- The color and normal variance losses helped blobs align their colors and directions better, making surfaces more accurate and less “fake.”

Why does this matter?

- Better 3D models from ordinary photos help in many areas: robotics (navigation), AR/VR (immersive worlds), games, architecture, and cultural heritage.

- This approach keeps the speed advantage of 3D Gaussian Splatting but reduces common quality problems—without depending on slow multi‑view tricks or massive pre‑trained networks.

- The “confidence” idea is general: it could help other 3D tasks where the system has to decide how much to trust what it sees, especially in messy real‑world conditions.

In short, the paper shows a simple but powerful way to make fast 3D reconstruction more reliable: teach the system when to trust images, encourage blobs to agree with each other, and handle lighting changes smartly. The result is cleaner, more detailed 3D surfaces in less time.

Knowledge Gaps

Unresolved Gaps, Limitations, and Open Questions

Below is a concise, actionable list of what remains uncertain or unexplored in the paper. Each item highlights a concrete direction future work could pursue.

- Sparse-view and occluded regions: The method assumes moderately dense capture. How to robustly extend confidence-steered training to sparse-view settings or heavily occluded areas (e.g., with learned priors, multi-view constraints, or explicit occlusion-aware regularization) remains unresolved.

- Distant background and large-scale scenes: Reconstruction quality degrades for far-field content; no mechanism adapts densification or supervision for distant geometry. How to couple confidence with distance-aware densification or multiscale representations is open.

- Dynamic scenes and transient content: The framework assumes static scenes. Whether confidence reliably suppresses or separates moving objects and how to extract static meshes from scenes with dynamics is not evaluated.

- Camera pose errors: Robustness to calibration/pose noise is not studied. Can confidence disentangle photometric ambiguity from pose misalignment, and can it guide joint pose refinement?

- Confidence semantics and calibration: The per-primitive scalar confidence lacks a probabilistic grounding and calibration evaluation. Are confidences predictive of reconstruction error (e.g., ECE, AUROC for error detection)? How to align β and confidence with a principled noise model?

- Confidence degeneracy and regularization: With L_conf = L_rgb·C − β log C, negative losses and unbounded C are possible; no explicit upper bound or spatial regularizer is used. What prevents confidence inflation/deflation and how to regularize (e.g., priors, smoothing, KL penalties)?

- Direction-dependent uncertainty: Confidence is a view-agnostic scalar per primitive. Many ambiguities are view-dependent; extending to direction- or illumination-dependent confidence (e.g., per-primitive anisotropic or SH-parameterized uncertainty) is unexplored.

- Interaction with occlusions and ordering: Confidence is alpha-blended like color; the behavior under heavy occlusions or mis-sorting is unexamined. Could occluded but influential primitives exploit low confidence to evade supervision?

- Auto-tuning and schedule for β and confidence activation: β is globally fixed and confidence learning starts at iteration 500. No algorithm to auto-tune β per-scene or adapt the schedule (e.g., curriculum, annealing) is proposed or evaluated.

- Confidence-aware densification stability: Modulating the gradient threshold by min(C,1) is heuristic. Sensitivity to other densification triggers (e.g., scale/opacity thresholds), theoretical stability, and failure modes (under-coverage in low-confidence areas) remain open.

- Coupling with external priors: Although the method avoids pre-trained priors for efficiency, the synergy between confidence-weighted self-supervision and monocular or multi-view priors (to handle truly underconstrained cases) is not explored.

- Appearance embedding generality: The decoupled D-SSIM design is validated on Tanks&Temples and ScanNet++, but behavior under extreme ISP variation, time-of-day changes, localized tone mapping, or heavy HDR pipelines is not comprehensively studied.

- Potential leakage of structure into appearance: Even with luminance-only compensation, per-image CNN mappings could absorb structural errors. There is no explicit regularization or diagnostics ensuring the appearance module does not hide geometry mistakes.

- Alternative photometric losses: Only D-SSIM is decoupled. The impact of multi-scale SSIM, robust perceptual losses, or learned feature losses under the same decoupling principle is not investigated.

- Variance losses side-effects: Color/normal variance losses may bias against valid multi-layered radiance (e.g., semi-transparency, sub-surface scattering) or suppress fine specular lobes. The trade-off between reducing “geometry faking” and preserving photorealistic view-dependence is not quantified.

- Impact on novel view synthesis: The effect of the proposed variance and confidence losses on NVS metrics (e.g., PSNR/SSIM/LPIPS) is not systematically reported, leaving open whether mesh gains degrade rendering fidelity.

- Depth variance/uncertainty: While color and normal variance are addressed, there is no explicit use of depth variance beyond citing distortion loss. A unified variance framework across color, normal, and depth (with shared confidence) is untested.

- Per-primitive normals: The source and reliability of per-primitive normals used in the normal-variance loss are not detailed; their estimation noise and coupling to the depth-normal consistency loss could introduce bias. Robust normal estimation strategies remain to be evaluated.

- High-frequency appearance modeling: The approach relies on SH for low-frequency view-dependence and confidence to handle the rest. Combining the framework with more expressive reflectance/lighting models (e.g., learned basis, neural BRDF) to further reduce geometry faking is unaddressed.

- Mesh extraction post-processing with confidence: Confidence is not used during extraction (e.g., confidence-weighted isovalue selection, pruning low-confidence triangles, or uncertainty-aware surface smoothing). Designing confidence-guided meshing remains open.

- Topology and watertightness: Beyond F1, mesh quality aspects such as watertightness, manifoldness, normal accuracy, and topology preservation are not evaluated or optimized.

- Evaluation scope and generalization: The ScanNet++ subset selection and omission of challenging materials (glass, water, translucent media) limit insight into generalization. Dedicated benchmarks for reflective/translucent scenes are absent.

- Scalability and resource profiling: Although runtimes are reported, memory usage, scalability to very large scenes, and throughput under constrained hardware are not characterized.

- Theoretical grounding of the confidence loss: The link between L_conf and a proper likelihood or heteroscedastic model (à la Kendall) is informal. A principled derivation (e.g., from a probabilistic model with learnable variance) and guidance to set β are missing.

- Failure analysis: The paper shows qualitative success cases and some limitations but lacks systematic failure case taxonomy (e.g., thin structures under backlighting, repeated textures, severe motion blur) and diagnostic tools for practitioners.

- Active acquisition and downstream use: While related work suggests uncertainty-guided view planning, the paper does not demonstrate how its confidence maps can drive data capture, mesh refinement, or selective post-processing in practice.

- Robustness to different SH orders and renderers: Sensitivity to SH order, alternative appearance encodings, and rasterizer variants (e.g., perspective-correct, anti-aliased) is not explored, leaving implementation portability uncertain.

- Hyperparameter transferability: Global constants (e.g., λ_color-var, λ_normal-var, β) are fixed across scenes; there is no study of their robustness across diverse datasets or an approach to adapt them automatically.

These gaps collectively point to opportunities in uncertainty calibration and theory, robustness to real-world capture artefacts, enhanced appearance/geometry disentanglement, confidence-guided meshing, and principled integration with priors and active data acquisition.

Practical Applications

Immediate Applications

The following applications can be deployed using the paper’s methods as-is or with straightforward engineering integration. They leverage: (a) confidence-weighted photometric/geometric balancing, (b) confidence-aware densification, (c) color/normal variance losses that reduce blending ambiguity, and (d) the improved decoupled appearance model that handles illumination variability.

- High-throughput photogrammetry for VFX and game asset production (Media/Entertainment, Software)

- What: Replace or augment current 3DGS/photogrammetry steps to produce cleaner, more complete unbounded meshes with fewer artifacts in <20 minutes per scene on a single RTX 4090.

- Why: Confidence-guided training reduces geometry “faking” of view-dependent effects; variance losses align Gaussians to surfaces; decoupled appearance keeps structure consistent under illumination changes.

- Outputs/workflows: “Mesh-from-video” pipeline; DCC (Blender/Unreal) import; QA panel with confidence heatmaps to flag weak regions.

- Assumptions/dependencies: Moderately dense, posed image sets (e.g., COLMAP/ARKit poses); modern GPU; scenes with sufficient coverage; open-source 3DGS code integration.

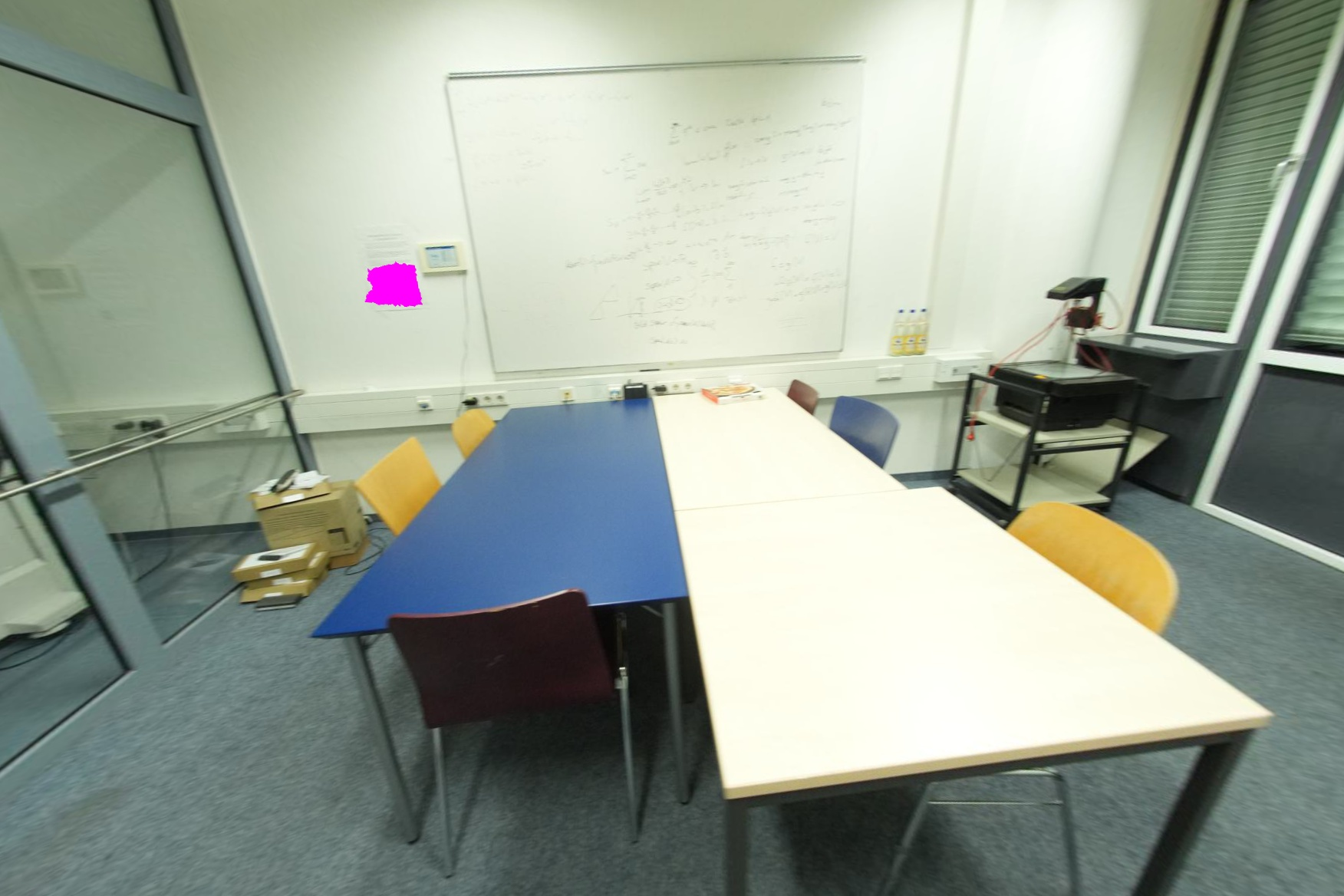

- Digital twins for AEC and facilities (AEC/FM, Digital Twins)

- What: Rapidly reconstruct interiors/exteriors with robust handling of exposure/lighting shifts (ScanNet++ conditions), producing watertight meshes for planning, clash detection, and as-builts.

- Why: The improved appearance model stabilizes SSIM’s luminance term; confidence maps identify underconstrained areas (e.g., blank walls, occluded corners) for targeted re-capture.

- Outputs/workflows: BIM-aligned mesh export, coverage/confidence reports for re-scan planning.

- Assumptions/dependencies: Sufficient view overlap; reliable camera poses; GPU compute.

- Product digitization for e-commerce, including glossy/reflective items (Retail/E-commerce)

- What: Capture assets with fewer “specular-geometry” artifacts and improved normal/color consistency.

- Why: Color/normal variance losses discourage encoding highlights with geometry; confidence maps localize problematic reflections.

- Outputs/workflows: Capture assistant that visualizes low-confidence regions; PBR-ready mesh base for downstream material fitting.

- Assumptions/dependencies: Multiple views under varying angles; controlled or known lighting improves results but not required.

- Cultural heritage and outdoor scene capture (Cultural Heritage, GIS)

- What: Efficient unbounded mesh extraction that preserves fine structures (foliage, rails) while avoiding over-smoothing.

- Why: Confidence-aware balancing prevents over-densification; variance losses limit multi-layer blend artifacts behind semi-transparent areas.

- Outputs/workflows: Field capture plus “confidence coverage” maps; curators can prioritize re-shoots in low-confidence regions.

- Assumptions/dependencies: Adequate viewpoint diversity; treatment for large-scale background sparsity remains limited.

- Robotics/offline mapping back-end (Robotics, Autonomy)

- What: Improve reconstruction quality from robot image logs for navigation and simulation, especially under mixed lighting and shiny surfaces.

- Why: Confidence learns to relax photometric loss in ambiguous regions while preserving geometric consistency; normal variance produces surface-aligned primitives.

- Outputs/workflows: Backend pipeline from SLAM pose graphs → 3DGS → mesh; uncertainty layers for planning and map QA.

- Assumptions/dependencies: Good pose estimates; moderate view density; offline GPU processing.

- XR environment ingestion for occlusion/physics (AR/VR)

- What: Precompute environment meshes for MR/VR experiences with fewer artifacts from exposure changes and view-dependent effects.

- Why: Decoupled D-SSIM luminance plus variance losses yield consistent geometry and normals.

- Outputs/workflows: Unity/Unreal import; room-scale mesh finalization; confidence overlays for level designers.

- Assumptions/dependencies: Offline training step; GPU; sufficient capture coverage.

- Real estate and claims documentation (Real Estate, Insurance/Finance)

- What: Fast interior meshes for listings, appraisals, and claims; highlight areas requiring additional evidence due to low confidence.

- Why: Confidence map is interpretable for non-technical QA; handles lighting inconsistency typical of on-site captures.

- Outputs/workflows: “Confidence report” attached to a mesh deliverable; guided re-capture list.

- Assumptions/dependencies: Moderately dense photo sets; compute access (local/cloud).

- Industrial site and asset inspection (Energy, Manufacturing)

- What: Build inspection-ready meshes of facilities, pipelines, and equipment despite metal reflections and variable illumination.

- Why: Variance losses mitigate view-dependency encoded as geometry; confidence indicates where measurements are unreliable.

- Outputs/workflows: Inspection mesh with uncertainty overlays; maintenance ticketing for low-confidence zones.

- Assumptions/dependencies: Adequate coverage and poses; high-frequency lighting variation may still need controlled capture.

- Academic dataset curation and benchmarking (Academia)

- What: Use rendered confidence to detect underconstrained regions, mislabeled poses, or insufficient coverage; quantify and compare reconstruction uncertainty across datasets.

- Why: Self-supervised confidence is a practical, continuous measure correlated with blending variance and uncertainty.

- Outputs/workflows: Dataset QA dashboards; auto-selection of frames to re-label/re-capture.

- Assumptions/dependencies: Access to training loop and logs; reproducible settings; target dataset conditions similar to Tanks & Temples/ScanNet++.

- Cloud “mesh-from-video” API and SDKs (Software/Platforms)

- What: Offer a hosted pipeline that accepts posed videos or photo sets and returns meshes plus confidence maps in minutes.

- Why: Efficient 3DGS training and extraction (<20 minutes) without dependency on large pre-trained monocular models.

- Outputs/workflows: REST/gRPC API; SDK plugins for DCC tools; CLI for batch processing.

- Assumptions/dependencies: GPU-backed infrastructure; pose estimation stage upstream or in-service.

- Education and training content creation (Education, Online Learning)

- What: Instructors and creators can quickly produce clean 3D assets of labs/classrooms with confidence-informed re-capture guidance.

- Why: Decoupled appearance yields consistent structure across mixed illumination typical of campus environments.

- Outputs/workflows: Classroom scans; tutorial materials on uncertainty-aware 3D reconstruction.

- Assumptions/dependencies: Camera pose availability; modest compute resources (local or cloud).

- Post-capture quality assurance for photogrammetry vendors (Professional Services)

- What: Adopt confidence maps and variance metrics as QA gates before delivery.

- Why: Single-pass, self-supervised signals highlight geometric/photometric ambiguity without extra priors.

- Outputs/workflows: Automated QA scripts; scorecards with thresholds for acceptance.

- Assumptions/dependencies: Consistent training setup; clients accept uncertainty metadata.

Long-Term Applications

The following applications are enabled by the paper’s ideas but require further research, real-time adaptation, or integration with complementary priors/sensors.

- Active view planning for capture apps and robots (Robotics, Mobile, Consumer Capture)

- Vision: Use per-pixel confidence to guide a user/robot to the “next best view” to rapidly close coverage gaps.

- Why: The learned confidence is an in-situ uncertainty signal; can drive exploration policies.

- Dependencies: Online/streaming training or fast incremental updates; energy-efficient inference; robust on-device SLAM; UI for human-in-the-loop guidance.

- Real-time or on-device reconstruction for AR glasses (XR, Mobile)

- Vision: Confidence-aware, heuristic-free densification that self-tunes to keep a bounded primitive count while maintaining quality in real time.

- Why: The paper shows β as a knob trading quality/primitive count; could control complexity on-device.

- Dependencies: Significant GPU/NPUs on mobile headsets; further algorithmic optimizations; partial retraining-free updates.

- Heuristic-free densification as a general 3DGS control plane (Core 3DGS, Software)

- Vision: Replace rule-based schedules with confidence-penalized objectives across tasks (novel view synthesis, upsampling, inpainting).

- Why: Confidence already stabilizes densification in ambiguous regions.

- Dependencies: Empirical validation across varied datasets/tasks; principled defaults for β; integration into mainstream 3DGS libraries.

- Multi-sensor fusion with uncertainty weighting (Robotics, Surveying)

- Vision: Fuse LiDAR, IMU, and images by letting confidence down-weight unreliable image regions (glare, motion blur) during optimization.

- Why: Confidence map acts as a learned reliability prior.

- Dependencies: Joint calibration; multi-modal differentiable rendering; robust pose synchronization.

- Material and reflectance recovery pipelines (DCC, E-commerce, Digital Twins)

- Vision: Extend variance losses and confidence to disentangle BRDF from geometry, enabling better PBR parameter estimation.

- Why: Reducing color/normal blending variance is a step toward consistent per-surface radiance.

- Dependencies: Additional reflectance models, controlled illumination capture, or learned priors.

- Sparse-view robust reconstruction with monocular or diffusion priors (Consumer Apps, Research)

- Vision: Combine confidence-based learning with pre-trained depth/normal/diffusion priors to operate in low-coverage capture regimes.

- Why: Paper notes limitations under sparse views; priors can regularize missing content, especially distant backgrounds.

- Dependencies: Model integration, compute overhead management, and bias control from priors.

- Standardization of uncertainty-aware deliverables (Policy, Public Sector)

- Vision: Require uncertainty/confidence reporting in procurement specs for digital twins of infrastructure or public spaces.

- Why: Confidence is interpretable and linked to data coverage and scene ambiguity.

- Dependencies: Cross-vendor consensus on metrics; reference benchmarks; regulator adoption.

- Telepresence and remote operations with trust overlays (Telecom, Industrial Operations)

- Vision: Stream 3D environments with live overlays of reconstruction confidence so operators know where geometry is trustworthy for decisions.

- Why: Uncertainty-aware visualization can mitigate risk in remote inspection and manipulation.

- Dependencies: Near-real-time updates; low-latency compute; robust SLAM feeds.

- Automated dataset auditing and curation at scale (Academia, Platforms)

- Vision: Use confidence/variance analytics to auto-triage massive capture corpora, prioritizing scenes for re-shoots or removal.

- Why: Scales manual QA; improves benchmark reliability.

- Dependencies: Batch automation, standardized thresholds, storage/indexing integration.

- Scene relighting and editing with structurally consistent meshes (DCC, Film/TV)

- Vision: Stable geometry and normals from this method act as a stronger base for downstream relighting and physics-consistent editing.

- Why: The decoupled appearance design improves structural consistency despite illumination changes.

- Dependencies: Coupling to material/lighting estimation modules; artist-facing tools.

- Safety-critical mapping for autonomous systems (Aviation, Automotive, Rail)

- Vision: Use confidence to gate behaviors (e.g., slow down in low-confidence reconstructions) or trigger re-mapping runs.

- Why: Quantified uncertainty is a prerequisite for risk-aware autonomy.

- Dependencies: Certification processes; rigorous testing under edge cases; deterministic runtime guarantees.

- Large-scale background completion using generative priors (Media, AEC)

- Vision: Fill in distant or sparsely observed backgrounds via multi-view diffusion while retaining confidence triggers for human verification.

- Why: Paper notes limitations in distant regions; generative priors can plausibly complete them.

- Dependencies: Responsible generative integration; provenance tracking; human-in-the-loop review.

Common Assumptions and Dependencies Across Applications

- Requires posed, moderately dense multi-view imagery; extremely sparse views still benefit from added priors.

- GPU resources are needed for sub-20-minute training; local or cloud GPUs (e.g., RTX 4090-class) recommended.

- Performance depends on view coverage, accurate camera calibration/poses, and scene characteristics (specular/transparent surfaces improve but remain challenging).

- Integration effort into existing 3DGS/photogrammetry stacks (code availability at the project page), and operationalizing confidence maps/variance metrics into QA or UI.

- For regulated or safety-critical use, additional validation and domain-specific compliance are necessary.

Glossary

- alpha-blending: A compositing process that accumulates contributions along a ray using opacity weights. "accumulated via alpha-blending:"

- anisotropic 3D Gaussians: Gaussian primitives with direction-dependent covariance used to represent 3D scenes. "3DGS~\cite{kerbl20233dgs} represents scenes using anisotropic 3D Gaussians."

- appearance embeddings: Learnable per-image features used to compensate for lighting and exposure changes. "we investigate commonly used appearance embeddings that compensate for varying illumination"

- Bayes' Rays: A method that estimates post-optimization uncertainty in radiance fields via a Bayesian approximation. "Alternatively, Bayes' Rays~\cite{goli2023bayes} estimates the uncertainty after optimization via Laplace approximation"

- Bayesian Networks: Probabilistic graphical models used here as inspiration for learning confidence to balance losses. "inspired by recent work on Bayesian Networks~\cite{kendall2017uncertainties}"

- bidirectional consistency: A training strategy enforcing mutual agreement between Gaussians and the extracted mesh. "mesh-aware optimization strategies, enforcing bidirectional consistency between Gaussians and the extracted mesh during training."

- bilateral grids: A data structure enabling efficient, edge-aware image transformations for appearance compensation. "extend learnable affine mappings with bilateral grids~\cite{wang2024bilateral}"

- blending variance: Variation in per-primitive predictions along a ray under alpha blending, indicative of geometric uncertainty. "we identify blending variance as a primary indicator of geometric uncertainty."

- color variance loss: A regularizer that penalizes differences between per-primitive colors and the ground-truth pixel color to reduce blending variance. "The color variance loss further improves performance"

- Confidence-Aware Densification: A densification strategy that adapts split/clone thresholds based on learned confidence to avoid over-densification. "{Confidence-Aware Densification}"

- covariance matrix: A matrix describing the spatial spread and orientation of a Gaussian primitive. "The covariance matrix is given by"

- D-SSIM: The dissimilarity form of the Structural Similarity Index used as a photometric loss component. "by decoupling the individual terms of the D-SSIM loss."

- D-SSIM decoupling: Using the appearance-compensated image only in specific SSIM terms to improve training signals. "a novel D-SSIM decoupling mechanism"

- decoupled appearance module: An appearance compensation component that improves consistency by separating illumination effects from structure. "our decoupled appearance module effectively resolves the varying illumination"

- depth distortion loss: A NeRF-derived regularizer that can be interpreted as minimizing variance in blended depth estimates. "We note that the depth distortion loss~\cite{barron2022mipnerf360} can also be interpreted as a variance loss on the blended depth."

- depth-normal consistency loss: A geometric constraint enforcing agreement between depths and normals rendered from Gaussians. "The per-pixel normals used in the depth-normal consistency loss~\cite{huang20242dgs, Radl2025SOF, yu2024gof} are also alpha-blended"

- diagonal scaling matrix: A per-primitive matrix of positive scales defining the Gaussian’s axis lengths. "diagonal scaling matrix "

- feed-forward reconstruction models: Methods that predict 3D structure in a single pass, used here to inspire confidence weighting. "we take inspiration from recent feed-forward reconstruction models~\cite{wang2024duster}"

- first-hit Gaussians: The front-most Gaussians along a ray that dominate the rendered color and normal. "Rendering color and normals only for the first-hit Gaussians shows how our variance losses align individual Gaussians better"

- Gaussian surfels: Surface elements modeled as Gaussians used as an alternative surface representation. "or the use of Gaussian surfels directly~\cite{huang20242dgs, Dai2024GaussianSurfels}"

- generative latent optimization: An optimization technique that learns per-image or per-instance latent codes to explain variation. "repurposes generative latent optimization~\cite{bojanowski2017optimizing}"

- image sensor processing (ISP): The camera-specific pipeline that alters captured images, requiring compensation during reconstruction. "Compensating for camera-specific image sensor processing (ISP) is central to all 3D reconstruction tasks from images."

- Laplace approximation: A second-order approximation method used to estimate posterior uncertainty around a mode. "estimates the uncertainty after optimization via Laplace approximation"

- Mahalanobis distance: A distance metric accounting for covariance, used to find a ray’s closest point to a Gaussian. "minimizing the Mahalanobis distance between the ray and the Gaussian center"

- Marching Tetrahedra: A mesh extraction algorithm that reconstructs isosurfaces (here, used for unbounded meshes). "use Marching Tetrahedra with binary search"

- monocular priors: Single-view depth or normal predictions used as additional supervision. "monocular priors~\cite{chen2024vcr, li2025geosvr, li2025vags}"

- multi-view constraints: Geometric/photometric constraints leveraging multiple views to regularize reconstruction. "Some methods~\cite{chen2024pgsr, li2025vags, zhang2025qgs} rely on multi-view geometric and photometric constraints"

- neural SDF: A neural signed distance function jointly optimized with Gaussians for mesh extraction. "Some works jointly optimize a neural SDF for mesh extraction"

- Neural Radiance Fields (NeRF): A neural volumetric representation for novel view synthesis and 3D reconstruction. "Neural Radiance Fields (NeRF)~\cite{Mildenhall2020NeRF}"

- opacity field: A 3D scalar field whose isosurface can be meshed (e.g., via marching methods). "Some works establish an opacity field in 3D to use marching tetrahedra for unbounded meshes"

- per-image latents: Learnable per-image vectors that modulate a network to compensate appearance. "consists of per-image latents "

- per-primitive confidence: A learned scalar for each Gaussian that weighs photometric loss and guides densification. "learnable per-primitive confidence values dynamically balance photometric and geometric losses."

- photometric losses: Image reconstruction losses (e.g., L1, SSIM) that drive appearance fitting in training. "Most prior art optimizes a scene representation driven by photometric losses"

- radiance fields: Functions modeling spatially varying color and density to render images from new viewpoints. "The optimization of radiance fields given imperfect, real-world data is inherently underconstrained."

- self-ensembling techniques: Methods that average predictions from multiple model states to estimate uncertainty. "density-aware or self-ensembling techniques~\cite{sunderhauf2022density, zhao2025GSEnsemble}."

- self-supervised confidence framework: A training scheme that learns per-primitive confidence without external labels to balance losses. "we present a simple and efficient alternative by introducing a self-supervised confidence framework to 3DGS"

- SO(3): The Lie group of 3D rotations, used to parameterize Gaussian orientations. "orientation "

- sparse voxel rasterization: Rendering technique that rasterizes sparse voxel structures for efficiency. "while others leverage sparse voxel rasterization~\cite{Sun2024SVRaster, li2025geosvr}."

- Spherical Harmonics (SH): A basis for representing low-frequency directional functions used to model view-dependent color. "Spherical Harmonics (SH), as currently employed, are limited to low-frequency components"

- SSIM (Structural Similarity Index): A perceptual image similarity metric decomposed into luminance, contrast, and structure. "We refer the interested reader to~\cite{nilsson2020understandingssim} for an in-depth analysis of SSIM."

- unbounded meshes: Meshes extracted without predefined spatial bounds, often requiring special extraction strategies. "Our final approach delivers state-of-the-art results for unbounded meshes while remaining highly efficient."

- variational inference: A Bayesian inference technique that approximates complex posteriors with tractable distributions. "Variational inference has been used to learn probability distributions over radiance fields"

- view-dependent appearance: Appearance that changes with viewing direction (e.g., specular highlights), challenging geometric supervision. "In challenging scenarios with high-frequency view-dependent appearance, updating the geometry may, in fact, represent the only viable way to truly minimize the photometric error;"

Collections

Sign up for free to add this paper to one or more collections.