- The paper proposes a unified orchestration framework that integrates CPUs, GPUs, and QPUs to enable scalable hybrid quantum–classical computing.

- It employs declarative Argo DAGs and Kueue for resource-aware scheduling, ensuring dynamic workload distribution and reproducible experiments.

- The system is validated via distributed quantum circuit cutting, demonstrating effective coordination and real-time observability using Prometheus and Grafana.

Kubernetes-Orchestrated Hybrid Quantum–Classical Workflows: A Technical Overview

Introduction

This work proposes a cloud-native framework for orchestrating hybrid quantum–classical workflows, leveraging Kubernetes, Argo Workflows, and Kueue to unify CPUs, GPUs, and QPUs within a single scalable orchestration layer (2603.24206). The primary objective is to address the challenges of coordinating heterogeneous resources for quantum–classical computation in High-Performance Computing (HPC) and cloud environments. The system is validated through a distributed quantum circuit cutting application, demonstrating dynamic resource allocation, reproducibility, and observability across modern hybrid computing platforms.

System Architecture

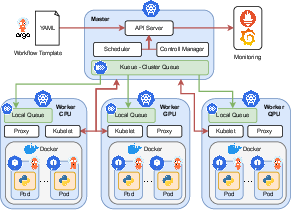

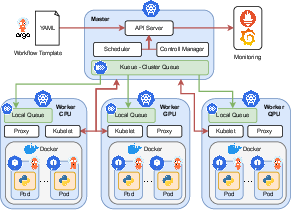

The architecture employs Kubernetes as the orchestration backbone, enabling resource abstraction, failure isolation, and scalable execution of containerized workloads across heterogeneous clusters. Argo Workflows is used to define experiments as Directed Acyclic Graphs (DAGs) in YAML, encapsulating hybrid pipelines with explicit data dependencies and modular components. Kueue introduces advanced, resource-aware queueing for dynamic, workload-driven scheduling spanning CPUs, GPUs, and quantum backends. Continuous monitoring is handled by a Prometheus-Grafana stack, providing real-time, fine-grained telemetry of resource utilization and system performance.

Figure 1: Kubernetes-native hybrid quantum–HPC workflow architecture, showing the integration of cluster orchestration, workflow management, scheduling, and monitoring.

Key features of the system include:

- Declarative workflow specification with modularity and version control.

- Transparent abstraction of physical resources, enabling reproducible and portable experiments.

- Fine-grained scheduling across heterogeneous node types using node labels, with a planned move toward Kubernetes Dynamic Resource Allocation (DRA).

- Secure integration of cloud and on-prem QPU access managed as first-class Kubernetes resources.

Workflow Management and Scheduling

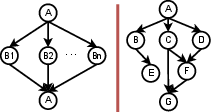

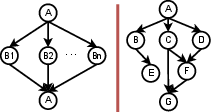

Hybrid quantum–classical tasks are modeled as Argo DAGs, expressing composable pipelines with explicit fan-out/fan-in structure and clear separation of quantum, classical simulation, and post-processing stages.

Figure 2: Example Argo DAG representations: a generic fan-out/fan-in pattern and a fully specified quantum–classical application workflow.
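The fan-out/fan-in pattern can be illustrated with a small generator for an Argo Workflow manifest. This is a minimal sketch, not the authors' workflow: the task names (`split`, `run-N`, `merge`), the template names, and the `alpine:3.20` placeholder image are all assumptions made for illustration. The manifest is emitted as JSON, which Kubernetes tooling accepts alongside YAML.

```python
import json


def dag_task(name, template, dependencies=None):
    """Build one Argo DAG task entry; 'dependencies' lists upstream task names."""
    task = {"name": name, "template": template}
    if dependencies:
        task["dependencies"] = list(dependencies)
    return task


def echo_template(name, message):
    """Placeholder container template (hypothetical image and command)."""
    return {
        "name": name,
        "container": {"image": "alpine:3.20", "command": ["echo", message]},
    }


def build_fan_out_fan_in(num_branches):
    """Return a Workflow manifest: one split task, N parallel branches, one merge."""
    branch_names = [f"run-{i}" for i in range(num_branches)]
    tasks = [dag_task("split", "split-step")]
    # Fan-out: every branch depends only on the split task, so branches can run in parallel.
    tasks += [dag_task(b, "run-step", ["split"]) for b in branch_names]
    # Fan-in: the merge task waits for all branches.
    tasks.append(dag_task("merge", "merge-step", branch_names))
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Workflow",
        "metadata": {"generateName": "fan-out-fan-in-"},
        "spec": {
            "entrypoint": "main",
            "templates": [
                {"name": "main", "dag": {"tasks": tasks}},
                echo_template("split-step", "splitting"),
                echo_template("run-step", "running"),
                echo_template("merge-step", "merging"),
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_fan_out_fan_in(3), indent=2))
```

Because the dependencies are declared rather than coded, the branch count can change without touching the split or merge logic.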

Workflow tasks are targeted at resources through node labels or explicit resource requests. In the demonstration, nodeSelector constraints map compute-intensive tasks to GPU nodes and place the containers that interface with QPU APIs in appropriately configured pods. Kueue provides queue-based scheduling with fair contention management for resources that are both scarce and heterogeneous. ResourceFlavors and LocalQueues abstract the underlying hardware, improving portability and cluster utilization as Kubernetes matures its support for device-class resource discovery.
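The relationship between these objects can be sketched as plain manifests built in Python. This is an illustrative sketch under several assumptions: the `accelerator: gpu` node label, the queue and flavor names, the quota values, and the image are hypothetical; the `kueue.x-k8s.io/v1beta1` API version may differ by Kueue release; and labeling an Argo template with `kueue.x-k8s.io/queue-name` presumes that Kueue's plain Pod integration is enabled on the cluster. A ClusterQueue is included because a LocalQueue must point at one.

```python
import json

KUEUE_API = "kueue.x-k8s.io/v1beta1"  # assumption: version depends on the Kueue release


def resource_flavor(name, node_labels):
    """ResourceFlavor: ties a named flavor to nodes carrying the given labels."""
    return {
        "apiVersion": KUEUE_API,
        "kind": "ResourceFlavor",
        "metadata": {"name": name},
        "spec": {"nodeLabels": dict(node_labels)},
    }


def cluster_queue(name, flavor, quotas):
    """ClusterQueue: cluster-wide quota for one flavor; 'quotas' maps resource -> amount."""
    resources = [{"name": r, "nominalQuota": q} for r, q in quotas.items()]
    return {
        "apiVersion": KUEUE_API,
        "kind": "ClusterQueue",
        "metadata": {"name": name},
        "spec": {
            "namespaceSelector": {},  # empty selector admits all namespaces
            "resourceGroups": [
                {
                    "coveredResources": list(quotas),
                    "flavors": [{"name": flavor, "resources": resources}],
                }
            ],
        },
    }


def local_queue(name, namespace, cluster_queue_name):
    """LocalQueue: namespaced entry point that forwards to a ClusterQueue."""
    return {
        "apiVersion": KUEUE_API,
        "kind": "LocalQueue",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"clusterQueue": cluster_queue_name},
    }


def gpu_template(name, image, queue):
    """Argo template pinned to GPU nodes and labeled for a Kueue LocalQueue."""
    return {
        "name": name,
        "metadata": {"labels": {"kueue.x-k8s.io/queue-name": queue}},
        "nodeSelector": {"accelerator": "gpu"},  # hypothetical node label
        "container": {
            "image": image,
            "resources": {"limits": {"nvidia.com/gpu": 1}},  # one whole GPU per task
        },
    }


if __name__ == "__main__":
    manifests = [
        resource_flavor("gpu-flavor", {"accelerator": "gpu"}),
        cluster_queue("hybrid-cq", "gpu-flavor", {"cpu": "16", "nvidia.com/gpu": 4}),
        local_queue("gpu-queue", "experiments", "hybrid-cq"),
        gpu_template("simulate", "example.org/simulator:latest", "gpu-queue"),
    ]
    print(json.dumps(manifests, indent=2))
```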

Integration of Quantum and Classical Resources

The framework supports both direct (on-prem) and indirect (cloud API) QPU integration. On-prem QPUs are exposed as worker nodes in the cluster, allowing low-latency quantum-classical interaction. For cloud quantum providers, API credentials are injected into pods using Kubernetes Secrets and persistent volume mounts, enabling authenticated remote job submission from workflow containers. Thus, workloads can be transparently targeted to quantum hardware regardless of access modality or physical location.
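As a rough illustration of the cloud path, the sketch below shows how a workflow container might pick up a provider credential that Kubernetes has injected from a Secret. The environment variable name `QPU_API_TOKEN`, the mount path `/etc/qpu/token`, and the helper names are assumptions for illustration; the paper does not specify them.

```python
import os
from pathlib import Path


def secret_volume(secret_name, mount_path="/etc/qpu"):
    """Pod-spec fragments that mount a Kubernetes Secret read-only into a container."""
    volume = {"name": "qpu-credentials", "secret": {"secretName": secret_name}}
    mount = {"name": "qpu-credentials", "mountPath": mount_path, "readOnly": True}
    return volume, mount


def load_qpu_token(env_var="QPU_API_TOKEN", mount_path="/etc/qpu/token"):
    """Return the API token from the environment, falling back to a mounted file.

    Both locations are hypothetical; a real deployment would use whatever
    names its Secret and pod spec define.
    """
    token = os.environ.get(env_var)
    if token and token.strip():
        return token.strip()
    path = Path(mount_path)
    if path.is_file():
        return path.read_text(encoding="utf-8").strip()
    raise RuntimeError(
        f"no QPU credential found in ${env_var} or at {mount_path}"
    )
```

Keeping the lookup in one helper means the same container image can run against different providers, with only the Secret changing between deployments.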

Case Study: Distributed Circuit Cut Workflow

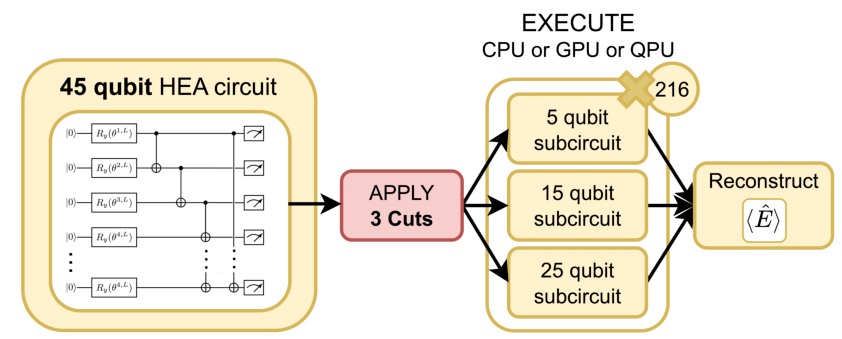

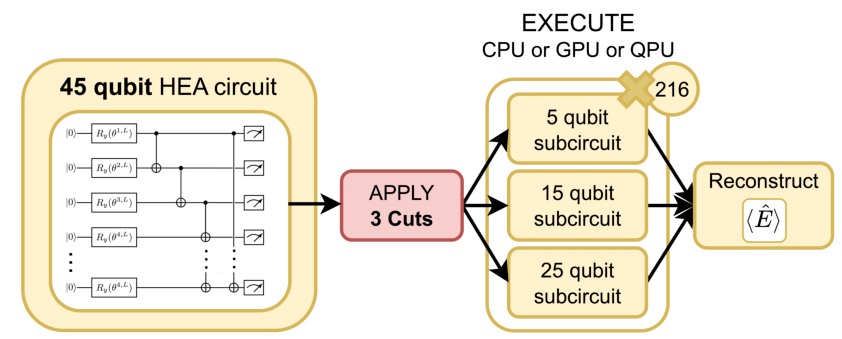

As a proof of concept, the authors implement distributed quantum circuit cutting, a paradigmatic hybrid workload. Large n-qubit circuits are decomposed with Qdislib and Qiskit into smaller “fragments”, which are then dispatched across CPU, GPU, and QPU resources for execution. Backend selection (CPU/GPU/QPU) is based on circuit size and serves to illustrate the flexibility of the orchestration layer.

Figure 3: Schematic application of three cuts in a hardware-efficient ansatz (HEA) quantum circuit, illustrating partitioning for distributed execution.
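Size-based backend selection of the kind described above could look like the following. The thresholds and the direction of the mapping (small fragments to CPU, mid-sized to GPU, the largest to QPU) are assumptions chosen for illustration; the source only states that the choice depends on circuit size.

```python
def select_backend(num_qubits, cpu_max_qubits=20, gpu_max_qubits=30):
    """Pick a backend label for a circuit fragment from its qubit count.

    Thresholds are hypothetical defaults, not values from the paper.
    """
    if num_qubits <= 0:
        raise ValueError("num_qubits must be positive")
    if cpu_max_qubits > gpu_max_qubits:
        raise ValueError("cpu_max_qubits must not exceed gpu_max_qubits")
    if num_qubits <= cpu_max_qubits:
        return "cpu"
    if num_qubits <= gpu_max_qubits:
        return "gpu"
    return "qpu"


if __name__ == "__main__":
    for width in (8, 24, 40):
        print(width, "->", select_backend(width))
```

A static rule like this is easy to reproduce, but it ignores queue depth and device performance.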

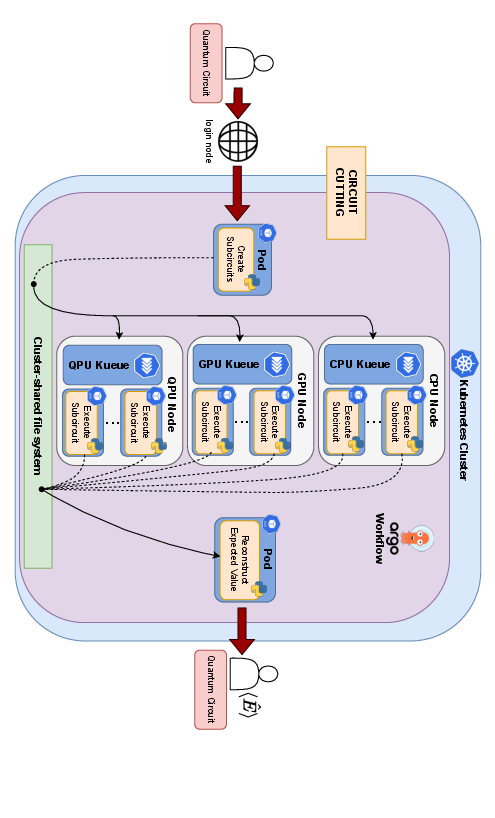

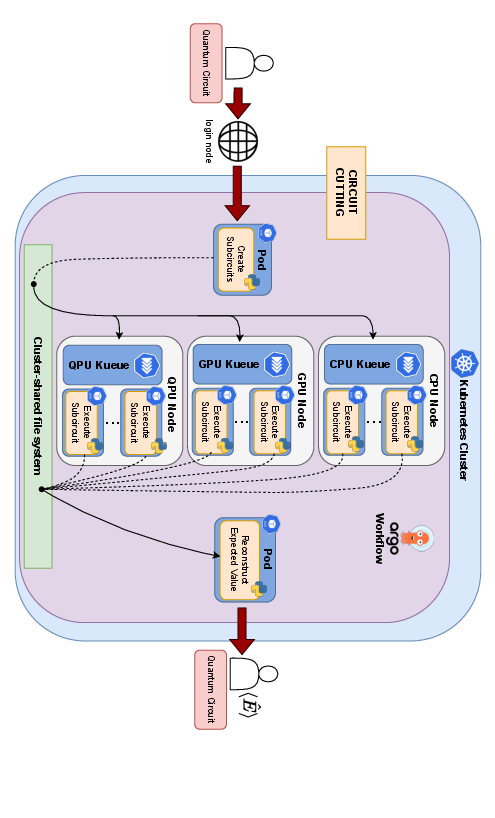

The three-stage Argo pipeline consists of (1) subcircuit generation, (2) parallel backend execution, and (3) classical output reconstruction. Each fragment is serialized and routed through the Argo DAG, with Kueue balancing resource assignments to maximize throughput and minimize idle time. Because the fragments are independent, they can run concurrently across all available backends.

Figure 4: Concurrent execution of subcircuits across CPU, GPU, and QPU nodes with Argo managing dependencies and Kueue optimizing resource usage.
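The control flow of the three stages can be mimicked locally with a thread pool. This is only a structural sketch: the stage functions are stand-ins that track fragment widths, not real circuit cutting or reconstruction (Qdislib and Qiskit are not invoked), and splitting a circuit by width alone is a simplification made for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def generate_fragments(num_qubits, max_fragment_qubits):
    """Stage 1 stand-in: split a circuit width into a list of fragment widths."""
    if num_qubits <= 0 or max_fragment_qubits <= 0:
        raise ValueError("widths must be positive")
    sizes = []
    remaining = num_qubits
    while remaining > 0:
        size = min(max_fragment_qubits, remaining)
        sizes.append(size)
        remaining -= size
    return sizes


def execute_fragment(fragment_qubits):
    """Stage 2 stand-in: pretend to run one fragment and return a result record."""
    return {"qubits": fragment_qubits, "status": "done"}


def reconstruct(records):
    """Stage 3 stand-in: fan-in step that checks and summarizes all fragment records."""
    if any(r["status"] != "done" for r in records):
        raise RuntimeError("at least one fragment did not finish")
    return {"fragments": len(records), "total_qubits": sum(r["qubits"] for r in records)}


def run_pipeline(num_qubits, max_fragment_qubits, workers=4):
    """Run the stages in order; fragments execute concurrently in stage 2."""
    fragments = generate_fragments(num_qubits, max_fragment_qubits)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in input order, so reconstruction sees a stable sequence.
        records = list(pool.map(execute_fragment, fragments))
    return reconstruct(records)


if __name__ == "__main__":
    print(run_pipeline(10, 4))
```

In the actual system, Argo plays the role of the thread pool and Kueue decides when each fragment's pod is admitted.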

Workflow execution is fully declarative, launched via a single kubectl command, and supports arbitrary scaling in the number and type of backend resources, subject to cluster policy and available hardware.

Monitoring, Observability, and Limitations

Prometheus and Grafana provide continuous metrics collection, supporting live observation of task state, hardware utilization, queue delays, and error rates. Fine-grained observability is crucial for debugging, performance tuning, and system benchmarking in hybrid environments.
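Metrics collected this way can also be read programmatically through the Prometheus HTTP API. The sketch below issues an instant query against `/api/v1/query`; the server address `http://prometheus:9090` and the metric name `kueue_pending_workloads` in the usage example are assumptions that depend on the deployment and the Kueue version.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def build_query_url(base_url, promql):
    """Return the instant-query URL for a PromQL expression."""
    return f"{base_url.rstrip('/')}/api/v1/query?{urlencode({'query': promql})}"


def instant_query(base_url, promql, timeout=5.0):
    """Run an instant query and return the list of result samples."""
    with urlopen(build_query_url(base_url, promql), timeout=timeout) as resp:
        payload = json.load(resp)
    if payload.get("status") != "success":
        raise RuntimeError(f"Prometheus query failed: {payload.get('error')}")
    return payload["data"]["result"]


if __name__ == "__main__":
    # Hypothetical server address and metric name; only the URL is printed here.
    print(build_query_url("http://prometheus:9090", "sum(kueue_pending_workloads)"))
```

Calling `instant_query` with the same arguments would return the current samples, provided the server is reachable from where the script runs.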

The current implementation exhibits several known limitations:

- Absence of standard QPU resource interfaces in Kubernetes limits abstraction and late binding.

- GPU tasks are still allocated exclusively rather than fractionally, constraining total concurrency; DRA adoption is expected to mitigate this.

- Input/output bottlenecks can arise from persistent volume saturation at scale.

- Backend selection is not performance-aware or telemetry-driven, hindering optimal resource assignment in production.

- Integration with legacy schedulers (e.g., SLURM) remains outside the present architecture but is feasible for hybrid deployments.

Implications and Future Directions

Practically, this framework enables scalable execution of complex quantum–classical pipelines in modern research environments, supporting workloads relevant to quantum chemistry, quantum machine learning (QML), and distributed simulation. The portability and reproducibility inherent in this design accelerate experimentation and facilitate scientific validation of new hybrid algorithms. Theoretically, it underpins new abstractions for device-agnostic quantum–classical resource scheduling.

Future directions include intelligent, telemetry-driven backend selection, support for parameterized variational circuits with quantum–classical feedback, expansion to multi-cluster and federated workflows, and reduction of I/O latency through distributed in-memory data layers.

Conclusion

Kubernetes-native orchestration of hybrid quantum–classical workflows represents a critical advancement toward scalable, flexible, and reproducible quantum–HPC computing (2603.24206). By abstracting heterogeneous resources and leveraging mature cloud-native primitives, this framework provides a robust operational foundation for the evolving field of distributed quantum applications, supporting both near-term hybrid algorithms and future fault-tolerant quantum–classical integration.