End-to-End Training for Unified Tokenization and Latent Denoising

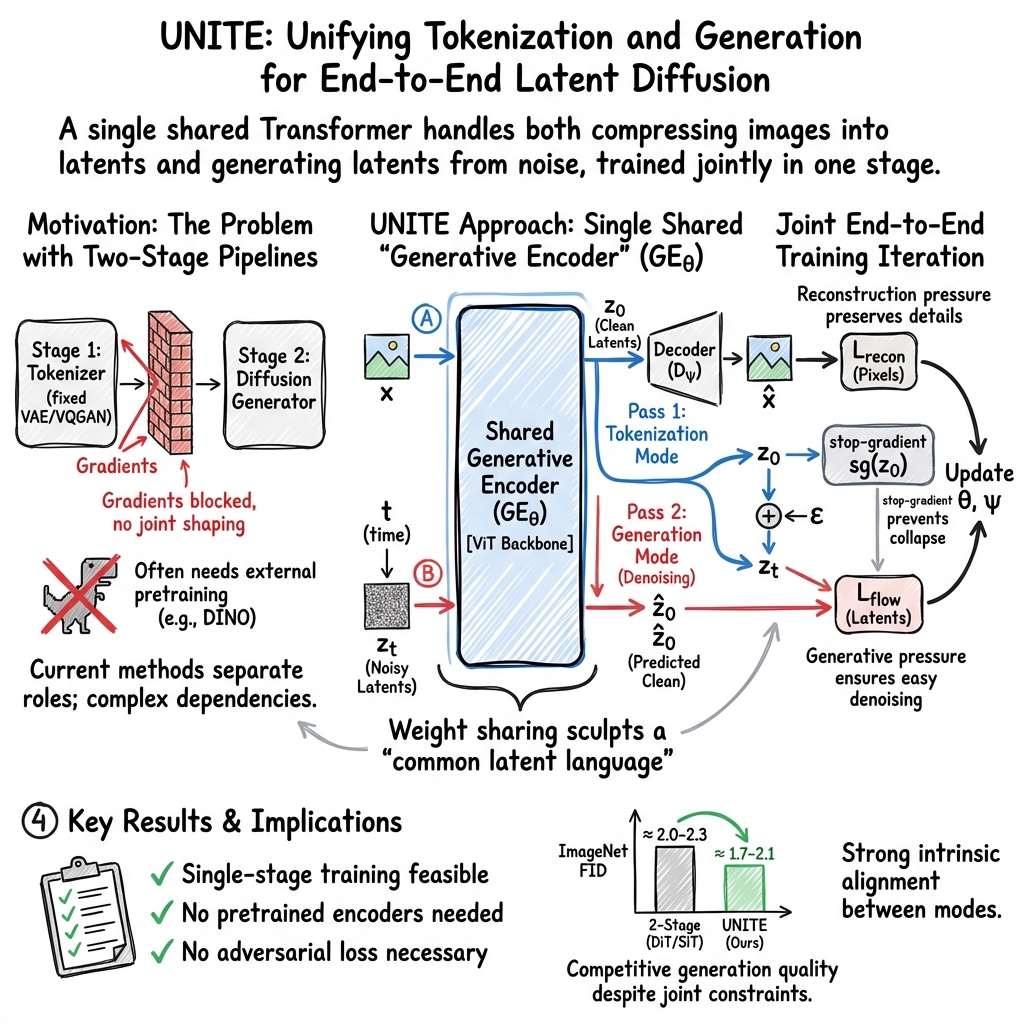

Abstract: Latent diffusion models (LDMs) enable high-fidelity synthesis by operating in learned latent spaces. However, training state-of-the-art LDMs requires complex staging: a tokenizer must be trained first, and only then can the diffusion model be trained in the frozen latent space. We propose UNITE, an autoencoder architecture for unified tokenization and latent diffusion. UNITE consists of a Generative Encoder that serves as both image tokenizer and latent generator via weight sharing. Our key insight is that tokenization and generation can be viewed as the same latent inference problem under different conditioning regimes: tokenization infers latents from fully observed images, whereas generation infers them from noise together with text or class conditioning. Motivated by this, we introduce a single-stage training procedure that jointly optimizes both tasks via two forward passes through the same Generative Encoder. The shared parameters enable gradients to jointly shape the latent space, encouraging a "common latent language". Across image and molecule modalities, UNITE achieves near-state-of-the-art performance without adversarial losses or pretrained encoders (e.g., DINO), reaching FID 2.12 and 1.73 for Base and Large models on ImageNet 256×256. We further analyze the Generative Encoder through the lenses of representation alignment and compression. These results show that single-stage joint training of tokenization and generation from scratch is feasible.

Explain it Like I'm 14

Overview

This paper introduces UNITE, a new way to train image (and molecule) generators. Instead of first training a “tokenizer” (which compresses an image into a small code) and then training a separate “generator” (which turns noise into that kind of code), UNITE trains both at the same time in a single model. The key idea: the same network that turns images into compact “tokens” can also learn to turn random noise into those same tokens, making training simpler and often better.

What questions were the researchers asking?

They explored three main questions:

- Can one model learn both how to compress images (tokenization) and how to generate them (from noise) at the same time?

- If we train these two skills together, does the shared “code” space become easier to use for both tasks?

- Is this simpler, single-stage approach good enough to match or beat leading methods, even without using extra help from big pretrained models?

How does UNITE work? (Simple explanation of the approach)

Think of images as long books full of details. Computers often:

- First make short “index cards” (tokens) that summarize the book (tokenization).

- Then learn how to write new “index cards” that look like real ones, and finally expand those cards back into full books (generation).

Most systems train the summarizer and the writer separately. UNITE uses one “librarian” for both.

Here’s the setup in everyday terms:

- Generative Encoder (the shared “librarian”):

  - Tokenizer mode: It reads image patches (pieces of the image) and writes a short summary (the latent tokens).

  - Generator mode: It starts from random noise and learns to clean it up step by step to reach a realistic summary (the same kind of latent tokens).

- Decoder: Another network turns those latent tokens back into a full image.

Training happens in two passes on each image:

- Tokenization pass: The model compresses the image into compact tokens and the decoder tries to rebuild the original image. This teaches faithful reconstruction.

- Denoising pass: Noise is added to those tokens, and the same model is asked to predict the clean tokens from the noisy ones. This teaches generation (how to go from noise to a real-looking code).
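The two passes can be sketched with a toy model to show how the losses are assembled. This is a minimal illustration under stated assumptions, not the paper's implementation: `ToyGenerativeEncoder`, `toy_decoder`, and `two_pass_losses` are hypothetical names, the "network" is a single shared scalar, and the interpolation `z_t = (1 - t) * z0 + t * eps` is an assumed rectified-flow convention. Plain Python has no autograd, so the stop-gradient is only marked by a comment.

```python
import random

def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

class ToyGenerativeEncoder:
    """Hypothetical stand-in: one shared parameter serves both modes."""

    def __init__(self, scale):
        self.scale = scale  # the single shared "weight"

    def tokenize(self, image):
        # Tokenizer mode: image -> clean latent z0.
        return [self.scale * x for x in image]

    def denoise(self, z_t, t):
        # Generator mode: noisy latent -> predicted clean latent.
        # A deliberately crude rule; a real model would be a network.
        return [self.scale * (1.0 - t) * z for z in z_t]

def toy_decoder(z0):
    """Hypothetical decoder; the identity map, for illustration only."""
    return list(z0)

def two_pass_losses(model, image, t, rng):
    # Pass 1 (tokenization): compress, then reconstruct.
    z0 = model.tokenize(image)
    recon_loss = mse(toy_decoder(z0), image)

    # Pass 2 (denoising): corrupt z0 and ask the same model to predict it back.
    # Assumed interpolation: z_t = (1 - t) * z0 + t * eps.
    eps = [rng.gauss(0.0, 1.0) for _ in z0]
    z_t = [(1.0 - t) * z + t * e for z, e in zip(z0, eps)]
    target = list(z0)  # with autograd, a stop-gradient would wrap this target
    denoise_loss = mse(model.denoise(z_t, t), target)
    return recon_loss, denoise_loss

rng = random.Random(0)
model = ToyGenerativeEncoder(scale=1.0)
recon, den = two_pass_losses(model, [1.0, 2.0, 3.0], t=0.0, rng=rng)
```

In a real training loop the two losses would be combined (with some weighting) and backpropagated through the shared parameters; the weighting and step schedule are not specified here.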

A few terms explained:

- Latent space: The “index card” space—a compact representation of the image.

- Denoising/diffusion/flow: Like taking a very blurry picture and learning how to sharpen it in small steps until it looks clear. Here, it’s done in the token space, not directly on pixels.

- “Stop-gradient”: During training, the clean tokens used as denoising targets are treated as fixed, so the denoising loss cannot send learning signal back through them. This helps keep the model from collapsing to bad solutions.
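The "sharpen in small steps" idea can be sketched as a simple Euler loop in latent space. This is a hedged illustration, assuming the convention `z_t = (1 - t) * z0 + t * eps` and a model that predicts the clean latent directly; `sample_latent` and `predict_clean` are hypothetical names, and the paper's actual sampler may differ.

```python
import random

def sample_latent(predict_clean, dim, num_steps, rng):
    """Euler integration from t=1 (pure noise) down to t=0 (clean latent)."""
    z = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    dt = 1.0 / num_steps
    for i in range(num_steps):
        t = 1.0 - i * dt  # current noise level, always > 0 inside the loop
        z0_hat = predict_clean(z, t)
        # Under the assumed interpolation, dz/dt = eps - z0 = (z_t - z0) / t.
        velocity = [(zi - hi) / t for zi, hi in zip(z, z0_hat)]
        # Step toward lower noise.
        z = [zi - dt * vi for zi, vi in zip(z, velocity)]
    return z

# An "oracle" predictor that always returns the same clean latent, to check
# that the loop lands on it; a trained model would replace this.
clean = [0.5, -1.0, 2.0]
result = sample_latent(lambda z, t: clean, dim=3, num_steps=10, rng=random.Random(0))
```

With a perfect predictor every step stays on the straight line between the initial noise and the clean latent, which is why few steps suffice in this toy case; a learned predictor is imperfect, so step count affects quality.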

What did they find, and why is it important?

Key findings (summarized):

- One model can do both jobs well: UNITE achieves near state-of-the-art image quality on ImageNet 256×256 with a single training stage and no giant pretrained helper models.

- High-quality generation: Their models reach strong FID scores (a common “how real do images look?” metric)—for example, FID 2.12 (Base) and 1.73 (Large). Lower is better.

- Strong reconstruction: The tokenizer quality is also competitive, even without using adversarial (GAN) tricks. With a light extra fine-tune just on the decoder, reconstruction quality becomes excellent.

- Works beyond images: On a molecule dataset (QM9), UNITE gets very high reconstruction accuracy and diversity, showing the idea generalizes to other fields where big pretrained encoders don’t exist.

- Why the single model helps: Analyses show that tokenization and denoising naturally learn very similar internal features. Weight sharing reduces redundancy and keeps a “common latent language,” while differences between modes are handled mostly by normalization (think “calibration knobs”) rather than changing the core computations.

- Training dynamics: The two goals—reconstructing details and being easy to denoise—sometimes push in different directions. Surprisingly, even when the denoising loss goes up a bit, image quality can still improve. The model finds a balance that keeps tokens detailed but robust to noise.
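To make the FID numbers in the findings above more concrete, the sketch below computes the Fréchet distance between two Gaussians in the one-dimensional case. It is only an illustration: the real metric fits multivariate Gaussians to Inception features and uses full covariance matrices, and the function names here are hypothetical.

```python
import statistics

def gaussian_fit(samples):
    """Return (mean, standard deviation) of a list of scalar features."""
    return statistics.fmean(samples), statistics.pstdev(samples)

def frechet_distance_1d(mu_a, sigma_a, mu_b, sigma_b):
    """Squared Fréchet distance between two univariate Gaussians.

    General form: ||mu_a - mu_b||^2 + Tr(S_a + S_b - 2 (S_a S_b)^(1/2)).
    In one dimension the trace term reduces to (sigma_a - sigma_b)^2.
    """
    return (mu_a - mu_b) ** 2 + (sigma_a - sigma_b) ** 2

# Toy "features" for real and generated data: same spread, shifted mean.
real = [0.0, 1.0, 2.0, 3.0]
fake = [1.0, 2.0, 3.0, 4.0]
score = frechet_distance_1d(*gaussian_fit(real), *gaussian_fit(fake))
```

The distance is zero only when both the means and the spreads match, which is why lower values indicate generated images whose feature statistics sit closer to those of real images.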

Why this matters:

- Simpler pipelines: One model, one training job, fewer moving parts.

- Less dependence on external pretrained encoders: Makes the approach more flexible, especially in domains like molecules where such encoders are scarce.

- Competitive performance: It matches or beats many two-stage systems while being easier to train and deploy.

What’s the potential impact?

UNITE shows that training “how to summarize” and “how to generate” together can make both better. This could:

- Speed up research and reduce engineering complexity for image, video, and scientific generators.

- Make powerful generative models more accessible in areas without large pretrained backbones.

- Inspire new designs where shared networks learn multiple related tasks at once, saving memory and training time.

In short, the paper demonstrates that a single, jointly trained model can learn a shared “language” for both compressing and creating content, leading to high-quality results with a simpler and more general approach.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The paper advances a unified, single-stage framework for tokenization and latent denoising, but leaves several concrete questions unresolved. Future work could address the following:

- Scaling and resolution transfer:

- How does UNITE perform at higher resolutions (e.g., ImageNet-512, 1024) and across varied aspect ratios, including fidelity–compute trade-offs and stability beyond 256×256?

- Do the observed benefits of weight sharing and stop-gradient persist at larger scales and different resolutions?

- Text-conditional and multimodal generation:

- The experiments are class-conditional; how well does UNITE extend to text-to-image (e.g., COCO, LAION) with strong semantic alignment metrics (CLIP score, T2I-CompBench)?

- Can the shared Generative Encoder be extended to multimodal inputs (e.g., audio, video) without separate pretrained encoders, and what modifications are needed to conditioning interfaces?

- Latent compression–fidelity frontier:

- The number of register tokens K and latent dimensionality are central but under-ablated. What is the precise trade-off curve between compression ratio (K, latent width) and both rFID/gFID, as well as perceptual and semantic fidelity?

- How does patch size and tokenization granularity affect generative robustness and reconstruction detail?

- Objective coupling and gradient flow:

- Stop-gradient through the clean latent improves alignment in this paper, but under what conditions (datasets, losses, architectures) is stop-gradient essential vs. detrimental?

- Can adaptive or learned coupling (e.g., gradient surgery, orthogonalization, dynamic loss weighting/schedules) outperform a fixed stop-gradient heuristic?

- What alternative anti-degeneracy strategies (e.g., contrastive terms, information bottlenecks, Jacobian penalties) allow denoising gradients to inform tokenization without harming representational sharing?

- Normalization and calibration:

- The paper suggests tokenization vs. denoising differ mainly in normalization/scale regimes, but offers limited ablations. How do LayerNorm vs. RMSNorm/ScaleNorm, per-mode affine parameters, and shared vs. split norms quantitatively affect alignment and performance?

- Can explicit calibration layers or mode-specific adapters improve reuse while reducing interference?

- Flow parameterization and noise schedules:

- Only x0-prediction with rectified flow is explored; how do ε-prediction, v-prediction, or alternative flow/diffusion schedules (e.g., variance-preserving, cosine, EDM) impact stability, convergence, and sample quality?

- How sensitive is training to the reconstruction-path noise injection σ and its schedule, and is there an optimal co-design with the flow schedule?

- Training dynamics and stability:

- The “adversarial-like” interaction between objectives is qualitatively described but not formalized. Are there convergence diagnostics, stability guarantees, or phase diagrams relating loss weights, step ratios, and equilibrium behavior?

- What is the sensitivity to the denoising:reconstruction step ratio across datasets and scales, and can curriculum strategies improve stability?

- Compute and efficiency accounting:

- Training FLOPs are partially reported; how does end-to-end UNITE compare to two-stage pipelines in total wall-clock, peak memory, and energy to reach a target FID at fixed NFE?

- Inference cost vs. quality curves (quality vs. steps) and distillation feasibility (e.g., consistency models, latency-targeted variants) are not evaluated.

- Decoder design and reconstruction limits:

- Reconstruction quality required decoder-only GAN fine-tuning to reach top rFID; can fully end-to-end (no adversarial) close the gap with improved decoders, perceptual losses without pretrained VGG, or learned perceptual metrics?

- How does decoder capacity vs. encoder capacity balance affect reconstruction fidelity and generative robustness?

- External supervision and LPIPS reliance:

- Although the framework is “from scratch,” LPIPS depends on a pretrained VGG. What is the impact of removing LPIPS or replacing it with self-distilled metrics to truly eliminate external supervision?

- Representational utility and semantics:

- Beyond CKA and rFID/gFID, are the learned latents useful for downstream tasks (linear probing, retrieval, segmentation)? Do they capture semantic structure comparable to DINO/MAE spaces?

- How isotropic or well-conditioned is the learned latent space (e.g., eigenspectrum, whitening), and does this correlate with denoisability?

- Diversity and mode coverage:

- FID/IS alone are insufficient; precision/recall for generative models, density–coverage metrics, and distributional coverage (class-conditional calibration) are not reported.

- How does classifier-free guidance affect diversity vs. fidelity in UNITE relative to two-stage baselines?

- Robustness and OOD behavior:

- How robust are tokenization and generation under distribution shifts (e.g., ImageNet-V2, ImageNet-A), corruptions (ImageNet-C), or adversarial perturbations to inputs/latents?

- Does the shared encoder exacerbate or mitigate failure modes under OOD inputs compared to decoupled pipelines?

- Molecule domain specifics:

- The QM9 evaluation reports validity/uniqueness and reconstruction, but property distribution matching, conditional property control, and conformational diversity are not assessed.

- Does the approach capture SE(3) symmetries or require equivariant architectures for larger or 3D-heavy datasets? How does UNITE compare on more complex datasets (e.g., ZINC, GEOM, drug-like corpora)?

- Generalization to other scientific domains:

- Can the framework extend to graphs, proteins, or materials without pretrained encoders, and what inductive biases (equivariance, topology-aware tokenization) are necessary?

- Interpretability and trajectory analysis:

- Intermediate denoising trajectories are visualized qualitatively; can quantitative metrics (PSNR/SSIM across timesteps, feature drift, path length) systematically characterize trajectory cleanliness and its link to final sample quality?

- Are there identifiable latent directions corresponding to semantic edits, and can the shared GE support controllable editing/inversion?

- Editing, conditioning, and downstream tasks:

- Does UNITE support image editing tasks (inpainting, outpainting, local edits) without retraining, and how should conditioning interfaces be designed for partial observability?

- Can the same GE handle both encoding real images and conditioning generative updates for manipulation pipelines reliably?

- Prior and sampling diagnostics:

- Is the learned latent prior well-approximated by the flow model at convergence (e.g., via two-sample tests, MMD, or FID-in-latent)?

- How does the latent distribution evolve during training, and does it remain multimodal/expressive without explicit regularizers (e.g., KL)?

- Reproducibility and sensitivity:

- Detailed hyperparameter sensitivity (learning rates, warmups, optimizer choices, augmentation policies) and their interaction with joint objectives are not exhaustively reported.

- How reproducible are results across seeds and hardware settings, given the potentially delicate objective interplay?

- Safety and failure modes:

- Are there specific failure cases (e.g., texture duplication, color shifts, structural artifacts) induced by shared weights, and can diagnostics or constraints mitigate them?

Addressing these gaps would clarify when and why unified tokenization–generation training offers advantages over staged pipelines, and how to robustly scale, generalize, and deploy the approach across modalities and tasks.

Practical Applications

Immediate Applications

The following applications can be prototyped or deployed now using the UNITE approach and codebase, leveraging its single-stage training, shared Generative Encoder, and demonstrated results on images (ImageNet 256×256) and small molecules (QM9).

- Unified latent diffusion training pipeline for industry MLOps — sectors: software, media/creative, cloud

- What to do: Replace multi-stage VAE+diffusion pipelines with a single training job where tokenization and latent denoising share parameters. Use the released code to train class-conditional image generators without external pretrained encoders or adversarial losses.

- Why it matters: Reduces pipeline staging, artifact versioning, and storage (one model to store/update). Simplifies CI/CD and speeds up iteration.

- Potential tools/workflows:

- “One-checkpoint” model artifacts (GE+decoder) for both encode and generate.

- Training scripts that schedule two forward passes per batch (tokenize + denoise) with flow-matching, as in the paper.

- Model registry patterns for a single, shared GE.

- Assumptions/dependencies: Requires GPU scale for ViT-based GE and decoder; current best results demonstrated at 256×256 images; scaling to higher resolution requires additional engineering and compute.

- On-device or edge deployment of a shared tokenizer+generator — sectors: mobile, embedded, AR/VR, creative apps

- What to do: Deploy a single GE for both encoding assets into latents and generating content on-device, reducing memory footprint vs. separate encoder/denoiser models.

- Why it matters: Smaller storage and simpler runtime graph; supports offline generation and latent-based editing without server calls.

- Potential tools/products:

- Mobile SDK that exposes encode (image→z0) and generate (noise→z0) using the same backbone.

- Quantized/compiled (e.g., ONNX/TensorRT/CoreML) variants of the GE and decoder.

- Assumptions/dependencies: Current parameter counts (217M–806M) may require compression (quantization, distillation) and careful memory planning; inference tested at 256×256; latency budgets must be validated per device.

- High-fidelity image autoencoding for internal compression and retrieval — sectors: media asset management, e-commerce, digital libraries

- What to do: Use the GE as a tokenizer to store images as compact register-token latents and a decoder for reconstruction. Index and retrieve via these latents.

- Why it matters: Near state-of-the-art reconstruction without adversarial pretraining (rFID ≈ 1.01, improving to 0.51 with a decoder-only GAN fine-tune). Latents align with the generator, easing generative tasks (e.g., regeneration, editing).

- Potential tools/workflows:

- Latent-backed asset stores where z0 is the canonical representation; decoder reconstructs on demand.

- Semantic retrieval over z0 for de-duplication, clustering, and search.

- Assumptions/dependencies: Not a drop-in for standardized codecs (no entropy coding/bitrate control); best suited to internal pipelines where storage format is flexible; decoder fine-tuning may be needed for visually critical use cases.

- Rapid prototyping of image generators without external SSL encoders — sectors: startups, academia, open-source

- What to do: Train class-conditional image generators from scratch using UNITE (no DINO/MAE teacher). Iterate quickly on new domains/data.

- Why it matters: Avoids licensing and dependence on large pretrained encoders; simplifies reproducibility and attribution.

- Potential tools/products:

- Domain-specific generators (e.g., de-identified medical imagery for pre-approval research, remote sensing, art styles) built with the provided training recipe.

- Assumptions/dependencies: Requires sufficient data and compute; for sensitive domains (e.g., medical), ensure compliance and de-identification; quality may lag two-stage systems on some tasks without further tuning.

- De novo small-molecule generation and reconstruction (QM9-scale) — sectors: healthcare (early-stage drug discovery), materials R&D

- What to do: Apply UNITE to generate and reconstruct small molecules without relying on pretrained molecular encoders; integrate into early screening or idea-generation loops.

- Why it matters: Achieved high reconstruction match (99.37%) and uniqueness (99.71%) while training end-to-end from scratch; removes dependency on pretrained tokenizers.

- Potential tools/workflows:

- Closed-loop ideation: generate candidates → heuristic/physics filters → retrain UNITE or fine-tune on promising subsets.

- Rapid exploration of chemical space in low-data regimes where pretrained encoders are unavailable.

- Assumptions/dependencies: Demonstrated on QM9; scaling to larger, more diverse chemical spaces requires new decoders/conditioning and domain-specific validation (e.g., ADMET, synthesizability).

- Anomaly and defect detection via reconstruction error — sectors: manufacturing, logistics, security, medical imaging (research-only)

- What to do: Use reconstruction error from the GE+decoder as an unsupervised anomaly score; optionally leverage denoising trajectories as stability signals.

- Why it matters: Jointly shaped latent space is robust to noise yet information-preserving, improving sensitivity to unusual patterns.

- Potential tools/workflows:

- Thresholded reconstruction metrics (L1, LPIPS) per part or SKU; dashboards tracking error distributions.

- Assumptions/dependencies: Requires domain-specific calibration and datasets; in regulated medical settings, treat as a research aid unless clinically validated.

- Latent-space editing and content transformations — sectors: creative tools, entertainment

- What to do: Encode images to z0, apply controlled latent perturbations or mixings, then decode; combine with class/text conditioning in the denoising pathway for edits.

- Why it matters: Shared latent “language” between encode and generate enables predictable edits and cleaner intermediate trajectories.

- Potential tools/products:

- Plugins for photo/video editors enabling latent morphing, style transfer, or guided denoising.

- Assumptions/dependencies: Current results are class-conditional and 256×256; robust text- or instruction-conditioned editing may require additional conditioning heads and data.

- Reduced legal/compliance risk from pretraining dependencies — sectors: enterprise, public sector

- What to do: Train models entirely from scratch, avoiding ingestion of external SSL encoders with uncertain data provenance.

- Why it matters: Easier to audit and certify a single, end-to-end training run for provenance-sensitive deployments.

- Potential tools/workflows:

- Internal audit trails tying dataset snapshots to the single-stage training job; model cards focused on one unified component.

- Assumptions/dependencies: Underlying training data licensing still applies; internal governance must cover dataset usage and model behavior.
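For the anomaly-detection application listed above, the thresholding workflow might look like the following sketch. It assumes reconstructions are already available from some encoder and decoder pair; `l1_error`, `fit_threshold`, and `flag_anomalies` are hypothetical helpers, and the quantile rule is one simple choice among many.

```python
def l1_error(image, reconstruction):
    """Mean absolute difference between an input and its reconstruction."""
    return sum(abs(a - b) for a, b in zip(image, reconstruction)) / len(image)

def fit_threshold(calibration_scores, quantile=0.95):
    """Pick the score at the given quantile of known-normal examples."""
    ordered = sorted(calibration_scores)
    index = min(len(ordered) - 1, int(quantile * len(ordered)))
    return ordered[index]

def flag_anomalies(scores, threshold):
    """Mark every score strictly above the threshold as anomalous."""
    return [s > threshold for s in scores]

# Toy calibration scores from normal parts, then two new scores to check.
threshold = fit_threshold([0.4, 0.1, 0.3, 0.2], quantile=0.5)
flags = flag_anomalies([0.2, 0.5], threshold)
```

In practice the threshold would be calibrated per part or SKU on held-out normal data, and a perceptual metric could replace the L1 error where pixel differences are too noisy.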

Long-Term Applications

These applications will benefit from further research, scaling, or engineering, including higher resolutions, new modalities, and production-grade reliability.

- Cross-modal unified foundation models — sectors: multimodal AI platforms, robotics, media

- What to build: Extend the Generative Encoder paradigm to audio, video, 3D, and sensor streams so a single parameter set both tokenizes and generates across modalities.

- Value: Simplifies multimodal stacks; promotes a common latent “language” for cross-task interoperability (generation, retrieval, captioning).

- Dependencies/assumptions: New decoders and training objectives per modality; careful conditioning design; large-scale multimodal datasets and compute.

- Standardized latent compression for communications — sectors: networking, cloud storage, CDNs

- What to build: Turn GE latents into a bitrate-controlled, entropy-coded format with predictable distortion, enabling learned, generative-ready compression.

- Value: One representation serves storage, transmission, and downstream generative editing without re-encoding.

- Dependencies/assumptions: Requires integrating entropy models, rate–distortion objectives, and standardization efforts; must demonstrate advantages over HEVC/AVIF/WebP pipelines.

- Unified perception–generation loops for robotics and autonomy — sectors: robotics, automotive

- What to build: Use the shared encoder as the perception backbone and the denoiser as a predictive world model (e.g., future latent inference), enabling planning-in-latent.

- Value: Robust, reusable features across perception and generative prediction can reduce model count and memory on edge robots.

- Dependencies/assumptions: Real-time constraints, safety guarantees, and training on egocentric sensor data; additional conditioning (control/state) integration.

- Scalable drug and materials design — sectors: pharmaceuticals, materials science, energy

- What to build: Scale UNITE beyond QM9 to broader chemical spaces and crystals; couple generation with property predictors and synthesis planning.

- Value: End-to-end trainable tokenizer+generator reduces pipeline complexity in domains lacking robust pretrained encoders.

- Dependencies/assumptions: Access to large curated datasets (e.g., ChEMBL, PubChem), physically informed losses or constraints, robust evaluation pipelines (ADMET, stability).

- Finance and time-series generative modeling — sectors: finance, energy, IoT

- What to build: Adapt UNITE to sequential/tabular data to jointly learn latent tokenization and generative forecasting/simulation.

- Value: A single backbone for encoding streams and simulating counterfactuals or stress scenarios.

- Dependencies/assumptions: New decoders/likelihoods for discrete/continuous sequences, appropriate corruption processes, and strict governance for risk use-cases.

- Privacy-preserving personalization and federated learning — sectors: consumer apps, healthcare IT

- What to build: On-device fine-tuning of a unified model for user-specific style or domain, with only latents (not raw data) shared for global updates.

- Value: Minimizes data movement; one model handles both encoding and local generation.

- Dependencies/assumptions: Efficient fine-tuning strategies (LoRA, adapters), secure aggregation protocols, and resource-constrained training on devices.

- Interoperable latent ecosystems across tools — sectors: creative software, enterprise DAM

- What to build: Treat z0 as a portable “asset representation” consumed by different tools (editors, captioners, renderers) using the same latent interface.

- Value: Reduces format fragmentation; enables compound workflows (e.g., edit → regenerate → caption) without repeated tokenization.

- Dependencies/assumptions: Community consensus on latent shape/calibration, stable normalization across versions, and compatibility layers between vendors.

- Auditing and governance simplification — sectors: policy, compliance, regulated industries

- What to build: Compliance frameworks centered on a single, unified model rather than multi-stage chains with external teachers.

- Value: Clearer traceability and risk assessment; fewer black-box dependencies.

- Dependencies/assumptions: Still requires dataset governance and post-deployment monitoring; regulators may expect new benchmarks for unified models.

- Energy and cost efficiency at scale — sectors: cloud providers, AI platforms

- What to build: Optimize training/inference for unified models (e.g., shared-kernel caching, operator fusion), potentially reducing energy use by avoiding multi-stage pretraining/fine-tuning cycles.

- Value: Lower total training time-to-deploy and storage; simpler A/B testing with one model.

- Dependencies/assumptions: Realized savings depend on model size, resolution, and the extent to which multi-stage baselines would have been required in a given organization.

- Advanced latent-space editing and controllability — sectors: media, advertising, education

- What to build: Richer conditioning interfaces (text, segmentation, sketches) and controllable denoising schedules to enable precise edits in the shared latent space.

- Value: Predictable, user-friendly content manipulation pipelines built on top of a common latent “language.”

- Dependencies/assumptions: Additional data and conditioning heads; UI/UX design for non-expert control; guardrails for misuse.

Notes on feasibility across applications:

- Current validated scope is images at 256×256 and small molecules; higher resolutions, other modalities, and production constraints will require additional research and engineering.

- Inference quality and speed depend on the number of denoising steps; step–quality trade-offs must be tuned per deployment.

- The best reconstruction numbers used a decoder-only GAN fine-tune; organizations averse to adversarial training can still achieve strong non-adversarial performance with a small quality trade-off.

Glossary

- adversarial losses: Training objectives from GANs where a generator and discriminator compete, often used to improve realism. "without adversarial losses or any pretrained encoders (e.g., DINO)"

- autoencoder: A model with an encoder–decoder pair trained to reconstruct inputs, often used for learned compression. "A tokenizer is typically learned as part of an autoencoder with an encoder E and decoder D"

- centered kernel alignment (CKA): A similarity measure for comparing representations across layers or models. "centered kernel alignment (CKA) (Kornblith et al., 2019)"

- classifier-free guidance (CFG): A technique that steers conditional generation by combining the model's conditional and unconditional predictions, typically pushing the output further toward the conditional one. "Generated using 50 steps with CFG."

- cosine similarity: A measure of the angle between two vectors, used here to compare representations. "and Cosine Similarity (Fig. 6)."

- denoising objective: The loss used to train a model to predict clean data from noisy inputs in diffusion/flow setups. "when the tokenizer and diffusion model are optimized primarily through the denoising objective"

- DINOv2: A self-supervised vision encoder trained via self-distillation, often used as external supervision. "DINOv2 (Oquab et al., 2023)"

- flow steps: Iterative denoising steps in flow/diffusion sampling or training. "number of denoising (flow) steps"

- flow-matching trajectory: The path in latent space learned to transform noise into data via flow matching. "flow-matching trajectory"

- Fréchet Inception Distance (FID): A metric that compares the distributions of generated and real images via the Fréchet distance between Gaussian fits to their Inception features; lower is better. "Frechet inception distance (FID)"

- Gaussian prior: A simple normal distribution used as a prior over latents for generation. "a simple Gaussian prior"

- Generative Encoder (GE): A shared-parameter module acting as both tokenizer and latent denoiser. "The Generative Encoder operates in two modes"

- gFID: Generation FID; FID computed on samples produced by the generator. "Gen. FID (gFID)"

- LayerNorm: Layer normalization; normalizes each token's activations across the feature dimension, then applies a learnable scale and shift. "LayerNorm (Ba et al., 2016) with learnable scale and shift"

- latent denoiser: A network that iteratively maps noisy latents to clean latents for generation. "used as a latent denoiser"

- latent diffusion models (LDMs): Diffusion models that operate in a learned latent space instead of pixels. "Latent diffusion models (LDMs) enable high-fidelity synthesis"

- LPIPS: Learned Perceptual Image Patch Similarity; a perceptual distance used in reconstruction losses. "Our ImageNet training uses LPIPS loss"

- Masked autoencoders (MAE): Self-supervised models trained to reconstruct masked patches. "Masked autoencoders (MAE) (He et al., 2022)"

- Minimum Description Length (MDL): A principle favoring models that compress data and parameters most efficiently. "a shorter description length (MDL) for the learned model"

- parameter tying: Sharing weights between different roles or pathways to enforce common representations. "parameter tying yields the best overall rFID/gFID trade-off"

- rectified flow matching: A training approach that learns a velocity field transporting noise to data along straight-line interpolations between paired noise and data samples. "rectified flow matching (Liu et al., 2023)"

- register tokens: Learnable latent tokens that absorb information from patch tokens and act as the compact latent. "it processes image patches and register tokens to produce a latent representation z0"

- representation alignment: The degree to which two pathways share similar internal features. "representation alignment"

- rFID: Reconstruction FID; FID computed on reconstructions produced by the autoencoder. "Recon. FID (rFID)"

- self-distillation: Training where a model (or teacher–student pair) learns from its own predictions without labels. "self-distillation without labels"

- self-supervised learning (SSL): Learning from unlabeled data via pretext tasks or consistency objectives. "Recent advances in self-supervised learning"

- signal-to-noise ratio (SNR): A measure of signal strength relative to noise; affects denoising difficulty. "raising effective SNR"

- stop-gradient: An operation that prevents gradients from flowing through certain nodes during backprop. "sg(·) denotes stop-gradient"

- tokenization: Mapping high-dimensional inputs into a compact latent/code space for reconstruction and generation. "tokenization and generation"

- unpatchification head: A module that converts transformer tokens back to image pixels after decoding. "unpatchification head"

- Variational Autoencoder (VAE): A generative autoencoder that imposes a probabilistic latent prior and reconstructs data. "VAEs meet this requirement by regularizing the encoder"

- Vision Transformer (ViT): A transformer architecture operating on image patches as tokens. "We adopt a Vision Transformer (ViT) (Dosovitskiy et al., 2021)"

- VQ-GAN: A vector-quantized autoencoder trained with adversarial losses to improve perceptual quality. "VQ-GAN (Esser et al., 2021)"

- VQ-VAE: A vector-quantized autoencoder using discrete latent codes. "VQ-VAE (Van Den Oord et al., 2017)"

- weight sharing: Using the same parameters across multiple tasks or modes within a model. "Generative Encoder that serves as both image tokenizer and latent generator via weight sharing"

- x-start prediction: A parameterization where the model predicts the clean latent directly. "we use x-start prediction"
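The classifier-free guidance entry in this glossary can be illustrated with the usual combination rule. This is a sketch of the common formulation only: `apply_cfg` is a hypothetical name, and whether UNITE applies guidance to clean-latent predictions or to velocities is not stated here, so the inputs are treated as generic prediction vectors.

```python
def apply_cfg(cond_pred, uncond_pred, guidance_scale):
    """Combine conditional and unconditional predictions.

    guidance_scale = 0 returns the unconditional prediction,
    guidance_scale = 1 returns the conditional prediction,
    guidance_scale > 1 pushes past the conditional prediction.
    """
    return [u + guidance_scale * (c - u) for c, u in zip(cond_pred, uncond_pred)]

cond = [1.0, 2.0]
uncond = [0.0, 0.0]
guided = apply_cfg(cond, uncond, guidance_scale=2.0)
```

Larger scales generally trade diversity for fidelity to the condition, so the scale is usually tuned per model and sampler.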
