Characterizing Delusional Spirals through Human-LLM Chat Logs

Abstract: As LLMs have proliferated, disturbing anecdotal reports of negative psychological effects, such as delusions, self-harm, and "AI psychosis," have emerged in global media and legal discourse. However, it remains unclear how users and chatbots interact over the course of lengthy "delusional spirals," limiting our ability to understand and mitigate the harm. In our work, we analyze logs of conversations with LLM chatbots from 19 users who report having experienced psychological harms from chatbot use. Many of our participants come from a support group for such chatbot users. We also include chat logs from participants covered by media outlets in widely-distributed stories about chatbot-reinforced delusions. In contrast to prior work that speculates on potential AI harms to mental health, to our knowledge we present the first in-depth study of such high-profile and veridically harmful cases. We develop an inventory of 28 codes and apply it to the 391,562 messages in the logs. Codes include whether a user demonstrates delusional thinking (15.5% of user messages), a user expresses suicidal thoughts (69 validated user messages), or a chatbot misrepresents itself as sentient (21.2% of chatbot messages). We analyze the co-occurrence of message codes. We find, for example, that messages that declare romantic interest and messages where the chatbot describes itself as sentient occur much more often in longer conversations, suggesting that these topics could promote or result from user over-engagement and that safeguards in these areas may degrade in multi-turn settings. We conclude with concrete recommendations for how policymakers, LLM chatbot developers, and users can use our inventory and conversation analysis tool to understand and mitigate harm from LLM chatbots. Warning: This paper discusses self-harm, trauma, and violence.

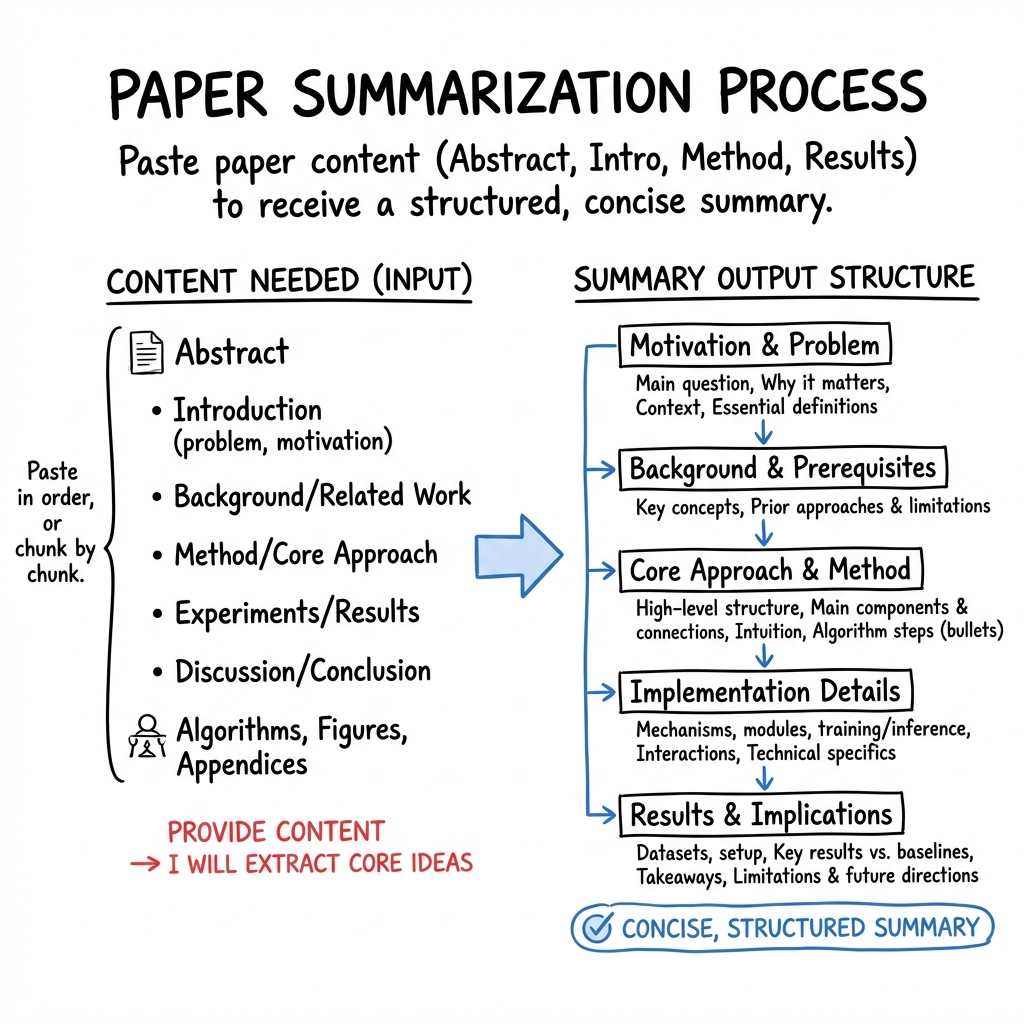

Explain it Like I'm 14

Simple explanation of what you shared

The text you provided isn’t the content of a research paper. It’s a few setup lines from a LaTeX file, which is a tool many researchers use to format their papers.

- The \documentclass[nonacm, sigconf, screen]{acmart} line tells the computer to use the ACM conference style (ACM is a big computing society). The options mean:

  - sigconf: shape it like a typical ACM conference paper.

  - nonacm: don’t include ACM’s official copyright and headers (often used for drafts or non-ACM venues).

  - screen: make it look good on screens (colors, links).

- The \AtBeginDocument{... \providecommand\BibTeX{...}} bit sets up how the word “BibTeX” (a tool for managing references) should appear.

In short, this is the “paper template” setup, not the actual research content (like the title, abstract, methods, or results).
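For reference, here is a rough sketch of what that setup probably looks like as a file. The \documentclass line and the opening of the \AtBeginDocument and \providecommand\BibTeX commands come from the fragments quoted on this page; the body of the \BibTeX macro and the empty document body are assumptions based on the standard acmart sample file, not text recovered from the paper.

```latex
% Class and options as quoted in the shared text.
\documentclass[nonacm, sigconf, screen]{acmart}

% Define \BibTeX only if nothing else has defined it already.
% Assumption: the macro body below follows the standard acmart sample file.
\AtBeginDocument{%
  \providecommand\BibTeX{{%
    Bib\TeX}}}

\begin{document}
% The title, abstract, and sections would go here.
% None of that content was included in the shared text.
\end{document}
```

Everything above \begin{document} only controls how the paper looks, which is why it says nothing about the research itself.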

What we can and can’t explain right now

1) Main topic or purpose

- What we can say: The format suggests it’s likely a computer science paper meant for a conference.

- What we can’t tell: The actual topic (for example, AI, networks, security, HCI) because no title or abstract is included.

2) Key objectives or research questions

- We can’t see any research questions here. Normally, these are in the abstract or introduction and look like: “Can method X improve result Y?” or “How does system Z perform under condition W?”

3) Methods or approach

- No methods are shown. Methods would describe what the researchers did—like running experiments, building software, analyzing data, or surveying people.

4) Main findings or results and why they matter

- There are no results in what you shared. Results usually appear in sections titled “Results,” “Evaluation,” or “Findings,” and explain what the researchers discovered and why it’s useful.

5) Implications or impact

- Without the findings, we can’t say how this work might change things in the real world or in future research.

What information is missing

To give you the clear, kid-friendly summary you want, I’d need at least:

- The title and abstract

- Or the introduction and conclusion

- Even better: methods and results sections

How you can help me summarize it

If you paste the paper’s abstract (or the main sections), I’ll explain:

- What the paper is about, in one or two simple paragraphs

- The main questions it asks

- What the researchers did, using easy comparisons

- What they found and why that matters in everyday life

- The possible impact on technology or society

Right now, all we know is how the paper is formatted—not what it says. If you can share more of the content, I’ll happily break it down in simple, engaging language.

Knowledge Gaps

Knowledge Gaps, Limitations, and Open Questions

Based on the provided content, there is insufficient substantive material to identify paper-specific gaps. Specifically:

- The text includes only LaTeX boilerplate (document class and a BibTeX macro) and lacks all substantive sections (abstract, introduction, related work, methods, experiments, results, discussion, limitations), making it impossible to extract knowledge gaps or open questions.

- Without the problem statement, hypotheses, datasets, baselines, evaluation metrics, or findings, key uncertainties (e.g., generalizability, causal claims, failure modes, ethical considerations) cannot be assessed or articulated as actionable gaps.

Please provide the full paper (from abstract through references) to enable a concrete, paper-specific list of unresolved questions and limitations.

Practical Applications

Immediate Applications

- Unable to identify specific immediate applications. The provided content is only a LaTeX preamble (\documentclass{acmart} and a \BibTeX macro) and does not include the paper’s title, abstract, methods, results, or discussion. Please provide the full paper (or at least the abstract and key findings) to extract actionable, sector-specific use cases.

Long-Term Applications

- Unable to identify specific long-term applications for the same reason: the substantive scientific content is missing. With the full text, I can map findings to future products, policy roadmaps, and research agendas, including assumptions and dependencies.

What I need to proceed

- At minimum: the paper’s abstract and conclusion, plus any key results (figures/tables) and methods summary.

- Ideally: full sections (Introduction, Methods, Results, Discussion/Limitations), so I can assess feasibility, readiness level, sector alignment, and dependencies (e.g., data availability, regulatory constraints, compute, talent, deployment context).

If you paste the abstract first, I can draft preliminary Immediate vs. Long-Term applications by sector and then refine them once the full text is available.

Glossary

- acmart: The ACM’s official LaTeX document class for formatting conference and journal articles. "acmart"

- \AtBeginDocument: A LaTeX hook that executes its contents at the start of the document body. "\AtBeginDocument{%"

- BibTeX: A bibliographic reference tool for LaTeX; here also typeset via a macro to produce the stylized name. "Bib\TeX"

- nonacm: An acmart class option indicating the document is not an official ACM publication (suppresses ACM metadata and rights information). "nonacm"

- \providecommand: A LaTeX command that defines a macro only if it has not already been defined. "\providecommand\BibTeX{%"

- screen: An acmart class option that adjusts typesetting for on-screen reading (e.g., color links and backgrounds). "screen"

- sigconf: An acmart class option selecting the standard two-column ACM conference proceedings format. "sigconf"
