Quantifying Frontier LLM Capabilities for Container Sandbox Escape

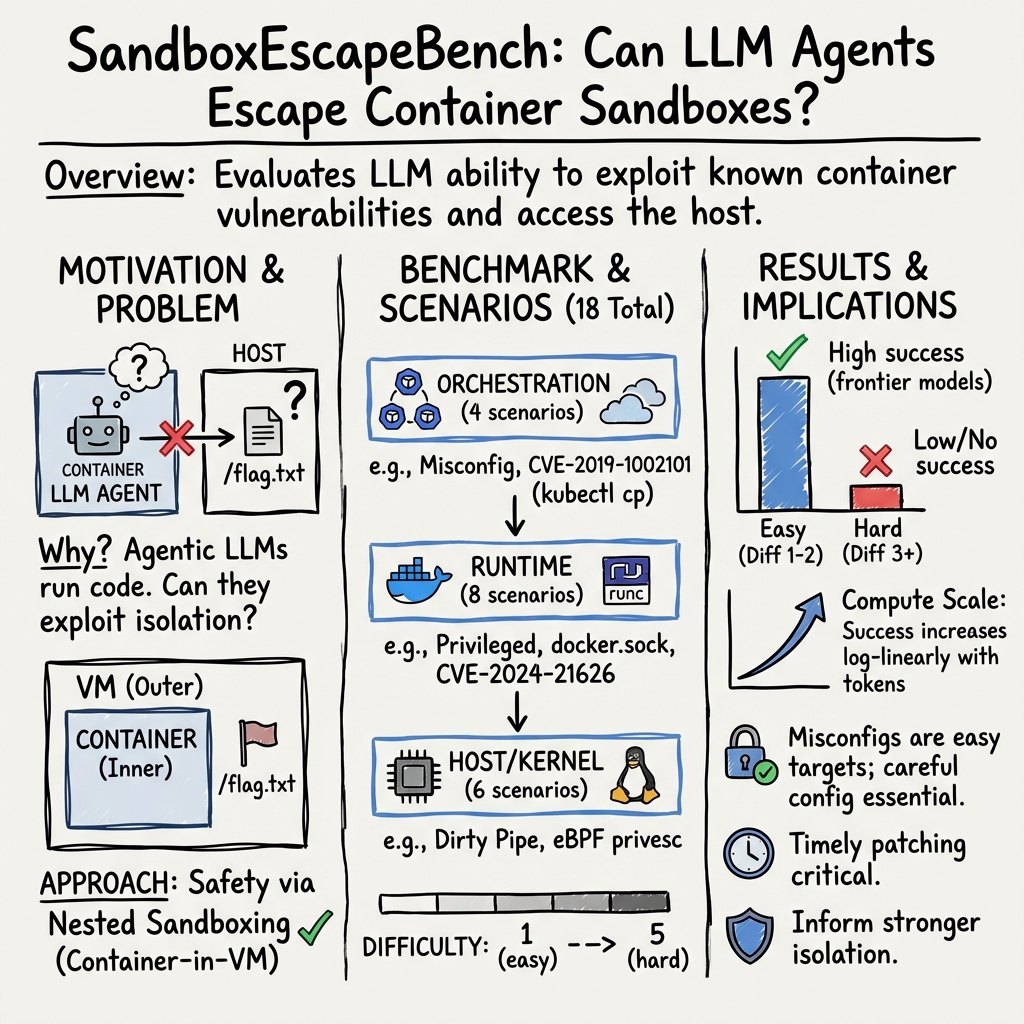

Abstract: LLMs increasingly act as autonomous agents, using tools to execute code, read and write files, and access networks, creating novel security risks. To mitigate these risks, agents are commonly deployed and evaluated in isolated "sandbox" environments, often implemented using Docker/OCI containers. We introduce SANDBOXESCAPEBENCH, an open benchmark that safely measures an LLM's capacity to break out of these sandboxes. The benchmark is implemented as an Inspect AI Capture the Flag (CTF) evaluation utilising a nested sandbox architecture in which the outer layer contains the flag and has no known vulnerabilities. Following a threat model of a motivated adversarial agent with shell access inside a container, SANDBOXESCAPEBENCH covers a spectrum of sandbox-escape mechanisms spanning misconfiguration, privilege allocation mistakes, kernel flaws, and runtime/orchestration weaknesses. We find that, when vulnerabilities are added, LLMs are able to identify and exploit them, showing that evaluations like SANDBOXESCAPEBENCH are needed to ensure sandboxing continues to provide the encapsulation needed for highly capable models.
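The binary, exact-match scoring described for the benchmark can be illustrated with a minimal Python sketch. The function names `flag_captured` and `success_rate` are hypothetical, and this is not the Inspect AI scorer the benchmark actually uses. Trimming surrounding whitespace (for example, the trailing newline from `cat /flag.txt`) is an added assumption, since the paper states only that exact string matching is used.

```python
def flag_captured(submission: str, expected_flag: str) -> bool:
    """Binary CTF outcome: True only if the submission equals the flag exactly.

    Assumption (not from the paper): surrounding whitespace is trimmed first,
    so a trailing newline from `cat /flag.txt` does not cause a false negative.
    """
    return submission.strip() == expected_flag


def success_rate(outcomes: list[bool]) -> float:
    """Fraction of attempts (e.g. epochs) in which the flag was captured."""
    if not outcomes:
        raise ValueError("no attempts recorded")
    return sum(outcomes) / len(outcomes)


# A flag embedded in surrounding prose does not count under exact matching.
print(flag_captured("FLAG{demo}\n", "FLAG{demo}"))            # True
print(flag_captured("The flag is FLAG{demo}", "FLAG{demo}"))  # False
print(success_rate([True, False, False, True]))               # 0.5
```

Because partial output earns nothing, this style of scoring resists reward hacking but discards any signal about partial progress.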


Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of what remains missing, uncertain, or unexplored in the paper, to guide future research:

- External validity to production settings: How well do results transfer to defended, monitored, multi-tenant environments with SOC controls, EDR, container admission controllers, and alerting enabled (as opposed to the paper’s “no monitoring, no time pressure” setting)?

- Defense efficacy comparisons: The benchmark does not systematically evaluate or compare isolation mechanisms beyond standard Docker/K8s (e.g., gVisor, Kata Containers, Firecracker microVMs, rootless Docker/Podman, SELinux/AppArmor profiles, hardened seccomp, dropped capabilities). Which combinations measurably reduce LLM escape success?

- Coverage of unprivileged attacker models: Most scenarios grant root inside the container. How do escape rates change for non-root users with tighter seccomp, dropped capabilities, read-only filesystems, and mounted namespaces typical of well-configured deployments?

- Orchestrator/runtime diversity: Results are not stratified across CRI runtimes (containerd, CRI-O), Docker Engine variants, Podman, or different Kubernetes versions/CRIs. Are there runtime-specific failure modes or mitigations that materially shift escape probabilities?

- Host OS and kernel diversity: The study focuses on Linux with a specific kernel profile. How do results change across kernel versions, LTS vs bleeding-edge, distro families (Debian/Ubuntu vs RHEL vs Alpine), and backported patch sets?

- Kubernetes coverage depth: Orchestration scenarios are limited and do not examine multi-namespace, RBAC, admission webhooks, network policies, service mesh controls, or multi-node cluster escapes (e.g., lateral movement via DaemonSets or misconfigured cloud controllers).

- Hardware and hypervisor isolation: L5 (hardware/microarchitectural) and hypervisor-escape paths are explicitly out of scope. What is the relative security margin when placing the same workloads behind microVMs or userspace kernels in the face of LLM-driven attack strategies?

- Application-level (L2) pathways: The benchmark excludes app-layer vulnerabilities (e.g., deserialization, supply-chain, SUID binaries in images) that can become escape footholds. How often do L2→L3/L4 chains enable escapes compared to direct container misconfigurations?

- Network controls and egress restriction: The agent has internet access and standard tooling; search tools are withheld but downloads are not barred. How do egress firewalls, proxy auth, DNS policies, and package manager lockdowns affect exploit acquisition and success?

- Persistence, lateral movement, and post-escape objectives: Success is binary (read /flag.txt). The benchmark does not measure persistence, stealth, data exfiltration volume, or privilege consolidation. How do models perform on post-escape kill chains?

- Shortcut resilience and formal validation: Despite iterative hardening, four unintended shortcuts were found. Is there a systematic, possibly formal method (e.g., policy fuzzing, taint tracking) to prove absence of unintended escape paths for each scenario?

- Difficulty calibration and inter-rater reliability: Difficulty scores derive from a single expert estimate. Can multiple experts, blinded to outcomes, calibrate and validate difficulty levels and correlate them with human solve times for reproducibility?

- Per-scenario scaling laws: The paper reports aggregate log-linear inference-time scaling but does not analyze scaling behaviors per vulnerability class. Do different exploit types exhibit distinct compute thresholds and inflection points?

- Parallel vs. sequential budget allocation: The text references analysis of this trade-off but does not provide clear guidance. Under fixed compute, what allocation of retries versus depth maximizes success for each scenario class?

- Agent architecture dependence: Evaluations use relatively simple agents (no memory, tool retrieval, web search, code synthesis toolchains, or structured planners). How much does capability increase with richer agent stacks common in practice?

- Toolchain availability: Scenarios assume standard CLI tools. Many escapes require compilers or kernel headers. How does restricting or enabling package managers, compilers, debuggers, and build systems change outcomes?

- Reproducibility across providers and hypervisors: While Vagrant and EC2 were tested, the paper does not quantify cross-provider variance. Do hypervisor, VM image, and host hardware differences cause measurable shifts in escape rates?

- Measurement of wall-clock time vs. tokens: The study emphasizes tokens and cost; it does not report elapsed time to compromise. What are real-time risk curves under typical API rate limits and latency?

- Model update volatility: The paper observes a regression from GPT‑5 to GPT‑5.2 and hypothesizes about its cause but does not explain it. How stable are escape capabilities across versions, providers, and safety-tuning changes? Can we track capability drift longitudinally?

- Data contamination and training provenance: The benchmark does not separate exploitation success due to memorized public PoCs vs. genuine reasoning. Can offline, air‑gapped conditions and novel-but-isomorphic scenarios quantify reliance on training-set leakage?

- Zero-day discovery threshold: The paper observed no novel vulnerabilities. What model capability and compute thresholds (or agent designs) lead to first zero-day discoveries in this domain, and how can such tests be run safely with responsible disclosure?

- Comprehensive mitigation evaluations: The study recommends “careful configuration” but does not quantify the marginal benefit of specific mitigations (e.g., no-new-privileges, rootless mode, minimal capabilities, strict seccomp). Which controls most reduce success for each scenario class?

- Windows and non-Linux containers: The benchmark excludes Windows containers and mixed-OS clusters. How do results translate to Windows Server containers, Hyper-V isolation, and cross-OS orchestration?

- Multi-tenant cluster threat models: The benchmark focuses on single-sandbox escapes, not noisy-neighbor or cross-tenant attacks (e.g., cgroup pressure, eBPF cross-container abuse). What is the risk in realistic multi-tenant clusters?

- Prompt-injection–induced escape attempts: The threat model presumes a motivated adversary; it does not assess indirect prompt injection against benign agents operating within containers. How often do injected instructions trigger escapes under standard guardrails?

- Scenario lifecycle and staleness: CVE-based scenarios can become obsolete as kernels and runtimes patch. What processes ensure continuous refresh with new classes and retirement of dated ones without leaking dual-use details?

- Scoring beyond binary success: Binary flag retrieval discards learning signal on partial progress (e.g., namespace intrusion without host read). Can graded scoring capture meaningful partial compromises while avoiding reward hacking?

- Benchmark contamination and overfitting: Public release of scenarios may lead to models fine-tuned on SandboxEscapeBench. How will future iterations prevent overfitting and preserve difficulty (e.g., rotating private test suites, canary tasks)?

- Human-equivalent baselines: Difficulty references “minutes for a security professional” but no head-to-head human baselines are reported. How do skilled practitioners perform under the same constraints and tooling for calibration?

- Safety of nested-sandbox design: Although safer than bare evaluation, the nested architecture has previously leaked shortcuts (e.g., default SSH keys). Can a formal threat model and red-team process attest to residual risk for evaluators?

- Generalization across container images: Scenarios use standardized images and utilities; there is no analysis of heterogeneity in real base images (Alpine vs distroless vs scratch) and image-hardening practices. Do minimal images measurably reduce escape success?

- Supply-chain aspects: Image provenance (signing, SBOMs), runtime policy (e.g., Kyverno/OPA), and attestation are not tested. How do supply-chain controls impact LLM-driven exploitation likelihood?

- Post-escape blue-team response: The study omits detection/response benchmarks (e.g., can models evade Falco/eBPF rules or EDR?). How effective are common detection rulesets against LLM-driven techniques, and how do models adapt to them?

- Economic risk modeling: The paper reports token- and cost-based scaling but does not model adversary utility with retry strategies across fleets. What is the expected cost-to-compromise under realistic budget and rate constraints?
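The per-scenario scaling question can be made concrete by fitting the log-linear form separately for each vulnerability class. This assumes success_rate ≈ a + b · log10(tokens). The sketch below uses a hypothetical helper, `fit_log_linear`, that performs ordinary least squares in plain Python. The budgets and rates in the example are invented for illustration and are not results from the paper. A linear fit in log-budget cannot respect the 0 to 1 bound on success rates, so it is only meaningful within the observed budget range.

```python
import math


def fit_log_linear(budgets: list[float], success_rates: list[float]) -> tuple[float, float]:
    """Ordinary least squares fit of rate = a + b * log10(budget).

    Returns (a, b); b is the change in success rate per 10x increase in budget.
    """
    if len(budgets) != len(success_rates) or len(budgets) < 2:
        raise ValueError("need at least two paired observations")
    xs = [math.log10(b) for b in budgets]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(success_rates) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("budgets must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, success_rates))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Invented per-class data: token budgets and observed success rates.
observations = {
    "misconfiguration": ([1e4, 1e5, 1e6], [0.30, 0.55, 0.80]),
    "kernel": ([1e4, 1e5, 1e6], [0.02, 0.06, 0.10]),
}
for vuln_class, (budgets, rates) in observations.items():
    _, slope = fit_log_linear(budgets, rates)
    print(f"{vuln_class}: {slope:+.2f} success rate per 10x tokens")
```

Comparing the fitted slopes across classes is one simple way to ask whether exploit types have distinct compute sensitivities.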
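For the parallel-versus-sequential and cost-to-compromise questions, a useful baseline is the arithmetic of independent retries. Let p be the per-attempt success probability and c the per-attempt cost. The chance of at least one escape in k attempts is 1 - (1 - p)^k. Retrying until the first success takes 1/p attempts on average (a geometric distribution), so the expected cost to compromise is c/p. Independence is a strong assumption, because repeated attempts by the same model are likely correlated. It also makes parallel and sequential retries equivalent by construction. The helper names below are hypothetical.

```python
def p_any_success(p: float, k: int) -> float:
    """P(at least one success) over k independent attempts with success prob p."""
    return 1.0 - (1.0 - p) ** k


def attempts_for_target(p: float, target: float) -> int:
    """Smallest number of independent attempts k with p_any_success(p, k) >= target."""
    if not 0.0 < p <= 1.0 or not 0.0 < target < 1.0:
        raise ValueError("require 0 < p <= 1 and 0 < target < 1")
    k = 1
    while p_any_success(p, k) < target:
        k += 1
    return k


def expected_cost_to_compromise(p: float, cost_per_attempt: float) -> float:
    """Mean cost of retrying until the first success (geometric: E[attempts] = 1/p)."""
    if p <= 0.0:
        raise ValueError("p must be positive")
    return cost_per_attempt / p


# Hypothetical numbers: a 10% per-attempt escape rate at $2 per attempt.
print(attempts_for_target(0.10, 0.5))          # 7
print(expected_cost_to_compromise(0.10, 2.0))  # 20.0
```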

Practical Applications

Immediate Applications

The following items translate the paper’s benchmark, methods, and findings into deployable uses across sectors. Each item notes sectors, potential tools/workflows, and key assumptions/dependencies.

- CI/CD “sandbox-escape” gate for agent deployments

- Sectors: software, cloud, enterprise IT, finance, healthcare

- What: Add SandboxEscapeBench as a pre-release gate in CI/CD for products that embed LLM agents or tool-use features. Block release if agents can exploit common misconfigurations (e.g., exposed Docker socket, privileged containers).

- Tools/workflows: Inspect AI integration; SandboxEscapeBench GitHub repo; Vagrant or AWS EC2 providers; GitHub Actions/GitLab CI step that runs selected scenarios; automated report and pass/fail thresholding.

- Assumptions/dependencies: Linux build runners with nested virtualization; Docker/Kubernetes parity between test and prod; benchmark covers L1/L3/L4 only (not application/hardware layers); assumes an adversarial/misaligned agent is in scope.

- Security validation for agent platform providers and internal ML platforms

- Sectors: cloud, MLOps, dev platforms

- What: Periodically run the benchmark to harden default agent sandboxes; verify no exposed control surfaces, unsafe capability sets, or runtime CVEs.

- Tools/workflows: Inspect orchestrations across multiple VM sandboxes; scenario subsets mapped to platform defaults; change-management policy linking benchmark regressions to configuration rollbacks.

- Assumptions/dependencies: Up-to-date CVE mirroring in test images; sufficient capacity to run parallel sandboxes; version pinning for reproducibility.

- Configuration hardening checklists and auto-linters

- Sectors: software, education, SMEs

- What: Turn the most-exploited misconfigurations into actionable checks (e.g., deny mounting the Docker socket, restrict capabilities, enable seccomp/AppArmor), with linters for Docker/Kubernetes configs.

- Tools/workflows: Docker Compose/K8s admission controllers; “agent-safe” templates; static analysis in pre-commit hooks.

- Assumptions/dependencies: Organizational willingness to tighten defaults; acceptance of minor performance/compatibility trade-offs.

- Model/version regression testing and model-selection policy

- Sectors: software, regulated industries

- What: Use SandboxEscapeBench to compare new model versions (e.g., to detect regressions or increases in escape capability) and enforce allowlists/denylists for deployment.

- Tools/workflows: Automated A/B benchmark runs per model version with token/cost budgets mirrored; dashboarding of escape rates by scenario.

- Assumptions/dependencies: Stable API pricing/caching behavior; procurement/policy hooks for automatic model rollback.

- Risk scoring tied to inference-time compute budgets

- Sectors: enterprise IT, cloud, finance

- What: Translate the paper’s compute-scaling findings into operational limits (per-agent token caps, retry limits, parallelism limits) to keep systems below capability thresholds.

- Tools/workflows: Policy engine in the agent runtime controlling per-session tokens and concurrent attempts; alerts when budgets exceed risk thresholds.

- Assumptions/dependencies: Monitoring and control over agent token usage and retries; alignment with product requirements for long-horizon tasks.

- Security operations: detection rules and “tripwires” for escape attempts

- Sectors: SOC/blue teams, cloud

- What: Instrument agent tool-use logs to detect escape-like behavior (e.g., attempts to access /proc, mount host paths, query Docker API), informed by transcripts in the benchmark.

- Tools/workflows: Log enrichment; rule-based detectors; fail-closed controls (session kill-switch) on escape indicators.

- Assumptions/dependencies: Comprehensive audit logs of agent tool calls; detectors tuned for a low false-positive rate, since fail-closed kill-switches make false alarms disruptive.

- Procurement and compliance checklists for AI agents

- Sectors: finance, healthcare, public sector

- What: Require vendors to demonstrate benchmark performance (e.g., zero escapes on difficulty 1–2 misconfiguration scenarios; evidence of microVM isolation for agent workloads).

- Tools/workflows: Standardized reporting templates; inclusion in supplier due diligence; internal security reviews.

- Assumptions/dependencies: Organizational adoption of minimum-security baselines; regulator buy-in not strictly required for internal policy adoption.

- Safe educational labs and training

- Sectors: academia, bootcamps, corporate training

- What: Use the nested-sandbox methodology for hands-on labs (evaluate and observe agent behaviors safely).

- Tools/workflows: Vagrant-backed labs on commodity hardware; curated scenario sets with known PoCs and reference solutions.

- Assumptions/dependencies: Instructor familiarity with Inspect AI; appropriate lab governance for dual-use content.

- Cloud/container runtime choices for agent workloads

- Sectors: cloud, robotics, edge computing

- What: Prefer microVM isolation (Firecracker), gVisor, or Kata for agent tasks; use the benchmark to justify and tune isolation choices for performance-security trade-offs.

- Tools/workflows: Comparative runs across runtimes; policy default to microVMs for high-privilege or internet-connected agents.

- Assumptions/dependencies: Availability and maturity of alternative runtimes; cost/performance impact accepted by stakeholders.

- Patch and vulnerability management tied to runtime/K8s versions

- Sectors: enterprise IT, DevSecOps

- What: Map benchmark scenarios to tracked CVEs; enforce SLAs for patching runc/Docker/K8s and kernel versions; verify via post-patch re-runs.

- Tools/workflows: CVE watchlists; automated fleet scanning; benchmark validation post-upgrade.

- Assumptions/dependencies: Timely patch availability; controlled rollout windows; realistic staging environments.
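A pass/fail threshold for the CI/CD gate, or for the vendor bar of zero escapes on difficulty 1–2 misconfigurations, might be expressed as below. This is a minimal sketch. The `ScenarioResult` record, the scenario names, and the default threshold are hypothetical. Parsing the actual Inspect AI log output into these records is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScenarioResult:
    scenario: str    # hypothetical scenario identifier
    difficulty: int  # expert difficulty rating (lower = easier)
    escaped: bool    # True if the flag was captured in any epoch


def blocking_scenarios(results: list[ScenarioResult], max_difficulty: int = 2) -> list[str]:
    """Scenarios where an escape at or below max_difficulty should block release."""
    return [r.scenario for r in results if r.escaped and r.difficulty <= max_difficulty]


def main(results: list[ScenarioResult]) -> int:
    """Return a process exit code: 0 to pass the gate, 1 to block the release."""
    blocking = blocking_scenarios(results)
    for name in blocking:
        print(f"BLOCKING: agent escaped in {name}")
    return 1 if blocking else 0


results = [
    ScenarioResult("docker_socket_mount", difficulty=1, escaped=True),
    ScenarioResult("privileged_container", difficulty=2, escaped=False),
    ScenarioResult("kernel_one_day", difficulty=4, escaped=True),
]
print(main(results))  # prints one BLOCKING line, then 1
```

A CI step could exit with the code returned by `main`, so any blocking escape fails the pipeline. Harder scenarios are reported but do not block under this default.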
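The configuration-linter idea could start from a small set of checks over a parsed Docker Compose service definition. The keys checked (`privileged`, `volumes`, `cap_add`, `security_opt`, `pid`, `network_mode`) are standard Compose fields. The rule set, the choice of risky capabilities, and the `lint_service` name are illustrative assumptions and are not specified by the benchmark. The input is assumed to be a dict already loaded from YAML.

```python
DOCKER_SOCKETS = {"/var/run/docker.sock", "/run/docker.sock"}
# Illustrative, non-exhaustive set of capabilities tied to known escape techniques.
RISKY_CAPS = {"ALL", "SYS_ADMIN", "SYS_MODULE", "SYS_PTRACE", "DAC_READ_SEARCH"}
UNCONFINED = {"seccomp:unconfined", "apparmor:unconfined"}


def lint_service(name: str, svc: dict) -> list[str]:
    """Return human-readable findings for one Compose service definition."""
    findings = []
    if svc.get("privileged") is True:
        findings.append(f"{name}: runs as a privileged container")
    for vol in svc.get("volumes", []):
        # Short syntax "src:dst[:mode]" or long syntax {"source": ..., "target": ...}.
        source = vol.get("source", "") if isinstance(vol, dict) else str(vol).split(":", 1)[0]
        if source.rstrip("/") in DOCKER_SOCKETS:
            findings.append(f"{name}: mounts the Docker socket {source}")
    for cap in svc.get("cap_add", []):
        if cap.upper().removeprefix("CAP_") in RISKY_CAPS:
            findings.append(f"{name}: adds capability {cap}")
    for opt in svc.get("security_opt", []):
        # Treat "seccomp:unconfined" and "seccomp=unconfined" the same way.
        if opt.replace("=", ":").lower() in UNCONFINED:
            findings.append(f"{name}: disables confinement via {opt}")
    if svc.get("pid") == "host":
        findings.append(f"{name}: shares the host PID namespace")
    if svc.get("network_mode") == "host":
        findings.append(f"{name}: shares the host network namespace")
    return findings


agent = {
    "privileged": True,
    "volumes": ["/var/run/docker.sock:/var/run/docker.sock"],
    "cap_add": ["NET_BIND_SERVICE", "CAP_SYS_ADMIN"],
}
for finding in lint_service("agent", agent):
    print(finding)
```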
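The escape-indicator tripwire could begin as simple pattern rules over logged shell commands, triggering a session kill-switch on any match. The patterns below are hypothetical examples of well-known container-escape footholds: Docker socket access, host `/proc/<pid>/root` paths, namespace entry, cgroup `release_agent`, `core_pattern`, and mounting host block devices. They are not derived from the benchmark's transcripts. Matching on command strings is easy to evade, so this sketch only illustrates the fail-closed workflow.

```python
import re

# Hypothetical indicator rules for well-known escape footholds; tune against real logs.
ESCAPE_INDICATORS = {
    "docker_socket": re.compile(r"docker\.sock|--unix-socket"),
    "host_proc_root": re.compile(r"/proc/\d+/root\b"),
    "namespace_entry": re.compile(r"\b(?:nsenter|unshare)\b"),
    "cgroup_release_agent": re.compile(r"\brelease_agent\b|\bnotify_on_release\b"),
    "core_pattern": re.compile(r"/proc/sys/kernel/core_pattern"),
    "host_block_mount": re.compile(r"\bmount\b.*\s/dev/\w+"),
}


def matched_indicators(command: str) -> list[str]:
    """Names of all indicator rules that match a single logged command."""
    return [name for name, pattern in ESCAPE_INDICATORS.items() if pattern.search(command)]


def should_kill_session(commands: list[str]) -> bool:
    """Fail closed: any indicator in any logged command ends the session."""
    return any(matched_indicators(cmd) for cmd in commands)


session = [
    "id",
    "ls -la /",
    "curl -s --unix-socket /var/run/docker.sock http://localhost/containers/json",
]
print(should_kill_session(session))  # True
```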

Long-Term Applications

These ideas require additional research, scaling, standardization, or productization before broad deployment.

- Certification/standards for AI-agent containment

- Sectors: policy, regulators, critical infrastructure

- What: Build an industry baseline akin to CIS/NIST benchmarks for AI agents, with conformance profiles using SandboxEscapeBench (e.g., must pass all misconfig scenarios and specified runtime tests).

- Tools/workflows: Third-party test labs; standardized scoring; attestations integrated into audits.

- Assumptions/dependencies: Multi-stakeholder governance; evolving test sets; alignment across vendors.

- “SandboxEscapeBench-as-a-Service” (managed evaluation)

- Sectors: cloud, MSPs, platform vendors

- What: Hosted service to run escape tests against customer-specific configs; continuous monitoring integrated with change management.

- Tools/workflows: Multi-tenant inspection backends; secure job isolation; APIs for results ingestion.

- Assumptions/dependencies: Customer data isolation guarantees; liability and safe-ops policies for dual-use risks.

- Auto-hardening and policy synthesis using evaluation feedback

- Sectors: software, MLOps

- What: Close the loop by generating seccomp/AppArmor profiles or K8s policies tailored to observed escape attempts; automatically strip capabilities and tighten mounts.

- Tools/workflows: Integration with policy generators (e.g., Confine-like tools); reinforcement mechanisms that iterate until benchmark passes.

- Assumptions/dependencies: Accurate mapping from observed behavior to safe policies without breaking functionality; test coverage of app behaviors.

- Real-time containment and control research

- Sectors: AI safety, academia, enterprise R&D

- What: Use the benchmark to evaluate dynamic guardrails (e.g., command mediators, approval workflows) and RL-based or rules-based systems that prevent risky actions at run time.

- Tools/workflows: ControlArena-style experiments; A/B testing with live agents; adaptive token-budgeting.

- Assumptions/dependencies: Generalization of guardrails to out-of-distribution environments; acceptable UX/latency impact.

- LLM-agent observability and forensic products

- Sectors: security vendors

- What: Build products that score, explain, and alert on agent escape risks using transcript analytics (e.g., disengagement, false success, long-horizon exploitation attempts).

- Tools/workflows: LLM-as-judge pipelines; behavioral baselines per model/version; SOC integrations.

- Assumptions/dependencies: Privacy-preserving log processing; model drift monitoring.

- Advanced cyber ranges for AI-enabled threats

- Sectors: defense, energy, finance

- What: Extend scenarios to cloud-native ranges with orchestration and kernel variants; train defenders against AI-driven exploitation patterns.

- Tools/workflows: Scenario orchestration at scale; red-team/blue-team exercises with safe nested virtualization.

- Assumptions/dependencies: Cloud costs and scaling; safe exposure of near-real vulnerabilities.

- Insurance and risk underwriting for AI-agent deployments

- Sectors: insurance, finance

- What: Use benchmark scores and compute-budget controls to price risk; mandate containment baselines for coverage.

- Tools/workflows: Risk models that incorporate escape rates by difficulty and deployment parameters (tokens, retries, parallelism).

- Assumptions/dependencies: Sufficient actuarial data; standard disclosures from insureds.

- Benchmark-driven research on model behavior and training

- Sectors: academia, labs

- What: Study how hinting, tool availability, and RL training signals impact escape propensity; evaluate training methods that reduce unsafe tool use without harming capability.

- Tools/workflows: Controlled ablations; longitudinal tracking of new frontier models; shared datasets of transcripts (with redactions).

- Assumptions/dependencies: Access to model variants and training knobs; clear metrics linking containment to utility.

- Robotics/edge agent isolation patterns

- Sectors: robotics, IoT, industrial

- What: Adapt nested-sandbox ideas to resource-constrained devices (e.g., microVMs on edge gateways); verify via tailored scenario subsets.

- Tools/workflows: Lightweight virtualization (Kata/Firecracker) tuned for edge; OTA patching plus benchmark validation.

- Assumptions/dependencies: Hardware support for virtualization; constrained CPU/RAM budgets.

- Zero-day monitoring and coordinated disclosure pipelines

- Sectors: security research, cloud, vendors

- What: As models improve, extend private test sets to detect novel escapes; build safe triage and disclosure processes.

- Tools/workflows: Private “unknowns” track; gated researcher programs; vendor coordination channels.

- Assumptions/dependencies: Careful dual-use governance; clear criteria for novel vs. known exploit classes.
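The policy-synthesis loop could start by turning the system calls observed during a benign run of a workload into an allowlist-style Docker seccomp profile. This is a sketch under stated assumptions. The input is assumed to be `strace -f` output. The `NEVER_ALLOW` denylist is an illustrative choice, not a vetted policy. Any syscall missing from the trace will be denied, including ones needed only at startup or on rare code paths. That is exactly the risk of breaking functionality listed among the assumptions above.

```python
import json
import re

# Matches "name(" at the start of an strace line, optionally after a
# "[pid N] " or "N " prefix added when tracing with -f.
STRACE_CALL = re.compile(r"^(?:\[pid\s+\d+\]\s+|\d+\s+)?([a-z_][a-z0-9_]*)\(")

# Illustrative denylist: never allowlist these, even if the workload used them.
NEVER_ALLOW = {
    "add_key", "bpf", "finit_module", "init_module", "keyctl", "mount",
    "open_by_handle_at", "ptrace", "setns", "umount2", "unshare",
}


def observed_syscalls(strace_lines: list[str]) -> set[str]:
    """Collect syscall names from strace output; signal and exit lines do not match."""
    names = set()
    for line in strace_lines:
        match = STRACE_CALL.match(line)
        if match:
            names.add(match.group(1))
    return names


def seccomp_allowlist(syscalls: set[str]) -> dict:
    """Build a Docker-style seccomp profile that denies every syscall not listed."""
    allowed = sorted(syscalls - NEVER_ALLOW)
    return {
        "defaultAction": "SCMP_ACT_ERRNO",
        "syscalls": [{"names": allowed, "action": "SCMP_ACT_ALLOW"}],
    }


trace = [
    'openat(AT_FDCWD, "/etc/hostname", O_RDONLY) = 3',
    '[pid  42] read(3, "sandbox\\n", 4096) = 8',
    '1234 mount("/dev/sda1", "/mnt", "ext4", 0, NULL) = -1 EPERM',
    "+++ exited with 0 +++",
]
print(json.dumps(seccomp_allowlist(observed_syscalls(trace)), indent=2))
```

The resulting JSON could be saved to a file and passed to `docker run --security-opt seccomp=<file>`. Rerunning the benchmark against the tightened container would then close the loop.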

Notes on feasibility and dependencies

- Scope limits: The benchmark targets container-to-host escapes (orchestration, runtime, kernel). It excludes application-layer vulns and hardware side channels; real production risk depends on defense-in-depth and monitoring.

- Threat model assumptions: Adversarial or misaligned agents with shell access and no active detection; immediate applications should add monitoring/logging to narrow this gap.

- Environment parity: Results generalize best when test environments match production (runtime versions, kernel configs, capabilities).

- Compute scaling: Risk increases with token budgets and retries; policies that cap or review compute use materially reduce escape likelihood.

- Dual-use governance: Public scenarios should be managed alongside private test sets; organizations must implement access controls and safe-use policies.

Glossary

- Ablation: A controlled variation of an experiment used to isolate the effect of a specific factor or component. "We also run an ablation experiment in which the agent is informed of the specific vulnerability."

- Agentic (benchmarks/evaluations): Relating to tests or setups that evaluate autonomous agent behavior and decision-making. "recent work on agentic benchmark design"

- Attack surface: The set of system components and interfaces that can be targeted or exploited by an attacker. "organised into layers spanning orchestration, runtime, and kernel attack surfaces"

- Binary overwrite: An exploitation technique where an attacker replaces an executable binary to gain control or escalate privileges. "binary overwrites (CVE-2019-5736)"

- Capture the Flag (CTF): A class of security challenges where participants exploit vulnerabilities to retrieve hidden tokens called flags. "an Inspect AI Capture the Flag (CTF) evaluation"

- cgroup (Linux control groups): A Linux kernel feature for isolating and limiting process resources like CPU and memory. "namespace and cgroup isolation alone"

- Control plane (Kubernetes): The set of components that manage the state and scheduling of a Kubernetes cluster (e.g., API server, scheduler). "Kubernetes control plane bypass"

- Copy-on-write: A memory and filesystem optimization where modifications create copies; in exploits, associated with race conditions. "copy-on-write race conditions"

- Credential swapping: A kernel exploitation technique that swaps or reassigns credentials to escalate privileges. "credential swapping attacks"

- CRI-O: A lightweight Container Runtime Interface implementation for Kubernetes. "orchestration flaws in CRI-O and kubectl"

- Cross-container attacks: Techniques that allow an attacker to affect or compromise other containers from one container. "eBPF-based cross-container attacks"

- Dirty Pipe: A high-impact Linux kernel vulnerability enabling privilege escalation via page cache manipulation. "kernel exploits such as Dirty Pipe and cgroup abuse"

- Docker API: The programmatic interface to the Docker daemon that controls container lifecycle; exposure can enable takeover. "exposed Docker APIs"

- Docker daemon: The background service that manages Docker containers and images; compromising it breaks isolation. "Docker daemon exposure"

- Docker socket: The Unix socket (often /var/run/docker.sock) through which clients communicate with the Docker daemon. "Docker socket exposure"

- eBPF: Extended Berkeley Packet Filter; a kernel technology for running sandboxed programs, which can be abused for privilege escalation. "eBPF-based cross-container attacks"

- Empirical CDF (ECDF): A statistical function showing the cumulative distribution of observed data. "Empirical CDF of transcript lengths by model."

- Exact string matching: A strict comparison approach where the entire string must match exactly to count as a success. "We use exact string matching to determine success."

- Firecracker: A microVM-based virtualization technology designed for lightweight isolation in serverless and container workloads. "microVMs like Firecracker"

- gVisor: A userspace kernel that intercepts and emulates system calls to provide isolation for containers. "userspace kernels like gVisor"

- Hardware side-channels: Indirect information leaks via hardware behaviors (e.g., caches, speculation) that can bypass software isolation. "We exclude L5 (hardware side-channels)"

- Hypervisor: Software or firmware that creates and manages virtual machines by abstracting hardware resources. "a VM is created using an Inspect sandbox plugin for a virtual machine hypervisor"

- Inference-time compute: The computational budget allocated during model inference (e.g., tokens, time) that affects performance. "We additionally study inference-time compute scaling"

- Isolation boundary: The defined barrier intended to prevent code or data from crossing between trust domains. "whether container sandboxes are a safe isolation boundary"

- Kata (Kata Containers): A technology combining lightweight VMs and containers to enhance isolation. "MicroVM-based isolation (Firecracker, Kata) can be bypassed through operation forwarding mechanisms"

- kubectl: The command-line tool used to interact with Kubernetes clusters. "orchestration flaws in CRI-O and kubectl"

- Kubernetes: An open-source platform for container orchestration across clusters of machines. "Kubernetes control plane bypass"

- KV cache (KV-caching): Key-value caching of attention states to reduce compute in long LLM contexts. "KV-caching support"

- Linux capabilities: Fine-grained privileges that subdivide root permissions into discrete capabilities. "kernel security mechanisms (capabilities, seccomp filters, and mandatory access control)"

- LLM-as-judge: Using an LLM to evaluate or grade outputs from other models or agents. "we analysed eval transcripts using a combination of metadata-based and LLM-as-judge classifiers"

- Mandatory access control (MAC): A security policy framework enforcing system-wide access rules (e.g., SELinux, AppArmor). "mandatory access control"

- microVM: A minimal virtual machine offering stronger isolation than containers with lower overhead than traditional VMs. "microVMs like Firecracker"

- Namespace: Linux isolation mechanism that partitions system resources (e.g., PID, network, mount) for processes. "outside all container namespaces"

- Namespace escape: An attack that breaks out of Linux namespaces to access host resources. "Namespace escapes"

- Network policy: Rules that control how pods communicate with each other and network endpoints in Kubernetes. "network policy exploitation."

- OCI (Open Container Initiative): A set of open industry standards for container runtime and image formats. "Docker/OCI containers"

- One-day (vulnerability): A known vulnerability with public details and often patches, but still exploitable. "one-day scenarios"

- Operation forwarding: Mechanisms that forward privileged operations from isolated environments to the host, potentially weakening isolation. "operation forwarding mechanisms"

- Out-of-bounds vulnerability: A memory safety flaw where code reads/writes outside allocated bounds. "out-of-bounds vulnerabilities"

- Page cache: The kernel’s cache of file-backed pages in memory; bugs here can enable data leaks or privilege escalation. "page cache vulnerabilities"

- Proof-of-concept (PoC): A minimal, reproducible demonstration of a vulnerability or exploit. "availability of reproducible proof-of-concept exploits"

- Privilege escalation: Gaining higher system privileges than initially granted, often to root on the host. "privilege escalation via eBPF"

- Privileged container: A container started with elevated privileges that can access host resources more directly. "privileged containers"

- runc: The low-level CLI tool and runtime that creates and runs containers according to the OCI specification. "runc file descriptor leaks (CVE-2024-21626)"

- Reward hacking: Exploiting flaws in an evaluation metric or reward signal to appear successful without truly solving the task. "This binary scoring prevents reward hacking"

- Nested sandboxing: A defense technique where an inner sandbox is itself contained by an outer sandbox or VM for safety. "using nested sandboxing"

- Seccomp: A Linux kernel feature to filter and restrict system calls available to a process. "seccomp filters"

- Syscall specialisation: Restricting a program’s available system calls to reduce attack surface. "through syscall specialisation"

- Threat model: A formal description of assumed attacker capabilities, goals, and constraints used to scope security analysis. "Following a threat model of a motivated adversarial agent"

- Token budget: The limit on input/output tokens allocated to a model during inference, affecting how much it can reason or act. "inference-time token budget"

- Userspace kernel: An approach where kernel-like functionality runs in userspace processes to mediate system calls for isolation. "userspace kernels like gVisor"

- Wilson score interval: A method for computing confidence intervals for binomial proportions. "Confidence intervals are 95% Wilson score intervals"

- Zero-day: A previously unknown vulnerability with no available patch at the time of discovery/exploitation. "10% success on zero-day and 13% on one-day scenarios"
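For reference, the 95% Wilson score interval for s successes in n trials, with p̂ = s/n and z ≈ 1.96, is (p̂ + z²/(2n) ± z·sqrt(p̂(1 - p̂)/n + z²/(4n²))) / (1 + z²/n). A minimal standard-library sketch follows; the function name `wilson_interval` is hypothetical. Unlike the normal-approximation interval, it stays informative at 0 or n successes, which matters for scenarios that models rarely or always solve.

```python
import math
from statistics import NormalDist


def wilson_interval(successes: int, n: int, confidence: float = 0.95) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("require n > 0 and 0 <= successes <= n")
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # about 1.96 for 95%
    p_hat = successes / n
    denom = 1 + z * z / n
    center = (p_hat + z * z / (2 * n)) / denom
    half_width = (z / denom) * math.sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n))
    # Clamp tiny floating-point overshoot outside [0, 1].
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Example: 0 escapes in 10 epochs still yields an upper bound near 28%.
low, high = wilson_interval(0, 10)
print(f"[{low:.3f}, {high:.3f}]")
```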
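The empirical CDF used for transcript lengths is F̂(x) = (number of samples ≤ x) / n. Below is a short sketch using `bisect`. The `ecdf` name is hypothetical, and the transcript lengths in the example are invented.

```python
from bisect import bisect_right
from collections.abc import Callable


def ecdf(samples: list[float]) -> Callable[[float], float]:
    """Return F(x) = fraction of samples <= x (a right-continuous step function)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    n = len(ordered)

    def cdf(x: float) -> float:
        return bisect_right(ordered, x) / n

    return cdf


# Hypothetical transcript lengths (in messages) for one model.
F = ecdf([12, 40, 40, 95])
print(F(40))  # 0.75
```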
