A golden-ratio partition of information and the balance between prediction and surprise: a neuro-cognitive route to antifragility

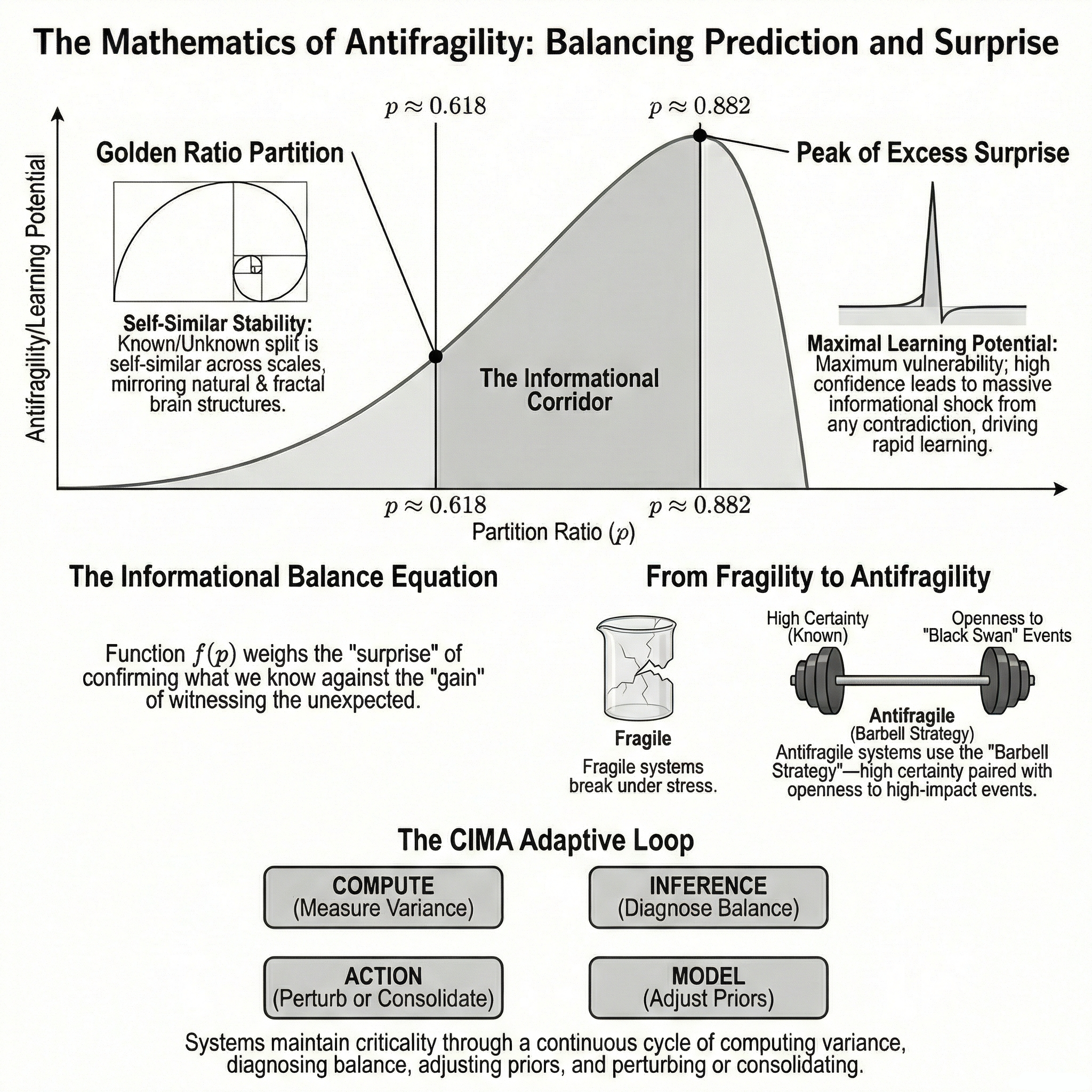

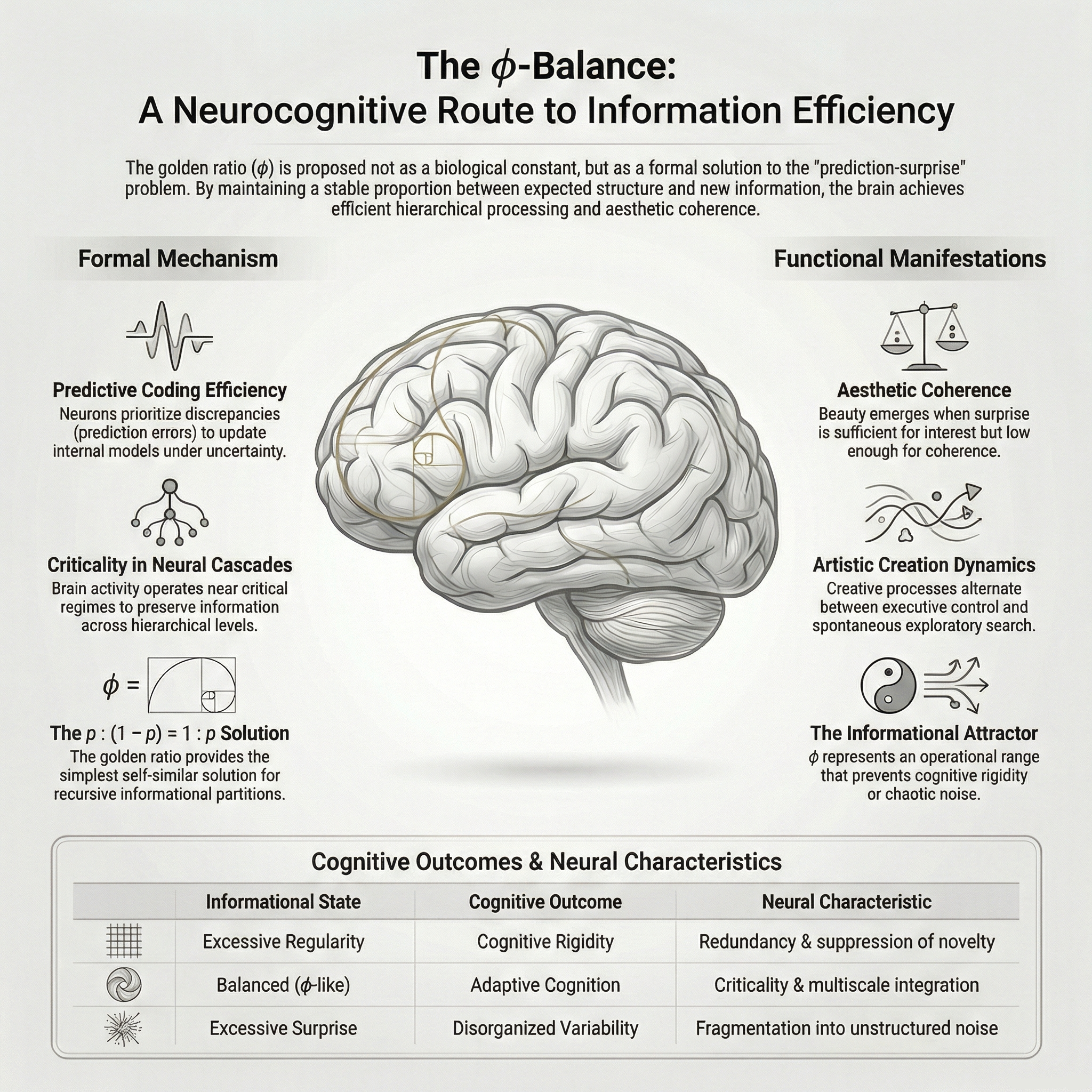

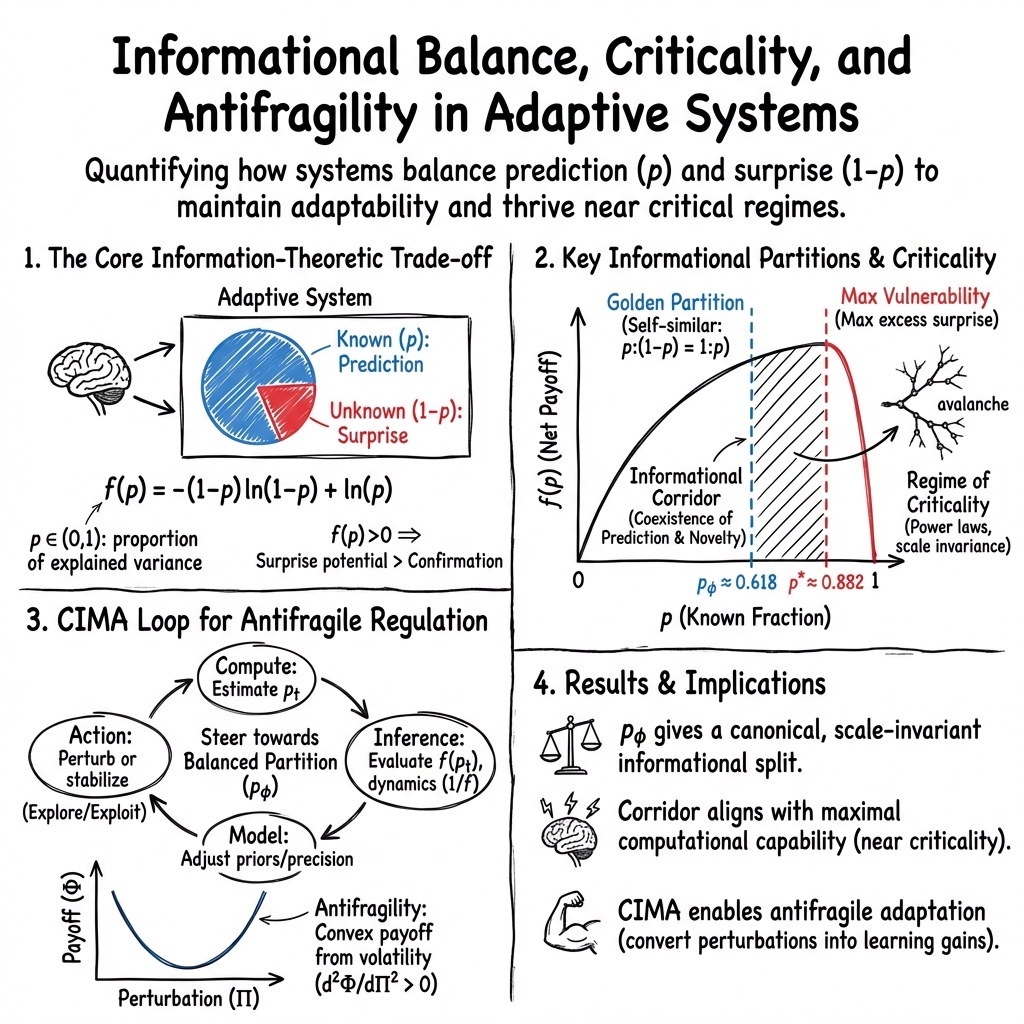

Abstract: Adaptive systems must strike a balance between prediction and surprise to thrive in uncertain environments. We propose an information-theoretic balance function, $f(p) = -(1 - p)\ln(1 - p) + \ln p$, which quantifies the net informational gain from contrasting explained variance $p$ with unexplained novelty $(1 - p)$. This function is strictly concave on $(0,1)$ and reaches its unique maximum at $p^* \approx 0.882$, revealing a regime where confidence is high but the residual uncertainty carries a disproportionate potential for surprise. Independently of this maximum, imposing a self-similarity condition between known, unknown and total information, $p : (1-p) = 1 : p$, leads to the golden-ratio reciprocal $p = 1/\varphi \approx 0.618$, where $\varphi$ is the golden ratio. We interpret this value not as the maximizer of $f$, but as a structurally privileged partition in which known and unknown are proportionally nested across scales. Embedding this dual structure into a Compute-Inference-Model-Action (CIMA) loop yields a dynamic process that maintains the system near a critical regime where prediction and surprise coexist. At this edge, neuronal dynamics exhibit power-law structure and maximal dynamic range, while the system's response to perturbations becomes convex at the level of its payoff function, fulfilling the formal definition of antifragility. We suggest that the golden-ratio partition is not merely a mathematical artifact, but a candidate design principle linking prediction, surprise, criticality, and antifragile adaptation across scales and domains, while the maximum of $f$ identifies the point of greatest informational vulnerability to being wrong.
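For reference, both special values follow from a few lines of calculus on the definition of $f$ above. This working is our own check rather than a reproduction of the paper's derivation: strict concavity, together with a sign change of $f'$ across $(0,1)$, gives exactly one maximum, and the self-similarity condition reduces to a quadratic.

```latex
\begin{aligned}
f(p)   &= -(1-p)\ln(1-p) + \ln p, \qquad 0 < p < 1 \\
f'(p)  &= \ln(1-p) + 1 + \frac{1}{p} \\
f''(p) &= -\frac{1}{1-p} - \frac{1}{p^{2}} < 0 \quad \text{on } (0,1) \\
\lim_{p \to 0^{+}} f'(p) &= +\infty, \qquad \lim_{p \to 1^{-}} f'(p) = -\infty \\
f'(p^{*}) = 0 &\iff \ln(1-p^{*}) = -1 - \frac{1}{p^{*}} \;\Rightarrow\; p^{*} \approx 0.882 \\
\frac{p}{1-p} = \frac{1}{p} &\iff p^{2} + p - 1 = 0 \;\Rightarrow\; p = \frac{\sqrt{5}-1}{2} = \frac{1}{\varphi} \approx 0.618
\end{aligned}
```

The stationarity condition mixes a logarithm with a rational term, so $p^*$ is obtained numerically, while the golden-ratio value comes straight from the quadratic formula.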

Explain it Like I'm 14

Overview

This paper asks a simple but powerful question: how can smart systems (like brains or AI) stay good at predicting what happens next, while also staying open to surprises that help them learn? The authors build a math-based way to measure the balance between “what we think we know” and “what we don’t,” and show that keeping this balance near a special zone can make systems antifragile—that is, they get better when stressed or surprised.

Objectives

The paper’s goals, in everyday terms, are to:

- Create a clear measure of the tug-of-war between prediction and surprise.

- Find special points where this balance is most meaningful: one where being wrong hurts the most, and one that divides “known” and “unknown” in a self-similar, golden-ratio way.

- Connect this balance to how brains work near “criticality” (the edge between order and chaos) and to how systems can improve when challenged (antifragility).

- Show how to use this balance inside a simple decision-making loop so systems can keep themselves in that sweet spot.

Methods and Approach

The authors use a mix of math and simple brain-inspired modeling:

- They define a balance function that compares the expected surprise from the unknown with the “cost” of confirming what you already expected: $f(p) = -(1 - p)\ln(1 - p) + \ln p$.

  - Here, $p$ is the fraction of variation in the world your model can explain (think: how confident you are), and $1 - p$ is the leftover novelty (the part you don’t explain yet).

  - “ln” (the natural logarithm) is used because in information theory $-\ln q$ measures the surprise of an event with probability $q$: unlikely events carry high surprise, likely events carry low surprise.

- They analyze the shape of $f$:

  - It’s strictly concave (curving downward) and falls away at both ends of $(0,1)$, so it has exactly one highest point.

  - They find that maximum at $p^* \approx 0.882$. This is where you’re very confident, but the small remaining uncertainty is most likely to catch you off guard.

- They also look for a “self-similar” split between known and unknown using the golden ratio. They require the unknown part to relate to the known part as the known part relates to the whole: $p : (1 - p) = 1 : p$, i.e. $p^2 + p - 1 = 0$, whose positive root is $p = 1/\varphi = (\sqrt{5} - 1)/2 \approx 0.618$.

  - This $p$ (about 62%) is not where $f$ is largest; it’s a structurally special partition that repeats the same proportion at different scales (like many natural patterns).

- They embed these ideas into a simple four-step loop called CIMA (Compute–Inference–Model–Action), showing how a system can measure its balance, adjust its internal model, and act to maintain a healthy mix of prediction and surprise.

- They sketch a minimal brain-like setup (predictive coding) in which the system tries to predict incoming signals, measures how much it’s getting right ($p$), and tunes its sensitivity to stay near the balanced zone.
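A quick numerical check of the two special values, assuming only the formula for $f$ given in the abstract. The function names (`balance`, `balance_slope`, `argmax_balance`, `golden_partition`) are illustrative rather than taken from the paper, and bisection on the analytic derivative is simply our choice of root-finder.

```python
import math


def balance(p: float) -> float:
    """Balance function f(p) = -(1 - p) ln(1 - p) + ln p, defined for 0 < p < 1."""
    return -(1.0 - p) * math.log(1.0 - p) + math.log(p)


def balance_slope(p: float) -> float:
    """Analytic derivative f'(p) = ln(1 - p) + 1 + 1/p."""
    return math.log(1.0 - p) + 1.0 + 1.0 / p


def argmax_balance(lo: float = 1e-9, hi: float = 1.0 - 1e-12, tol: float = 1e-12) -> float:
    """Locate p* by bisection on f'(p).

    f is strictly concave, so f' is strictly decreasing; it is positive near 0 and
    negative near 1, hence it has exactly one root, which is the maximiser of f."""
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if balance_slope(mid) > 0.0:
            lo = mid  # f still rising: the maximum lies to the right
        else:
            hi = mid  # f falling: the maximum lies to the left
    return 0.5 * (lo + hi)


def golden_partition() -> float:
    """Positive root of p^2 + p - 1 = 0, i.e. the split with p : (1 - p) = 1 : p."""
    return (math.sqrt(5.0) - 1.0) / 2.0


if __name__ == "__main__":
    p_star = argmax_balance()
    p_phi = golden_partition()
    phi = (1.0 + math.sqrt(5.0)) / 2.0
    print(f"p*    = {p_star:.4f}, f(p*)    = {balance(p_star):.4f}")
    print(f"1/phi = {p_phi:.4f}, f(1/phi) = {balance(p_phi):.4f}")
    # Self-similarity: known/unknown should equal whole/known.
    print(math.isclose(p_phi / (1.0 - p_phi), 1.0 / p_phi))
    print(math.isclose(p_phi, 1.0 / phi))
```

Running it should report $p^*$ close to 0.882 and $1/\varphi$ close to 0.618, with $f(1/\varphi) < f(p^*)$, consistent with the point that the golden-ratio split is not the maximizer of $f$.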

Key Findings

Here are the main results, explained simply:

- The balance function captures “net informational payoff”: how much more surprise you can expect from the unknown compared to the boring confirmation of what you already expect. This helps to judge whether your confidence is helpful or risky.

- There are two special values of $p$:

  - $p^* \approx 0.882$: the point of maximum “vulnerability.” You’re very confident, but the small unknown part has the greatest potential to shock you.

  - $p = 1/\varphi \approx 0.618$: the golden-ratio partition. It’s a self-similar split where the ratio of unknown to known equals the ratio of known to the whole. Think of it as a balanced structure that repeats across scales.

- Between about 0.618 and 0.882 lies an “informational corridor” where prediction and surprise coexist. Operating here keeps a system coherent but responsive—like balancing between rigid routine and total randomness.

- This corridor lines up with how brains seem to work near “criticality”:

  - At criticality, patterns look similar when you zoom in or out (scale invariance), and the system has a wide dynamic range (it can respond well to both weak and strong signals).

  - Being near this edge makes it easier to learn from surprises, compute efficiently, and stay flexible.

- The authors argue that when the CIMA loop uses the golden-ratio split as a guiding rule and monitors the balance function, the system can keep itself near that edge and become antifragile: it gains more from bigger perturbations than from smaller ones.
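The convexity criterion for antifragility can be made concrete with a symmetric two-point shock. This is a generic illustration of Jensen's inequality under our own assumptions, not the paper's CIMA model: `perturbation_gain`, `convex_payoff` and `concave_payoff` are hypothetical names, and the quadratic payoffs are arbitrary stand-ins.

```python
from typing import Callable


def perturbation_gain(payoff: Callable[[float], float], x0: float, sigma: float) -> float:
    """Mean payoff under a symmetric +/- sigma shock minus the unshocked payoff.

    By Jensen's inequality this is > 0 for a strictly convex payoff (antifragile),
    < 0 for a strictly concave payoff (fragile) and 0 for a linear payoff."""
    return 0.5 * (payoff(x0 + sigma) + payoff(x0 - sigma)) - payoff(x0)


def convex_payoff(x: float) -> float:
    return x * x  # hypothetical convex response


def concave_payoff(x: float) -> float:
    return -x * x  # hypothetical concave response (mirror image of the above)


if __name__ == "__main__":
    for sigma in (0.5, 1.0, 2.0):
        gain_up = perturbation_gain(convex_payoff, 1.0, sigma)
        gain_down = perturbation_gain(concave_payoff, 1.0, sigma)
        print(f"sigma={sigma}: convex {gain_up:+.2f}, concave {gain_down:+.2f}")
```

For $x^2$ the gain is exactly $\sigma^2$, so the script prints +0.25, +1.00 and +4.00 for the convex payoff and -0.25, -1.00 and -4.00 for the concave one: larger shocks help the convex system more and hurt the concave one more.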

Why It Matters

- If a system is too confident ($p$ close to 1), it can miss important surprises and become fragile: one unexpected event can cause big problems.

- If a system is too uncertain ($p$ too low), it loses coherence and can’t make useful predictions.

- The sweet spot is a repeatable, natural-looking balance where you know enough to act but keep enough curiosity to learn.

This matters for brains, AI, organizations, and even ecosystems: all need a principled way to decide how much to exploit what they know versus explore what they don’t.

Implications and Potential Impact

- Design principle: The golden-ratio partition (roughly a 62% known / 38% unknown split) is a simple, scalable rule-of-thumb for balancing stability and exploration. It suggests you should invest most resources in reliable predictions, while keeping a meaningful slice for novelty and tests.

- Safer learning: By monitoring $p$ and $f(p)$, systems can detect overconfidence and inject controlled uncertainty (like A/B tests or noise) to avoid brittle behavior.

- Brain and health research: The idea aligns with evidence that healthy brain activity sits near criticality—too far on either side (too ordered or too chaotic) is linked to problems.

- Better AI and decision-making: Embedding the CIMA loop lets AI systems and organizations adapt in real time, turning surprises into learning gains—antifragility in action.

- Across domains: Because the math is general (about information and proportions), the idea applies from neurons to markets to social systems: thrive by balancing prediction with surprise, and use stress to improve.
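One way the monitoring idea could look in code. Everything here is an assumption for illustration: estimating $p$ as an $R^2$-style explained-variance fraction, the names `explained_fraction`, `adjust_exploration`, `P_GOLDEN` and `P_STAR`, and the fixed-step rule. It is a toy stand-in for the Action stage of a CIMA loop, not the authors' algorithm.

```python
import math
from statistics import pvariance

# Corridor bounds taken from the text; P_STAR is a rounded numerical maximiser of f.
P_GOLDEN = (math.sqrt(5.0) - 1.0) / 2.0  # 1/phi, about 0.618
P_STAR = 0.8817                           # about 0.882


def explained_fraction(observed: list[float], predicted: list[float]) -> float:
    """Hypothetical estimator of p: R^2-style fraction of variance explained,
    1 - (mean squared residual) / (variance of the observations), clipped at 0."""
    if len(observed) != len(predicted):
        raise ValueError("observed and predicted must have the same length")
    mse = sum((o - q) ** 2 for o, q in zip(observed, predicted)) / len(observed)
    total = pvariance(observed)
    if total == 0.0:
        # Constant signal: fully predicted only if every prediction is exact.
        return 1.0 if mse == 0.0 else 0.0
    return max(0.0, 1.0 - mse / total)


def adjust_exploration(p: float, exploration: float, step: float = 0.05) -> float:
    """Hypothetical 'Action' rule: raise exploration (noise, A/B share) when p is above
    the corridor, lower it when p is below, leave it alone inside [P_GOLDEN, P_STAR]."""
    if p > P_STAR:
        exploration += step  # overconfident: buy back some surprise
    elif p < P_GOLDEN:
        exploration -= step  # too little explained: favour exploitation
    return min(1.0, max(0.0, exploration))


if __name__ == "__main__":
    observed = [1.0, 2.0, 3.0, 4.0, 5.0]
    predicted = [1.1, 1.9, 3.2, 3.8, 5.1]  # a model that explains almost everything
    p = explained_fraction(observed, predicted)
    print(f"p = {p:.3f}, exploration -> {adjust_exploration(p, exploration=0.10):.2f}")
```

With these toy numbers the script prints `p = 0.989, exploration -> 0.15`: the model sits above the corridor, so the rule injects a little more exploration. A fixed step keeps the sketch transparent; a real controller would more plausibly scale the step with the distance from the corridor.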

In short, the paper offers a clear, mathematically grounded way to keep systems smart, adaptable, and improving—even in unpredictable worlds.

Identified Knowledge Gaps, Limitations, and Open Questions

The paper presents an innovative framework for understanding the balance between prediction and surprise but leaves several aspects unexplored. Future research may address the following gaps:

Knowledge Gaps

- Empirical Validation: The framework is heavily theoretical. There is a need for empirical studies to validate the proposed informational balance function within various biological or artificial systems.

- Dynamic Feedback Mechanisms: The paper does not detail the mechanisms through which systems dynamically adjust between prediction and surprise in response to changing environments.

- Complex Systems Application: While the model is posited to apply to complex adaptive systems broadly, specific real-world applications and domain-specific calibrations are not discussed.

Limitations

- Assumed Homogeneity: The model assumes uniformity in the response across different types of systems, which may not hold in systems with heterogeneous components.

- Context Dependency: The paper lacks discussion on how varying contexts influence the informational balance, especially under different environmental stresses or perturbations.

- Nuance in Adaptive Mechanisms: The paper implies a broad applicability of a single framework across diverse systems without addressing potential variations in adaptive mechanisms.

- Simplification in Golden-ratio Application: Using the golden-ratio partition as a design principle may oversimplify the nuanced balance in cognitive and adaptive processes across different scales or domains.

Open Questions

- Integration with Existing Theories: How does this framework integrate with existing theories of learning and cognition, such as the Bayesian brain hypothesis or the free-energy principle?

- Parameter Sensitivity: How sensitive is the balance function to variations in model parameters, and how does this affect its application in different systems?

- Neuroscience Correlations: How can neurophysiological data be mapped directly onto the proposed models to identify instances of antifragility within brain dynamics?

- Further Mathematical Exploration: What alternative mathematical formulations or extensions of $f(p)$ could accommodate more complex interactions in adaptive systems?

- Cross-domain Applicability: How can the principles in this paper be applied to non-biological systems, such as financial markets or weather prediction models, which also balance prediction and uncertainty?

Addressing these gaps and questions could significantly enhance the understanding and applicability of the concepts presented in the paper. Future studies should focus on empirical validation, contextual adaptations, and integration with existing theoretical frameworks.
