A Response to paper “Critical Evaluation of Studies Alleging Evidence for Technosignatures in the POSS1-E Photographic Plates” by Watters et al. (2026)

Abstract: We respond to the critique by Watters et al. (2026) of the statistical analyses in Villarroel et al. (2025) and Bruehl & Villarroel (2025). We argue that the critique conflates object-level validation with ensemble-level statistical inference and relies on a reduced, heterogeneously filtered subset originally constructed for a different scientific purpose. We further question whether the aggressively filtered subset used in Watters et al. (2026) demonstrates a meaningful improvement in sample purity, given the twenty-fold reduction in sample size. Our simple, visual check does not suggest that it does. The subset further lacks complete temporal information and is seriously statistically underpowered for testing the reported Earth-shadow deficit. We emphasise that the horizontal separation metric used for plate assignment and time reconstruction as in Watters et al. (2026) depends on the inclusion of the cos(Dec) factor to ensure geometric consistency. Any omission would alter plate assignment and inferred observation times. Moreover, the analyses presented in Watters et al. (2026) do not include uncertainty estimates or error propagation, limiting the interpretability of the claimed null results. We conclude that the principal findings reported in Villarroel et al. (2025) and Bruehl & Villarroel (2025) are not invalidated by the analyses presented in Watters et al. (2026).

Explain it Like I'm 14

Overview

This paper is a reply to another paper by Watters et al. (2026). The authors explain why they believe their earlier results about mysterious short, bright flashes seen in old sky photographs are still valid. They argue that Watters et al. used a very small and heavily filtered dataset that wasn’t designed for the questions being asked, lacked complete timing information, and relied on a plate-matching step that only works if a geometric correction (the cos(Dec) factor) is included. The authors argue that when you use a larger, better-suited dataset and the right methods, you still see strong evidence that many of these flashes are real, likely sunlight reflections from objects near Earth, and that they avoid Earth’s shadow and are possibly connected to certain human activities.

Key questions

The paper focuses on a few simple questions:

- Are the short, bright flashes found in old sky images mostly camera or plate defects, or do some represent real things in space?

- Do these flashes avoid Earth’s shadow in a way that you’d expect if they are sunlight reflections?

- Are there meaningful connections between when these flashes happen and certain human activities, such as nuclear tests or increased reports of unusual aerial phenomena?

- Did Watters et al. (2026) fairly test these ideas, or did their methods and data choices make their tests too weak to find the real pattern?

How did the researchers approach it?

Think of this like studying a huge crowd to spot a pattern, rather than trying to verify every single person’s identity. The authors use “ensemble-level inference,” which means:

- Instead of proving each flash is real one-by-one, they look for big-picture patterns across many thousands of flashes. If there’s a pattern that matches a physical effect (like Earth’s shadow), that’s evidence some flashes are genuine.

- They work with a large dataset of about 107,000 flashes from old Palomar Observatory plates, and they know the time and date for each event. Having the correct times matters because Earth’s shadow moves with time.

- They compare where flashes appear on the sky to where Earth’s shadow would be during each exposure. If flashes are caused by sunlight reflecting off nearby objects, you’d expect fewer inside Earth’s shadow, where sunlight is blocked.

- They use standard statistical tools to measure how strong a pattern is. The “sigma” number is a way scientists talk about confidence: higher sigma means stronger evidence that the pattern isn’t just random.

- They explain why smaller samples are “underpowered.” That means there just aren’t enough events to reliably see the pattern. In statistics, if you dramatically shrink your sample, the strength of the evidence you can expect (the sigma) shrinks roughly with the square root of the sample size.

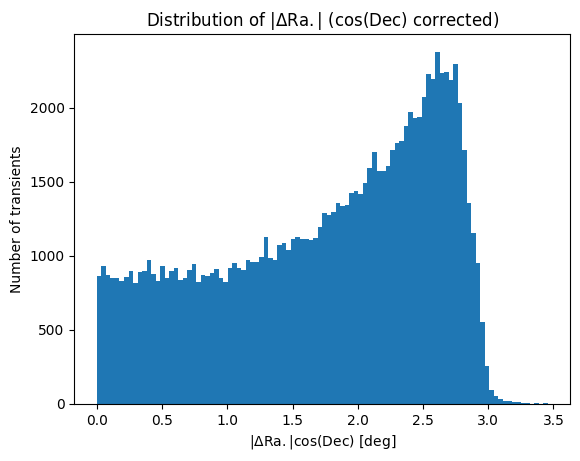

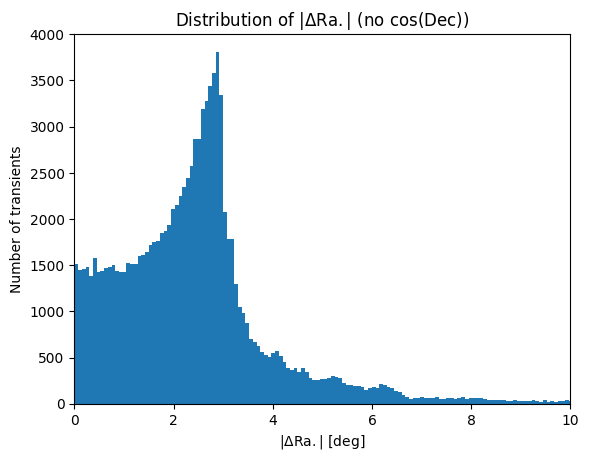

- They highlight a geometric detail called the cos(Dec) factor. Dec (declination) is like latitude on the sky. Measuring horizontal distances on the sky without multiplying by cos(Dec) is like measuring distances on a globe as if it were a flat map. You’ll get the wrong plate assignments and wrong observation times at higher “latitudes,” which breaks time-sensitive tests like the Earth’s shadow analysis.
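The cos(Dec) point can be made concrete with a small sketch. This is not the code used by either group; the function name `horizontal_separation_deg`, the use of the plate-center declination, and the example coordinates are all assumptions chosen for illustration, and the formula is the usual small-angle approximation.

```python
import math

def horizontal_separation_deg(ra_obj, ra_center, dec_center, use_cos_dec=True):
    """East-west angular offset (degrees) of an object from a plate center.

    Small-angle approximation: delta_RA * cos(Dec). All inputs are in degrees.
    Using the plate-center declination here is an assumption of this sketch.
    """
    # Wrap the RA difference into [-180, 180) so that 359.5 vs 0.5 gives 1 degree.
    d_ra = (ra_obj - ra_center + 180.0) % 360.0 - 180.0
    if use_cos_dec:
        d_ra *= math.cos(math.radians(dec_center))
    return abs(d_ra)

# Hypothetical object 5 degrees of RA away from a plate center at Dec = +60.
with_factor = horizontal_separation_deg(15.0, 10.0, 60.0)
without_factor = horizontal_separation_deg(15.0, 10.0, 60.0, use_cos_dec=False)
print(f"{with_factor:.2f} deg with cos(Dec), {without_factor:.2f} deg without")
```

At Dec = +60 the uncorrected offset is twice the true one, so an object can look too far from a plate that actually contains it. That is the kind of mismatch that could change which plate, and therefore which observation time, the object is assigned to.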

Main findings and why they matter

Here are the most important points the authors make:

- The big dataset shows a strong deficit of flashes in Earth’s shadow: the deficit is statistically significant when using realistic sky coverage, and even more so against a simple theoretical expectation. This supports the idea that many flashes are sunlight reflections from objects near Earth. In Earth’s shadow, sunlight is blocked, so you shouldn’t see reflections there, and you mostly don’t.

- The authors find correlations between flash activity and time windows around nuclear tests, and a smaller but present link to nights with more unusual aerial reports. Even after restricting the analysis to nights when the telescope actually observed (to be extra cautious), the association with nuclear test windows still appears. In plain terms: on nights near nuclear tests, there were more flashes than on other nights, and this wasn’t just because the telescope happened to be on.

- Using a tiny, heavily filtered sample (5,399 objects, and later 4,866) makes the test too weak to see the Earth’s shadow effect. If you shrink a dataset by about 20 times, the expected “sigma” drops by a factor of roughly √20 ≈ 4.5, assuming the effect itself stays the same. So not finding the effect in a small sample doesn’t mean the effect isn’t real; it often just means the test didn’t have enough data.

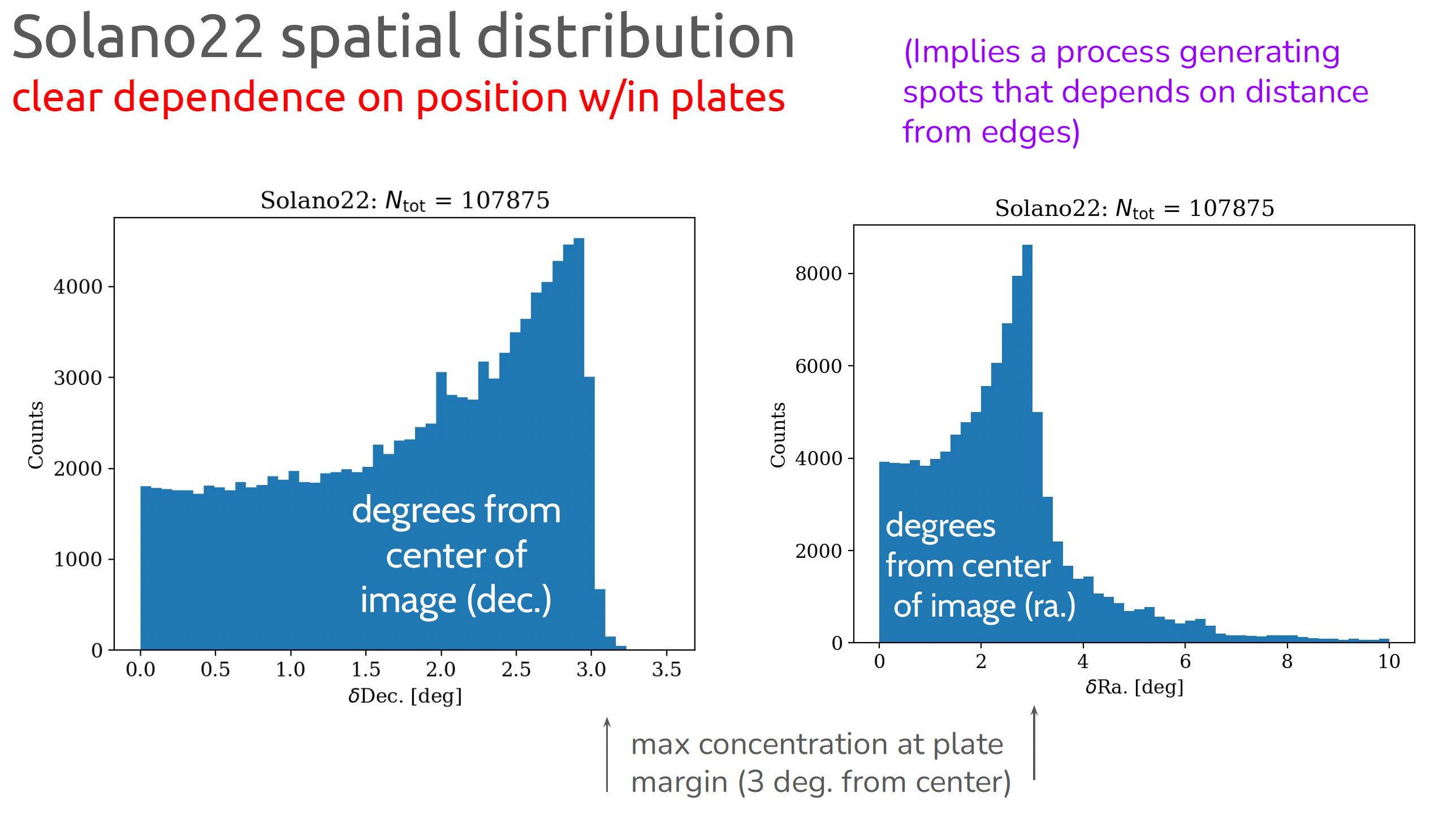

- Watters et al. relied on a dataset that lacks exact observation times and tried to reconstruct times by assigning flashes to nearby photographic plates using “horizontal separation.” If you do this without the cos(Dec) correction, you can assign many flashes to the wrong plates and times, especially at high declinations. That misassignment can erase or scramble time-based patterns like the Earth’s shadow.

- Comparing these short-lived flashes to the MAPS catalog (which mainly includes sources that show up in multiple plates, i.e., longer-lived astronomical objects) isn’t a fair test. If you’re studying brief, one-time glints, a catalog designed to exclude such things won’t be a good reference.
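The sample-size argument in the list above can be sketched in a few lines. The sketch assumes pure square-root-of-N scaling, meaning the effect and the contamination fraction are the same in the subsample as in the full sample, which is a simplification. The function `scaled_significance` and the 5-sigma input are hypothetical illustrations, not values from either paper.

```python
import math

def scaled_significance(sigma_full, n_full, n_sub):
    """Expected significance in a subsample, assuming sigma scales as sqrt(N)."""
    return sigma_full * math.sqrt(n_sub / n_full)

N_FULL = 107_000          # approximate size of the large sample (from the text)
SUBSETS = (5_399, 4_866)  # filtered sample sizes quoted in the text

for n_sub in SUBSETS:
    factor = math.sqrt(n_sub / N_FULL)
    print(f"N = {n_sub}: about {factor:.2f} of the full-sample significance is retained")

# Hypothetical illustration only: what a 5-sigma full-sample effect would become.
print(f"{scaled_significance(5.0, N_FULL, 5_399):.2f} sigma expected in the 5,399-object subset")
```

Under these assumptions only about a fifth of the full-sample significance survives the cut, so a null result in the small sample is weak evidence against the effect. If filtering also changed the purity of the sample, the scaling would differ, which is why an explicit power analysis is the more careful check.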

Why this matters:

- It challenges the old view that most one-off points of light on old plates are just defects. Instead, the evidence suggests that at least some are real reflections from objects near Earth—possibly a population that was largely overlooked.

- It shows how important it is to pick the right dataset and method for the question. If you use the wrong tools or cut your data too much, you can miss real signals.

Implications and potential impact

In simple terms, this research suggests:

- Astronomers may have missed a whole class of near-Earth reflective objects in historical sky photos. Today, similar glints could be mixed in with reflections from satellites and space debris, making them hard to separate.

- To study short, rare events, it’s better to use large, carefully modeled datasets and look for patterns, rather than trying to perfectly clean the data by removing anything suspicious. Over-filtering can throw away the very signals you want to find.

- Future work will likely use machine learning to reduce false positives while keeping real events, and will pay close attention to accurate timing and sky geometry.

- These methods could improve how we analyze both historical and modern sky surveys, helping us tell apart natural phenomena, human-made objects, and potentially other unusual sources of light.

Overall, the authors argue that their main conclusions—especially the Earth’s shadow deficit pointing to sunlight reflections—remain solid, and that careful, large-scale statistical approaches are the right way to study brief, mysterious flashes in the sky.

Knowledge Gaps

Unresolved knowledge gaps, limitations, and open questions

The paper clarifies points of contention with Watters et al. (2026) but leaves several concrete issues unresolved. Future work could address the following gaps:

- Dataset provenance and reproducibility:

- Public, versioned release of the ~107,000-transient catalog with complete per-object metadata (plate ID, exact UTC time, exposure length, filter/emulsion, plate center and WCS, quality flags, deduplication logic), the survey mask/coverage model, and analysis code to allow independent replication.

- Transparent documentation of the full filtering pipeline from the 298,165-object intermediate set to ~107,000 objects, including completeness and purity estimates at each stage and the rationale for removing the southern-hemisphere subset.

- Sample purity and contamination rates:

- A rigorously labelled, expert-validated ground-truth subset (e.g., stratified by plate, date, declination, and radius from plate center) to quantify false-positive/false-negative rates and their dependence on plate position, scanning batch, and morphology.

- Quantitative separation of plate/scan artefacts, cosmic-ray hits, meteors, variable stars, and true point-like flashes using morphometrics and supervised models; report confusion matrices and contamination fractions with uncertainties.

- Systematic assessment of edge-of-plate and vignetting effects on transient detection and artefact rates; provide correction or masking strategies and sensitivity of results to radius cuts.

- Uncertainty quantification and power:

- End-to-end uncertainty propagation for all derived quantities (e.g., sky coverage, shadow fraction, solid-angle weights, per-plate rates, positional uncertainties) and use of bootstrapping/Monte Carlo to derive confidence intervals for effect sizes.

- Explicit power analyses for key tests (Earth-shadow deficit, temporal correlations) to predefine minimally required sample sizes and to interpret null results or weak effects.

- Earth-shadow deficit robustness:

- Detailed, published sky-coverage model that explicitly accounts for:

- Moon avoidance and lunar phase (fields near the antisolar point are often avoided near full Moon).

- Seasonal and nightly scheduling biases (e.g., meridian targeting, weather, instrument downtime).

- Plate overlap geometry and nonuniform exposure times.

- Sensitivity analyses for:

- Different plate-center radius cuts (e.g., 1.5°, 2°, 2.5°, 3°), cell sizes, and weighting schemes.

- Alternative control samples and null models (randomized times, plate shuffles, stratified by plate/emulsion).

- Hierarchical models with plate-level random effects to control for clustering and per-plate heterogeneity.

- “Look-elsewhere” correction: if the altitude of ~42,000 km was selected by scanning over radii, adjust significance for the trials factor; publish the search grid and the full altitude–significance curve.

- Independent replication on other plate archives (e.g., POSS-II, DSS, DASCH, ESO/SRC) to test consistency across instruments, emulsions, and scanning pipelines.

- Physical interpretation and predictive tests:

- Forward physical models of glints from reflective objects near GEO (size, BRDF, rotation states, phase-angle dependence, illumination geometry, glint duration vs exposure time) to predict:

- Sky distribution (ecliptic latitude/longitude dependence) beyond the umbral deficit.

- Time-of-night and seasonal patterns.

- Brightness and PSF shape distributions.

- Confront predictions with observations (e.g., excess near opposition/ecliptic, magnitude distributions, plate-to-plate rates).

- Explore alternative natural/terrestrial origins (meteors, aircraft/balloons, lightning, ground reflections) with targeted tests:

- Correlation with air-traffic corridors, meteor showers, and geomagnetic/atmospheric events.

- Morphological/trajectory diagnostics to distinguish meteors and cosmic rays from stellar PSFs.

- Temporal correlations with nuclear tests and UAP reports:

- Pre-registered analysis plan specifying window widths (e.g., ±1 day), metrics (presence/absence vs counts), and multiple-comparisons control across window sizes, event types, and lag structures.

- Models that jointly adjust for seasonality, observation-night availability, lunar phase, weather/seeing, exposure length, emulsion/filter, and long-term trends (e.g., Poisson GLMs with plate- and date-level covariates; time-series models with autocorrelation).

- Treatment of reporting biases in UAP datasets (heterogeneous completeness over time/geography); incorporate an observation model or instrumental variables.

- Robustness checks using alternative nuclear-test datasets, different lag structures, and placebo tests (e.g., shifted windows) to assess spurious associations.

- Time reconstruction and plate assignment for datasets lacking timestamps:

- A validated, quantitative method (with cos(Dec) correction and full WCS handling) for assigning objects to plates and times, including:

- Simulated misassignment rates as a function of declination and plate overlap.

- Propagation of assignment uncertainty into downstream tests (shadow deficit, temporal correlations).

- Benchmarks against the 107k dataset with known times to quantify bias introduced by reconstruction methods.

- Independence and clustering:

- Explicit modeling of within-plate clustering (groupings) and repeated detections; use plate-level random effects or cluster-robust inference so that significance is not inflated by non-independence.

- Investigation of whether clustered events have distinct morphometrics or temporal/spatial signatures.

- Completeness and selection-function characterization:

- Injection–recovery tests (inject synthetic point-like flashes with realistic PSFs and backgrounds) across plates to map detection efficiency versus magnitude, position, and observing conditions.

- Publish the resulting selection function and use it to correct rate estimates and spatial tests.

- Additional sanity checks and diagnostics:

- Tests for anisotropies unrelated to the Earth’s shadow (e.g., RA/Dec stripes from scanning, plate-grid patterns).

- Cross-matching with known (post-1957) satellite glint catalogs on later plates to validate the glint-detection pipeline and expected signatures.

- Cross-archive replication of key results by independent teams using shared code and data, ideally under a registered-report framework.

These actions would allow the community to independently verify the Earth-shadow deficit, clarify the nature of the short-lived flashes, and assess the claimed temporal associations with external events under rigorously controlled conditions.
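As one illustration of the uncertainty and clustering items above, the sketch below computes an observed-to-expected ratio for in-shadow detections and a percentile bootstrap interval that resamples whole plates, so within-plate clustering is carried into the interval. Everything here is an assumption for illustration: the per-plate tuple layout, the function names `deficit_ratio` and `bootstrap_ci`, and the synthetic plates with an injected deficit. It is not the method of either paper.

```python
import random

def deficit_ratio(plates):
    """Observed / expected number of in-shadow detections, summed over plates.

    Each plate is a tuple (n_total, n_in_shadow, expected_shadow_fraction).
    """
    observed = sum(n_in for _, n_in, _ in plates)
    expected = sum(n_tot * frac for n_tot, _, frac in plates)
    return observed / expected

def bootstrap_ci(plates, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval, resampling whole plates with replacement."""
    rng = random.Random(seed)
    stats = []
    for _ in range(n_boot):
        sample = rng.choices(plates, k=len(plates))
        stats.append(deficit_ratio(sample))
    stats.sort()
    lo = stats[int((alpha / 2) * n_boot)]
    hi = stats[int((1 - alpha / 2) * n_boot) - 1]
    return lo, hi

# Synthetic, hypothetical plates: in-shadow counts drawn at 60% of expectation.
rng = random.Random(42)
plates = []
for _ in range(200):
    n_total = rng.randint(50, 500)
    frac = rng.uniform(0.005, 0.02)  # assumed fraction of the plate area in shadow
    n_in = sum(rng.random() < 0.6 * frac for _ in range(n_total))
    plates.append((n_total, n_in, frac))

ratio = deficit_ratio(plates)
lo, hi = bootstrap_ci(plates)
print(f"observed/expected = {ratio:.2f}, 95% bootstrap interval = [{lo:.2f}, {hi:.2f}]")
```

Resampling plates rather than individual detections is a deliberate choice: if detections cluster within plates, treating them as independent would make the interval too narrow.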

Practical Applications

Immediate Applications

Below are actionable use cases that can be deployed now, drawn directly from the paper’s findings, methods, and critiques. Each item names relevant sectors, potential tools/products/workflows, and key assumptions or dependencies.

- Earth-shadow geometry filters for transient pipelines

- Sector: astronomy, software, space situational awareness (SSA)

- Application: Integrate Earth’s umbra/penumbra geometry into transient detection pipelines to down-weight or classify likely solar-reflection glints versus astrophysical transients; use cos(Dec)-corrected horizontal separation in all plate/geometric calculations.

- Tools/workflows: A lightweight “Earth-Shadow Filter” module for ZTF/Pan-STARRS/LSST pipelines; a geometry library that enforces cos(Dec) corrections and plate size/overlap constraints; pre- and post-detection filters to estimate reflection likelihood based on shadow coverage at observation time.

- Assumptions/dependencies: Accurate time and date metadata per exposure; robust plate center positions; correct survey sky-coverage modeling; assumes a material contribution of solar reflections to transient catalogs.

- Statistical power and uncertainty auditing for ensemble-level inference

- Sector: academia (astronomy, data science), publishing

- Application: Adopt ensemble-level inference practices with explicit error bars and uncertainty propagation; mandate power analyses and sensitivity to bin size/cell size before claiming null results.

- Tools/workflows: “PowerCheck” and “UncertaintyProp” add-ons for astro-statistics notebooks; a journal submission checklist focused on ensemble inference versus object-level validation.

- Assumptions/dependencies: Access to full sample sizes and variance sources; community uptake; does not replace object verification, but complements it when testing population-level effects.

- Monte Carlo sky coverage and plate-edge modeling

- Sector: astronomy, software

- Application: Use Monte Carlo modeling of survey geometry and plate overlaps to quantify edge effects and control for spurious excesses beyond ~2–2.5 degrees from plate center.

- Tools/workflows: “SkyCoverageMC” library with plate mosaics and overlap masks; histogram diagnostics that enforce plate-valid radius and cos(Dec) corrections.

- Assumptions/dependencies: Accurate plate footprints and overlap maps; requires a reproducible geometry model per survey.

- Archival plate time/date validation toolkit

- Sector: astronomy archives, software

- Application: Build a time/date reconciliation workflow for historical plates so transient analyses aren’t forced to infer timestamps via nearest-plate heuristics; emphasize cos(Dec)-corrected matching and plate overlap handling.

- Tools/workflows: “TimeCert” reconciliation scripts integrated with VizieR/POSS-I metadata; flagging of uncertain assignments; audit reports for downstream analyses.

- Assumptions/dependencies: Availability and digitization of plate logs; consistent metadata across archives.

- Transient classification prototypes using supervised learning

- Sector: astronomy, software, education

- Application: Prototype ML classifiers trained on the ~107,000 labeled-by-context transient dataset to triage likely glints, artifacts, and genuine transients; prioritize ensemble-level features over strict object validation for population studies.

- Tools/workflows: Open-source training scripts and baseline models; curated feature sets (position relative to umbra, plate radius, local artifact rates).

- Assumptions/dependencies: Sufficient labeled examples or weak labels; realistic contamination modeling; careful validation to avoid overfitting to survey-specific quirks.

- Observatory scheduling heuristics to manage glints

- Sector: astronomy operations, SSA

- Application: Schedule observations within Earth’s umbra to reduce solar-reflection false positives when the science case demands astrophysical transients; schedule outside of the umbra when mapping reflective near-Earth objects is desirable.

- Tools/workflows: “ShadowAwareScheduler” that ingests predicted umbra footprints and target lists; configurable policies per science program.

- Assumptions/dependencies: Accurate shadow predictions; acceptance of trade-offs (fewer glints versus possible changes in sky brightness/observing constraints).

- Methodological training and curriculum updates

- Sector: education, academia

- Application: Integrate modules on ensemble inference versus object-level validation, statistical power scaling (∝ √N), and error propagation in survey astronomy and data science courses.

- Tools/workflows: Teaching notebooks, case studies (VASCO, POSS-I), assessment rubrics.

- Assumptions/dependencies: Instructor adoption; access to open datasets and reproducible code.

- Replicable correlation analysis workflows for event windows

- Sector: academia, policy-adjacent research (risk monitoring)

- Application: Provide reproducible workflows for analyzing transient counts within “event windows” (e.g., ±1 day of nuclear test dates), with one-tailed tests, Poisson GLMs, and non-parametric checks.

- Tools/workflows: “EventOverlap Analyzer” notebooks; standardized windowing and control selection; sensitivity analyses to observation-night confounding.

- Assumptions/dependencies: Verified observation calendars; strictly documented statistical choices; result interpretation remains cautious given historical context.
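The event-window workflow in the last item can be sketched with a simple non-parametric check. This is a one-sided permutation test restricted to observed nights, not the Poisson GLM mentioned above. The dates, counts, and function names (`in_event_window`, `permutation_p_value`) are hypothetical, and the synthetic data has an excess injected on purpose.

```python
import random
from datetime import date, timedelta

def in_event_window(night, event_dates, half_width_days=1):
    """True if the night falls within +/- half_width_days of any event date."""
    return any(abs((night - ev).days) <= half_width_days for ev in event_dates)

def permutation_p_value(counts, flags, n_perm=5000, seed=0):
    """One-sided p-value for mean(count | in window) > mean(count | outside)."""
    def diff(labels):
        inside = [c for c, f in zip(counts, labels) if f]
        outside = [c for c, f in zip(counts, labels) if not f]
        return sum(inside) / len(inside) - sum(outside) / len(outside)

    rng = random.Random(seed)
    observed = diff(flags)
    labels = list(flags)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(labels)  # reassign window labels among observed nights only
        if diff(labels) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)

# Hypothetical observing run and event dates; counts are synthetic.
start = date(1950, 1, 1)
nights = [start + timedelta(days=i) for i in range(60)]
events = [date(1950, 1, 10), date(1950, 2, 5)]
flags = [in_event_window(n, events) for n in nights]
rng = random.Random(1)
counts = [rng.randint(0, 5) + (2 if f else 0) for f in flags]  # injected excess

p = permutation_p_value(counts, flags)
print(f"{sum(flags)} window nights out of {len(nights)}, one-sided p = {p:.4f}")
```

Shuffling labels only among nights that were actually observed keeps the comparison from being driven by when the telescope was operating. A fuller analysis would also adjust for seasonality, lunar phase, and exposure length, as the items above note.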

Long-Term Applications

These use cases require further research, scaling, development, or standardization before reliable deployment.

- Dedicated glint-mapping survey and catalog for near-Earth reflective objects

- Sector: SSA, aerospace, astronomy

- Application: Launch a coordinated effort to detect, localize, and statistically characterize short-lived optical glints from non-cooperative objects (e.g., debris, unknowns) in MEO/GEO and beyond; publish a “GlintMap” catalog.

- Tools/products: A persistent survey program with real-time shadow modeling, high-cadence imaging, and multi-epoch follow-up; cross-sensor fusion (optical, radar).

- Assumptions/dependencies: Modern instrumentation and scheduling; reliable orbit inference from sparse glints; data-sharing across agencies; clear privacy and security protocols.

- Robust ML pipelines for transient detection that generalize across instruments

- Sector: astronomy (LSST-era), software

- Application: Develop ML models robust to plate/CCD differences, incorporating geometry-aware features (umbra proximity, plate center distance) and statistically principled thresholds to reduce false positives at scale.

- Tools/products: Production-ready classifiers integrated into LSST/ZTF pipelines; benchmarking datasets; standardized metrics for contamination and recall.

- Assumptions/dependencies: Large, labeled datasets across multiple surveys; community validation; active maintenance and bias audits.

- Standardized archival metadata and time certification across observatories

- Sector: archives, policy, academia

- Application: Establish a cross-observatory standard for time/date metadata (“TimeCert”) for historical and modern exposures; mandate minimal metadata quality for population-level studies.

- Tools/products: A metadata schema and validation service; funding for archival digitization; governance via professional societies.

- Assumptions/dependencies: Institutional buy-in and resources; alignment with existing systems (VizieR, CDS); handling of legacy inconsistencies.

- Survey scheduling optimization to control glint contamination at scale

- Sector: astronomy operations

- Application: Integrate shadow-aware scheduling within large survey management (e.g., LSST) to balance transient science yield with contamination control; produce per-field contamination forecasts.

- Tools/products: “ShadowOp” optimization engine; policy guidelines for program prioritization.

- Assumptions/dependencies: Complex trade-offs with other constraints (weather, cadence, target visibility); requires validation of contamination models.

- Nuclear-test and anthropogenic-event monitoring via astronomical proxies

- Sector: policy, risk monitoring, academia

- Application: Explore whether transient counts and patterns can serve as weak, indirect indicators of anthropogenic events (e.g., tests) when paired with rigorous confounder control and independent data sources.

- Tools/products: A vetted “EventSignal” platform employing GLMs and causal inference frameworks; clearly stated limitations and ethics guidelines.

- Assumptions/dependencies: Strong validation against independent datasets; strict handling of observation scheduling confounders; recognition that correlations do not prove causation; policy caution essential.

- Technosignature search programs targeting specular reflection alignments

- Sector: academia (SETI), astronomy

- Application: Pursue specialized searches for aligned groupings of short-lived optical flashes consistent with specular reflections from non-natural objects; refine physical models and detection criteria.

- Tools/products: “Specular Technosignature Search (STS)” protocol, simulator for reflection geometry, anomaly scoring pipeline.

- Assumptions/dependencies: Comprehensive artifact modeling; reproducible statistical thresholds; community review and oversight to avoid premature claims.

- Journal and funder guidelines for ensemble-level statistical studies

- Sector: academia, publishing, funding

- Application: Codify best practices for ensemble inference (power analysis, uncertainty propagation, geometry modeling, metadata verification), distinguishing it from object validation workflows.

- Tools/products: “Ensemble Inference Checklist” adopted by journals and grant panels; reviewer training materials.

- Assumptions/dependencies: Community consensus; compatibility with diverse subfields; iterative updates as methods evolve.

- Open educational programs on survey inference and archival analytics

- Sector: education

- Application: Launch MOOCs and workshops teaching the interplay of survey geometry, statistical power, and metadata integrity using VASCO/POSS-I case studies.

- Tools/products: Courseware with real datasets, lab exercises, and assessment frameworks.

- Assumptions/dependencies: Sustained institutional support; accessible computing resources for students.

- Multi-epoch orbit inference from glint-only detections

- Sector: SSA, aerospace, astronomy

- Application: Develop methods to infer orbits from sparse, glint-only observations by coupling shadow-aware timing with multi-night detections and Bayesian tracking.

- Tools/products: “GlintTrack” inference engine; data fusion with cataloged satellites and radar returns.

- Assumptions/dependencies: Sufficient recurrence of glints; reliable timing and photometry; tight integration with external sensors; method validation across known objects.

Glossary

- Aggressively filtered sample: A dataset narrowed using stringent criteria to minimize false positives, often at the expense of completeness. "The aggressively filtered sample of 5,399 transients presented in Solano et al. (2022) was constructed for a different scientific purpose"

- Catalogue cross-matching: Comparing positions of sources across multiple astronomical catalogues to identify counterparts or duplicates. "a strict requirement was imposed that no cross-match with any other astronomical catalogue could exist across the electromagnetic spectrum"

- cos(Dec) factor: The cosine of declination used to correct horizontal (right ascension) separations for geometric consistency on the celestial sphere. "depends on the inclusion of the cos(Dec) factor to ensure geometric consistency."

- Declination (Dec): The angular coordinate measuring an object's position north or south of the celestial equator. "Since nearly half the plates lie at Dec. "

- Earth-shadow deficit: A statistically observed shortfall of events within the Earth’s shadow region compared to expectation. "seriously statistically underpowered for testing the reported Earth-shadow deficit."

- Earth’s umbra: The fully shadowed region behind Earth where direct sunlight is completely blocked. "a pronounced deficit of these transients within the Earth’s umbra at an altitude of approximately 42,000 km"

- Ensemble-level statistical inference: Drawing conclusions about a population from aggregate statistics without verifying each item individually. "conflates object-level validation with ensemble-level statistical inference"

- Error propagation: Quantifying how measurement uncertainties affect derived quantities in an analysis. "do not include uncertainty estimates or error propagation"

- Generalised linear model (GLM): A statistical framework extending linear models to non-normal response distributions (e.g., Poisson). "A Poisson generalised linear model analysis further revealed significantly more transients"

- Hemispherical coverage: The expected or modeled area coverage over half of the celestial sphere used as a baseline for comparisons. "when compared to the theoretical hemispherical coverage"

- Horizontal separation: The angular difference in right ascension from the plate center, typically requiring a cos(Dec) correction. "the horizontal separation calculation used for plate assignment and time reconstruction"

- Isotropic expectation: The assumption that events are uniformly distributed across directions on the sky. "excess relative to an isotropic expectation"

- Kinematic parameters: Features describing motion-related properties of sources (e.g., apparent motion vectors). "based on morphometric and kinematic parameters."

- MAPS catalogue: A catalogue of sources persistently detected across multiple plates and bandpasses, used for comparison. "objects drawn from the MAPS catalogue"

- Monte Carlo simulation: A computational method using random sampling to approximate complex geometric or statistical quantities. "Taking into account plate overlap among POSS-I plates and the survey geometry through a Monte Carlo simulation"

- Morphometric parameters: Shape and structural descriptors (e.g., size, elongation) used to classify sources. "based on morphometric and kinematic parameters."

- Null results: Findings that show no statistically significant effect or deviation from expectations. "limiting the interpretability of the claimed null results."

- Object-level validation: Verifying the authenticity or physical nature of individual detections. "object-level validation, as used in the construction of machine-learning training datasets"

- Palomar Observatory Sky Survey (POSS-I): A mid-20th-century wide-field photographic sky survey conducted at Palomar Observatory. "POSS-I observation dates"

- Plate assignment: Associating a transient with the correct photographic plate (and thus date/time) based on positional geometry. "used for plate assignment and time reconstruction"

- Plate edge artifacts: Spurious signals near the edges of photographic plates arising from imaging or scanning processes. "to avoid plate edge artifacts"

- Plate emulsion defects: Flaws in the photographic emulsion that can mimic astronomical sources. "plate emulsion defects"

- Sky coverage: The portion of the sky observed or included in a dataset or survey. "when the survey sky coverage is taken into account"

- Solid angle: The measure of the size of a sky region (e.g., in square degrees or steradians) used in geometric analyses. "plate-by-plate solid-angle modelling"

- Spearman’s rho: A nonparametric rank correlation coefficient assessing monotonic relationships. "$\rho_{\mathrm{Spearman}} = 0.13$, "

- Statistical power: The probability that a test will detect an effect of a given size; increases with sample size and decreases with noise. "effects of reduced statistical power"

- Technosignature reflections: Optical glints or signals potentially produced by artificial objects indicative of technology. "alignments of these objects, indicative of technosignature reflections"

- Temporal correlations: Associations between events based on their timing or co-occurrence in time. "the reported temporal correlations between feature detections and nuclear tests or UAP sighting reports"

- Time reconstruction: Inferring observation times and dates for events using plate geometry and positional matching. "used for plate assignment and time reconstruction"

- Transients: Short-duration astronomical events appearing briefly in a single exposure. "107,000 transients"

- UAP (Unidentified Aerial Phenomena): Reports of anomalous aerial observations of uncertain origin. "UAP sighting reports"

- Underpowered test: A statistical analysis with insufficient sample size or sensitivity to reliably detect effects. "the statistically expected outcome of an underpowered test."

- Vanishing stars: Objects that appear in historical observations but cannot be found in subsequent catalogues. "search for genuinely vanishing stars—objects that disappear without leaving any trace behind."

- VASCO: The Vanishing and Appearing Sources during a Century of Observations project investigating historical plate transients. "the VASCO transients"
