- The paper introduces a novel approach that integrates partial causal graphs into Causal Foundation Models (CFMs) using learnable attention biases.

- It demonstrates significant improvements in prediction accuracy and reduced uncertainty across various semi-synthetic benchmarks.

- The methodology enables CFMs to leverage domain expertise for robust all-in-one causal inference, bridging bespoke and general-purpose models.

Overview of "Use What You Know: Causal Foundation Models with Partial Graphs" (2602.14972)

The paper "Use What You Know: Causal Foundation Models with Partial Graphs" (2602.14972) addresses the challenge of integrating domain knowledge into Causal Foundation Models (CFMs). Traditional causal inference estimators are each designed for a particular set of assumptions, whereas CFMs offer a unified approach by amortizing causal discovery and inference in a single step. However, current CFMs cannot incorporate domain knowledge, which can lead to suboptimal predictions. This paper introduces methods for conditioning CFMs on partial causal information and improves their performance by injecting learnable biases into the attention mechanism.

Methodological Innovations

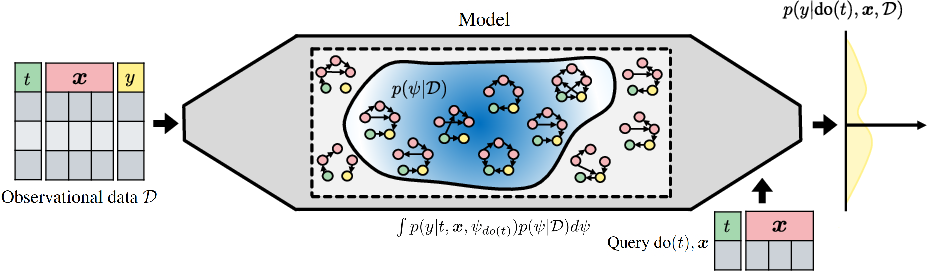

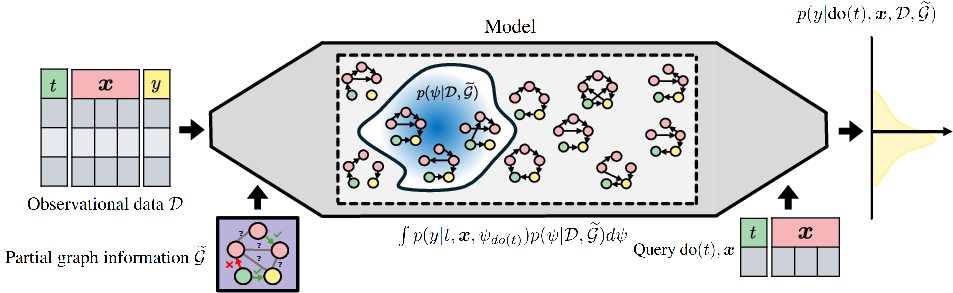

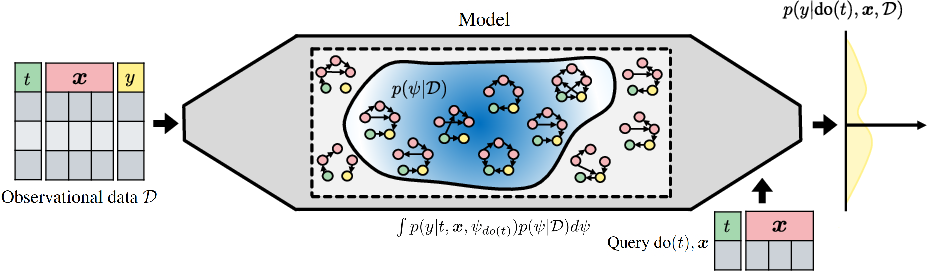

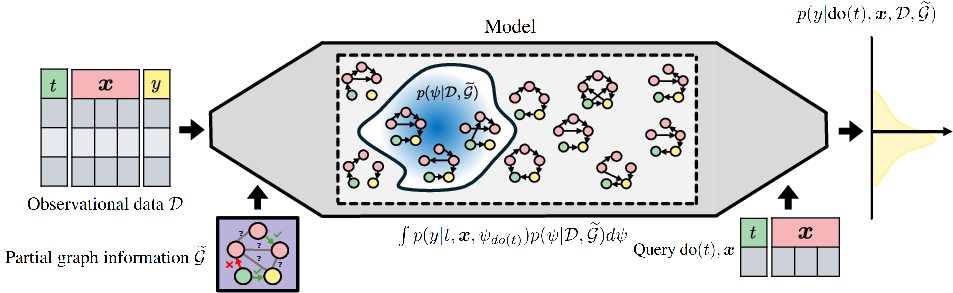

The authors propose a methodology for conditioning CFMs on whatever causal information is available, such as causal graphs or ancestral relations. The approach remains useful when the complete graph is unknown: even partial causal information narrows the set of plausible causal structures, which in turn sharpens predictions. Figure 1 illustrates this, showing that the model achieves a more concentrated posterior predictive distribution with partial graph information than with observational data alone.

Figure 1: CFMs with partial graph information significantly reduce posterior uncertainty, enabling more precise predictions.
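To make the "narrowing down" argument concrete, the following is a minimal sketch, not taken from the paper, that enumerates every DAG on three labelled variables and counts how many remain once a few edges are known. The function names and the encoding of the partial graph (`1` = edge known present, `0` = edge known absent, `-1` = unknown) are assumptions made for illustration only.

```python
from itertools import product

import numpy as np


def is_dag(adj: np.ndarray) -> bool:
    """Return True if the directed graph with adjacency matrix `adj` is acyclic."""
    n = adj.shape[0]
    # trace(A^k) > 0 means a closed walk of length k exists, i.e. a cycle.
    power = np.eye(n, dtype=int)
    for _ in range(n):
        power = power @ adj
        if np.trace(power) > 0:
            return False
    return True


def enumerate_dags(n: int) -> list:
    """Enumerate every DAG on n labelled nodes (only feasible for small n)."""
    off_diag = [(i, j) for i in range(n) for j in range(n) if i != j]
    dags = []
    for bits in product([0, 1], repeat=len(off_diag)):
        adj = np.zeros((n, n), dtype=int)
        for (i, j), b in zip(off_diag, bits):
            adj[i, j] = b
        if is_dag(adj):
            dags.append(adj)
    return dags


def consistent(adj: np.ndarray, partial: np.ndarray) -> bool:
    """Check `adj` against a partial graph (hypothetical encoding: 1 / 0 / -1)."""
    known = partial != -1
    return bool(np.all(adj[known] == partial[known]))


all_dags = enumerate_dags(3)

# Partial knowledge: only the edge 0 -> 1 is known to exist.
partial_one = -np.ones((3, 3), dtype=int)
partial_one[0, 1] = 1
remaining_one = [g for g in all_dags if consistent(g, partial_one)]

# More knowledge: 0 -> 1 and 1 -> 2 are both known to exist.
partial_two = partial_one.copy()
partial_two[1, 2] = 1
remaining_two = [g for g in all_dags if consistent(g, partial_two)]

print(len(all_dags), len(remaining_one), len(remaining_two))  # 25 8 2
```

With no graph knowledge there are 25 candidate structures; knowing a single edge leaves 8, and knowing two edges leaves 2. A model that averages predictions over candidate structures therefore has far fewer hypotheses to spread its uncertainty across, which is the intuition behind the more concentrated posterior in Figure 1.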

The paper explores multiple strategies for incorporating such information. Among those tested, learnable attention biases were the most effective in both full- and partial-information scenarios, allowing CFMs to perform comparably with specialized models optimized for specific causal structures.
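The summary does not spell out how the attention biases are parameterized, so the following is only a sketch of one plausible form, under stated assumptions: attention runs over one token per variable, each ordered pair of variables is in one of three states (unknown, known edge, known no edge), and a learned scalar per state is added to the pre-softmax attention logits. The names `graph_biased_attention`, `edge_state`, and `bias_table`, as well as the state coding, are hypothetical and not the paper's API.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable softmax along `axis`."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def graph_biased_attention(q, k, v, edge_state, bias_table):
    """Single-head attention over variable tokens with an additive graph bias.

    q, k, v:     (n_vars, d) arrays of queries, keys, and values
    edge_state:  (n_vars, n_vars) int array indexing into `bias_table`
                 (assumed coding: 0 = unknown, 1 = known edge, 2 = known no edge)
    bias_table:  (3,) array of scalars; in a real model these would be learned
    """
    d = q.shape[-1]
    logits = q @ k.T / np.sqrt(d)            # standard scaled dot-product scores
    logits = logits + bias_table[edge_state]  # look up one bias per variable pair
    weights = softmax(logits, axis=-1)
    return weights @ v, weights


rng = np.random.default_rng(0)
n_vars, d = 3, 4
q, k, v = (rng.normal(size=(n_vars, d)) for _ in range(3))

# Illustrative (not learned) values: no shift when unknown, boost known edges,
# suppress known non-edges.
bias_table = np.array([0.0, 2.0, -2.0])

# No graph knowledge: every pair is "unknown", so attention is unchanged.
unknown = np.zeros((n_vars, n_vars), dtype=int)
_, w_plain = graph_biased_attention(q, k, v, unknown, bias_table)

# Partial knowledge: variable 0 is a known parent of variable 1,
# so token 1 is biased toward attending to token 0.
partial = unknown.copy()
partial[1, 0] = 1
out, w_partial = graph_biased_attention(q, k, v, partial, bias_table)

print(w_plain[1, 0], w_partial[1, 0])
```

Because the "unknown" state has its own bias entry, the same layer handles fully specified graphs, partial graphs, and no graph at all, which fits the summary's claim that the approach works in both full- and partial-information settings.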

Numerical Results

The experiments show that conditioning CFMs on partial causal information improves their performance. The gains hold across a range of semi-synthetic causal benchmarks and complex simulation environments, where graph-conditioned CFMs match or exceed models tailored to particular causal structures.

Implications and Future Directions

This work addresses a key obstacle on the path to all-in-one CFMs: combining domain expertise with observational data to answer causal queries. By making use of whatever causal information is available, the proposed method makes CFMs more flexible and broadly applicable. The paper suggests that future research could expand the types of causal information CFMs can use, for example by conditioning on aspects of functional mechanisms or noise distributions.

By tackling the challenge of conditioning with partial information, this research lays the groundwork for the next generation of CFMs. These models promise to provide robust all-in-one causal effect estimation, further blurring the lines between bespoke model design and general-purpose application in diverse causal inference scenarios.