Information-Theoretic Approach to Financial Market Modelling

Abstract: The paper treats the financial market as a communication system, using four information-theoretic assumptions to derive an idealized model with only one parameter. State variables are scalar stationary diffusions. The model minimizes the surprisal of the market and the Kullback-Leibler divergence between the benchmark-neutral pricing measure and the real-world probability measure. The state variables, their sums, and the growth optimal portfolio of the stocks evolve as squared radial Ornstein-Uhlenbeck processes in respective activity times.

Explain it Like I'm 14

What is this paper about?

This paper looks at financial markets in a surprising way: as a communication system. Just like a phone network sends messages efficiently, the market “sends” information through prices and trades. The author uses ideas from information theory (how we measure and minimize surprise in messages) to build a simple, realistic model of how markets move. The goal is a model that matches what we see in real markets and makes pricing and hedging financial products more practical.

What questions is the paper trying to answer?

- How can we model market behavior so it reflects real-world facts like changing volatility, sudden spikes, and long-run growth?

- Can we design a model that “uses” the least possible extra information, making it efficient and simple?

- How can we price financial products (like options) without relying on assumptions that don’t hold perfectly in real markets?

- Is there a way to base pricing on a portfolio that actually exists and grows fastest over time?

How do the researchers approach the problem?

Seeing markets as a communication system

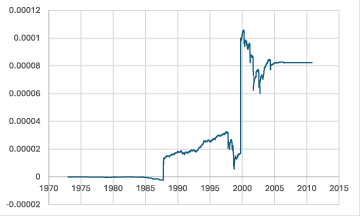

Think of every trade as sending a message about price. The market’s “message speed” changes over time: during a crisis, lots of messages (trades) happen very fast; on calm days, fewer messages happen. The paper treats this message speed as “market activity,” and its running total as “market time.” The key idea is that prices evolve in market time, not strictly in clock time, which helps explain sudden volatility spikes.

Four core assumptions

The model is built on four simple, powerful assumptions:

- Independent building blocks in market time (A1): The market is built from “factors” (think: simple portfolios that act like building blocks). Each factor’s randomness is stable over time when seen in market time, and the factors move independently.

- A best long-term growth portfolio exists (A2): There is a “growth optimal portfolio” (GOP), also called the Kelly portfolio. It’s like the best long-term bet that grows the fastest on average. This ensures the market isn’t broken by wild arbitrage tricks.

- Prices reveal as little extra surprise as possible (A3): The market organizes itself to minimize expected “surprisal” (surprise) in the factors’ probabilities. In everyday terms: prices carry the least extra information beyond what’s needed, which keeps the system efficient.

- Pricing should stay close to reality (A4): When we switch from real-world probabilities to a pricing probability (used to calculate fair prices), we do it in the way that changes the least possible information. This is measured by the Kullback-Leibler divergence, a number that tells us how different two probability descriptions are.
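To make assumption A4 concrete, here is a minimal sketch of the Kullback-Leibler divergence for discrete distributions. The three-outcome probabilities and the names `real_world`, `candidate_a`, and `candidate_b` are hypothetical illustrations, not values from the paper, and the direction of the divergence (pricing measure relative to real-world measure) is an assumption made for this example.

```python
import math

def kl_divergence(p, q):
    """Kullback-Leibler divergence D(p || q) in nats for discrete distributions."""
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue  # the term 0 * log(0 / q) is taken as 0
        if qi == 0.0:
            return math.inf  # p puts mass where q has none
        total += pi * math.log(pi / qi)
    return total

# Hypothetical real-world probabilities of three outcomes (not from the paper)
real_world = [0.5, 0.3, 0.2]
# Two hypothetical candidate pricing measures
candidate_a = [0.45, 0.35, 0.20]  # small change of probabilities
candidate_b = [0.20, 0.30, 0.50]  # large change of probabilities

kl_a = kl_divergence(candidate_a, real_world)
kl_b = kl_divergence(candidate_b, real_world)
print(f"KL(candidate_a || real_world) = {kl_a:.4f}")
print(f"KL(candidate_b || real_world) = {kl_b:.4f}")
```

Under A4, a candidate like `candidate_a`, which changes the probabilities less, would be preferred over `candidate_b`.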

Market time vs. calendar time

- Calendar time is your clock or calendar.

- Market time speeds up when trading is intense and slows down when trading is quiet.

- Modeling randomness in market time lets the model produce realistic features like volatility spikes—something that simple calendar-time models struggle to do.
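The effect of running randomness in market time can be sketched with a toy simulation. Everything here is an assumption made for illustration: the activity profile (a calm baseline with one burst), the step count, and the use of a plain Brownian motion rather than the paper's factor dynamics.

```python
import numpy as np

rng = np.random.default_rng(0)

n_steps = 1000
dt = 1.0 / n_steps  # length of one calendar-time step

# Hypothetical market activity: calm baseline with a burst in the middle
activity = np.ones(n_steps)
activity[400:500] = 25.0

# Market time is the running integral of market activity
d_tau = activity * dt
market_time = np.cumsum(d_tau)

# A Brownian motion run in market time: each increment has variance equal
# to the market time that elapsed during the calendar step
increments = rng.normal(0.0, 1.0, n_steps) * np.sqrt(d_tau)
path = np.cumsum(increments)

calm_std = increments[:400].std()
burst_std = increments[400:500].std()
print(f"std of increments in calm period:  {calm_std:.4f}")
print(f"std of increments in burst period: {burst_std:.4f}")
```

The process has constant volatility per unit of market time, yet its calendar-time increments are far more volatile during the burst, which is the mechanism the text describes.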

Building blocks: factors and the benchmark

- Factors: Independent, nonnegative “mini-securities” that together can reproduce stock behavior. They are designed so their volatility is stationary (doesn’t drift over time in market time).

- Benchmark: The GOP of the factors. Think of it as the strongest long-term performer across all factors, with weights that end up being simple and balanced under the idealized model. This benchmark is used as a numeraire (a measuring stick) for pricing in the paper’s method.
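As a rough illustration of what "growth optimal" means, the sketch below uses a textbook single-asset setting (one stock following geometric Brownian motion plus a savings account), which is much simpler than the paper's multi-factor market. The values of `mu`, `sigma`, and `r` are hypothetical. In this toy setting the growth rate of a constant-fraction strategy is maximized at the Kelly fraction `(mu - r) / sigma**2`.

```python
import numpy as np

def growth_rate(fraction, mu, sigma, r):
    """Long-run growth rate of holding a constant fraction in a GBM stock."""
    return r + fraction * (mu - r) - 0.5 * (fraction * sigma) ** 2

# Hypothetical parameters: expected return, volatility, interest rate
mu, sigma, r = 0.08, 0.20, 0.02

# Closed-form Kelly fraction for this toy setting
kelly = (mu - r) / sigma**2

# Numerical check: search a grid of fractions for the highest growth rate
grid = np.linspace(0.0, 3.0, 3001)
best = grid[np.argmax(growth_rate(grid, mu, sigma, r))]

print(f"closed-form Kelly fraction: {kelly:.3f}")
print(f"grid-search maximizer:      {best:.3f}")
```

Fractions above or below the maximizer both grow more slowly in the long run, which is why the GOP serves as a natural reference portfolio.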

What did they find?

Here are the main results, explained simply:

- Normalized factors follow a special type of random process that stays positive and is pulled toward a typical level. In technical terms, they follow a Cox–Ingersoll–Ross (CIR) process in their own “activity time.” This choice comes from minimizing surprisal.

- The factors themselves, their sums, and the benchmark (the GOP of factors) follow squared radial Ornstein–Uhlenbeck (SROU) processes in their activity times. These processes are handy because they never go negative and have lots of known formulas, which makes calculations easier.

- The model, called the Minimal Market Model (MMM), has only one key parameter in market time: the net risk-adjusted return. That keeps it simple and reduces overfitting.

- Volatility spikes and “rough” behavior appear naturally because market time speeds up during hectic periods. This matches real markets much better than classic smooth models.

- Using the benchmark as the numeraire for pricing (benchmark-neutral pricing) gives minimal, practical prices for products that can be replicated, and it stays close to the real-world probabilities. It also avoids relying on hard-to-estimate drifts that can make traditional risk-neutral pricing unrealistic in this framework.

- The model explains several “stylized facts” observed in finance, such as:

- Volatility that has a stable long-run distribution.

- Returns with heavy tails (like a Student-t distribution), not just thin-tailed normal distributions.

- The leverage effect (volatility tends to rise when prices fall).

- Volatility clustering and roughness.

- A mix of fast and slow volatility changes.

- Scaling and self-similarity in returns.

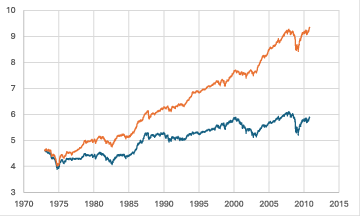

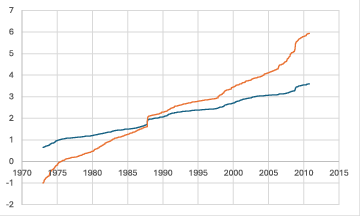

- Long-run growth of world indices with mean reversion.
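The CIR behavior mentioned in the results above can be sketched with a generic simulation. This is a minimal sketch under stated assumptions: the parameters `kappa`, `theta`, and `sigma` are hypothetical and not calibrated to the paper, the time step is read as a step in activity time, and the Euler scheme with full truncation is a common discretization chosen here for simplicity. For a CIR process `dY = kappa*(theta - Y)dt + sigma*sqrt(Y)dW`, the stationary density is a gamma density with shape `2*kappa*theta/sigma**2` and scale `sigma**2/(2*kappa)`.

```python
import numpy as np

def simulate_cir(kappa, theta, sigma, y0, dt, n_steps, rng):
    """Euler scheme with full truncation for a CIR process."""
    y = np.empty(n_steps + 1)
    y[0] = y0
    noise = rng.normal(0.0, 1.0, n_steps)
    for i in range(n_steps):
        y_pos = max(y[i], 0.0)  # truncate inside drift and diffusion terms
        y[i + 1] = (y[i] + kappa * (theta - y_pos) * dt
                    + sigma * np.sqrt(y_pos * dt) * noise[i])
    return np.maximum(y, 0.0)  # report the truncated, nonnegative path

# Hypothetical parameters (not taken from the paper)
kappa, theta, sigma = 1.0, 1.0, 1.0
rng = np.random.default_rng(42)
path = simulate_cir(kappa, theta, sigma, y0=theta, dt=0.01,
                    n_steps=200_000, rng=rng)

# Parameters of the stationary gamma density of the CIR process
gamma_shape = 2.0 * kappa * theta / sigma**2
gamma_scale = sigma**2 / (2.0 * kappa)
stationary_mean = gamma_shape * gamma_scale  # equals theta

sample_mean = path.mean()
print(f"stationary mean (theory): {stationary_mean:.3f}")
print(f"sample mean (simulation): {sample_mean:.3f}")
```

The simulated path stays nonnegative and its long-run average sits near the pull-back level `theta`, matching the "stays positive and is pulled toward a typical level" description.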

Why does it matter?

- Better realism: The model captures important features real markets show every day.

- Simpler, stronger tools: With only one main parameter in market time and well-understood processes, it’s easier to compute prices and hedges.

- Practical pricing: Benchmark-neutral pricing can produce fairer, more robust prices that don’t depend on risky assumptions and are closer to how markets actually behave.

- Enhanced hedging: Because the processes have many explicit formulas, hedging strategies can be very accurate.

Takeaway

By viewing the market as a communication system and applying information theory, the paper derives a clean, practical market model that minimizes surprise and stays close to reality. Its building blocks (factors) and its measuring stick (the benchmark) evolve in market time, naturally producing realistic volatility behavior and matching many empirical facts. The result is the Minimal Market Model, a simple yet powerful framework that helps price and hedge financial products more reliably.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise, actionable list of aspects the paper leaves unresolved or only partially addresses. These identify concrete directions for future research.

- Empirical identifiability of market time: The paper treats market time as “observable” via integrated average trading intensity but does not specify how to measure it across assets, at what sampling frequency, or how to de-noise microstructure effects. A statistically robust, real-time estimator of market time and the associated activities is needed.

- Mapping to calendar time and roughness: While market-time diffusion plus a stochastic time-change is proposed to explain rough volatility and clustering, the paper does not derive conditions on the time change that produce the empirically observed Hurst exponents, nor does it provide calibration or tests confirming roughness in calendar time.

- Factor construction and identifiability: Factors must be tradable self-financing portfolios of stocks, but the paper does not provide a constructive method to extract/estimate these factors from data (e.g., the portfolio weights), resolve non-uniqueness, or ensure numerical stability in discrete time.

- Choice and number of factors ($n$): The dimension of the CIR/SROU dynamics is tied to $n$ via $4/n$. The paper does not provide a criterion for selecting $n$, nor a sensitivity analysis of zero-hitting probabilities, tail behavior, and pricing/hedging accuracy as $n$ varies.

- Independence assumption: Normalized factors are assumed independent. Real markets display strong cross-factor dependence. How to extend surprisal-minimization to allow dependence (e.g., via covariance or copula constraints), and what joint stationary density/dynamics result?

- Stationarity in market time: The framework assumes stationary densities for normalized factors in market time; however, real markets undergo regime shifts. How robust are the results to breaks in stationarity, and how can nonstationarity or structural breaks be modeled within this approach?

- Boundary behavior at zero: The paper assumes instantaneous reflection at zero for factors/normalized factors. The financial interpretation, empirical frequency of boundary hits, and implications for pricing, hedging, and stability (including integrability under BN measure) are not analyzed.

- Surprisal-minimization constraints: The chosen constraints require deeper justification. How sensitive is the solution (gamma stationary density and CIR dynamics with dimension $4/n$) to these specific constraints? Are there alternative constraint sets with better empirical fit?

- Empirical validation of stylized facts: Claims that the model reproduces heavy tails (Student-t with 4 degrees of freedom), leverage, scaling, and long-run mean reversion are not demonstrated. Derivations, simulation studies, and statistical tests are needed to verify these properties quantitatively in calendar time.

- Leverage effect mechanism: The sign and magnitude of return–volatility correlation implied by the 3/2-type volatility structure are not derived. Under what parameterizations (including time-change) does the model reproduce the empirically observed negative leverage effect?

- Multi-timescale volatility: The model uses a single activity parameter per factor. How to incorporate multiple volatility components (fast/slow) within the framework—e.g., nested or hierarchical time changes—and how to calibrate them?

- Benchmark-neutral (BN) measure and KL minimization: The paper asserts that BN pricing minimizes the Kullback-Leibler divergence to the real-world measure, but the optimization domain (over which class of equivalent measures) and conditions for uniqueness/existence are not fully characterized.

- Conditions for BN measure equivalence: The stated corollary requires $n > 2$ and bounded market-time stopping times. It remains unclear whether the BN measure stays equivalent under a time-varying/stochastic net risk-adjusted return, non-semimartingale time changes, or $n \le 2$.

- Role of the net risk-adjusted return: Although the MMM in market time claims only one parameter, the paper does not present a practical, robust estimator for it (or justify when it is unnecessary), nor assess the impact of estimation error on pricing and hedging.

- Practical implementability of NP/BN hedging: Since the NP involves leveraged positions and shorting the savings account, and the benchmark involves dynamic rebalancing, the paper does not quantify hedging error under discrete trading, transaction costs, and funding constraints, nor provide implementable algorithms.

- Transaction costs and frictions: The framework assumes continuous trading, no costs, and full shorting with use of proceeds. Extensions incorporating costs, discrete trading, borrowing constraints, and market impact—and the consequences for BN pricing minimality—are not developed.

- Market completeness and residual risk: With finitely many factors and many claims, markets are generally incomplete. The paper does not quantify residual hedging error, propose variance-minimizing strategies, or derive error bounds under the MMM/BN framework.

- Interest rates and savings account: The model is set up so that the interest rate does not need to be modelled. How can it be extended to stochastic rates or multi-currency settings, and what are the implications for BN/NP numeraires and pricing?

- Stability and uniqueness of SDE solutions: Formal conditions ensuring unique strong solutions with reflection at zero under time change are not detailed. A rigorous treatment of path properties (e.g., when a factor hits zero and benchmark time jumps) is needed.

- Domain of admissible claims for BN pricing: The paper references integrability conditions but does not specify the class of claims (e.g., path-dependent, barrier options) for which BN pricing yields minimal prices and stable hedges.

- Option pricing formulas: Although squared Bessel/SROU processes are tractable, the paper does not provide explicit BN prices or characteristic functions for standard derivatives (vanilla options, variance swaps), nor calibration examples.

- Factor heterogeneity: Surprisal minimization seems to imply equal risk-premium weights and equal factor contribution to the benchmark. How to accommodate heterogeneous factor premia consistent with empirical cross-sections?

- Calibration procedure: A practical workflow for estimating the model from observed stock prices is not provided. Identification, convergence diagnostics, and out-of-sample validation are needed.

- Economic micro-foundations: The interpretation of the market as a communication system that minimizes surprisal is appealing but lacks micro-founded behavioral/equilibrium justification. What trader preferences or market mechanisms imply these information-theoretic objectives?

- Cross-asset generalization: The applicability of the framework to other asset classes (FX, rates, credit, commodities) and multi-asset joint modeling (shared market time vs. asset-specific market times) is not discussed.

- Robustness to measurement error: The dependence on trading intensity implies sensitivity to noisy volume/flow proxies. How robust are parameter estimates and the inferred market time to measurement noise and missing data?

- Extreme events and jumps: The model relies on diffusions in market time. How to accommodate jump risk, market halts, or structural breaks while preserving the information-theoretic foundations?

- Impact of zero hits on pricing: When factors approach zero, the model implies volatility spikes and jumps in benchmark time. The impact on BN prices, integrability conditions, and numerical stability during such episodes is not analyzed.

- Benchmarks vs. cap-weighted indices: The derived benchmark is equal-weighted over factors; how it relates to real-world cap-weighted indices used in practice, and the consequences for empirical pricing/hedging, are unclear.

- Comparative performance: No empirical comparison is provided between BN pricing/MMM and risk-neutral or other reduced-form/rough volatility models in terms of pricing errors, hedging performance, or likelihood scores.

Practical Applications

Immediate Applications

The following applications can be deployed using the paper’s information-theoretic market model and benchmark-neutral (BN) pricing methodology, with practical workflows, sector links, and feasibility notes.

- Derivatives pricing and hedging with BN pricing

- Sector: finance (capital markets), software (quant libraries)

- What: Use the benchmark (GOP of factors) as numeraire and the BN pricing measure to compute minimal prices for replicable contingent claims. Implement SROU/squared Bessel dynamics for factors and the benchmark; use market time (integrated average trading intensity) as the primary driver instead of calendar time.

- Tools/Products/Workflows:

- BN pricing library that replaces risk-neutral measure workflows with BN measure transformations.

- SROU-based Monte Carlo simulator for path-dependent options and hedging Greeks under market-time dynamics.

- A “market time” input stream computed from trading intensity feeds integrated into pricing engines.

- Assumptions/Dependencies:

- Existence of GOP/NP (NUPBR) with unique strong solutions for the SDEs; independence of normalized factors.

- For equivalence of BN measure: n > 2; bounded market-time stopping times; finite constant net risk-adjusted return.

- A robust proxy for the benchmark is tradable; discrete trading approximates continuous rebalancing.

- Volatility modeling and risk analytics aligned with stylized facts

- Sector: finance (risk management), energy/commodities, FX

- What: Implement SROU/3/2-type stochastic volatility models that endogenously produce leverage effects, volatility clustering, roughness, spikes in calendar time, multi-scale components, and heavy-tailed Student-t-like returns.

- Tools/Products/Workflows:

- Risk engines that simulate SROU/3/2 volatility and generate realistic stress scenarios for indices and commodity curves.

- Calibration routines that fit gamma stationary densities for normalized factors and reproduce empirical return/volatility features.

- Assumptions/Dependencies:

- Quality market-time estimation; factor normalization and independence; reflection at zero to handle spikes.
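One simple way to start a calibration routine for a gamma stationary density is moment matching. The sketch below is an assumption about how such a fit could look, not the paper's procedure: the function name `fit_gamma_moments` is hypothetical, and the input is synthetic data standing in for observed normalized-factor values.

```python
import numpy as np

def fit_gamma_moments(samples):
    """Method-of-moments estimates of gamma shape and scale."""
    x = np.asarray(samples, dtype=float)
    mean, var = x.mean(), x.var()
    shape = mean**2 / var  # gamma mean is shape*scale, variance is shape*scale**2
    scale = var / mean
    return shape, scale

# Synthetic stand-in for observed normalized-factor values (hypothetical)
rng = np.random.default_rng(7)
synthetic = rng.gamma(shape=2.0, scale=0.5, size=200_000)

shape_hat, scale_hat = fit_gamma_moments(synthetic)
print(f"estimated shape: {shape_hat:.3f}")
print(f"estimated scale: {scale_hat:.3f}")
```

With real data the samples would be serially dependent and measured in market time, so standard errors and diagnostics would need more care than this sketch provides.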

- Market Activity Index (MAI) for time change and monitoring

- Sector: finance (trading, market microstructure), software (data engineering)

- What: Compute and publish an internal Market Activity Index representing the integrated average trading intensity; drive model time change and monitor activity-based risk.

- Tools/Products/Workflows:

- ETL pipelines that aggregate trade/quote data to estimate market time in near real-time.

- Dashboards that flag expected volatility spikes as market activity accelerates.

- Assumptions/Dependencies:

- High-quality, low-latency data; robust microstructure noise handling; consistent definition of trading intensity across venues/instruments.
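A Market Activity Index of this kind could be prototyped as a cumulative sum of normalized trade counts. This is a sketch under loud assumptions: the paper does not specify how trading intensity is measured, so the use of trade counts per interval, the `baseline_rate` normalization, and the function name `market_time_from_counts` are all hypothetical choices.

```python
import numpy as np

def market_time_from_counts(trade_counts, interval_length, baseline_rate):
    """Integrate a trade-count intensity proxy into cumulative market time."""
    counts = np.asarray(trade_counts, dtype=float)
    intensity = counts / interval_length   # trades per unit of calendar time
    activity = intensity / baseline_rate   # dimensionless market activity
    return np.cumsum(activity * interval_length)

# Hypothetical trade counts per interval, with a burst in intervals 4 and 5
counts = [100, 120, 90, 800, 950, 110]
tau = market_time_from_counts(counts, interval_length=1.0, baseline_rate=100.0)
print(tau)
```

Market time advances by about one unit per interval on calm days and by eight or more units per interval during the burst, which is the signal a monitoring dashboard would flag.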

- Equal-weight factor portfolio as a benchmark proxy

- Sector: asset management

- What: Construct and maintain an equal-weight factor portfolio (or suitably diversified stock proxy) to approximate the benchmark used in BN pricing and hedging.

- Tools/Products/Workflows:

- Rule-based rebalancing strategies; tracking error analytics; operational controls for discrete reallocation intervals.

- Assumptions/Dependencies:

- Factors represent independent auxiliary portfolios; shortfalls due to discrete trading and transaction costs remain acceptable; extreme volatility when a factor nears zero is managed via controls.
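A rule-based equal-weight rebalancing strategy can be sketched in a few lines. The assumptions are that the portfolio is rebalanced to equal weights at every observation, that there are no transaction costs, and that the price table is a made-up example; the function name `equal_weight_value` is hypothetical.

```python
import numpy as np

def equal_weight_value(prices, initial_value=1.0):
    """Value path of a portfolio rebalanced to equal weights each period."""
    prices = np.asarray(prices, dtype=float)
    gross_returns = prices[1:] / prices[:-1]        # per-asset gross returns
    portfolio_returns = gross_returns.mean(axis=1)  # equal weights each period
    growth = np.concatenate(([1.0], np.cumprod(portfolio_returns)))
    return initial_value * growth

# Hypothetical prices: rows are dates, columns are two constituents
prices = [[100.0, 50.0],
          [110.0, 45.0],
          [121.0, 45.0]]
values = equal_weight_value(prices)
print(values)
```

Tracking error against a target benchmark and the cost of each rebalance would be layered on top of a value path like this one.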

- Minimal-price internal valuation for replicable claims

- Sector: finance (treasury, accounting policy)

- What: Adopt BN pricing internally for replicable claims to avoid systematic overpricing that may occur under risk-neutral assumptions in markets with FLVRs.

- Tools/Products/Workflows:

- Policy guidelines and audit trails evidencing minimal pricing via BN measure and KL divergence minimization.

- Assumptions/Dependencies:

- Governance buy-in; documentation for auditors; clear mapping of product replicability and hedging feasibility.

- Academic curriculum and research tooling

- Sector: academia, education

- What: Introduce courses and labs on information-minimized market modeling (MMM), BN pricing, surprisal/KL minimization, and activity-time diffusions.

- Tools/Products/Workflows:

- Open-source teaching notebooks implementing CIR/SROU processes; empirical labs estimating market time and testing stylized facts.

- Assumptions/Dependencies:

- Access to historical tick data; computational resources; reproducibility practices.

- Stress testing and scenario generation that respects empirical distributions

- Sector: finance (risk, compliance)

- What: Use SROU/3/2 volatility and gamma stationary densities to generate heavy-tailed, leverage-sensitive scenarios aligned with observed Student-t-like returns.

- Tools/Products/Workflows:

- Scenario libraries; backtesting harnesses comparing BN-based hedges vs risk-neutral hedges.

- Assumptions/Dependencies:

- Calibration discipline; data coverage across regimes; validation versus realized outcomes.

- Calibration constraints and estimation aids

- Sector: quant research

- What: Use model constraints (sum of risk-premium parameters equals 1; activity constraint) to regularize estimation and reduce degrees of freedom.

- Tools/Products/Workflows:

- Penalized estimation that enforces ω⊤1 = 1; inference of gamma parameters for normalized factors; diagnostics for independence/stationarity in market time.

- Assumptions/Dependencies:

- Independence of normalized factors is a strong assumption; sensitivity analyses and hierarchical models may be needed in practice.

Long-Term Applications

These opportunities require additional research, infrastructure, regulatory considerations, or product development to scale the approach across markets and institutions.

- Industry standardization of BN pricing and market-time models

- Sector: finance (industry consortia), policy/regulation

- What: Establish BN pricing as an alternative valuation standard for replicable claims; formalize market-time conventions and data standards.

- Tools/Products/Workflows:

- Protocols for BN valuation in clearinghouses/CCPs; standard APIs for Market Activity Index dissemination.

- Assumptions/Dependencies:

- Broad stakeholder acceptance; regulatory endorsement; alignment with accounting standards.

- Exchange-level publication of Market Time feeds

- Sector: market infrastructure

- What: Exchanges and data vendors publish certified market activity indices to support activity-time modeling across instruments and venues.

- Tools/Products/Workflows:

- Standardized calculation methodologies; governance for revisions; latency SLAs.

- Assumptions/Dependencies:

- Agreement on definitions; cross-market harmonization; protections against gaming.

- Tradable benchmark (GOP) vehicles

- Sector: asset management, ETF/structured products

- What: Launch funds that aim to track or approximate the growth optimal portfolio; enable direct trading of the numeraire for BN hedging.

- Tools/Products/Workflows:

- Dynamic leverage and rebalancing systems; risk controls for spikes when factors approach zero; liquidity provisioning.

- Assumptions/Dependencies:

- Regulatory approval; investor suitability; robust proxy construction given discrete trading and costs.

- Activity-time instruments and market-time-linked payoffs

- Sector: derivatives

- What: Design derivatives whose maturities or payoffs depend on market time rather than calendar time (e.g., “activity-time options” that expire after a specified cumulative trading intensity).

- Tools/Products/Workflows:

- Contract specifications; pricing and hedging frameworks; market-time observability at contract level.

- Assumptions/Dependencies:

- Reliable and manipulation-resilient market-time measurement; alignment with legal and settlement frameworks.

- Multi-asset MMM integration (equities, rates, credit, commodities, FX)

- Sector: finance (multi-asset trading and risk)

- What: Extend independence/stationarity assumptions to cross-asset normalized factors; synchronize market-time processes across asset classes.

- Tools/Products/Workflows:

- Unified multi-asset BN engines; joint activity-time estimation; cross-asset stress testing incorporating shared activity shocks.

- Assumptions/Dependencies:

- Cross-asset factor independence is likely violated; requires hierarchical or copula-based extensions and empirical validation.

- Regulatory reframing of no-arbitrage conditions

- Sector: policy/regulation

- What: Consider NUPBR and GOP existence as practical no-arbitrage foundations, acknowledging FLVRs; integrate BN approaches into prudential valuation and capital frameworks.

- Tools/Products/Workflows:

- Supervisory methodologies that benchmark KL divergence between pricing and real-world measures; disclosure standards for valuation methods.

- Assumptions/Dependencies:

- Policy consensus; empirical evidence of BN hedging reliability under stress; compatibility with existing solvency rules.

- Information-minimization strategies in algorithmic trading

- Sector: electronic trading

- What: Develop strategies that minimize surprisal/KL divergence in price formation, interpreting markets as communication systems with activity-driven dynamics.

- Tools/Products/Workflows:

- Adaptive execution algorithms that modulate aggression based on market time; reinforcement learning with information-theoretic rewards.

- Assumptions/Dependencies:

- Robust estimation of surprisal and activity; safeguards against adverse selection and microstructure effects.

- Empirical validation and estimation of the net risk-adjusted return

- Sector: academia/quant research

- What: Investigate whether the “single parameter in market time” claim holds materially in practice; quantify drift estimation error impact versus BN’s parameter independence.

- Tools/Products/Workflows:

- Long-horizon studies of benchmark growth; inference methods under changing regimes; model selection tests vs alternative stochastic volatility models.

- Assumptions/Dependencies:

- Availability of long-run total return data; careful treatment of structural breaks and sectoral shifts.

- Cross-sector adoption of activity-time volatility modeling

- Sector: energy, transportation, logistics

- What: Apply activity-time modeling to systems with bursty information flow and clustering (e.g., demand spikes, grid imbalance events).

- Tools/Products/Workflows:

- SROU-inspired operational risk modeling; capacity planning under activity spikes; insurance pricing for clustered events.

- Assumptions/Dependencies:

- Valid mapping of “activity time” to sector-specific intensity metrics; domain adaptation of diffusion assumptions.

Across all applications, feasibility depends on:

- Measuring and validating market time (integrated trading intensity) reliably.

- Constructing tradable proxies for the benchmark under discrete trading, costs, and constraints.

- Managing deviations from independence/stationarity in real markets.

- Ensuring governance for KL divergence minimization and model risk controls.

- Adapting continuous-time theory to discrete execution and microstructure realities.

Glossary

- 3/2 stochastic volatility dynamics: A stochastic volatility model where the variance follows a 3/2-type diffusion, often yielding heavy-tailed behavior and tractability. "the $3/2$ stochastic volatility dynamics that are characterized by the SDE"

- Activity time: An alternative time scale driven by trading intensity in which certain processes evolve, separating fast market activity from diffusion dynamics. "evolves in the -th activity time "

- Benchmark: The growth optimal portfolio (of factors) used as the performance and pricing numeraire within the benchmark approach. "The benchmark satisfies the SDE"

- Benchmark-neutral (BN) pricing: A pricing method that uses the benchmark as numeraire and its associated measure, aiming for minimal prices and practical hedging. "As an alternative and practicable pricing method to risk-neutral pricing, benchmark-neutral (BN) pricing has been proposed"

- Benchmark-neutral pricing measure: The probability measure associated with BN pricing, chosen to minimize divergence from the real-world measure. "The Kullback-Leibler divergence between the benchmark-neutral pricing measure and the real-world probability measure is also minimized."

- Benchmark time: The activity time associated with the benchmark process, in which the normalized benchmark follows an SROU dynamic. "evolving in the benchmark time"

- Brownian motion: A continuous-time stochastic process with stationary, independent increments used to model random market shocks. "the independent driving Brownian motions "

- CIR process: The Cox–Ingersoll–Ross diffusion, a mean-reverting square-root process used to model positive quantities like variance. "is that of a CIR process of dimension "

- Contingent claim: A payoff dependent on underlying assets’ outcomes, priced and hedged within the proposed framework. "enable precise contingent claim hedging."

- Diffusion (scalar diffusion): A continuous-time stochastic process characterized by stochastic differential equations with drift and diffusion terms. "state variables are scalar stationary diffusions."

- Extended market: The market that includes both the factors and the savings account, enabling formation of broader self-financing portfolios. "to form the extended market so that the interest rate does not need to be modelled."

- Fokker-Planck equation: A partial differential equation describing the evolution (or stationary form) of a process’s probability density. "the Fokker-Planck equation"

- Free Lunch with Vanishing Risk (FLVR): A classical no-arbitrage notion; absence of FLVRs is linked to risk-neutral pricing in the fundamental theorem. "absence of free lunches with vanishing risk (FLVRs)"

- Growth optimal portfolio (GOP): The portfolio that maximizes instantaneous growth rate; central as benchmark/numeraire in this approach. "The existence of the growth optimal portfolio (GOP) is assumed"

- Itô formula: A calculus rule for stochastic processes used to derive dynamics of transformed variables. "By application of the Itô formula one obtains"

- Kullback-Leibler divergence: An information-theoretic measure of discrepancy between two probability distributions used here to align pricing with real-world probabilities. "The Kullback-Leibler divergence between the benchmark-neutral pricing measure and the real-world probability measure is also minimized."

- Kelly portfolio: The investment strategy maximizing expected logarithmic utility, equivalent to the GOP in continuous-time finance. "called the Kelly portfolio"

- Lagrange multiplier: A variable that arises in constrained optimization; here associated with growth-rate maximization, yielding key market parameters. "emerges as the Lagrange multiplier of the growth rate maximization"

- Lagrangian: An optimization construct combining objective and constraints; used repeatedly to derive market dynamics and pricing. "a first Lagrangian comes into play."

- Leverage effect: The empirical negative correlation between returns and volatility, with declines in price increasing volatility. "display the leverage effect: declines in the index are associated with increased volatility"

- Locally riskfree portfolio (LRP): A portfolio with zero instantaneous volatility; its absence shapes the market’s structure and GOP. "no locally riskfree portfolio (LRP)"

- Market activity: A measure of trading intensity interpreted as the speed of information flow; integrates to market time. "the paper models by the market activity."

- Market price of risk (vector): The vector quantifying compensation per unit of diffusion risk in the numeraire portfolio’s dynamics. "with initial value $S^{**}_{\tau_{t_0}}>0$, market price of risk vector"

- Market time: The integrated market activity providing a stochastic clock for factor and benchmark diffusions. "The integrated market activity is called the market time."

- Minimal Market Model (MMM): The idealized information-minimized market model with a single parameter in market time, producing tractable dynamics. "is called minimal market model (MMM)."

- No Unbounded Profit with Bounded Risk (NUPBR): A weak no-arbitrage condition equivalent to the existence of the GOP/numeraire portfolio. "their No Unbounded Profit with Bounded Risk (NUPBR) condition."

- Normalized factor: A factor scaled by an exponential basis so its volatility becomes stationary in market time. "the normalized factor."

- Numeraire portfolio (NP): The growth-optimal portfolio in the extended market used as numeraire for real-world pricing. "the NP satisfies the SDE"

- Radon-Nikodym derivative: The density process defining a change of measure, used to specify the BN pricing measure. "the Radon-Nikodym derivative"

- Risk-neutral pricing: Pricing under a risk-neutral measure using a numeraire; classically linked to the absence of FLVRs. "risk-neutral pricing is equivalent to the absence of free lunches with vanishing risk (FLVRs)."

- Self-financing portfolio: A portfolio whose value changes only due to asset returns, not external cashflows. "a self-financing portfolio "

- Squared Bessel process: A nonnegative diffusion (related to squared radial OU) governing factors and benchmark under BN pricing. "follow squared Bessel processes"

- Squared radial Ornstein-Uhlenbeck (SROU) process: A diffusion for nonnegative magnitudes with mean reversion; central to factors and benchmark dynamics. "squared radial Ornstein-Uhlenbeck (SROU) processes"

- Stationary probability density: A time-invariant distribution of a stochastic process used for normalized factors and volatility. "stationary probability densities"

- Subfiltration: A filtration contained within another, here the one generated by Brownian motions is a subfiltration of the full filtration. "the filtration generated by the Brownian motions is a subfiltration of the given filtration"

- Subordinator: An increasing stochastic process used as a time-change (derivative of market time in calendar time). "its derivative serving as a subordinator"

- Surprisal: The expected information content (negative entropy) minimized for the joint stationary density of normalized factors. "The surprisal, which is the expected information content of the stationary joint probability density of the normalized factors, is minimized."

- Student-t distribution: A heavy-tailed distribution; the paper notes index log-returns are well approximated by a t with 4 degrees of freedom. "approximate a Student-t distribution with four degrees of freedom"
