- The paper introduces MARS, a framework that integrates budget-aware MCTS, modular construction, and reflective memory to optimize autonomous AI research.

- It restructures traditional monolithic designs into repository-level modular components, enhancing scalability, code reuse, and search efficiency.

- Experimental results demonstrate significant performance gains, with a 31.1% Gold Medal rate and improved efficiency over baseline methods.

Modular Agent with Reflective Search for Automated AI Research

Introduction

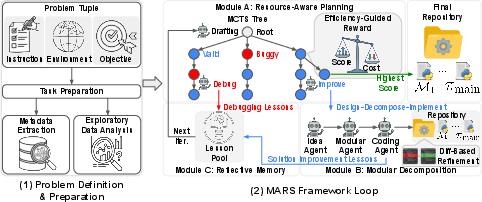

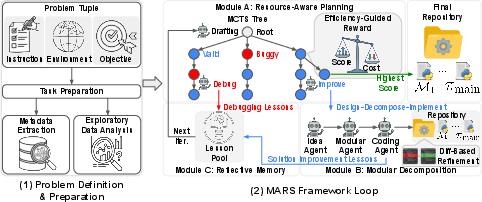

The paper introduces MARS (Modular Agent with Reflective Search), a framework for autonomous AI research designed to address key deficiencies in existing LLM-based agents operating in resource-intensive and high-complexity research environments. Unlike standard software engineering, the automation of AI research tasks involves algorithmic uncertainty, expensive evaluations, and challenging credit assignment. Current agentic frameworks are largely monolithic, leading to brittle solutions, high computational costs, and ineffective exploitation of prior trials due to inadequate memory mechanisms.

Methodology

MARS rests on three pillars: Budget-Aware Monte Carlo Tree Search (MCTS), Modular Construction via repository-level decomposition, and Comparative Reflective Memory (Lesson Learning). Instead of generating single-file scripts, the framework treats research as the synthesis of an optimal code repository made up of independently testable modules. A pipeline produces that repository and enforces a separation between design, decomposition, and implementation.

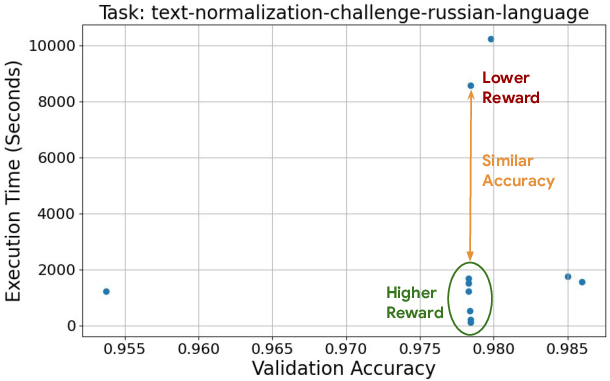

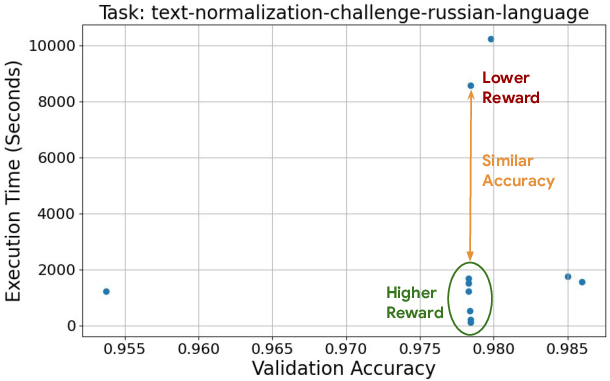

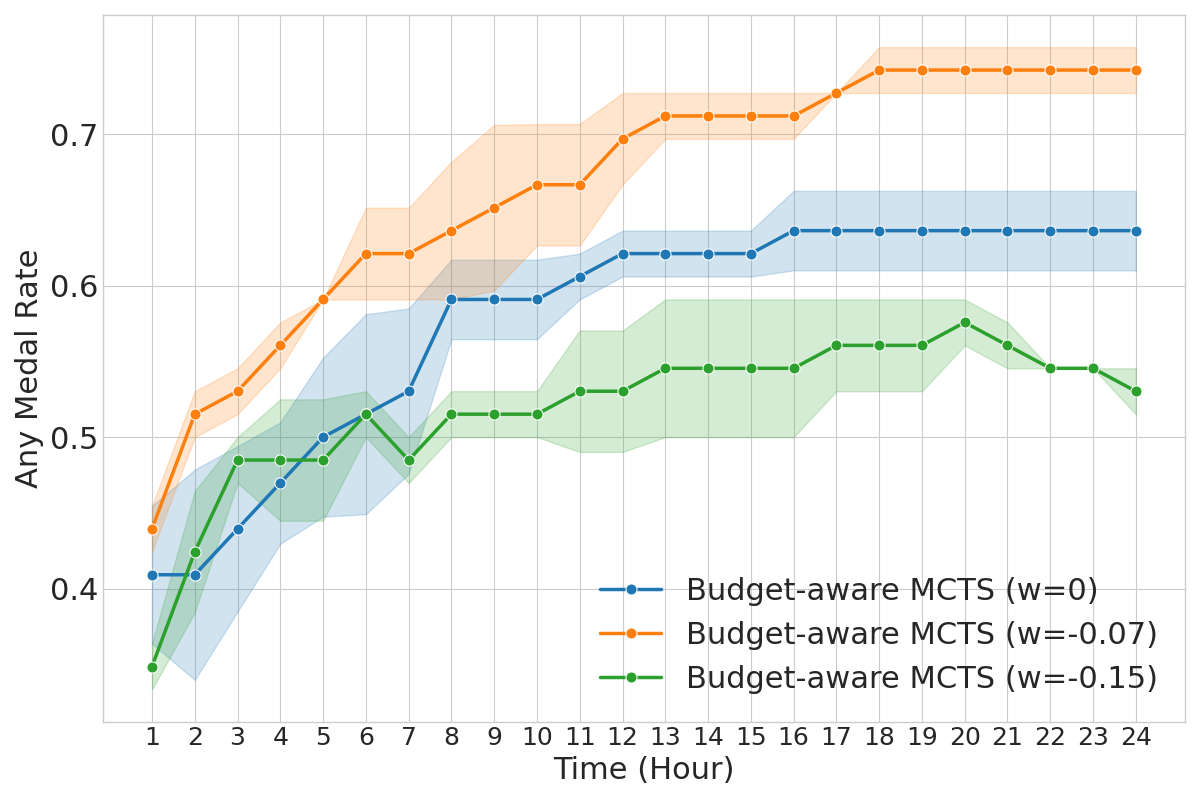

Budget-Aware MCTS: Traditional MCTS and other search algorithms typically ignore execution expense. MARS modifies the standard MCTS reward mechanism to penalize latency and prioritize search trajectories yielding superior results per unit cost. The efficiency-guided reward is defined as:

$$R(v) = G(v) \cdot \left[\frac{t(v)}{L(v)}\right]^{w},$$

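where G(v) is the normalized performance metric of node v and w is a penalty hyperparameter (default w = −0.07). Because w is negative, a candidate with a smaller t(v)/L(v) receives a larger multiplier. Among solutions with similar performance, the search therefore favors faster ones. It also down-weights solutions that exceed resource budgets or offer a poor latency-performance trade-off.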
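The following is a minimal Python sketch of how the efficiency-guided reward could plug into a UCT-style search. In line with the Figure 5 caption, it assumes that t(v) is a candidate's measured runtime and that L(v) is a reference latency budget in the same units. The `Node` class, `uct_select`, `backpropagate`, and the exploration constant `c` are illustrative stand-ins based on standard UCB1 selection. They are not the paper's implementation.

```python
import math
from dataclasses import dataclass, field
from typing import List, Optional


def efficiency_reward(g: float, t: float, budget: float, w: float = -0.07) -> float:
    """Efficiency-guided reward R(v) = G(v) * (t(v) / L(v)) ** w.

    Assumption: g is the normalized score G(v), t is the runtime t(v), and
    budget is L(v), a reference latency in the same units as t.
    """
    if t <= 0 or budget <= 0:
        raise ValueError("runtime and budget must be positive")
    # With w < 0, a runtime below the budget boosts the reward and a runtime
    # above it shrinks the reward; at t == budget the reward equals g.
    return g * (t / budget) ** w


@dataclass
class Node:
    name: str
    parent: Optional["Node"] = None
    children: List["Node"] = field(default_factory=list)
    visits: int = 0
    total_reward: float = 0.0


def uct_select(node: Node, c: float = 1.41) -> Node:
    """Pick the child maximizing mean efficiency reward plus a UCB1 bonus."""
    def score(child: Node) -> float:
        if child.visits == 0:
            return math.inf  # expand unvisited children first
        exploit = child.total_reward / child.visits
        explore = c * math.sqrt(math.log(node.visits) / child.visits)
        return exploit + explore
    return max(node.children, key=score)


def backpropagate(node: Optional[Node], reward: float) -> None:
    """Add the efficiency reward to every node on the path back to the root."""
    while node is not None:
        node.visits += 1
        node.total_reward += reward
        node = node.parent
```

Consider two candidates with the same G(v) = 0.80 and L(v) = 3600 s. One runs in 600 s and scores about 0.907. The other runs in 3000 s and scores about 0.810. Under these assumptions, the search prefers the faster candidate.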

Modular Construction: MARS decomposes each solution into independent modules orchestrated by a main script. This enables targeted edits, code reuse, caching, and localized debugging. A node solution takes the form:

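$$s_n = \left\langle \{M_j\}_{j=1}^{l},\ \pi_{\text{main}} \right\rangle$$

where M_1, …, M_l are the l independent modules and π_main is the main script that orchestrates them.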
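Below is one way to represent s_n and exploit the caching that modularity allows, sketched in Python for illustration only. The sketch makes several assumptions:

- π_main can be reduced to an ordered list of module names.
- Each module maps its upstream output to a new output. The `executors` stand in for actually running each module's code.
- Results are cached under a hash chain of module sources, so editing one module re-runs only that module and those after it.

`Module`, `NodeSolution`, and `run_solution` are hypothetical names.

```python
import hashlib
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class Module:
    """One independently testable module M_j (hypothetical representation)."""
    name: str
    source: str

    def digest(self) -> str:
        return hashlib.sha256(self.source.encode("utf-8")).hexdigest()


@dataclass
class NodeSolution:
    """s_n = <{M_j}, pi_main>, with pi_main simplified to an execution order."""
    modules: Dict[str, Module]
    main_order: List[str]


def run_solution(
    sol: NodeSolution,
    executors: Dict[str, Callable[[Any], Any]],
    cache: Dict[str, Any],
) -> Any:
    """Run modules in pi_main order, reusing cached outputs where possible."""
    value: Any = None
    chain = ""
    for name in sol.main_order:
        # The key covers this module and every upstream module, so an edit
        # invalidates the edited module and everything downstream of it.
        chain = hashlib.sha256((chain + sol.modules[name].digest()).encode("utf-8")).hexdigest()
        if chain not in cache:
            cache[chain] = executors[name](value)
        value = cache[chain]
    return value
```

For example, if only the model module changes between two nodes, the data-loading module's cached output is reused and only the model module runs again.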

A standardized diff-based editing protocol applies atomic updates across the codebase. This improves robustness and supports continuous, incremental improvement.
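The following Python sketch assumes a simple search/replace format for each edit; the actual MARS diff format may differ. The sketch illustrates atomicity. Every edit is validated against a staged copy of the repository, and if any search block fails to match exactly once, nothing is applied. `Edit`, `EditError`, and `apply_edits` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Edit:
    path: str      # file to modify
    search: str    # exact text expected in the file
    replace: str   # text that replaces it


class EditError(Exception):
    pass


def apply_edits(repo: Dict[str, str], edits: List[Edit]) -> Dict[str, str]:
    """Apply all edits or none; the input repo is never mutated."""
    staged = dict(repo)  # work on a copy so a failure leaves the repo untouched
    for e in edits:
        if e.path not in staged:
            raise EditError(f"unknown file: {e.path}")
        if not e.search:
            raise EditError(f"{e.path}: empty search block")
        count = staged[e.path].count(e.search)
        if count != 1:
            # Ambiguous or missing anchors would make the update non-deterministic.
            raise EditError(f"{e.path}: search block matched {count} times, expected 1")
        staged[e.path] = staged[e.path].replace(e.search, e.replace, 1)
    return staged
```

Requiring a unique match keeps each edit unambiguous. Staging on a copy means a failed multi-file change cannot leave the repository half-edited.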

Reflective Memory (Lesson Learning): MARS distills empirical findings and debugging experiences into concise, high-signal lessons. This works around context-window limits and helps the agent reason about what actually caused a change in results. Lessons come in two types. Solution Improvement Lessons guide performance optimization, and Debugging Lessons guide error avoidance. Together they support more efficient credit assignment.
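Below is a minimal Python sketch of a lesson store. It rests on three assumptions:

- Solution Improvement Lessons come from comparing a child node's score with its parent's, which is the "comparative" part.
- Small score changes are discarded as noise via a hypothetical `min_delta` threshold.
- Only the most recent lessons of each type are rendered into the prompt.

All names are illustrative.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class LessonType(Enum):
    SOLUTION_IMPROVEMENT = "solution_improvement"
    DEBUGGING = "debugging"


@dataclass(frozen=True)
class Lesson:
    kind: LessonType
    text: str
    source_node: str  # node the lesson was distilled from


def comparative_lesson(node_id: str, change: str, parent_score: float,
                       child_score: float, min_delta: float = 0.005) -> Optional[Lesson]:
    """Turn a parent-vs-child comparison into a Solution Improvement Lesson."""
    delta = child_score - parent_score
    if abs(delta) < min_delta:
        return None  # too small to attribute to the change; skip it
    verdict = "improved" if delta > 0 else "worsened"
    return Lesson(LessonType.SOLUTION_IMPROVEMENT,
                  f"{change} {verdict} the score by {delta:+.4f}", node_id)


def debugging_lesson(node_id: str, error: str, fix: str) -> Lesson:
    """Record how an execution error was resolved."""
    return Lesson(LessonType.DEBUGGING, f"Error '{error}' was fixed by: {fix}", node_id)


@dataclass
class LessonMemory:
    lessons: List[Lesson] = field(default_factory=list)

    def add(self, lesson: Optional[Lesson]) -> None:
        if lesson is not None:
            self.lessons.append(lesson)

    def render(self, max_per_kind: int = 5) -> str:
        """Format the newest lessons of each type as a compact prompt section."""
        lines: List[str] = []
        for kind in LessonType:
            picked = [item for item in self.lessons if item.kind is kind][-max_per_kind:]
            if picked:
                lines.append(f"## {kind.value}")
                lines.extend(f"- {item.text}" for item in picked)
        return "\n".join(lines)
```

Storing short lessons rather than full trajectories is what keeps this memory within a bounded prompt budget in the sketch.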

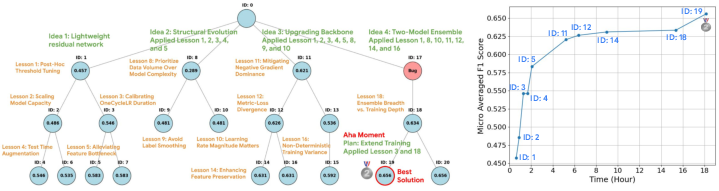

Figure 1: Overview of the MARS framework showing its modular, iterative, and memory-augmented search process.

Experimental Evaluation

MARS is benchmarked on MLE-Bench, a suite of 75 long-horizon Kaggle competitions. Each run is subject to strict resource constraints (24 h wall-clock time on an A100 GPU), and MARS is compared directly against state-of-the-art open-source and leaderboard baselines. The reported metrics are the Above Median Rate, Any Medal Rate, and Gold Medal Rate.
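The three metrics are fractions of competitions, and the following Python sketch computes them from per-competition outcomes. It assumes that a competition with no valid submission counts as neither above median nor medaled. It also assumes that any multi-run figure is the plain mean over runs. `Outcome`, `summarize`, and `mean_over_runs` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Outcome:
    competition: str
    above_median: bool      # False if no valid submission was produced
    medal: Optional[str]    # "gold", "silver", "bronze", or None


def summarize(outcomes: List[Outcome]) -> Dict[str, float]:
    """Compute Above Median, Any Medal, and Gold Medal rates for one run."""
    n = len(outcomes)
    if n == 0:
        raise ValueError("need at least one competition")
    return {
        "above_median_rate": sum(o.above_median for o in outcomes) / n,
        "any_medal_rate": sum(o.medal is not None for o in outcomes) / n,
        "gold_medal_rate": sum(o.medal == "gold" for o in outcomes) / n,
    }


def mean_over_runs(runs: List[List[Outcome]]) -> Dict[str, float]:
    """Average each rate across independent runs (e.g. different seeds)."""
    summaries = [summarize(r) for r in runs]
    return {k: sum(s[k] for s in summaries) / len(summaries) for k in summaries[0]}
```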

Results: In controlled comparisons, MARS achieves a Gold Medal rate of 31.1%, the highest among reported agents under identical hardware conditions. The parallelized scaling variant (+MARS) attains an Above Median Rate of 73.3% and Any Medal Rate of 59.6%, surpassing ML-Master 2.0, which utilizes a larger computational budget.

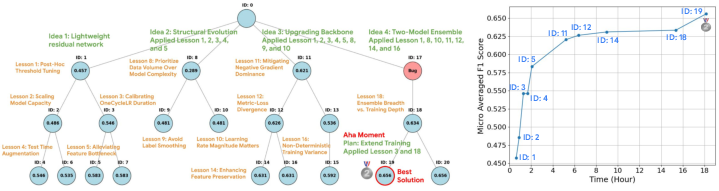

Figure 2: MARS experiencing an "Aha!" moment during the iMet-2020-FGVC7 task, with stepwise performance improvement triggered by lessons and strategy refinement.

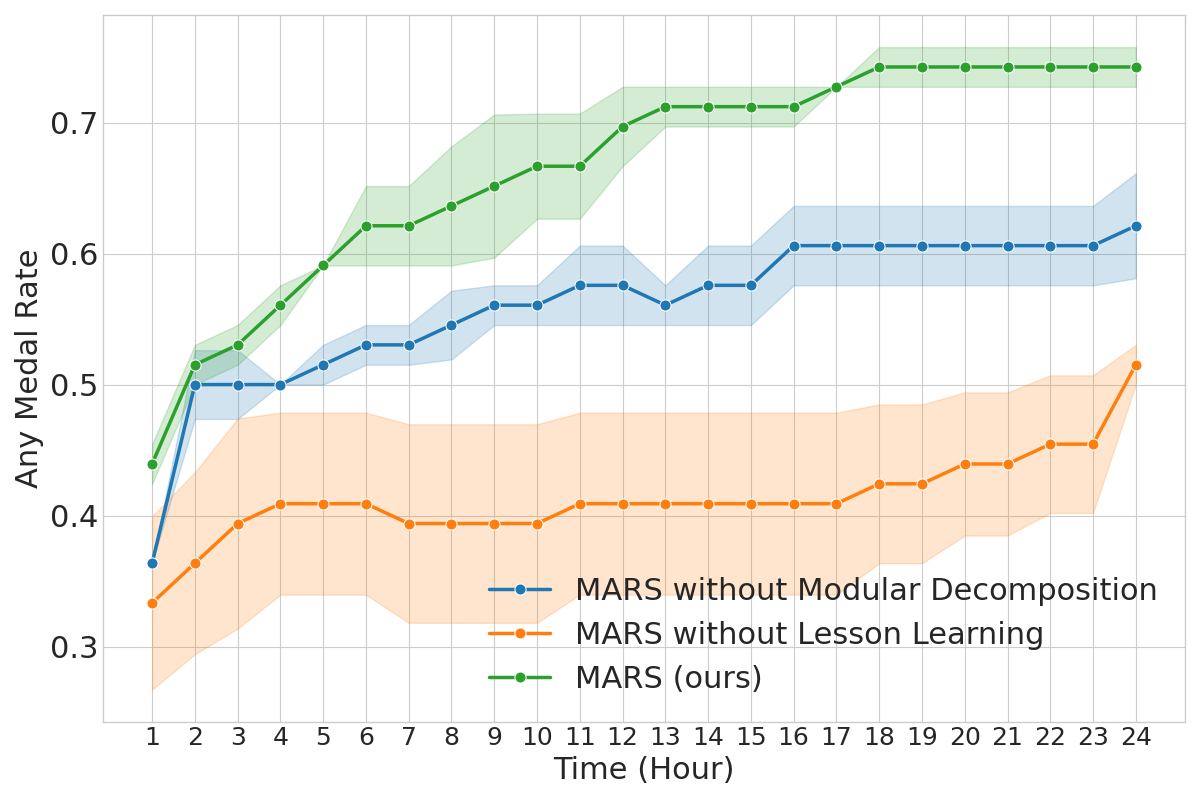

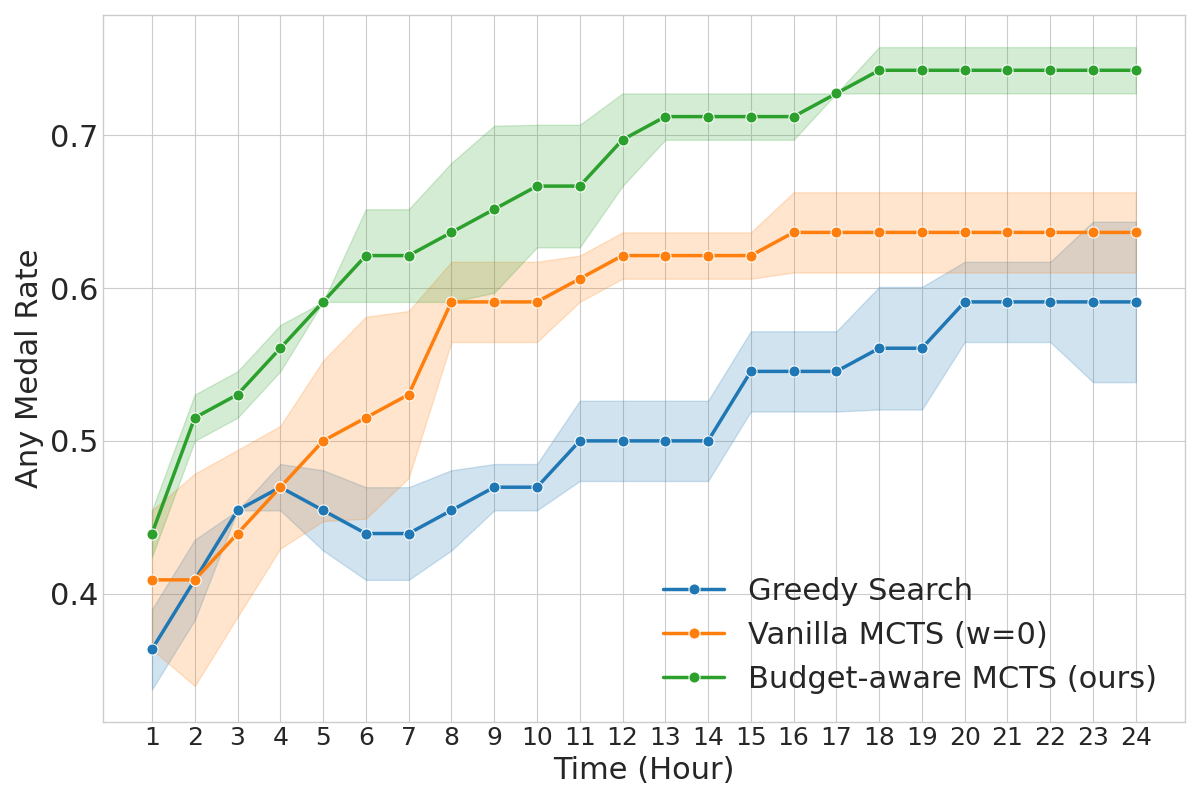

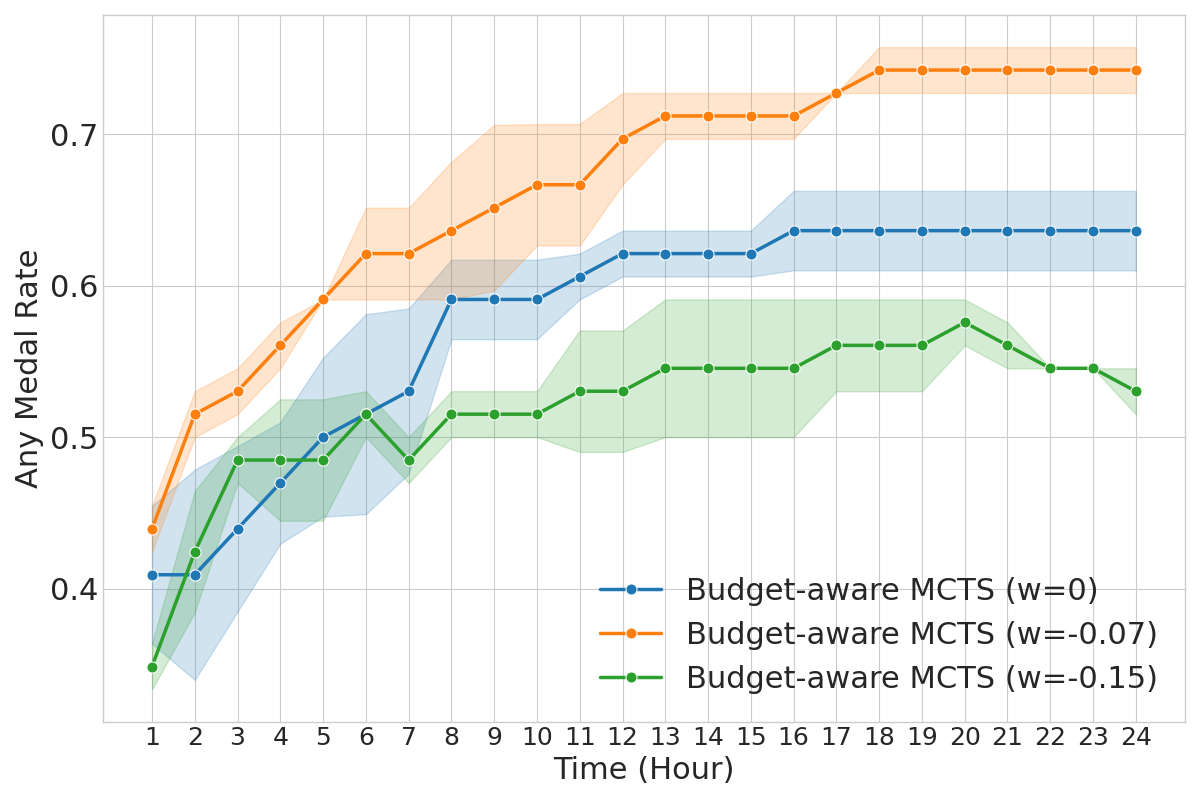

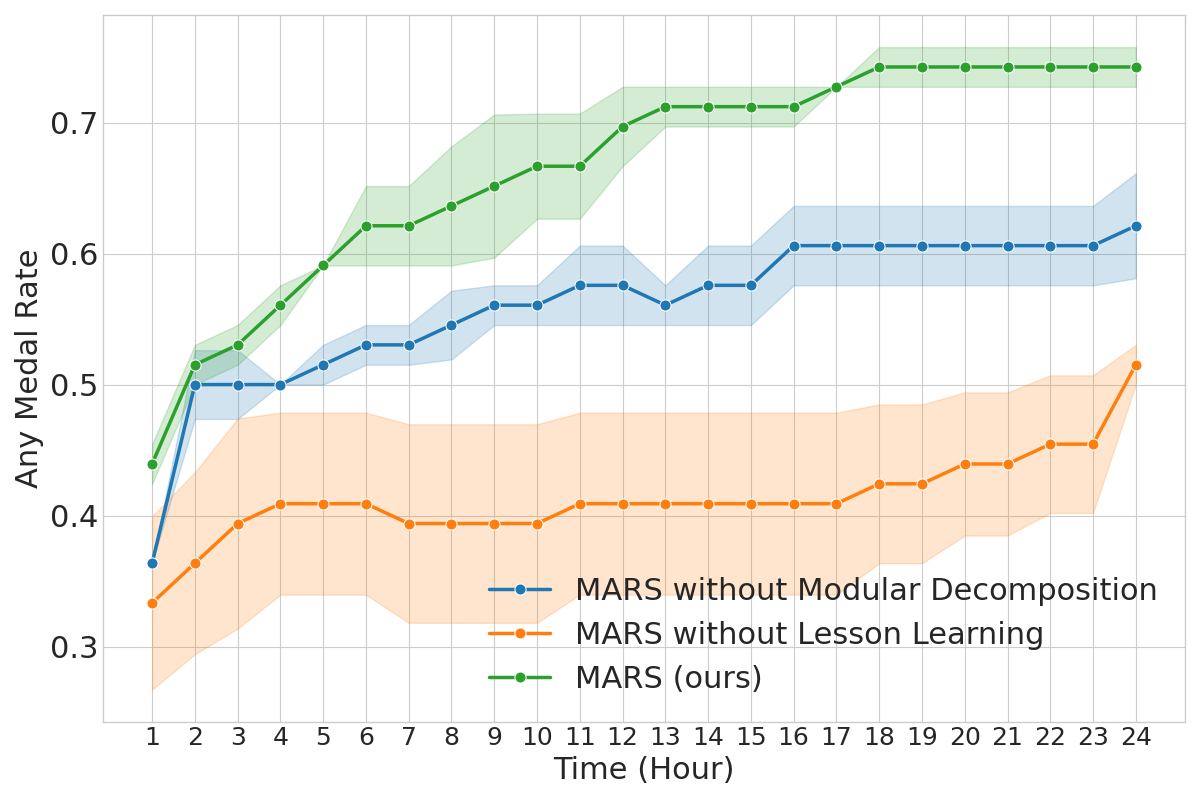

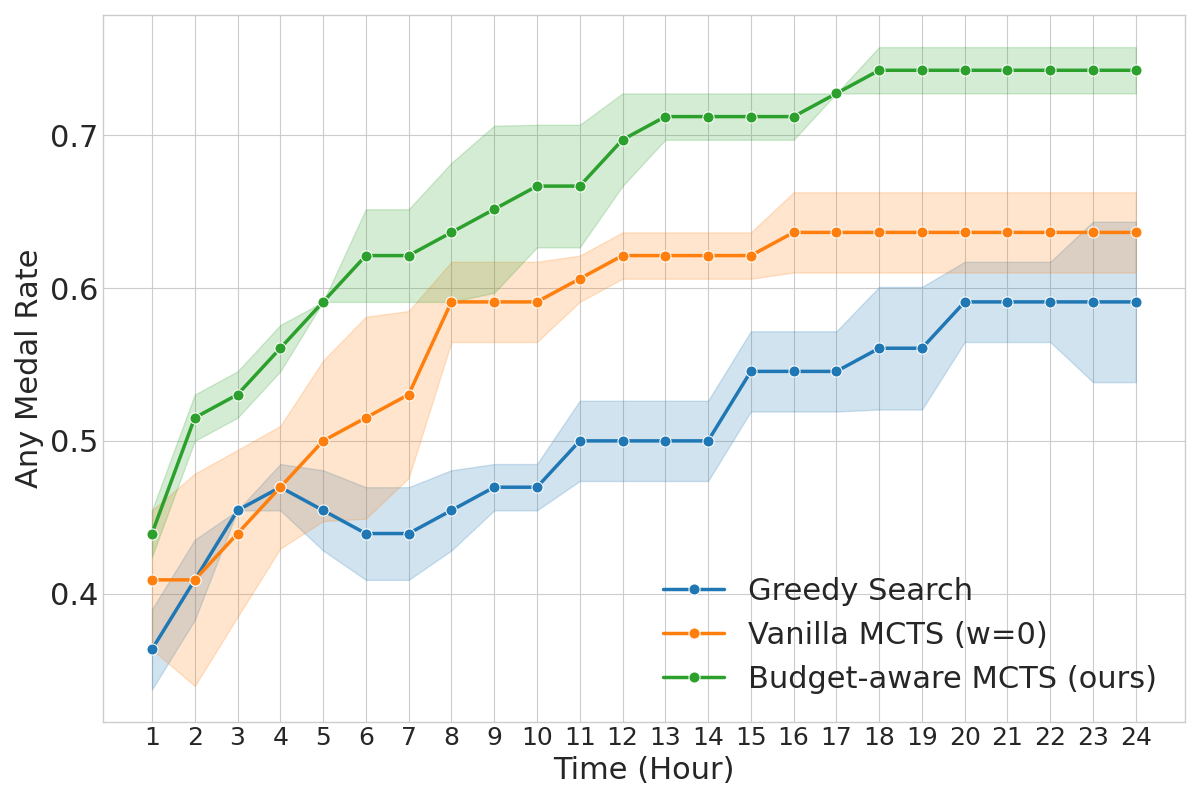

Ablation analyses show that both Modular Decomposition and Lesson Learning are critical for performance; disabling either leads to marked regressions. Budget-Aware MCTS also consistently improves exploration efficiency compared with greedy search and vanilla MCTS, yielding more high-quality solutions under fixed budgets.

Figure 3: Ablation study quantifying the impact of modularity and lesson learning on agent performance.

Figure 4: Comparison of tree search strategies, showing the superior efficiency of Budget-Aware MCTS.

Discussion

Modularization: The modular paradigm enables the construction of substantially larger and more structured repositories (on average 1,103.9 lines of code across 6.7 files), with module types tailored to the demands of each competition. This supports solution scalability and mirrors professional scientific workflows.

Search Efficiency: Budget-aware exploration increases the effective solution rate (19.5% vs. 16.1% with vanilla MCTS), demonstrating the importance of latency-aware reward modulation.

Figure 5: Efficiency-guided reward function that prioritizes faster candidates with similar performance.

Lesson Transfer and Generalization: MARS achieves a lesson-utilization rate of 65.8% and a cross-branch lesson-transfer rate of 63.0%, indicating that empirical insights generalize across solution trajectories.

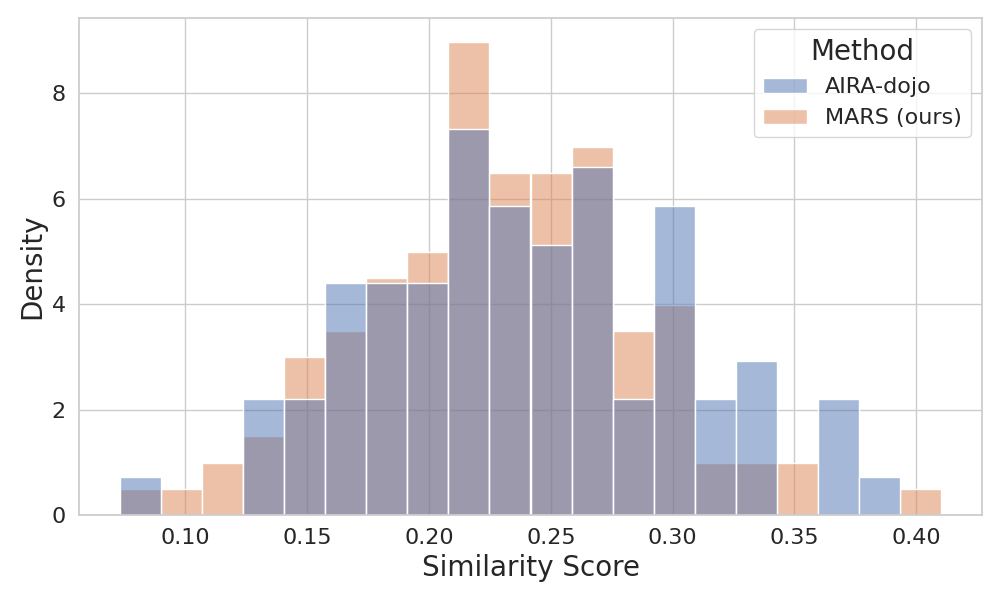

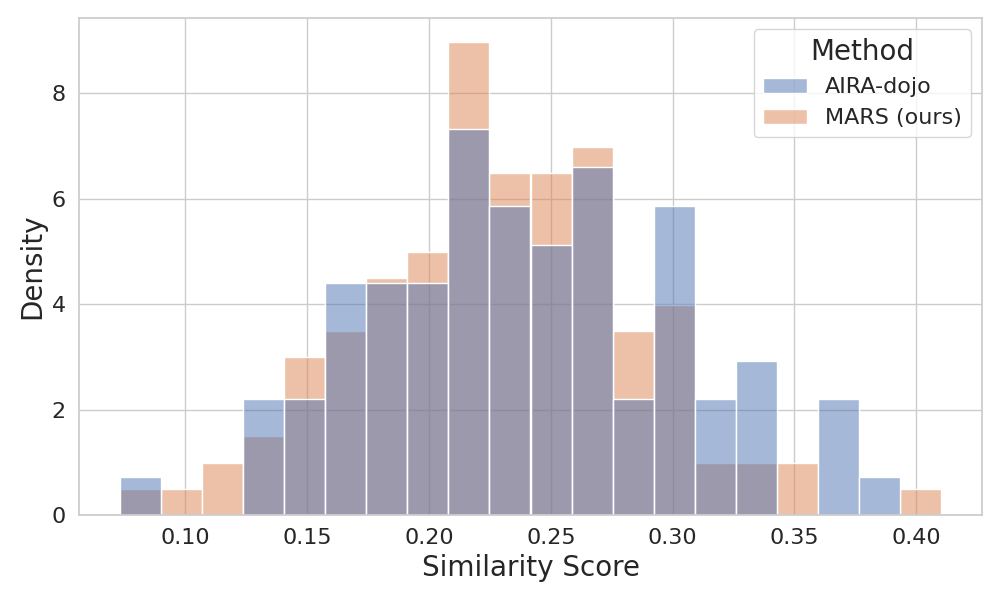

Compliance and Originality: Automated code and log audits confirm strict rule adherence (0% violation rate). Similarity analysis with the Dolos tool demonstrates originality; none of the submissions exceed 60% similarity to public Kaggle code.

Figure 6: Distribution of maximum code similarity scores for medal-winning submissions, confirming code originality.

Cost-Performance Tradeoff: The memory mechanism in MARS incurs higher input token costs, but this is offset by a substantial increase in Any Medal Rate, validating the efficacy of maintaining comprehensive lesson context.

Figure 7: Sensitivity of performance to penalty weight w in the reward modulation, establishing w=−0.07 as optimal.
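The penalty weight can be read through a break-even factor. Under a reward of the form R = G·(t/L)^w, a candidate that runs k times longer than another ties with it only if its normalized score is higher by a factor of k^(−w). The Python sketch below computes that factor; the function name is illustrative. With w = −0.07, a candidate twice as slow needs roughly a 5% higher G to tie.

```python
def break_even_gain(slowdown: float, w: float = -0.07) -> float:
    """Factor by which G must rise to offset running `slowdown` times longer.

    From G1 * (t/L)**w == G2 * (slowdown * t / L)**w it follows that
    G2 / G1 == slowdown ** (-w); L cancels out of the comparison.
    """
    if slowdown <= 0:
        raise ValueError("slowdown must be positive")
    return slowdown ** (-w)


for w in (0.0, -0.07, -0.3):
    gains = [round(break_even_gain(k, w), 3) for k in (2.0, 4.0, 10.0)]
    print(f"w={w:+.2f}: required score gain for 2x/4x/10x slower = {gains}")
```

A larger |w| demands a bigger score gain to justify extra runtime, tilting the search toward speed. At w = 0 the reward ignores latency entirely.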

Implications and Future Directions

MARS presents evidence that repository-level modularity combined with resource-aware planning and sophisticated reflective memory mechanisms can close the gap between automated software maintenance and scientific research engineering. The approach provides a template for scaling autonomous agent frameworks to even broader classes of scientific discovery, with direct implications for AI-powered research automation, code synthesis for novel algorithms, and experimental design under resource constraints.

Future research may focus on extending modular agent architectures to domains beyond machine learning, incorporating richer forms of context caching and external knowledge, and integrating early stopping protocols to further optimize economic viability.

Conclusion

MARS systematically addresses the unique challenges of automated AI research by integrating budget-sensitive search, modular architecture, and advanced memory for lesson distillation and credit assignment. Comprehensive empirical evaluation establishes its superiority in resource-constrained long-horizon learning scenarios, while its methodological contributions set the stage for further advances in agentic scientific discovery (2602.02660).