- The paper presents the first formalization of statistical learning theory in Lean 4, creating machine-checkable proofs and reusable libraries.

- It formalizes the Efron-Stein and Gaussian Poincaré inequalities together with Dudley's entropy integral, the analytic tools used to rigorously control empirical processes.

- The work demonstrates a human–AI collaborative proof strategy and applies the toolbox to establish minimax-optimal learning rates in least squares regression.

Motivation and Context

The paper presents the first comprehensive formalization of statistical learning theory (SLT) in Lean 4, focusing on empirical process theory as a foundation. As the complexity of modern machine learning models—and their theoretical analysis—has increased, so too has the challenge of handling advanced mathematical arguments and ensuring their correctness. Traditional approaches in SLT rely on implicit assumptions and condensed reasoning, making formal verification and reuse difficult. By encoding SLT in Lean 4, this work seeks to provide not only machine-checkable proofs and reusable libraries, but also to expose and resolve the subtle technical gaps that frequently occur in textbook-level theory.

Scope and Main Contributions

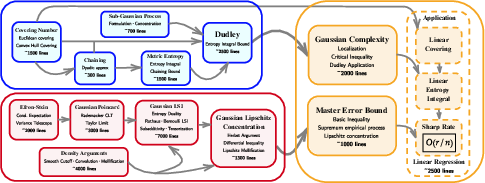

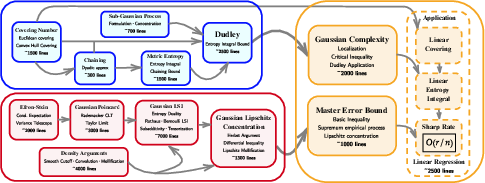

This project develops a complete Lean 4 formalization pipeline encompassing the full analytic machinery required for modern SLT, implementing several foundational results and techniques previously absent from the Lean 4 mathematical library. The core contributions are:

- A reusable formal toolbox for high-dimensional Gaussian analysis, including Efron-Stein's inequality, Gaussian Poincaré inequality, density arguments for extending regularity classes, subadditivity of entropy via tensorization, and the full Gaussian logarithmic Sobolev inequality (LSI).

- The first formalization of Dudley's entropy integral theorem for sub-Gaussian processes, providing a rigorous, end-to-end chaining argument connecting metric entropy with expected suprema of processes.

- A unified application to least-squares regression and its high-dimensional variants, using the newly formalized tools to obtain minimax-optimal learning rates for both ordinary and ℓ1-constrained linear regression.

- A human–AI collaborative paradigm for Lean formalization, in which human researchers design the proof strategies and decompose tasks, and AI agents (notably Claude Code and Opus-4.5) contribute proof tactics, with all code validated line-by-line without placeholders or unchecked axioms.

- A codebase of roughly 30,000 lines of Lean 4, a substantial increase in the scale and depth of formalized learning theory.

Figure 1: The dependency graph of the project's formalizations, highlighting that all nodes represent new additions to Lean 4.

High-Dimensional Gaussian Analysis

The authors systematically formalize the backbone inequalities for Gaussian analysis, starting from measure-theoretic probability and pushing through advanced results:

- Efron-Stein's Inequality is stated in full generality, with a Lean-native formulation of conditional expectation (condExpExceptCoord) for coordinatewise independence. This required developing new measure-theoretic machinery for handling conditional independence and iterated expectations in multivariate distributions.

- Gaussian Poincaré Inequality is established for compactly supported smooth functions and extended to general differentiable functions through density arguments. This involved careful handling of convergence (using mollifiers and the density of smooth functions in Sobolev spaces) and the formalization of functional-analytic facts such as the density of C_c^∞ in the Gaussian Sobolev space.

- Tensorization (Subadditivity of Entropy) and Gaussian LSI are proven in the full vector-valued, unbounded setting, using telescoping sums, entropy duality formulas, and dimension-free arguments. The approach mirrors the treatment of Boucheron, Lugosi, and Massart and makes its steps explicit.

- Gaussian Lipschitz Concentration is derived via the Herbst argument. By tracking regularity throughout the mollification of Lipschitz functions and integrating through the entropy machinery, the authors obtain the classical Gaussian concentration bound for arbitrary Lipschitz functions on ℝⁿ.
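For reference, the inequalities listed above take the following standard textbook forms. This is a sketch in conventional notation, not a transcription of the paper's Lean statements: the symbols Z, E^{(i)}, Ent, and L are introduced here for illustration, and the exact regularity hypotheses used in the formalization may differ.

```latex
% Setting (assumed notation): X_1, ..., X_n independent, Z = f(X_1, ..., X_n),
% E^{(i)} = conditional expectation given all coordinates except X_i.

% Efron-Stein inequality (Z square-integrable)
\operatorname{Var}(Z) \;\le\; \sum_{i=1}^{n} \mathbb{E}\!\left[\bigl(Z - \mathbb{E}^{(i)} Z\bigr)^{2}\right]

% Gaussian Poincare inequality (X ~ N(0, I_n), f continuously differentiable)
\operatorname{Var}\bigl(f(X)\bigr) \;\le\; \mathbb{E}\bigl[\lVert \nabla f(X) \rVert^{2}\bigr]

% Entropy functional and its subadditivity (tensorization), for Z >= 0
\operatorname{Ent}(Z) = \mathbb{E}[Z \log Z] - \mathbb{E}[Z]\,\log \mathbb{E}[Z],
\qquad
\operatorname{Ent}(Z) \;\le\; \sum_{i=1}^{n} \mathbb{E}\bigl[\operatorname{Ent}^{(i)}(Z)\bigr]

% Gaussian logarithmic Sobolev inequality
\operatorname{Ent}\bigl(f(X)^{2}\bigr) \;\le\; 2\,\mathbb{E}\bigl[\lVert \nabla f(X) \rVert^{2}\bigr]

% Gaussian concentration for an L-Lipschitz f, for all t >= 0
\mathbb{P}\bigl(f(X) - \mathbb{E} f(X) \ge t\bigr) \;\le\; \exp\!\left(-\frac{t^{2}}{2L^{2}}\right)
```

The last bound follows from the logarithmic Sobolev inequality by the Herbst argument: applying the LSI to exp(λf/2) gives Ent(exp(λf)) ≤ (λ²L²/2) E[exp(λf)], which integrates to log E[exp(λ(f − E f))] ≤ λ²L²/2, and a Chernoff bound then yields the tail estimate.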

Implementation Challenges

The process revealed structural gaps in both the Lean 4 library and the literature. Formalization requires every measurability, integrability, and topological condition to be checked explicitly, whereas textbook treatments of SLT typically leave them implicit. This surfaces hidden assumptions (e.g., measure rectangle construction, functional analytic density, and pathwise continuity).
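As a small illustration of what "checked explicitly" means in practice, consider linearity of expectation. The sketch below is a generic Mathlib example, not code from the paper's repository; it assumes a project with Mathlib available, and the names Ω, μ, f, and g are placeholders chosen here.

```lean
import Mathlib

open MeasureTheory

-- Linearity of the integral: on paper this is a one-line step,
-- but the Mathlib lemma `integral_add` only applies once both
-- integrability hypotheses `hf` and `hg` are supplied.
example {Ω : Type*} [MeasurableSpace Ω] (μ : Measure Ω) (f g : Ω → ℝ)
    (hf : Integrable f μ) (hg : Integrable g μ) :
    ∫ ω, (f ω + g ω) ∂μ = ∫ ω, f ω ∂μ + ∫ ω, g ω ∂μ :=
  integral_add hf hg
```

Every use of such a step inside a longer argument therefore forces an integrability proof for each summand, which is where unstated textbook assumptions tend to surface.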

Dudley's Entropy Integral

Dudley's theorem—a cornerstone in empirical process theory—links the metric entropy (covering numbers) of a function class to the expected supremum of a sub-Gaussian process indexed by that class. The authors' approach includes:

- Formally defining covering numbers, metric entropy, and the entropy integral with Lean 4's support for both extended non-negative reals and real-valued integrals, handling all edge cases rigorously.

- Rigorous encoding of sub-Gaussian processes via moment generating functions and careful orchestration of telescoping and chaining over dyadic nets.

- Original Lean 4 proof of the chaining argument, with recursive projection yielding sharper constants and a staged limit process extending bounds from finite nets to countable dense subsets and finally to the full set using path continuity.

These technical choices not only replicate but often refine classical arguments by eliminating nonconstructive or imprecise elements and clarifying constant dependencies.
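The objects involved can be written out in conventional notation as follows. This is a sketch of the standard textbook formulation, not the paper's Lean statement: the symbols T, d, π_k, t_0, and the universal constant C are assumptions introduced here, covering centers are taken inside T for simplicity, and the explicit constant obtained in the formalization is not reproduced.

```latex
% Covering number and metric entropy of a totally bounded set (T, d)
N(T, d, \varepsilon) = \min\bigl\{ |S| : S \subseteq T,\ \forall t \in T\ \exists s \in S \text{ with } d(s, t) \le \varepsilon \bigr\},
\qquad
H(\varepsilon) = \log N(T, d, \varepsilon)

% Sub-Gaussian increments, expressed through the moment generating function
\mathbb{E}\exp\bigl(\lambda (X_s - X_t)\bigr) \;\le\; \exp\!\left(\frac{\lambda^{2} d(s, t)^{2}}{2}\right)
\quad \text{for all } s, t \in T,\ \lambda \in \mathbb{R}

% Chaining: pi_k(t) is a nearest point to t in a net at scale 2^{-k} diam(T),
% and pi_0(t) = t_0 is a fixed base point
X_t - X_{t_0} = \sum_{k \ge 1} \bigl( X_{\pi_k(t)} - X_{\pi_{k-1}(t)} \bigr)

% Dudley's entropy integral bound
\mathbb{E}\Bigl[\sup_{t \in T} \bigl(X_t - X_{t_0}\bigr)\Bigr]
\;\le\; C \int_{0}^{\operatorname{diam}(T)} \sqrt{\log N(T, d, \varepsilon)}\; d\varepsilon
```

The telescoping identity holds exactly on a finite net; passing to a countable dense subset and then to all of T is the staged limit described above, and it is where path continuity enters.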

Least Squares Applications

Building upon the formalized machinery, the authors encode both standard and high-dimensional least squares estimation, including:

- Precise formalization of empirical risk minimization (ERM) and prediction error for least squares, within a generic regression framework.

- Implementation of the localization technique for empirical processes, star-shapedness of function classes, and explicit definition of localized Gaussian complexity.

- Modular application of the Dudley and concentration toolboxes to obtain master error bounds with sharp rates for both linear regression in the classical (n≥d) regime and for constrained regression over ℓ1 balls in the high-dimensional (d>n) regime. Covering number estimates in both the ℓ2 and ℓ1 norms are formalized, including probabilistic methods like Maurey's lemma.
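In conventional notation, the ingredients of this section can be sketched as below. These are standard textbook forms stated under assumptions made here (Gaussian noise w_i, fixed design points x_i, column-normalized design for the ℓ1 case); the symbols 𝓕*, G_n, δ_n, and R are illustrative, and the paper's precise hypotheses and constants may differ.

```latex
% Regression model and least-squares estimator (ERM)
y_i = f^{*}(x_i) + \sigma w_i,\quad w_i \overset{\text{i.i.d.}}{\sim} N(0, 1),
\qquad
\hat{f} \in \arg\min_{f \in \mathcal{F}} \frac{1}{n} \sum_{i=1}^{n} \bigl(y_i - f(x_i)\bigr)^{2}

% Empirical norm and shifted class
\lVert g \rVert_n^{2} = \frac{1}{n} \sum_{i=1}^{n} g(x_i)^{2},
\qquad
\mathcal{F}^{*} = \{ f - f^{*} : f \in \mathcal{F} \}

% Localized Gaussian complexity
G_n(\delta; \mathcal{F}^{*}) = \mathbb{E}_{w}\Bigl[ \sup_{g \in \mathcal{F}^{*},\ \lVert g \rVert_n \le \delta}
\Bigl| \frac{1}{n} \sum_{i=1}^{n} w_i\, g(x_i) \Bigr| \Bigr]

% Critical inequality defining the radius delta_n (F^* star-shaped)
\frac{G_n(\delta_n; \mathcal{F}^{*})}{\delta_n} \;\le\; \frac{\delta_n}{2\sigma}
\quad\Longrightarrow\quad
\lVert \hat{f} - f^{*} \rVert_n^{2} \;\lesssim\; \delta_n^{2} \ \text{ with high probability}

% Resulting rates, up to constants
\text{linear regression } (n \ge d):\ \ \delta_n^{2} \asymp \frac{\sigma^{2} d}{n},
\qquad
\ell_1\text{-ball of radius } R\ (d > n):\ \ \delta_n^{2} \asymp \sigma R \sqrt{\frac{\log d}{n}}

% Maurey's empirical method in a Hilbert space: if x lies in the convex hull
% of a set whose points have norm at most R, then for every k >= 1
\exists\, z_1, \dots, z_k \text{ in the set with }
\Bigl\lVert x - \frac{1}{k} \sum_{j=1}^{k} z_j \Bigr\rVert \;\le\; \frac{R}{\sqrt{k}}
```

Star-shapedness is what makes δ ↦ G_n(δ)/δ non-increasing, so the critical inequality has a smallest solution. Maurey's approximation is the probabilistic step behind the ℓ1-ball covering estimate, since it counts how many k-point averages are needed to approximate every element of the ball.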

Implicit Assumptions Exposed

Throughout, the formalization surfaces a range of technical caveats that the literature often leaves unstated, such as:

- Nonemptiness of empirical spheres for suprema.

- Integrability and boundedness-above of supremum random variables.

- Uniformity and pathwise continuity under empirical metrics.

These explicit hypotheses flag potential pitfalls in extending the theory to new settings and can serve as a checklist for rigorous downstream work.
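The first two items in the list above correspond to side conditions that Lean's library enforces on real-valued suprema. The following is a minimal generic sketch using Mathlib lemmas, not code from the paper; it assumes a project with Mathlib available, and the index type ι and family f are placeholders.

```lean
import Mathlib

-- A member of the family is below the supremum only when the family is
-- bounded above; without `hbdd` the real-valued `⨆` carries no such guarantee.
example {ι : Type*} (f : ι → ℝ) (hbdd : BddAbove (Set.range f)) (i : ι) :
    f i ≤ ⨆ j, f j :=
  le_ciSup hbdd i

-- A uniform bound passes to the supremum only when the index type is
-- nonempty: over an empty index the real supremum defaults to 0,
-- so the conclusion would fail for negative `c`.
example {ι : Type*} [Nonempty ι] (f : ι → ℝ) (c : ℝ) (h : ∀ i, f i ≤ c) :
    ⨆ i, f i ≤ c :=
  ciSup_le h
```

In an informal proof both facts are used without comment; in Lean each application requires the corresponding hypothesis to be in scope.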

Human–AI Collaboration

A notable component is the human–AI collaboration model: humans architect the granularity and connectivity of the formalization, while the AI substantially accelerates the tactical completion of proof scripts. Unlike some prior formalization efforts, all code compiles with no unchecked steps. This demonstrates the potential for future rapid scaling of formalized mathematics in complex probabilistic domains.
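One way such a claim can be audited in Lean 4 is with the built-in `#print axioms` command. The snippet below is a generic illustration with a toy theorem named here for the example; it is not the authors' audit procedure, which the summary does not describe.

```lean
-- A finished proof: it contains no `sorry`, so nothing is left unchecked.
theorem two_add_two : 2 + 2 = 4 := rfl

-- Reports every axiom the proof depends on, directly or transitively.
-- A proof that still contained `sorry` would list the axiom `sorryAx` here.
#print axioms two_add_two
```

Running the same command on the top-level theorems of a library gives a quick check that no placeholder or extra axiom has slipped into the dependency chain.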

Implications and Future Prospects

From a practical perspective, the resulting Lean 4 SLT toolbox will serve as a foundation for further machine learning theory formalization, enabling modular reasoning about generalization, empirical processes, and high-dimensional phenomena. The approach not only confers high confidence in correctness but also maximizes reusability and reduces the likelihood of technical oversights in future theoretical work.

Theoretically, this project sets a precedent for deep formalization at the interface of probability theory, empirical process theory, and high-dimensional statistics. The exposure and resolution of implicit assumptions—especially in complex measure-theoretic and analytic settings—signal a maturing field ready for large-scale automation and scalable verification.

For future AI developments, this work opens several avenues:

- Integration of these verified SLT results into automated assistants for theorem discovery or research validation.

- Expansion towards deep learning theory, reinforcement learning, or non-Euclidean statistical settings using similar methodology.

- Extension of the Lean library for broader classes of stochastic processes, complexity measures, and advanced functional inequalities.

Conclusion

This paper presents a milestone in the formalization of learning theory, providing both a high-quality, reusable codebase and a clarifying dissection of SLT’s mathematical underpinnings. The comprehensive human–AI formalization in Lean 4 addresses critical gaps in theory verification, pushes the boundaries of what is currently possible in formal mathematics, and lays the groundwork for the next phase of rigor and automation in machine learning research.