- The paper proposes a novel in-situ benchmarking protocol that extracts physical and logical Pauli error rates directly from syndrome data in Clifford circuits.

- It leverages mappings to subsystem and spacetime codes, achieving sample complexity that scales polynomially, in contrast to the exponential cost of direct logical measurements.

- Numerical simulations and experimental results validate the method, offering rapid gate calibration, error drift detection, and enhanced decoder design.

In-situ Benchmarking of Fault-Tolerant Quantum Circuits. I. Clifford Circuits

Introduction and Problem Statement

Fault-tolerant quantum computation (FTQC) hinges on quantum error correction (QEC), which in turn requires robust characterization of both physical device noise and logical error channels. Standard approaches typically benchmark the device before the experiment to extract a physical error model; however, such models often miss context-specific, circuit-level effects that arise in the deployed computation and its post-processing. The central contribution of "In-situ benchmarking of fault-tolerant quantum circuits. I. Clifford circuits" (2601.21472) is a set of scalable algorithms for in-situ noise learning from the syndrome data generated naturally during FTQC operation, with a focus on Clifford circuit executions.

The core technical focus is the extraction of physical and logical Pauli error rates directly from syndrome measurements, leveraging mappings from Clifford circuits to subsystem codes via the spacetime code formalism. The authors establish formal learnability criteria, sample complexity guarantees, and scalable numerical optimization protocols. Importantly, their results demonstrate that logical error rates can be learned with sample overhead that is only polynomial in code distance or circuit size, contrasting sharply with direct logical-level measurements that require exponential resources as logical error rates are suppressed.

Pauli Error Model and Scalability

The study adopts the local Pauli error channel formalism, in which the total error channel N_Γ decomposes into overlapping local Pauli channels N_γ, each acting on a subset γ ⊂ [n] of qubits. This convolutional structure (see the interaction between P_γ and the global P in equations (1)-(4)) maps onto a factor graph representation that is amenable to the Walsh-Hadamard (Fourier) transform, which makes inference tractable.
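The role of the Walsh-Hadamard transform can be illustrated on the smallest possible case. The following is a minimal sketch, assuming two single-qubit Pauli channels with made-up probabilities; the helper names (`eigenvalues`, `probabilities`, `compose`) are hypothetical and not taken from the paper. It checks that composing two Pauli channels convolves their probability vectors, while their Pauli eigenvalues simply multiply.

```python
import numpy as np

# Single-qubit Paulis in symplectic (x, z) form: I, X, Y, Z.
PAULIS = [(0, 0), (1, 0), (1, 1), (0, 1)]

def symplectic(p, q):
    """Return 0 if Paulis p and q commute, 1 if they anticommute."""
    return (p[0] * q[1] + p[1] * q[0]) % 2

# Walsh-Hadamard matrix: W[q, p] = +1 if p commutes with q, else -1.
W = np.array([[(-1) ** symplectic(p, q) for p in PAULIS] for q in PAULIS])

def eigenvalues(probs):
    """Pauli eigenvalues of a channel from its error probabilities."""
    return W @ np.asarray(probs, dtype=float)

def probabilities(eigs):
    """Inverse transform (W is its own inverse up to a factor of 4)."""
    return W @ np.asarray(eigs, dtype=float) / 4.0

def compose(p1, p2):
    """Probabilities of the channel obtained by applying both channels."""
    out = np.zeros(4)
    for i, a in enumerate(PAULIS):
        for j, b in enumerate(PAULIS):
            c = (a[0] ^ b[0], a[1] ^ b[1])  # Pauli product, phase ignored
            out[PAULIS.index(c)] += p1[i] * p2[j]
    return out

# Illustrative (assumed) error probabilities, ordered as I, X, Y, Z.
p1 = np.array([0.97, 0.01, 0.01, 0.01])
p2 = np.array([0.95, 0.03, 0.00, 0.02])

lam_composed = eigenvalues(compose(p1, p2))
lam_product = eigenvalues(p1) * eigenvalues(p2)
print(np.allclose(lam_composed, lam_product))
```

The same multiplicative structure is what makes a product of many overlapping local channels tractable in the transformed picture, although the multi-qubit factor graph treatment in the paper is more general than this single-qubit sketch.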

The scalability of the learning protocol depends critically on both the locality and the sparsity of the error model: error estimation remains efficient (that is, with polynomial classical runtime and sample complexity) when the channel supports and overlap counts r_Γ and c_Γ are bounded by constants, as in realistic hardware implementations of surface codes, the Steane code, and qLDPC stabilizer families.

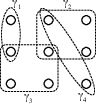

Figure 1: An example of the total Pauli error channel N as a composition of local Pauli channels, showing the convolutional factor graph structure.

Syndrome-based Noise Learning: Theoretical Foundations

The algorithmic foundation for noise inference is the commutation structure between Pauli errors and the measured stabilizer subgroup M ≤ P_n. The syndrome expectations Λ(M) = E[syndrome outcomes] are related to the Pauli eigenvalues via Walsh-Hadamard transforms, so the noise model can be parameterized in terms of observed syndrome statistics.
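A toy instance of this relation can be written down for a three-qubit repetition code with independent bit flips. The sketch below is an illustration under stated assumptions, not the paper's algorithm: the noise model, the rates in `p_true`, the matrix `H`, and the helper names are all hypothetical. Each ±1 stabilizer expectation equals the product of (1 - 2p_q) over the qubits q whose X error anticommutes with that stabilizer, so taking logarithms gives a linear system that can be inverted.

```python
import numpy as np

# H[s, q] = 1 if stabilizer s anticommutes with an X error on qubit q.
# Rows: Z1Z2, Z2Z3, and their product Z1Z3 (all in the stabilizer group).
H = np.array([[1, 1, 0],
              [0, 1, 1],
              [1, 0, 1]])

def exact_expectations(p):
    """Exact +/-1 expectation of each stabilizer for bit-flip rates p."""
    lam = 1.0 - 2.0 * np.asarray(p, dtype=float)
    return np.array([np.prod(lam[row == 1]) for row in H])

def sample_expectations(p, shots, rng):
    """Monte Carlo estimate of the same expectations from sampled syndromes."""
    errors = rng.random((shots, len(p))) < np.asarray(p)
    syndromes = (errors.astype(int) @ H.T) % 2
    return (1 - 2 * syndromes).mean(axis=0)

def invert(expectations):
    """Solve -log E = H @ (-log(1 - 2p)) for the bit-flip rates p."""
    theta = np.linalg.solve(H.astype(float), -np.log(expectations))
    return (1.0 - np.exp(-theta)) / 2.0

p_true = np.array([0.02, 0.05, 0.03])   # assumed rates, for illustration only
rng = np.random.default_rng(0)
p_est = invert(sample_expectations(p_true, shots=200_000, rng=rng))
print(p_est)
```

In this example the three single-qubit errors produce three distinct, nonzero syndromes, which is why the linear system is invertible. The inversion requires every sampled expectation to be positive, which holds here because the assumed error rates are small.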

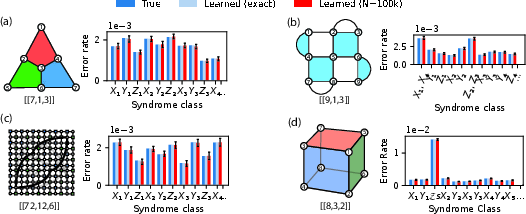

The central theoretical result provides necessary and sufficient conditions for physical and logical error learnability: the noise is reconstructible from syndrome data if and only if each error class produces a unique, nontrivial syndrome signature (Theorem 1). When the learnability conditions fail, typically in codes with low-weight logical operators or boundaries, the protocol still enables estimation of aggregate error rates within syndrome equivalence classes, with rigorous control of second-order corrections.

Figure 2: Learned syndrome class total error rates for several codes, comparing exact and sampled expectation values for Steane, rotated surface, bivariate bicycle, and 3D color codes.

The matrices A(M) and D(M) encode the syndrome-to-parameter mappings; efficient subset selection algorithms (see appendix) reduce the exponentially large space of syndrome expectations to a minimal, non-redundant set of stabilizers, ensuring tractable optimization.

Sample Complexity and Scaling Results

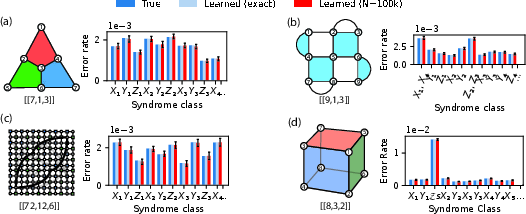

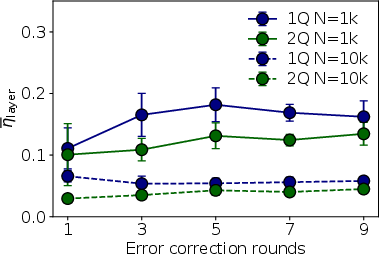

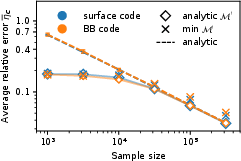

For local sparse noise on qLDPC codes, the learning precision for syndrome class error rates P_C approaches the additive shot noise limit, independent of code size (Theorem 2). Recursive Gaussian elimination yields analytical solutions for detector error rates; however, these solutions risk overfitting in low-sample regimes, where gradient-based optimization with early stopping is shown to perform better in practice.

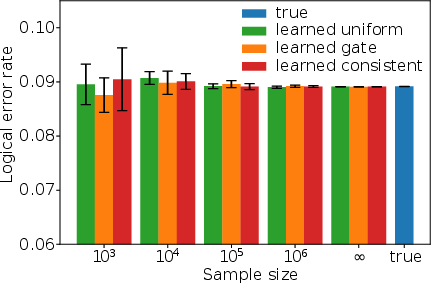

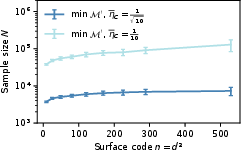

The numerical simulations (Figure 3) validate a near-constant sample size requirement for a given target precision on physical error rates across increasing code distances and syndrome extraction rounds, which is crucial for the scalability of FTQC calibration and logical verification.

Figure 3: Relative error of learned single-qubit error rates vs sample size, illustrating sample efficiency as code size increases and under optimization vs analytic solutions.

Circuit-level Generalization: Clifford Circuits and Spacetime Codes

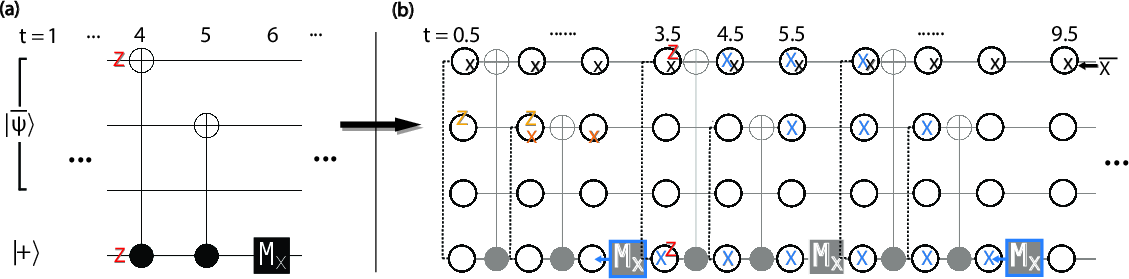

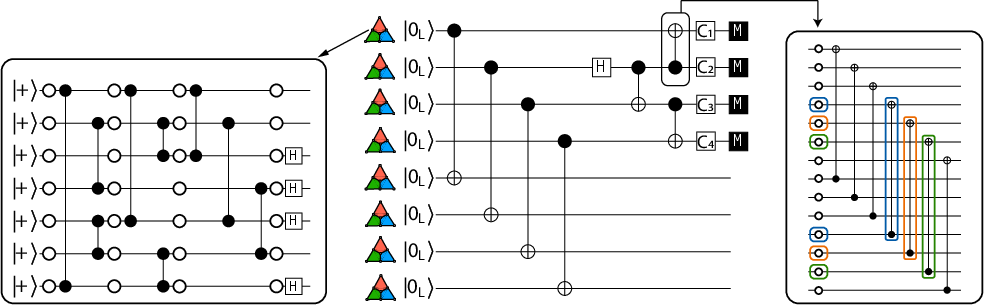

Extension to arbitrary fault-tolerant Clifford circuits is achieved through the spacetime code mapping, which maps circuit-level noise to static code noise on an n(T+1)-qubit subsystem code. Circuit operations, including transversal gates, measurements, and syndrome extraction, define spacetime stabilizer parity constraints that serve as detectors in the error model.
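The idea of detectors as parity constraints across time can be sketched for repeated syndrome extraction on a repetition code. This is a simplified, detector-level illustration and not the paper's full spacetime code construction; the functions `run` and `detectors` and the fault labelling are hypothetical. A detector here is the parity of a check outcome in two consecutive rounds, with the first round compared against the noiseless value 0.

```python
import numpy as np

def run(n, rounds, data_flips, meas_flips):
    """Simulate bit-flip faults on an n-qubit repetition code.

    data_flips: set of (t, q), an X flip on data qubit q just before round t.
    meas_flips: set of (t, s), a wrong outcome for check s in round t.
    Returns the (rounds, n - 1) array of measured check outcomes.
    """
    state = np.zeros(n, dtype=int)
    meas = np.zeros((rounds, n - 1), dtype=int)
    for t in range(rounds):
        for (tt, q) in data_flips:
            if tt == t:
                state[q] ^= 1
        meas[t] = state[:-1] ^ state[1:]      # parity of neighbouring qubits
        for (tt, s) in meas_flips:
            if tt == t:
                meas[t, s] ^= 1
    return meas

def detectors(meas):
    """Parity of consecutive rounds; round 0 is compared with outcome 0."""
    return np.vstack([meas[:1], meas[1:] ^ meas[:-1]])

# A data flip on the middle qubit before round 1 of a 3-qubit code.
d_data = detectors(run(3, 3, data_flips={(1, 1)}, meas_flips=set()))
# A single faulty readout of check 0 in round 1.
d_meas = detectors(run(3, 3, data_flips=set(), meas_flips={(1, 0)}))
print(d_data.tolist(), d_meas.tolist())
```

In this sketch a data error triggers a pair of detectors that are adjacent in space within one round, while a measurement error triggers a pair that are adjacent in time on one check. That every fault has a definite detector signature is what allows circuit-level faults to be treated like static errors on a larger code.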

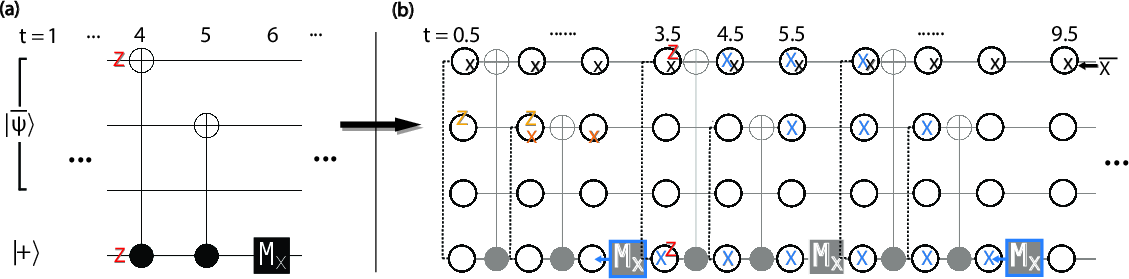

Figure 4: Circuit-to-code mapping via syndrome extraction for the repetition code: conversion of circuit layers into spacetime stabilizer generators.

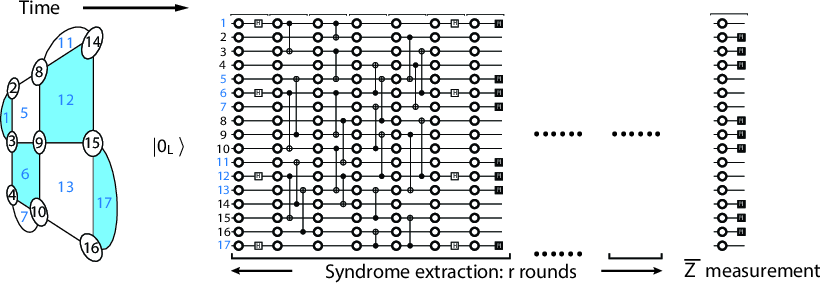

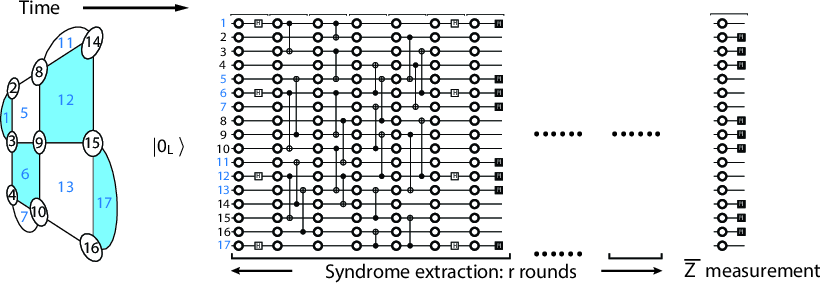

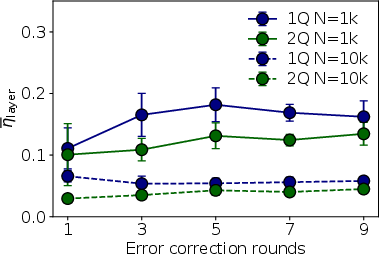

The learning protocol naturally generalizes to tracking temporally inhomogeneous or correlated errors, offering temporal resolution of gate fidelities and error drift. The method is tested on simulated surface code error-correction (EC) circuits and transversal CNOT sequences, including scenarios with time-varying, random-walk gate noise.

Figure 5: Error correction circuit for rotated surface code and corresponding spacetime code; right: layer-wise learning of 1Q and 2Q error rates under temporally varying error models.

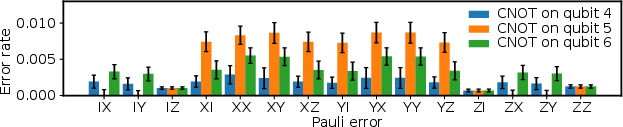

Physical and Logical Noise Benchmarking: Experimental Application

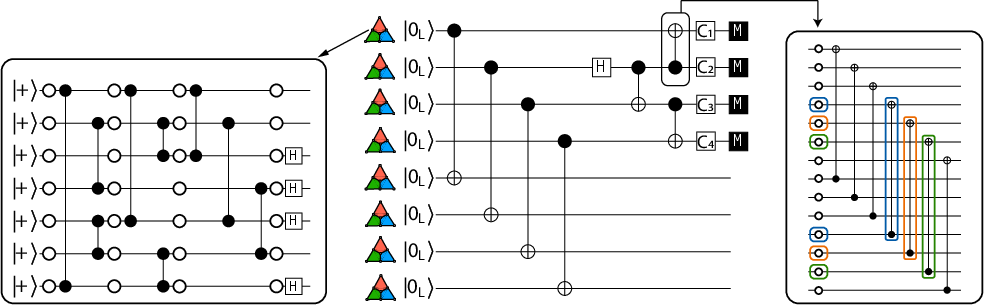

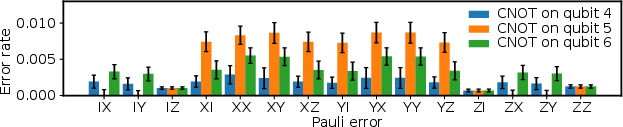

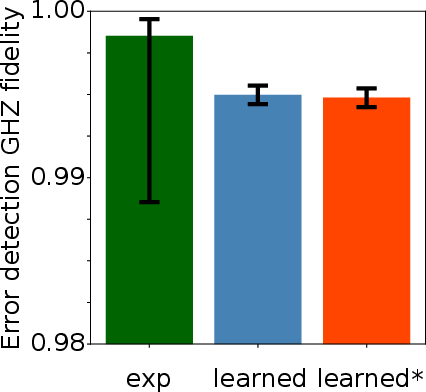

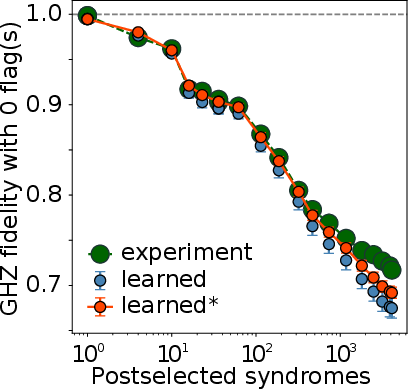

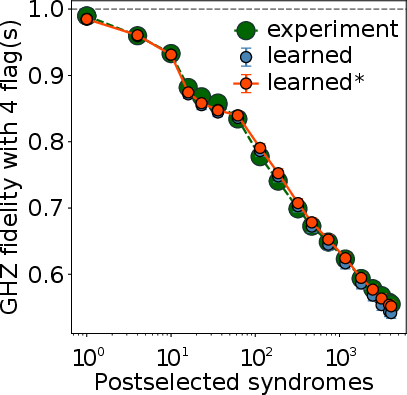

The practical utility of the scheme is demonstrated on experimental data from a four-block logical GHZ state preparation on the Harvard neutral-atom platform [Bluvstein et al., Nature 626]. Using syndrome data only, the authors infer CNOT and measurement error rates whose relative strengths match device calibrations, and observe error rate drift consistent with atom loss. These estimates are robust to partial syndrome data and small sample sizes, aided by intraclass uniformity regularization.

Figure 6: Logical GHZ state preparation circuit schematic and physical error rate estimation for logical measurement circuits.

Logical Error Rates and Fidelity Estimation

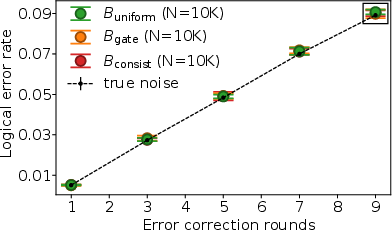

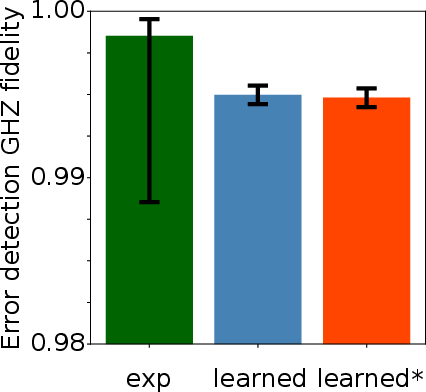

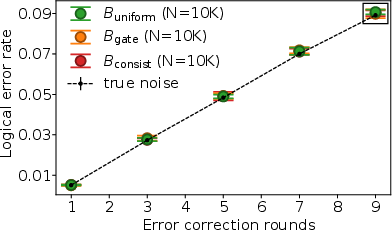

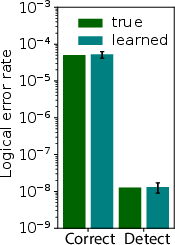

The theoretical development extends noise learnability to logical error rates and logical fidelity, conditional on the fault-tolerance of the Clifford circuit with respect to the assumed Pauli noise (Theorem 3). The protocol enables computation of decoder-independent logical error rates via syndrome-to-logical commutator structures and Monte Carlo simulation. It further permits efficient, syndrome-only logical fidelity estimation for arbitrary logical Pauli measurements, including direct fidelity estimation (DFE) and post-selection protocols.

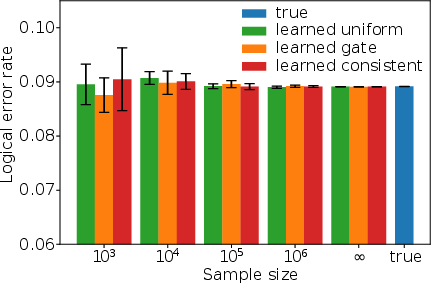

Figure 7: Logical error rate estimation for simulated surface code EC circuits; zoom shows estimator independence from physical error constraints.

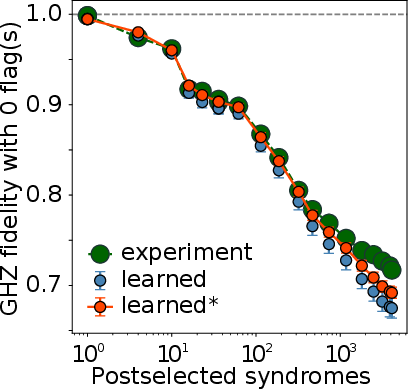

Figure 8: Comparison of logical DFE from experimental measurements and learned models, including sliding-scale postselection by syndrome flags.

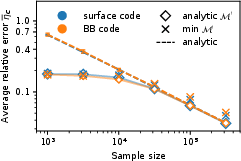

Exponential Sample Complexity Gap and Postselection

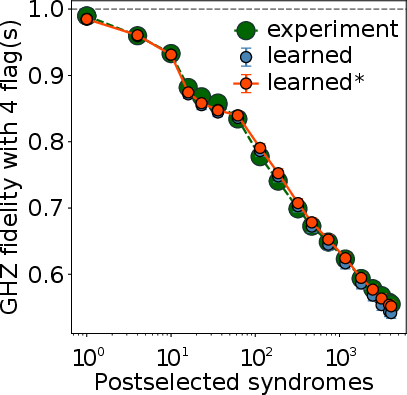

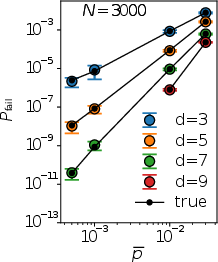

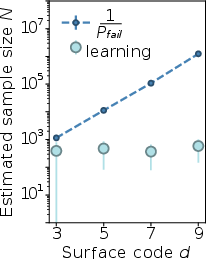

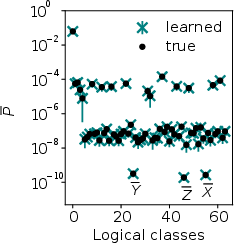

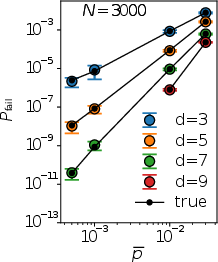

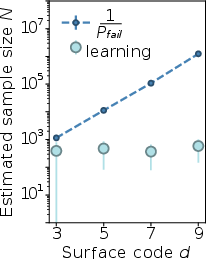

An essential quantitative result is the exponential separation in sample complexity between syndrome-based logical error learning and direct logical measurement. In below-threshold regimes, the sample size needed to learn logical error rates from syndrome data scales polynomially in the code distance d, while direct estimation requires a number of samples proportional to 1/P_fail, which grows exponentially as the logical error rate is suppressed (Theorem 4, Figure 9).
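The cost of direct estimation can be made concrete with a short calculation. The sketch below is a back-of-the-envelope illustration, not a result from the paper: `direct_shots` uses the standard binomial estimate that N shots give a relative standard error of sqrt((1 - P_fail) / (N P_fail)), and `heuristic_p_fail` is an assumed textbook-style scaling with a hypothetical prefactor and ratio p/p_th, included only to generate example values.

```python
import math

def direct_shots(p_fail, rel_precision):
    """Shots needed to estimate p_fail by counting logical failures.

    Solves sqrt((1 - p) / (N * p)) = rel_precision for N (binomial statistics).
    """
    return math.ceil((1.0 - p_fail) / (rel_precision ** 2 * p_fail))

def heuristic_p_fail(d, p_over_pth, prefactor=0.1):
    """ASSUMPTION: illustrative below-threshold scaling, not from the paper."""
    return prefactor * p_over_pth ** ((d + 1) / 2)

# Required shots for 10% relative precision at an assumed p / p_th = 0.1.
table = []
for d in (3, 5, 7, 9):
    p_fail = heuristic_p_fail(d, p_over_pth=0.1)
    table.append((d, p_fail, direct_shots(p_fail, rel_precision=0.1)))

for d, p_fail, shots in table:
    print(f"d={d}  P_fail={p_fail:.1e}  direct shots={shots:.2e}")
```

Under these assumptions the required number of shots grows by roughly a factor of ten each time the distance increases by two, which is the exponential growth that the polynomial scaling of the syndrome-based approach avoids.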

Figure 9: Logical error rates P_fail for rotated surface codes at various sizes, illustrating the scaling and sample size requirements for direct vs syndrome-based estimation.

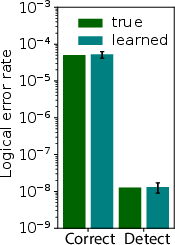

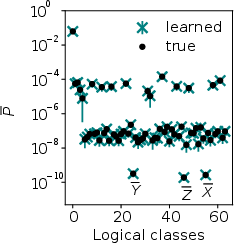

Estimating the probabilities of all logical error classes also supports detailed post-selected logical benchmarking, enabling fine-grained characterization of conditioned logical error rates for error-detection and distillation protocols.

Figure 10: Learned logical class error rates for the five-qubit code, demonstrating multiplicative precision across 64 logical error classes.

Implications, Extensions, and Future Directions

Practical Implications

- In-situ calibration: Gate error benchmarking and logical fidelity estimation require only syndrome data, providing rapid device feedback without special-purpose experiments.

- Decoder improvement: Physical noise models inferred from in-situ syndromes enhance prior distributions for optimal and correlated decoders, directly improving logical algorithm performance.

- Drift detection: Temporal resolution enables routine identification and compensation of non-Markovian and drift phenomena in deployed computation.

Theoretical Implications

- Noise model completeness: Learnability proofs and gauge analysis ensure maximal extraction of physical and logical noise consistent with information content in syndrome data.

- Sample complexity scaling: Rigorous demonstration of polynomial sample requirements sets bounds for feasible error suppression and logical operation verification in large codes.

Prospects and Extensions

The methods shown here pave the way for:

- Verification beyond Clifford circuits: Extension to magic-state injection and non-Clifford circuits will address classically-hard logical algorithms and universal quantum computation, as explored in follow-up work [XiaoWorkInProgress2026].

- General error models: Adaptation to coherent, correlated, and leakage error mechanisms beyond the Pauli framework, including cross-talk and spatially extended noise events.

- Fault-tolerance validation: Systematic syndrome-based validation of fault-tolerance thresholds and error correction principles, particularly under realistic, device-specific noise.

Conclusion

This work presents a rigorous, scalable framework for learning physical and logical Pauli noise channels from the naturally generated syndrome data in fault-tolerant Clifford circuits. By employing subsystem code and spacetime code mappings, the authors develop polynomial sample complexity benchmarks for reconstructing both gate-level and logical error rates, with precise learnability criteria. The experimental and simulation results validate syndromic approaches for in-situ FTQC characterization, gate calibration, logical verification, and enhanced decoding. The insights enable practical deployment of robust benchmarking and verification protocols for scalable quantum computing systems.