- The paper reveals that LLMs improve code summarization and automated testing while also introducing risks like hallucinated outputs.

- It employs a systematic literature review and empirical studies to detail benefits and challenges, such as instability and scalability issues.

- The study proposes remediation strategies, including structured prompting and human-in-the-loop quality assurance, to mitigate LLM-related maintenance issues.

Impact of LLMs on Software Evolvability and Maintainability

Introduction

The paper "A Survey on LLM Impact on Software Evolvability and Maintainability: the Good, the Bad, the Ugly, and the Remedy" (2601.20879) examines how the use of LLMs in software engineering affects software maintainability and evolvability. The studies it covers are organized into thematic areas that address both positive and negative impacts, including methodological challenges, structural weaknesses, and proposed remedies for the identified issues.

Opportunities: Enhancements to Software Engineering Practice

The research identifies several areas where LLMs have improved software engineering practice, most notably code comprehension, productivity, and automated repair. Empirical studies frequently report gains on language-oriented tasks such as summarization, classification, and mapping between natural language and code. The results indicate that LLMs reduce cognitive load and improve task efficiency in activities such as code generation, testing, coverage improvement, and documentation.

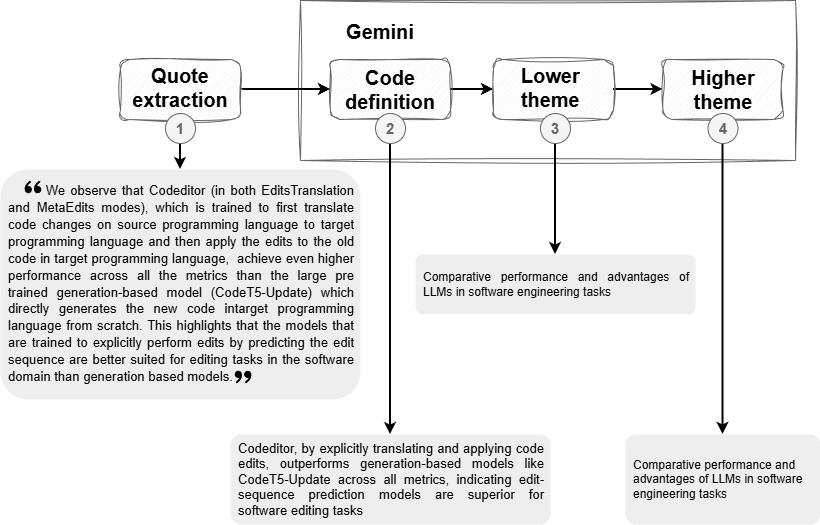

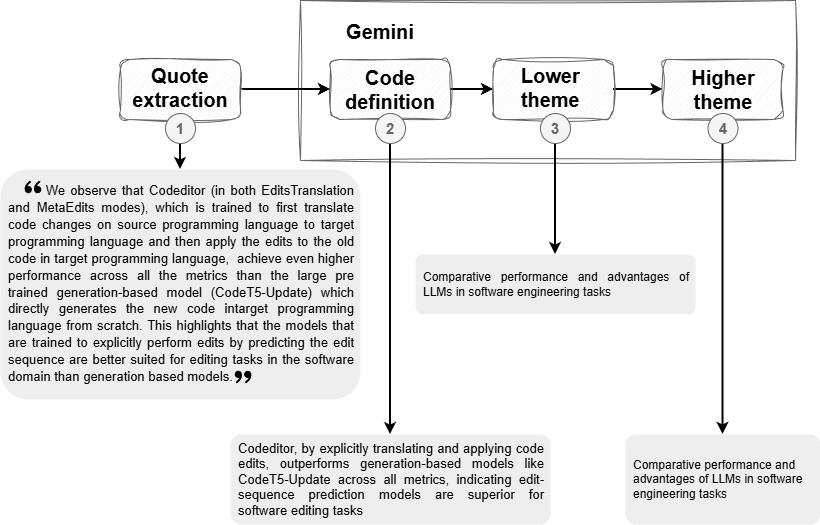

Figure 1: Example of the synthesis pipeline, from manual quote extraction to the codes and themes generated by LLM-ThemeCrafter.

Moreover, with well-chosen prompting techniques, LLMs adapt to tasks across the Software Development Life Cycle (SDLC), with improvements reported in code summarization and automated test generation. Their zero-shot and few-shot capabilities offer a practical advantage: a task can be attempted with no examples or only a handful, without extensive prior training.
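To make the zero-shot versus few-shot distinction concrete, the following is a minimal sketch of how such prompts might be assembled for code summarization. The function name `build_prompt`, the "Code:/Summary:" layout, and the example snippets are illustrative assumptions and are not taken from the paper.

```python
from typing import Sequence, Tuple


def build_prompt(task: str, code: str,
                 examples: Sequence[Tuple[str, str]] = ()) -> str:
    """Assemble a prompt string (hypothetical helper, not from the paper).

    With no examples the result is a zero-shot prompt; with one or more
    (code, summary) pairs it becomes a few-shot prompt.
    """
    parts = [task.strip()]
    # Each worked example is shown before the real query.
    for example_code, example_summary in examples:
        parts.append(
            f"Code:\n{example_code.strip()}\nSummary: {example_summary.strip()}"
        )
    # The final block leaves the summary open for the model to complete.
    parts.append(f"Code:\n{code.strip()}\nSummary:")
    return "\n\n".join(parts)


TASK = "Summarize the function in one sentence."
TARGET = "def add(a, b):\n    return a + b"

zero_shot = build_prompt(TASK, TARGET)
few_shot = build_prompt(
    TASK,
    TARGET,
    examples=[("def neg(x):\n    return -x", "Returns the negation of x.")],
)
```

The only difference between the two prompts is the presence of worked examples; no model weights are updated in either case.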

Risks: Challenges to Long-term Sustainability

Despite these benefits, substantial risks complicate LLM integration into software engineering workflows. The research identifies critical issues such as incorrect or hallucinated outputs and instability across tasks. Methodological pitfalls, such as reliance on outdated or insufficiently diverse datasets and inadequate qualitative validation, can lead to overestimation of LLM capabilities.

Applying LLMs inappropriately or without adequate human oversight can hinder practical deployment, with unpredictable performance and domain-specific errors as typical symptoms. Ethical and security concerns also arise, because biases in training datasets can propagate into model-generated outputs.

Structural Weaknesses: Limitations of Current LLM Technologies

The paper outlines inherent structural weaknesses of LLMs, such as limited semantic understanding and an inability to generalize across diverse domains, which pose significant threats to long-term software evolvability. LLMs frequently produce artifacts that fail to comply with established standards of code integrity and design, and they often need highly specific prompt structures to produce accurate output. This places a substantial burden on developers and reduces the expected labor savings.

Furthermore, scalability and performance issues in LLMs limit their applicability to large-scale industrial software systems, where operational costs, computational constraints, and latency can significantly impact workflow adoption.

Remedies: Strategies for Mitigating Identified Weaknesses

The study proposes several strategic interventions to mitigate the identified weaknesses. These include improving LLM interactions through structured prompting, enhancing contextualization, and incorporating domain-specific knowledge into prompts to stabilize outputs. The study also stresses methodological rigor, advocating robust research practices, better datasets, and standardized evaluation benchmarks.
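As one possible illustration of structured prompting with added context and domain knowledge, the sketch below renders a prompt from fixed, named sections. The class `StructuredPrompt`, its field names, and the sample content are hypothetical and chosen only for this example; the paper does not prescribe a specific template.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class StructuredPrompt:
    """Hypothetical container for the fixed sections of a prompt."""
    role: str
    task: str
    context: str
    domain_notes: List[str] = field(default_factory=list)
    output_format: str = "Plain text."

    def render(self) -> str:
        # Domain knowledge is listed as bullets so it is easy to audit.
        notes = "\n".join(f"- {note}" for note in self.domain_notes)
        sections = [
            ("Role", self.role),
            ("Task", self.task),
            ("Context", self.context),
            ("Domain knowledge", notes or "None provided."),
            ("Output format", self.output_format),
        ]
        # Every prompt has the same section order, which keeps inputs uniform.
        return "\n\n".join(f"## {title}\n{body}" for title, body in sections)


prompt = StructuredPrompt(
    role="You are a maintainer of a billing service.",
    task="Refactor the function below without changing its behavior.",
    context="def total(items):\n    return sum(i.price for i in items)",
    domain_notes=["Amounts are stored in cents.", "Public names must not change."],
    output_format="A single Python code block.",
)
rendered = prompt.render()
```

Keeping the sections fixed means that two requests differ only in their content, not in their layout, which is one plausible way to reduce variation in outputs.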

Human-driven post-generation quality assurance is proposed as critical to ensuring reliability, with automated checking, static analysis, and human-in-the-loop validation all playing pivotal roles in maintaining quality standards.
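A post-generation quality gate of this kind could look roughly like the sketch below, which runs a few static checks on generated Python code using the standard `ast` module and routes anything suspicious to a human reviewer. The specific checks, the three status values, and the names `ReviewResult` and `check_generated_code` are assumptions made for illustration, not a procedure described in the paper.

```python
import ast
from dataclasses import dataclass, field
from typing import List


@dataclass
class ReviewResult:
    status: str  # "accept", "human_review", or "reject" (assumed policy)
    findings: List[str] = field(default_factory=list)


def check_generated_code(source: str) -> ReviewResult:
    """Run simple static checks on LLM-generated Python source."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        # Code that does not parse is rejected without human effort.
        return ReviewResult("reject", [f"syntax error: {exc.msg}"])

    findings: List[str] = []
    for node in ast.walk(tree):
        # Bare "except:" clauses hide errors and hurt maintainability.
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append(f"line {node.lineno}: bare except")
        # Direct eval/exec calls are flagged as a security concern.
        if (isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
                and node.func.id in {"eval", "exec"}):
            findings.append(f"line {node.lineno}: call to {node.func.id}")
        # Undocumented functions are flagged for a reviewer to judge.
        if (isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
                and ast.get_docstring(node) is None):
            findings.append(
                f"line {node.lineno}: function '{node.name}' has no docstring"
            )

    # Any finding sends the code to a human instead of auto-accepting it.
    return ReviewResult("human_review" if findings else "accept", findings)


clean = 'def add(a, b):\n    """Add two numbers."""\n    return a + b\n'
risky = "def run(s):\n    return eval(s)\n"
clean_result = check_generated_code(clean)
risky_result = check_generated_code(risky)
```

In practice a team would likely replace these toy checks with an established linter or analyzer and might require human review even for code that passes; the point here is only the routing between automated checks and human validation.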

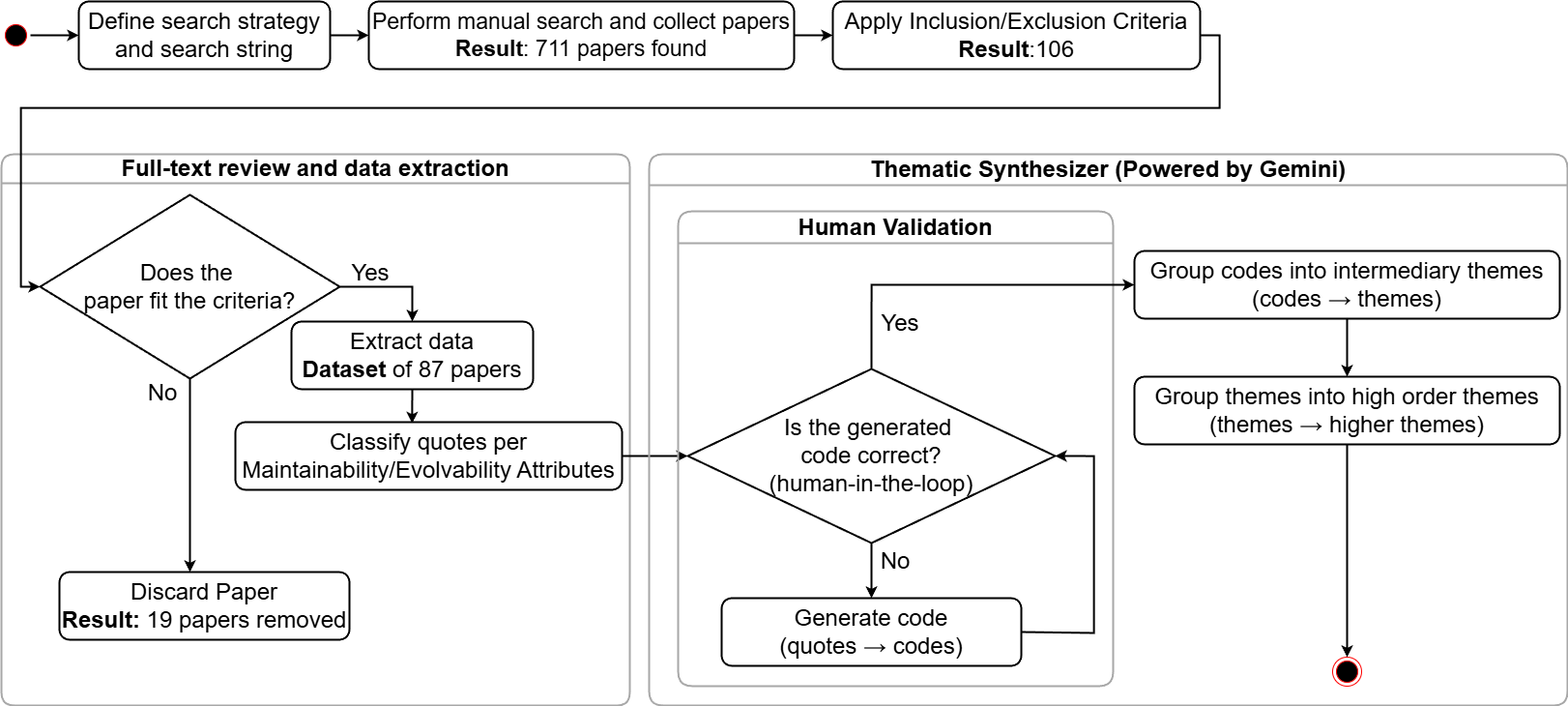

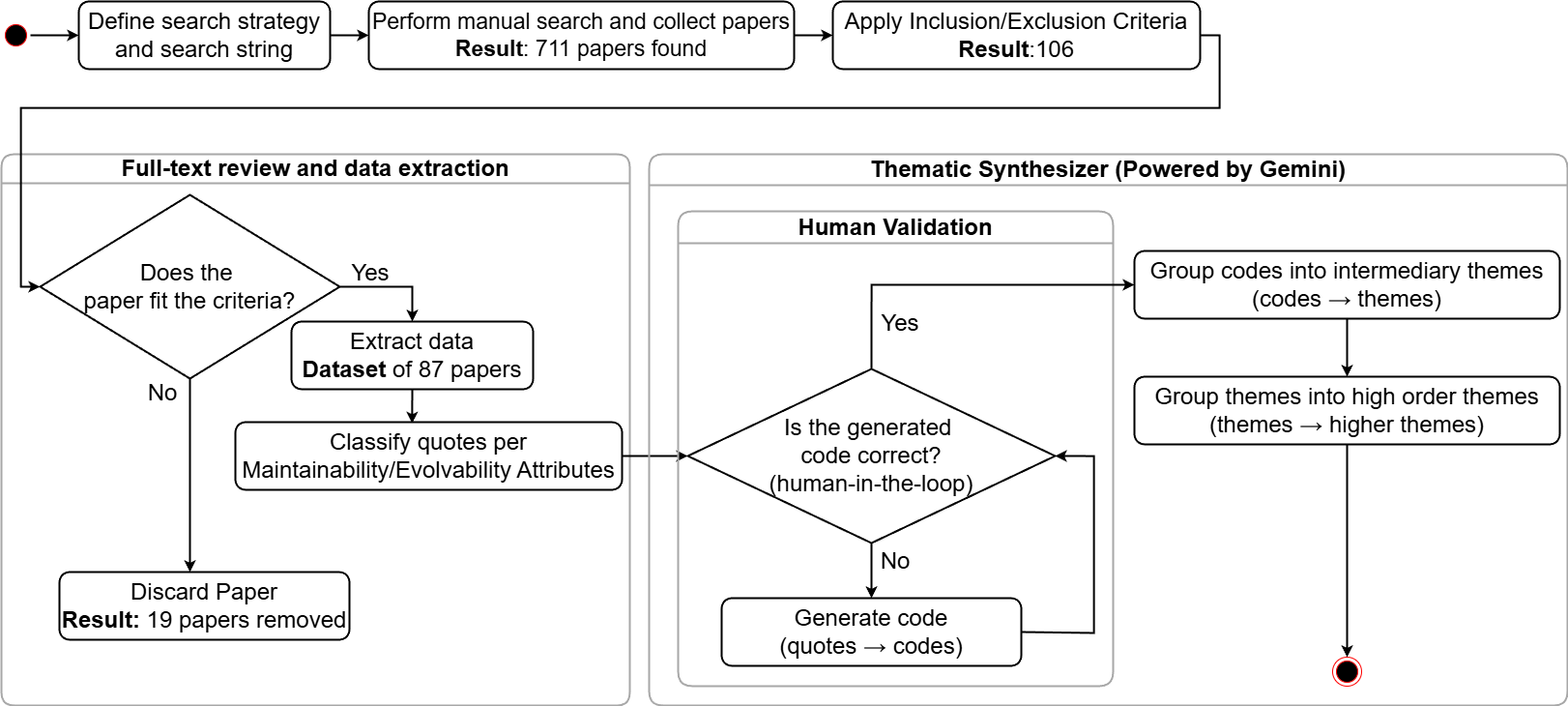

Figure 2: The systematic literature review workflow followed in the study.

Conclusion

This paper provides a comprehensive examination of LLM impacts on software maintainability and evolvability. It shows how LLMs can augment software engineering tasks while detailing significant risks that call for deliberate management strategies. As LLM technologies advance and integrate further into software development workflows, understanding these dimensions becomes increasingly important for sustainable software engineering. Effective mitigation through methodological rigor, systematic human oversight, and automated validation mechanisms is indispensable to harnessing the full potential of LLMs in software evolution.