LuxRemix: Lighting Decomposition and Remixing for Indoor Scenes

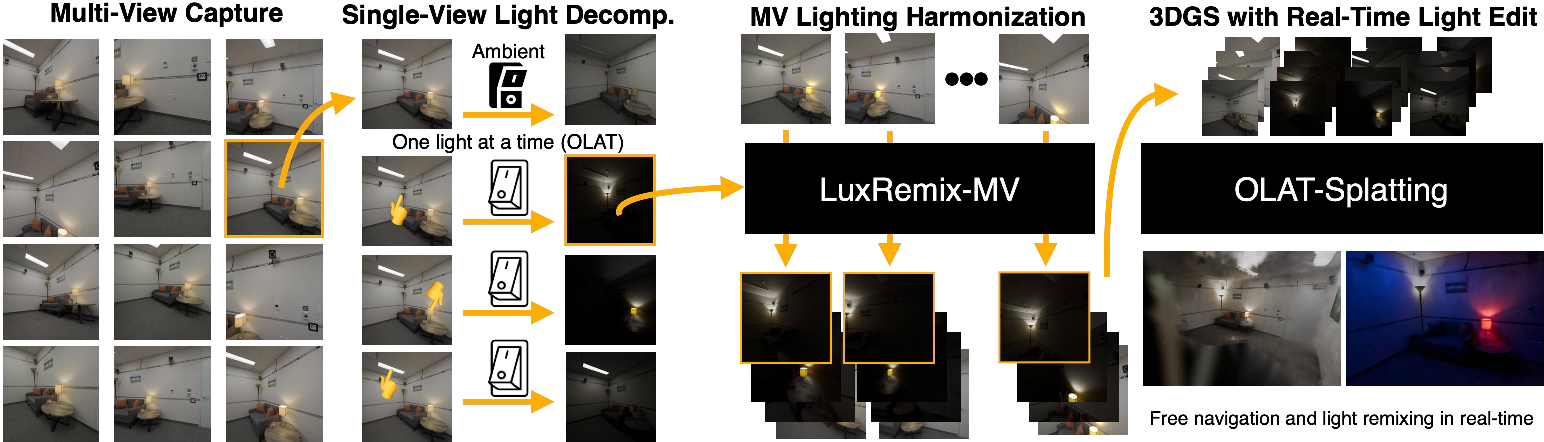

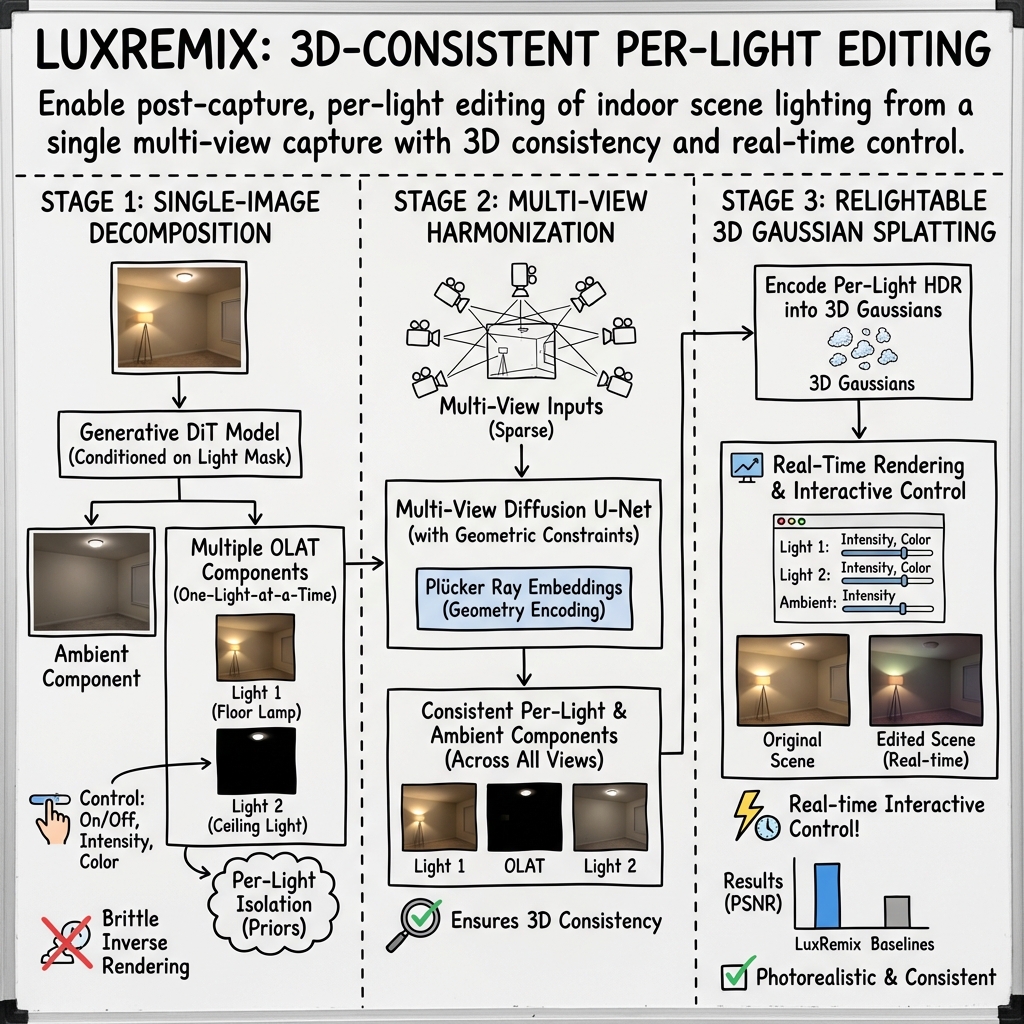

Abstract: We present a novel approach for interactive light editing in indoor scenes from a single multi-view scene capture. Our method leverages a generative image-based light decomposition model that factorizes complex indoor scene illumination into its constituent light sources. This factorization enables independent manipulation of individual light sources, specifically allowing control over their state (on/off), chromaticity, and intensity. We further introduce multi-view lighting harmonization to ensure consistent propagation of the lighting decomposition across all scene views. This is integrated into a relightable 3D Gaussian splatting representation, providing real-time interactive control over the individual light sources. Our results demonstrate highly photorealistic lighting decomposition and relighting outcomes across diverse indoor scenes. We evaluate our method on both synthetic and real-world datasets and provide a quantitative and qualitative comparison to state-of-the-art techniques. For video results and interactive demos, see https://luxremix.github.io.

Explain it Like I'm 14

LuxRemix: Lighting Decomposition and Remixing for Indoor Scenes — A Simple Explanation

Simple overview

LuxRemix is a system that lets you change the lighting in a room after you’ve already taken the photos from different angles. Think of it like having a set of light switches for each lamp in a photo. You can turn individual lights on or off, make them brighter or dimmer, or even change their color — and it still looks realistic from every camera view.

What were the goals?

The paper set out to answer three questions:

- Can we split the lighting in a photo into separate “tracks,” one per light, plus the background glow?

- Can we keep that split consistent across many photos of the same room taken from different angles?

- Can we make a fast 3D version so you can relight the scene interactively in real time?

How did they do it?

The method works in three main stages. You can think of it like music production:

- First, isolate each “instrument” (each light).

- Second, make sure all microphones (camera views) agree on what they hear from each instrument.

- Third, build a fast mixer you can play live in 3D.

Building training data in a virtual world

To teach the system how lights affect a room, the team generated about 12,000 virtual indoor scenes (living rooms, kitchens, etc.) and added up to six controllable lights per scene. They rendered:

- All lights on,

- One-light-at-a-time (OLAT) views — like turning on just one lamp to see what it contributes,

- Ambient lighting — the general glow that’s not tied to a single lamp.

This “virtual photo studio” gives perfect examples of how each light changes the scene, which is hard to get in the real world.

Key terms made simple:

- OLAT (One-Light-At-a-Time): Imagine turning on only one lamp in the room to see exactly what it lights up — no other lights allowed.

- Ambient lighting: The soft, overall brightness in a room that isn’t from a single lamp, like daylight leaking through curtains or far-away lights.
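The three render types are tied together by the linearity of light transport, which the later remixing step relies on. As a rough sketch, the all-lights-on render and an edited render can be written as below. The symbols $I_{\text{full}}$, $I_{\text{edit}}$, $A$, $O_i$, $s_i$, and $\mathbf{c}_i$ are notation introduced here for illustration, not taken from the paper.

```latex
I_{\text{full}} = A + \sum_{i=1}^{N} O_i,
\qquad
I_{\text{edit}} = A + \sum_{i=1}^{N} s_i \, \mathbf{c}_i \odot O_i
```

Here $A$ is the ambient layer, $O_i$ is the OLAT layer of light $i$, $N$ is the number of controllable lights (at most six in the synthetic training scenes), $s_i \ge 0$ is an intensity scale ($s_i = 0$ switches the light off), $\mathbf{c}_i$ is an RGB tint, and $\odot$ is per-channel multiplication. The relation is expected to hold for linear HDR values, not for tone-mapped pixel values.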

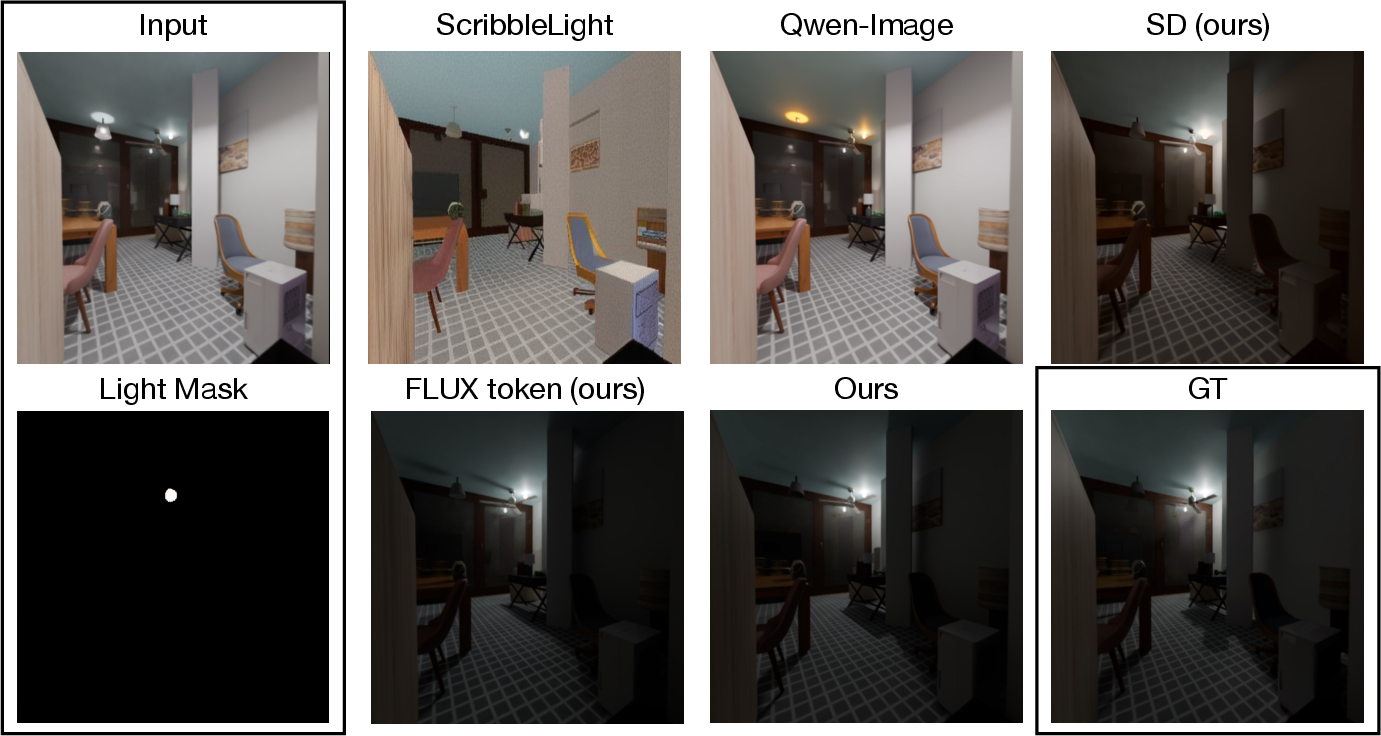

Step 1 — Split the light in a single photo

They fine-tuned a powerful image-editing AI (a diffusion model — think of it as a very smart, learned photo editor) to take one picture and separate it into:

- The ambient layer (overall glow),

- A set of OLAT layers (one per light).

To tell the AI which lamp to isolate, they give it a simple mask (a highlighted area) over the lamp fixture. The model is trained with prompts like “switch off all lights except the selected one,” which helps it produce the OLAT version for that lamp. They also guide it to handle different brightness levels so it understands both dim and very bright light.

In short: one photo in, multiple “light tracks” out.

Step 2 — Make all camera views agree

Now imagine you have many photos of the same room from different angles. If you split lighting in each photo separately, the results might not match across views. So they add a “harmonization” step: the system uses geometry-aware cues to spread the lighting split from some views to all views consistently.

Simple idea: the system learns how light and surfaces line up in 3D, so the “one-lamp” effect stays the same no matter which camera you look through. They also create HDR results by blending several exposures (like taking bright, medium, and dark versions and combining them) so shiny spots and dark corners both look right.

Key terms made simple:

- Multi-view consistency: All the different camera angles agree on where the light falls.

- HDR (High Dynamic Range): Keeps detail in both very bright and very dark areas by combining different exposures.
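The exposure-blending idea can be illustrated with a small NumPy sketch. It assumes three linear (non-gamma) images at EV0, EV-2, and EV-4 and merges them with a triangle weighting that distrusts clipped pixels. The weighting scheme, the function names `merge_brackets` and `simulate_bracket`, and the toy camera model are assumptions made for illustration; the summary does not describe how the paper merges its brackets.

```python
import numpy as np

def merge_brackets(images, evs, eps=1e-6):
    """Merge linear LDR exposures into one HDR radiance estimate.

    images: list of float arrays in [0, 1], assumed linear (no gamma).
    evs:    exposure offsets in stops, e.g. [0, -2, -4].
    """
    numerator = 0.0
    denominator = 0.0
    for img, ev in zip(images, evs):
        img = np.asarray(img, dtype=np.float64)
        # Triangle ("hat") weight: trust mid-tones, distrust clipped or near-black pixels.
        weight = np.clip(1.0 - np.abs(2.0 * img - 1.0), eps, 1.0)
        # Undo the exposure scaling so every bracket estimates the same radiance.
        radiance = img / (2.0 ** ev)
        numerator = numerator + weight * radiance
        denominator = denominator + weight
    return numerator / denominator


def simulate_bracket(radiance, ev):
    """Hypothetical camera model: scale by 2**ev, then clip to the LDR range."""
    scaled = np.asarray(radiance, dtype=np.float64) * (2.0 ** ev)
    return np.clip(scaled, 0.0, 1.0)


# Toy scene whose brightest values exceed what a single exposure can hold.
scene = np.array([0.1, 0.5, 3.0, 10.0])
evs = [0, -2, -4]
brackets = [simulate_bracket(scene, ev) for ev in evs]
hdr = merge_brackets(brackets, evs)
```

In this toy case the two brightest values clip at EV0, yet the darker brackets still record them, so the merged estimate recovers the full range.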

Step 3 — Make it work in 3D, in real time

They store the scene using “3D Gaussian splatting,” which you can picture as filling the room with thousands of tiny, soft glowing dots that together form the surfaces. Each dot stores how much each light contributes. When you render the scene, you “mix” the per-light layers based on your chosen settings (on/off, brightness, color) and get instant results from any viewpoint.

Key term made simple:

- 3D Gaussian splatting: A fast way to draw 3D scenes using many small, fuzzy blobs that are easy to render quickly and look smooth.
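The "mixing" step can be sketched as a weighted sum of per-light layers. The example below works on image-space layers for simplicity; in the 3D representation the same kind of weighted sum would presumably be applied to the per-light contributions stored on each Gaussian. The function name `remix`, its parameters, and the array layout are assumptions for illustration, not the paper's interface.

```python
import numpy as np

def remix(ambient, olat_layers, gains, tints=None, ambient_gain=1.0):
    """Recombine per-light layers into one linear HDR image.

    ambient:     (H, W, 3) ambient layer.
    olat_layers: (N, H, W, 3) one layer per light.
    gains:       length-N intensity scales; 0.0 switches a light off.
    tints:       optional (N, 3) RGB multipliers; defaults to no color change.
    """
    ambient = np.asarray(ambient, dtype=np.float64)
    olat_layers = np.asarray(olat_layers, dtype=np.float64)
    gains = np.asarray(gains, dtype=np.float64)
    if tints is None:
        tints = np.ones((len(gains), 3))
    tints = np.asarray(tints, dtype=np.float64)
    # Per-light RGB weight = gain * tint, broadcast over all pixels.
    weights = (gains[:, None] * tints)[:, None, None, :]
    return ambient_gain * ambient + (weights * olat_layers).sum(axis=0)


# Random stand-in layers for a scene with two lights.
rng = np.random.default_rng(0)
ambient = rng.random((4, 4, 3))
olats = rng.random((2, 4, 4, 3))

all_on = remix(ambient, olats, gains=[1.0, 1.0])
edited = remix(ambient, olats, gains=[0.0, 1.5],
               tints=[[1.0, 1.0, 1.0], [1.0, 0.8, 0.6]])
```

The second call switches the first lamp off and makes the second one brighter and warmer. Because the edit is only a reweighting of stored layers, it is cheap enough to run at interactive rates.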

What did they find and why it matters?

Main results:

- The system can convincingly separate a room’s lighting into per-light layers from a few photos.

- It keeps those layers consistent across many camera views.

- It runs in real time in 3D, so you can interactively relight the scene — turn lamps on/off, adjust colors, move the camera — and it responds immediately.

- In tests, it beat other image-editing baselines at isolating individual lights and stayed more consistent across views.

Why this is important:

- Photographers, filmmakers, and game or VR creators can fix or experiment with lighting after shooting — no need to physically re-light the scene.

- It opens creative possibilities: try a warm evening look, a cool daylight look, or a dramatic spotlight, all from the same capture.

- It saves time and money in virtual production and visual effects.

What could this change in the real world?

- Faster, more flexible photo and video workflows: shoot once, relight later.

- Better pre-visualization for film and TV: try many lighting plans before building sets.

- More immersive, adaptive lighting in VR/AR: scenes that respond to user choices in real time.

A few limits to keep in mind

- It’s trained on synthetic (computer-generated) indoor scenes, so it may struggle with outdoor scenes or moving objects.

- It currently focuses on typical indoor lamps; very unusual lighting may be harder.

- It doesn’t yet handle far-away, all-around light sources (like full environmental HDR skies) as a controllable input.

Overall, LuxRemix shows a practical path to “remixing” real-world indoor lighting with per-light control, multi-view consistency, and real-time 3D performance — bringing the flexibility of a music mixer to the world of scene lighting.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following list covers what remains missing, uncertain, or unexplored in the paper, as a guide for future research.

- Real-world quantitative validation: The quantitative evaluation is reported only on synthetic test scenes; there is no quantitative assessment on real multi-view captures with diverse indoor lighting and materials, nor standardized benchmarks for per-light decomposition accuracy in the wild.

- Automatic light-source detection and masking: The single-image model requires light masks; how to robustly detect, segment, and categorize light sources (including occluded or partially visible fixtures, recessed lighting, screens, and windows) in real scenes remains unaddressed.

- Coverage and completeness of per-light sets: The pipeline assumes initial OLAT decompositions exist for one or more views; it is unclear how the method handles lights that are never visible in the seed views, or how to ensure all relevant lights in a scene are discovered and controllable.

- Generalization beyond static indoor scenes: The method is trained solely on synthetic, static indoor scenes; behavior under outdoor illumination, mixed indoor–outdoor scenarios (large windows, skylights), dynamic scenes (moving people/objects/lights), or time-varying lighting is not explored.

- Scalability to many and diverse lights: The training data contains at most six lights per scene; performance and stability in scenes with dozens of heterogeneous lights (area lights, luminous signage, projectors, displays) and complex fixture geometry are unknown.

- Bias toward cone-like/directional lighting: The paper notes decomposition bias toward light cones; accurate handling of broad, diffuse area lights and soft-shadow penumbrae is insufficiently validated.

- HDR and exposure handling: HDR outputs are synthesized via exposure bracketing with a generative model; the fidelity of HDR reconstruction, robustness to clipping/saturation, and consistency under camera auto-exposure and cross-view exposure variation are not quantified.

- Physical plausibility and energy consistency: The generative OLAT decomposition and linear recomposition assume physically linear light transport; there is no analysis of energy conservation, color constancy, or error accumulation when aggressively changing intensities/colors beyond training distributions.

- Spectral effects and color temperature: The approach operates in RGB; how well edited color temperatures reproduce material-dependent spectral responses (metamerism, fluorescence) and realistic white balance across different sensors is unclear.

- View-dependent reflectance and specularities: Per-light contributions stored as per-Gaussian RGB coefficients may be inadequate for strongly view-dependent effects (glossy/specular, anisotropy); the accuracy of highlights, Fresnel effects, and microfacet lobe changes under relighting needs assessment.

- Indirect illumination under edited lighting: While light transport is linear in intensity, the pipeline does not model changes in multi-bounce global illumination under large edits (e.g., strong color shifts or disabling major lights); the degree to which existing OLAT captures suffice for realistic indirect effects is uncertain.

- Translucent/participating media: Handling of subsurface scattering, semi-transparent materials (curtains, glass), volumetric media (smoke, fog), and caustics is not discussed; decomposition and recomposition with these phenomena remain open.

- Shadows and occlusion fidelity: There is no quantitative analysis of shadow edge softness, occluder interactions, and shadow accuracy after decomposition and recomposition, especially for area lights or complex occlusion chains.

- Robustness to geometry errors: The 3D Gaussian geometry is pretrained on original views and then frozen; how errors in geometry or materials impact per-light consistency, and whether joint optimization would improve physical accuracy, is unexplored.

- Control limitations (light pose and type): Editing is limited to intensity and color; there is no capability to move lights, change beam spread, convert point to area lights, add new virtual lights, or reorient fixtures while maintaining physical consistency.

- Uncertainty estimation: The pipeline does not provide per-pixel or per-light confidence/uncertainty measures for the decomposed components, making it hard to detect unreliable edits or guide user corrections.

- Multi-view harmonization robustness: The diffusion-based harmonization’s behavior under sparse views, wide baselines, strong parallax, significant occlusions, or camera calibration errors is not characterized.

- Computational performance and resource profile: Real-time rendering is demonstrated qualitatively, but there are no reported training/inference times, memory footprints, FPS metrics under different scene sizes/light counts, or scalability analyses.

- Domain gap bridging: Strategies to reduce synthetic-to-real domain shift (e.g., finetuning on real OLAT-like data, physics-guided losses, adversarial domain adaptation) are not investigated.

- Benchmarking against multi-view relighting baselines: The multi-view evaluation compares only to internal ablations; comparisons to established multi-view relighting and harmonization methods on shared datasets are missing.

- Ambient/environment lighting control: Ambient is defined as “all remaining sources and environment lighting”; explicit editing or replacement of distant/global illumination via HDRIs or spatially varying environment maps is not supported.

- Light discovery across views: Methods to automatically fuse multi-view evidence to discover, localize, and parameterize lights (pose, size, emission profile) and reconcile duplicates across views remain unaddressed.

- User interaction and correction tools: The workflow for user-in-the-loop correction (e.g., revising masks, fixing misassigned light contributions, tuning decomposition strength) is not specified; tooling to improve reliability in production settings is lacking.

- Reproducibility and dataset coverage: While a synthetic dataset is introduced, details on release, licensing, diversity of materials/BRDFs, fixture catalogs, and coverage of challenging cases (mirror rooms, high-gloss kitchens, mixed CCT lighting) are insufficient for reproducibility and stress-testing.

Practical Applications

Immediate Applications

The following applications can be deployed now using the paper’s methods and outputs (single-image OLAT decomposition, multi-view harmonization, and relightable 3D Gaussian splatting), assuming a static indoor scene captured with multiple views and access to modern GPUs.

- Post-capture per-light relighting for photography and cinematography (media/entertainment)

  - Action: Turn individual lights on/off, adjust color temperature and intensity after a multi-view sweep of the set, preserving multi-view consistency.

  - Tools/workflows: A LuxRemix plug-in for Adobe Photoshop/Lightroom/Nuke; an on-set relighting panel in DaVinci Resolve; a Blender/Unreal/Unity add-on that imports the relightable 3DGS scene with sliders for each fixture.

  - Assumptions/dependencies: Static scene; multi-view capture with known camera poses; indoor near-field lighting; GPU for real-time 3DGS; ethical disclosure to avoid misleading post-production changes.

- Virtual production previsualization and rapid lighting iteration (software/media)

  - Action: Capture a set once, then explore lighting alternatives interactively (e.g., color temperature sweeps, practical lights toggling) in a relightable 3DGS.

  - Tools/workflows: Unreal Engine editor panel for LuxRemix layers; shot planning with per-light presets; LUT export for target looks.

  - Assumptions/dependencies: Scene must remain static between takes; some domain gap from synthetic training may require light mask refinement; HDR environment lights not supported.

- Architectural visualization and interior design mockups (AEC/real estate)

  - Action: Present client-ready variants of a space with different fixture selections, intensities, and color temperatures without re-shooting.

  - Tools/workflows: A “Lighting Variants” mode in CAD/BIM-to-visualization pipelines; a web viewer for relightable showrooms.

  - Assumptions/dependencies: Accurate fixture masks; photometric calibration if precise lux values are needed; indoor-only and near-field emphasis.

- Ethical real estate and retail listing variants (real estate/e-commerce)

  - Action: Produce consistent daylight/evening and “warm vs cool” versions of indoor listings to match buyer preferences while maintaining scene geometry.

  - Tools/workflows: CMS integration to generate per-light variants; A/B testing in e-commerce product pages or model homes.

  - Assumptions/dependencies: Clear disclosure to avoid deceptive marketing; avoids outdoor/distant sunlight edits.

- Lighting education and training (education)

  - Action: Interactive learning about one-light-at-a-time (OLAT) setups, color temperature effects, and light placement on shadows/reflections.

  - Tools/workflows: Classroom web demos; photography courses using LuxRemix OLAT layers; VR teaching aids.

  - Assumptions/dependencies: Basic multi-view capture; GPU viewer; may need curated datasets for non-technical learners.

- Robustness testing for machine vision (software/robotics)

  - Action: Systematically vary indoor lighting in captured scenes to evaluate perception systems (detection, SLAM) under controlled illumination changes.

  - Tools/workflows: A “lighting stress test” harness that remixes per-light layers to generate challenging inputs for QA pipelines.

  - Assumptions/dependencies: Static indoor testbeds; per-light decomposition limited to near-field sources; consistency needed across views for multi-camera systems.

- Dataset augmentation for academic research (academia/computer vision)

  - Action: Generate multi-illumination variants (OLAT and ambient layers) to train/evaluate algorithms for intrinsic decomposition, reflectance estimation, or relighting.

  - Tools/workflows: Public release of the synthetic OLAT dataset and harmonized outputs; scripts to export exposure-bracketed HDR per-light images; benchmark protocols.

  - Assumptions/dependencies: Licensing/availability of the synthetic dataset; documented exposure/tone-mapping; domain gap considerations for real-world generalization.

- On-set lighting continuity checks (media/production QA)

  - Action: Compare expected vs captured per-light contributions to catch continuity or fixture issues before a re-shoot.

  - Tools/workflows: A LuxRemix “lighting diff” tool that highlights deviations per fixture across angles.

  - Assumptions/dependencies: Accurate per-light identification masks; static blocking; requires controlled capture pass.

- Home lighting planning for consumers (daily life/consumer software)

  - Action: Test how changing bulb temperature or turning specific lamps on/off affects ambiance in a room after a quick phone sweep capture.

  - Tools/workflows: Mobile app that builds a relightable 3DGS and offers sliders for each lamp; shares before/after variants.

  - Assumptions/dependencies: Sufficient multi-view coverage; device GPU/edge computing; color accuracy can be influenced by phone camera calibration.
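The "lighting stress test" harness listed under robustness testing could start from something as simple as enumerating every on/off combination of the decomposed lights. The sketch below assumes per-light layers are available as linear HDR arrays; the function name `lighting_variants` and the array layout are hypothetical.

```python
import itertools
import numpy as np

def lighting_variants(ambient, olat_layers):
    """Yield (states, image) for every on/off combination of the lights.

    ambient:     (H, W, 3) ambient layer.
    olat_layers: (N, H, W, 3) one layer per light.
    states:      tuple of N zeros and ones, e.g. (1, 0, 1).
    """
    ambient = np.asarray(ambient, dtype=np.float64)
    olat_layers = np.asarray(olat_layers, dtype=np.float64)
    n_lights = olat_layers.shape[0]
    for states in itertools.product([0, 1], repeat=n_lights):
        # Reshape the on/off flags so they broadcast over pixels and channels.
        mask = np.asarray(states, dtype=np.float64).reshape(-1, 1, 1, 1)
        yield states, ambient + (mask * olat_layers).sum(axis=0)


# Random stand-in layers for a scene with three lights.
rng = np.random.default_rng(0)
ambient = rng.random((2, 2, 3))
olats = rng.random((3, 2, 2, 3))
variants = dict(lighting_variants(ambient, olats))
```

With N lights this produces 2^N images, so for larger scenes a harness would more likely sample combinations and intensity scales at random than enumerate them all.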

Long-Term Applications

The following applications are feasible with further research and development, including improved generalization to dynamic scenes, support for distant/outdoor illumination, photometric calibration, and tighter integration with physical systems.

- Smart-home light mapping and closed-loop optimization (smart home/energy)

  - Action: Automatically discover each fixture’s spatial contribution and optimize schedules/intensities for comfort, glare reduction, or energy savings.

  - Tools/workflows: Integration with home hubs (Matter/HomeKit), LuxRemix-derived per-light influence maps driving BMS/IoT controls.

  - Assumptions/dependencies: Real-time capture and re-calibration; dynamic occupant modeling; photometric validation; privacy safeguards.

- Building energy audits and code compliance via visual simulation (policy/AEC/energy)

  - Action: Use relightable indoor twins to assess compliance (e.g., illuminance uniformity), simulate retrofits (LED vs halogen), and forecast energy outcomes.

  - Tools/workflows: BIM integration; standards-based visual checks (ASHRAE/IES); reports with per-light metrics.

  - Assumptions/dependencies: Physically accurate photometry (beyond current perceptual decomposition); standardized calibration workflows; extension to mixed natural lighting.

- Assistive lighting personalization for low vision (healthcare/accessibility)

  - Action: Configure lighting to optimize contrast and reduce glare for individuals, validated through personalized relightable simulations.

  - Tools/workflows: Clinical apps that simulate patient-specific lighting preferences and export smart-home routines.

  - Assumptions/dependencies: Clinical validation studies; precise luminance modeling; integration with assistive devices.

- Autonomous systems planning under dynamic lighting (robotics)

  - Action: Use “what-if” lighting simulations to plan routes and actions robust to flicker/shadow; drive sensor fusion changes under different illumination.

  - Tools/workflows: Simulation-to-real training pipelines that remix per-light conditions; safety validation suites.

  - Assumptions/dependencies: Extension to moving agents and non-static scenes; multimodal sensor modeling; physics-based indirect illumination.

- Telepresence and collaborative design with shared relightable twins (enterprise/communication)

  - Action: Remote teams co-edit the lighting of a captured environment (studio, showroom, OR) and evaluate effects in real time.

  - Tools/workflows: Cloud-hosted LuxRemix viewers with multi-user session control; integration with BIM and DCC tools.

  - Assumptions/dependencies: Networked rendering; permissioned access and audit logs; standardized exposure/color management.

- Forensic and compliance analysis of lighting-related events (public safety/insurance)

  - Action: Reconstruct probable lighting configurations at incident time from multi-view captures to analyze visibility and glare.

  - Tools/workflows: Expert tools to replay per-light states; reports for insurance/legal contexts.

  - Assumptions/dependencies: Reliable provenance; temporal reconstruction for dynamic scenes; admissibility standards.

- Retail and museum lighting optimization (commerce/cultural heritage)

  - Action: A/B test per-light configurations on exhibits/products to maximize visual impact while preserving conservation constraints or reducing energy.

  - Tools/workflows: Scheduling and analytics platforms; relightable previews matching human perceptual criteria.

  - Assumptions/dependencies: Human factor studies; photometric calibration; integration with facility management systems.

- Physically based relighting with mixed near- and far-field sources (graphics/academia)

  - Action: Extend LuxRemix to handle HDRI and outdoor/distant illumination, supporting accurate multi-bounce global illumination edits.

  - Tools/workflows: Hybrid 3DGS + path tracing; improved inverse rendering modules; benchmarks for far-field light editing.

  - Assumptions/dependencies: New datasets with calibrated HDRI and indirect lighting; computational scaling; robust material estimation.

- Dynamic scene and video relighting with temporal consistency (media/video)

  - Action: Apply per-light control to moving subjects/cameras, maintaining flicker-free temporal consistency across frames.

  - Tools/workflows: Video diffusion with multi-view temporal priors; continuous relightable reconstructions.

  - Assumptions/dependencies: Motion-aware harmonization; stable tracking and geometry; higher compute budgets; new training data.

Cross-cutting assumptions and dependencies

- Static indoor scenes with near-field lighting are the current sweet spot; dynamic/outdoor scenes and distant illumination (HDRIs/sunlight) require additional research.

- Multi-view capture quality (coverage, parallax, camera pose accuracy) strongly affects decomposition and harmonization.

- Light masks must be accurate; complex fixtures and diffuse sources may challenge current priors (bias toward cone-like sources).

- Real-time interaction depends on GPU resources; colorimetric accuracy benefits from photometric calibration and consistent tone mapping.

- Ethical use and disclosure are important when relighting could materially change user perception (e.g., real estate, product pages).

Glossary

- Albedo: Intrinsic surface reflectance independent of illumination. "UniRelight \cite{HeLMHVKFGGW2025} jointly estimates albedo and synthesizes outputs in one pass."

- Ambient lighting: Diffuse background illumination from indirect or unseen sources. "the ambient lighting (highlighted in blue), which includes all unseen light sources and environment lighting."

- Appearance embeddings: Learned latent features that encode appearance variations across views or lighting. "use appearance embeddings for varying illumination"

- Black-body color temperatures: Physical scale (in Kelvin) describing the color of light emitted by an ideal radiator, used to sample realistic white-light spectra. "The emitted light source colors are sampled from black-body color temperatures"

- Chromaticity: The color quality (hue and saturation) independent of brightness. "state (on/off), chromaticity, and intensity."

- Convex hull: The smallest convex shape enclosing a set of points; used to expand or regularize light masks. "the convex hull of the light fixture mask."

- DiT (Diffusion Transformer): A transformer-based diffusion model architecture for image generation/editing. "We fine-tune a pretrained image editing DiT model"

- Differentiable rendering: Rendering pipelines that provide gradients for optimizing scene parameters. "leverage differentiable rendering"

- Diffusion models: Generative models that synthesize data via iterative denoising guided by learned priors. "leverage pretrained diffusion models for relighting"

- Emissive materials: Materials that emit light in rendering, used to identify light source pixels. "computed from pixels with emissive materials only"

- Environment maps: Image-based lighting representations assuming distant illumination, typically omnidirectional. "However, environment maps assume distant illumination"

- Equirectangular HDR images: Panoramic images in equirectangular projection with high dynamic range. "We render each scene as four equirectangular HDR images"

- EV (Exposure Value): A base-2 logarithmic measure of exposure; each step of one EV doubles or halves the captured light. "matching EV0, EV-2, EV-4 of the target HDR OLAT"

- Exposure bracketing: Capturing multiple exposures to merge into an HDR result. "merge the three exposure-bracketed images to create the final HDR output"

- G-buffers: Per-pixel buffers storing geometry/appearance attributes to decouple rendering stages. "uses G-buffers to decouple inverse and forward rendering"

- Gaussian splatting (3D): A point/primitive-based 3D representation using Gaussian kernels for fast rendering. "3D Gaussian splatting representation"

- Global illumination: Indirect light transport effects, including interreflections and multi-bounce lighting. "enable real-time global illumination with multi-bounce lighting"

- HDR (High Dynamic Range): Imaging with a wide luminance range for capturing/producing bright and dark details. "generate high dynamic range (HDR) outputs"

- HDRI (High Dynamic Range Image): An HDR image, typically a panoramic environment texture, used for image-based lighting of scenes. "distant global illumination editing via HDRIs is not supported"

- HSV (Hue–Saturation–Value): A color space parameterization used for augmenting light colors. "augmented with 10% HSV variations"

- In-context LoRA: A LoRA fine-tuning setup applied via concatenated or contextual tokens. "fine-tuned as an in-context LoRA"

- Intrinsic decomposition: Factorizing an image into reflectance (albedo) and shading components. "Early work on intrinsic decomposition separated images into shading and albedo components"

- Inverse rendering: Estimating geometry, materials, and lighting from images. "optimization-based inverse rendering can decompose scenes into geometry, materials, and lighting"

- Light stage: A controlled capture rig with many lights used to measure reflectance and relighting. "light stage training"

- LoRA (Low-Rank Adaptation): Parameter-efficient fine-tuning technique for large models. "We fine-tune a pretrained image editing DiT model using LoRA"

- LPIPS: A learned perceptual image similarity metric. "using PSNR, SSIM, and LPIPS \cite{ZhangIESW2018}"

- Multi-bounce lighting: Illumination involving multiple reflections in the scene. "enable real-time global illumination with multi-bounce lighting"

- Multi-view lighting harmonization: Propagating and enforcing consistent per-light decompositions across viewpoints. "We further introduce multi-view lighting harmonization"

- Multi-view stereo: Geometry reconstruction from multiple images by matching and triangulation. "proxy geometry from multi-view stereo"

- NeRF (Neural Radiance Fields): A neural volumetric scene representation for view synthesis and appearance modeling. "NeRF-in-the-Wild \cite{MartiRSBDD2021}"

- OLAT (One-Light-At-a-Time): Capturing or decomposing lighting with only one light active at a time. "one-light-at-a-time (OLAT)"

- Path tracing: A Monte Carlo rendering algorithm simulating light transport. "combine path-tracing with neural rendering"

- Photometric consistency: Maintaining consistent intensity and color across views or edits. "maintaining photometric consistency"

- Plücker ray embeddings: Ray representations using Plücker coordinates for multi-view geometry conditioning. "Plücker ray embeddings"

- Precomputed radiance transfer: Precomputing light transport for fast, real-time relighting under varying illumination. "precomputed radiance transfer \cite{ZhangHLLX2024}"

- Pre-integrated lighting networks: Neural architectures that encode lighting integration for rendering. "pre-integrated lighting networks \cite{BossJBLBL2021,ChoiKK2023a}"

- PSNR: Peak signal-to-noise ratio, a reconstruction quality metric. "using PSNR, SSIM, and LPIPS \cite{ZhangIESW2018}"

- Ray marching: Incrementally stepping along rays to estimate interactions like shadows. "shadow prediction via learned ray-marching"

- Ray tracing: Rendering by tracing rays through scenes to compute intersections and lighting. "ray tracing \cite{GaoGLZCZY2024}"

- SSIM: Structural similarity index, a perceptual image quality metric. "using PSNR, SSIM, and LPIPS \cite{ZhangIESW2018}"

- SVBRDF: Spatially varying bidirectional reflectance distribution function. "recovering shape, lighting, and SVBRDF"

- Tone mapping: Converting HDR radiance into displayable low dynamic range images. "differentiable tone mapping"

- U-Net: An encoder–decoder convolutional architecture widely used in diffusion models. "pretrained multi-view diffusion U-Net"

- VAE (Variational Autoencoder): A generative model with an encoder/decoder and probabilistic latent space. "FLUX VAE latent dimensions"

- Vision transformers: Transformer architectures adapted for vision tasks. "vision transformers \cite{ZhuLMPC2022}"

- Volumetric reflectance representations: Learned volumetric models that capture reflectance and appearance. "learned volumetric reflectance representations \cite{BiXSHHKR2020}"
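Of the glossary terms, Plücker ray embeddings are perhaps the easiest to make concrete. A ray with origin o and unit direction d is commonly encoded as the 6-vector (d, o × d), which does not change when the origin slides along the ray. The sketch below shows that property; the function name `plucker_ray` and the (direction, moment) ordering are assumptions, and how the paper feeds such embeddings to its model is not described in this summary.

```python
import numpy as np

def plucker_ray(origin, direction):
    """Return the 6-D Plücker coordinates (d, o x d) of a ray."""
    origin = np.asarray(origin, dtype=np.float64)
    direction = np.asarray(direction, dtype=np.float64)
    # Normalize so the embedding does not depend on the direction's length.
    d = direction / np.linalg.norm(direction, axis=-1, keepdims=True)
    # The moment o x d is the same for every point o on the ray.
    moment = np.cross(origin, d)
    return np.concatenate([d, moment], axis=-1)


# Two descriptions of the same ray: the second origin is shifted along d.
ray_a = plucker_ray([1.0, 2.0, 3.0], [0.0, 0.0, 2.0])
ray_b = plucker_ray([1.0, 2.0, 8.0], [0.0, 0.0, 1.0])
```

Both calls describe the same line in space and therefore give the same embedding, which makes the encoding a convenient per-pixel camera signal for multi-view models.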
