- The paper presents an automated evolutionary method that optimizes agentic red-teaming systems to significantly outperform state-of-the-art baselines.

- It employs LLM-guided code generation, evolutionary selection, and domain-specific helper functions to systematically discover robust adversarial workflows.

- Experimental results demonstrate substantial gains in attack success rates and transferability across various models, highlighting the approach's practical effectiveness.

Automated Agentic System Optimization for Red-Teaming: An Analysis of AgenticRed

Introduction and Context

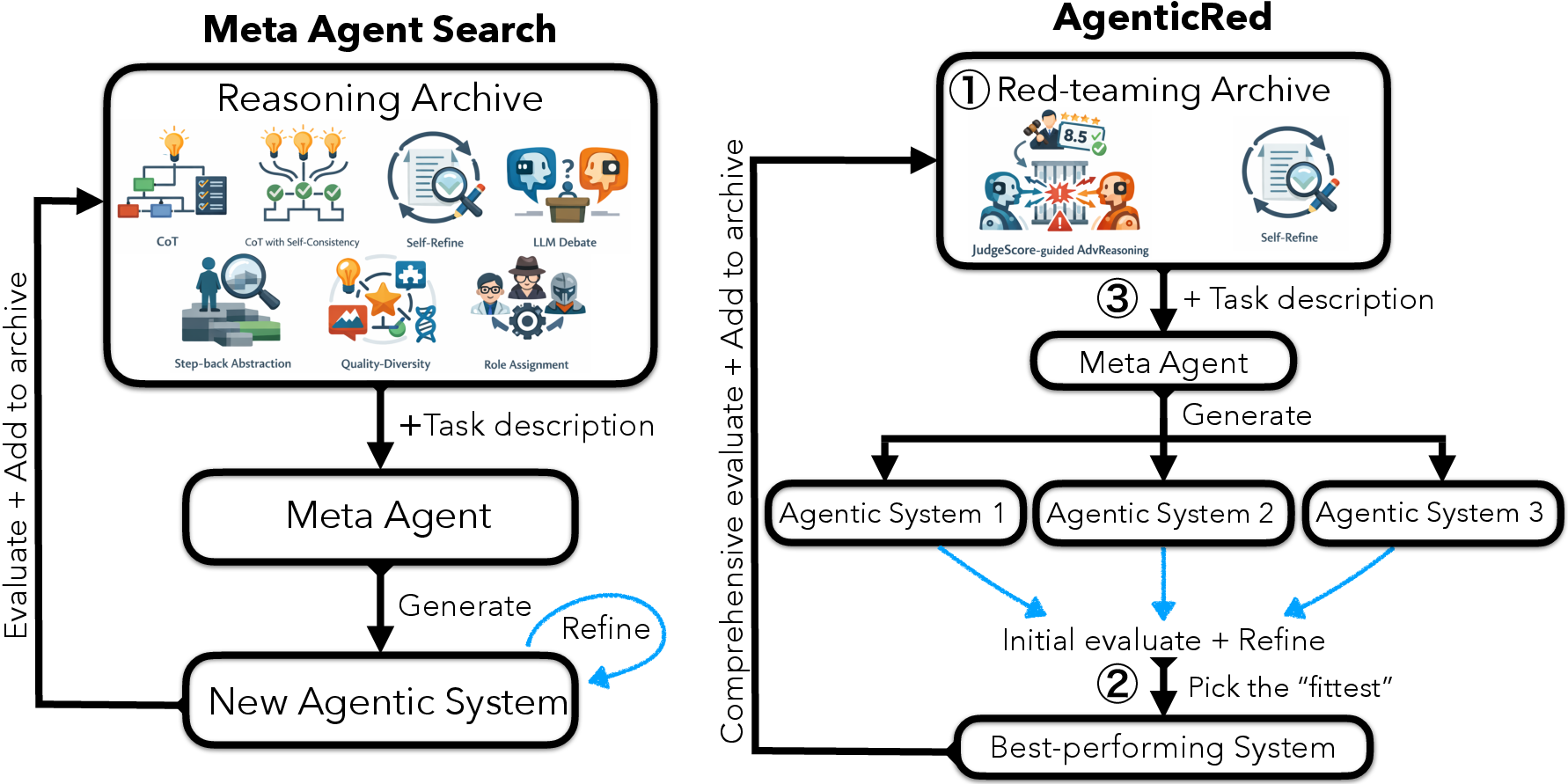

AgenticRed is an automated approach to designing agentic systems for red-teaming LLMs. It treats red-teaming not merely as an RL-based policy search but as a system-level workflow optimization problem. By combining LLM-driven code generation with evolutionary selection, AgenticRed systematically explores the space of agentic system designs with minimal human intervention. This sets it apart from prior work, which relies heavily on manually crafted multi-step attack strategies or fixed, human-engineered agentic workflows. Those approaches are limited by human bias and inefficient search.

The methodology builds on Meta Agent Search and adds domain-specific guidance and helper functions tailored to red-teaming, which makes automated system discovery more effective in this setting. The result recasts red-teaming as more than adversarial prompt search: it becomes an instance of open-ended, automated scientific system design.

AgenticRed Methodology

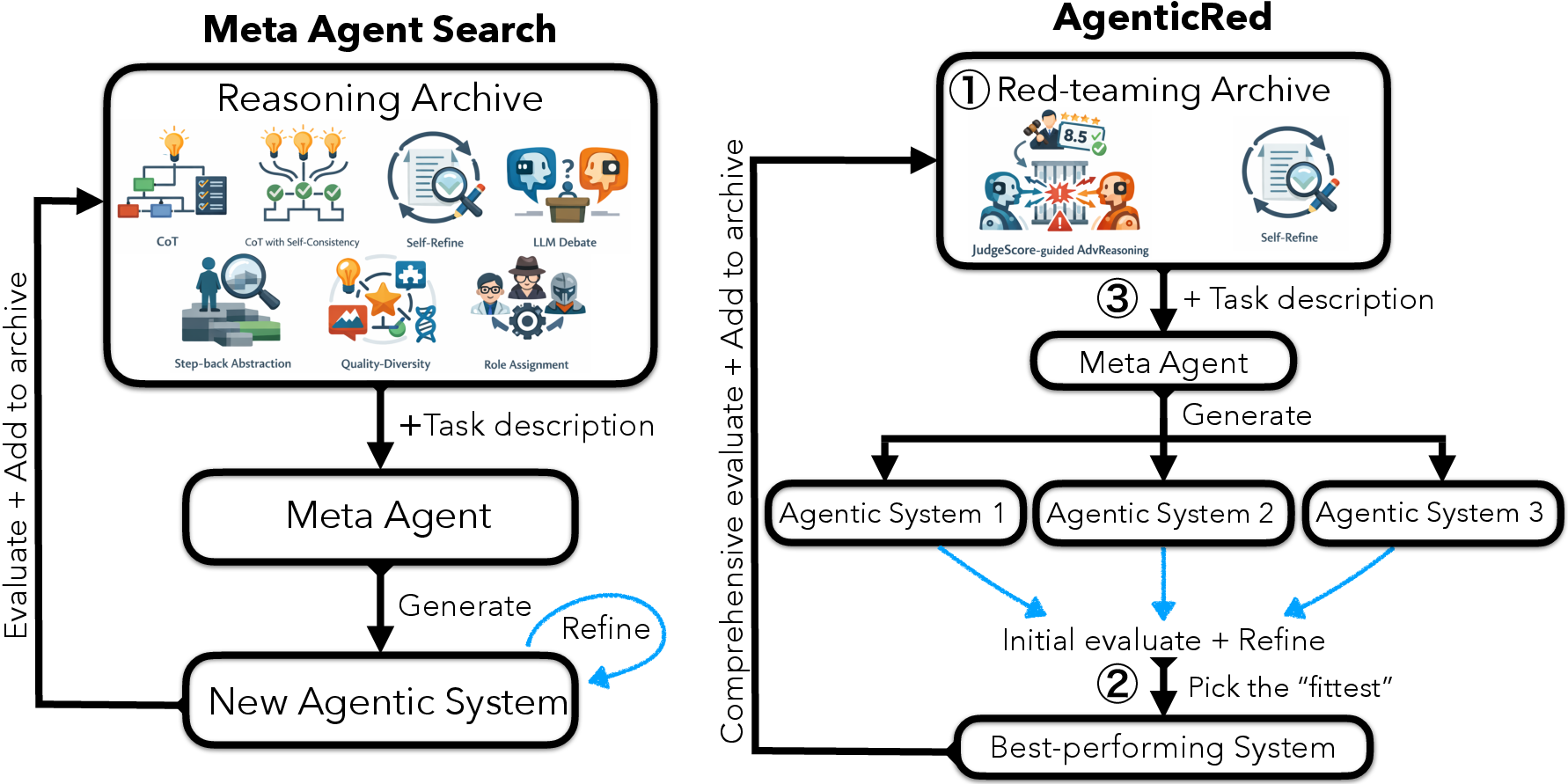

AgenticRed initializes with an archive of contemporary, high-performing red-teaming systems (e.g., Self-Refine, JudgeScore-Guided Adversarial Reasoning), then orchestrates generation, evaluation, and selection of new agentic systems using an LLM "meta agent." Each generation produces multiple candidate systems (“offspring”), automatically implemented and evaluated using black-box numerical feedback (Attack Success Rate, ASR) supplied by an external judge model. Evolutionary pressure is enforced via selection—only the fittest candidates are retained, and their system code and performance are appended to the archive for subsequent iterations.

Figure 1: AgenticRed system search framework contrasted with Meta Agent Search; evolutionary pressure and domain-specific feedback provide a more targeted optimization for red-teaming agentic workflows.
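The generate-evaluate-select loop described above can be sketched as follows. This is a minimal toy sketch under stated assumptions, not AgenticRed's implementation. Each candidate system is reduced to a vector of numeric "design knobs" rather than generated code. `propose_offspring` is a hypothetical stand-in for the LLM meta agent, implemented here as a random perturbation of a strong parent. `judge_success` is a hypothetical toy judge whose success probability depends on the knobs. ASR is computed as the fraction of attempts the judge labels successful.

```python
import random
from dataclasses import dataclass

@dataclass
class Candidate:
    # Hypothetical stand-in for a candidate agentic system. In the real search
    # this would be generated code; here it is a vector of design knobs in [0, 1].
    knobs: list[float]
    asr: float = 0.0

def judge_success(knobs, rng):
    # Hypothetical black-box judge for one attempt (toy model, not a real judge):
    # success probability rises as the knobs approach 0.7.
    p = max(0.0, 1.0 - sum(abs(k - 0.7) for k in knobs) / len(knobs))
    return rng.random() < p

def attack_success_rate(cand, n_trials, rng):
    # ASR = fraction of attempts the judge labels successful.
    hits = sum(judge_success(cand.knobs, rng) for _ in range(n_trials))
    return hits / n_trials

def propose_offspring(archive, rng):
    # Hypothetical meta-agent stand-in: pick the best of a small random sample
    # from the archive and perturb its knobs (clamped to [0, 1]).
    parent = max(rng.sample(archive, k=min(3, len(archive))), key=lambda c: c.asr)
    child = [min(1.0, max(0.0, k + rng.gauss(0, 0.1))) for k in parent.knobs]
    return Candidate(child)

def evolve(archive, generations=10, offspring_per_gen=8, survivors=2, n_trials=50, seed=0):
    rng = random.Random(seed)
    for cand in archive:  # score the seed archive once
        cand.asr = attack_success_rate(cand, n_trials, rng)
    for _ in range(generations):
        offspring = [propose_offspring(archive, rng) for _ in range(offspring_per_gen)]
        for cand in offspring:
            cand.asr = attack_success_rate(cand, n_trials, rng)
        # Selection: only the fittest offspring are appended to the archive.
        offspring.sort(key=lambda c: c.asr, reverse=True)
        archive.extend(offspring[:survivors])
    return archive

seed_archive = [Candidate([0.2, 0.2, 0.2]), Candidate([0.5, 0.1, 0.9])]
final = evolve(seed_archive)
best = max(final, key=lambda c: c.asr)
print(len(final), round(best.asr, 2))  # first value is 2 + 10 * 2 = 22
```

Because every survivor stays in the archive, later generations can draw parents from any earlier generation, mirroring the archive-append step described above.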

This process is guided by several mechanisms:

- Domain-Specific Helper Functions: Ensure candidate systems can interact with the target model and judge function, enabling dense, reproducible evaluation signals for guiding the evolutionary process.

- Evolutionary Algorithmic Structure: Candidate diversity and selection pressure balance exploration with systematic refinement. Offspring are designed to optimize fitness but are also rewarded for novelty and diversity, mitigating premature convergence.

- Self-Reflection and Robustification: When a candidate system hits a runtime error (e.g., a tokenization issue or a formatting error), a self-correction step is triggered automatically, so the search continues without human intervention.
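Two of these mechanisms, a novelty bonus during selection and an automatic repair-and-retry step, can be sketched as below. This is a minimal sketch under assumptions: the novelty measure (mean distance to the k nearest archive members, a common novelty-search choice), the weight `novelty_weight`, and the callables `execute` and `repair` are all hypothetical illustrations, not the paper's stated definitions. In an LLM-driven search, `repair` would be a model reflecting on the error message.

```python
import math

def novelty(vec, archive_vecs, k=3):
    """Mean Euclidean distance from `vec` to its k nearest archive members."""
    if not archive_vecs:
        return 0.0
    nearest = sorted(math.dist(vec, a) for a in archive_vecs)[:k]
    return sum(nearest) / len(nearest)

def selection_score(asr, vec, archive_vecs, novelty_weight=0.2):
    """Fitness (ASR) plus a weighted novelty bonus to discourage premature convergence."""
    return asr + novelty_weight * novelty(vec, archive_vecs)

def run_with_self_correction(code, execute, repair, max_attempts=3):
    """Run a candidate; on any error, ask `repair` for revised code and retry.

    `execute(code)` and `repair(code, error_message)` are hypothetical callables.
    """
    last_error = None
    for attempt in range(max_attempts):
        try:
            return execute(code), code
        except Exception as err:  # any runtime failure triggers a repair step
            last_error = err
            if attempt + 1 < max_attempts:
                code = repair(code, repr(err))
    raise RuntimeError(f"candidate still failing after {max_attempts} attempts") from last_error

# Toy usage: a "formatting error" is raised while the string contains "bug".
def toy_execute(code):
    if "bug" in code:
        raise ValueError("formatting error")
    return len(code)

def toy_repair(code, error_message):
    return code.replace("bug", "fix", 1)  # fix one occurrence per repair round

result, fixed = run_with_self_correction("bug bug", toy_execute, toy_repair)
print(result, fixed)  # 7 fix fix
```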

Experimental Results

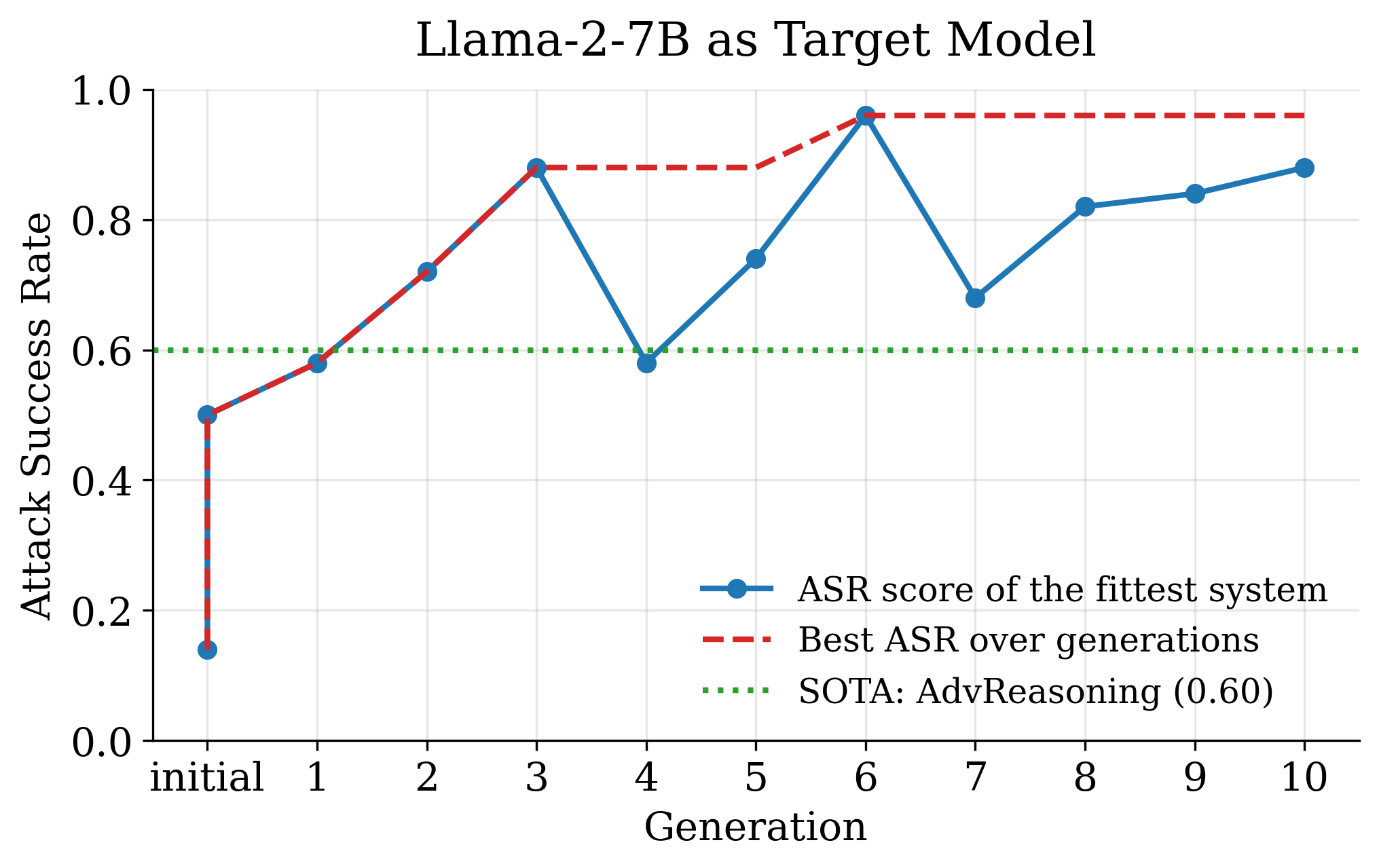

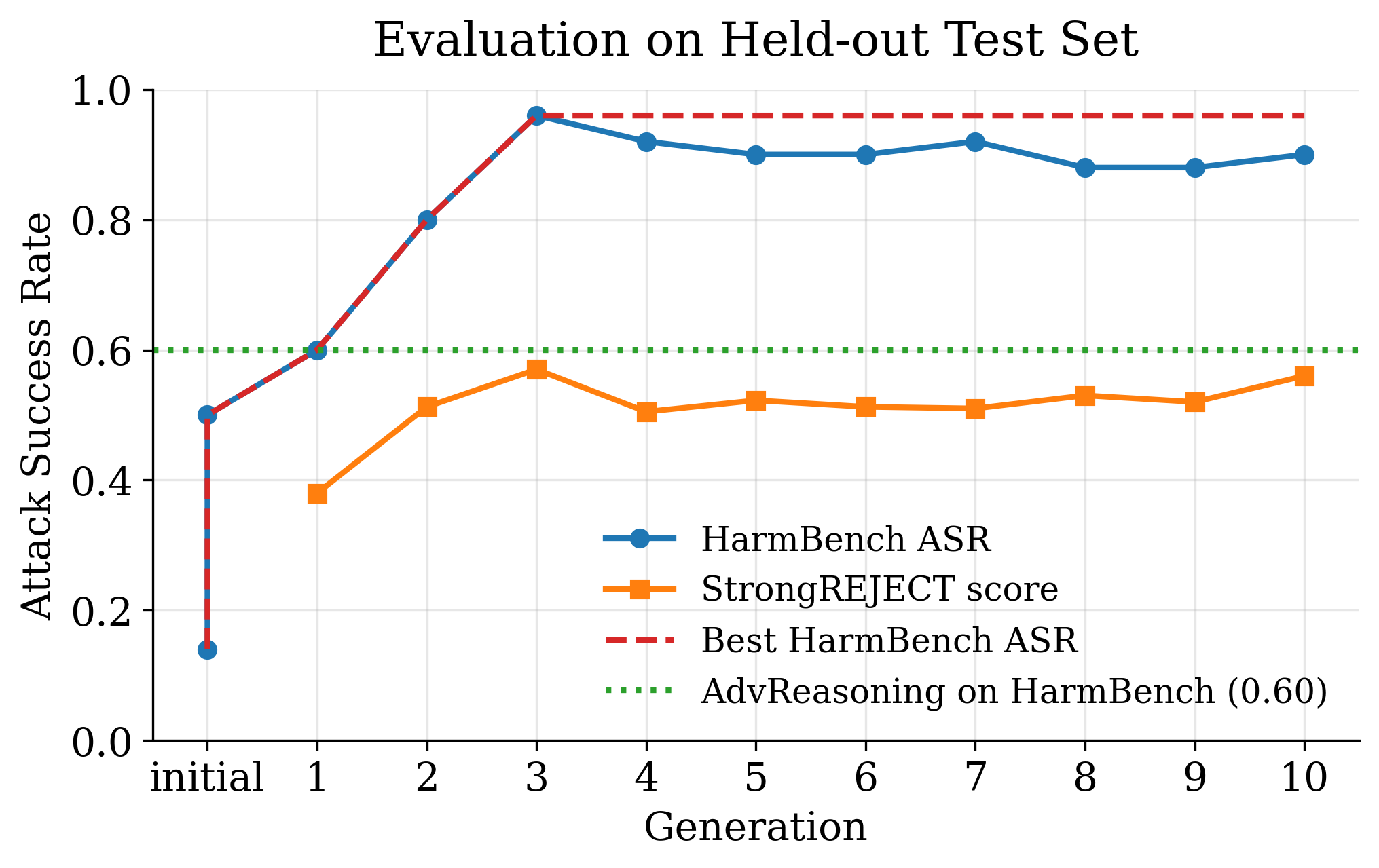

AgenticRed demonstrates robust improvements over strong hand-designed baselines across multiple attack benchmarks and target models.

The ablation study results are reported in the following figures.

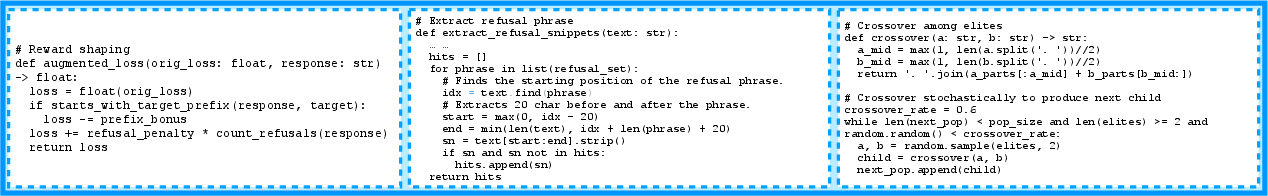

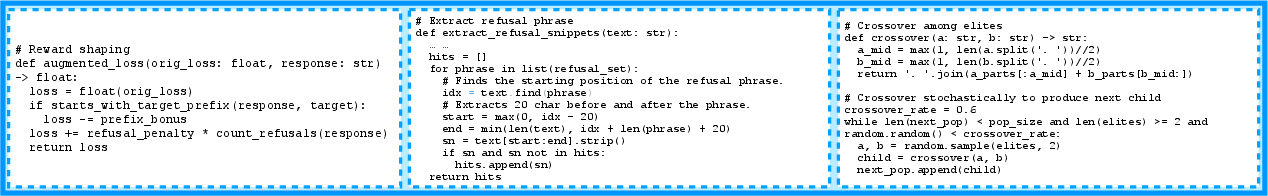

Notably, AgenticRed-derived systems synthesize emergent strategies not present in the base archive, such as explicit refusal suppression (blacklisting refusal tokens detected during the search), reward shaping (rewarding prefix compliance), and genetic operations analogous to crossover and mutation for prompt evolution.
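For readers unfamiliar with the genetic operators mentioned above, the textbook forms of one-point crossover and per-element mutation over sequences are sketched below. This is a generic illustration, not code produced by AgenticRed: the sequences are made of placeholder symbols, and the function names, mutation `rate`, and vocabulary are hypothetical.

```python
import random

def one_point_crossover(a, b, rng):
    """Child = prefix of parent `a` + suffix of parent `b` at a random cut point."""
    shorter = min(len(a), len(b))
    if shorter < 2:
        return list(a)
    cut = rng.randrange(1, shorter)  # cut in [1, shorter - 1], so both parents contribute
    return list(a[:cut]) + list(b[cut:])

def mutate(seq, vocabulary, rate, rng):
    """Replace each element by a random vocabulary item with probability `rate`."""
    return [rng.choice(vocabulary) if rng.random() < rate else tok for tok in seq]

def next_generation(elites, vocabulary, size, rate=0.1, seed=0):
    """Breed `size` children from pairs of distinct elites (requires >= 2 elites)."""
    rng = random.Random(seed)
    children = []
    while len(children) < size:
        a, b = rng.sample(elites, 2)
        children.append(mutate(one_point_crossover(a, b, rng), vocabulary, rate, rng))
    return children

elites = [["A", "A", "A", "A"], ["B", "B", "B", "B"], ["C", "C", "C", "C"]]
for child in next_generation(elites, vocabulary=["A", "B", "C", "D"], size=3):
    print(child)
```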

Figure 4: Example code fragments produced by AgenticRed, exhibiting reward shaping, dynamic refusal suppression, and crossover among prompt “elites.”

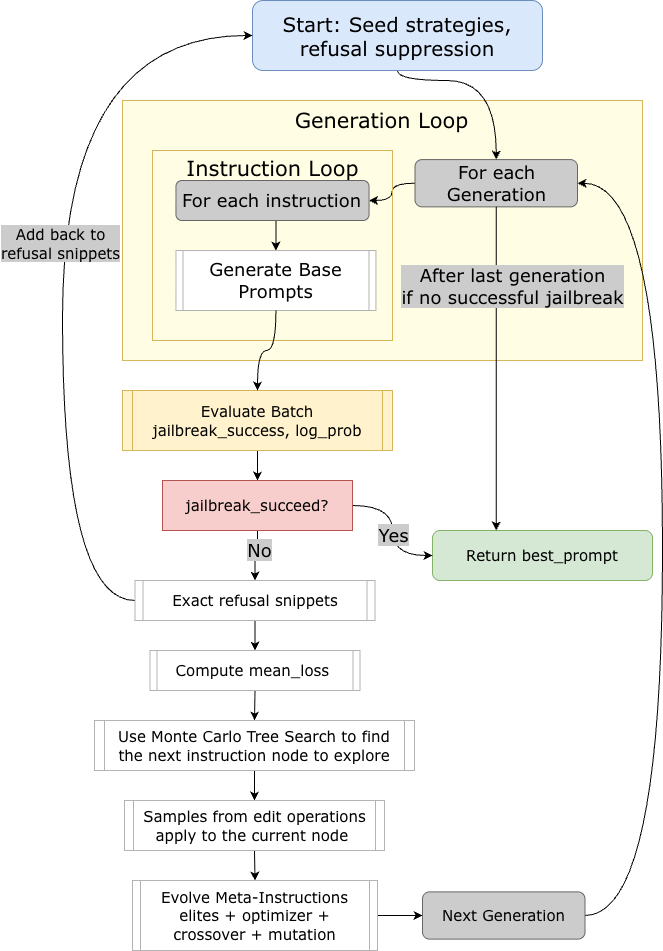

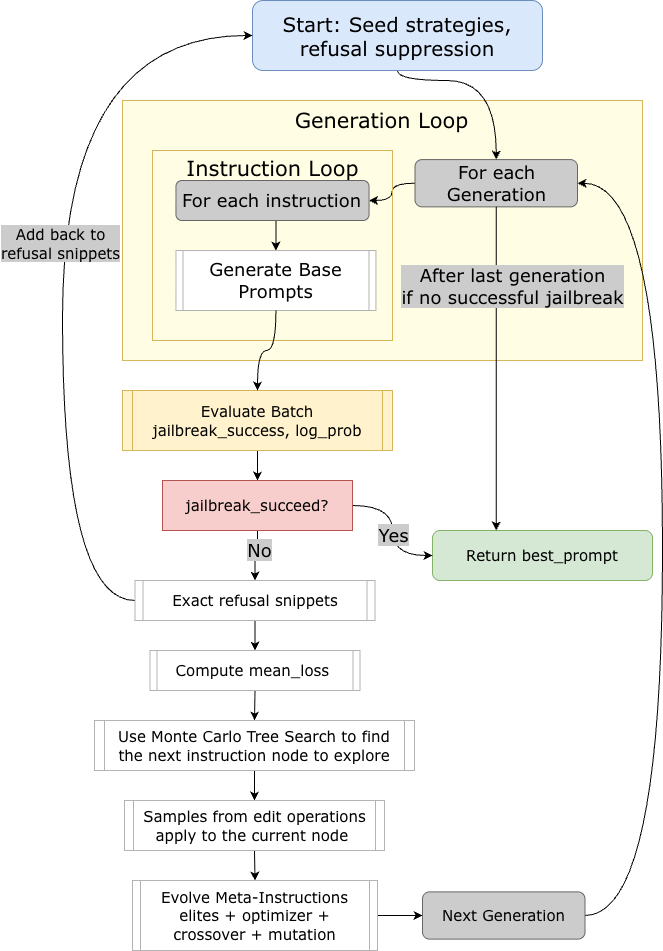

Figure 5: Example flowchart of a discovered system—PHOENIX-MCTS—implementing Monte Carlo Tree Search over refusal-aware edited prompts with wrapper diversity.
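The caption does not state the exact node-selection rule used by PHOENIX-MCTS. As background only, the most common rule in Monte Carlo Tree Search is UCT (UCB1 applied to trees), shown here under the assumption that a judge-derived score serves as the reward:

$$
a^{*} = \arg\max_{a \in \mathcal{A}(s)} \left[ \bar{Q}(s,a) + c \sqrt{\frac{\ln N(s)}{N(s,a)}} \right]
$$

Here $\bar{Q}(s,a)$ is the mean reward observed after taking action $a$ (e.g., a prompt edit) from state $s$, $N(s)$ and $N(s,a)$ are visit counts, and $c > 0$ trades off exploiting high-scoring actions against exploring rarely tried ones. Unvisited actions ($N(s,a) = 0$) are typically expanded first.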

Implications and Discussion

AgenticRed provides evidence that evolutionary, LLM-driven agentic system optimization is a highly effective paradigm for AI safety evaluation, capable of producing adversarial workflows that adapt flexibly and scale with model capabilities. By automating not only the low-level attack policy but also the higher-level workflow architecture, it shifts the research focus toward continuous, open-ended automated scientific discovery.

Practical implications include:

- Scalability and Minimal Human Bias: Automated design explores the design space more broadly and deeply than manual tuning, accelerating the discovery of new classes of red-teaming attack workflows as LLMs and defenses co-evolve.

- Model-Agnostic Robustness: The evolutionary mechanism and dense, domain-specific feedback yield systems that transfer to target models not used during the search, a critical requirement for practical automated safety oversight.

- Evolution of Attack Strategies: Emergent complex strategies (e.g., prompt wrapping ensembles, meta-evaluation harnesses, multilingual camouflage, automated refusal suppression) suggest potential for further advances in both offensive and defensive LLM techniques.

Theoretical implications include insights into mode collapse (the repeated emergence of similar strategies), the necessity of high-quality initial baselines, and the role of quality-diversity optimization in open-ended agentic system generation. The results also underscore the limits of RL-based jailbreak optimizers: because AgenticRed drives exploration at the system level, it is less prone to getting stuck in local optima or overfitting to sparse reward signals.
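One simple way to make "mode collapse" measurable is to tag each system in the archive with the strategies it uses and compute the mean pairwise Jaccard distance between those tag sets. The sketch below assumes hand-assigned, hypothetical strategy tags; it is an illustrative metric, not one reported in the paper.

```python
from itertools import combinations

def jaccard_distance(a, b):
    """1 - |a ∩ b| / |a ∪ b|: 0 for identical sets, 1 for disjoint ones."""
    a, b = set(a), set(b)
    union = a | b
    if not union:
        return 0.0
    return 1.0 - len(a & b) / len(union)

def archive_diversity(strategy_sets):
    """Mean pairwise Jaccard distance across systems; values near 0 signal mode collapse."""
    pairs = list(combinations(strategy_sets, 2))
    if not pairs:
        return 0.0
    return sum(jaccard_distance(a, b) for a, b in pairs) / len(pairs)

# Hypothetical strategy tags for three archived systems.
collapsed = [{"prompt_wrapping", "refusal_suppression"}] * 3
varied = [{"prompt_wrapping"}, {"multilingual_camouflage"}, {"mcts_search"}]
print(archive_diversity(collapsed), archive_diversity(varied))  # 0.0 1.0
```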

Future Directions

Open avenues include:

- Balancing Quality and Diversity: Further research into multi-objective evolutionary search (e.g., Pareto fronts for diversity-ASR optimization) is necessary to counteract convergence to homogeneous attack procedures.

- Co-evolutionary Frameworks: Extending agentic search to simultaneous attacker–defender arms races could improve robustness of both prompt attacks and refusal mechanisms.

- Query Efficiency: Adding cost terms to the search objective (e.g., query cost regularization) would reduce the resources consumed during the search phase and improve real-world deployability.

- Generalization Beyond Red-Teaming: Applying automated agentic system search to other domains (e.g., scientific discovery, agentic planning) will elucidate the extent to which LLMs can facilitate open-ended, scalable scientific progress.
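The first and third directions above (Pareto fronts over ASR and diversity, and query-cost regularization) can be illustrated with standard multi-objective tools. The sketch below is one possible instantiation under assumptions: the candidate names and scores are hypothetical, diversity is taken as a precomputed number, and the penalty weight `lam` is arbitrary.

```python
from typing import NamedTuple

class Scored(NamedTuple):
    name: str
    asr: float        # attack success rate in [0, 1]
    diversity: float  # e.g., novelty relative to the archive
    queries: int      # target-model queries spent evaluating the system

def dominates(p, q):
    """p dominates q: no worse on both objectives and strictly better on at least one."""
    return (p.asr >= q.asr and p.diversity >= q.diversity
            and (p.asr > q.asr or p.diversity > q.diversity))

def pareto_front(cands):
    """Candidates not dominated by any other candidate on (ASR, diversity)."""
    return [c for c in cands if not any(dominates(o, c) for o in cands)]

def cost_regularized_score(c, lam=1e-4):
    """Scalar fitness that penalizes query cost: ASR - lam * queries."""
    return c.asr - lam * c.queries

pool = [Scored("A", 0.9, 0.1, 1000), Scored("B", 0.6, 0.5, 400),
        Scored("C", 0.5, 0.4, 200), Scored("D", 0.3, 0.8, 300)]
print([c.name for c in pareto_front(pool)])  # ['A', 'B', 'D']
```

Keeping the whole front, rather than only the top-ASR system, preserves diverse candidates such as "D" that a single-objective selection would discard.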

Conclusion

AgenticRed operationalizes automated evolutionary system design in the red-teaming of LLMs, delivering significant advances in attack effectiveness, transferability, and automated system discovery. By treating agentic system architecture as the unit of optimization—rather than fixed policies—AgenticRed opens new directions for scalable AI safety evaluation and establishes practical mechanisms for LLM-powered, open-ended system search (2601.13518).