- The paper presents a dynamic few-shot learning paradigm that uses templating and robust retrieval to generate answerable query suggestions.

- It demonstrates significant improvements in answerability (up to 88.5%) and maintains competitive semantic similarity compared to static and retrieval-only baselines.

- The study introduces self-learning mechanisms for automatic query classification, enabling rapid adaptation in multi-tool, agentic RAG systems.

Query Suggestion for Agentic Retrieval-Augmented Generation via Dynamic In-Context Learning

Motivation and Problem Statement

This work addresses the problem of query suggestion within agentic retrieval-augmented generation (RAG) systems, particularly in environments where workflow execution relies on LLMs performing multi-step tool calls. In these settings, user queries frequently surpass the agent's knowledge boundaries or tool capabilities, leading to interaction failures. The crucial open challenge is how to recommend follow-up queries that are both semantically similar to the original and verifiably answerable by the agent—without direct knowledge of the underlying tool affordances or dataset coverage. Prior query suggestion work focused on text-based retrieval and does not extend to the multi-workflow, tool-based agentic RAG scenario, thus motivating a new line of inquiry.

Approach: Dynamic Few-Shot Learning with Templating and Robust Retrieval

The proposed method introduces a dynamic few-shot learning paradigm for query suggestion, leveraging the compositional workflows inherent in agentic RAG. Rather than relying on static, hand-crafted examples or requiring comprehensive tool documentation, the approach operationalizes in-context learning through the following mechanisms:

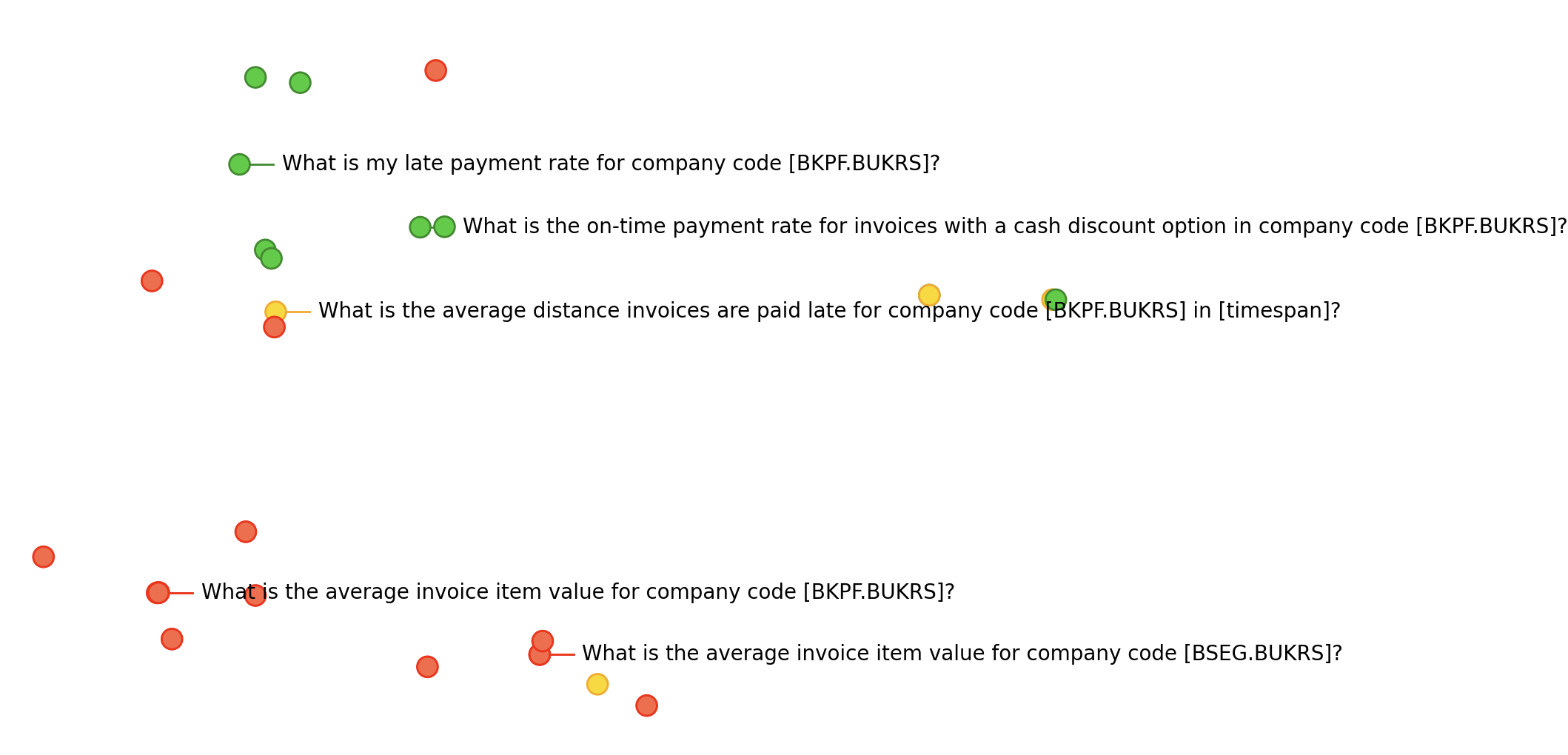

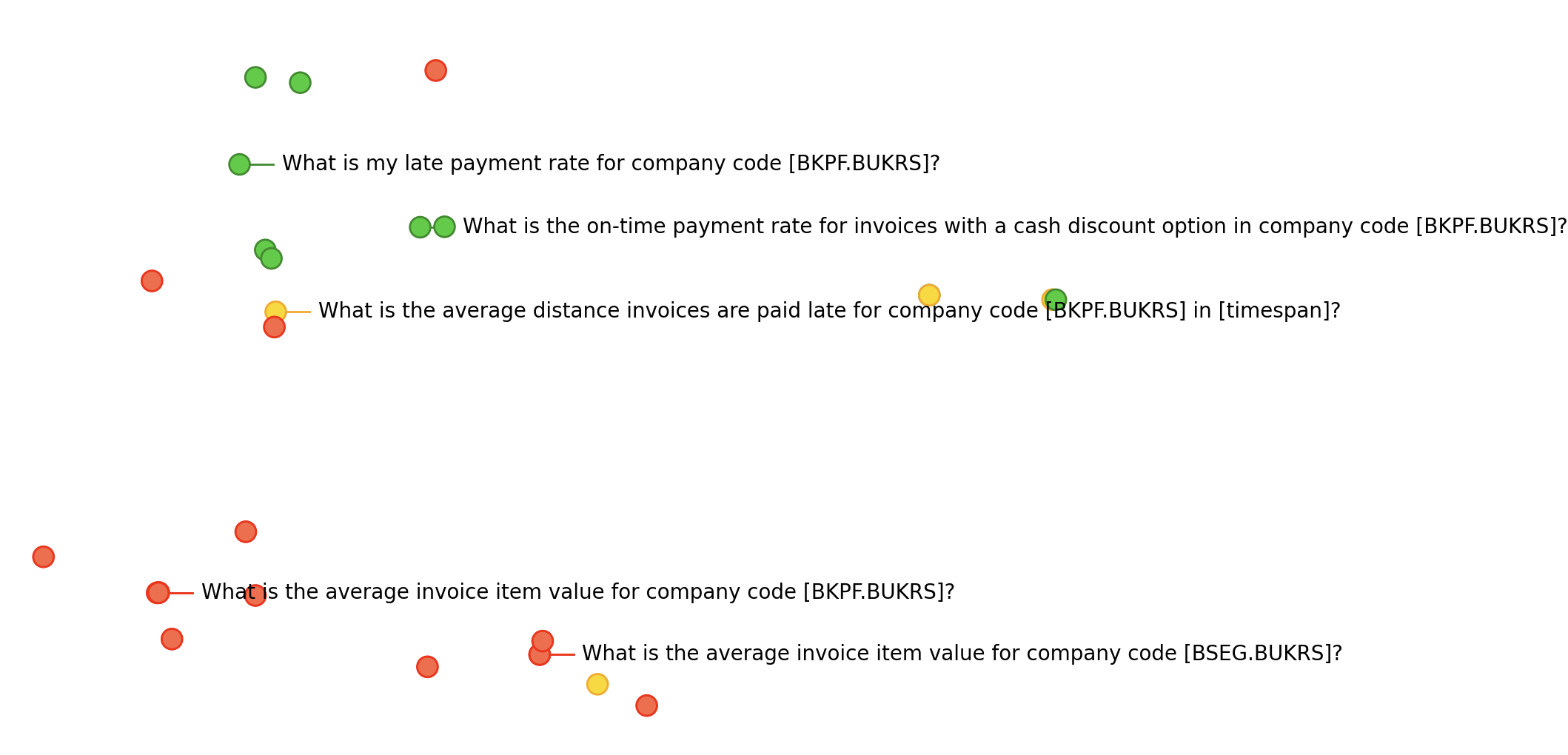

- Templating: Each user query is abstracted into a workflow template by systematically masking entity values with their respective argument names. This allows generalization across queries sharing the same underlying workflow, mitigating the high-dimensionality of possible value combinations.

- Embedding-Based Retrieval: Workflow templates are encoded using embedding models, enabling semantically relevant few-shot example retrieval based on cosine similarity.

- Robust Retrieval Algorithm: To address faulty or hallucinated responses, the retrieval process incorporates a local majority vote on answerability, ensuring that positive/negative examples are selected based on the model's demonstrated execution history and agent feedback.

- Self-Learning: Labeled examples—classified as answerable, no knowledge, or no workflow—are generated automatically from prior user interactions using the agent's response history, tool call traces, and model-based answerability evaluation, entirely removing the need for manually annotated datasets.

- Suggestion Generation and Value Imputation: Suggested queries are instantiated by replacing value masks with domain-appropriate arguments, informed by original query values, alternative responses from tools, or tool-specific sample values.

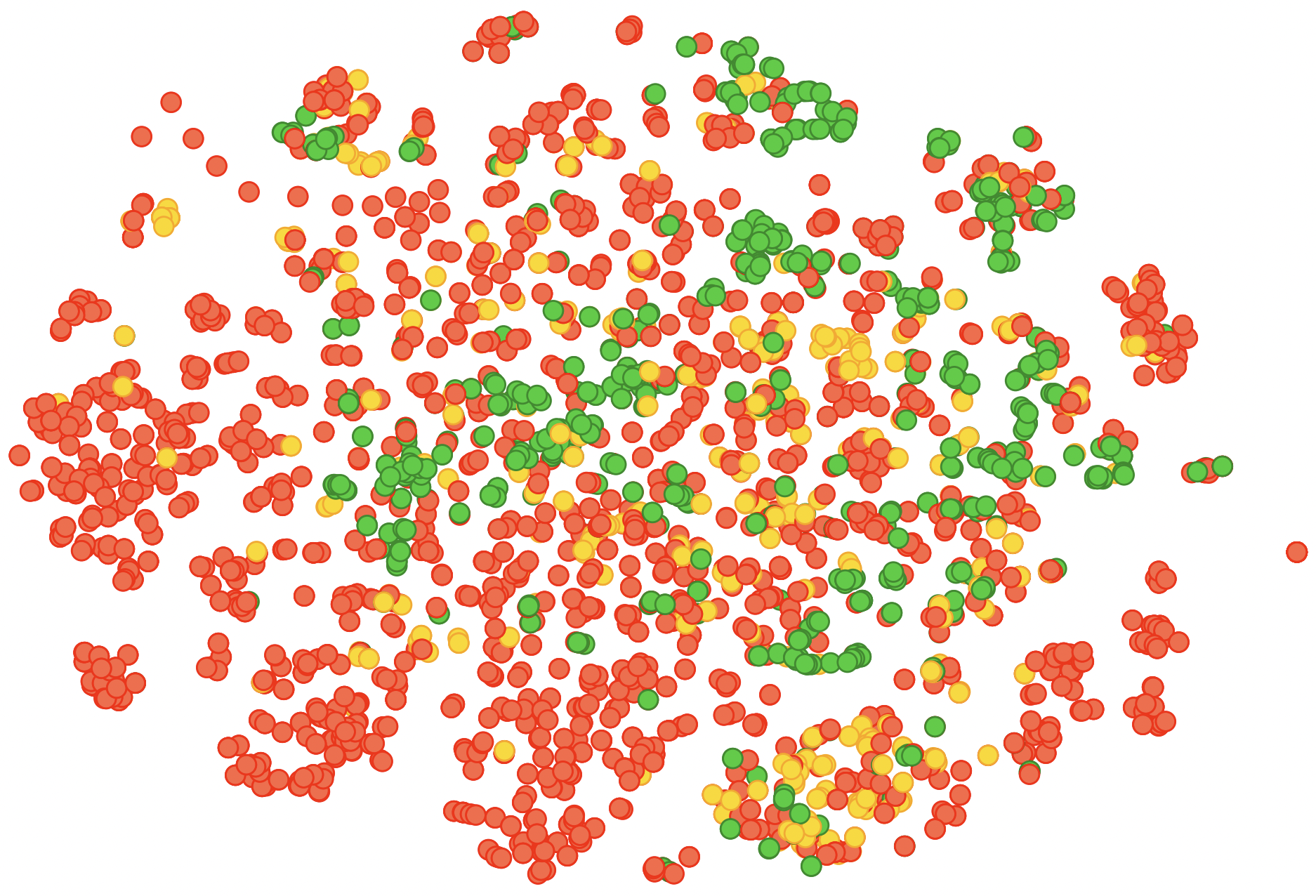

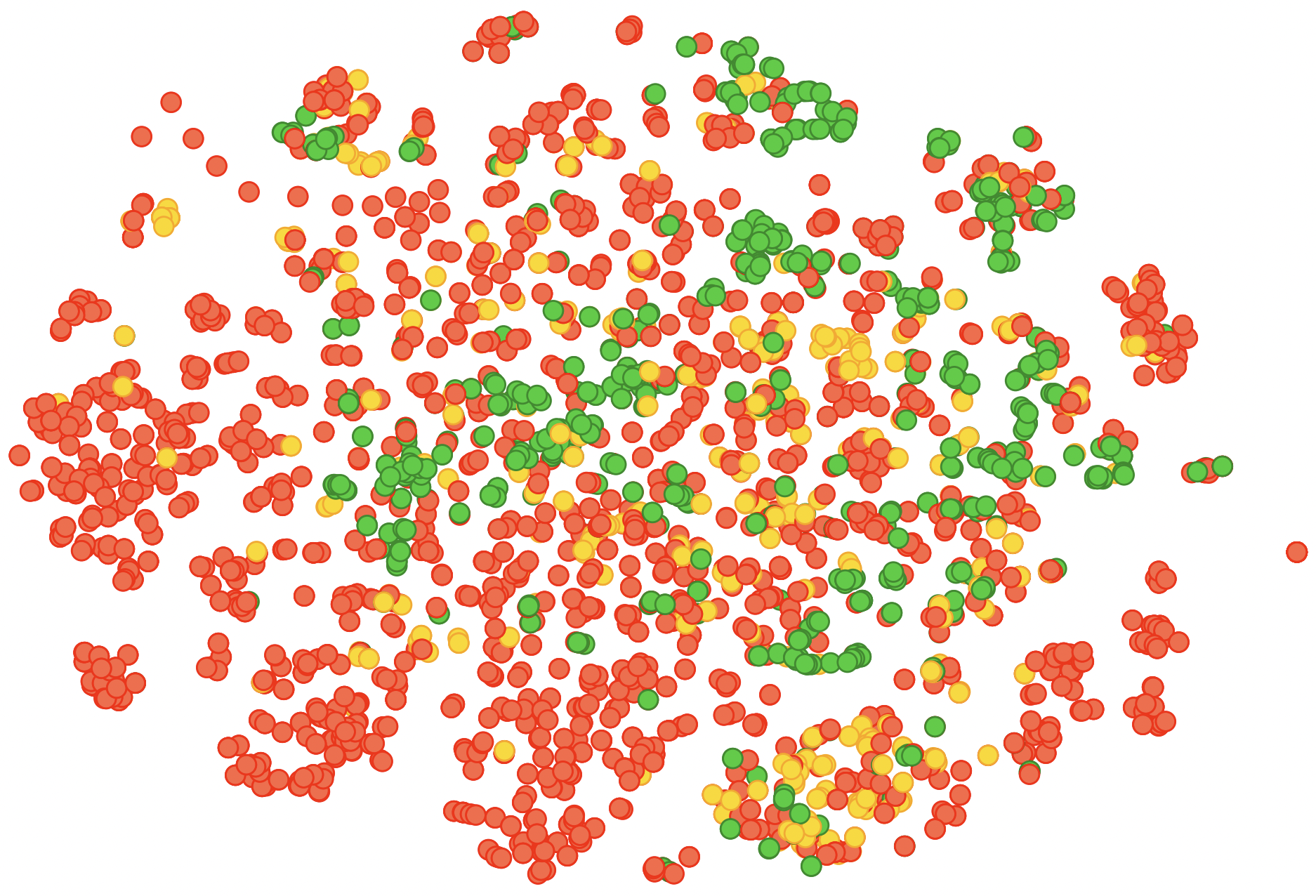

Figure 1: TSNE visualization of workflow embeddings reveals distinct clusters corresponding to answerable, no knowledge, and no workflow categories, demonstrating effective semantic partitioning by the templating and embedding strategy.

Experimental Evaluation and Results

Experiments are conducted on three RAG agents—two invoice-processing systems (differing in Python tool access) and an order-management setup—using real-world, unlabeled datasets composed of ≈2000–2700 queries per agent. The performance is compared against two baselines: static few-shot prompting (using fixed examples) and retrieval-only (direct retrieval of the top matching template).

The evaluation employs two primary metrics:

- Answerability: Categorization of suggestions into answerable, no workflow, or no knowledge, based on model-driven post-hoc evaluation of the agent's response.

- Semantic Similarity: Cosine similarity between the query embeddings of the original and suggested queries.

Results, visualized in the main text, demonstrate the following:

- The dynamic few-shot approach consistently yields a higher fraction of answerable suggestions (up to 88.5% on InvoicesNoPython) compared to static few-shot (as low as 18.7%) and retrieval-only (as low as 34.5%) baselines.

- Semantic similarity remains competitive or superior across datasets, with dynamic few-shot suggestions closely matching user intent while respecting agent capabilities.

- Robust self-learning and retrieval produce rapid gains in answerability within ~500 training queries, substantiating the sample efficiency and practicality of the approach for production deployments.

- Detailed trade-off analyses reveal that static few-shot learning over-optimizes for similarity but fails on answerability, while retrieval-only strategies degrade in answerability with higher similarity scores, which dynamic few-shot learning balances more effectively.

Categorization and Analysis of Unanswerable Queries

A nuanced classification framework distinguishes three types of queries:

- No Workflow: The agent cannot translate the query into an executable workflow (i.e., unsupported intent or missing tool capabilities).

- No Knowledge: The workflow is feasible, but the required data for computation is absent or inaccessible (empty results, irrelevant values).

- Answerable: The agent delivers a meaningful response.

This taxonomy is central for both the retrieval of examples and the evaluation of suggestion success, highlighting the practical complexities of agentic RAG systems where internal tool coverage and data availability are opaque to both user and model.

Implications and Future Directions

The presented framework offers multiple implications for AI research and practical agent deployment:

- Scalability and Adaptability: The self-learning procedure enables continual improvement from actual usage logs, supporting rapid adaptation to domain shifts and tool evolution.

- Agent-Aware Prompting: Conditioning LLMs with dynamically curated, answerability-aware examples paves the way for more robust interaction and system-aware reasoning in agentic contexts.

- Autonomous Agent Debugging and Inspection: Templating and workflow embedding can facilitate agent auditing, coverage analysis, and weakness detection—tools for developers and users in increasingly complex environments.

- Extension to Other Agentic Systems: The methods generalize beyond RAG to any tool-calling, workflow-driven agent, suggesting applicability for conversational AI, automated data analysis, and multi-system orchestration.

Potential future work includes integrating the framework with real-time feedback and reward-driven query suggestion, joint optimization with guardrail mechanisms for safe exploration, and meta-learning extensions for cross-agent knowledge transfer.

Conclusion

This paper formalizes the query suggestion problem for agentic RAG systems, introduces the first approach leveraging dynamic few-shot learning, workflow templating, and self-supervised retrieval, and delivers empirical verification of improved answerability and similarity through extensive evaluations. The work advances agentic interaction research, equipping LLM-powered systems with adaptive, context-sensitive suggestion capabilities, and lays the groundwork for broader AI agent self-improvement, debugging, and transparent interaction optimization.