- The paper introduces Drivora, a unified infrastructure that integrates scenario definition, testing engine, and ADS integration for search-based autonomous driving testing.

- It employs evolutionary search techniques and parallel scenario execution to effectively identify safety-critical violations across 12 ADSs.

- The framework’s modular design and unified OpenScenario scenario definition support reproducibility, extensibility, and large-scale industrial validation.

Drivora: A Unified and Extensible Infrastructure for Search-based Autonomous Driving Testing

Motivation and Context

Autonomous driving systems (ADSs) demand rigorous validation, especially for safety and reliability, as their deployment carries substantial practical risk. Search-based simulation testing has emerged as a standard methodology to generate and identify safety-critical corner cases and violations in a controlled, reproducible manner. Existing research platforms for search-based ADS testing are significantly fragmented, mainly due to heterogeneous scenario definitions, simulator bindings, single-system architectures, and limited extensibility. As a result, migrating between platforms, or conducting evaluations across multiple ADSs, remains cumbersome and error-prone.

Drivora addresses these deficiencies by introducing an extensible infrastructure that unifies scenario definition, decouples testing components, and supports multi-system integration, all built on the well-established CARLA simulation backend. The framework explicitly targets three goals: compatibility with existing ADSs and testing methods, extensibility to new methods, and the execution efficiency required for large-scale search-based validation.

Architectural Overview

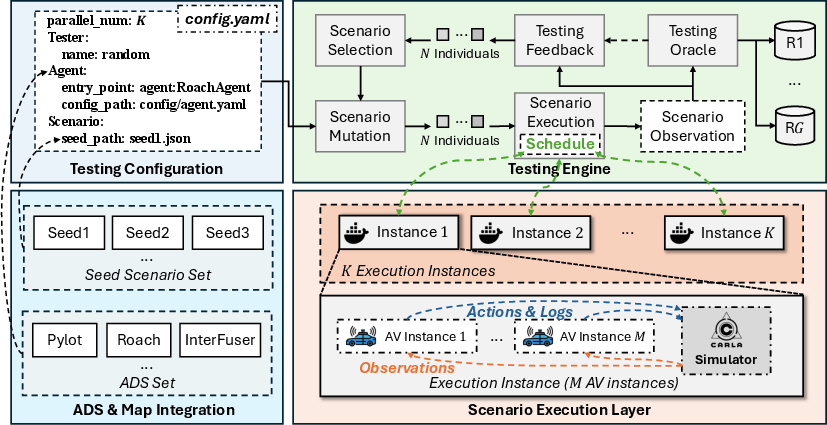

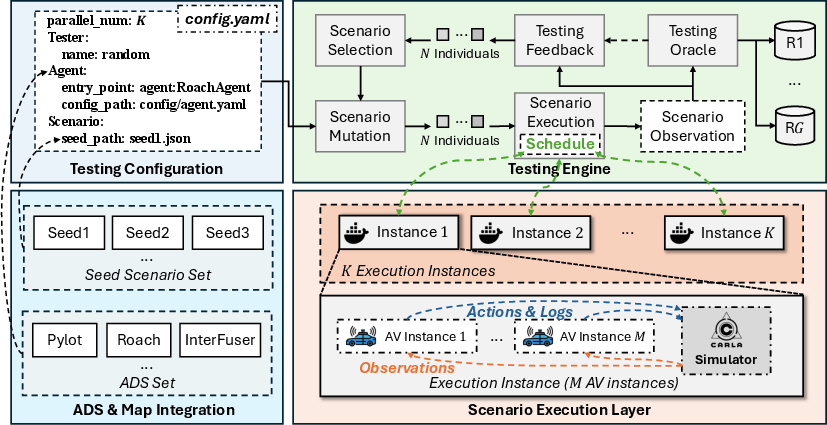

Drivora's architecture is organized into modular layers that separate four concerns: scenario definition, testing engine, scenario execution, and ADS integration. This separation accommodates direct integration of heterogeneous ADSs and enables rapid prototyping and replacement of search algorithms. Figure 1 summarizes the architecture, showing the flow from resource ingestion to parallelized scenario execution and downstream analysis.

Figure 1: Drivora framework architecture, depicting the modular separation of ADS integration, testing configuration, evolutionary testing engine, and parallelized scenario execution.

The core contributions of Drivora are:

- Unified Scenario Definition (OpenScenario): Scenarios are encoded using low-level, actionable parameters (trajectories, start times, weather, traffic lights, actor types), which serve as a lingua franca between disparate scenario-generation techniques.

- Testing Engine: Implements classical evolutionary search, including scenario mutation, test oracle evaluation, feedback-driven selection, and flexible integration for arbitrary search algorithms.

- Parallel Scenario Execution Layer: Supports K-way parallel execution to maximize hardware throughput. Each worker executes arbitrary multi-AV scenarios in isolation, which is critical for efficient exploration of high-dimensional input spaces.

- ADS Integration Interface: Provides a standardized API (setup_env and run_step) for any ADS binding, facilitating evaluation and method transfer across 12 integrated ADSs, including both module-based and end-to-end architectures.
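To make the ADS integration interface more concrete, the following is a minimal sketch of what a two-hook adapter could look like. Only the hook names setup_env and run_step come from the text above; the class names ADSAdapter, CruiseADS, and Control, the argument types, and the observation keys are hypothetical and are not taken from Drivora's actual code.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Any, Dict


@dataclass
class Control:
    """Hypothetical control command; the field names are illustrative."""
    throttle: float = 0.0
    steer: float = 0.0
    brake: float = 0.0


class ADSAdapter(ABC):
    """Hypothetical base class exposing the two hooks named in the text."""

    @abstractmethod
    def setup_env(self, config: Dict[str, Any]) -> None:
        """Prepare the ADS (load models, read route, and so on)."""

    @abstractmethod
    def run_step(self, observation: Dict[str, Any]) -> Control:
        """Map one observation to one control command."""


class CruiseADS(ADSAdapter):
    """Toy ADS: accelerates to a target speed, brakes if the gap is small."""

    def setup_env(self, config: Dict[str, Any]) -> None:
        self.target_speed = config.get("target_speed", 10.0)
        self.safe_gap = config.get("safe_gap", 5.0)

    def run_step(self, observation: Dict[str, Any]) -> Control:
        if observation["gap"] < self.safe_gap:
            return Control(brake=1.0)
        if observation["speed"] < self.target_speed:
            return Control(throttle=0.5)
        return Control()


# Usage: the harness only ever calls the two hooks.
ads = CruiseADS()
ads.setup_env({"target_speed": 8.0, "safe_gap": 5.0})
command = ads.run_step({"speed": 3.0, "gap": 20.0})
```

Because the harness depends only on the two hooks, a module-based and an end-to-end ADS could in principle be swapped without changing the test loop, which is the property the interface is meant to provide.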

Scenario Definition and Execution

OpenScenario defines scenario entities by low-level attributes, enabling a canonical internal representation for mutation, compatibility, and downstream analysis. The main entities are ego vehicles (with full route and ADS config), dynamic/static NPCs, map regions, weather, and traffic lights. This low-level description unifies the scenario spaces of a diverse set of existing search-based testing tools, laying the basis for broad compatibility and extensibility.
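As an illustration of such a low-level representation, the sketch below encodes the entities listed above as plain Python dataclasses. All class and field names (Ego, NPC, Scenario, map_region, and so on) are assumptions made for this example; the actual OpenScenario schema used by Drivora may differ.

```python
from dataclasses import asdict, dataclass, field
from typing import Dict, List, Tuple

Waypoint = Tuple[float, float]  # (x, y) in map coordinates, illustrative


@dataclass
class Ego:
    """Ego vehicle: a full route plus the ADS configuration driving it."""
    route: List[Waypoint]
    ads_config: Dict[str, str]


@dataclass
class NPC:
    """Dynamic or static non-player actor."""
    actor_type: str
    trajectory: List[Waypoint]
    start_time: float = 0.0
    dynamic: bool = True


@dataclass
class Scenario:
    """Hypothetical container for one test scenario."""
    map_region: str
    egos: List[Ego]
    npcs: List[NPC] = field(default_factory=list)
    weather: Dict[str, float] = field(default_factory=dict)
    traffic_lights: Dict[str, str] = field(default_factory=dict)


scenario = Scenario(
    map_region="Town01",
    egos=[Ego(route=[(0.0, 0.0), (50.0, 0.0)], ads_config={"name": "toy"})],
    npcs=[NPC("vehicle", [(30.0, 3.5), (30.0, 0.0)], start_time=2.5)],
    weather={"rain": 0.2},
    traffic_lights={"tl_1": "green"},
)

# A flat, dictionary-based view is convenient for mutation and logging.
flat = asdict(scenario)
```

Keeping every attribute as a plain number, string, or list is what lets generic mutation operators act on any scenario without knowing which generator produced it.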

Scenario mutation explores this parameter space using evolutionary operators, supporting arbitrary search objectives defined via custom feedback functions and test oracles. Drivora’s parallelism in scenario execution supports both single- and multi-AV cases, capturing emergent risks inherent in interactions among multiple autonomous agents.
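The mutate, execute, evaluate, and select loop described above can be sketched as follows. This is a generic elitist evolutionary search written for illustration, not Drivora's implementation: the function names, the two-parameter scenario, and the toy_execute stand-in for a simulator run are all assumptions.

```python
import random
from typing import Callable, Dict, List, Tuple

Params = Dict[str, float]
Scored = Tuple[float, Params]


def mutate(params: Params, rng: random.Random, scale: float = 0.5) -> Params:
    """Perturb one randomly chosen parameter with Gaussian noise."""
    child = dict(params)
    key = rng.choice(sorted(child))
    child[key] = max(0.0, child[key] + rng.gauss(0.0, scale))
    return child


def toy_execute(params: Params) -> float:
    """Stand-in for a simulation: gap (m) between a 10 m/s ego and an NPC
    that appears at npc_offset metres when the clock reaches npc_start."""
    return abs(10.0 * params["npc_start"] - params["npc_offset"])


def search(
    seeds: List[Params],
    execute: Callable[[Params], float],
    oracle: Callable[[float], bool],
    generations: int,
    rng: random.Random,
) -> Tuple[List[Scored], List[Params]]:
    """Elitist search that minimises the feedback value."""
    scored = [(execute(p), p) for p in seeds]
    violations = [p for f, p in scored if oracle(f)]
    for _ in range(generations):
        children = [mutate(p, rng) for _, p in scored]
        child_scored = [(execute(c), c) for c in children]
        violations.extend(c for f, c in child_scored if oracle(f))
        # Feedback-driven selection: keep the scenarios with the smallest gap.
        merged = sorted(scored + child_scored, key=lambda fc: fc[0])
        scored = merged[: len(seeds)]
    return scored, violations


seeds = [
    {"npc_start": 1.0, "npc_offset": 30.0},
    {"npc_start": 2.0, "npc_offset": 45.0},
]
initial_best = min(toy_execute(s) for s in seeds)
final, found = search(seeds, toy_execute, lambda gap: gap < 1.0, 30,
                      random.Random(0))
```

Here the feedback function (the gap) and the test oracle (gap below 1 m) are passed in as plain callables, which mirrors the idea that search objectives and oracles are swappable independently of the loop itself.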

Empirical Results and Practical Capabilities

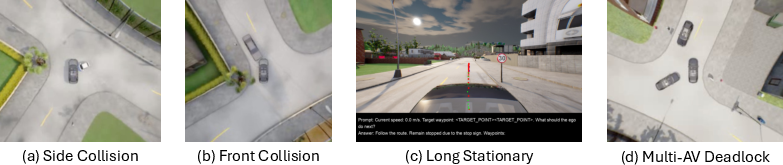

Demo results highlight Drivora's capacity to discover diverse categories of safety violations.

Execution throughput scales linearly as the degree of parallelization K increases, indicating that the scenario execution layer is not bottlenecked by synchronization or resource contention. The architecture natively supports multi-AV scenarios and can therefore expose complex, higher-order emergent behaviors, such as deadlocks among several AVs, that cannot be observed in single-vehicle setups.
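A minimal sketch of K-way batch execution is shown below, using Python's standard concurrent.futures module. It is an illustration under stated assumptions: run_batch and toy_execute are hypothetical names, and a thread pool stands in for what would more plausibly be K isolated simulator instances (separate processes or containers) in a real deployment.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

Params = Dict[str, float]


def toy_execute(params: Params) -> float:
    """Stand-in for one simulation run; the sleep imitates simulation time."""
    time.sleep(0.01)
    return abs(10.0 * params["npc_start"] - params["npc_offset"])


def run_batch(
    scenarios: List[Params],
    execute: Callable[[Params], float],
    k: int,
) -> List[float]:
    """Execute scenarios on up to k workers and return results in input order."""
    if k < 1:
        raise ValueError("k must be at least 1")
    with ThreadPoolExecutor(max_workers=k) as pool:
        # Executor.map yields results in the order of the inputs,
        # regardless of which worker finishes first.
        return list(pool.map(execute, scenarios))


batch = [{"npc_start": float(i), "npc_offset": 25.0} for i in range(8)]
gaps = run_batch(batch, toy_execute, k=4)
```

Since the scenarios in a generation are independent of one another, the batch is embarrassingly parallel; near-linear scaling in K is plausible as long as each worker has its own simulator and hardware resources.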

Implications for Autonomous Driving Validation

The unification and modularization offered in Drivora allow for reproducibility, extensibility, and efficient large-scale testing critical for modern evolutionary, reinforcement learning–based, and diversity-guided techniques. The integration with 12 ADSs underscores Drivora's effectiveness as a benchmarking and validation platform, facilitating head-to-head comparison using identical scenarios and test harnesses.

The extensibility of OpenScenario may facilitate future experimentation with LLM–driven or behavior-distribution–guided scenario generators. The ability to plug in advanced test search strategies (e.g., RL, many-objective) and new oracles at minimal engineering cost provides a runtime foundation suitable for both software engineering researchers and industrial validation pipelines.

Future Directions

Planned future work includes a comprehensive empirical evaluation across the integrated ADSs, quantifying the coverage and diversity actually achieved by different search strategies within the unified infrastructure. The current design can be extended to incorporate new scenario mutation pipelines (e.g., RL-based generative adversaries), to support formal requirements-driven test case generation, and to integrate with incident databases or natural-language scenario descriptions.

Conclusion

Drivora represents a concrete step toward infrastructural convergence in the evaluation of autonomous driving systems. By offering a robust, extensible, and parallelized framework grounded in actionable scenario definitions and a modular architecture, Drivora addresses critical gaps in the state of the art for ADS testing. The framework’s ability to surface diverse categories of violations and emergent behaviors demonstrates its value for both researchers and practitioners, and positions Drivora as a validation platform for ongoing ADS safety research and engineering (2601.05685).