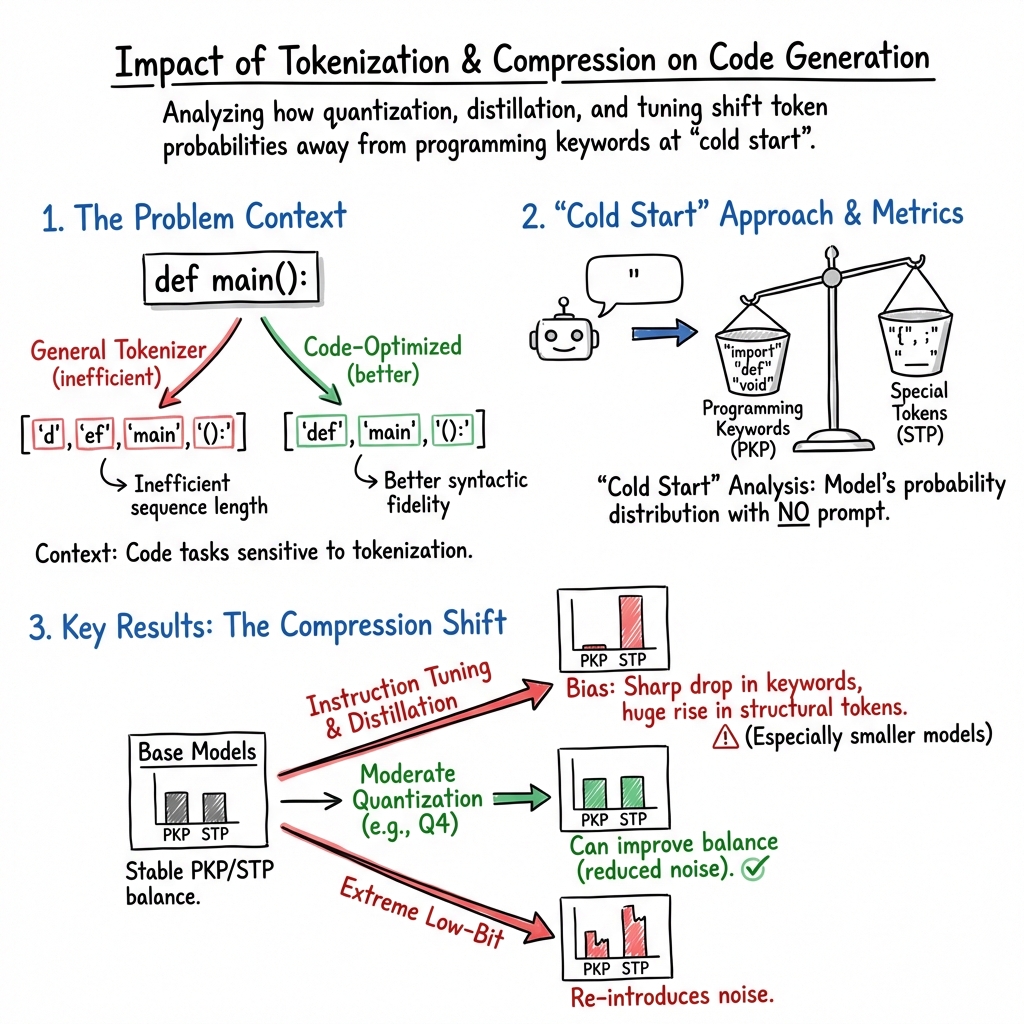

- The paper reveals that quantization and distillation significantly alter token distributions, impacting LLM performance in code generation.

- It introduces a cold-start probability analysis with metrics like PKP, STP, KAP, and NLP to evaluate token efficiency and keyword coverage.

- Findings highlight that aggressive distillation degrades token retention, urging a balanced approach to compression for robust code generation.

Compressed Code: The Hidden Effects of Quantization and Distillation on Programming Tokens

Introduction

The paper "Compressed code: the hidden effects of quantization and distillation on programming tokens" (2601.02563) examines how LLMs represent programming languages at the token level, and how that representation changes under compression techniques such as quantization and distillation. Although these models generate code well, their tokenizers are optimized for natural language rather than code-specific structures, which complicates how programming tokens are represented.

Tokenization in Programming Languages

Tokenization strongly affects how well LLMs perform on code generation tasks. Byte Pair Encoding (BPE), a prevalent tokenization method, is often less efficient on programming languages because it does not reliably preserve syntax-specific tokens as single units. Because tokenization directly influences sequence length, computational cost, and model interpretability, understanding how programming language tokens map onto model vocabularies is crucial for optimizing LLMs for code tasks.
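To make the idea of keyword coverage concrete, here is a minimal sketch that checks what fraction of a language's keywords appear as single entries in a tokenizer vocabulary. The vocabulary, the keyword list, and the function name `keyword_coverage` are all hypothetical illustrations, not the paper's data or tooling.

```python
from typing import Iterable


def keyword_coverage(vocab: Iterable[str], keywords: Iterable[str]) -> float:
    """Return the fraction of keywords that exist as a single vocabulary entry."""
    vocab_set = set(vocab)
    keyword_list = list(keywords)
    if not keyword_list:
        return 0.0
    hits = sum(1 for kw in keyword_list if kw in vocab_set)
    return hits / len(keyword_list)


# Hypothetical toy vocabulary: "import" is split into "im" + "port",
# and "lambda" is missing entirely.
toy_vocab = ["def", "return", "im", "port", "the", "class", "while", " ", "\n"]
python_keywords = ["def", "return", "import", "class", "while", "lambda"]

coverage = keyword_coverage(toy_vocab, python_keywords)
print(f"keyword coverage: {coverage:.2f}")
```

One caveat when applying this to a real tokenizer: byte-level BPE vocabularies often store a leading space as part of the token, so an exact string match like the one above may understate coverage unless those variants are checked too.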

Methodological Advances

This research introduces a cold-start probability analysis, which examines model behavior without an explicit prompt. Using this method, the paper studies how different models and tokenizers, such as Qwen2.5-Coder, Llama 3.1, and DeepSeek-R1 variants, encode programming languages by analyzing their vocabulary distributions and their coverage of programming keywords.
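As a rough illustration, the sketch below assumes that "cold start" means reading the model's next-token distribution when it has been given nothing beyond a start-of-sequence token. The logits are invented for the example and the helper names are hypothetical; a real analysis would take them from a model's first forward pass.

```python
import math


def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Convert raw logits to probabilities, subtracting the max for stability."""
    peak = max(logits.values())
    exps = {tok: math.exp(value - peak) for tok, value in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def top_k(probs: dict[str, float], k: int) -> list[tuple[str, float]]:
    """Return the k most probable tokens, highest first."""
    return sorted(probs.items(), key=lambda item: item[1], reverse=True)[:k]


# Hypothetical logits for the first generated position (no prompt supplied).
cold_start_logits = {
    "def": 2.0,
    "import": 1.5,
    "The": 1.0,
    "<|endoftext|>": 0.5,
    "#": 0.0,
}

cold_start_probs = softmax(cold_start_logits)
ranking = top_k(cold_start_probs, 3)
for token, prob in ranking:
    print(f"{token!r}: {prob:.3f}")
```

Comparing such rankings across models, or across compressed variants of one model, is one way to see which kinds of tokens a model favors before any context steers it.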

Effects of Model Compression

The paper explores two common model compression strategies: quantization and distillation. Quantization reduces numerical precision to cut computational cost while aiming to preserve model performance. Distillation, by contrast, trains a smaller student model to imitate a larger teacher, which can bias the student's output distribution if specialized tokens are not well preserved.
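The precision trade-off in quantization can be sketched with a generic symmetric uniform quantizer. This is a textbook scheme chosen for illustration, not necessarily the format used by the models in the paper, and the weight values are made up.

```python
def quantize_dequantize(values: list[float], bits: int) -> list[float]:
    """Round values onto a symmetric integer grid, then map back to floats."""
    qmax = 2 ** (bits - 1) - 1          # e.g. 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    if max_abs == 0:
        return list(values)
    scale = max_abs / qmax              # float step between adjacent grid points
    return [round(v / scale) * scale for v in values]


def max_error(original: list[float], restored: list[float]) -> float:
    """Largest absolute round-trip error across all values."""
    return max(abs(a - b) for a, b in zip(original, restored))


# Hypothetical weights, for illustration only.
weights = [0.82, -0.41, 0.05, -0.003, 0.27]

err8 = max_error(weights, quantize_dequantize(weights, 8))
err4 = max_error(weights, quantize_dequantize(weights, 4))
print(f"8-bit max error: {err8:.5f}")
print(f"4-bit max error: {err4:.5f}")
```

Fewer bits mean a coarser grid, so small weights are more likely to collapse to zero. Rounding errors of this kind are one route by which quantization can shift the probabilities a model assigns to individual tokens.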

The analysis reveals that while moderate quantization can favorably adjust the balance between programming keywords and special tokens, aggressive distillation often degrades token distribution. Interestingly, while LLMs specialized for coding tasks don't develop unique token distributions compared to general LLMs, architectural decisions significantly impact their capacity for code generation.

Probability Metrics Evaluation

To evaluate coding capabilities, the paper defines four probability metrics: Programming Keywords Probability (PKP), Special Tokens Probability (STP), Keyword Average Probability (KAP), and Natural Language Probability (NLP). These metrics measure how strongly a model leans toward programming constructs versus natural language constructs, exposing the biases introduced by different training objectives and compression techniques.
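This summary does not give the formulas behind the four metrics, so the sketch below rests on assumptions inferred from the metric names alone: PKP, STP, and NLP are taken to be the total probability mass on programming keywords, special tokens, and natural language words respectively, and KAP is taken to be PKP divided by the number of keywords. The paper's actual definitions may differ, and the token sets and probabilities here are invented.

```python
def probability_metrics(
    probs: dict[str, float],
    keywords: list[str],
    special_tokens: list[str],
    natural_words: list[str],
) -> dict[str, float]:
    """Aggregate a next-token distribution into four summary numbers.

    ASSUMPTION: these definitions are guessed from the metric names only.
    """
    pkp = sum(probs.get(tok, 0.0) for tok in keywords)        # mass on keywords
    stp = sum(probs.get(tok, 0.0) for tok in special_tokens)  # mass on special tokens
    nlp = sum(probs.get(tok, 0.0) for tok in natural_words)   # mass on natural language
    kap = pkp / len(keywords) if keywords else 0.0            # average per keyword
    return {"PKP": pkp, "STP": stp, "KAP": kap, "NLP": nlp}


# Hypothetical next-token distribution and token categories.
toy_probs = {"def": 0.30, "import": 0.20, "The": 0.25, "<|endoftext|>": 0.15, "and": 0.10}

metrics = probability_metrics(
    toy_probs,
    keywords=["def", "import", "class"],
    special_tokens=["<|endoftext|>"],
    natural_words=["The", "and"],
)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Under these assumed definitions, a compressed model whose PKP drops while its STP or NLP rises would be shifting probability mass away from code tokens.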

Practical Implications

The results underscore the nuanced relationship between tokenization and code generation. While Qwen2.5-Coder and Llama 3.1 generally offer better keyword coverage and ranking for programming languages, the use of distillation in DeepSeek-R1 notably degrades token efficiency, which underlines the need to balance compression against model capacity to sustain code generation quality.

The findings have practical ramifications for deploying LLMs in production environments under resource constraints. By offering a comprehensive framework to evaluate LLMs' readiness for code-specific applications, the paper provides a basis for making informed decisions about model compression strategies.

Conclusion

This work advances both the theoretical understanding and the practical deployment of LLMs for code generation. By characterizing the token-level effects of popular compression techniques, it offers guidance for choosing compression settings that keep performance on programming language tasks reliable. Such guidance matters increasingly as the industry relies on compressed models for efficient real-world applications.