- The paper introduces a hierarchical generative framework that decouples slate planning and item decoding to improve recommendation coherence and inference speed.

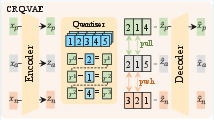

- It leverages CRQ-VAE for semantic tokenization, effectively reducing collision rates and enhancing interpretability in item representations.

- The ORPO objective aligns generation with slate-level engagement; overall, HiGR reports more than 10% improvement in offline metrics, a 5× inference speedup, and online gains in watch time and video views.

Hierarchical Planning and Preference Alignment in Generative Slate Recommendation: The HiGR Framework

Introduction

The paper "HiGR: Efficient Generative Slate Recommendation via Hierarchical Planning and Multi-Objective Preference Alignment" (2512.24787) presents a comprehensive framework for generative slate recommendation systems targeting industrial-scale deployments. The work recognizes major bottlenecks in prior generative approaches such as semantic entanglement in tokenization, inefficient sequential decoding, and inadequate holistic slate planning. To address these, HiGR introduces advanced hierarchical generation, semantic ID quantization via contrastive learning, and direct slate-level preference alignment through reinforcement learning objectives.

Framework Overview

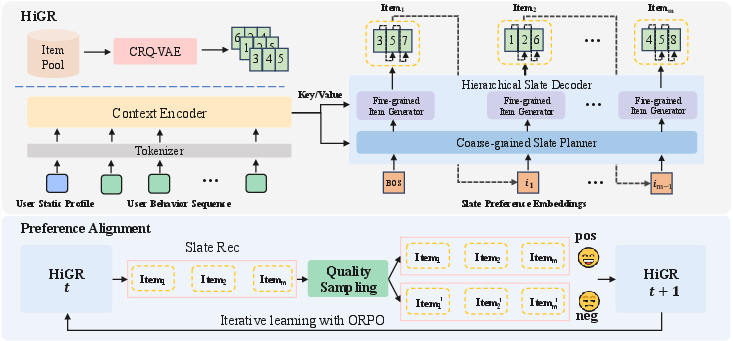

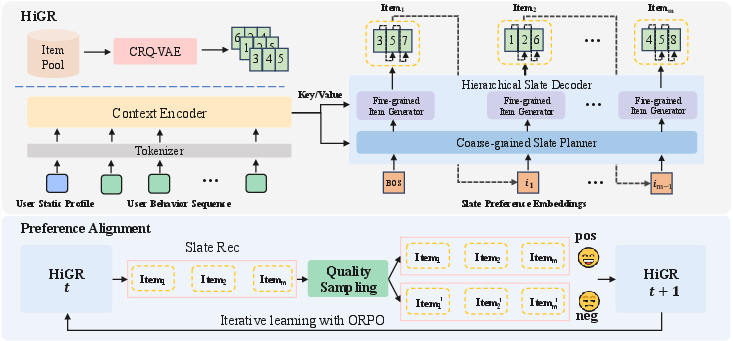

HiGR advances slate recommendation through three tightly-integrated modules:

- Contrastive Residual Quantization VAE (CRQ-VAE) for semantic tokenization: CRQ-VAE delivers structured semantic IDs with prefix-level contrastive alignment, improving the robustness, interpretability, and controllability of item representations.

- Hierarchical Slate Decoder (HSD): Decouples generation into two stages—global slate planning via preference embedding, followed by fine-grained item decoding—enabling both coherent listwise reasoning and substantial inference acceleration.

- Odds Ratio Preference Optimization (ORPO): A reinforcement learning-inspired objective directly optimizing slate-level engagement metrics without dependence on an auxiliary reference model, facilitating high-throughput and stability in training.

Figure 1: Overview of the HiGR framework, merging semantic tokenization, hierarchical decoding, and ORPO-based preference alignment for slate recommendation.

Semantic Tokenization: CRQ-VAE

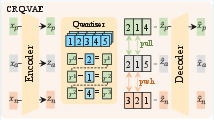

Semantic ID (SID) tokenization of items forms the core of generative slate models. HiGR's CRQ-VAE mitigates the prevailing issues of ID sparsity, entanglement, and weak collaborative signals in large-scale deployments.

The model exploits hierarchical residual quantization for item encoding. Each item embedding is mapped sequentially through multiple codebooks, capturing both coarse and fine semantics. Crucially, contrastive InfoNCE losses are applied to the codebook prefixes, pulling together positives (high semantic overlap or co-interaction) and repulsing negatives. Prefixes encode global semantics, while final codebook layers maintain item-level resolution, balancing interpretability and specificity.
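The quantization and prefix-level contrastive steps described above can be illustrated with a minimal sketch. It is not the paper's implementation: the codebooks are fixed toy values rather than learned, the encoder and decoder of the VAE are omitted, and the choice to represent a prefix as the running sum of selected codewords is an assumption. The names `residual_quantize` and `info_nce` are hypothetical.

```python
import math

def nearest_code(vec, codebook):
    """Index of the codeword closest to vec (squared L2 distance)."""
    return min(range(len(codebook)),
               key=lambda i: sum((v - c) ** 2 for v, c in zip(vec, codebook[i])))

def residual_quantize(embedding, codebooks):
    """Return (semantic_id, prefix_vectors) for one item embedding.

    semantic_id[l] is the code chosen at level l; prefix_vectors[l] is the sum
    of the codewords chosen at levels 0..l (assumed prefix representation).
    """
    residual = list(embedding)
    approx = [0.0] * len(embedding)
    semantic_id, prefix_vectors = [], []
    for codebook in codebooks:
        idx = nearest_code(residual, codebook)
        code = codebook[idx]
        semantic_id.append(idx)
        approx = [a + c for a, c in zip(approx, code)]
        prefix_vectors.append(approx)
        # The next level only sees what this level failed to explain.
        residual = [r - c for r, c in zip(residual, code)]
    return semantic_id, prefix_vectors

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0

def info_nce(anchor, positive, negatives, temperature=0.1):
    """InfoNCE loss: -log softmax score of the positive among all candidates."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    m = max(logits)
    log_denominator = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_denominator - logits[0]

# Toy codebooks (hypothetical values): level 0 is coarse, level 1 is fine.
codebooks = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[0.1, 0.0], [0.0, 0.1]],
]
sid_a, prefix_a = residual_quantize([1.1, 0.0], codebooks)
sid_b, prefix_b = residual_quantize([1.0, 0.1], codebooks)
sid_c, prefix_c = residual_quantize([0.0, 1.1], codebooks)

# Items a and b share the coarse prefix; c acts as the negative.
prefix_loss = info_nce(prefix_a[0], prefix_b[0], [prefix_c[0]])
```

In this toy setup, items `a` and `b` receive the same first-level code but different second-level codes, which mirrors the idea that prefixes carry shared semantics while the last level separates individual items.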

Figure 2: The CRQ-VAE pipeline achieves semantic ID assignment with explicit prefix-level contrastive constraints.

Numerical evaluations demonstrate that CRQ-VAE reduces collision rates and increases consistency scores over RQ-Kmeans and RQ-VAE variants, substantiating its role in semantic structure optimization.
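As a rough illustration of what a collision rate measures, the sketch below counts the fraction of items whose full semantic ID is shared with at least one other item. This definition is an assumption made for illustration; the paper's exact definition may differ, and `collision_rate` is a hypothetical helper name.

```python
from collections import Counter

def collision_rate(semantic_ids):
    """Fraction of items whose semantic ID is also assigned to another item.

    Assumed definition for illustration; not necessarily the paper's metric.
    """
    if not semantic_ids:
        return 0.0
    counts = Counter(tuple(sid) for sid in semantic_ids)
    collided = sum(n for n in counts.values() if n > 1)
    return collided / len(semantic_ids)

# Two of the four items share the ID (0, 1), so half of the items collide.
example_rate = collision_rate([(0, 1), (0, 1), (2, 3), (4, 5)])
```

A lower value means more items can be addressed unambiguously by their semantic ID alone.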

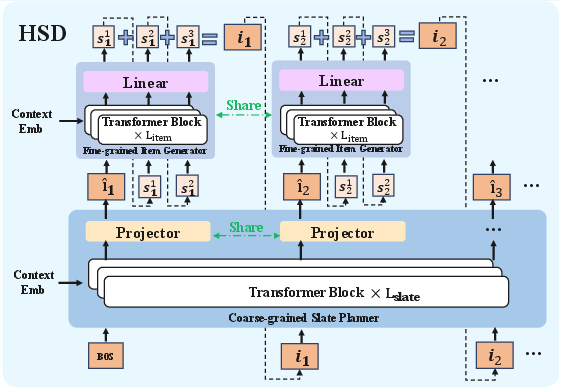

Hierarchical Slate Decoder Architecture

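Slate recommendation quality depends on capturing inter-item relationships and global list intent. HiGR’s Hierarchical Slate Decoder (HSD) separates slate planning from item realization: a planning stage first produces slate-level preference embeddings, and an item decoder then generates each item conditioned on them.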

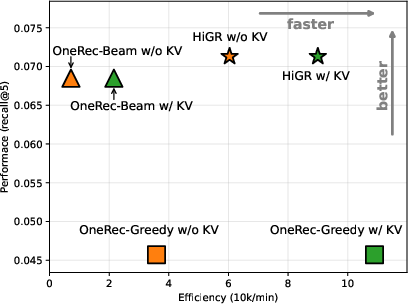

Efficient inference is delivered via Greedy-Slate Beam-Item (GSBI) search. This decoupling reduces computation from $O(M^3 D^3)$ in sequential autoregressive models to $O(M^3 + B \cdot M D^3)$, substantially lowering inference latency.
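One plausible reading of GSBI, based only on its name, is that the slate is built greedily slot by slot while each item's tokens are chosen by beam search. The sketch below follows that reading and should be treated as an assumption rather than the paper's algorithm. The planner output (`plan_slots`), the scorer factory (`make_scorer`), and all function names are hypothetical stand-ins for the learned components.

```python
import math

def beam_search_item(step_log_probs, depth, beam_width):
    """Beam search over `depth` token positions for a single item.

    step_log_probs(prefix) returns {token: log_prob} for the next position.
    Returns the best (token_tuple, total_log_prob) found.
    """
    beams = [((), 0.0)]
    for _ in range(depth):
        candidates = []
        for prefix, score in beams:
            for token, log_prob in step_log_probs(prefix).items():
                candidates.append((prefix + (token,), score + log_prob))
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = candidates[:beam_width]
    return beams[0]

def decode_slate(plan_slots, make_scorer, depth, beam_width):
    """Greedy at the slate level, beam search at the item level."""
    slate = []
    for slot in plan_slots:
        best_item, _ = beam_search_item(make_scorer(slot), depth, beam_width)
        slate.append(best_item)  # keep only the single best item per slot
    return slate

def make_scorer(slot):
    """Toy scorer: the favoured token alternates with position and slot."""
    def scorer(prefix):
        favoured = (slot + len(prefix)) % 2
        return {favoured: math.log(0.8), 1 - favoured: math.log(0.2)}
    return scorer

toy_slate = decode_slate([0, 1], make_scorer, depth=3, beam_width=2)
```

Because each slot is decoded independently once the plan is fixed, the item-level searches never attend over the full slate sequence, which is where the reduced cost in the complexity expression would come from under this reading.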

Reinforcement Preference Alignment: ORPO

HiGR aligns the generative process with actual user preferences. Odds Ratio Preference Optimization (ORPO) eliminates the need for a reference model and integrates a post-training loss that queries slate-level engagement indicators (watch time, diversity, satisfaction).

Preference pairs are constructed for three objectives: ranking fidelity (e.g., item ordering), genuine interest (positive vs. negative feedback), and slate diversity (penalizing repetitive or cocooned item sets). This triple-objective alignment directly optimizes high-value business metrics in real-world recommendation settings.
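For readers unfamiliar with ORPO, the sketch below follows the general formulation used for language models: a likelihood term on the preferred sequence plus a weighted odds-ratio term that favours the preferred sequence over the rejected one, with no reference model. How HiGR adapts this to slates, and how it weights the three kinds of preference pairs, is not specified here, so the weight `lam` and the per-token log-probability inputs are assumptions.

```python
import math

def log_odds(avg_log_prob):
    """log(p / (1 - p)) for p = exp(avg_log_prob), the length-normalised likelihood."""
    p = math.exp(avg_log_prob)
    return avg_log_prob - math.log1p(-p)

def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def orpo_loss(chosen_token_logps, rejected_token_logps, lam=0.1):
    """Negative log-likelihood of the chosen slate plus the odds-ratio penalty.

    Inputs are per-token log-probabilities (all strictly negative) of the
    preferred and the rejected slate under the current model.
    """
    avg_chosen = sum(chosen_token_logps) / len(chosen_token_logps)
    avg_rejected = sum(rejected_token_logps) / len(rejected_token_logps)
    nll = -avg_chosen
    log_odds_ratio = log_odds(avg_chosen) - log_odds(avg_rejected)
    # -log(sigmoid(r)) equals softplus(-r)
    odds_ratio_term = softplus(-log_odds_ratio)
    return nll + lam * odds_ratio_term

# Hypothetical log-probabilities: the model already prefers the chosen slate.
aligned = orpo_loss([-0.1, -0.2], [-2.0, -3.0])
misaligned = orpo_loss([-2.0, -3.0], [-0.1, -0.2])
```

The loss is lower when the model assigns higher likelihood to the preferred slate, and only the current model's probabilities are needed to compute it.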

Experimental Evaluation

Offline Metrics and Online Deployment

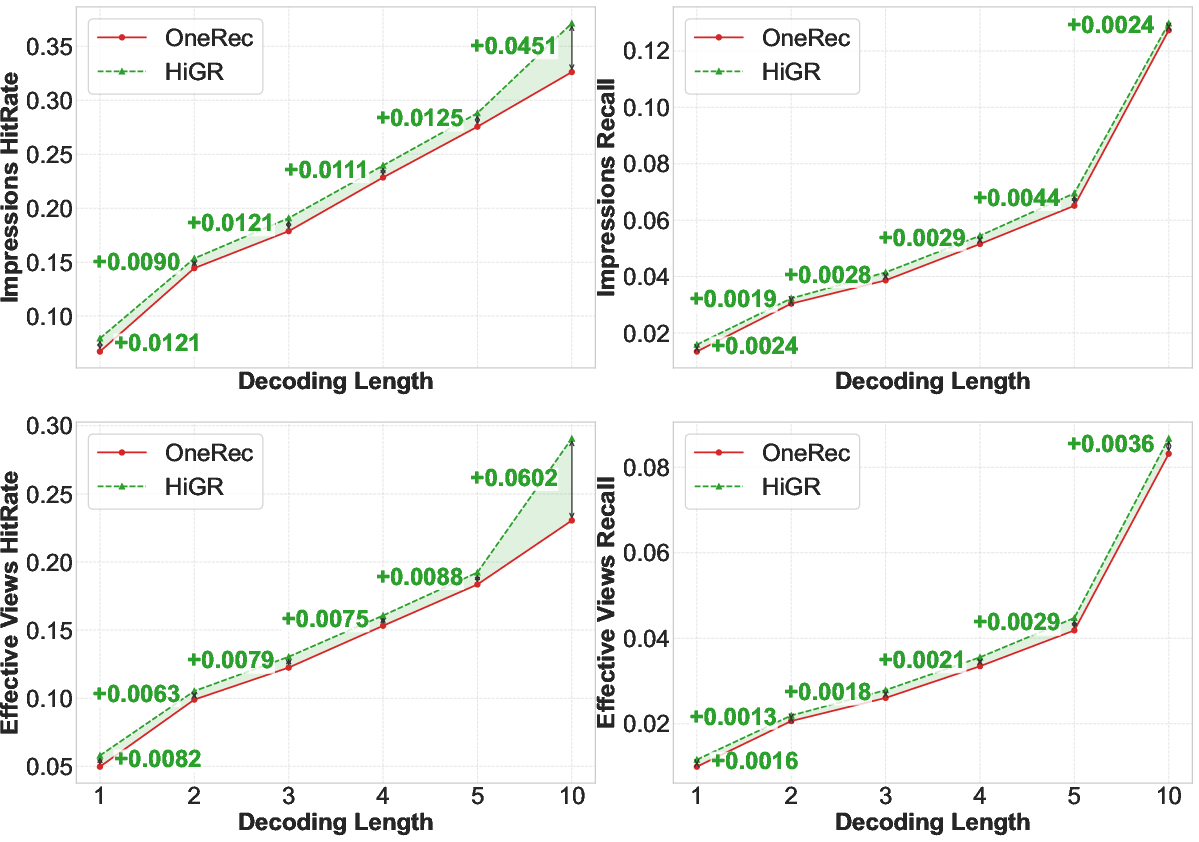

HiGR’s performance was validated on a Tencent-scale commercial media platform against classic, discriminative, and generative recommender baselines. Notable findings include:

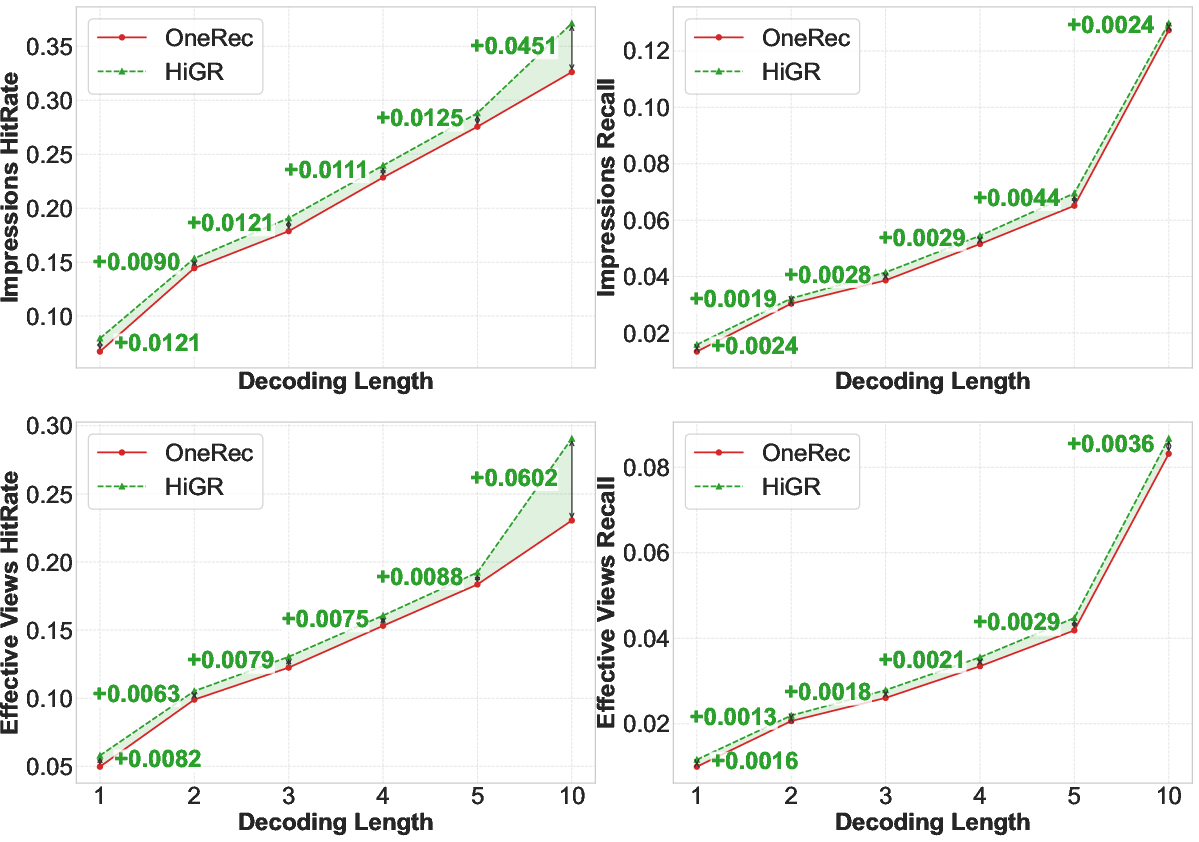

- Over 10% improvement in offline recommendation quality (NDCG, hit@5, recall@5) versus the leading generative baseline (OneRec)

- 5× inference speedup attributable to hierarchical decoding architecture

- 1.22% and 1.73% increases in Average Watch Time and Average Video Views, respectively, substantiated by large-scale online A/B testing
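The offline metrics named above are commonly computed as in the following sketch, which assumes binary relevance (an item is either relevant or not). The paper's exact evaluation protocol may differ, and the item names in the example are made up.

```python
import math

def hit_at_k(ranked, relevant, k):
    """1.0 if any of the top-k items is relevant, else 0.0."""
    return 1.0 if any(item in relevant for item in ranked[:k]) else 0.0

def recall_at_k(ranked, relevant, k):
    """Share of the relevant items that appear in the top k."""
    if not relevant:
        return 0.0
    return len(set(ranked[:k]) & set(relevant)) / len(relevant)

def ndcg_at_k(ranked, relevant, k):
    """Discounted gain of the top k, normalised by the best achievable value."""
    dcg = sum(1.0 / math.log2(pos + 2)
              for pos, item in enumerate(ranked[:k]) if item in relevant)
    ideal = sum(1.0 / math.log2(pos + 2)
                for pos in range(min(k, len(relevant))))
    return dcg / ideal if ideal > 0 else 0.0

# Hypothetical slate and ground truth.
ranked = ["a", "b", "c", "d", "e"]
relevant = {"a", "c", "z"}
hit5 = hit_at_k(ranked, relevant, 5)
recall5 = recall_at_k(ranked, relevant, 5)
ndcg5 = ndcg_at_k(ranked, relevant, 5)
```

Hit and recall ignore position within the top k, whereas NDCG rewards placing relevant items earlier in the slate.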

Ablation studies confirm that CRQ-VAE, hierarchical decoding, and ORPO individually contribute to overall performance, with sum pooling for preference embeddings and shared item generator parameters being optimal.

Figure 4: Comparative performance of HiGR and baselines across different slate decoding lengths, highlighting robust quality retention in longer sequences.

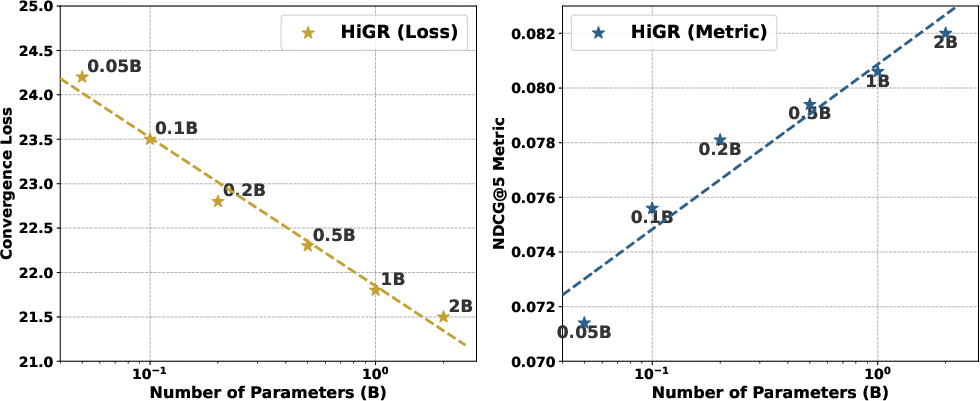

Scaling Laws

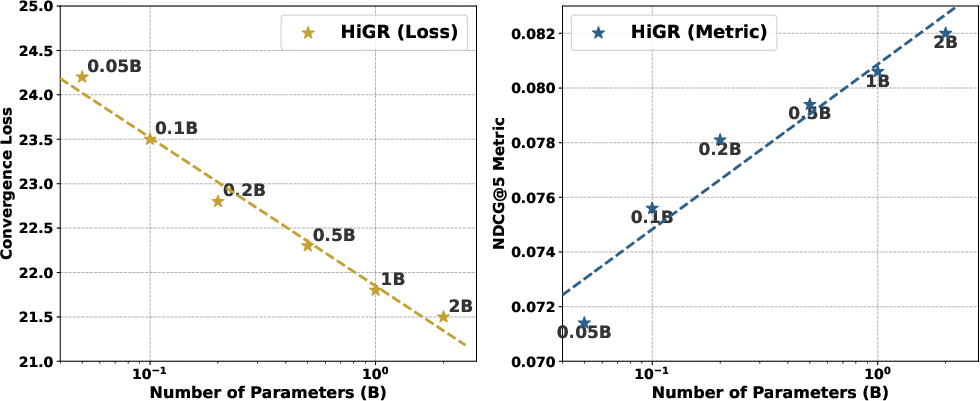

HiGR exhibits predictable scaling behavior: in the reported experiments, slate NDCG rises and convergence loss falls linearly as parameter count increases, providing a reliable growth trajectory for industrial recommendation systems.

Figure 5: Scaling behavior of HiGR in terms of convergence loss and NDCG@5 as model size increases.

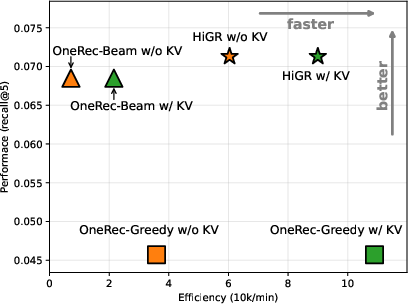

Computational Efficiency

Theoretical analysis and empirical benchmarks show that HiGR's hierarchical decoder achieves up to a fivefold speedup without loss of recommendation quality. Under both beam and greedy search settings, HiGR outperforms OneRec.
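To get a feel for the theoretical side, the leading terms of the two decoding costs, $(MD)^3$ for sequential autoregressive decoding and $M^3 + B \cdot M D^3$ for hierarchical decoding, can be compared numerically. The reading of the symbols (M as slate size, D as tokens per item, B as beam width) and the example values are assumptions, and constants are dropped, so the ratio is not a prediction of measured speedup.

```python
def sequential_cost(slate_size, tokens_per_item):
    """Leading term for flat autoregressive decoding: (M * D) ** 3."""
    return (slate_size * tokens_per_item) ** 3

def hierarchical_cost(slate_size, tokens_per_item, beam_width):
    """Leading terms for plan-then-decode: M ** 3 + B * M * D ** 3."""
    return slate_size ** 3 + beam_width * slate_size * tokens_per_item ** 3

# Hypothetical setting: 10 items per slate, 3 tokens per item, beam width 4.
flat = sequential_cost(10, 3)
hier = hierarchical_cost(10, 3, 4)
ratio = flat / hier
```

The gap widens as the slate grows, since the flat cost is cubic in the full sequence length while the hierarchical cost is cubic only in the slate size and in the per-item token count separately.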

Figure 6: Efficiency comparison: HiGR delivers markedly better effective view recall and throughput versus OneRec under identical settings.

Implications and Future Directions

HiGR sets a new modular standard for generative slate recommendation engines by harmonizing structured semantic tokenization, hierarchical inference, and direct user preference alignment:

- Practical Impact: Reduced inference latency and improved recommendation quality enable commercial deployment in high-throughput, low-latency platforms, directly translating to measurable engagement uplift.

- Theory: The hierarchical planning and semantic ID alignment techniques suggest further directions for compositional generative models in related multi-objective sequential tasks.

- Future Development: Potential advances include adaptive slate length tuning, integration of richer feedback signals, and automated preference pair construction for continuous alignment with evolving user behavior.

Conclusion

HiGR demonstrates a technically rigorous, scalable, and efficient solution for generative slate recommendation in real-world environments. Its hierarchical planning, contrastive semantic quantization, and reference-free preference alignment collectively overcome classical bottlenecks in autoregressive listwise recommender architectures. This framework provides a solid foundation for future expansion in generative recommendation research and large-scale system applications.