- The paper introduces LAP, a novel measure to quantify lookahead bias by assessing memorization in LLM-generated forecasts.

- It embeds LAP in an econometric regression and finds that higher LAP significantly amplifies the relationship between LLM forecasts and realized outcomes in both stock return and CapEx prediction tasks.

- Robustness tests confirm that LAP distinguishes recall from genuine reasoning, highlighting a critical data leakage issue in LLM-based economic analysis.

Summary of "A Test of Lookahead Bias in LLM Forecasts" (2512.23847)

Introduction and Motivation

The paper develops a formal and empirical framework to detect lookahead bias in economic and financial forecasts generated by LLMs. Lookahead bias arises when an LLM’s predictions leverage memorized outcomes from training data, thus conflating genuine reasoning with data leakage—especially problematic for stock return and corporate forecast tasks where event-specific information and subsequent market reactions are publicly documented. Traditional evaluation strategies do not adequately separate inference from recall, and current mitigation tactics (anonymization, identifier masking) are often insufficient.

Lookahead Propensity (LAP) and Membership Inference Attacks

Central to the proposed methodology is the Lookahead Propensity (LAP): a proxy for the likelihood that a prompt appeared in the LLM’s pretraining corpus. LAP is constructed using techniques from the membership inference attack (MIA) literature, specifically the MIN-K% PROB statistic. By averaging the model-assigned probabilities of only the K% least likely tokens in a prompt (here, K=20), LAP isolates memorization signals from generic language patterns that any fluent text would exhibit. Prompts that are well represented in the training data receive a higher LAP, indicating a greater risk that recall contaminates the forecast.
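The MIN-K% idea can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes per-token log-probabilities have already been obtained from the model, averages the lowest K% in log space (as in the original MIN-K% PROB formulation), and then exponentiates to return to a probability scale. The exponentiation step, the function names `min_k_prob` and `lap_score`, and the example numbers are all assumptions made for illustration.

```python
import math

def min_k_prob(token_logprobs, k=0.20):
    """Mean log-probability of the k fraction of tokens with the lowest probability."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    n_lowest = max(1, math.ceil(k * len(token_logprobs)))
    lowest = sorted(token_logprobs)[:n_lowest]  # most surprising tokens first
    return sum(lowest) / n_lowest

def lap_score(token_logprobs, k=0.20):
    """Assumed mapping of the MIN-K% statistic back to a probability scale in (0, 1]."""
    return math.exp(min_k_prob(token_logprobs, k))

# Hypothetical per-token log-probabilities for two ten-token prompts.
familiar = [-0.1, -0.2, -0.1, -0.3, -0.2, -0.1, -0.4, -0.2, -0.1, -0.3]
novel = [-0.1, -0.2, -0.1, -6.0, -0.2, -0.1, -7.5, -0.2, -0.1, -0.3]

familiar_lap = lap_score(familiar)
novel_lap = lap_score(novel)
```

A prompt with no highly surprising tokens (`familiar`) scores higher than one containing a few very unlikely tokens (`novel`), which is the sense in which the statistic targets memorization rather than overall fluency.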

Econometric Framework for Detecting Lookahead Bias

The detection of lookahead bias is formalized within an econometric regression:

$$Y_{t+1} = \beta_1 \hat{\mu}_t + \beta_2 L_t + \beta_3 \left(L_t \times \hat{\mu}_t\right) + \epsilon_{t+1}$$

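where $Y_{t+1}$ is the realized outcome, $\hat{\mu}_t$ is the LLM forecast, and $L_t$ is the LAP for the prompt. The critical test statistic is $\beta_3$: a statistically significant positive value is interpreted as evidence of lookahead bias, because it means the forecast tracks the realized outcome more closely precisely when the prompt is more likely to have been seen in training. The theoretical grounding is provided via the Frisch–Waugh–Lovell theorem and covariance analysis.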
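The interaction regression can be illustrated on simulated data. The sketch below is an assumption-laden example rather than the paper's estimation code: it adds an intercept that the stated equation omits, uses classical homoskedastic standard errors (the paper may well use clustered or robust errors), and the helper name `fit_lap_regression` and all simulated coefficients are hypothetical.

```python
import numpy as np

def fit_lap_regression(y, mu_hat, lap):
    """OLS of y on [1, mu_hat, lap, lap * mu_hat]; returns {name: (coef, t_stat)}."""
    y = np.asarray(y, dtype=float)
    mu_hat = np.asarray(mu_hat, dtype=float)
    lap = np.asarray(lap, dtype=float)
    # Intercept is an added assumption; the last column is the interaction term.
    X = np.column_stack([np.ones_like(y), mu_hat, lap, lap * mu_hat])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    dof = len(y) - X.shape[1]
    sigma2 = resid @ resid / dof
    # Classical (homoskedastic) covariance of the OLS estimator.
    cov = sigma2 * np.linalg.inv(X.T @ X)
    se = np.sqrt(np.diag(cov))
    names = ["const", "beta1", "beta2", "beta3"]
    return {n: (float(b), float(b / s)) for n, b, s in zip(names, beta, se)}

# Simulated data with a known positive interaction (true beta3 = 0.1).
rng = np.random.default_rng(0)
n = 5000
mu_hat = rng.standard_normal(n)  # standardized LLM forecast
lap = rng.standard_normal(n)     # standardized LAP
y = 0.2 * mu_hat + 0.1 * lap * mu_hat + rng.standard_normal(n)

result = fit_lap_regression(y, mu_hat, lap)
beta3_hat, beta3_t = result["beta3"]
```

In this setup the marginal effect of the forecast on the outcome is $\beta_1 + \beta_3 L_t$, so a positive `beta3_hat` with a large t-statistic means the forecast is more informative for high-LAP prompts.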

Empirical Evaluation: Stock Returns and Corporate Investment

The authors implement their LAP test on two canonical prediction tasks using Meta’s open-source Llama-3.3 LLM:

- News Headlines Predicting Stock Returns: Using Bloomberg headlines, next-day stock returns are regressed on LLM-predicted sentiment scores, with LAP computed for each headline. A one-standard-deviation increase in LAP amplifies the marginal effect of the LLM prediction by 0.077%, roughly 37% of the baseline LLM effect. Strong amplification is observed for small-cap stocks, a segment sensitive to both news memorability and market inefficiency.

- Earnings Call Transcripts Predicting CapEx: Earnings call texts are passed to the LLM, and its output is mapped to capital expenditure ratios over the following two quarters. LAP again interacts strongly with the forecast: a one-standard-deviation increase in LAP amplifies the marginal effect by 0.149% (19% of the baseline), suggesting that model performance is driven in part by recall of outcome-linked input sequences.

Both exercises demonstrate that memorization, rather than reasoning, accounts for a nontrivial proportion of LLM forecast accuracy.
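A back-of-the-envelope calculation helps put the two reported magnitudes on a common footing. The sketch below simply inverts the stated percentages to recover the implied baseline effect and then evaluates the marginal effect at different LAP levels. The inputs are the rounded figures quoted above, so the results are approximate, and the function names are hypothetical.

```python
def implied_baseline(amplification, share_of_baseline):
    """Baseline marginal effect implied by 'amplification = share * baseline'."""
    return amplification / share_of_baseline

def marginal_effect(baseline, amplification, lap_z):
    """Marginal effect of the LLM forecast at a LAP level of lap_z standard deviations."""
    return baseline + amplification * lap_z

# Stock returns: 0.077% amplification is roughly 37% of the baseline effect.
returns_base = implied_baseline(0.077, 0.37)  # roughly 0.21 (percent)
# CapEx: 0.149% amplification is 19% of the baseline effect.
capex_base = implied_baseline(0.149, 0.19)    # roughly 0.78 (percent)

# Marginal effect of the return forecast one standard deviation above
# and one standard deviation below mean LAP.
returns_high_lap = marginal_effect(returns_base, 0.077, 1.0)
returns_low_lap = marginal_effect(returns_base, 0.077, -1.0)
```

Under these rounded inputs, the return forecast's marginal effect is a little more than twice as large for prompts one standard deviation above mean LAP as for prompts one standard deviation below it.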

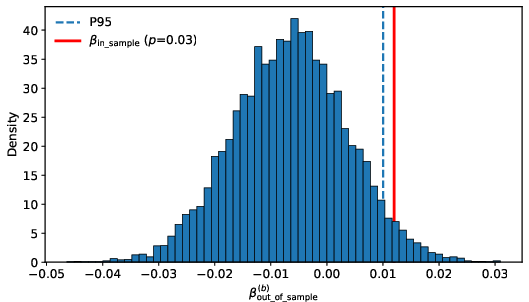

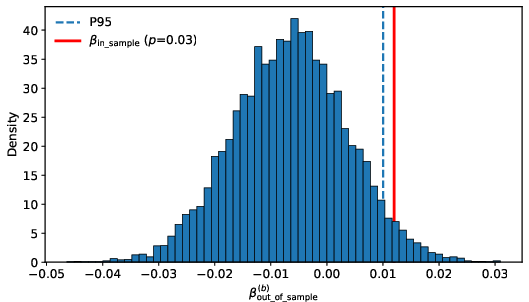

Robustness, Bootstrap Inference, and Out-of-Sample Tests

The amplification effect of LAP persists when controlling for intrinsic model confidence (first-token probability and self-reported confidence), indicating that LAP operates via a distinct mechanism. An out-of-sample test (using Llama-2 and headlines published after the model’s release) yields no significant LAP effect. Because the model cannot have memorized outcomes that postdate its training, this null result supports interpreting the in-sample relationship between LAP and forecast accuracy as a marker of data leakage.

Figure 1: Bootstrap distribution of the coefficient for the interaction between standardized LLM prediction and LAP showing clear separation between in-sample and out-of-sample periods.
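The kind of comparison shown in the figure can be sketched with a simple pairs bootstrap of the interaction coefficient. The summary does not describe the paper's exact resampling scheme, so the observation-level resampling here is an assumption, as are the helper names, the simulated data, and the chosen coefficient values (0.15 for the "in-sample" case, 0 for the "out-of-sample" case).

```python
import numpy as np

def interaction_coef(y, mu_hat, lap):
    """OLS coefficient on lap * mu_hat, with intercept and both main effects."""
    X = np.column_stack([np.ones_like(y), mu_hat, lap, lap * mu_hat])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta[3]

def bootstrap_beta3(y, mu_hat, lap, n_boot=200, seed=0):
    """Pairs bootstrap: resample observations with replacement and re-estimate."""
    y, mu_hat, lap = (np.asarray(a, dtype=float) for a in (y, mu_hat, lap))
    rng = np.random.default_rng(seed)
    n = len(y)
    draws = np.empty(n_boot)
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)
        draws[b] = interaction_coef(y[idx], mu_hat[idx], lap[idx])
    return draws

def simulate(beta3, n=5000, seed=1):
    """Hypothetical data: standardized forecast and LAP, true interaction beta3."""
    rng = np.random.default_rng(seed)
    mu_hat = rng.standard_normal(n)
    lap = rng.standard_normal(n)
    y = 0.2 * mu_hat + beta3 * lap * mu_hat + rng.standard_normal(n)
    return y, mu_hat, lap

in_draws = bootstrap_beta3(*simulate(beta3=0.15, seed=1))   # leakage present
out_draws = bootstrap_beta3(*simulate(beta3=0.0, seed=2))   # no leakage

# 95% percentile intervals for the interaction coefficient.
in_ci = np.percentile(in_draws, [2.5, 97.5])
out_ci = np.percentile(out_draws, [2.5, 97.5])
```

When leakage is present, the bootstrap distribution sits clearly above zero; when it is absent, the distribution is centered near zero, which is the separation the figure is meant to convey.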

Practical and Theoretical Implications

The LAP test constitutes a diagnostic tool for researchers and practitioners, enabling cost-efficient detection of lookahead bias without requiring retraining or proprietary corpus access. The findings complicate the interpretation of recent LLM-enabled results in empirical economics and finance, as high apparent predictive power may reflect contaminated evaluation. Lookahead bias is task-specific, depending on input type, visibility, and model architecture, so the test needs to be run separately for each application; the LAP framework itself, however, is not tied to a particular domain.

Future Directions

- Systematic integration of LAP into the backtesting and deployment pipeline for LLM-based economic forecasting.

- Development of fine-grained benchmarks with temporally separated corpora and bespoke audit trails.

- Deeper investigation into LAP as it relates to scaling laws in LLM memorization (Lu et al., 2024) and policies for model unlearning or privacy risk [carlini2022membership].

- Coordination with ongoing work on prompt engineering countermeasures, time-indexed model families [sarkar2024storieslm], and empirical validation protocols.

Conclusion

The paper establishes a rigorous theoretical and empirical basis for the detection of lookahead bias in LLM-based forecasting. The Lookahead Propensity statistic and its econometric deployment afford a practical solution to the longstanding problem of disentangling reasoning from memorization. These results underscore the necessity of auditability and context-specific analysis for credible LLM-driven inference in economics and finance.