- The paper proposes a multi-scale state space model (MS-SSM) that integrates multi-resolution analysis to capture both high-frequency and low-frequency details.

- It introduces a novel input-dependent scale-mixer and scale-specific parameter initialization to dynamically adjust information flow and enhance temporal modeling.

- Experimental results show that MS-SSM outperforms traditional SSMs and Transformers on tasks like image recognition and time series analysis, demonstrating superior long-range and hierarchical modeling capabilities.

Summary of "MS-SSM: A Multi-Scale State Space Model for Efficient Sequence Modeling" (2512.23824)

Introduction

The landscape of sequence modeling has largely been dominated by architectures such as RNNs and Transformers. Although Transformers have significantly advanced the capabilities of sequence models through their attention mechanism, attention scales quadratically with sequence length, which limits efficiency on long inputs. Recent work has therefore explored linear-time alternatives such as state space models (SSMs), which offer parallelizable training and better scalability.

The paper introduces MS-SSM, a framework that extends traditional state space models with a multi-scale design. By incorporating multi-resolution analysis, MS-SSM captures both high-frequency details and low-frequency global trends, addressing the shortcomings of traditional SSMs in memory and in representing information across scales.

State Space Model Variants

Standard SSMs and Their Limitations: Traditional SSMs process sequences with linear recurrences, which makes evaluation fast and stable. However, they often struggle to capture long-range dependencies and multi-scale information.
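To make "linear recurrence" concrete, here is a minimal sketch of a generic diagonal SSM evaluated as a sequential scan. It is not MS-SSM's parameterization: the function name and the parameters `a`, `b`, `c` are illustrative assumptions. With |a| < 1 the recurrence is stable, but an impulse decays geometrically, which is one way to see why a single-rate SSM has trouble retaining long-range information.

```python
from typing import List


def diagonal_ssm_scan(u: List[float], a: List[float], b: List[float], c: List[float]) -> List[float]:
    """Sequential scan of a diagonal linear SSM with N independent state channels.

    x_k[i] = a[i] * x_{k-1}[i] + b[i] * u_k
    y_k    = sum_i c[i] * x_k[i]
    """
    n = len(a)
    x = [0.0] * n  # hidden state, initialised to zero
    ys = []
    for u_k in u:
        x = [a[i] * x[i] + b[i] * u_k for i in range(n)]
        ys.append(sum(c[i] * x[i] for i in range(n)))
    return ys


# Impulse response of a single channel with a = 0.5: the contribution of u_0
# shrinks like 0.5**k, so distant inputs are quickly forgotten.
impulse = [1.0] + [0.0] * 9
y = diagonal_ssm_scan(impulse, a=[0.5], b=[1.0], c=[1.0])
print(y[:4])  # [1.0, 0.5, 0.25, 0.125]
```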

Enhanced SSM Designs: Nested multi-scale convolution layers decompose a signal into multiple resolutions. This multi-scale architecture lets MS-SSM maintain a richer, more diverse memory representation, which improves its ability to model long-range and hierarchical dependencies.
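The summary does not specify the convolution kernels, so the sketch below swaps in a classic fixed scheme as an illustration: an à trous (undecimated) Haar-style decomposition built from causal 2-tap averaging filters whose dilation doubles at each level. Each level splits the current approximation into a high-frequency detail band and a smoother low-frequency approximation; the bands telescope, so they sum back to the input. The function names are hypothetical, and MS-SSM's actual filters may be learned.

```python
from typing import List, Tuple


def causal_dilated_average(x: List[float], dilation: int) -> List[float]:
    # 2-tap causal low-pass filter: mean of x[t] and x[t - dilation] (zero-padded on the left)
    return [0.5 * (x[t] + (x[t - dilation] if t >= dilation else 0.0)) for t in range(len(x))]


def multiscale_decompose(x: List[float], levels: int) -> Tuple[List[List[float]], List[float]]:
    """Split x into `levels` detail bands plus one coarse approximation."""
    details = []
    approx = list(x)
    for j in range(levels):
        smoother = causal_dilated_average(approx, dilation=2 ** j)  # receptive field doubles per level
        details.append([a - s for a, s in zip(approx, smoother)])   # what this level's smoothing removed
        approx = smoother
    return details, approx


signal = [float(t % 4) for t in range(16)]
details, coarse = multiscale_decompose(signal, levels=3)
# Details telescope: (x - s1) + (s1 - s2) + (s2 - s3) + s3 == x
recon = [sum(d[t] for d in details) + coarse[t] for t in range(len(signal))]
assert all(abs(r - s) < 1e-12 for r, s in zip(recon, signal))
```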

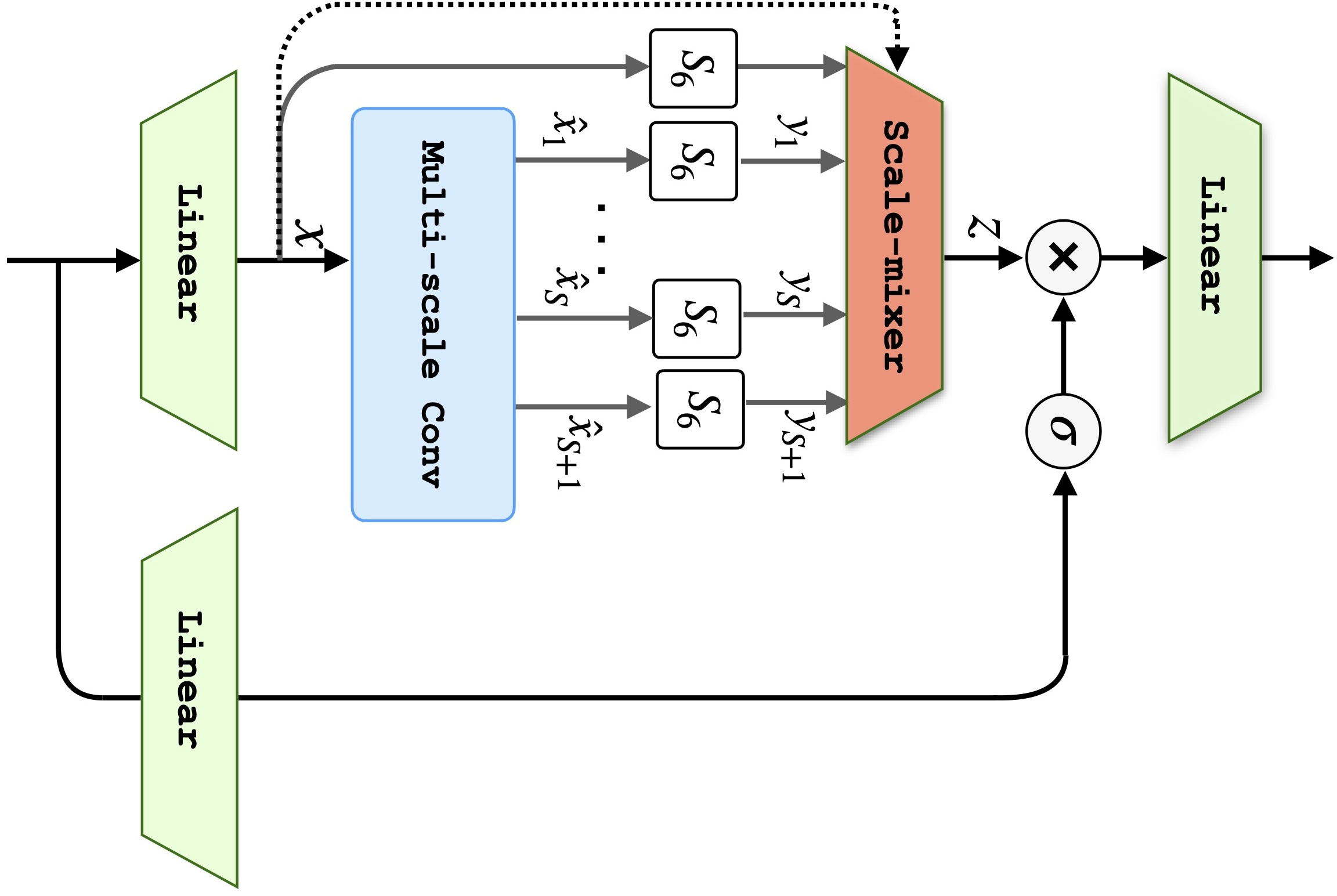

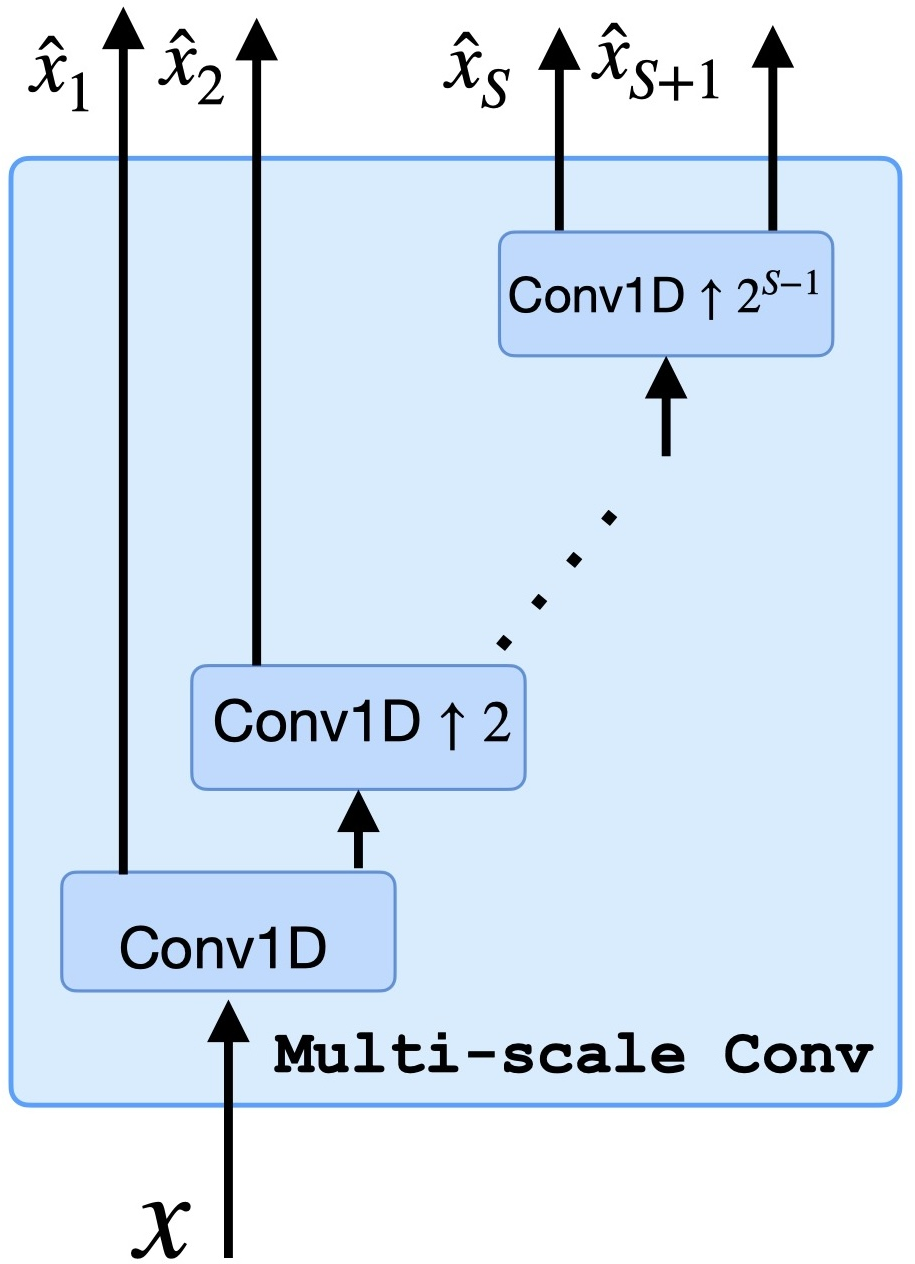

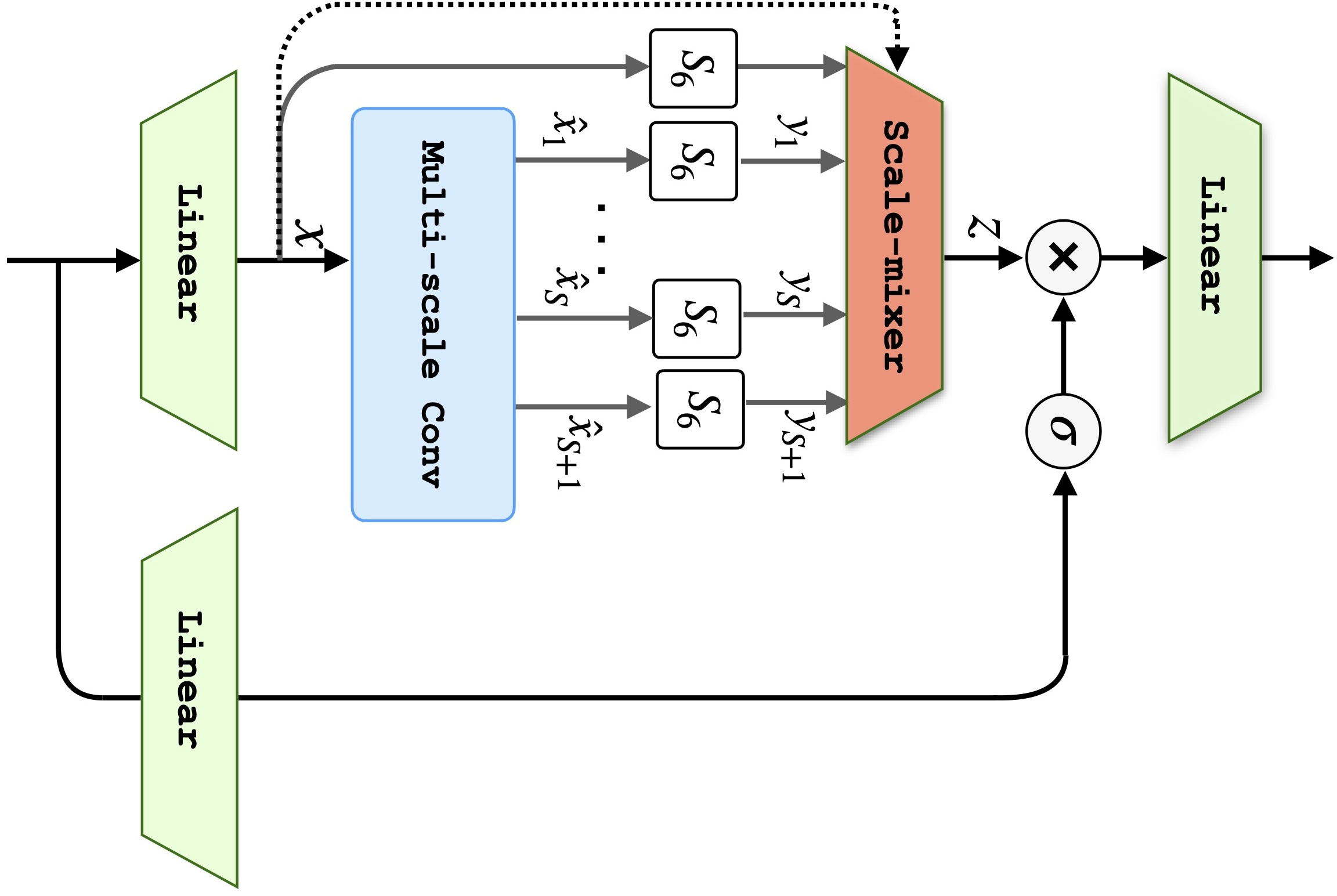

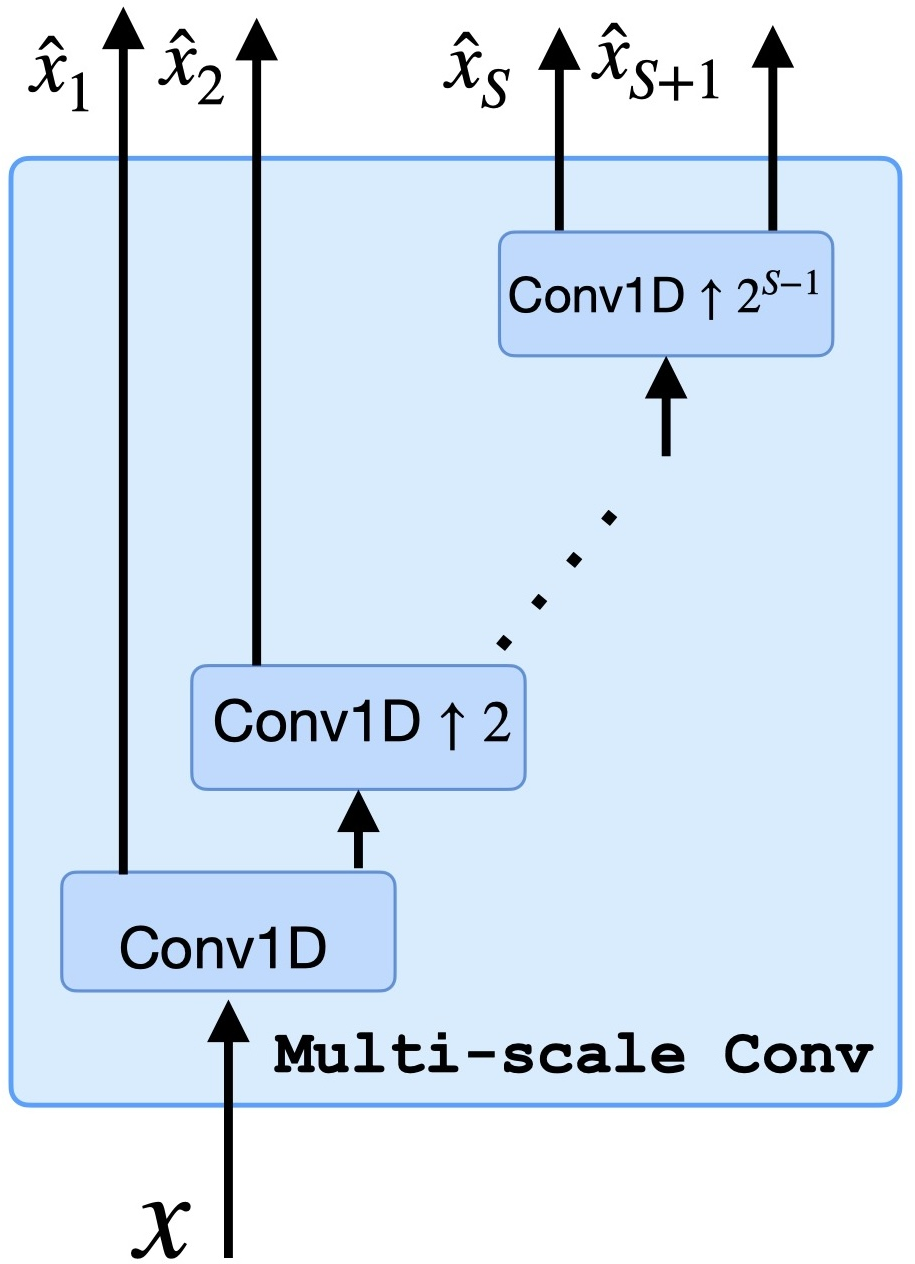

Figure 1: Diagram of the MS-SSM model, whose multi-scale convolution layers decompose the input signal into multiple scales.

Methodology

Multi-Scale Design: MS-SSM analyzes the input at multiple scales in parallel. At each scale, convolutional layers decompose the input sequence, and an SSM models the temporal dynamics of the resulting component. This setup balances high-frequency precision with low-frequency contextual information.
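A minimal sketch of the "decompose, then run an SSM per scale" pattern, assuming each scale's band has already been produced by a decomposition such as the nested convolutions described above, and assuming each branch is a scalar leaky-average recurrence with its own decay. The names `scalar_ssm` and `per_scale_ssm` and the choice of recurrence are illustrative, not the paper's layer.

```python
from typing import List


def scalar_ssm(u: List[float], a: float) -> List[float]:
    """Scalar linear recurrence x_k = a * x_{k-1} + (1 - a) * u_k (a leaky average)."""
    x, ys = 0.0, []
    for u_k in u:
        x = a * x + (1.0 - a) * u_k
        ys.append(x)
    return ys


def per_scale_ssm(bands: List[List[float]], decays: List[float]) -> List[List[float]]:
    """Apply an independent SSM to each scale's band.

    The branches share no state, so in a real implementation they could be
    evaluated in parallel; here they simply run one after another.
    """
    if len(bands) != len(decays):
        raise ValueError("need one decay per scale")
    return [scalar_ssm(band, a) for band, a in zip(bands, decays)]


bands = [[1.0, 0.0, 0.0, 0.0], [1.0, 1.0, 1.0, 1.0]]
outs = per_scale_ssm(bands, decays=[0.0, 0.5])
# Scale 0 (a = 0) passes its band through; scale 1 (a = 0.5) smooths toward 1.0.
print(outs)  # [[1.0, 0.0, 0.0, 0.0], [0.5, 0.75, 0.875, 0.9375]]
```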

Input-Dependent Scale-Mixer: A novel input-dependent scale-mixer layer dynamically adjusts the flow of information across scales according to the characteristics of the input, so the way scales are combined adapts to the data rather than being fixed.
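One plausible way to realize an input-dependent mixer, sketched under stated assumptions: at each time step, a softmax over scales computed from a linear function of the current input produces gate weights, and the per-scale outputs are blended with those weights. The softmax gating, the scalar input, and the gate parameters `w` and `bias` are all hypothetical choices for illustration; the paper's mixer may use learned projections over feature channels instead.

```python
import math
from typing import List


def softmax(z: List[float]) -> List[float]:
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def scale_mixer(u: List[float], scale_outputs: List[List[float]],
                w: List[float], bias: List[float]) -> List[float]:
    """Blend per-scale outputs with weights that depend on the current input.

    u:             input sequence of length T
    scale_outputs: S sequences of length T, one per scale
    w, bias:       hypothetical per-scale gate parameters (length S)
    """
    mixed = []
    for t in range(len(u)):
        gates = softmax([w_s * u[t] + b_s for w_s, b_s in zip(w, bias)])
        mixed.append(sum(g * out[t] for g, out in zip(gates, scale_outputs)))
    return mixed


u = [-10.0, 10.0]
scale_outputs = [[1.0, 1.0], [2.0, 2.0]]
mixed = scale_mixer(u, scale_outputs, w=[-1.0, 1.0], bias=[0.0, 0.0])
# A negative input routes almost all weight to scale 0, a positive input to scale 1.
```

With `w` set to zeros the gates become uniform and the mixer reduces to a plain average of the scales, which makes the role of the input-dependent term easy to see.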

Initialization Strategies: Scale-specific parameter initialization improves the model's ability to capture dynamics at different resolutions. The eigenvalues are initialized so that each recurrence remains stable while providing extended memory, with the memory span depending on the designated scale.
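For a scalar decay a = exp(-1/τ), any τ > 0 keeps 0 < a < 1, so the recurrence is stable, and τ is the number of steps over which a past input's influence falls by a factor of e. The sketch below uses this to assign a different memory length to each scale. The geometric schedule, the choice to give later (coarser) scales longer memory, and the parameters `base_timescale` and `growth` are assumptions for illustration, not the paper's initialization.

```python
import math
from typing import List


def init_scale_decays(num_scales: int, base_timescale: float = 2.0, growth: float = 4.0) -> List[float]:
    """Hypothetical scale-specific init for scalar SSM decays a_s = exp(-1 / tau_s).

    tau_s = base_timescale * growth**s is the e-folding memory length (in steps)
    assigned to scale s. Any tau_s > 0 gives 0 < a_s < 1, so every recurrence is
    stable, while a larger tau_s pushes a_s toward 1 and lengthens memory.
    """
    decays = []
    for s in range(num_scales):
        tau = base_timescale * growth ** s
        decays.append(math.exp(-1.0 / tau))
    return decays


decays = init_scale_decays(4)
for s, a in enumerate(decays):
    # Recover the memory length from the decay: tau = -1 / ln(a)
    print(f"scale {s}: a = {a:.4f}, e-folding memory = {-1.0 / math.log(a):.0f} steps")
```

With the defaults, the four scales get memory lengths of 2, 8, 32, and 128 steps.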

Experimental Results

Benchmarking and Evaluation: MS-SSM shows advantages across a range of tasks, including image recognition and time series analysis. On both CIFAR-10 and ImageNet-1K, MS-SSM outperformed its predecessors, highlighting the value of multi-resolution processing.

Hierarchical Reasoning: On tasks with intrinsic hierarchical dependencies, such as ListOps, MS-SSM outperformed competing models, illustrating its ability to process nested structures effectively.

Time Series and LRA: The architecture also excelled on PTB-XL electrocardiogram (ECG) classification, a time series task, and on the Long Range Arena (LRA) benchmark, showing robustness in capturing temporal patterns.

Conclusion

The research presents a compelling argument for integrating multi-scale analysis into the SSM framework. MS-SSM's design choices enable efficient memory usage and greater expressive power, which are particularly beneficial for long-range and hierarchical sequence modeling tasks. This development opens avenues for applying similar multi-resolution strategies to other model architectures and domains.

MS-SSM's contributions, especially the innovative multi-scale decomposition and scale-mixer design, offer promising directions for subsequent research. Future exploration could involve applying these mechanisms to diverse application domains, such as natural language processing and complex dynamical systems, leveraging the robust, efficient modeling capabilities that MS-SSM introduces.