Active inference and artificial reasoning

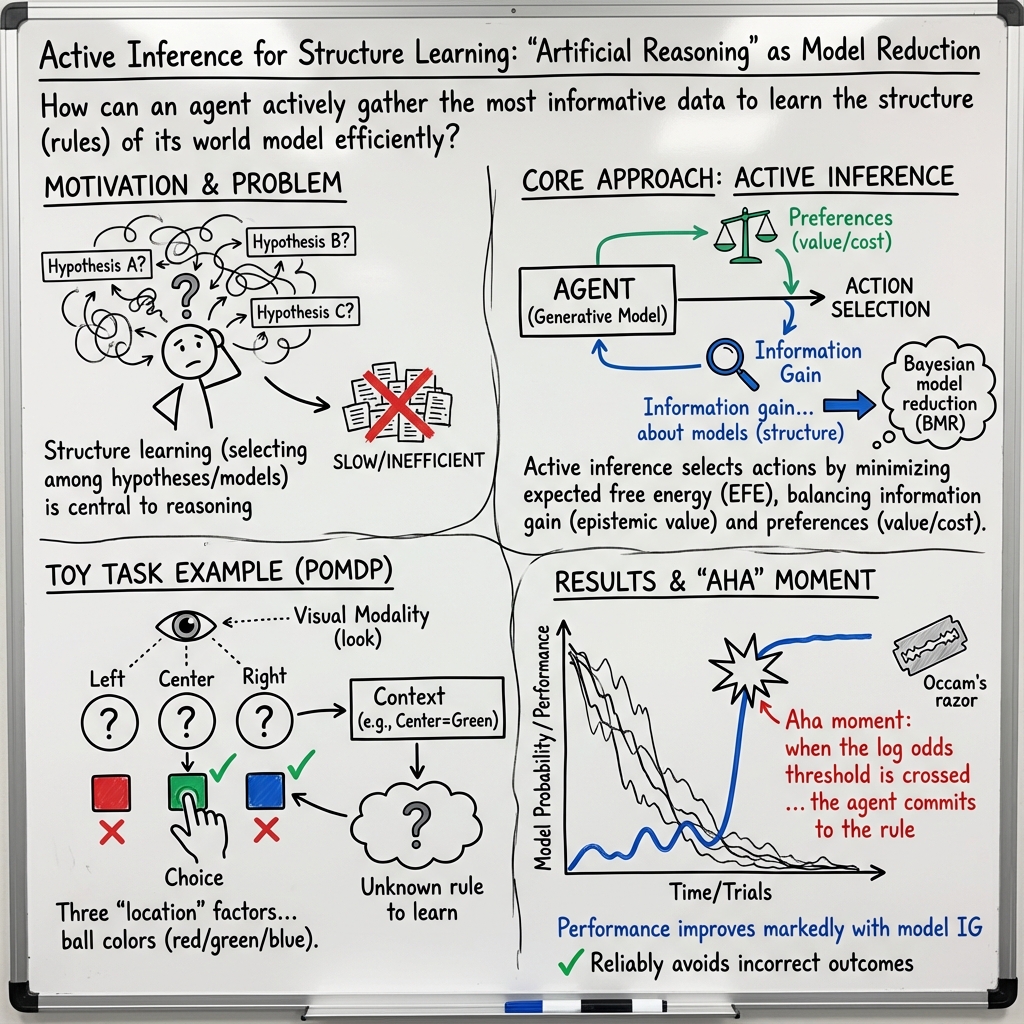

Abstract: This technical note considers the sampling of outcomes that provide the greatest amount of information about the structure of underlying world models. This generalisation furnishes a principled approach to structure learning under a plausible set of generative models or hypotheses. In active inference, policies - i.e., combinations of actions - are selected based on their expected free energy, which comprises expected information gain and value. Information gain corresponds to the KL divergence between predictive posteriors with, and without, the consequences of action. Posteriors over models can be evaluated quickly and efficiently using Bayesian Model Reduction, based upon accumulated posterior beliefs about model parameters. The ensuing information gain can then be used to select actions that disambiguate among alternative models, in the spirit of optimal experimental design. We illustrate this kind of active selection or reasoning using partially observed discrete models; namely, a 'three-ball' paradigm used previously to describe artificial insight and 'aha moments' via (synthetic) introspection or sleep. We focus on the sample efficiency afforded by seeking outcomes that resolve the greatest uncertainty about the world model, under which outcomes are generated.

Explain it Like I'm 14

Explaining “Active inference and artificial reasoning” in simple terms

Overview

This paper explores how an AI agent can “think like a scientist” to figure out the hidden rules of a game by actively choosing what to look at and what to do next. It extends a method called active inference so the agent not only learns from experience, but also plans smart actions designed to collect the most useful evidence about which rule is true. The authors show how this helps the agent reach “aha!” moments—when it suddenly becomes confident about the rule—and then act correctly with fewer trials.

Goals and questions

The paper asks:

- How can an agent choose actions that give the most information about the world’s hidden rules?

- Can we make the agent’s curiosity focused, so it collects evidence that best distinguishes between competing explanations?

- How can it quickly decide which rule is most likely, using the data it has already gathered?

In short: they want the agent to be both curious and strategic—like a good experimenter—so it learns the structure (the rule) behind what it observes, not just the best immediate action.

Methods and ideas, with everyday analogies

Think of the agent as a detective in a room with three covered boxes (the “three-ball” game). Each box hides a colored ball. The agent can only look inside one box at a time. It must guess a color and then gets feedback: correct or incorrect. The trick is that the “correct” color depends on a hidden rule based on the center ball’s color:

- If the center ball is green → the correct choice is green.

- If the center ball is red → the correct choice is the color of the left ball.

- If the center ball is blue → the correct choice is the color of the right ball.
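The rule above can be written as a small lookup. This is only an illustrative sketch: the function name `correct_choice` and the string colour labels are assumptions made for the example, not names from the paper.

```python
def correct_choice(left: str, center: str, right: str) -> str:
    """Return the colour that earns 'correct' feedback under the hidden rule."""
    if center == "green":
        return "green"   # green context: the answer is always green
    if center == "red":
        return left      # red context: copy the left ball
    if center == "blue":
        return right     # blue context: copy the right ball
    raise ValueError(f"unexpected center colour: {center!r}")


# The center ball is red, so the correct answer is the left ball's colour.
print(correct_choice("blue", "red", "green"))  # prints "blue"
```

The agent does not know this function in advance; it has to work out which mapping from context to answer is in play.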

To solve this efficiently, the agent uses a few key ideas:

- Active inference: Instead of just reacting, the agent plans by predicting what it will learn from different actions. It prefers actions that lead to valuable outcomes (being correct) and actions that reduce uncertainty (curiosity).

- Policies: These are sequences of actions (like “look at center, then look left, then choose red”). The agent evaluates policies by “expected free energy,” a score that combines expected usefulness (getting correct feedback) and expected information gain (how much it will learn).

- Information gain: This is how much an action helps reduce uncertainty. There are three useful kinds:

  - About hidden states: “If I look at the center, I’ll learn the context (red/blue/green).”

  - About parameters: “Over many trials, I learn how observations usually relate to hidden states.”

  - About models (rules): “Does the evidence fit rule A or rule B better?”

- Bayesian model reduction: Imagine the agent keeps a scorecard (tally marks) for how often each observed situation matched each proposed rule. This method allows the agent to quickly compare many possible rules using the tallies it has already collected, instead of redoing all the math from scratch. It’s like reusing your notes efficiently to decide which hypothesis explains the data best.

- Occam’s razor: After gathering enough evidence, the agent commits to the single best rule, meaning the simplest rule that still explains the observations well. It switches to that rule only when it is very confident, so it does not jump to conclusions.
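To make the "scorecard" idea concrete, here is a minimal sketch of Bayesian model reduction for a single column of Dirichlet counts. It uses the standard closed-form result for Dirichlet distributions: the evidence for a reduced prior can be scored from the full prior and posterior counts alone, without refitting. The function names and the example counts are assumptions made for illustration, and the sign convention (log evidence of the reduced model minus that of the full model) may differ from the one used in the paper.

```python
from math import lgamma


def log_beta(counts):
    """Log of the multivariate beta function, the Dirichlet normaliser."""
    return sum(lgamma(c) for c in counts) - lgamma(sum(counts))


def log_evidence_ratio(prior, posterior, reduced_prior):
    """ln p(data | reduced model) - ln p(data | full model), from counts alone."""
    # Posterior counts the reduced model would have reached on the same data.
    reduced_posterior = [q + r - p
                         for p, q, r in zip(prior, posterior, reduced_prior)]
    return (log_beta(prior) + log_beta(reduced_posterior)
            - log_beta(reduced_prior) - log_beta(posterior))


# Hypothetical tallies: flat prior, then eight observations of outcome 0.
full_prior = [1.0, 1.0]
full_posterior = [9.0, 1.0]

rule_a = [1.9, 0.1]  # hypothesis: outcome 0 is almost always generated
rule_b = [0.1, 1.9]  # hypothesis: outcome 1 is almost always generated

print(log_evidence_ratio(full_prior, full_posterior, rule_a))  # positive: supported
print(log_evidence_ratio(full_prior, full_posterior, rule_b))  # negative: refuted
```

For a whole likelihood mapping, the same score would be summed over columns. Because every hypothesis is scored from the same stored counts, many candidate rules can be compared cheaply.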

A big reason this approach is needed is that the game is “partially observed”: the agent can’t see all the balls at once and must decide where to look next. Simple trial-and-error learning often struggles in this setting. Active inference handles it by planning what to observe next to reduce uncertainty.
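One way to see why "where to look next" matters is to compute the expected information gain about the context for two possible looks. The sketch below treats it as the mutual information between the hidden state and the next observation. It assumes, for illustration only, that the three contexts are equally likely, that looking at the center reveals the context exactly, and that a side ball's colour is unrelated to the context.

```python
from math import log


def expected_information_gain(prior, likelihood):
    """Mutual information (in nats) between hidden state and next observation.

    prior[s] is the belief over states; likelihood[o][s] is P(o | s).
    """
    n_obs = len(likelihood)
    n_states = len(prior)
    # Predicted probability of each observation before looking.
    predictive = [sum(likelihood[o][s] * prior[s] for s in range(n_states))
                  for o in range(n_obs)]
    gain = 0.0
    for o in range(n_obs):
        for s in range(n_states):
            joint = likelihood[o][s] * prior[s]
            if joint > 0.0:
                gain += joint * log(likelihood[o][s] / predictive[o])
    return gain


context_prior = [1 / 3, 1 / 3, 1 / 3]                    # red, blue, green
look_center = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
look_side = [[1 / 3] * 3 for _ in range(3)]              # uninformative about context

print(expected_information_gain(context_prior, look_center))  # ln 3, about 1.10 nats
print(expected_information_gain(context_prior, look_side))    # approximately 0
```

Under these assumptions, looking at the center is the only action that tells the agent anything about the context, which is why a curious agent would look there first.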

Main findings and why they matter

- Smart sampling of evidence: By adding “information gain over models” (i.e., focusing on which observation best separates possible rules), the agent chooses actions that are not just curious, but strategically informative. This is like asking the most revealing question in a mystery.

- Sample efficiency: The agent figures out the correct rule with fewer trials because it targets observations that resolve the biggest uncertainties first (for example, looking at the center ball to determine the context before deciding where else to look).

- Fast reasoning with existing data: Bayesian model reduction lets the agent quickly evaluate which rule is most likely using the counts (tallies) it has already accumulated. This turns the collected experience into faster “thinking.”

- Clear “aha!” moments: When the evidence strongly favors one rule (the agent’s confidence crosses a high threshold), it adopts that rule—leading to correct choices and better feedback more consistently.
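The "aha!" threshold can be sketched as a log-odds test on the model evidences. This is one possible reading of the criterion, assuming a uniform prior over rules and comparing the best rule against all the others combined. The function names are hypothetical, and the default of 16 nats is the threshold reported in the Knowledge Gaps section of this page.

```python
from math import exp, log


def log_odds_of_best(log_evidences):
    """Index of the best model and its log odds against all other models."""
    best = max(range(len(log_evidences)), key=lambda m: log_evidences[m])
    others = [f for m, f in enumerate(log_evidences) if m != best]
    # Numerically stable log-sum-exp over the competing models.
    peak = max(others)
    log_sum_others = peak + log(sum(exp(f - peak) for f in others))
    return best, log_evidences[best] - log_sum_others


def should_commit(log_evidences, threshold_nats=16.0):
    """Commit to the best model only if its log odds exceed the threshold."""
    best, log_odds = log_odds_of_best(log_evidences)
    return best, log_odds > threshold_nats


# Hypothetical log evidences for three candidate rules.
print(should_commit([0.0, -20.0, -30.0]))  # (0, True): overwhelming evidence
print(should_commit([0.0, -5.0, -30.0]))   # (0, False): keep gathering evidence
```

A high threshold makes the agent slower to commit but less likely to lock in a wrong rule.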

These results show that planning with epistemic intentions (curiosity focused on resolving the right uncertainties) supports reasoning about the structure of problems, not just immediate actions. It’s a step toward AI that designs its own “experiments” to learn rules efficiently.
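As a rough illustration of how curiosity and usefulness combine when choosing what to do, the sketch below scores each policy by its expected free energy and turns the scores into probabilities with a softmax. It assumes the common convention that expected free energy is the negative of (expected information gain plus expected value). The policy names and all numbers are invented for the example.

```python
from math import exp


def policy_probabilities(info_gain, expected_value):
    """Softmax over negative expected free energy, G = -(info gain + value)."""
    neg_g = [i + v for i, v in zip(info_gain, expected_value)]
    peak = max(neg_g)                       # subtract the max for stability
    weights = [exp(x - peak) for x in neg_g]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical policies and scores (in nats).
policies = ["look at center", "look at left", "guess now"]
info_gain = [1.1, 0.0, 0.0]        # only the center look is informative
expected_value = [0.0, 0.0, -0.5]  # guessing blindly risks 'incorrect' feedback

for name, p in zip(policies, policy_probabilities(info_gain, expected_value)):
    print(f"{name}: {p:.2f}")
```

With these made-up numbers the informative policy gets the highest probability. Once uncertainty is resolved the information-gain terms shrink and the value term dominates.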

Implications and impact

This work points toward AI systems that:

- Learn rules faster with fewer examples by choosing the most informative observations.

- Handle real-world situations where you can’t see everything at once and must decide what to look at next.

- Move beyond simple trial-and-error toward deliberate reasoning—more like a scientist designing experiments.

- Could improve robots, tutoring systems, and problem-solving AIs that need to infer hidden rules (for example, puzzles like ARC-AGI challenges or navigation tasks with hidden constraints).

In essence, the paper extends active inference to include structured reasoning: the agent doesn’t just learn from whatever happens—it actively seeks the most enlightening evidence, then confidently commits to the best explanation. This makes AI more efficient, more adaptable, and closer to how humans reason when they’re curious and careful.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of unresolved issues that future work could address to strengthen, generalize, and validate the proposed approach to active reasoning via Bayesian model reduction.

- Multi-step reasoning over models is restricted to the next action: the paper explicitly avoids evaluating expected information gain over models across action sequences. How can planning be extended to multi-step horizons while maintaining tractability (e.g., via rollouts, approximate dynamic programming, or forward-updated predictive parameter counts)?

- Arbitrary Occam’s razor threshold: the choice of a 16-nat log Bayes factor and inflating selected-model counts (e.g., by 512) is heuristic. What thresholds and post-selection schemes optimize false discovery vs. detection rates, and how sensitive is behavior to these hyperparameters?

- Post-hoc selection with limited safeguards: premature commitment to a reduced model is acknowledged but not mitigated. What mechanisms (e.g., sequential Bayes factor monitoring, reversible model selection, dynamic thresholds, or evidence decay) prevent or recover from mis-selection in nonstationary or noisy environments?

- Known generative model assumption: the agent is assumed to have learned all model components except the feedback likelihood. How can the approach jointly learn the generative model structure (A, B, C, D, E) and the rule, including when state spaces, transitions, and observation noise are unknown or mis-specified?

- Scalability to large or continuous domains: all derivations assume discrete, small POMDPs and Dirichlet-Categorical parameterizations. How can the method be extended to continuous states/observations, richer parameterizations (e.g., Gaussian families, Bayesian neural networks), and high-dimensional tasks where exact beta-function computations are intractable?

- Model misspecification and noise robustness: the scheme assumes accurate feedback and stationary rules. How does active reduction behave under observation noise, stochastic feedback, adversarial/ambiguous cues, or concept drift, and what modifications (robust priors, change-point detection) are needed?

- Automatic model-space construction remains under-specified: the paper provides symmetry- and sparsity-based heuristics (context/criterion/choice factor isomorphisms) but the general procedure is incomplete. How can model spaces be automatically inferred from data (e.g., discovering factors, domains, and symmetries) with guarantees on completeness and tractability?

- Degenerate/duplicate rules: the paper notes degeneracy among the enumerated rules (79 unique out of 81). How should duplicates be detected, pruned, and represented, and what are the consequences for inference and selection?

- Expectation computation over future observations: expected information gains require expectations under predictive posteriors, but practical evaluation details (analytic vs. sampling approximations, variance control) are not provided. What are efficient, accurate methods for computing these expectations in complex tasks?

- Simultaneous multi-mapping uncertainty: the text asserts additive expected information gain when multiple likelihood mappings are unknown but does not analyze interactions or computational costs. Under what conditions is additivity valid, and how should coupling between mappings be handled?

- Long-horizon policy optimization with model uncertainty: planning two steps ahead is demonstrated for state/parameter uncertainty, but model-level uncertainty is only handled myopically. How can path-integral expected free energy incorporate model-level epistemic terms over longer horizons?

- Comparative evaluation is missing: there is no quantitative comparison to reinforcement learning, optimal experimental design baselines, intrinsic motivation methods, or other structure-learning approaches. What are the sample complexity, regret, and computational trade-offs relative to competing methods?

- Ablation and sensitivity analyses are absent: while a third section is mentioned, the paper does not report results isolating contributions of risk, ambiguity, novelty, and model-level information gain. What components are necessary/sufficient for performance and sample efficiency?

- Resource and complexity constraints: computational/memory costs of Bayesian model reduction with large hypothesis spaces are not analyzed. How does runtime scale with |M|, and what pruning, caching, or approximate search strategies (e.g., variational BMR, SMC) are effective?

- Prior preferences c and their influence: the selection relies on specific cost/value settings. How sensitive are results to c, and can preferences be learned or adapted to reflect resource constraints, task goals, or multi-objective trade-offs?

- Non-uniform structural priors: the model prior over hypotheses is uniform, despite known biases (simplicity, symmetry) in human and efficient artificial reasoning. How should non-uniform priors be set or learned, and what is their impact on convergence and generalization?

- Integration of online introspection: Bayesian model reduction is framed as offline introspection/sleep. How can recursive, online introspection be integrated with planning to support real-time reasoning, and what architectures enable this (e.g., hierarchical controllers with asynchronous BMR)?

- Recovery from wrong commitments: once a reduced model is selected and novelty seeking is suppressed, there is no mechanism to re-open exploration if evidence shifts. What schemes enable safe reversion (e.g., evidence triggers, uncertainty thresholds, elastic counts)?

- Generalization beyond the three-ball paradigm: demonstration is limited to a toy partially observed task. How does the approach perform on diverse ARC-AGI-style puzzles, real-world robotics, or tasks with richer relational/causal structure?

- Handling continuous-time and partially smoothed inference: details on smoothing vs. filtering choices are brief. What are the performance implications of smoothing, and how should message passing be adapted for continuous-time or event-based settings?

- Formal bounds and guarantees: no theoretical bounds are provided on the probability of correct model selection, expected time-to-insight, or sample efficiency. Can PAC-style, Bayesian, or information-theoretic guarantees be derived for the proposed active reduction?

- Discovering context/criterion/choice factorization from raw observations: the method assumes factor annotations. How can factors and their shared domains be discovered from raw sensory data, and how reliable is the isomorphism detection in noisy settings?

- Extension to multi-agent reasoning: the paper focuses on single-agent scenarios. How does active reduction operate in cooperative/competitive settings, and can agents share or negotiate model spaces and evidence?

- Reproducibility and implementation details: code, parameter settings, and evaluation protocols are not disclosed. Providing open implementations and benchmarks would enable replication, ablation, and broader adoption.

Glossary

- Active inference: A framework for perception, learning, and action where agents minimize free energy to infer and act under generative models. "Active inference offers a first principles approach to sense and decision-making under generative or world models."

- ARC-AGI-3 challenge: A benchmark task aiming to discover rules or abstractions without prior information, motivating reasoning and structure learning. "ARC-AGI-3 challenge (ARC-AGI-3); namely, the challenge to discover a rule, symmetry, invariance, law, or abstraction for which no prior information is available."

- Bayesian decision theory: A formalism for making optimal decisions under uncertainty using probabilistic beliefs and utilities. "Statistically speaking, expected free energy underwrites Bayes optimal behaviour, in the dual sense of Bayesian decision theory (Berger, 2011) and optimal experimental design (Lindley, 1956)."

- Bayesian filter: A sequential inference method that updates beliefs forward in time without backward smoothing. "it is sometimes simpler to omit backward messages; in which case the scheme becomes a Bayesian filter - as opposed to smoother."

- Bayesian model average: A prior or posterior formed by averaging over a set of models weighted by their probabilities. "This leads to a prior over parameters that can be read as a Bayesian model average:"

- Bayesian model reduction: A fast method to compare reduced models against a full model using posteriors from the full model. "Posteriors over models can be evaluated quickly and efficiently using Bayesian Model Reduction, based upon accumulated posterior beliefs about model parameters."

- Bayesian model selection: Choosing the best model based on the evidence (marginal likelihood) it assigns to observed data. "Bayesian model reduction is a fast and efficient form of Bayesian model selection that allows one to evaluate the evidence (i.e., marginal likelihood) of alternative models..."

- Bayesian surprise: A measure of how unexpected observations are under current beliefs, often linked to salience. "salience, corresponding to notions of Bayesian surprise in the visual search literature"

- Beta function: A special function used in the normalization of Dirichlet distributions and model evidence calculations. "Using B to denote the beta function, we have (Friston et al., 2018):"

- Categorical distribution: A probability distribution over discrete outcomes, often parameterized by Dirichlet priors. "categorical (Cat) distributions that are parameterised as Dirichlet (Dir) distributions."

- Digamma function: The derivative of the log gamma function, appearing in variational message updates with Dirichlet parameters. "ψ(·) is the digamma function."

- Dirichlet counts: Sufficient statistics (concentration parameters) for Dirichlet distributions that accumulate co-occurrence evidence. "whose sufficient statistics are Dirichlet counts, which count the number of times a particular combination of states and outcomes has been inferred."

- Dirichlet distribution: A distribution over categorical parameters used as conjugate priors for discrete models. "categorical (Cat) distributions that are parameterised as Dirichlet (Dir) distributions."

- Disentanglement: Learning representations where latent factors are statistically independent and interpretable. "In machine learning, this kind of prior underwrites disentanglement (Higgins et al., 2021; Sanchez et al., 2019)"

- Empirical prior: A prior that depends on a random variable, often set by learned expectations. "When a prior depends upon a random variable it is called an empirical prior."

- Epistemic value: The expected information gain that quantifies how much beliefs will be updated by an action. "Expected information gain or epistemic value scores the degree of belief updating an agent expects under each policy."

- Expected free energy: A quantity combining expected information gain and expected cost/value to guide action selection. "In active inference, policies - i.e., combinations of actions - are selected based on their expected free energy, which comprises expected information gain and value."

- Forney factor graph: A graphical representation of probabilistic models used to structure message passing algorithms. "the right panel presents the generative model as a Forney factor graph"

- Free energy principle: A theoretical framework stating that self-organizing systems minimize free energy to resist disorder. "Its functional form inherits from the path integral formulation of the free energy principle"

- Generative model: A probabilistic model that specifies how observations are generated from latent causes. "Active inference rests upon a generative model of observable outcomes."

- Hyperpriors: Priors placed on prior distributions themselves, controlling model complexity or selection behavior. "One could imagine invoking hyperpriors about the number of data points required before selecting a model."

- Isomorphism: A structure-preserving mapping between sets or factors, used here to constrain likelihood rules. "In particular, we will consider hypothetical likelihood mappings from latent causes to successful outcomes that feature certain isomorphisms."

- KL divergence: A measure of difference between two probability distributions used to quantify information gain. "Information gain corresponds to the KL divergence between predictive posteriors with, and without, the consequences of action."

- Log Bayes factor: The log of the ratio of model evidences used to decide when to adopt a reduced model. "a log Bayes factor (i.e., log odds ratio) scoring the probability that the best model is more likely than any other model:"

- Log odds ratio: The logarithm of odds comparing two hypotheses, used for model selection thresholds. "a log Bayes factor (i.e., log odds ratio) scoring the probability that the best model is more likely than any other model:"

- Marginal likelihood: The probability of data under a model, integrating over parameters; also called model evidence. "evaluate the evidence (i.e., marginal likelihood) of alternative models"

- Model evidence: The marginal likelihood used to compare models in Bayesian model selection. "maximises model evidence or marginal likelihood."

- Mutual information: A measure of shared information between variables; here, between states and observations. "The prior in (3) can be read as implementing a constrained principle of maximum mutual information or minimum redundancy"

- Novelty: Expected information gain about parameters, driving exploration of uncertain parts of the model. "the expected information gain is sometimes referred to as novelty; namely, the epistemic affordance offered by resolving uncertainty about the parameters of a generative model"

- Occam's razor: The principle favoring simpler models that explain data well; operationalized via model reduction. "we will refer to this form of structure learning (Gershman and Niv, 2010; Tenenbaum et al., 2011) as applying Occam's razor."

- Optimal experimental design: Selecting observations or actions that maximally disambiguate competing hypotheses. "in the spirit of optimal experimental design."

- Path integral: Summation over future sequences of actions/outcomes used to accumulate expected free energy. "Its functional form inherits from the path integral formulation of the free energy principle"

- Partially observed Markov decision process (POMDP): A decision-making model where states are hidden and must be inferred from observations. "namely, a partially observed Markov decision process (POMDP)."

- Posterior predictive distribution: The distribution of future observations given current beliefs over states and parameters. "posterior predictive distribution over parameters, hidden states and outcomes at the next time step, under a particular path."

- Probabilistic inductive logic programming: A paradigm combining logic and probability for learning rules and structures. "probabilistic inductive logic programming (Lake et al., 2015; Riguzzi et al., 2014)"

- Risk: The expected cost term in expected free energy that penalizes undesirable outcomes. "This expresses expected free energy in terms of risk, ambiguity and novelty for policy h;"

- Salience: The attentional or motivational value of observations due to expected information gain over states. "The expected information gain over states is often referred to in terms of salience"

- Savage-Dickey method: A technique for Bayesian model comparison simplifying evidence calculation between nested models. "such as the Savage-Dickey method (Savage, 1954)."

- Softmax: A normalization function mapping messages to probabilities in variational updates. "expectations about hidden states are a softmax function of messages"

- Sparse representations: Encodings where many components are zero, encouraged by mutual information priors. "and generally leads to sparse representations (Gros, 2009; Olshausen and Field, 1996; Sakthivadivel, 2022; Tipping, 2001)."

- Sparsity: The constraint that many parameters are zero, simplifying models and maximizing mutual information. "Sparsity is perhaps the simplest constraint"

- Structure learning: Discovering the model’s form (e.g., which parameters are present) rather than just their values. "we will refer to this form of structure learning (Gershman and Niv, 2010; Tenenbaum et al., 2011) as applying Occam's razor."

- Sum-product belief propagation: An exact message passing algorithm for computing marginal posteriors on graphs. "sum-product belief-propagation schemes to achieve exact marginal posteriors."

- Tensor contraction: An operation generalizing dot products over tensors used in message passing notation. "The @ notation denotes a generalised inner (i.e., dot) product or tensor contraction"

- Variational free energy: An objective combining complexity and accuracy to approximate Bayesian inference. "Technically, variational free energy is model complexity minus accuracy"

- Variational inference: Approximate Bayesian inference by optimizing a tractable family to minimize variational free energy. "In variational (a.k.a., approximate) Bayesian inference, model inversion entails the minimisation of variational free energy"

- Variational message passing: A procedure that iteratively updates local beliefs in factor graphs to minimize free energy. "Figure 1 illustrates these updates in the form of variational message passing"
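For reference, the "complexity minus accuracy" reading of variational free energy and the KL divergence used throughout the glossary can be written in their standard textbook forms. The symbols below (Q for the approximate posterior, P for the generative model, s for hidden states, o for observations) are chosen for this sketch and are not necessarily the paper's notation.

```latex
F \;=\; \underbrace{D_{KL}\big[\,Q(s)\,\|\,P(s)\,\big]}_{\text{complexity}}
   \;-\; \underbrace{\mathbb{E}_{Q(s)}\big[\ln P(o \mid s)\big]}_{\text{accuracy}},
\qquad
D_{KL}\big[\,Q\,\|\,P\,\big] \;=\; \sum_{s} Q(s)\,\ln\frac{Q(s)}{P(s)}
```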

Practical Applications

Overview

Below are practical, real-world applications that follow directly from the paper’s findings and methods on active inference, expected free energy, and Bayesian model reduction for structure learning and “active reasoning.” Applications are grouped by deployment horizon and include sectors, prospective tools/workflows, and key assumptions or dependencies that impact feasibility.

Immediate Applications

These can be prototyped or deployed now using existing tooling for POMDPs, Bayesian inference, and experimental design—especially in discrete, partially observed settings.

- Active experimental design assistant for research labs
  - Sector: academia, biotech, materials science
  - Tools/products/workflows: “Experiment Picker” that ranks next experiments by expected information gain over competing mechanistic models (BMR-backed, EFE-aware); integrations with lab automation platforms; notebooks that compute log Bayes factors to apply Occam’s razor.
  - Assumptions/dependencies: A plausible hypothesis/model space must be enumerated; data/priors representable with Dirichlet counts; relatively stationary conditions; discrete or discretized outcomes; interpretable thresholds for model selection.

- Adaptive A/B/n testing optimizer in product and marketing
  - Sector: software, e-commerce, media
  - Tools/products/workflows: Bandit-like optimizer that chooses the next variant to maximally disambiguate competing customer-behavior models rather than just maximize short-term reward; offline “sleep” phase to perform Bayesian model reduction and commit to a simpler rule/model.
  - Assumptions/dependencies: Discrete outcome and action spaces (or well-chosen discretization); defined hypothesis families (e.g., response curves, segment-specific rules); guardrails for cost/ethical constraints encoded as preferences in expected free energy.

- Diagnostic test selection in clinical decision support
  - Sector: healthcare
  - Tools/products/workflows: Decision-support that recommends the next diagnostic test to maximize expected information gain over disease hypotheses while respecting patient risk/cost preferences; uses log Bayes factors to switch to a leading diagnosis only when evidence is overwhelming.
  - Assumptions/dependencies: Valid generative models of disease and test likelihoods; interpretable thresholds; regulatory approval; careful handling of non-stationarity and population heterogeneity.

- Sensor management and active perception for robots
  - Sector: robotics, autonomous systems
  - Tools/products/workflows: Gaze/sensor-control policies that first resolve contextual uncertainty (e.g., “look at the center ball first” analogue) before acting; planners that add “model-level curiosity” (structure disambiguation) to conventional EFE.
  - Assumptions/dependencies: POMDP model of the task; partial observability; reliable belief updates (message passing or filters); discrete-state approximations or hybrid methods.

- Industrial process troubleshooting and root-cause analysis
  - Sector: manufacturing, energy, industrial IoT
  - Tools/products/workflows: Active probe selection (which measurement to run next) that disambiguates among fault models; Occam’s threshold to commit to a root cause and reduce downtime.
  - Assumptions/dependencies: A curated hypothesis space of failure modes; sensor data mapped to discrete or semi-discrete observations; stable process regimes.

- Intelligent tutoring for misconception diagnosis
  - Sector: education
  - Tools/products/workflows: Tutors that ask the most informative next question to distinguish among competing student-conception models; “sleep/introspection” phases that prune misconception models via Bayesian model reduction.
  - Assumptions/dependencies: A catalogued set of misconception models; observable outcomes (answers, steps) represented in Dirichlet form; fairness/ethics constraints encoded as preferences.

- Recommender systems with targeted disambiguation
  - Sector: software, media streaming, retail
  - Tools/products/workflows: Interactive recommenders that ask targeted questions or show specific items to disambiguate user preference structures (e.g., rule-based segments), not just refine parameter estimates.
  - Assumptions/dependencies: Hypothesis families for preference structures; user interaction costs encoded in expected free energy; privacy-preserving data handling.

- Decision heuristics in daily life
  - Sector: daily life
  - Tools/products/workflows: Practical checklists (e.g., “first look at the most informative context”) for choosing actions that clarify which rule applies, akin to the paper’s optimal “look at center first” strategy.
  - Assumptions/dependencies: Ability to enumerate plausible options/rules; willingness to delay commitment until evidence meets a threshold.

Long-Term Applications

These require further research, scaling to larger or continuous spaces, automated hypothesis space construction, or integration with broader AI systems.

- General-purpose rule discovery agents (ARC-like tasks)
  - Sector: AI research, software
  - Tools/products/workflows: Agents that automatically construct hypothesis spaces using symmetry/isomorphism detection (choice vs. criterion factors) and perform active reasoning to discover puzzle rules; “Active Reasoning SDK” for discrete tasks.
  - Assumptions/dependencies: Automated and scalable hypothesis generation; robust handling of combinatorial explosion; extension beyond discrete-only settings.

- Hybrid LLM + active inference systems
  - Sector: AI platforms
  - Tools/products/workflows: LLMs propose structured hypothesis spaces; active inference selects disambiguating actions; offline “sleep” phases perform Bayesian model reduction; pipelines that separate fast inference, slow learning, and post-hoc selection.
  - Assumptions/dependencies: Reliable model-to-environment grounding; interpretable priors over preference/cost; safe integration of action policies; benchmarks for reasoning efficacy.

- Adaptive clinical trial design with structure learning
  - Sector: healthcare, policy
  - Tools/products/workflows: Trial design platforms that select cohorts/endpoints to disambiguate among competing mechanistic/causal models, not only parameter values; commit to simpler models when Bayes factors surpass predefined thresholds.
  - Assumptions/dependencies: Valid and transparent generative/causal models; ethics/regulatory oversight; robust handling of population drift and multi-modal outcomes.

- Autonomous labs for science and materials discovery
  - Sector: biotech/chemistry/materials
  - Tools/products/workflows: Closed-loop lab robots that plan the next experiment for maximal model-level information gain and perform post-hoc Bayesian model reduction to compress scientific hypotheses.
  - Assumptions/dependencies: High-fidelity simulation or prior knowledge for generative models; safe automation; data infrastructure for evidence accumulation.

- Smart grid and IoT sensor orchestration for fault localization
  - Sector: energy, utilities, smart cities
  - Tools/products/workflows: Grid monitoring systems that choose the next sensor read/probe to disambiguate network fault models; conservative thresholds for model switching in safety-critical contexts.
  - Assumptions/dependencies: Accurate network generative models; latency and reliability constraints; scalable message passing and evidence accounting.

- Market regime inference and exploratory finance
  - Sector: finance
  - Tools/products/workflows: Research platforms that design safe “information-seeking” probes (e.g., small, diversified positions) to disambiguate market regime models; rigorous thresholds before changing models or strategies.
  - Assumptions/dependencies: Risk preferences and costs encoded in EFE; regulatory compliance; careful design to avoid market impact and unintended exposure.

- Verified safety workflows for autonomy
  - Sector: aviation, automotive, industrial robotics
  - Tools/products/workflows: Certification-oriented policy design that switches models only under strong evidence (large log Bayes factor), reducing premature belief changes; formal verification of EFE/BMR implementations.
  - Assumptions/dependencies: Standards for Bayesian evidence thresholds; explainability and auditability; real-time guarantees for inference and planning.

- Evidence-driven public policy pilots
  - Sector: public policy
  - Tools/products/workflows: Policy labs that design pilot interventions to maximize information gain over competing policy-effect models; transparent Occam’s razor criteria for adopting a simpler policy model.
  - Assumptions/dependencies: Credible causal models; social license; data governance; mechanisms to prevent overfitting and premature conclusions.

- Cognitive architectures and neuromorphic systems
  - Sector: neuroscience, advanced computing
  - Tools/products/workflows: Architectures that implement fast inference, slow learning, and offline model reduction (“sleep/introspection” analogues) for efficient structure learning and reasoning.
  - Assumptions/dependencies: Hardware/software support for message passing; benchmarks linking artificial and biological cognition; extensions beyond discrete state-spaces.

- Curriculum design and sequencing by epistemic value
  - Sector: education
  - Tools/products/workflows: Tools that schedule learning activities to maximally disambiguate the learner’s knowledge-structure models, adapting content by expected information gain at model level.
  - Assumptions/dependencies: Valid learner models; longitudinal evidence accumulation; fairness and equity considerations.

Cross-cutting caveats and dependencies

- The paper’s demonstrations assume largely discrete, partially observed models with Dirichlet parameterizations; continuous spaces and high-dimensional state/action sets require extensions.

- Expected information gain over models (structure) is evaluated for the next action; multi-step planning with structure learning needs further development or rolling updates of parameters during lookahead.

- A plausible, bounded hypothesis/model space is required; the paper provides symmetry/isomorphism-based construction heuristics, but automated and scalable enumeration is an open challenge.

- Premature model selection is possible if thresholds are too low; conservative log Bayes factor thresholds mitigate this but may delay action.

- Preference specification (cost/value over outcomes) critically shapes behavior; careful domain-specific design and validation are needed.
