Terrain Diffusion: A Diffusion-Based Successor to Perlin Noise in Infinite, Real-Time Terrain Generation

Abstract: For decades, procedural worlds have been built on procedural noise functions such as Perlin noise, which are fast and infinite, yet fundamentally limited in realism and large-scale coherence. We introduce Terrain Diffusion, an AI-era successor to Perlin noise that bridges the fidelity of diffusion models with the properties that made procedural noise indispensable: seamless infinite extent, seed-consistency, and constant-time random access. At its core is InfiniteDiffusion, a novel algorithm for infinite generation, enabling seamless, real-time synthesis of boundless landscapes. A hierarchical stack of diffusion models couples planetary context with local detail, while a compact Laplacian encoding stabilizes outputs across Earth-scale dynamic ranges. An open-source infinite-tensor framework supports constant-memory manipulation of unbounded tensors, and few-step consistency distillation enables efficient generation. Together, these components establish diffusion models as a practical foundation for procedural world generation, capable of synthesizing entire planets coherently, controllably, and without limits.

Explain it Like I'm 14

Overview

This paper introduces Terrain Diffusion, a new way to create endless, realistic landscapes for games and simulations. For many years, creators used a method called Perlin noise to quickly build infinite worlds from a single “seed” (like a password). It’s fast and consistent, but the results often look a bit fake and repetitive. Terrain Diffusion uses modern AI (diffusion models) to keep the good parts of Perlin noise—endless worlds, seed consistency, and quick access to any spot—while making the terrain look far more natural, from whole continents down to fine ridges and valleys.

What questions does the paper try to answer?

The researchers set out to solve a few practical problems:

- How can AI models create terrain that feels real at both global and local scales, not just small patches?

- How can we make this generation truly infinite, so you can explore forever in any direction?

- How can we keep “seed consistency,” meaning the same seed always gives the exact same world, no matter what order you visit places?

- How can we make it fast enough for real-time use (like games), and use constant memory so it works on consumer GPUs?

- How can we handle huge differences in elevation (from deep ocean to high mountains) so the AI stays stable and accurate?

How does it work? (Methods explained in simple terms)

Think of the world as a giant, never-ending map made from tiles. Terrain Diffusion uses a few key ideas to stitch these tiles together cleanly and quickly:

Diffusion models (the AI engine)

A diffusion model starts with pure noise (like static on a TV) and gradually “denoises” it into a realistic image. Here, the “image” is a heightmap (terrain elevation). It learns what real landscapes look like by training on Earth data.

From MultiDiffusion to InfiniteDiffusion

- MultiDiffusion: Imagine you generate a big image by blending many overlapping windows (small patches). Each window contributes its best guess, and the overlaps are smoothly averaged so there aren’t seams.

- InfiniteDiffusion: The paper extends this idea to an endless world. Instead of building the whole map at once (impossible), it only computes the part you ask for, plus just enough surrounding context. It also caches results so if you come back later, it’s instant and consistent.

In everyday terms: you’re walking with a flashlight over a dark, infinite terrain. The system only “builds” what’s lit by the flashlight, remembers it, and guarantees that if you return, you’ll see the exact same terrain again. This makes exploration fast and order-independent.
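The overlap-averaging idea can be sketched in one dimension. This is a minimal illustration, not the paper's implementation: `predict` is a hypothetical stand-in for a per-window model call, and the triangular weight is just one simple choice of linear weight window.

```python
import numpy as np

def linear_weight(window: int) -> np.ndarray:
    """Triangular weight: largest near the window centre, smallest (but nonzero) at the edges."""
    ramp = np.arange(1, window + 1, dtype=np.float64)
    return np.minimum(ramp, ramp[::-1])

def blend_windows(predict, length: int, window: int, stride: int) -> np.ndarray:
    """Weighted average of overlapping per-window predictions over a 1-D canvas."""
    total = np.zeros(length)
    weight_sum = np.zeros(length)
    w = linear_weight(window)
    for start in range(0, length - window + 1, stride):
        patch = predict(start, window)            # hypothetical per-window model call
        total[start:start + window] += w * patch  # accumulate weighted predictions
        weight_sum[start:start + window] += w     # accumulate weights for normalisation
    return total / weight_sum

def toy_predict(start: int, window: int) -> np.ndarray:
    """Toy 'model': every window sees the same underlying ramp signal."""
    return np.arange(start, start + window, dtype=np.float64)

blended = blend_windows(toy_predict, length=16, window=8, stride=4)
```

When all windows agree, the blend reproduces the signal exactly. When they disagree, the weights fade each window out toward its edges, which is what hides the seams.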

Seed consistency and constant-time random access

- Seed consistency: A seed is like a recipe. Once chosen, it deterministically decides the whole planet. No matter the order you visit areas, they’re always the same.

- Constant-time random access: Generating any single tile takes the same amount of time, even if it’s far away or you’ve never been there. You can jump to any point on the planet without first generating everything in between.
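Both properties can be illustrated with a small sketch. It assumes (this is not taken from the paper) that each window's starting noise is derived by hashing the seed together with the window's grid index. The names `window_noise` and `windows_covering` are hypothetical.

```python
import hashlib
import numpy as np

def window_noise(seed: int, ix: int, iy: int, size: int = 4) -> np.ndarray:
    """Gaussian noise for window (ix, iy), derived only from the seed and the window index."""
    digest = hashlib.sha256(f"{seed}:{ix}:{iy}".encode()).digest()
    rng = np.random.default_rng(int.from_bytes(digest[:8], "little"))
    return rng.standard_normal((size, size))

def windows_covering(x0: int, x1: int, window: int, stride: int) -> list[int]:
    """Indices i of 1-D windows [i*stride, i*stride + window) that overlap [x0, x1)."""
    first = (x0 - window) // stride + 1   # smallest i with i*stride + window > x0
    last = (x1 - 1) // stride             # largest i with i*stride < x1
    return list(range(first, last + 1))

# Same seed and index give identical noise, regardless of call order.
a = window_noise(42, 3, -7)
_ = window_noise(42, 100, 100)
b = window_noise(42, 3, -7)

# The number of windows touching a fixed-size query does not grow with distance from the origin.
near = windows_covering(0, 8, window=8, stride=4)
far = windows_covering(4_000_000, 4_000_008, window=8, stride=4)
```

Because the noise depends only on `(seed, ix, iy)`, visiting order cannot change the result. Because the number of overlapping windows is bounded, the work per query stays the same everywhere on the map.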

Infinite Tensor framework

This is a software layer that handles “infinite-sized” data like it’s normal, by streaming in only the visible pieces. It’s like Netflix for terrain tiles: it loads what you’re watching and unloads what you’ve left, keeping memory usage steady.
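The load-and-unload behaviour can be sketched with a least-recently-used cache. This is an illustration of the general idea only, not the Infinite Tensor framework's API. `TileCache` and `demo_tile` are hypothetical names.

```python
from collections import OrderedDict
import numpy as np

class TileCache:
    """Bounded tile store: computes tiles on demand and evicts the least recently used."""

    def __init__(self, compute_tile, max_tiles: int = 64):
        self.compute_tile = compute_tile      # hypothetical callable: (ix, iy) -> ndarray
        self.max_tiles = max_tiles
        self._tiles = OrderedDict()

    def get(self, ix: int, iy: int) -> np.ndarray:
        key = (ix, iy)
        if key in self._tiles:
            self._tiles.move_to_end(key)      # mark as most recently used
            return self._tiles[key]
        tile = self.compute_tile(ix, iy)
        self._tiles[key] = tile
        if len(self._tiles) > self.max_tiles:
            self._tiles.popitem(last=False)   # drop the least recently used tile
        return tile

    def __len__(self) -> int:
        return len(self._tiles)

def demo_tile(ix: int, iy: int, size: int = 4) -> np.ndarray:
    """Deterministic stand-in for a generated terrain tile."""
    return np.full((size, size), float(ix * 1000 + iy))

cache = TileCache(demo_tile, max_tiles=3)
for i in range(10):
    cache.get(i, 0)   # walk in a straight line across ten tiles
```

Memory stays bounded by `max_tiles` however far you walk. An evicted tile is simply recomputed on the next visit, which is safe only because tile generation is deterministic.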

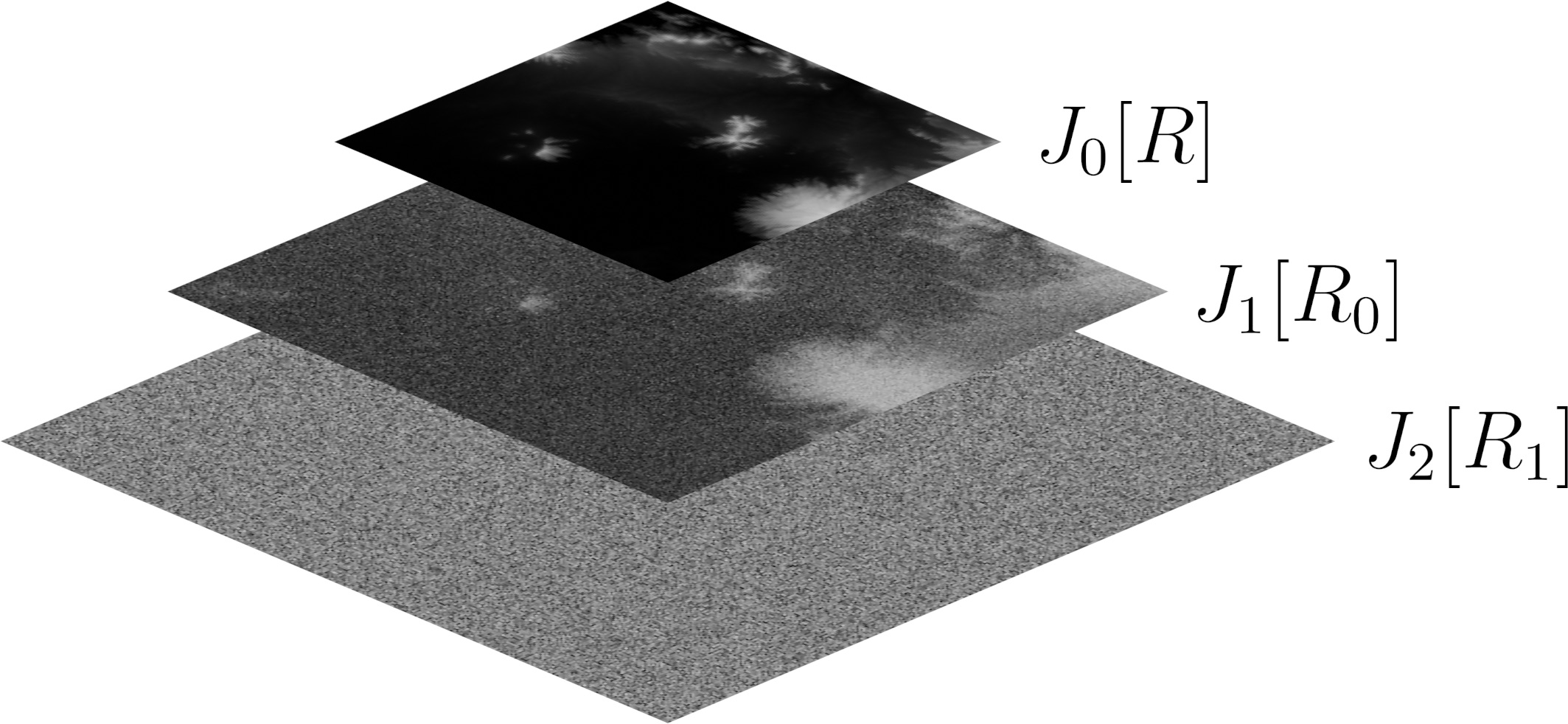

Hierarchical modeling (coarse-to-fine)

Real geography has structure at multiple scales. The system uses a stack of models:

- Coarse model: Sets up large structures—continents, climate patterns—based on simple inputs (procedural noise or a user’s sketch).

- Core latent diffusion model: Fills in mid-scale details like mountain ranges and valleys.

- Consistency decoder: Adds fine, sharp details, making ridges, erosion, and coastlines look realistic.

This pipeline keeps big-picture coherence while adding local realism.

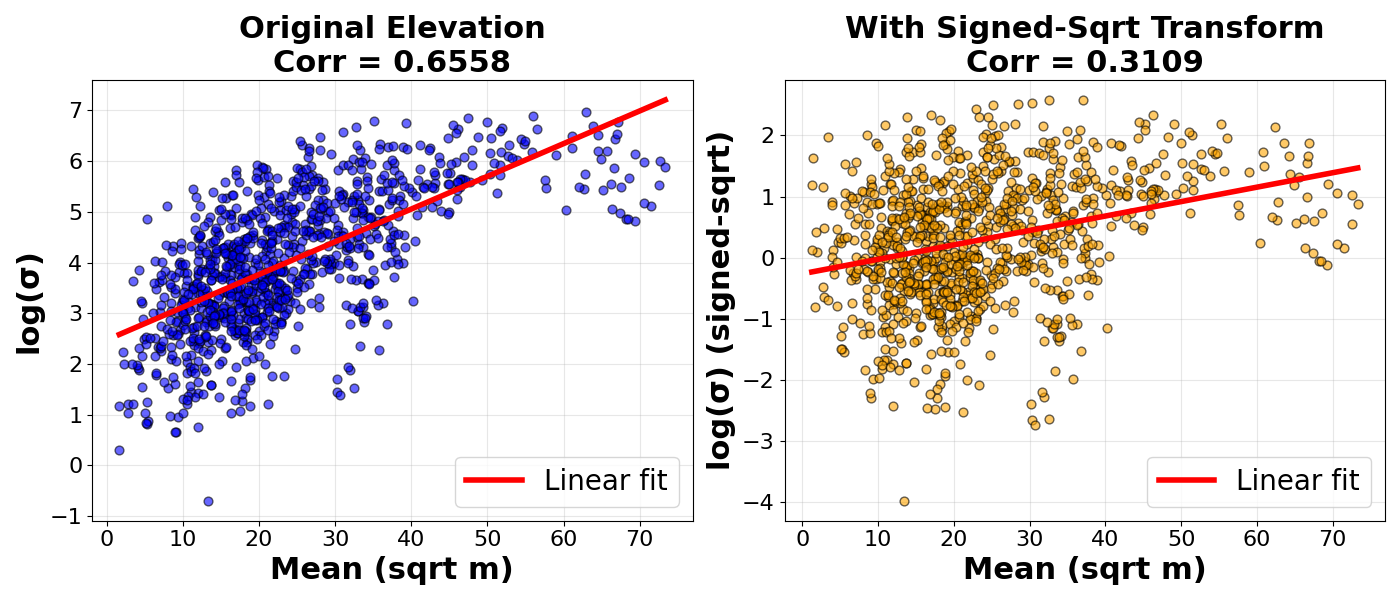

Elevation tricks to keep things stable

- Signed square-root transform: The model transforms heights so extremely tall or deep areas don’t overpower the training. It’s like turning down the volume on loud parts so the AI hears everything equally well.

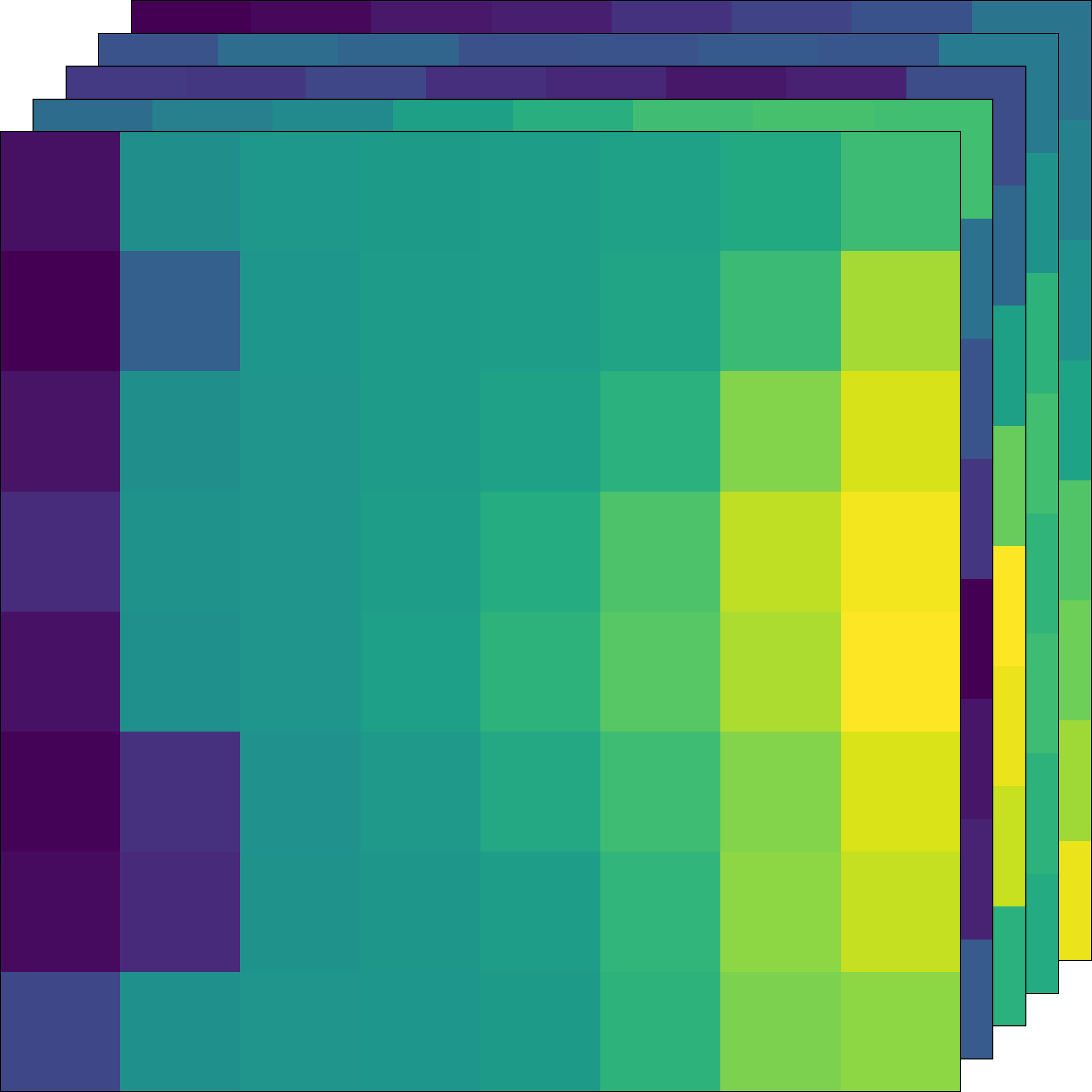

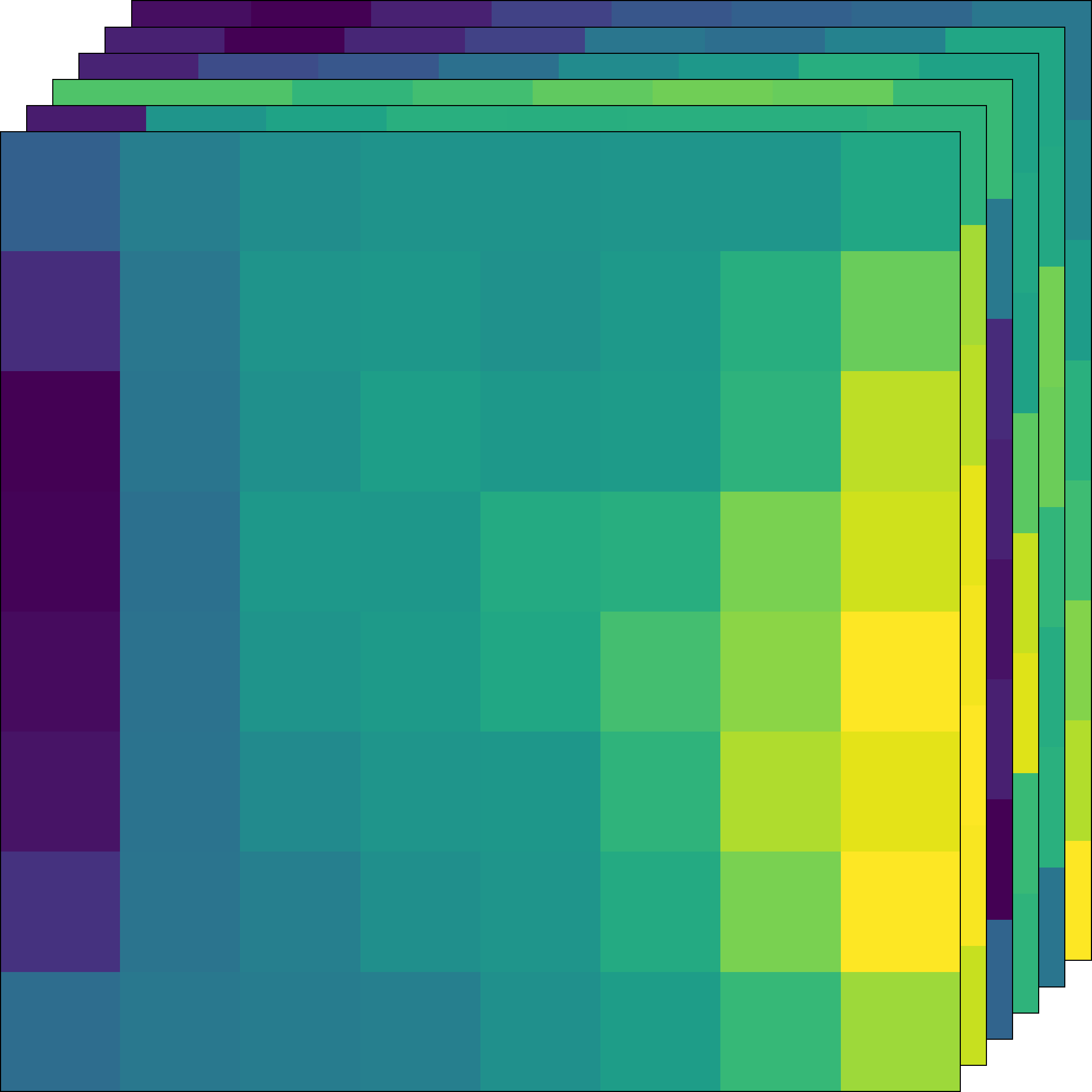

- Laplacian encoding: The model splits terrain into “low-frequency” (broad shapes) and “high-frequency” (fine details). It cleans the low-frequency part if it gets noisy, preserving crisp detail while preventing big, wobbly errors. In the paper, this step lowers the FID (an image-quality score where lower is better) of the untiled core model from 23.21 to 9.33.
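Both elevation tricks are easy to sketch in NumPy. The signed square-root follows the definition sign(z)·sqrt(|z|). The Laplacian split below uses a box-filter downsample and nearest-neighbour upsample as simple stand-ins, since the paper's blur kernel and downsample factor are not given here. The `factor=4` default is an assumption.

```python
import numpy as np

def signed_sqrt(z: np.ndarray) -> np.ndarray:
    """Compress dynamic range while keeping the sign (ocean depths stay negative)."""
    return np.sign(z) * np.sqrt(np.abs(z))

def signed_sqrt_inverse(y: np.ndarray) -> np.ndarray:
    """Undo signed_sqrt: square the magnitude and restore the sign."""
    return np.sign(y) * np.square(y)

def upsample(low: np.ndarray, factor: int) -> np.ndarray:
    """Nearest-neighbour upsample by an integer factor."""
    return np.repeat(np.repeat(low, factor, axis=0), factor, axis=1)

def laplacian_encode(height: np.ndarray, factor: int = 4):
    """Split a heightmap into a coarse low-frequency part and a full-resolution residual."""
    h, w = height.shape
    # Box-filter downsample: average each factor x factor block (assumed stand-in for blur + downsample)
    low = height.reshape(h // factor, factor, w // factor, factor).mean(axis=(1, 3))
    residual = height - upsample(low, factor)
    return low, residual

def laplacian_decode(low: np.ndarray, residual: np.ndarray, factor: int = 4) -> np.ndarray:
    """Recombine the two components into the original heightmap."""
    return upsample(low, factor) + residual

elevations = np.array([-10000.0, -4.0, 0.0, 9.0, 8848.0])  # metres
compressed = signed_sqrt(elevations)

heights = np.random.default_rng(0).normal(size=(8, 8))
low, residual = laplacian_encode(heights)
restored = laplacian_decode(low, residual)
```

The transform maps a 10,000 m ocean trench to -100 and a 9 m hill to 3, so extremes no longer dominate. The Laplacian split is lossless by construction, since the residual stores exactly what the coarse part misses.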

Fast generation with few steps

Full diffusion can be slow. The authors use “consistency models” to get similar results in just one or two steps, plus a guidance trick to sharpen outputs. This enables real-time performance on a consumer GPU.
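The one-or-two-step idea can be sketched with the generic multistep sampling loop from the consistency-model literature. This is not the paper's exact sampler: the toy consistency function below is the closed-form solution for Gaussian data, and `SIGMA_DATA`, `EPS`, `T_MAX`, and the intermediate noise level `0.8` are illustrative assumptions.

```python
import numpy as np

SIGMA_DATA = 0.5   # assumed std of the toy data distribution
EPS = 0.002        # assumed smallest noise level
T_MAX = 80.0       # assumed largest noise level

def toy_consistency_fn(x: np.ndarray, t: float) -> np.ndarray:
    """Exact consistency function when the data are N(0, SIGMA_DATA^2):
    maps a sample at noise level t to noise level EPS in a single call."""
    return x * np.sqrt(SIGMA_DATA**2 + EPS**2) / np.sqrt(SIGMA_DATA**2 + t**2)

def multistep_sample(f, shape, times, rng) -> np.ndarray:
    """One call from pure noise, then optionally re-noise and refine at each level in `times`."""
    x = f(T_MAX * rng.standard_normal(shape), T_MAX)
    for t in times:                               # decreasing intermediate noise levels
        z = rng.standard_normal(shape)
        x_t = x + np.sqrt(t**2 - EPS**2) * z      # push the estimate back up to level t
        x = f(x_t, t)                             # denoise again in a single call
    return x

rng = np.random.default_rng(0)
one_step = multistep_sample(toy_consistency_fn, (10000,), times=[], rng=rng)
two_step = multistep_sample(toy_consistency_fn, (10000,), times=[0.8], rng=rng)
```

In this toy setting both variants recover samples with roughly the data's standard deviation. With a learned model, the second step mainly corrects errors left by the first.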

What did they find, and why does it matter?

- Realism and coherence: The system generates varied landscapes—mountain chains, valleys, islands, fjords—that look natural and fit with climate. Local features align with global context, which is hard to achieve with traditional noise.

- Seamless infinite worlds: Thanks to InfiniteDiffusion, tiles blend without visible seams, even across huge areas. You can jump around the planet without breaking consistency.

- Speed: On an RTX 3090 Ti, the first tile appears in about 7.6 seconds, and each subsequent tile takes about 2.4 seconds. That’s fast enough to stream terrain as you move in real time.

- Stability and detail: The Laplacian approach and signed-sqrt transform cut errors and keep details crisp. This leads to better quality scores and smoother experiences.

- Practical demo: They plugged Terrain Diffusion into Minecraft’s world generator. The game could stream terrain based on AI elevation and climate, staying smooth during fast movement.

These results show that AI diffusion models can become a practical foundation for procedural world-building, not just for small images.

What does this mean for the future?

Terrain Diffusion bridges the old world of procedural noise and the new world of AI. It keeps the things game developers love—endless worlds, seed-based reproducibility, quick access—while adding realism and global structure. This can:

- Help build massive, believable worlds for games, sims, and virtual training.

- Let users design planets with high-level controls (like climate or beauty preferences).

- Extend beyond terrain to any large, tiled content (textures, maps, environments).

The authors note a key limitation: the system still needs some coarse guidance at the top (like Perlin noise or a sketch) to set very large structures. But they see room to add more features (soil, more climate variables, satellite imagery) and push resolution higher with extra refinement stages.

In short: Terrain Diffusion makes it possible to generate entire planets that are realistic, consistent, and endless—fast enough to explore them in real time—bringing AI terrain to the scale and control developers need.

Knowledge Gaps


Below is a consolidated list of concrete gaps, uncertainties, and open questions the paper leaves unresolved. Each item is framed to be actionable for future research.

- Top-level generator: The hierarchy relies on externally specified continental-scale structure (e.g., Perlin noise). Develop a seed-consistent, controllable learned base model for planetary-scale layout and quantify trade-offs in realism vs. user control.

- Scalability of InfiniteDiffusion: The method incurs O(T^3) complexity from region growth, effectively limiting T to 1–2. Investigate window layouts, recursive strategies, multi-step caching, or learned blending that reduce complexity and enable longer schedules without prohibitive cost.

- Decoder tiling: The high-resolution decoder is not tiled due to large context needs. Design context-efficient decoding (e.g., cross-window attention, multiscale context caches) to support truly infinite decoding at fine resolution.

- Determinism across hardware: Seed-consistency is assumed under deterministic computation, but floating-point non-associativity and mixed precision can introduce variance. Benchmark cross-hardware reproducibility and define error bounds/tolerances for strict determinism.

- Weight maps and strides: Linear weight windows and fixed stride were chosen heuristically. Systematically study the effect of window shapes, learned/adaptive weights, and stride on seams, FID, and latency; consider content-aware or learned blending.

- Constant-time random access assumptions: The O(1) claim depends on bounded overlap |κ(R)|. Measure this in practice for different window geometries and strides, and devise strategies to maintain bounded overlap under varying query patterns.

- Hydrological realism: Isolated (endorheic) basins still occur. Integrate differentiable hydrology constraints or post-processing to enforce drainage to oceans, realistic river networks, and coastal connectivity; add hydrology-specific evaluation metrics.

- Physical plausibility of landforms: Elevation-only synthesis may miss tectonic, lithologic, glacial, and volcanic processes. Test whether outputs obey geomorphological scaling laws (e.g., Hack’s law, slope–area, hypsometric curves), and incorporate appropriate conditioning or losses.

- Ocean climate conditioning: Missing ocean climate data are replaced with Gaussian noise. Develop ocean climate estimators (e.g., simplified circulation models or learned predictors) to provide realistic conditioning offshore.

- Coastal merging heuristic: The 100-pixel linear interpolation from 0 m to ocean depth is ad hoc. Evaluate artifacts and propose physically grounded coast-merging methods; quantify their impact on realism and continuity.

- Global tiling and polar regions: Equal-area longitude stretching may distort near poles and at wraparound seams. Explore geodesic or cube-sphere tilings, spherical coordinates, and seam handling to ensure coherence and path independence globally.

- Signed square-root normalization: The transform is heuristic. Analyze invertibility and its effect on hydrology and extremes; compare to alternatives (e.g., log/asinh, learned per-tile normalization), and calibrate noise schedules accordingly.

- Laplacian encoding hyperparameters: Blur kernel, downsample factor, and re-extraction choices are unspecified. Perform sensitivity analysis, formalize bias–variance trade-offs, and explore multiscale (pyramidal) encodings for further stability gains.

- Evaluation metrics: FID on elevation images may not capture geophysical realism or coherence. Introduce domain-specific metrics (topographic spectra, curvature distributions, drainage density, hypsometric integrals), seam/coherence metrics, and human perceptual studies.

- Performance portability: Latency (TTFT/TTST) is reported only on an RTX 3090 Ti. Profile across diverse GPUs/CPUs, mixed precision, multi-GPU batching, and quantify memory footprint, cache eviction/prefetch strategies; optimize initialization and incremental generation paths.

- Parallelization and batching: While window updates can be batched, the optimal scheduling is unexplored. Study batch size vs. quality/speed trade-offs and load balancing strategies for streaming engines.

- Few-step generation quality: The approach relies on consistency distillation with 1–2 steps. Characterize quality–speed curves, failure modes at T=1, and robustness under noisy/missing conditioning; compare against alternative few-step samplers (e.g., DDS, Rectified Flow).

- Latent modeling: The VAE decoder is discarded; latents are precomputed per tile. Analyze latent distribution, KL strength, and generalization when cropping; explore learned latent priors or diffusion in latent space to improve global coherence and editability.

- Conditioning breadth and controllability: Current conditioning uses coarse elevation patches plus limited climate variables. Expand to soil, vegetation, landcover, snow/ice, and assess controllability (accuracy, responsiveness) under user-specified targets.

- Aesthetic guidance: The “beauty” regressor is trained on ~150 samples with handcrafted features. Quantify bias/generalization and user control reliability; investigate learned aesthetic guidance with larger datasets and multimodal prompts.

- Infinite Tensor framework maturity: The runtime is introduced without benchmarks vs. alternatives. Document API, memory behavior, eviction/prefetch policies, concurrency/autograd integration; add stress tests and reproducibility reports.

- Order invariance in practice: Although order-invariant by design, numerical rounding may introduce subtle seams. Build a test suite to verify identical outputs under varied query orders, tile sizes, and hardware, and define tolerances.

- Cross-tile continuity in latent space: Tiling is performed in latents; quantify continuity across tile boundaries after decoding, and develop cross-tile latent regularizers or attention mechanisms to improve seam-free synthesis.

- Game engine integration: Some features (e.g., /locate biome) remain unsupported due to lack of global indices. Design hierarchical global indices (biome maps, rivers) for long-distance queries and evaluate gameplay impacts under extreme traversal.

- Generalization beyond Earth: The model trains on Earth DEM/climate; behavior under out-of-distribution conditions (fantasy climates, alien planets) is unknown. Create synthetic/augmented datasets and domain adaptation strategies; catalog failure cases.

- Ethical and licensing considerations: Dataset licensing, disclosure when synthetic terrain resembles real locations, and potential misuse are not discussed. Propose guidelines and tooling for provenance and safe deployment.

- Theoretical analysis depth: Formal properties are proved, but bounds on error accumulation across recursion/caching and stability under varying window strides/weights are not analyzed. Provide quantitative stability/error guarantees.

- Interactive editing: Beyond coarse maps, mechanisms for fine-grained edits and their propagation are limited. Develop local editing tools with global consistency constraints and study the impact of edits on coherence.

- 3D/mesh outputs and LOD: The work generates elevation rasters only. Extend to mesh/LOD pipelines, erosion-ready water networks, caves/volumes, and assess runtime/quality trade-offs for volumetric terrain.

- Networked streaming: Real-time multiplayer scenarios require serialization/compression of infinite tensors and cache consistency across clients. Investigate networking protocols, synchronization, and bandwidth–latency trade-offs.
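One way to build intuition for the cubic cost noted in the scalability item above is a simple counting model. It assumes (as an illustration, not a statement of the paper's analysis) that producing a region at one denoising step requires the previous step's result over that region padded by a fixed margin on every side. The function name and parameters are hypothetical.

```python
def cells_denoised(side: int, margin: int, steps: int) -> int:
    """Total cells processed when each earlier step needs `margin` extra cells per side."""
    total = 0
    for k in range(steps):
        padded = side + 2 * margin * k   # region needed k steps before the final one
        total += padded * padded         # 2-D area denoised at that step
    return total

cost_by_steps = {t: cells_denoised(side=64, margin=32, steps=t) for t in (1, 2, 4, 8)}
```

Under this model the padded side grows linearly with the number of steps, the area grows quadratically, and summing over all steps gives cubic growth. That matches the intuition for why very short schedules are the practical choice.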

Glossary

- AutoGuidance: An inference-time guidance scheme that steers sampling using a weaker version of the same model (for example, a smaller or less-trained one) to improve fidelity. "To improve fidelity further, we apply the guidance scheme proposed in AutoGuidance \cite{Karras2024autoguidance}."

- Bathymetry: The measurement of ocean depths and underwater topography. "while ocean bathymetry is taken from the 30-arc-second ETOPO dataset \cite{noaa_national_geophysical_data_center_etopo1_2009}."

- Bilinear interpolation: A resampling method that linearly interpolates along two dimensions to upsample images. "For these interactive visualizations, we apply bilinear interpolation to upsample the heightmaps ."

- BlockFusion: A generative approach that conditions tiles on neighbors to produce large, continuous worlds. "BlockFusion \cite{wu_blockfusion_2024} and WorldGrow \cite{li_worldgrow_2025} generate worlds by conditioning each tile on its neighbors, producing continuous worlds but without seed consistency, since outputs depend on sampling order."

- Consistency decoder: A decoder trained as a consistency model to expand latent representations into high-fidelity outputs in few steps. "Finally, a consistency decoder expands these latents into a high-fidelity elevation map."

- Consistency distillation: Training that distills multi-step diffusion into one or few steps for faster sampling while retaining quality. "few-step consistency distillation \cite{song_consistency_2023, lu_simplifying_2025} enables real-time inference"

- Consistency models: Generative models that approximate diffusion sampling in one or a few steps, enabling faster generation. "Consistency models \cite{song_consistency_2023} approximate denoised diffusion outputs in one or a few steps, and continuous variants \cite{lu_simplifying_2025} achieve throughput competitive with GANs while retaining most of the quality of full diffusion sampling, enabling real-time use cases."

- Constant-time random access: A property where any fixed-size region can be generated in time independent of its position. "Assuming that for any window region , InfiniteDiffusion guarantees constant-time random access."

- Denoising diffusion models: Generative models that iteratively refine noise into samples through a denoising process. "Denoising diffusion models \cite{ho_denoising_2020, pmlr-v37-sohl-dickstein15} generate samples by iteratively refining noise and are widely used for high-fidelity synthesis."

- EDM2: A diffusion model backbone architecture used as the core network in the hierarchy. "All models share a common EDM2 \cite{Karras2024edm2} backbone with the modifications proposed in sCM \cite{lu_simplifying_2025}."

- Equal-area tiling: A tiling strategy where each tile covers the same surface area to ensure consistent spatial scale. "This equal-area tiling ensures consistent scale across training and allows all models to train on data without distortion."

- ETOPO: A global dataset providing Earth’s topography and ocean bathymetry. "while ocean bathymetry is taken from the 30-arc-second ETOPO dataset \cite{noaa_national_geophysical_data_center_etopo1_2009}."

- fractal Brownian motion (fBm): A procedural noise technique combining multiple octaves to create fractal-like textures. "often combined with fractal Brownian motion (fBm) \cite{fournier_computer_1982}."

- Fréchet Inception Distance (FID): A metric for evaluating generative image quality by comparing feature distributions. "This Laplacian denoising step reduces the FID of the untiled core diffusion model (introduced next) from $23.21$ to $9.33$"

- GAN (Generative Adversarial Network): A generative framework with a generator and discriminator trained adversarially. "InfinityGAN \cite{lin_infinitygan_2022} produces infinite images with GANs but does not extend to diffusion models, limiting scalability."

- Hadamard product: Elementwise multiplication of tensors. "where denotes the Hadamard product."

- Hydrological consistency: The coherence of terrain features with realistic water flow patterns and drainage. "with hydrological consistency that far exceeds procedural noise, although isolated basins still occur."

- Infinite Tensor framework: A library enabling sliding-window computation on tensors with infinite dimensions using constant memory. "we introduce the Infinite Tensor framework, a Python library that enables sliding window computation over tensors with infinite dimensions."

- InfiniteDiffusion: An extension of MultiDiffusion enabling seed-consistent generation over unbounded domains. "We introduce InfiniteDiffusion, an extension of MultiDiffusion that lifts this constraint."

- InfinityGAN: A GAN-based approach for infinite image generation. "InfinityGAN \cite{lin_infinitygan_2022} produces infinite images with GANs but does not extend to diffusion models, limiting scalability."

- KL term: A Kullback–Leibler regularization component used to control overfitting in latent models. "The model is optimized using L1 and LPIPS losses with a weak KL term to prevent overfitting."

- Laplacian-based representation: A decomposition into low-frequency and residual/high-frequency components to stabilize synthesis. "we predict a Laplacian-based representation comprising a low-frequency component, obtained by downsampling and blurring the original image, and a residual/high-frequency component given by subtracting the upsampled low frequency component from the original image."

- Latent diffusion model: A diffusion model that operates in a learned latent space rather than pixel space. "The next stage is the core latent diffusion model, which transforms that structure into realistic 46km tiles in latent space."

- LPIPS (Learned Perceptual Image Patch Similarity): A perceptual similarity metric used for training or evaluation. "The model is optimized using L1 and LPIPS losses with a weak KL term to prevent overfitting."

- MERIT DEM: A global digital elevation model providing land elevations. "Land elevations are drawn from the 3-arc-second MERIT DEM \cite{yamazaki_highaccuracy_2017}"

- Mixture of Diffusers: A method that combines several diffusion processes, each acting on a different region of the canvas, to synthesize images larger than training bounds. "MultiDiffusion \cite{bar-tal_multidiffusion_2023} and Mixture of Diffusers \cite{jimenez_mixture_2023} generate images larger than the training canvas but still assume a bounded final extent."

- MultiDiffusion: A technique that averages predictions from overlapping windows to generate beyond native resolution. "MultiDiffusion extends standard diffusion sampling by averaging overlapping windows, producing consistent outputs from local predictions."

- Perlin noise: A procedural gradient noise function widely used in computer graphics. "procedural noise functions such as Perlin noise \cite{perlin_image_1985, perlin_improving_2002}"

- rFID: Reconstruction FID, i.e., FID computed between a decoder’s reconstructions and the original images, used in this work to evaluate the decoder on its own at a specific resolution. "For context, we also measure the decoder’s standalone rFID at resolution."

- sCM: Simplified consistency model modifications applied to the EDM2 backbone. "All models share a common EDM2 \cite{Karras2024edm2} backbone with the modifications proposed in sCM \cite{lu_simplifying_2025}."

- Seed consistency: The property that outputs are deterministic functions of a fixed seed and region, independent of query order. "Seed consistency follows directly from how the process is defined."

- Signed square-root transform: A data transform mapping values to sign(z)·sqrt(|z|) to normalize dynamic range. "we apply a signed square-root transform ."

- U-Net: A convolutional encoder–decoder architecture with skip connections used as a backbone. "We train a separate VAE-style autoencoder that shares the same U-Net backbone but omits diffusion-specific components."

- VAE (Variational Autoencoder): A probabilistic autoencoder that learns latent representations via reconstruction and KL regularization. "We train a separate VAE-style autoencoder that shares the same U-Net backbone but omits diffusion-specific components."

- WorldClim: A global climate dataset providing temperature and precipitation variables. "we supplement elevation with 30-arc-second WorldClim \cite{fick_worldclim_2017} data for temperature and precipitation."

- WorldGrow: A method for world generation that conditions tiles on neighbors without seed consistency. "BlockFusion \cite{wu_blockfusion_2024} and WorldGrow \cite{li_worldgrow_2025} generate worlds by conditioning each tile on its neighbors, producing continuous worlds but without seed consistency, since outputs depend on sampling order."

Practical Applications

Below is a concise mapping from the paper’s findings to practical, real-world applications, organized by deployment horizon and tagged with relevant sectors. Each item includes concrete product/workflow ideas and feasibility notes.

Immediate Applications

The following can be deployed today using the paper’s methods and open-source components, on a single consumer GPU as demonstrated.

- [Gaming/Interactive Media] Real-time infinite world generation plugin for Unity/Unreal

- Workflow/product: “Terrain Diffusion SDK” that streams seed-consistent elevation tiles and coarse climate to engine terrain systems; exposes controls for seed, “beauty” histogram, climate noise, and regional masks; supports random-access tile queries for fast teleport/travel.

- Dependencies/assumptions: RTX 3080/3090-class GPU for target latencies; uses the paper’s consistency-distilled models (few-step); elevation-only (textures/vegetation via biome mapping); continental-scale guidance via simple procedural inputs or hand sketches.

- [Gaming] Drop-in world generator for voxel games (Minecraft/Minetest)

- Workflow/product: A mod that replaces elevation and biome queries with Terrain Diffusion outputs (as in the paper’s Minecraft integration) and maps climate to biomes via rules.

- Dependencies/assumptions: Game modding APIs; upsampling heightmaps for engine mesh resolution; long-range biome queries may need custom support; gameplay physics/structures remain native.

- [Film/Animation/Previz] Rapid landscape blockout for previsualization and matte painting

- Workflow/product: DCC plugins (Blender, Houdini, Nuke) that fetch DEM tiles by seed/bbox; export as meshes/displacement for set dressing and camera layout.

- Dependencies/assumptions: Elevation is synthetic and hydrology is plausible but not physically exact; optional erosion passes or art-directed masks may be added in post.

- [Robotics/Autonomy] Diverse synthetic terrains for locomotion and path-planning validation

- Workflow/product: Gazebo/Isaac Sim adapters that procedurally stream DEMs with controllable slope, roughness, and moisture proxies (from climate channels) for sim-to-real robustness testing.

- Dependencies/assumptions: Elevation only—users map climate/elevation to surface materials/friction; add semantics and obstacles as a downstream step.

- [Simulation/Defense/Emergency Training] Synthetic, non-sensitive terrains for exercises

- Workflow/product: Training ranges that stream “plausible Earth-like” landscapes on demand for mission rehearsal/disaster drills without revealing real geography.

- Dependencies/assumptions: Add hazard/river/road layers in post; ensure trainees understand non-fidelity limits (rivers/basins approximate).

- [Geospatial/ML] Privacy-preserving DEM datasets for algorithm benchmarking and augmentation

- Workflow/product: Generate large, coherent DEM corpora to stress-test watershed extraction, slope stability analysis, segmentation, or up/down-scaling models without licensing or privacy concerns.

- Dependencies/assumptions: Not georeferenced; use for robustness/benchmarking, not for site-specific prediction; evaluate bias vs. Earth distributions.

- [Education] Interactive “procedural Earth” for teaching geography/geomorphology

- Workflow/product: A classroom app that lets learners explore landforms across scales with seed-consistent worlds; annotate ridges, valleys, watersheds, and climate/topography relations.

- Dependencies/assumptions: Runs on a gaming-class GPU or streamed from a server; add labels/overlays for pedagogy; clearly denote synthetic nature.

- [Content Creation Tools] Creator utilities for tabletop RPGs and mapmaking

- Workflow/product: A map generator that outputs coherent continents/regions by seed for campaigns; export height, rivers (derived), and biome overlays.

- Dependencies/assumptions: Rivers derived from elevation require additional flow routing; user control via procedural inputs and “beauty” bias.

- [Cloud/Platform] Tile server microservice for “Planet-as-a-Seed” APIs

- Workflow/product: A stateless service that returns elevation tiles for (seed, bbox, zoom) with constant-time random access and server-side caching (enabled by the Infinite Tensor framework).

- Dependencies/assumptions: GPU-backed instances; cache/memoization across users; rate limits; per-request cost model.

- [ML Systems/Infra] Infinite Tensor framework for memory-bounded inference on huge images

- Workflow/product: Integrate the open-source infinite-tensor library into pipelines (remote sensing mosaics, histology whole-slide inference, large-format graphics) to process unbounded canvases at constant memory.

- Dependencies/assumptions: Adapting sliding-window computation and blending to existing models; choose window/weighting to avoid seams.

- [Compression/Streaming R&D] Evaluation bed for DEM codecs and streaming protocols

- Workflow/product: Use infinite, realistic DEMs to stress-test progressive streaming, compression ratios, and latency handling at scale without dataset limits.

- Dependencies/assumptions: Metrics must reflect use-case fidelity; synthetic terrain distributions may differ from real DEMs.

Long-Term Applications

These rely on extending the hierarchy (e.g., more conditioning variables, new stages), scaling, or domain transfer of the InfiniteDiffusion method.

- [Earth/Environmental Modeling] Physically grounded hydrology and erosion

- Vision: Add river networks, drainage consistency, soil/rock properties, and erosion-aware refinement stages for near-physical realism.

- Dependencies/assumptions: New conditioning channels and datasets; hybrid learned + physics-based solvers; validation against gauged basins.

- [Urban/Infrastructure] Generative terrain + settlement/transport layers

- Vision: Couple terrain with road networks, urban morphology, and infrastructure siting to prototype synthetic regions and stress-test routing/logistics.

- Dependencies/assumptions: Annotated training data for settlements/roads; semantics-aware diffusion; controllable priors for human-made features.

- [Flight Simulation/Telepresence] Multi-sensor planetary streaming (DEM + textures + landcover)

- Vision: Complement elevation with photoreal textures, vegetation, and atmospheric effects for high-fidelity flight/space sims and virtual tourism.

- Dependencies/assumptions: Joint elevation–texture models; higher-throughput decoding; multi-GPU or cloud scaling; anti-repetition controls.

- [Defense/Emergency Management] Scenario-tailored synthetic terrains with hazards

- Vision: Inject realistic floodplains, wildfire risk, landslide susceptibility, or volcanic terrains for mission rehearsal and policy exercises.

- Dependencies/assumptions: Hazard models layered on synthetic DEMs; careful calibration to avoid misleading risk signals.

- [Geospatial Policy/Data Governance] Public release of high-fidelity synthetic terrain for open benchmarks

- Vision: Standardized, non-sensitive corpora to democratize ML benchmarking where real DEMs are restricted or incomplete.

- Dependencies/assumptions: Community-agreed validation metrics to prevent overfitting to synthetic artifacts; transparent documentation of generative biases.

- [Robotics/Autonomy] Domain-randomized sim-to-real pipelines across terrains

- Vision: Curriculum learning over progressively challenging synthetic terrains to improve off-road driving and legged-robot generalization.

- Dependencies/assumptions: Material/terrain-physics parameterization; field validation; sensor models (e.g., LiDAR returns) on top of DEMs.

- [Cross-Domain Generative Systems] InfiniteDiffusion for textures, maps, and large environments

- Vision: Train domain-specific models (e.g., materials, satellite mosaics, city-scale maps) to deliver seed-consistent, random-access generation on unbounded canvases.

- Dependencies/assumptions: New datasets and training; domain-specific blending/weighting schemes; evaluation protocols.

- [Education/Outreach at Scale] Curriculum-aligned “Procedural Earth” with assessment

- Vision: Scaffolded modules that teach landform processes via interactive exploration, with auto-generated quizzes and lab exercises.

- Dependencies/assumptions: Authoring tools for educators; alignment with standards; accessibility on commodity hardware or via streaming.

- [Energy/Infrastructure Planning (Synthetic Sandbox)] Early-stage, risk-free prototyping and algorithm testing

- Vision: Test siting heuristics, routing algorithms, or grid-layout planners on abundant synthetic landscapes before moving to real sites.

- Dependencies/assumptions: Not a substitute for real surveys; needs synthetic wind/solar/climate layers and constraints for realism.

- [Remote Sensing/Space Missions] Pre-mission coverage and algorithm readiness on plausible planets

- Vision: Generate “alien” but Earth-like worlds for instrument coverage analyses and autonomy algorithms for planetary rovers.

- Dependencies/assumptions: Domain adaptation to non-Earth parameters; synthetic reflectance/thermal models; physics-informed constraints.

- [Massive Multi-User Worlds] Distributed “planet-as-a-service” backends for MMOs/metaverse

- Vision: Persistently shared, seed-consistent planets with sharded caching and low-latency streaming for thousands of concurrent users.

- Dependencies/assumptions: Distributed memoization; consistency under concurrent writes (if editing is allowed); moderation/content tooling.

Notes on feasibility across items:

- Real-time performance claims are based on an RTX 3090 Ti with two-step consistency decoding. Throughput on lower-end GPUs or mobile requires further distillation or server-side streaming.

- The pipeline currently generates elevation and a few coarse climate variables; additional semantics (rivers, roads, landcover) and strict physical consistency require new model stages and data.

- Seed consistency and constant-time random access rely on the InfiniteDiffusion algorithm and the Infinite Tensor runtime; these must be integrated carefully (window layout, weighting) to avoid artifacts.

- Training data (MERIT DEM, ETOPO, WorldClim) licenses should be reviewed for commercial use; synthetic outputs are non-georeferenced and should be labeled accordingly when used in public or policy contexts.
