- The paper presents a two-stage approach that combines ILP for cost-optimal LLM provisioning with RL for selecting effective collaboration topologies.

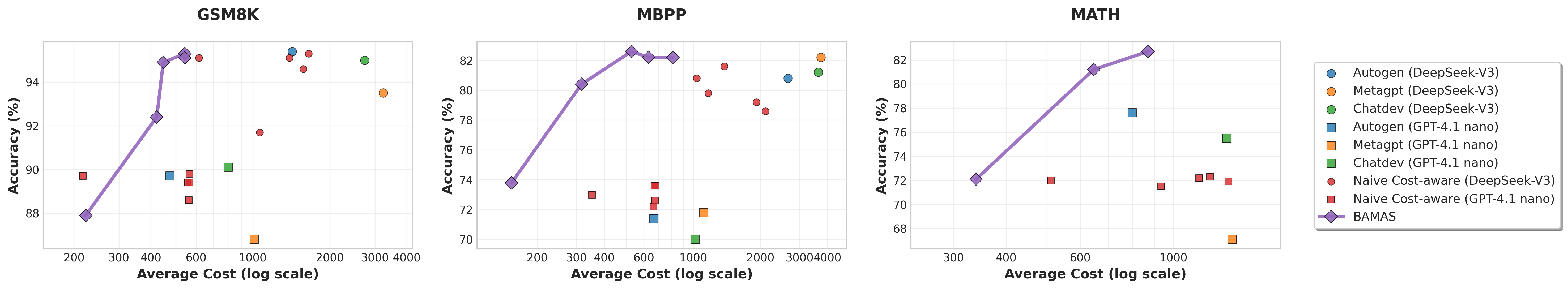

- It reports up to an 86% reduction in operational cost while matching or exceeding the accuracy of leading MAS frameworks.

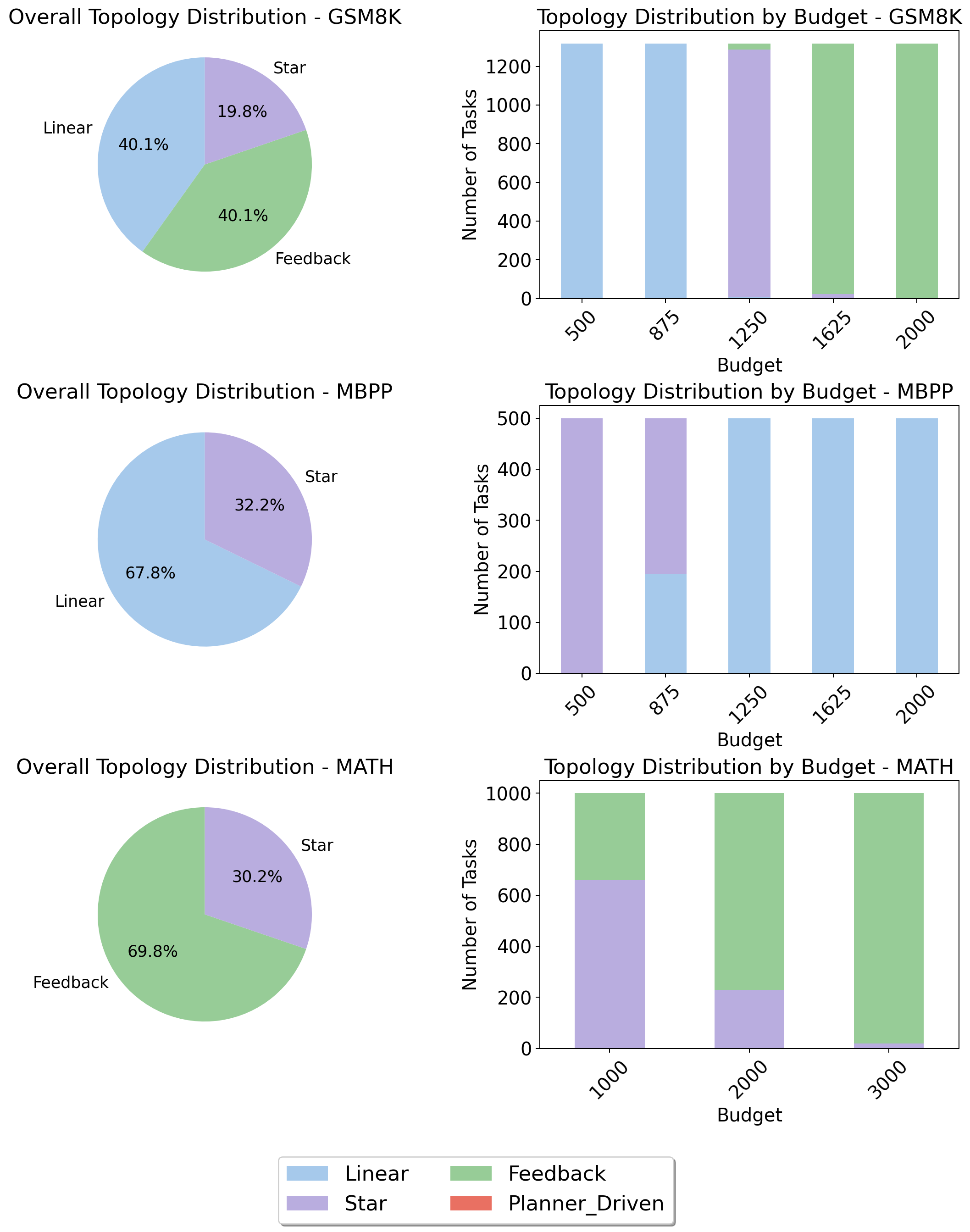

- The learned topology selection adapts to task requirements and budget constraints, and it makes risk-averse choices when resources are limited.

Structured Budget-Aware Multi-Agent Systems with BAMAS

Introduction

BAMAS ("Structuring Budget-Aware Multi-Agent Systems") (2511.21572) addresses a critical gap in current LLM-based multi-agent system (MAS) design: adhering to explicit budget constraints while maximizing performance. Existing MAS frameworks optimize for performance but largely ignore operational cost, which arises primarily from token consumption in LLM queries. BAMAS introduces a two-stage approach that combines Integer Linear Programming (ILP) for optimal LLM provisioning with offline reinforcement learning (RL) for collaboration topology selection. The resulting system adapts both its agent pool and its workflow structure to explicit budget limits, delivering strong performance at a fraction of the usual cost.

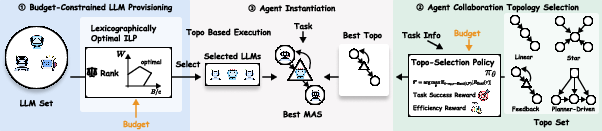

Figure 1: The BAMAS pipeline provisions a cost-optimal LLM set and selects a collaboration topology with RL to guide cost-efficient multi-agent execution.

Given a task T, an available LLM asset set A, and a cost budget B, the objective is to instantiate a MAS maximizing performance without exceeding B. BAMAS decomposes this into three stages:

- Budget-Constrained LLM Provisioning: Uses an ILP to select a pool P⊆A that maximizes cumulative performance weights while strictly meeting the budget constraint. The weights are defined recursively so that a higher-tier LLM (by Chatbot Arena ranking) is always preferred over any combination of lower-tier ones whenever it is affordable. The selected pool is therefore lexicographically optimal by model tier among all pools that fit the budget.

- Collaboration Topology Selection: Employs an offline RL policy πθ over a discrete set of workflow topologies (Linear, Star, Feedback, Planner-driven). The policy is conditioned on the embedded task specification and the budget. It is optimized with a reward that combines task success and cost efficiency: budget overruns are penalized, and cost savings are rewarded only after a successful outcome.

- Agent Instantiation: Assigns provisioned LLMs to agent roles as determined by the chosen topology, favoring the highest-weight models for roles with the greatest impact (e.g., critic or planner).
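The provisioning and instantiation stages above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the paper's implementation. The following are all hypothetical:

- the model names and per-task costs;
- the 2**(n-1-i) weighting, which is one simple way to make each tier outweigh all lower tiers combined;
- the rule for reusing models when roles outnumber them.

Exhaustive enumeration stands in for an ILP solver here. It finds the same optimum but is only practical for small asset sets.

```python
from itertools import combinations
from typing import Dict, List


def tier_weights(ranked_models: List[str]) -> Dict[str, int]:
    """Weight models so each one outweighs all lower-ranked models combined.

    ranked_models is ordered best-first (e.g. by leaderboard rank).
    2**(n - 1 - i) exceeds the sum of all later weights, so the objective
    never trades one stronger model for several weaker ones.
    """
    n = len(ranked_models)
    return {m: 2 ** (n - 1 - i) for i, m in enumerate(ranked_models)}


def provision(costs: Dict[str, float], ranked_models: List[str],
              budget: float) -> List[str]:
    """Binary selection: maximize sum(w_i * x_i) s.t. sum(c_i * x_i) <= budget.

    Exhaustive enumeration replaces an ILP solver (same optimum, small inputs only).
    """
    weights = tier_weights(ranked_models)
    best: List[str] = []
    best_weight = -1
    for k in range(len(ranked_models) + 1):
        for subset in combinations(ranked_models, k):
            cost = sum(costs[m] for m in subset)
            weight = sum(weights[m] for m in subset)
            if cost <= budget and weight > best_weight:
                best, best_weight = list(subset), weight
    return best


def assign_roles(pool: List[str], ranked_models: List[str],
                 roles_by_impact: List[str]) -> Dict[str, str]:
    """Give the strongest provisioned model to the most impactful role.

    Hypothetical rule: if roles outnumber models, the remaining roles reuse
    the weakest provisioned model.
    """
    if not pool:
        raise ValueError("budget too small to provision any model")
    ordered = sorted(pool, key=ranked_models.index)  # best-first
    return {role: ordered[min(i, len(ordered) - 1)]
            for i, role in enumerate(roles_by_impact)}


# Hypothetical asset set, listed best-first, with illustrative per-task costs.
ranked = ["model-a", "model-b", "model-c", "model-d"]
costs = {"model-a": 5.0, "model-b": 2.0, "model-c": 1.0, "model-d": 0.5}
pool = provision(costs, ranked, budget=3.0)
# pool  -> ['model-b', 'model-c']
roles = assign_roles(pool, ranked, ["planner", "critic", "coder"])
# roles -> {'planner': 'model-b', 'critic': 'model-c', 'coder': 'model-c'}
print(pool, roles)
```

At a budget of 3.0, model-a (cost 5.0) is unaffordable, so the weights select model-b plus model-c. At a budget of 6.0, the same code keeps model-a and spends the remaining 1.0 on model-c. In both cases a stronger affordable model is never displaced by weaker ones.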

BAMAS is evaluated against state-of-the-art MAS frameworks (AutoGen, MetaGPT, and ChatDev), each operating with comparable LLM resources under controlled budget settings. The experiments span two representative domains: math reasoning (GSM8K, MATH) and program synthesis (MBPP).

BAMAS achieves a tunable cost-accuracy trade-off. Unlike existing systems, it can be explicitly tuned to arbitrary budget levels, which lets it adapt cost and performance to deployment environments with highly variable constraints.

Analysis of BAMAS Components

Ablation studies against a greedy Naive-CostAware baseline (which incrementally adds more LLMs without consideration for topology or global optimization) demonstrate that BAMAS's joint ILP provisioning and RL-based topology selection is strictly superior for any fixed cost. The system's efficacy is not attributable to a single component but to the synergy of globally optimal LLM selection and context-sensitive workflow adaptation.

Adaptive Topology Selection

Rather than defaulting to a static collaboration pattern, BAMAS learns to vary its topology with the task and the budget.
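How a budget-conditioned topology policy can be learned from logged runs is sketched below. This is a hedged illustration, not BAMAS's actual algorithm. The summary does not specify the offline RL method, the state encoding, or the exact reward. The assumptions are:

- A linear softmax policy is trained with advantage-weighted log-likelihood updates, a simple form of reward-weighted behavioral cloning, as a stand-in for offline RL.
- The penalty and bonus constants in `reward` are hypothetical.
- The one-hot budget buckets are hypothetical.
- The toy log is invented purely for illustration.

```python
import math
from typing import List, Tuple

TOPOLOGIES = ["linear", "star", "feedback", "planner"]


def reward(success: bool, cost: float, budget: float,
           overrun_penalty: float = 1.0, saving_bonus: float = 0.5) -> float:
    """Hypothetical reward shape: overruns are penalized, failures earn
    nothing, and unspent budget adds a bonus only after a success."""
    if cost > budget:
        return -overrun_penalty
    if not success:
        return 0.0
    return 1.0 + saving_bonus * (budget - cost) / budget


def softmax(logits: List[float]) -> List[float]:
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class TopologyPolicy:
    """Linear softmax policy over TOPOLOGIES. In a real system the features
    would encode the task (e.g. an embedding) together with the budget."""

    def __init__(self, dim: int):
        self.w = [[0.0] * dim for _ in TOPOLOGIES]

    def probs(self, x: List[float]) -> List[float]:
        return softmax([sum(wj * xj for wj, xj in zip(row, x)) for row in self.w])

    def fit_offline(self, log: List[Tuple[List[float], int, float]],
                    lr: float = 0.1, epochs: int = 200) -> None:
        """Advantage-weighted log-likelihood updates on a fixed log of
        (features, topology index, reward); no new LLM calls are made."""
        baseline = sum(r for _, _, r in log) / len(log)
        for _ in range(epochs):
            for x, action, r in log:
                p = self.probs(x)
                advantage = r - baseline
                for k in range(len(TOPOLOGIES)):
                    # d log pi(action | x) / d w[k] = (1[k == action] - p[k]) * x
                    grad_scale = (1.0 if k == action else 0.0) - p[k]
                    for j, xj in enumerate(x):
                        self.w[k][j] += lr * advantage * grad_scale * xj

    def select(self, x: List[float]) -> str:
        p = self.probs(x)
        return TOPOLOGIES[max(range(len(p)), key=p.__getitem__)]


# Toy log with one-hot budget buckets [low, high]; values are illustrative only.
LOW, HIGH = [1.0, 0.0], [0.0, 1.0]
log = [
    (LOW, 0, reward(True, cost=0.4, budget=1.0)),    # linear: cheap success
    (LOW, 3, reward(True, cost=1.5, budget=1.0)),    # planner: overran budget
    (HIGH, 0, reward(False, cost=0.5, budget=4.0)),  # linear: failed
    (HIGH, 3, reward(True, cost=3.0, budget=4.0)),   # planner: success
]
policy = TopologyPolicy(dim=2)
policy.fit_offline(log)
print(policy.select(LOW), policy.select(HIGH))  # linear planner
```

On this toy log the policy learns the cheap Linear pattern for the low-budget bucket, where the planner-driven run overran the budget. It learns the planner-driven pattern for the high-budget bucket, where only that topology succeeded. This mirrors the budget-dependent choices described above, though only qualitatively.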

Practical and Theoretical Implications

BAMAS operationalizes the trade-off between model quality, system architecture, and cost with a scalable, modular framework:

- For deployment, practitioners can explicitly guarantee resource ceilings while retaining near-optimal task performance.

- The modular use of ILP and offline RL facilitates integration with future advances in LLM cost-modeling or workflow libraries.

- Empirical evidence demonstrates that global, budget-aware planning is strictly superior to greedy resource-allocation and fixed-pattern multi-agent methods.

Theoretically, BAMAS suggests that robust MAS construction under cost constraints is tractably approximated by classical combinatorial optimization (ILP) coupled with data-driven topology policy learning; the approach generalizes to richer topological pattern libraries and non-binary LLM resource pools.

Conclusion

BAMAS establishes a principled framework for MAS design under budget constraints, leveraging exact ILP for agent selection and RL for flexible workflow structuring. It consistently matches or exceeds the accuracy of state-of-the-art methods while substantially reducing cost, and its adaptive topology selection policy demonstrates nuanced awareness of both domain and budget. The method sets a foundation for cost-aware, scalable multi-agent LLM deployment and highlights promising directions for future work in MAS budgeted reasoning, dynamic agent composition, and multi-objective optimization.