- The paper introduces GUARDIAN, a neuro-symbolic framework that integrates confidence-aware EEG decoding with dual invariant safety checks for neural robotics.

- It utilizes physiological invariants to assess decoder uncertainty and logical invariants to ensure symbolic grounding of actions, enhancing reliability.

- Empirical results demonstrate robust safety maintenance (94–97% safety rate) and sub-millisecond latency across various decoders in real-time operation.

Gated Uncertainty-Aware Runtime Dual Invariants for Neural Signal-Controlled Robotics

Overview and Motivation

This work introduces GUARDIAN (Gated Uncertainty-Aware Runtime Dual Invariants), a neuro-symbolic safety verification framework for neural signal-controlled robotics, specifically targeting safety-critical assistive domains. GUARDIAN establishes verifiable runtime guarantees by integrating confidence-aware decoding of EEG signals, hierarchical physiological and logical invariant monitoring, and symbolic goal grounding compatible with AI planning systems. The framework operates as an auditable and explainable safety gate—intervening when neural evidence is weak, decoder uncertainty is excessive, or logical constraints are violated—without interrupting real-time control throughput.

System Architecture and Algorithmic Design

GUARDIAN is formally structured around dual invariant layers:

- Physiological invariants: Quantify decoder uncertainty using normalized entropy of the calibrated class distribution, detect aberrant EEG artifacts via aggregated band-limited root-mean-square (RMS) energy, and identify rapid intent oscillations with an oscillation index over recent time windows.

- Logical invariants: Symbolically ground intent to action-level goals and verify their executability via precondition checks in PDDL-based planning schemas, ensuring reachability, configuration validity, and permitted transitions.
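
To make the physiological layer concrete, the three quantities named above can be sketched as follows. This is a minimal illustration, not the paper's implementation: the function names, the thresholds (`max_entropy`, `max_rms`, `max_oscillation`), and the choice to average RMS across channels are all assumptions, and the input window is assumed to be band-pass filtered already.

```python
import math
from typing import List, Sequence


def normalized_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy of a class distribution, divided by log(K) so it lies in [0, 1]."""
    k = len(probs)
    if k < 2:
        return 0.0
    h = -sum(p * math.log(p) for p in probs if p > 0.0)
    return h / math.log(k)


def aggregated_rms(window: Sequence[Sequence[float]]) -> float:
    """Mean per-channel RMS of a window laid out as channels x samples.

    Assumes the window was band-limited upstream; averaging over channels
    is one possible aggregation, chosen here for illustration.
    """
    per_channel = [math.sqrt(sum(s * s for s in ch) / len(ch)) for ch in window]
    return sum(per_channel) / len(per_channel)


def oscillation_index(recent_intents: Sequence[str]) -> float:
    """Fraction of consecutive decisions in the recent history whose label changed."""
    if len(recent_intents) < 2:
        return 0.0
    flips = sum(a != b for a, b in zip(recent_intents, recent_intents[1:]))
    return flips / (len(recent_intents) - 1)


def physiological_violations(
    probs: Sequence[float],
    window: Sequence[Sequence[float]],
    recent_intents: Sequence[str],
    max_entropy: float = 0.8,      # hypothetical threshold
    max_rms: float = 100.0,        # hypothetical threshold, e.g. in microvolts
    max_oscillation: float = 0.5,  # hypothetical threshold
) -> List[str]:
    """Return the names of the physiological invariants that are violated."""
    violations = []
    if normalized_entropy(probs) > max_entropy:
        violations.append("entropy")
    if aggregated_rms(window) > max_rms:
        violations.append("rms")
    if oscillation_index(recent_intents) > max_oscillation:
        violations.append("oscillation")
    return violations
```

An empty list means the physiological layer raises no objection; any entry would be grounds for intervention.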

For each incoming EEG window x_t, the neural decoder outputs a class posterior p_t over manipulation primitives (grasp, release, move_to, rotate). Calibration-aware intent distributions are produced by mixing p_t with the uniform prior, which mitigates overconfidence and miscalibration. Any invariant violation triggers an immediate intervention that holds the robot in a predefined safe state.
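
The per-window decision described above might look roughly like the sketch below. The mixing weight `epsilon`, the confidence floor `min_confidence`, the `PRECONDITIONS` table, and the world-state fact names are hypothetical stand-ins; in the framework itself the logical check is performed against PDDL action preconditions, which a plain set lookup only approximates.

```python
from typing import Dict, List, Sequence, Set, Tuple

PRIMITIVES = ("grasp", "release", "move_to", "rotate")

# Hypothetical precondition table standing in for a PDDL domain.
PRECONDITIONS: Dict[str, Set[str]] = {
    "grasp": {"gripper_empty", "object_reachable"},
    "release": {"holding_object"},
    "move_to": {"target_reachable"},
    "rotate": {"holding_object"},
}


def mix_with_uniform(probs: Sequence[float], epsilon: float = 0.1) -> List[float]:
    """Blend the decoder posterior with the uniform distribution over K classes."""
    k = len(probs)
    return [(1.0 - epsilon) * p + epsilon / k for p in probs]


def gate(
    probs: Sequence[float],
    world_facts: Set[str],
    physiological_ok: bool,
    epsilon: float = 0.1,         # assumed mixing weight
    min_confidence: float = 0.6,  # assumed confidence floor
) -> Tuple[str, str]:
    """Return (action, reason); the action is a primitive name or "HALT"."""
    mixed = mix_with_uniform(probs, epsilon)
    best = max(range(len(mixed)), key=mixed.__getitem__)
    intent = PRIMITIVES[best]

    if not physiological_ok:
        return "HALT", "physiological invariant violated"
    if mixed[best] < min_confidence:
        return "HALT", "calibrated confidence below threshold"

    # Logical invariant: every precondition of the intended action must hold.
    missing = PRECONDITIONS[intent] - world_facts
    if missing:
        return "HALT", f"unmet preconditions for {intent}: {sorted(missing)}"
    return intent, "all invariants satisfied"
```

Returning a reason string alongside the action is one simple way to support the auditability the framework emphasizes, since every HALT can be logged with its cause.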

Experimental Protocol

GUARDIAN is evaluated on the BNCI2014 motor imagery EEG dataset, involving 9 subjects, 22 channels, and over 5,000 trials. Four decoder classes are analyzed: EEGNet (compact CNN), a Riemannian covariance model, a lightweight CNN, and an interpretable feature-based model (RealIntent). The system runs at 100 Hz, and the monitor adds sub-millisecond latency per cycle, enabling practical closed-loop deployment.

Decoder trustworthiness is quantified via Expected Calibration Error (ECE), Maximum Calibration Error (MCE), and intervention analysis. The safety monitor's performance is assessed with metrics for safety rate (proportion of correct interventions), intervention rate (frequency of HALT actions), and real-time latency.
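
For readers unfamiliar with the calibration metrics, ECE and MCE can be computed from per-trial confidences and correctness flags as sketched below. The equal-width binning and the default of 10 bins are common conventions assumed here; the paper's exact binning is not stated in this summary.

```python
from typing import List, Sequence, Tuple


def calibration_errors(
    confidences: Sequence[float],
    correct: Sequence[bool],
    n_bins: int = 10,  # assumed number of equal-width bins
) -> Tuple[float, float]:
    """Return (ECE, MCE) for top-class confidences in [0, 1]."""
    n = len(confidences)
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for c, ok in zip(confidences, correct):
        idx = min(int(c * n_bins), n_bins - 1)  # a confidence of 1.0 goes in the last bin
        bins[idx].append((c, ok))

    ece = 0.0
    mce = 0.0
    for b in bins:
        if not b:
            continue
        avg_conf = sum(c for c, _ in b) / len(b)
        accuracy = sum(1 for _, ok in b if ok) / len(b)
        gap = abs(avg_conf - accuracy)
        ece += (len(b) / n) * gap  # gap weighted by the bin's share of trials
        mce = max(mce, gap)        # worst single-bin gap
    return ece, mce
```

ECE summarizes the average gap between stated confidence and observed accuracy, while MCE reports the worst bin, which is why a decoder can show a moderate ECE alongside a very high MCE.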

Calibration and Decoder Analysis

Decoder validation accuracy spans 51–59%, in line with the motor imagery literature, but deployment test accuracy degrades sharply to 27–46%, reflecting the non-stationarity and cross-session variance inherent to BCI signals. ECE scores of 0.22–0.41 (and MCE as high as 0.91) indicate pervasive overconfidence and miscalibration.

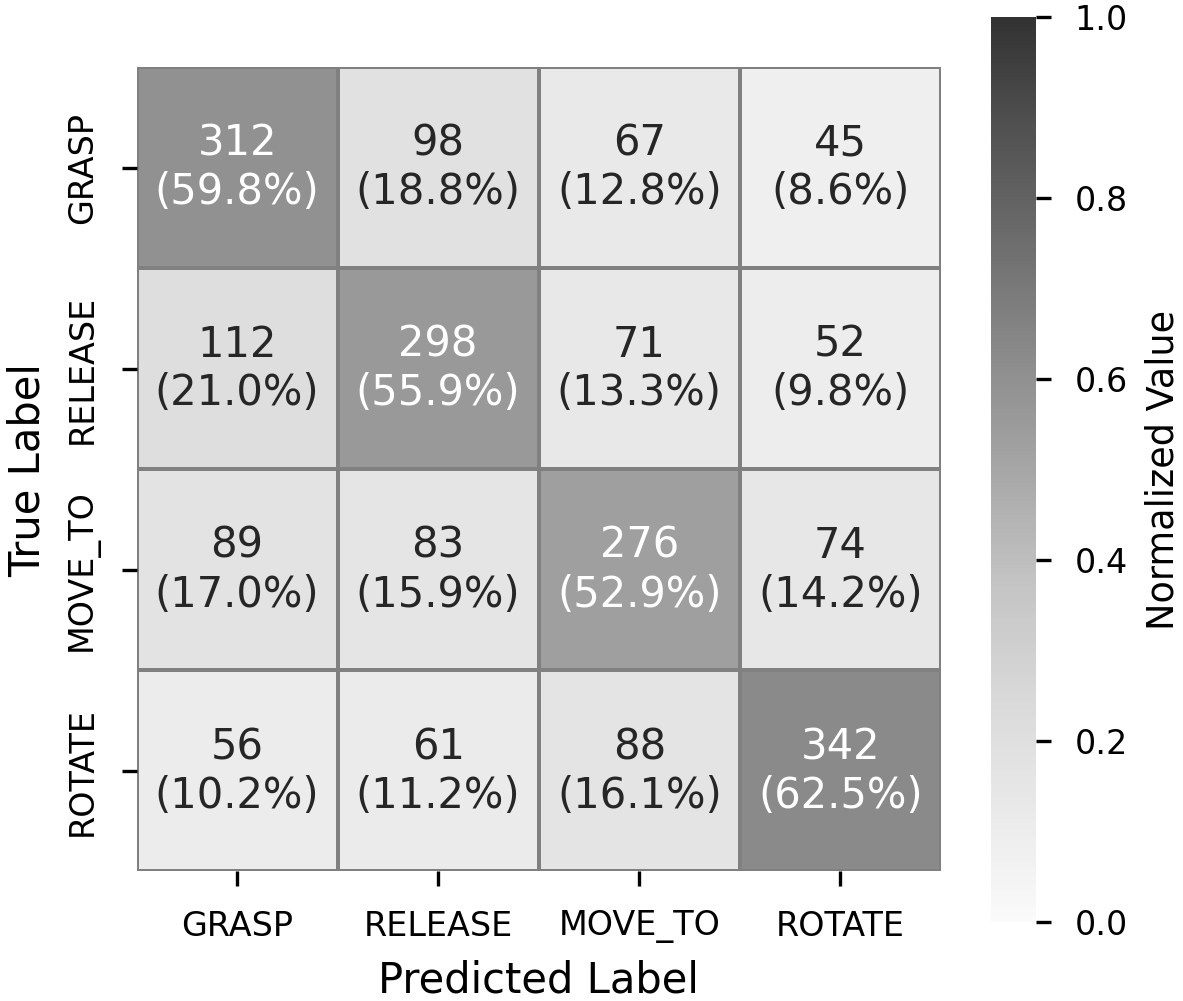

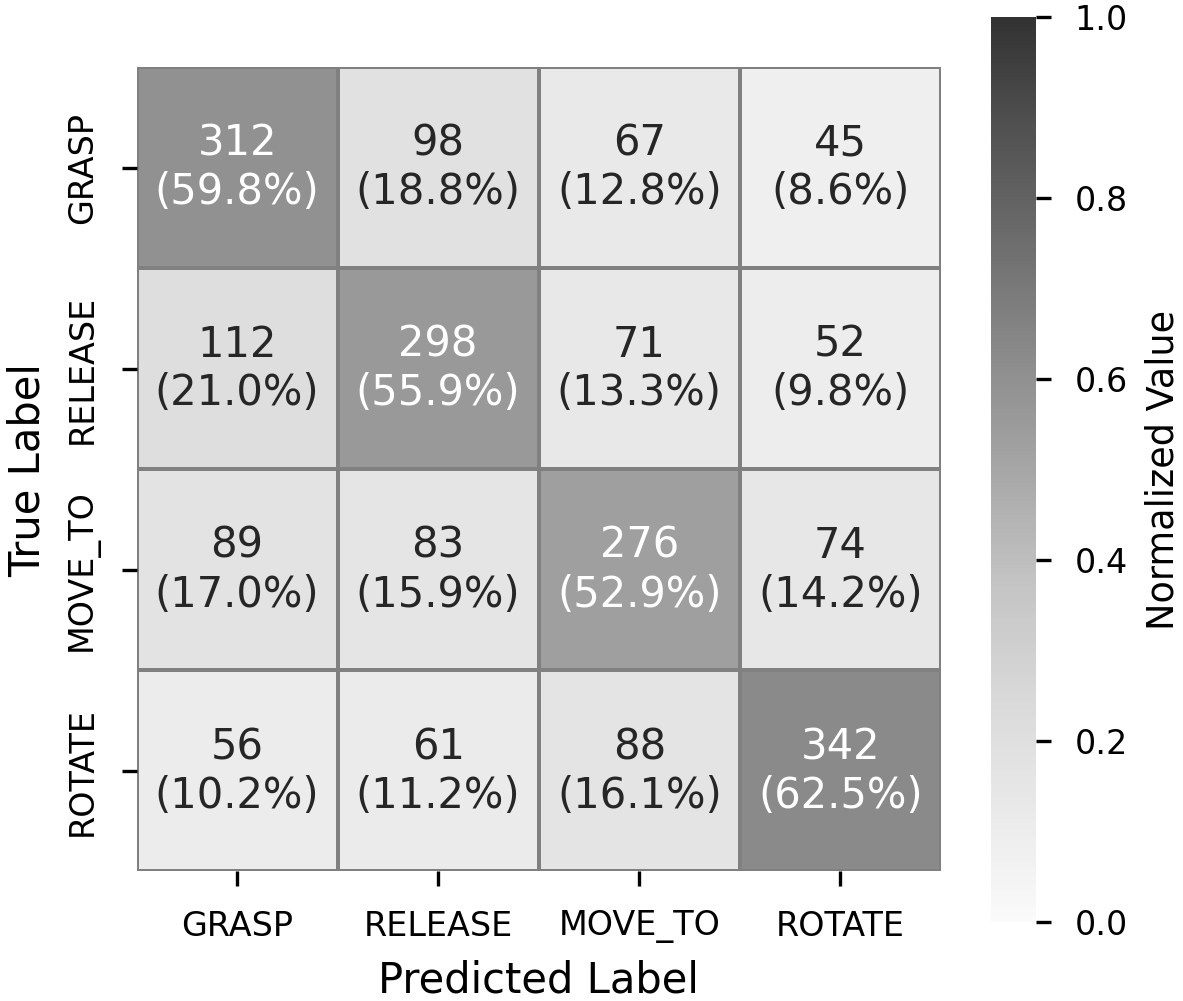

The confusion matrix for EEGNet illustrates substantial class confusion (Figure 1).

Figure 1: Confusion matrix for EEGNet (test set) highlights systematic misclassification, especially between similar motor imagery tasks.

Threshold optimization reveals that aggressive safety-optimal settings are necessary for low-accuracy decoders, but these settings increase intervention rates substantially. Ablation studies show that omitting either the entropy checks or calibration reduces safety rates by 5–7 percentage points, and relying on confidence alone yields the lowest overall safety, a drop of 16 to 24 percentage points depending on the decoder.

Safety Monitoring and Robustness

GUARDIAN achieves a safety rate of 94–97% across all decoders, even under high miscalibration and severe signal degradation. Correct intervention rates increase by up to 1.7x under simulated EEG noise, with the monitor adapting its thresholds to preserve safety as signal quality worsens.

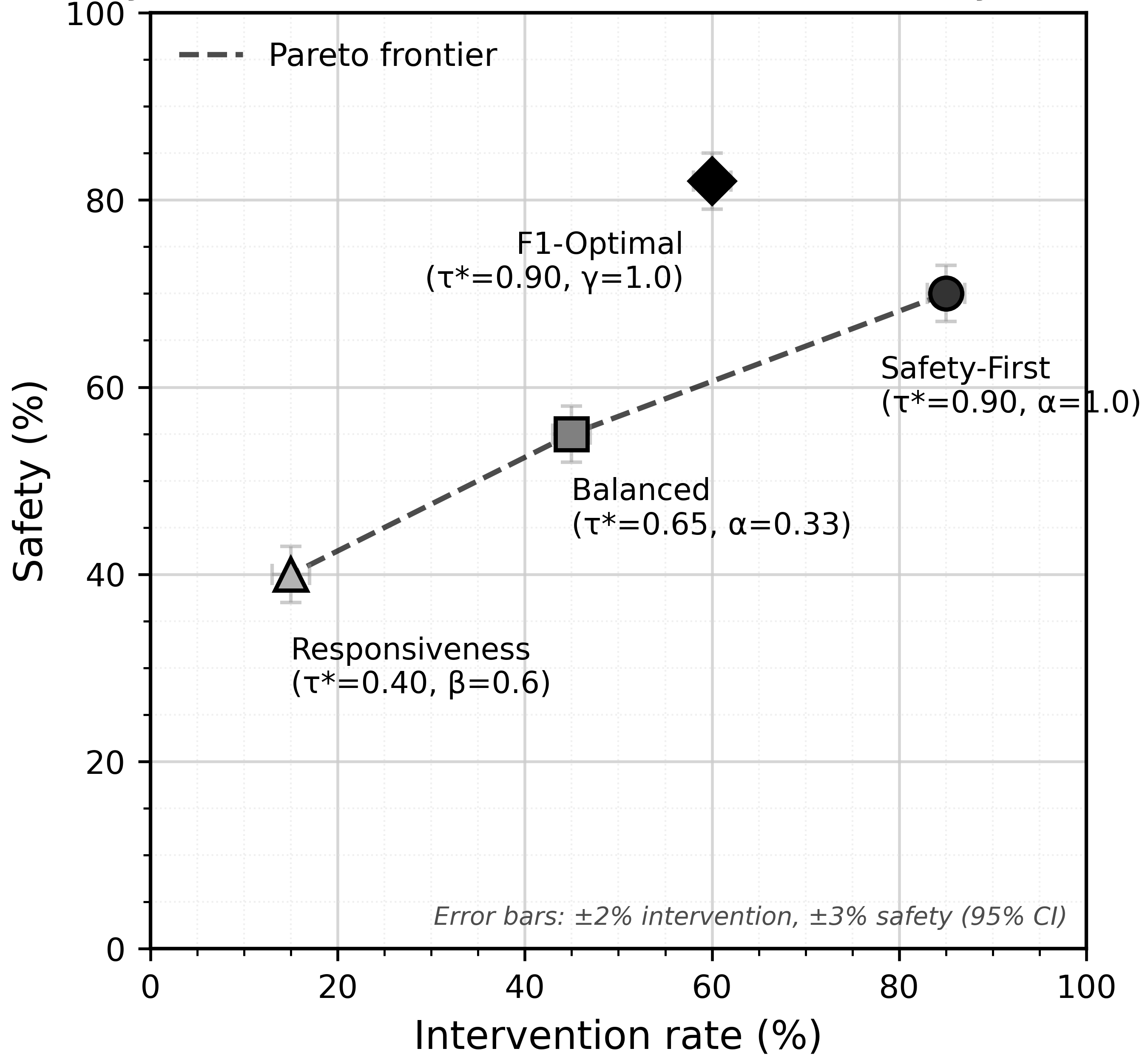

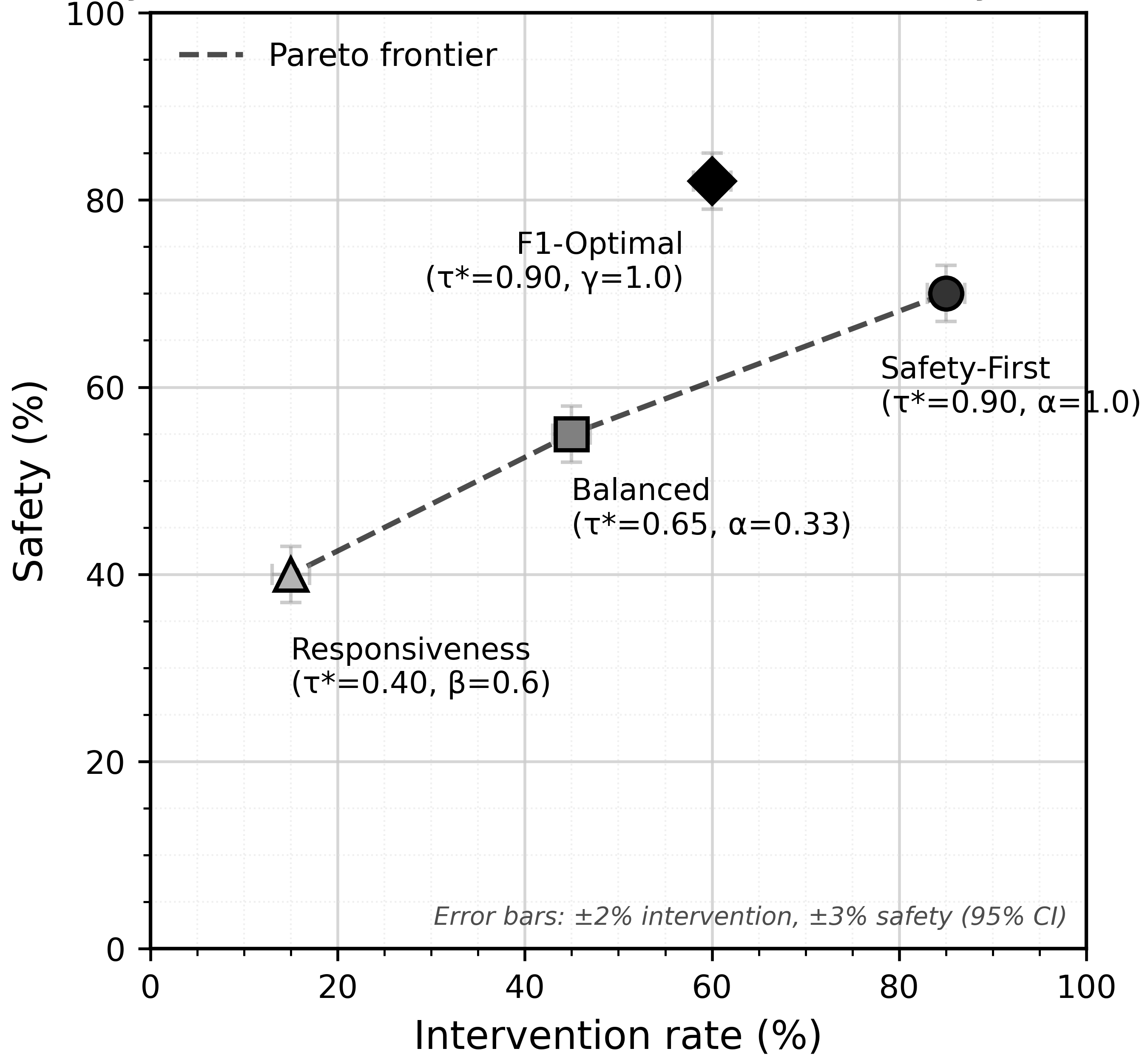

Pareto frontier analyses demonstrate the trade-off between safety and responsiveness as objective weights and thresholds are tuned (Figure 2).

Figure 2: Optimal thresholds under different objective weights, depicting trade-offs between safety and intervention rates and the Pareto-efficient frontier.
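
The trade-off analysis can be illustrated with a small sketch that extracts the Pareto-efficient operating points from a threshold sweep and then picks one by a weighted objective. The tuple layout, the linear scalarization `w * safety - (1 - w) * intervention`, and the numbers in the usage example are illustrative assumptions, not values or methods taken from the paper.

```python
from typing import List, Sequence, Tuple

# (threshold, safety_rate, intervention_rate); higher safety and lower intervention are better.
Point = Tuple[float, float, float]


def pareto_front(points: Sequence[Point]) -> List[Point]:
    """Keep the points that no other point dominates."""
    front = []
    for i, (t, s, r) in enumerate(points):
        dominated = any(
            (s2 >= s and r2 <= r) and (s2 > s or r2 < r)
            for j, (_, s2, r2) in enumerate(points)
            if j != i
        )
        if not dominated:
            front.append((t, s, r))
    return front


def best_for_weight(points: Sequence[Point], w: float) -> Point:
    """Pick the point maximizing w * safety - (1 - w) * intervention (assumed objective)."""
    return max(points, key=lambda p: w * p[1] - (1.0 - w) * p[2])


# Made-up sweep results, for illustration only.
sweep = [
    (0.5, 0.90, 0.20),
    (0.6, 0.95, 0.40),
    (0.7, 0.94, 0.50),  # dominated by the 0.6 setting
    (0.8, 0.97, 0.60),
]
front = pareto_front(sweep)
safety_first = best_for_weight(front, w=1.0)
responsiveness_first = best_for_weight(front, w=0.0)
```

Shifting the weight `w` toward 1 favors stricter thresholds with more interventions, while shifting it toward 0 favors responsiveness, which mirrors the trade-off the figure depicts.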

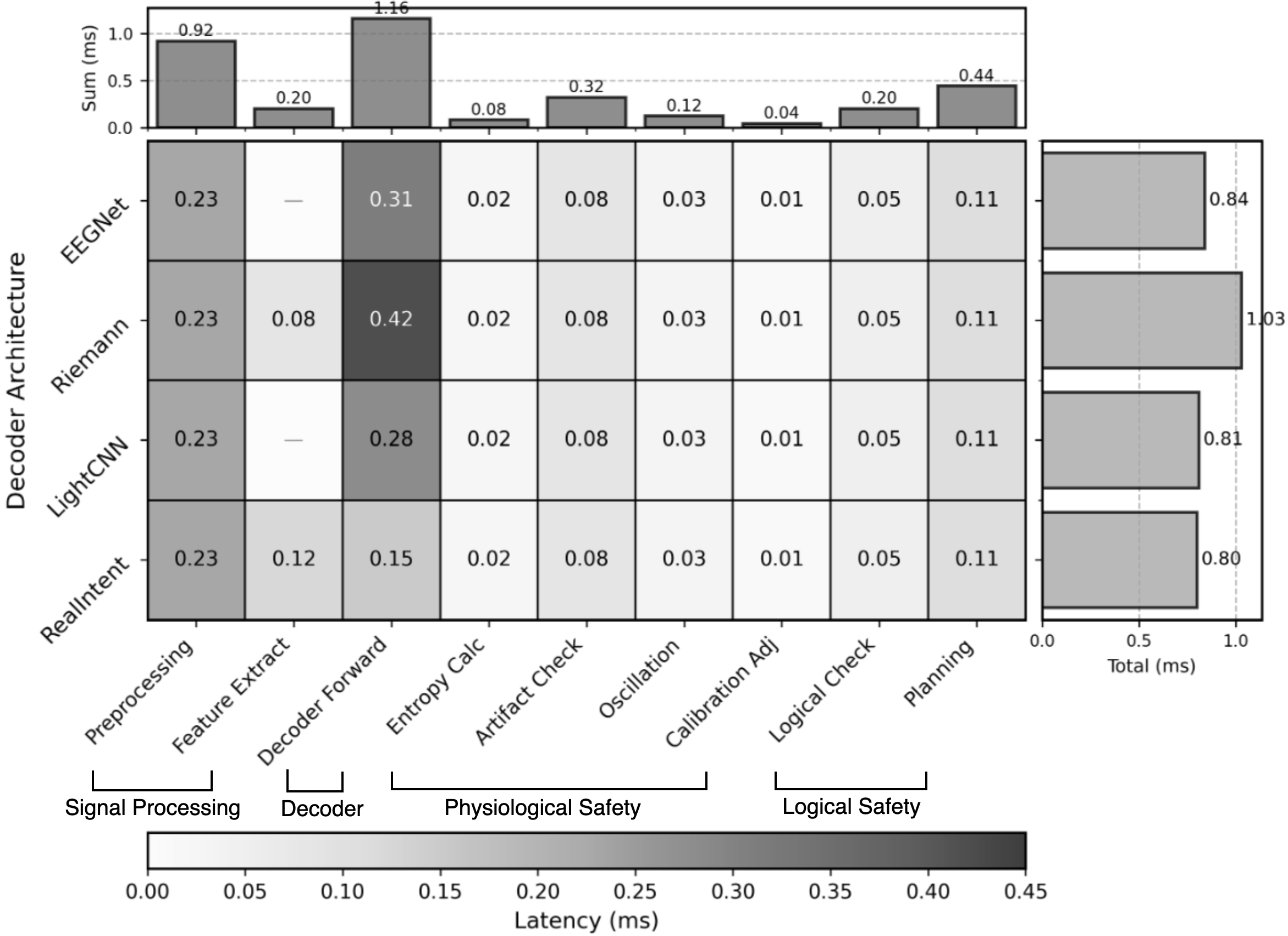

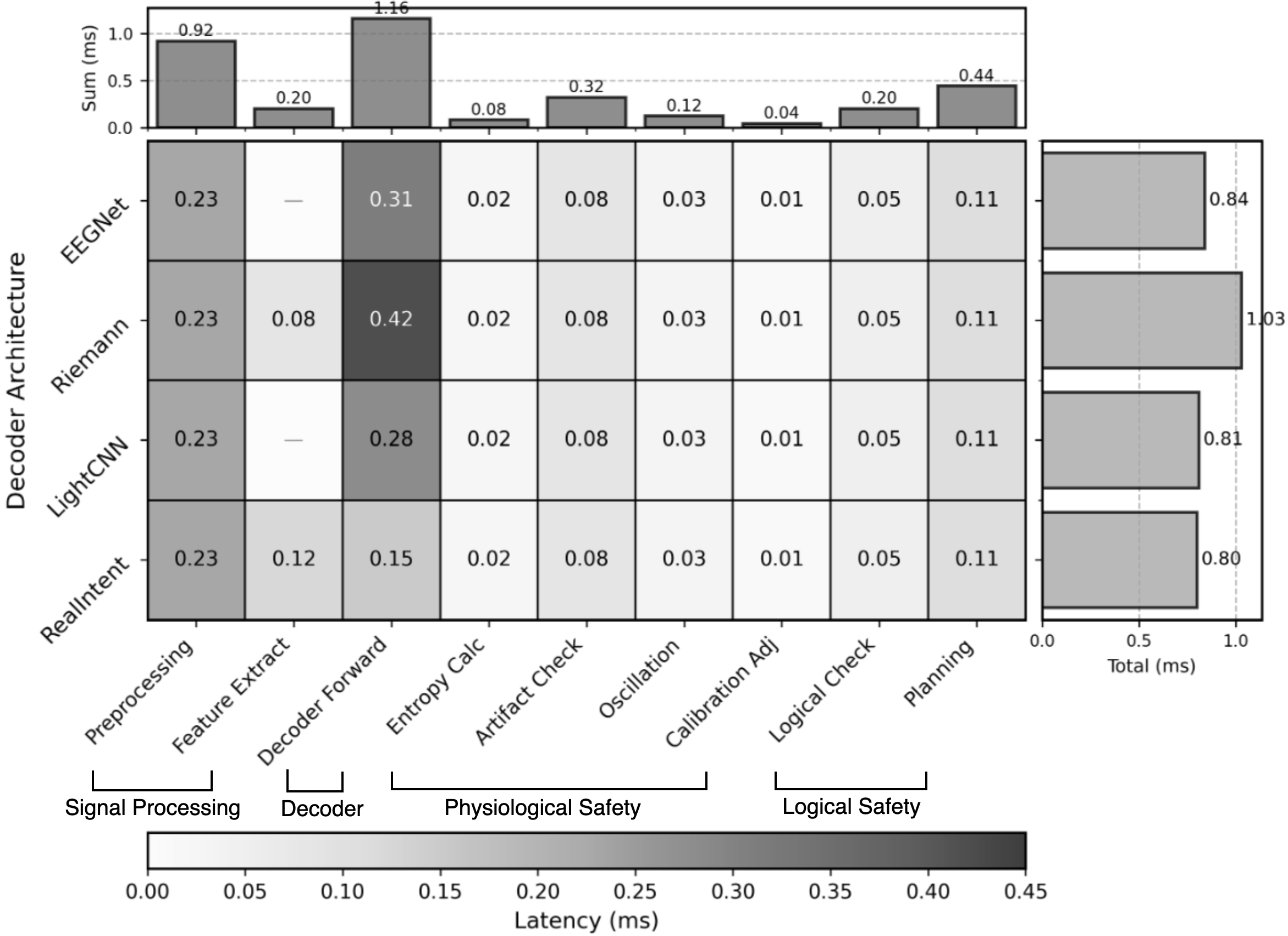

Latency measurements confirm the monitor's negligible computational overhead (<1ms per cycle) across all decoder architectures (Figure 3).

Figure 3: Component latency comparison shows consistent sub-millisecond decision latency regardless of decoder complexity, supporting closed-loop real-time operation.

Implications and Discussion

GUARDIAN's architecture generalizes across different EEG decoding modalities, facilitating integration with standard symbolic planning toolchains (e.g., PDDL). The dual-invariant runtime monitoring paradigm is especially beneficial in high-stakes shared autonomy settings, addressing typical BCI challenges of non-stationarity, signal drift, and calibration failure. The modular design admits threshold tuning for personalized profiles, including adaptation to user cognitive load and fatigue. GUARDIAN is compatible with regulatory assurance workflows in medical and collaborative robotics, providing structured auditability and transparency for certification purposes.

Importantly, the results underscore that accuracy and confidence scores alone are insufficient to ensure safety in neural signal-driven control; context-sensitive calibration and multi-level invariants are required for verifiable deployment.

From a theoretical standpoint, the neuro-symbolic approach advances the methodology for runtime verification in uncertain human-in-the-loop systems. GUARDIAN opens avenues for incorporating active learning, adaptive calibration, and physiological context-awareness into future closed-loop safety architectures. Its decoder-agnostic design anticipates integration with emerging BCI modalities and hybrid sensing platforms.

Conclusion

GUARDIAN delivers real-time, uncertainty-aware safety verification for neurally controlled robot systems with decoder-agnostic support and minimal computational overhead. Its dual-invariant framework addresses critical vulnerabilities in AI-assisted assistive robotics by coupling epistemic calibration checks with symbolic grounding of safe plans. The empirical results demonstrate robust safety maintenance under low accuracy and severe miscalibration, establishing GUARDIAN as an effective safety gate for practical deployment.

Future development may focus on adaptive intervention schemes, user experience optimization, multi-modal sensing, and co-adaptive calibration protocols. Reliable, legible, and auditable safety verification architectures such as GUARDIAN will be central to the trustworthy deployment of neural-driven collaborative robotics.