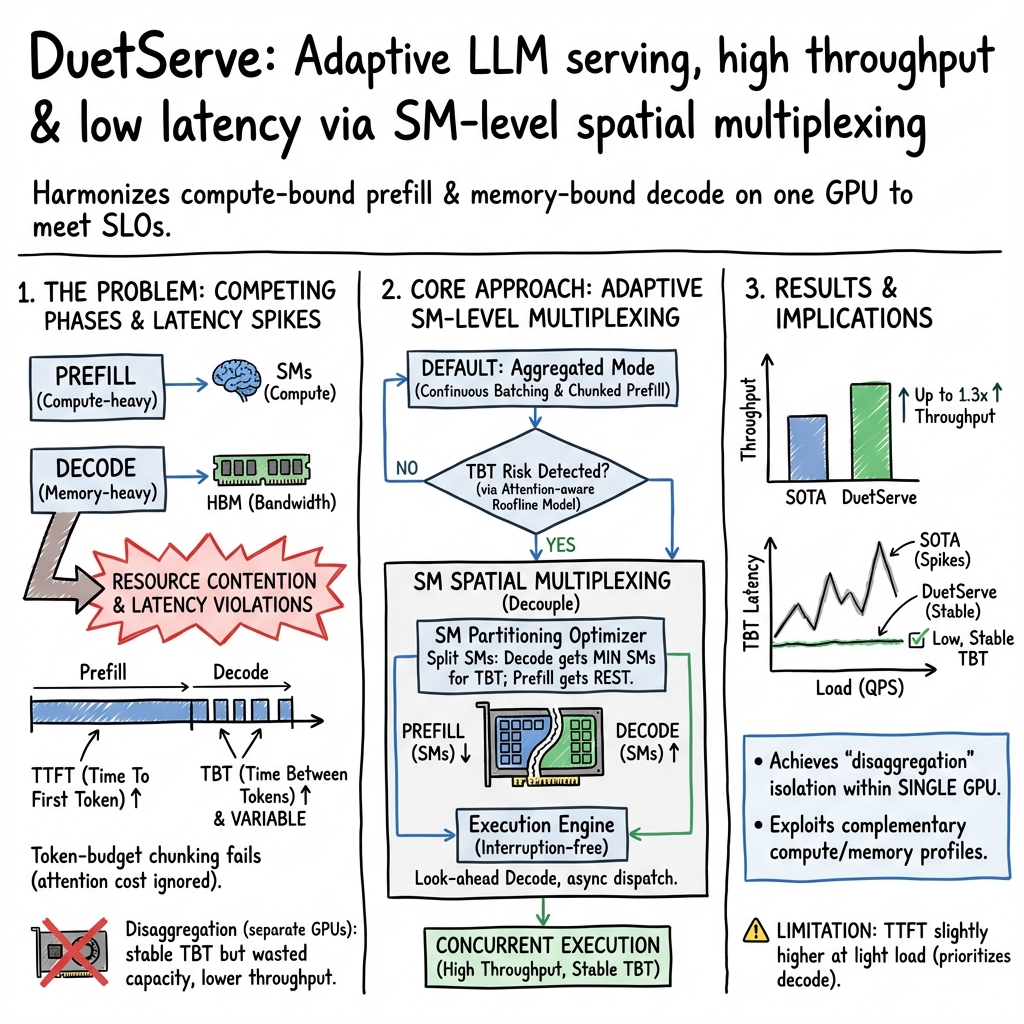

- The paper introduces DuetServe, a framework that unifies the prefill and decode phases of LLM inference on a single GPU.

- It employs an attention-aware roofline model, a partitioning optimizer, and an interruption-free engine to dynamically manage GPU resources.

- The evaluation reports up to 1.3x higher throughput than existing frameworks while keeping generation latency low, which makes the approach relevant to cloud-based LLM serving.

A Technical Overview of DuetServe

DuetServe is a framework for serving LLMs on GPUs. The paper presents a unified method that coordinates the prefill and decode phases of LLM inference, targeting the throughput and latency trade-off that current systems handle poorly. The framework multiplexes GPU resources between the two phases to improve both serving performance and resource utilization.

Key Challenges in LLM Serving

LLM inference involves two primary phases: the compute-intensive prefill phase and the memory-bound decode phase. Each phase has distinct resource requirements, leading to inefficiencies when these phases are executed on shared GPU resources. Traditional approaches either aggregate both phases on a single GPU, which causes performance degradation due to resource contention, or disaggregate them across multiple GPUs, which incurs overheads from duplicated models and KV cache transfers.
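The contrast between the two phases can be illustrated with a basic roofline estimate. This is a minimal sketch, not the paper's model: it looks at a single square linear layer in 16-bit precision, and the `Gpu` numbers, `linear_layer_cost`, and `bottleneck` are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    peak_flops: float     # FLOP/s (assumed, not a specific device)
    mem_bandwidth: float  # bytes/s (assumed, not a specific device)


def linear_layer_cost(num_tokens: int, d_model: int, bytes_per_elem: int = 2):
    """Return (FLOPs, bytes moved) for one d_model x d_model linear layer."""
    flops = 2 * num_tokens * d_model * d_model
    weight_bytes = bytes_per_elem * d_model * d_model
    # Activations are read once as input and written once as output.
    activation_bytes = 2 * bytes_per_elem * num_tokens * d_model
    return flops, weight_bytes + activation_bytes


def bottleneck(flops: float, bytes_moved: float, gpu: Gpu) -> str:
    """Classify the work by whichever roofline term takes longer."""
    compute_time = flops / gpu.peak_flops
    memory_time = bytes_moved / gpu.mem_bandwidth
    return "compute" if compute_time >= memory_time else "memory"


gpu = Gpu(peak_flops=300e12, mem_bandwidth=2e12)
prefill = linear_layer_cost(num_tokens=2048, d_model=4096)  # whole prompt at once
decode = linear_layer_cost(num_tokens=1, d_model=4096)      # one new token
print("prefill:", bottleneck(*prefill, gpu))
print("decode:", bottleneck(*decode, gpu))
```

With many tokens per step, the weights are reused across the batch and arithmetic dominates; with a single token, the same weights are loaded for very little arithmetic, so memory traffic dominates.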

Existing state-of-the-art techniques such as continuous batching balance throughput and latency by scheduling requests dynamically. They do not, however, prevent interference between the two phases, whose durations vary widely from one iteration to the next.

DuetServe: An Integrated Approach

DuetServe isolates the two phases within a single GPU by applying adaptive spatial multiplexing at the granularity of streaming multiprocessors (SMs). Its key components are:

- Attention-Aware Roofline Model: This model forecasts iteration latency using compute and memory characteristics to preemptively detect potential latency SLO violations.

- Partitioning Optimizer: It determines the optimal SM allocation between the prefill and decode phases, activating spatial multiplexing only when necessary to maximize throughput under latency constraints.

- Interruption-Free Execution Engine: This component removes synchronization overheads between the CPU and GPU, allowing for more seamless and concurrent execution of prefill and decode phases.
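The interplay of the first two components can be sketched as follows. This is an illustrative sketch under stated assumptions, not the paper's algorithm: it assumes compute throughput scales linearly with the number of SMs and that memory bandwidth saturates once a fixed number of SMs is active. `Work`, `Gpu`, `bw_saturation_sms`, `predict_latency`, and `choose_decode_sms` are hypothetical names, and the hardware figures are made up.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Work:
    flops: float
    bytes_moved: float


@dataclass(frozen=True)
class Gpu:
    num_sms: int
    peak_flops: float       # FLOP/s with all SMs (assumed)
    mem_bandwidth: float    # bytes/s with all SMs (assumed)
    bw_saturation_sms: int  # hypothetical: SMs needed to saturate bandwidth


def predict_latency(work: Work, sms: int, gpu: Gpu) -> float:
    """Roofline-style latency estimate for work running on `sms` SMs."""
    compute = gpu.peak_flops * sms / gpu.num_sms
    bandwidth = gpu.mem_bandwidth * min(1.0, sms / gpu.bw_saturation_sms)
    return max(work.flops / compute, work.bytes_moved / bandwidth)


def choose_decode_sms(prefill: Work, decode: Work, gpu: Gpu,
                      slo_s: float) -> Optional[int]:
    """Return None to stay aggregated, else the SM count reserved for decode."""
    mixed = Work(prefill.flops + decode.flops,
                 prefill.bytes_moved + decode.bytes_moved)
    if predict_latency(mixed, gpu.num_sms, gpu) <= slo_s:
        return None  # no predicted SLO violation, so no partitioning
    # Smallest decode share that meets the SLO leaves the most SMs for prefill.
    for sms in range(1, gpu.num_sms):
        if predict_latency(decode, sms, gpu) <= slo_s:
            return sms
    return gpu.num_sms - 1  # best effort: keep one SM for prefill


gpu = Gpu(num_sms=132, peak_flops=300e12, mem_bandwidth=2e12,
          bw_saturation_sms=44)
prefill = Work(flops=3e13, bytes_moved=2e10)
decode = Work(flops=1e11, bytes_moved=2e10)
print(choose_decode_sms(prefill, decode, gpu, slo_s=0.05))
print(choose_decode_sms(prefill, decode, gpu, slo_s=0.2))
```

The search returns the smallest decode partition that satisfies the latency target because every SM not reserved for decode remains available to prefill, which is the phase that benefits most from additional compute.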

Evaluation and Results

The evaluation shows that DuetServe improves throughput by up to 1.3x over existing frameworks while maintaining low generation latency. By combining predictive latency models with dynamic resource partitioning, DuetServe handles the variable workloads of LLM serving and mitigates resource contention without the overheads of traditional disaggregation.

Implications and Future Developments

DuetServe is a step forward in GPU resource utilization for LLM serving. Its ability to allocate resources dynamically based on workload characteristics is promising for cloud-based environments where real-time response is critical. The framework can also serve as a blueprint for integrating resource multiplexing into existing and new serving architectures.

As GPUs continue to evolve toward greater parallelism and resource flexibility, systems like DuetServe will help exploit these capabilities for high-performance, cost-effective LLM deployment.

Conclusion

DuetServe addresses the central challenges of LLM serving by combining the benefits of aggregated and disaggregated execution. By adjusting resource allocation dynamically based on predicted latency, it improves both the efficiency and the scalability of LLM serving systems. The work advances current practice and lays a foundation for further research into adaptive resource management for AI applications.