- The paper introduces ThinKV, a thought-adaptive KV cache compression framework that separates generated tokens into reasoning, execution, and transition thoughts and compresses each type differently to reduce memory usage.

- It employs a hybrid quantization-eviction strategy that maintains near-lossless accuracy while using less than 5% of the original KV cache on tasks such as coding and mathematics.

- Experimental results show up to 5.8x higher inference throughput than state-of-the-art methods, enabling efficient long-context reasoning on memory-constrained GPUs.

Thought-Adaptive KV Cache Compression

Introduction

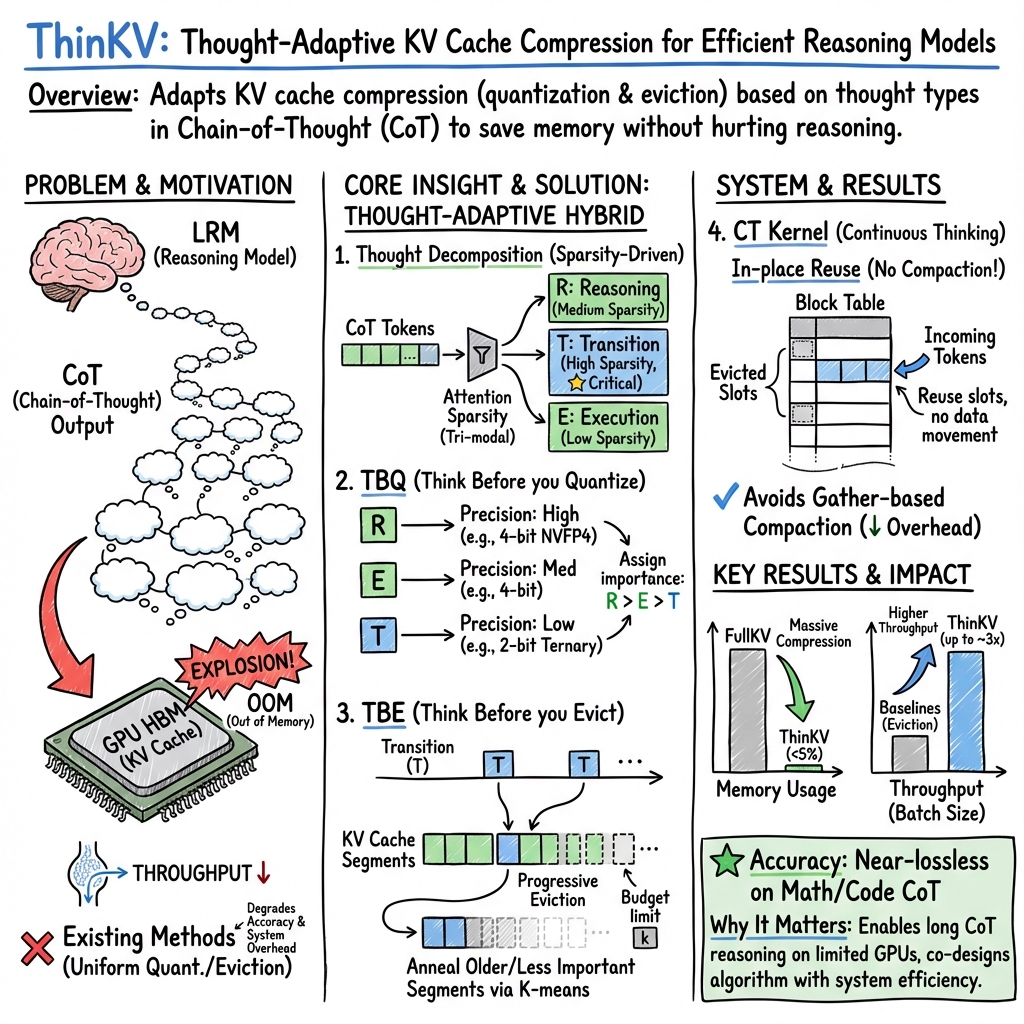

The paper "ThinKV: Thought-Adaptive KV Cache Compression for Efficient Reasoning Models" addresses a critical bottleneck in deploying Large Reasoning Models (LRMs): the key-value (KV) cache grows rapidly during long-output generation, consuming excessive GPU memory and hindering efficient inference. ThinKV uses attention sparsity to identify the distinct thought types within the reasoning process and compresses each type accordingly, reducing memory usage without compromising model accuracy.
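
To make the memory bottleneck concrete, the sketch below estimates KV cache size with the standard accounting (one key and one value vector per token, per layer, per KV head). The model configuration is hypothetical and not taken from the paper; it only shows that the cache grows linearly with output length and what a 5% budget would mean in absolute terms.

```python
def kv_cache_bytes(num_tokens: int, num_layers: int, num_kv_heads: int,
                   head_dim: int, bytes_per_elem: int = 2, batch_size: int = 1) -> int:
    """Bytes held by an uncompressed KV cache.

    The leading factor of 2 counts one key and one value vector per token,
    per layer, per KV head.
    """
    return 2 * batch_size * num_tokens * num_layers * num_kv_heads * head_dim * bytes_per_elem

# HYPOTHETICAL configuration (fp16, grouped-query attention), chosen for illustration only.
# Under it, each token costs 2 * 28 * 4 * 128 * 2 = 57,344 bytes (56 KiB).
CONFIG = dict(num_layers=28, num_kv_heads=4, head_dim=128, bytes_per_elem=2)

for n in (1_000, 8_000, 32_000):
    full = kv_cache_bytes(n, **CONFIG)
    print(f"{n:>6} tokens: {full / 2**20:8.1f} MiB full, "
          f"{0.05 * full / 2**20:6.1f} MiB at a 5% budget")
```

Because the cost is linear in `num_tokens`, long reasoning traces dominate memory; a method that keeps under 5% of the cache shrinks that term by more than 20x.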

Methodology

ThinKV uses a hybrid quantization-eviction strategy that dynamically decides how to compress each token based on its semantic importance within the reasoning trajectory. The framework comprises several key components:

- Thought Decomposition: The paper observes that LRMs generate distinct thought types, namely reasoning (R), execution (E), and transition (T), and separates them using attention sparsity. Each key-value entry is assigned a thought type based on shifts in its attention sparsity pattern.

- TBQ (Think Before You Quantize): ThinKV sets token precision according to thought type, so high-importance thoughts (e.g., reasoning) are stored at finer precision while less critical thoughts (e.g., transition) are quantized more coarsely.

- TBE (Think Before You Evict): ThinKV evicts tokens strategically, guided by the inter-thought dynamics observed in LRMs. Tokens from less critical thoughts are progressively removed to free memory slots as needed.

- Continuous Thinking: An extension of PagedAttention that efficiently reuses the memory slots freed by evicted tokens, avoiding the overhead of traditional cache compaction.
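
The thought-decomposition idea can be sketched as follows: measure how sparse a token's attention row is, then map that sparsity to a thought type. This is a simplification under stated assumptions, not the paper's procedure. The paper keys on shifts in sparsity patterns, whereas this sketch bins each token's sparsity independently, and the threshold `eps`, the `SPARSITY_BANDS` values, and which band maps to which thought type are all hypothetical placeholders that a real system would calibrate.

```python
import numpy as np

def attention_sparsity(attn_row: np.ndarray, eps: float = 1e-3) -> float:
    """Fraction of weights in one softmax-normalized attention row that fall
    below eps, i.e. keys the current query effectively ignores."""
    return float(np.mean(attn_row < eps))

# HYPOTHETICAL sparsity bands [lo, hi) per thought type; not values from the paper.
SPARSITY_BANDS = {
    "R": (0.0, 0.6),    # reasoning
    "E": (0.6, 0.85),   # execution
    "T": (0.85, 1.01),  # transition
}

def classify_thought(sparsity: float, bands=SPARSITY_BANDS) -> str:
    """Return the thought type whose band contains the given sparsity."""
    for thought, (lo, hi) in bands.items():
        if lo <= sparsity < hi:
            return thought
    raise ValueError(f"sparsity {sparsity} is not covered by any band")

def decompose(attn_rows) -> list:
    """Tag each generated token with a thought type from its attention row."""
    return [classify_thought(attention_sparsity(row)) for row in attn_rows]

uniform = np.full(100, 0.01)   # attention spread evenly over 100 keys
peaked = np.zeros(100)
peaked[0] = 1.0                # all attention on a single key
mid = np.zeros(100)
mid[:30] = 1 / 30              # attention concentrated on 30 of 100 keys
print(decompose([uniform, peaked, mid]))  # ['R', 'T', 'E']
```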
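
The TBQ idea, precision chosen per thought type, can be illustrated with plain asymmetric uniform quantization applied per KV vector. The bit-widths in `BITS_PER_THOUGHT` are hypothetical, and uniform min-max quantization is a generic stand-in rather than the paper's specific quantizer; the point is only that finer bit-widths for reasoning tokens yield smaller reconstruction error than coarse bit-widths for transition tokens.

```python
import numpy as np

# HYPOTHETICAL bit-widths: finest for reasoning, coarsest for transitions.
BITS_PER_THOUGHT = {"R": 8, "E": 4, "T": 2}

def quantize(x: np.ndarray, bits: int):
    """Asymmetric uniform (min-max) quantization of one KV vector to `bits` bits
    (bits <= 8). Returns integer codes plus the scale and offset to dequantize."""
    levels = 2 ** bits - 1
    lo, hi = float(x.min()), float(x.max())
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = np.clip(np.round((x - lo) / scale), 0, levels).astype(np.uint8)
    return codes, scale, lo

def dequantize(codes: np.ndarray, scale: float, lo: float) -> np.ndarray:
    """Map integer codes back to approximate float values."""
    return codes.astype(np.float32) * scale + lo

def quantize_by_thought(kv: np.ndarray, thought: str):
    """Quantize a KV vector at the precision assigned to its thought type."""
    return quantize(kv, BITS_PER_THOUGHT[thought])

rng = np.random.default_rng(0)
v = rng.standard_normal(128).astype(np.float32)  # stand-in for one key vector
for thought in ("R", "E", "T"):
    codes, scale, lo = quantize_by_thought(v, thought)
    err = np.abs(dequantize(codes, scale, lo) - v).max()
    print(thought, BITS_PER_THOUGHT[thought], "bits, max abs error:", round(float(err), 4))
```

The maximum error of each vector is bounded by half its quantization step, which grows as the bit-width shrinks.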
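
A thought-aware eviction policy in the spirit of TBE can be sketched as a priority order over thought types: when the cache exceeds its budget, tokens from the least critical type go first, oldest first within a type. The fixed `EVICTION_ORDER` and the `CachedToken` record are hypothetical simplifications; the paper's policy is guided by inter-thought dynamics rather than a static ranking.

```python
from dataclasses import dataclass

# HYPOTHETICAL static priority: least critical thought type is evicted first.
EVICTION_ORDER = ("T", "E", "R")

@dataclass
class CachedToken:
    position: int  # index of the token in the generated sequence (assumed unique)
    thought: str   # "R", "E", or "T"

def evict_to_budget(cache: list, budget: int) -> list:
    """Drop tokens until at most `budget` remain, removing the least critical
    thought type first and, within a type, the oldest tokens first."""
    excess = len(cache) - budget
    if excess <= 0:
        return list(cache)
    victims = set()
    for thought in EVICTION_ORDER:
        candidates = sorted((t for t in cache if t.thought == thought),
                            key=lambda t: t.position)
        for tok in candidates[:excess - len(victims)]:
            victims.add(tok.position)
        if len(victims) == excess:
            break
    return [t for t in cache if t.position not in victims]

cache = [CachedToken(i, th) for i, th in enumerate("RRETTERT")]
kept = evict_to_budget(cache, budget=4)
print([(t.position, t.thought) for t in kept])  # [(0, 'R'), (1, 'R'), (5, 'E'), (6, 'R')]
```

All three transition tokens go first; one more slot is then freed from the oldest execution token, leaving every reasoning token intact.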
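
The slot-reuse idea behind Continuous Thinking can be illustrated with a toy paged cache: storage is divided into fixed-size blocks of slots, evicted slots go onto a free list, and new tokens are written into those holes, so live entries never move and no compaction pass is needed. The `PagedKVCache` class and its methods are hypothetical and are not the vLLM or ThinKV API; real PagedAttention also maintains per-sequence block tables, which this sketch omits.

```python
class PagedKVCache:
    """Toy paged KV store: evicted slots are recycled in place instead of
    compacting the remaining entries."""

    def __init__(self, num_blocks: int, block_size: int):
        self.block_size = block_size
        self.slots = [None] * (num_blocks * block_size)        # physical storage
        self.free = list(range(num_blocks * block_size))[::-1]  # stack; pops slot 0 first
        self.slot_of = {}                                      # token position -> slot

    def append(self, position: int, kv) -> int:
        """Store a token's KV entry in any free slot and return that slot."""
        if not self.free:
            raise MemoryError("cache full: evict a token before appending")
        slot = self.free.pop()
        self.slots[slot] = kv
        self.slot_of[position] = slot
        return slot

    def evict(self, position: int) -> None:
        """Free a token's slot; the next append reuses it, nothing is moved."""
        slot = self.slot_of.pop(position)
        self.slots[slot] = None
        self.free.append(slot)

    def block_of(self, slot: int) -> int:
        """Index of the fixed-size block containing a slot."""
        return slot // self.block_size

cache = PagedKVCache(num_blocks=2, block_size=4)
for pos in range(8):          # fill all 8 slots
    cache.append(pos, f"kv{pos}")
cache.evict(2)                # e.g. a low-priority token is evicted
print(cache.append(8, "kv8")) # prints 2: the freed slot is reused
```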

Results

Extensive experiments demonstrate ThinKV's robust performance across various benchmarks. On mathematics and coding tasks in particular, it achieves near-lossless accuracy while using less than 5% of the original KV cache. ThinKV also delivers up to 5.8x higher inference throughput than state-of-the-art methods, with its efficacy validated on large datasets and LRMs such as DeepSeek-R1-Distill and GPT-OSS.

Discussion

ThinKV has significant implications for deploying LRMs on memory-constrained hardware, such as GPUs with limited VRAM. By compressing the KV cache while preserving reasoning quality, ThinKV sustains inference over long output contexts, which is critical for real-world applications such as code synthesis and extended dialog systems. The thought-adaptive approach also motivates further exploration of semantics-guided compression techniques, pointing toward more nuanced and resource-efficient AI systems.

Conclusion

ThinKV represents a practical advance in memory-efficient inference, allowing LRMs to run within tight memory budgets without sacrificing performance. By segmenting the reasoning process into thought types and compressing each adaptively, ThinKV not only addresses current memory limitations but also paves the way for future inference strategies that adapt to the complexity of a model's thoughts.