- The paper presents an FPGA-based emulator integrating the LIF neuron model and STDP, enabling real-time spiking neural network emulation with on-chip learning.

- The paper demonstrates hardware validation with 68% accuracy on digit classification and effective STDP-based node classification on graph data.

- The paper details a modular design supported by a Python interface for spike train streaming, neuron monitoring, and synaptic weight readback.

NeuroCoreX: An Open-Source FPGA-Based Spiking Neural Network Emulator with On-Chip Learning

Introduction

Implementing spiking neural networks (SNNs) on neuromorphic hardware offers a path to energy-efficient computation inspired by biological neural systems. NeuroCoreX is an open-source, FPGA-based SNN emulator: a flexible digital platform for SNN design that processes information in real time and learns on-chip. Its architecture combines the Leaky Integrate-and-Fire (LIF) neuron model with Spike-Timing-Dependent Plasticity (STDP), a local learning rule.

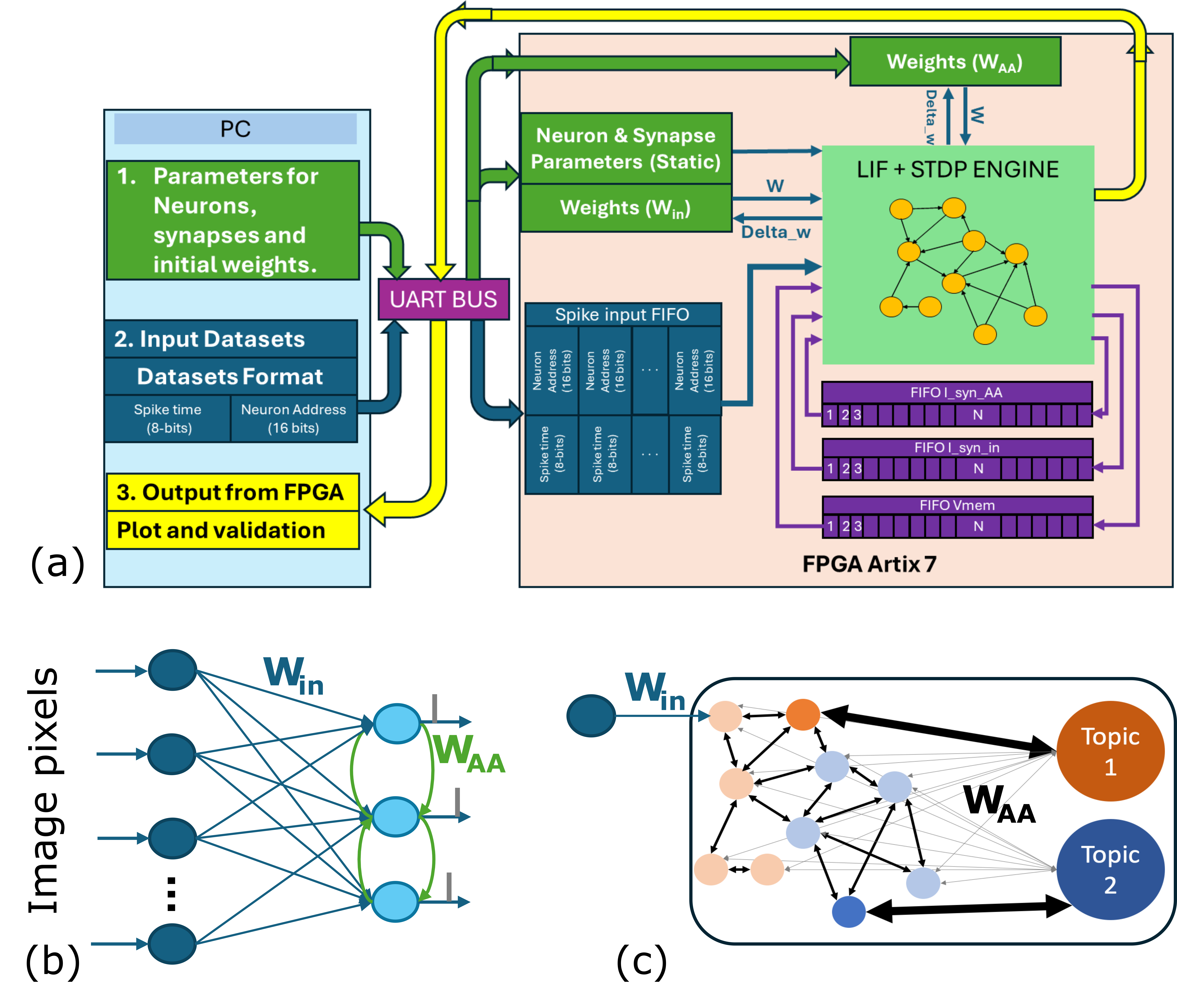

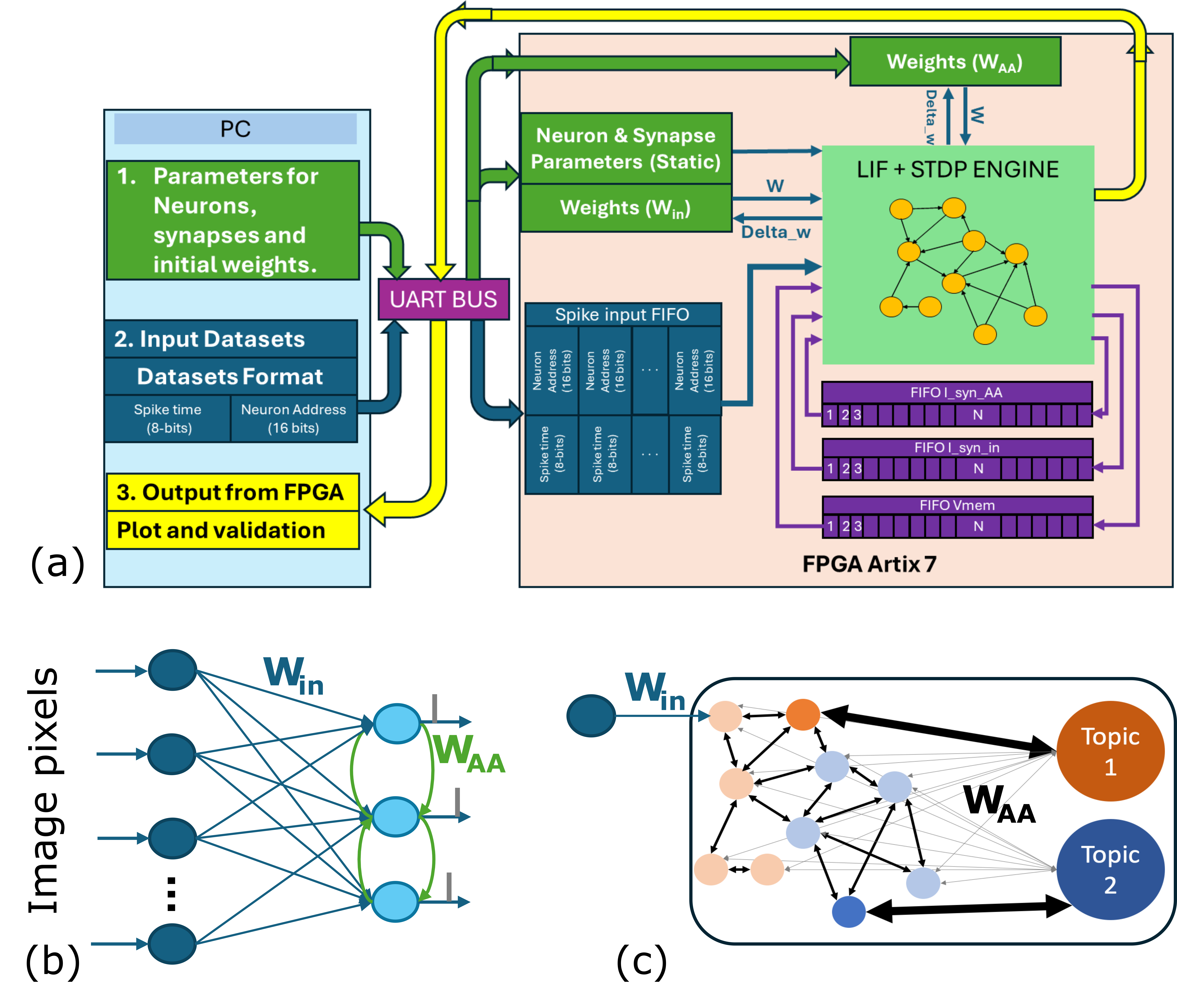

Figure 1: (a) Block diagram of the FPGA-based NeuroCoreX. (b) Feedforward SNN used for DIGITS dataset classification. (c) Spiking graph neural network used for the citation-graph node classification problem.

NeuroCoreX Architecture

Emulator Overview

NeuroCoreX is implemented in VHDL and emulates spiking neural networks of up to 100 neurons. The architecture consists of neurons, synapses, and learning mechanisms, all synthesized as digital logic on the FPGA. Neurons follow the LIF model and integrate their inputs through current-based synapses, whose weights are adjusted dynamically by STDP. All-to-all connectivity lets the same hardware realize diverse network topologies (Figure 1), enabling flexible, real-time emulation.
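To make the neuron model concrete, the following is a minimal software sketch of one LIF neuron driven through a current-based synapse with an exponential response. It is not the NeuroCoreX VHDL: the function name `simulate_lif`, the time constants, the threshold, the reset value, and the use of floating point are all assumptions chosen for illustration.

```python
import numpy as np

def simulate_lif(input_spikes, weight=0.6, tau_mem=20.0, tau_syn=5.0,
                 v_thresh=1.0, v_reset=0.0, dt=1.0):
    """Simulate one LIF neuron fed by a single current-based synapse.

    All parameter values are illustrative assumptions, not values
    taken from the NeuroCoreX design.
    """
    decay_mem = np.exp(-dt / tau_mem)   # membrane leak per time step
    decay_syn = np.exp(-dt / tau_syn)   # synaptic current decay per time step
    v, i_syn = 0.0, 0.0
    v_trace, out_spikes = [], []
    for s in input_spikes:
        # Each input spike adds `weight` to an exponentially decaying current.
        i_syn = i_syn * decay_syn + weight * s
        # The membrane leaks toward zero and integrates the synaptic current.
        v = v * decay_mem + i_syn
        fired = v >= v_thresh
        if fired:
            v = v_reset                 # reset after emitting a spike
        v_trace.append(v)
        out_spikes.append(int(fired))
    return np.array(v_trace), np.array(out_spikes)

# Drive the neuron with a spike on every step for 10 steps.
v_trace, out_spikes = simulate_lif(np.ones(10, dtype=int))
```

With these assumed parameters the neuron stays silent without input and begins firing after a few consecutive input spikes, because the synaptic current accumulates faster than the membrane leaks.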

Implemented on an Artix-7 FPGA, NeuroCoreX runs at a maximum clock frequency of 100 MHz and uses two clock domains, one for UART communication and one for neural processing. The available BRAM limits how far the network can scale, but the Artix-7 remains suitable for neuromorphic prototyping in embedded applications.

Synapse Dynamics

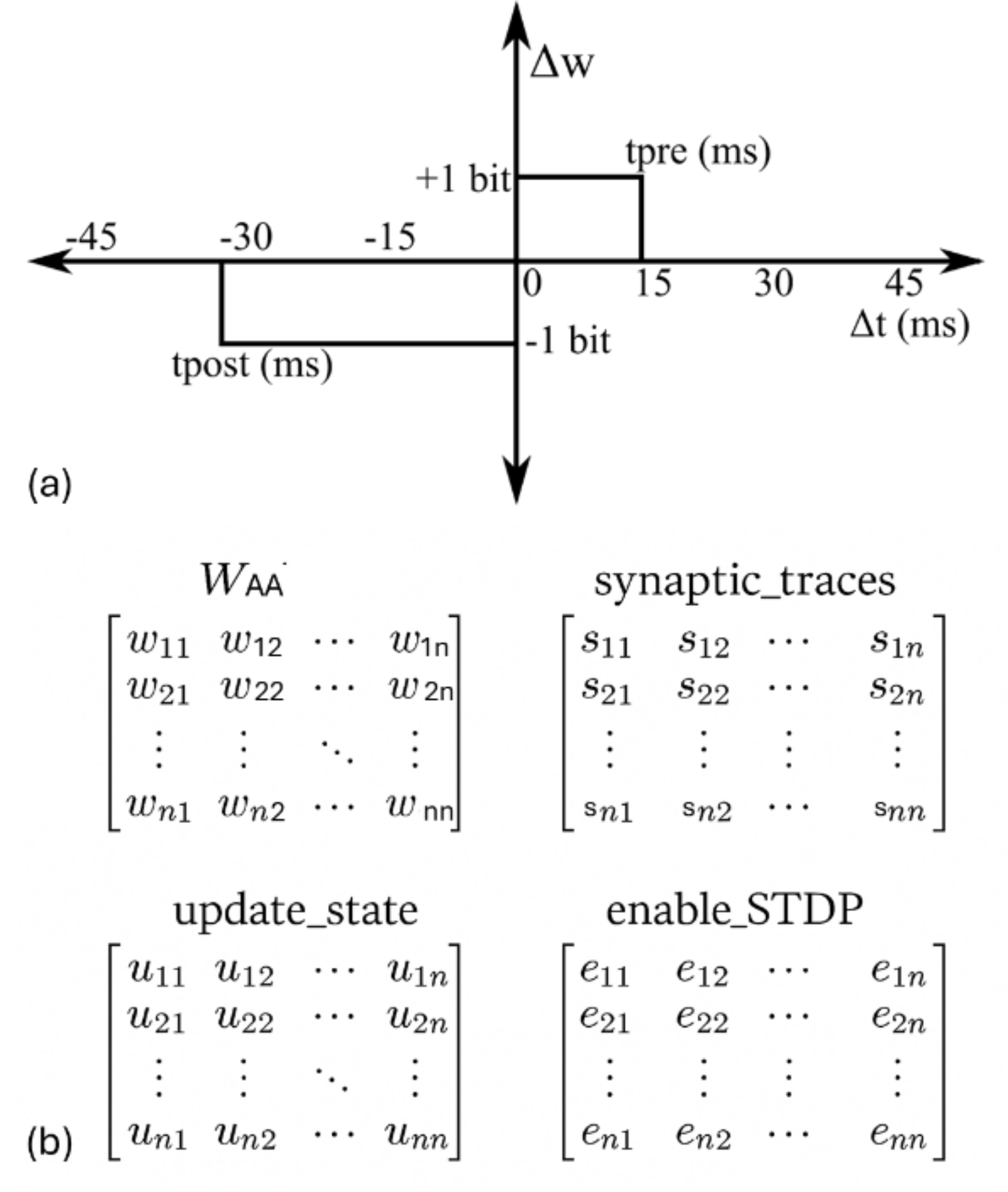

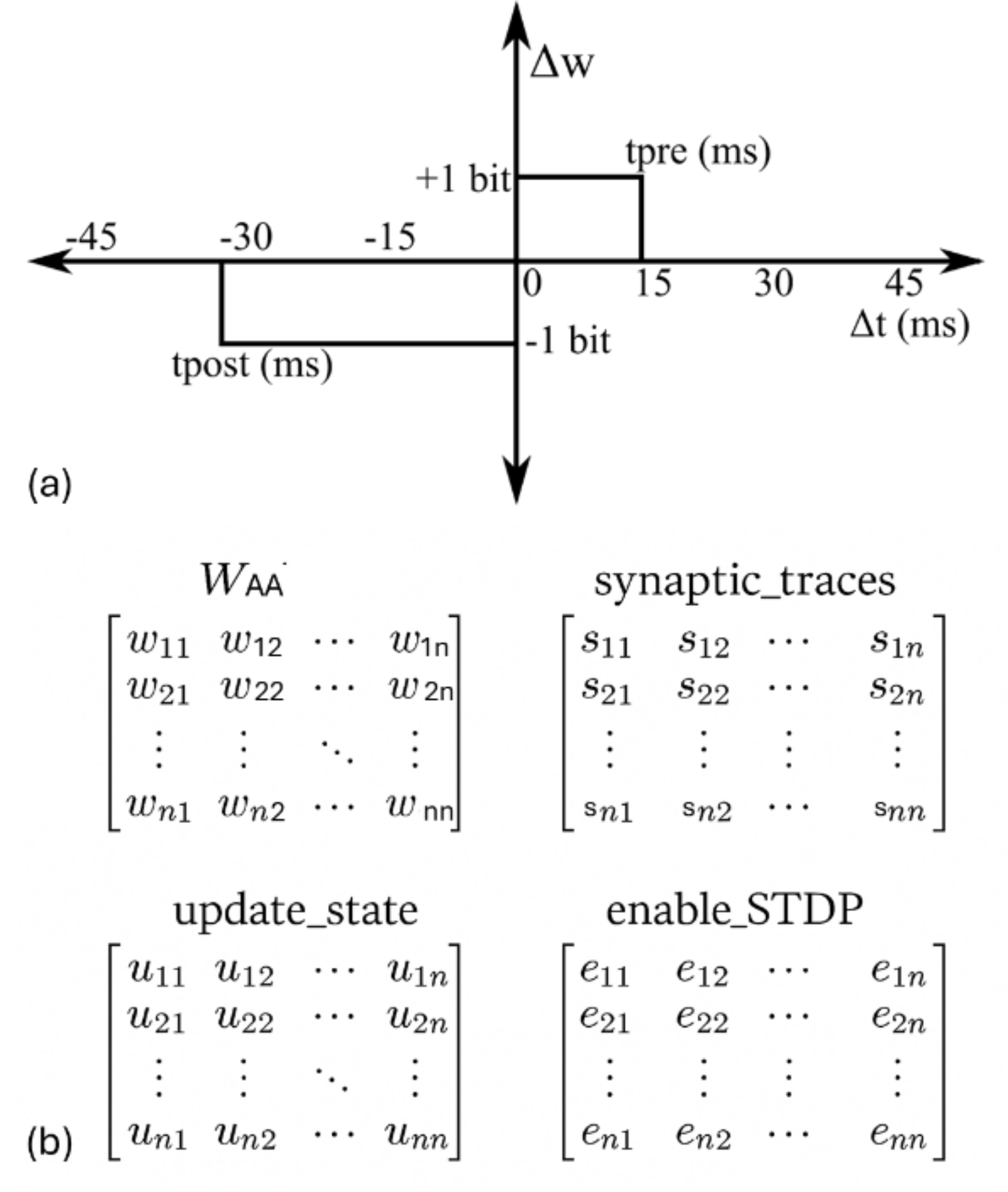

Synapses are modeled with exponential current responses and dynamic weights that evolve through STDP (Figure 2). The learning rule acts on the timing difference between presynaptic and postsynaptic spikes, adjusting synaptic strength according to whether a spike pairing is causal or acausal.

Figure 2: (a) Simplified STDP learning rule implemented on NeuroCoreX. (b) Internal variables stored in BRAM for tracking STDP updates. All matrices are of size N×N and stored in row-major order.
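The sketch below illustrates how a timing-based weight update and row-major storage can fit together. It uses a generic pair-based STDP rule with exponential spike traces, which is an assumption and not necessarily the simplified rule of Figure 2(a). The names `idx` and `stdp_step`, the constants, and the per-neuron trace vectors are hypothetical; the figure describes N×N internal matrices, and only the flattened weight array here follows that row-major layout.

```python
import numpy as np

# Hypothetical learning constants, chosen only for illustration.
A_PLUS, A_MINUS = 0.05, 0.06
TAU_TRACE = 20.0
W_MIN, W_MAX = 0.0, 1.0

def idx(pre, post, n):
    """Row-major address of entry (pre, post) in a flattened n x n matrix."""
    return pre * n + post

def stdp_step(w_flat, pre_trace, post_trace, pre_spikes, post_spikes, dt=1.0):
    """Apply one time step of trace-based pair STDP to a flattened weight matrix."""
    n = len(pre_trace)
    decay = np.exp(-dt / TAU_TRACE)
    pre_trace = pre_trace * decay
    post_trace = post_trace * decay
    w = w_flat.copy()
    for pre in range(n):
        for post in range(n):
            a = idx(pre, post, n)
            if post_spikes[post]:
                # Causal pairing: the presynaptic neuron fired earlier.
                w[a] += A_PLUS * pre_trace[pre]
            if pre_spikes[pre]:
                # Acausal pairing: the postsynaptic neuron fired earlier.
                w[a] -= A_MINUS * post_trace[post]
    w = np.clip(w, W_MIN, W_MAX)
    # Spikes from this step are added to the traces after the update.
    return w, pre_trace + pre_spikes, post_trace + post_spikes

# Neuron 0 fires first, neuron 1 fires one step later (a causal pairing).
n = 2
w = np.full(n * n, 0.5)
pre_t, post_t = np.zeros(n), np.zeros(n)
w, pre_t, post_t = stdp_step(w, pre_t, post_t, np.array([1, 0]), np.array([0, 0]))
w, pre_t, post_t = stdp_step(w, pre_t, post_t, np.array([0, 0]), np.array([0, 1]))
```

In this sketch the weight at `idx(0, 1, n)` grows after the causal pairing, while swapping the firing order would shrink it. The size of the change depends on how far the trace has decayed, which is how the timing difference enters the update.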

System Operation

The NeuroCoreX operating cycle begins with a configuration upload over UART. External stimuli are then injected as spikes in real time and processed through network dynamics defined by the weight matrices W_AA and W_in. The modular design relies on time-multiplexing, which allows neuron updates at biologically relevant timescales within the limits of the memory architecture.
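A rough software analogue of one time-multiplexed network step is sketched below. It assumes that the two weight matrices are an input-to-neuron matrix and a neuron-to-neuron (all-to-all) matrix, called `w_in` and `w_aa` here, with entries indexed as `[source, target]`. It also assumes a 1 ms emulation time step. The function names, decay factors, and threshold are hypothetical and are not taken from the NeuroCoreX design.

```python
import numpy as np

def cycles_per_neuron(clock_hz, step_us, n_neurons):
    """Clock cycles available per neuron when one update unit is shared."""
    return clock_hz * step_us // 1_000_000 // n_neurons

def network_step(v, i_syn, prev_spikes, in_spikes, w_in, w_aa,
                 decay_mem=0.95, decay_syn=0.8, v_thresh=1.0):
    """Update all neurons one after another, as a shared update unit would.

    `v` and `i_syn` are modified in place; spikes emitted in the previous
    step drive the recurrent input of this step.
    """
    n = len(v)
    new_spikes = np.zeros(n, dtype=int)
    for j in range(n):                       # one neuron per time slot
        drive = in_spikes @ w_in[:, j] + prev_spikes @ w_aa[:, j]
        i_syn[j] = i_syn[j] * decay_syn + drive
        v[j] = v[j] * decay_mem + i_syn[j]
        if v[j] >= v_thresh:
            new_spikes[j] = 1
            v[j] = 0.0                       # reset after a spike
    return new_spikes

# Two inputs, three neurons: input 0 excites neuron 1, neuron 1 excites neuron 2.
n_in, n = 2, 3
w_in = np.zeros((n_in, n)); w_in[0, 1] = 1.5
w_aa = np.zeros((n, n)); w_aa[1, 2] = 1.5
v, i_syn = np.zeros(n), np.zeros(n)
s0 = network_step(v, i_syn, np.zeros(n, dtype=int), np.array([1, 0]), w_in, w_aa)
s1 = network_step(v, i_syn, s0, np.array([0, 0]), w_in, w_aa)
```

Under the assumed 1 ms step, `cycles_per_neuron(100_000_000, 1000, 100)` gives 1000 clock cycles per neuron at 100 MHz with 100 neurons, which indicates why sequential updates can still keep pace with biological timescales. How many of those cycles the actual hardware spends on memory access is not covered by this sketch.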

Results

User Interface Flexibility

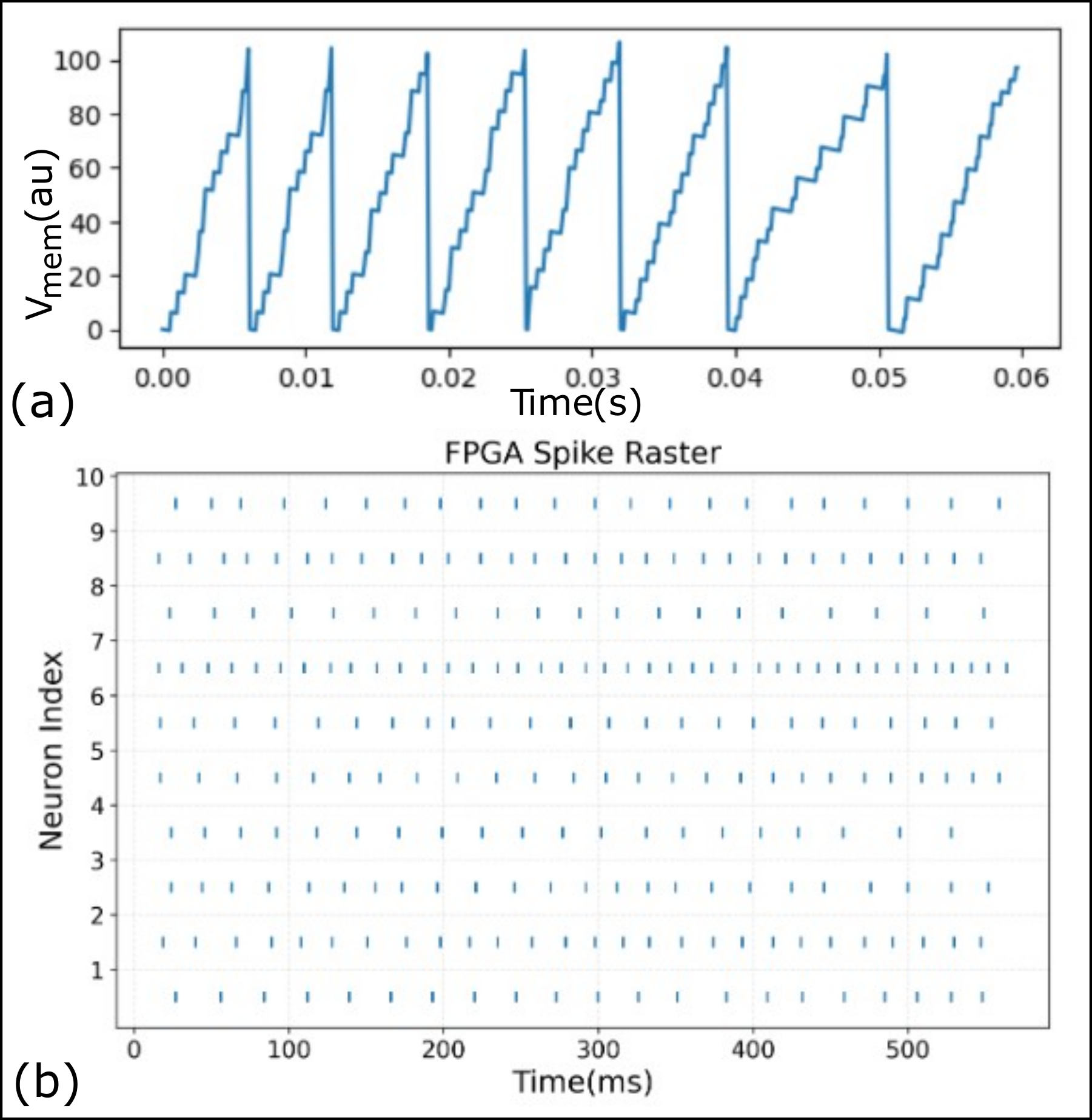

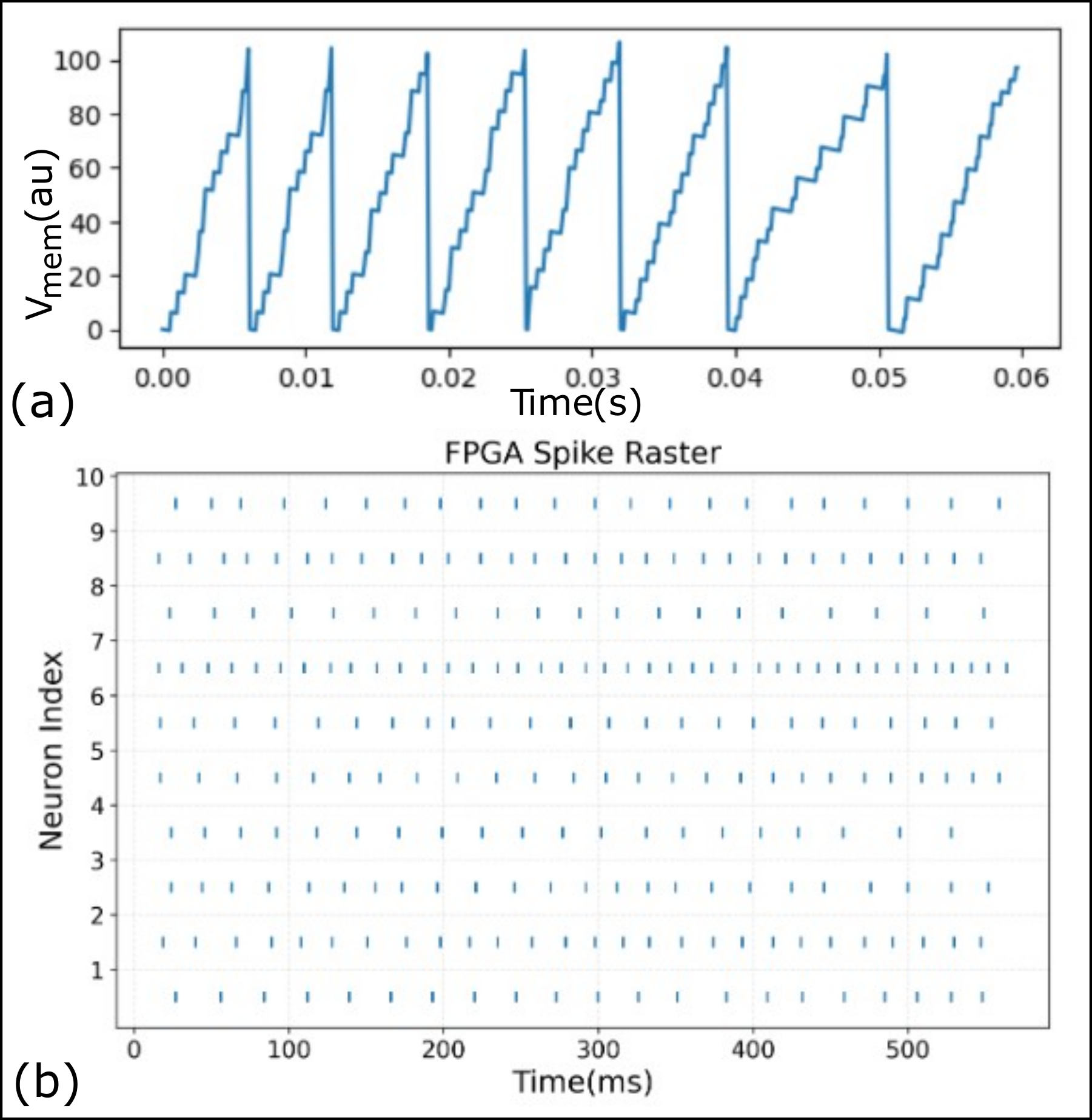

The Python-based interface supports spike train streaming, neuron state monitoring, and synaptic weight readback, which makes it practical to co-design software models and validate them in hardware (Figure 3).

Figure 3: (a) Membrane potential trace of a selected neuron, recorded from the FPGA during network emulation. (b) Spike raster plot showing activity of 10 neurons during a test run.

DIGITS Dataset

NeuroCoreX achieved 68% accuracy on digit classification with the DIGITS dataset. Comparison against software simulations confirmed the functional correctness of the hardware implementation: the hardware showed consistent dynamics, validating the neuron and synapse computations.

MicroSeer Dataset

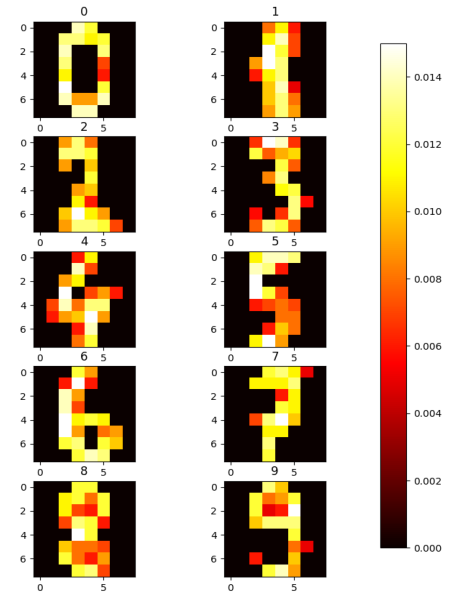

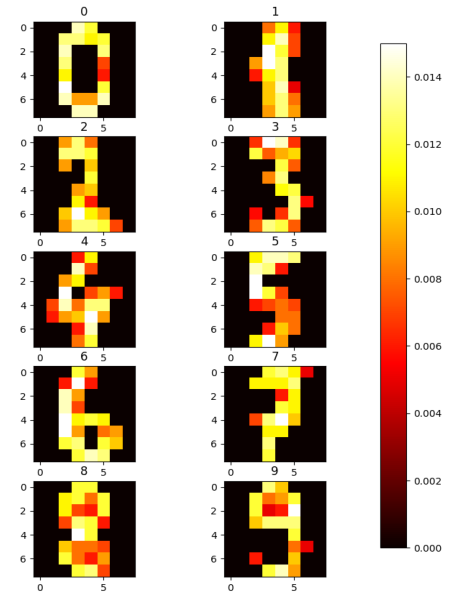

For graph-based learning on the MicroSeer dataset, NeuroCoreX performed node classification through STDP learning. Emulation was effective under fixed-point precision constraints, but the learning parameters had to be adjusted so that hardware learning outcomes matched the simulations (Figure 4).

Figure 4: Heat map of the trained weights of all 10 output neurons.
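One plausible reason learning parameters need retuning under fixed-point arithmetic is that small weight increments can round to zero. The sketch below shows the effect with an assumed 8 fractional bits; the actual bit widths used by NeuroCoreX are not stated here, and the helper names `to_fixed`, `to_float`, and `apply_update` are hypothetical.

```python
FRAC_BITS = 8              # assumed number of fractional bits (hypothetical)
SCALE = 1 << FRAC_BITS     # one unit in the last place equals 1/256

def to_fixed(x):
    """Quantize a real value to the nearest fixed-point integer code."""
    return int(round(x * SCALE))

def to_float(q):
    """Convert a fixed-point integer code back to a real value."""
    return q / SCALE

def apply_update(w_q, delta):
    """Add a quantized weight increment to a quantized weight."""
    return w_q + to_fixed(delta)

w_q = to_fixed(0.5)
# An increment well below half a step (1/512) is lost entirely.
stalled = apply_update(w_q, 0.001)
# An increment of about one step survives quantization.
moved = apply_update(w_q, 0.004)
```

Here `stalled` equals the original code, so repeating the update any number of times leaves the weight unchanged, whereas a floating-point simulation would keep accumulating it. Raising the learning rate or rescaling the weights are typical ways to bring the two back into agreement.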

Discussion and Conclusion

NeuroCoreX combines efficient neuromorphic computation with a flexible network architecture, and its on-chip learning supports the kind of dynamic adaptation that edge AI applications require. The current design is functional, but BRAM constraints limit scalability. Future iterations will explore multi-port memory architectures to increase memory bandwidth and support larger networks. Integrating more advanced learning models and neuron dynamics will broaden the platform’s applicability to other neuromorphic tasks.

In summary, NeuroCoreX bridges the gap between software simulation and hardware deployment, and its open-source release makes it accessible for continued research and education in neuromorphic computing. It provides a foundation for energy-efficient, adaptive AI systems aimed at advancing embedded intelligence.