- The paper introduces TISER, a framework that enhances temporal reasoning in language models by integrating timeline construction with iterative self-reflection.

- The methodology combines initial chain-of-thought reasoning, structured event timeline creation, and reflective verification to refine final answers.

- Experimental results show that TISER fine-tuned models outperform larger models on benchmarks like TGQA and TimeQA, reaching state-of-the-art performance.

Timeline Self-Reflection Framework for Enhanced Temporal Reasoning in LLMs

Introduction

The paper "Learning to Reason Over Time: Timeline Self-Reflection for Improved Temporal Reasoning in LLMs" (2504.05258) addresses a significant challenge in NLP: temporal reasoning. While LLMs have demonstrated proficiency in text generation and context understanding, their ability to reason about temporal sequences, event durations, and inter-temporal relationships remains less developed. This limitation affects applications such as question answering, historical analysis, and complex scheduling. The paper introduces Timeline Self-Reflection (TISER), a framework designed to enhance temporal reasoning capabilities in LLMs through a multi-stage process combining timeline construction with iterative self-reflection.

TISER Framework

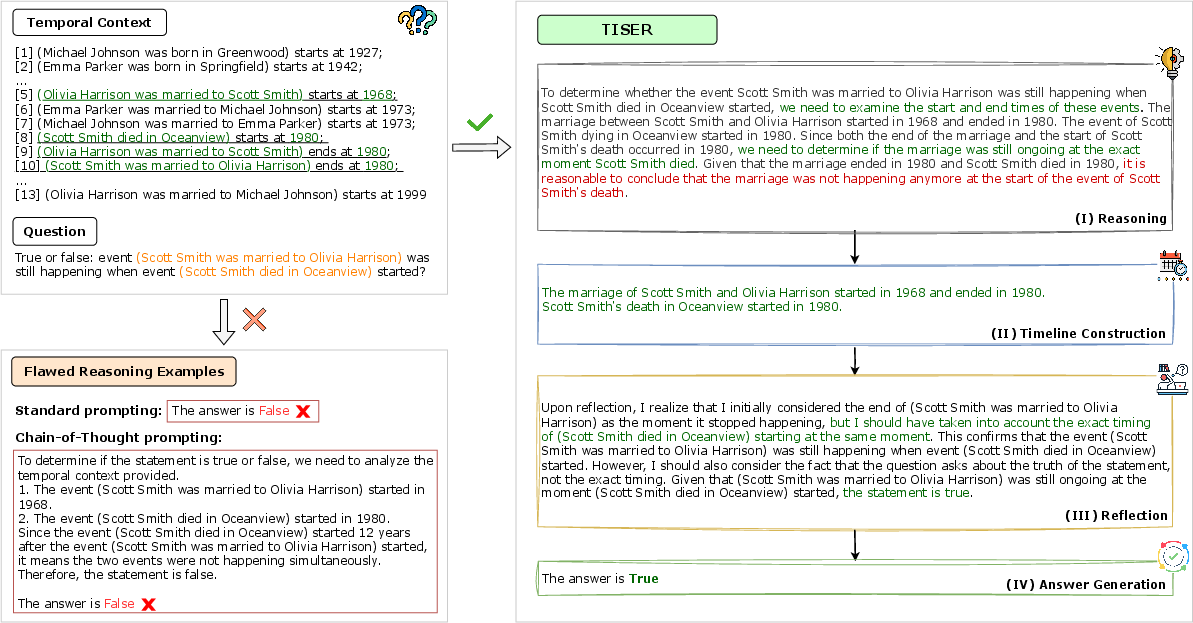

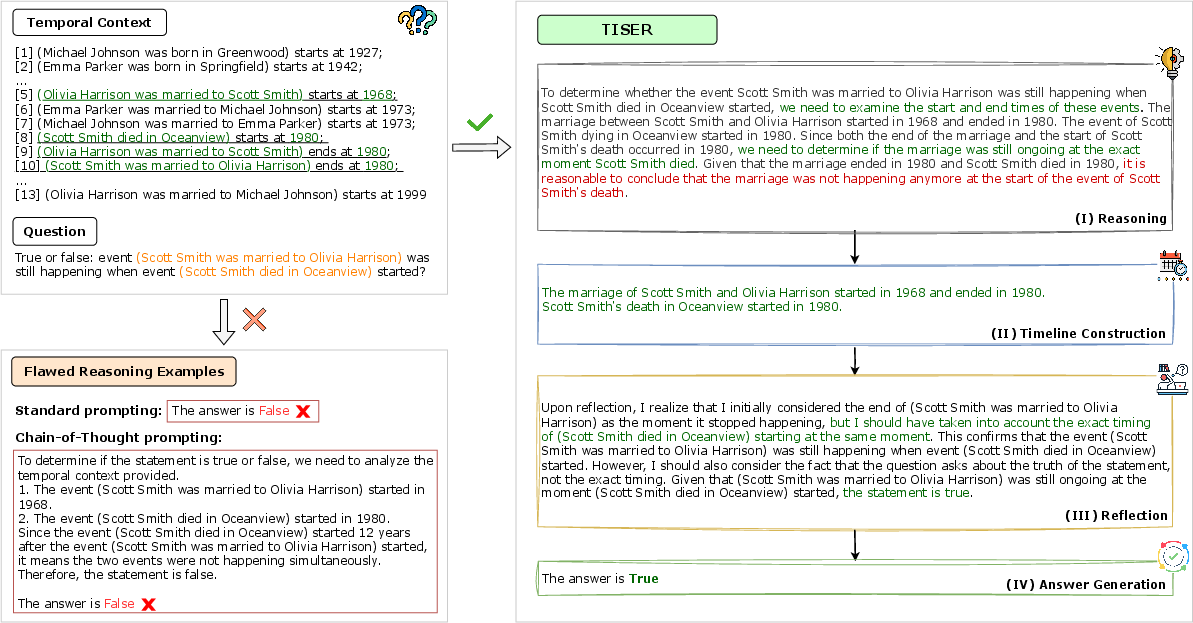

The TISER framework uses test-time compute scaling to lengthen the reasoning trace, enabling models to capture complex temporal dependencies. The framework has four stages: reasoning, timeline construction, reflection, and final answer generation.

Reasoning: Initially, the model generates a preliminary chain-of-thought reasoning trace based on a question and its temporal context.

Timeline Construction: The model organizes relevant temporal events into an ordered timeline, providing a structured representation of temporal sequences and dependencies.

Reflection: The model checks its initial reasoning trace against the constructed timeline, which lets it detect and correct errors.

Final Answer Generation: Using the refined reasoning and the timeline, the model produces its final answer.
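The stages above can be illustrated with a small deterministic analogue. In TISER the model itself writes every stage as text; the sketch below only mirrors the data flow (draft answer, ordered timeline, consistency check, final answer). The `Event` class, the helper names, and the example facts are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    label: str
    start: int  # first year the event holds
    end: int    # last year the event holds (inclusive)


def build_timeline(events):
    """Timeline construction: order the events chronologically."""
    return sorted(events, key=lambda e: (e.start, e.end))


def reflect(draft_answer, timeline, year):
    """Reflection: check a draft answer against the timeline for a given year.

    Returns the (possibly revised) answer and a short note on what happened.
    """
    active = [e.label for e in timeline if e.start <= year <= e.end]
    if draft_answer in active:
        return draft_answer, "draft is consistent with the timeline"
    revised = active[0] if active else None
    return revised, f"draft {draft_answer!r} contradicts the timeline; revised"


# Hypothetical context: two employment periods, given out of order.
events = [
    Event("Company B", 2011, 2015),
    Event("Company A", 2005, 2010),
]

# Question: "Where did the person work in 2012?"
draft = "Company A"                        # stage 1: a (wrong) initial answer
timeline = build_timeline(events)          # stage 2: ordered timeline
final, note = reflect(draft, timeline, 2012)  # stages 3 and 4
print(final, "|", note)
```

Here the ordered timeline exposes that the draft answer falls outside the queried year, so the reflection step replaces it. A learned model performs the same kind of check in free text rather than through explicit interval comparisons.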

Figure 1: High-level overview of TISER compared to other prompting strategies, highlighting the advantages of test-time compute scaling.

Dataset Construction

To adapt models to this format, the paper constructs a synthetic dataset that augments existing temporal reasoning benchmarks with detailed reasoning traces. Each trace includes an initial reasoning sequence, an ordered timeline of events, and a reflective verification. The dataset is filtered to keep only samples whose final answer matches the gold answer, which removes traces that end in an incorrect result.
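The filtering step can be sketched as follows. This is a minimal illustration that assumes a lenient exact match after normalization; the paper's actual matching criterion may differ, and the field names `predicted` and `gold` are hypothetical.

```python
import re


def normalize(text):
    """Lowercase, drop punctuation, and collapse whitespace (assumed criterion)."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def filter_traces(samples):
    """Keep only samples whose generated final answer matches the gold answer."""
    return [
        s for s in samples
        if normalize(s["predicted"]) == normalize(s["gold"])
    ]


# Hypothetical generated traces, reduced to their final answers.
samples = [
    {"id": 1, "predicted": "Paris.", "gold": "paris"},
    {"id": 2, "predicted": "1998", "gold": "1999"},
    {"id": 3, "predicted": "  John  Smith ", "gold": "John Smith"},
]

kept = filter_traces(samples)
print([s["id"] for s in kept])
```

Only the samples that survive this check would be used as fine-tuning data, so a trace with plausible reasoning but a wrong final answer is discarded.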

Experimental Results

The paper reports significant performance improvements from TISER, particularly for smaller open-source models, which surpass larger closed-weight models on challenging tasks. On benchmarks including TGQA, TempReason, and TimeQA, TISER fine-tuning achieves state-of-the-art performance, improving temporal reasoning accuracy while preserving performance on standard queries.

Model Fine-Tuning

Fine-tuning is performed with Low-Rank Adaptation (LoRA): models are trained on structured outputs that follow the TISER stages, so they learn to produce coherent reasoning, an accurate timeline, and an effective reflection step. The paper reports marked improvements across the evaluated datasets.
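As background on why LoRA keeps fine-tuning cheap, the sketch below compares the number of trainable parameters for one weight matrix under full fine-tuning and under a rank-r LoRA update, where the update is the product of two low-rank factors. The layer size and rank are illustrative values only and are not the paper's hyperparameters.

```python
def lora_param_counts(d_out, d_in, rank):
    """Trainable parameters for one d_out x d_in weight matrix.

    Full fine-tuning updates every entry of the matrix. LoRA freezes the
    matrix and trains two factors, B (d_out x rank) and A (rank x d_in).
    """
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return full, lora


# Illustrative sizes (assumed): a 4096 x 4096 projection with rank 16.
full, lora = lora_param_counts(4096, 4096, 16)
print(full, lora, lora / full)
```

With these assumed sizes the LoRA factors hold 131,072 parameters against 16,777,216 for the full matrix, under 1% of the original count.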

Implications and Future Directions

The TISER framework advances the temporal reasoning capabilities of LLMs and also improves performance on broader reasoning tasks, including out-of-distribution scenarios. The paper suggests directions for future work, such as exploring multi-modal reasoning and reducing the computational overhead of longer reasoning traces.

Conclusion

TISER improves temporal reasoning in LLMs by integrating timeline construction and self-reflection. Its structured, multi-stage process supports error correction and a better grasp of complex temporal relationships. The reported results suggest the approach is promising for strengthening the reasoning abilities of NLP models and open avenues for future research and applications.