A Comprehensive Study on Quantization Techniques for Large Language Models

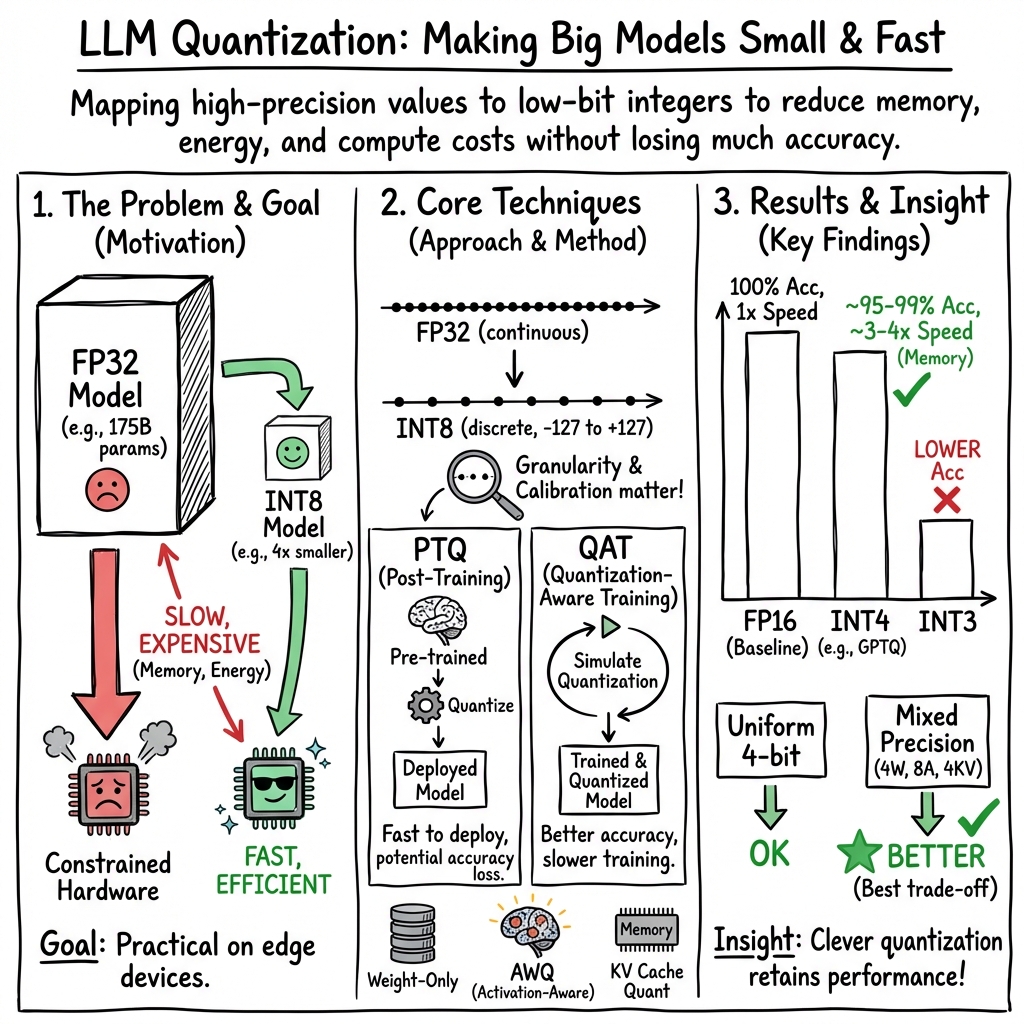

Abstract: LLMs have been extensively researched and used in both academia and industry since the rise in popularity of the Transformer model, which demonstrates excellent performance in AI. However, the computational demands of LLMs are immense, and the energy resources required to run them are often limited. For instance, popular models like GPT-3, with 175 billion parameters and a storage requirement of 350 GB, present significant challenges for deployment on resource-constrained IoT devices and embedded systems. These systems often lack the computational capacity to handle such large models. Quantization, a technique that reduces the precision of model values to a smaller set of discrete values, offers a promising solution by reducing the size of LLMs and accelerating inference. In this research, we provide a comprehensive analysis of quantization techniques within the machine learning field, with a particular focus on their application to LLMs. We begin by exploring the mathematical theory of quantization, followed by a review of common quantization methods and how they are implemented. Furthermore, we examine several prominent quantization methods applied to LLMs, detailing their algorithms and performance outcomes.
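The 350 GB figure quoted in the abstract can be checked with simple arithmetic. The sketch below assumes 16 bits per parameter and decimal gigabytes (1 GB = 10^9 bytes), which is the assumption consistent with that figure; the function name `model_size_gb` is illustrative and does not come from the paper. It counts weight storage only and ignores activations, the KV cache, and file-format overhead.

```python
def model_size_gb(num_params: float, bits_per_param: int) -> float:
    """Storage for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


GPT3_PARAMS = 175e9  # parameter count quoted in the abstract

# Assumed bit-widths for illustration: 16-bit baseline, then 8- and 4-bit.
for bits in (16, 8, 4):
    size = model_size_gb(GPT3_PARAMS, bits)
    print(f"{bits:>2}-bit weights: {size:.1f} GB")
```

Each halving of the bit-width halves the weight storage, which is why going from 16 to 4 bits gives roughly a 4× reduction.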

Explain it Like I'm 14

What this paper is about (in simple terms)

This paper looks at a way to shrink and speed up very large language models (LLMs) like GPT-3 so they can run faster, use less memory, and work on smaller devices. The key trick is called quantization. Think of it like switching from writing numbers with many decimal places to rounding them to fewer digits. You lose a tiny bit of precision, but everything becomes lighter and quicker.
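As a rough illustration of the rounding idea, the sketch below maps a handful of made-up weights onto 4-bit signed integers using a single scale factor, then maps them back. The function names and the sample values are hypothetical and chosen only for this example; this is a minimal sketch of symmetric "scale" quantization, not the procedure of any specific method in the paper.

```python
def quantize_symmetric(values, num_bits=4):
    """Map floats to signed integers using one scale factor and no offset."""
    qmax = 2 ** (num_bits - 1) - 1            # 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clip to the representable range.
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Recover approximate floats from the integers."""
    return [qi * scale for qi in q]


# Hypothetical weights, for illustration only.
weights = [0.82, -0.31, 0.05, -1.40, 0.67]
q, scale = quantize_symmetric(weights, num_bits=4)
restored = dequantize(q, scale)

for w, qi, r in zip(weights, q, restored):
    print(f"original {w:+.2f} -> integer {qi:+d} -> restored {r:+.2f}")
```

Each restored value differs from the original by at most half a step (`scale / 2`), which is the "tiny bit of precision" that gets lost.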

What questions the authors wanted to answer

- How does quantization work, mathematically and practically, for LLMs?

- Which quantization methods are commonly used, and what are their pros and cons?

- How do two big approaches—Post-Training Quantization (PTQ) and Quantization-Aware Training (QAT)—compare on LLMs?

- What do real methods like GPTQ (a PTQ method) and LLM-QAT (a QAT method) actually achieve?

How the research was done (methods explained simply)

This is a comprehensive study (a “big review”) with clear explanations and examples. The authors:

- Explain the math behind quantization using simple “mapping” ideas.

- Imagine you have a ruler marked in millimeters (many fine marks) and you switch to a ruler with fewer marks (say, centimeters only). You rescale and sometimes shift values so they fit neatly on the simpler ruler. “Scale” quantization only rescales; “affine” quantization rescales and also adds a small offset so the two ranges line up.

- Describe where and how finely to apply quantization.

- Per-layer vs. per-channel: One-size-fits-all for an entire layer (easier but less accurate) vs. tailoring for each channel (harder but more accurate). Think of buying the same T-shirt size for everyone (per-layer) versus picking sizes that fit each person (per-channel).

- Show how to “calibrate” before quantizing so accuracy doesn’t drop too much.

- This is like setting the brightness on a camera before taking a photo, so you don’t lose detail. They describe several ways to choose the right range:

- Global or Max: set the range from the largest absolute value you observe.

- KL divergence: match the shape of the original data’s distribution so you keep as much information as possible.

- Percentile: ignore rare extreme values (outliers) to get a tighter, more useful range.

- Compare major techniques:

- Post-Training Quantization (PTQ): Quantize after training. It’s simple and fast, but may lose more accuracy.

- Quantization-Aware Training (QAT): Pretend the model is quantized during training so it learns to handle it. Better accuracy, but costs more time and compute.

- Dive into two case studies:

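- GPTQ (a PTQ method): Compresses LLMs to 3–4 bits per weight with little accuracy loss. It quantizes weights one layer at a time and uses approximate second-order (curvature) information to adjust the remaining weights so they compensate for each rounding error.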

- LLM-QAT (a QAT method): Quantizes weights and activations and also compresses the “key-value (KV) cache” in transformers (the temporary memory used for attention), so the model runs faster and can handle longer inputs, even at very low precisions. It trains on data the model generates itself.
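The calibration step described in the list above can be sketched in a few lines. The example below applies affine quantization (scale plus offset) to synthetic data and compares two ways of choosing the range: the full min/max, and a percentile range that ignores rare outliers. All names, the data, the 4-bit setting, and the simple nearest-index percentile are assumptions made for illustration; KL-divergence calibration is not shown.

```python
import random


def affine_params(lo, hi, num_bits=8):
    """Scale and zero-point mapping [lo, hi] onto integers 0 .. 2^bits - 1."""
    qmax = 2 ** num_bits - 1
    lo, hi = min(lo, 0.0), max(hi, 0.0)       # keep 0.0 inside the range
    scale = (hi - lo) / qmax if hi > lo else 1.0
    zero_point = round(-lo / scale)
    return scale, zero_point, qmax


def fake_quantize(x, scale, zero_point, qmax):
    """Quantize then dequantize one value; out-of-range values are clipped."""
    q = round(x / scale) + zero_point
    q = max(0, min(qmax, q))
    return (q - zero_point) * scale


def percentile(sorted_vals, p):
    """Simple nearest-index percentile of a sorted list (p in [0, 100])."""
    idx = round(p / 100 * (len(sorted_vals) - 1))
    return sorted_vals[idx]


def mean_squared_error(data, lo, hi, num_bits):
    scale, zp, qmax = affine_params(lo, hi, num_bits)
    return sum((x - fake_quantize(x, scale, zp, qmax)) ** 2 for x in data) / len(data)


random.seed(0)
# Synthetic "activations": mostly small values plus two large outliers.
data = [random.gauss(0.0, 1.0) for _ in range(10_000)] + [25.0, -20.0]
s = sorted(data)

# Max calibration: the range covers every observed value, outliers included.
mse_max = mean_squared_error(data, s[0], s[-1], num_bits=4)
# Percentile calibration: the range covers the middle 99.8% and clips the rest.
mse_pct = mean_squared_error(data, percentile(s, 0.1), percentile(s, 99.9), num_bits=4)

print(f"max calibration MSE:        {mse_max:.4f}")
print(f"percentile calibration MSE: {mse_pct:.4f}")
```

On this data the percentile range gives the lower error, because the few clipped outliers cost less than spreading 16 levels over a range that is mostly empty. With more extreme outliers or a different error measure the comparison can go the other way, which is why calibration is treated as a choice rather than a fixed rule.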

What they found and why it matters

Here are the main takeaways, summarized in everyday language:

- Quantization can massively shrink models and speed them up.

- Example: GPT-3 has about 175 billion parameters and needs around 350 GB of storage at 16 bits per value. Reducing that to 4 bits per value cuts storage roughly 4×, to about 88 GB.

- Not all parts of a model are equally easy to quantize.

- Weights are often easier than activations. Some methods work around this: SmoothQuant shifts part of the quantization difficulty from activations to weights, and AWQ identifies the small fraction of weights that matter most and protects them from quantization error.

- How you apply quantization affects accuracy.

- Per-channel quantization (tailored per filter/channel) usually keeps accuracy better than per-layer.

- Good calibration (like KL divergence or smart percentiles) helps keep the important information.

- PTQ vs. QAT:

- PTQ (easier): GPTQ shows you can compress big models to 3–4 bits and still do well, often with big speedups. It’s great for quick deployment.

- QAT (more effort): LLM-QAT shows that when you go below 8 bits, training the model to “expect” quantization helps a lot—especially by quantizing the KV cache carefully and using synthetic (self-generated) data to avoid needing the original training set.

- Mixed precision often wins.

- Using different bit-widths for different parts (for example, 4-bit weights, 8-bit activations, and carefully chosen bits for the KV cache) can keep accuracy high while still getting big speed and memory gains.

- Special tricks for LLMs:

- Attention-aware methods and KV-cache quantization are key for speeding up and extending how much text a model can handle at once (longer context and bigger batch sizes).
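The per-channel takeaway above can be illustrated with a toy weight matrix whose two channels have very different magnitudes. The matrix, the 4-bit setting, and the helper names are hypothetical, and the sketch uses symmetric quantization with max-based ranges; it is meant to show the effect, not to reproduce any result from the paper.

```python
def quantize_dequantize(values, max_abs, num_bits=4):
    """Symmetric quantization to signed integers, then back to floats."""
    qmax = 2 ** (num_bits - 1) - 1
    scale = max_abs / qmax if max_abs > 0 else 1.0
    return [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]


def mse(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def flatten(matrix):
    return [v for row in matrix for v in row]


# Hypothetical weights: channel 0 is small, channel 1 is large.
weights = [
    [0.02, -0.05, 0.04, -0.01],
    [1.50, -2.00, 0.75, -1.25],
]

# Per-layer: one range shared by the whole matrix.
layer_max = max(abs(v) for v in flatten(weights))
per_layer = [quantize_dequantize(row, layer_max) for row in weights]

# Per-channel: each row (channel) gets its own range.
per_channel = [quantize_dequantize(row, max(abs(v) for v in row)) for row in weights]

err_layer = mse(flatten(weights), flatten(per_layer))
err_channel = mse(flatten(weights), flatten(per_channel))

print("channel 0, per-layer:  ", per_layer[0])
print("channel 0, per-channel:", per_channel[0])
print(f"overall MSE per-layer:   {err_layer:.6f}")
print(f"overall MSE per-channel: {err_channel:.6f}")
```

With a shared range, every weight in the small channel rounds to zero because the step size is set by the large channel. Giving each channel its own scale preserves the small channel at the cost of storing one extra scale per channel.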

Why this is important and what it could change

- Cheaper and greener AI: Smaller, faster models use less energy and cost less to run.

- More accessible AI: Quantized LLMs can run on a single GPU, laptops, or even some edge devices (like robots or smart sensors), enabling new applications in places with limited compute or power.

- Better user experiences: Faster responses, longer context windows (thanks to KV-cache compression), and the ability to handle larger batches can improve apps from chatbots to coding assistants.

- Future directions: Picking the “right mix” of precisions (which parts get how many bits) and improving calibration/training strategies will likely bring even better performance with even smaller models.

In short

This paper explains how to “round and resize” the numbers inside giant LLMs so they run faster and fit on smaller hardware, without losing much smarts. It shows when simple, after-the-fact shrinking (PTQ) is enough, and when you should train the model to handle shrinking from the start (QAT). With the right choices, you can get big speed and memory savings while keeping accuracy strong—opening the door to powerful AI almost anywhere.
