- The paper presents a new operator that uses attention mechanisms to generate data-dependent kernel maps, improving the solution of inverse PDE problems.

- It integrates a reproducing kernel Hilbert space approach to learn nonlocal interactions, effectively mitigating ill-posedness in physical models.

- Empirical results from subsurface flow and Mechanical MNIST benchmarks validate enhanced performance and interpretability across diverse physics tasks.

Nonlocal Attention Operator: Materializing Hidden Knowledge Towards Interpretable Physics Discovery

Introduction

The paper, "Nonlocal Attention Operator: Materializing Hidden Knowledge Towards Interpretable Physics Discovery" (2408.07307), addresses the growing interest in attention-based neural architectures for modeling complex physical systems. Although attention mechanisms have been explored extensively in natural language processing (NLP) and computer vision (CV), their application to physical modeling remains underdeveloped. The authors introduce the Nonlocal Attention Operator (NAO), an architecture that uses the attention mechanism to facilitate the discovery of invertible physical models through data-dependent kernel mappings. The architecture is intended to improve generalizability and interpretability in inverse partial differential equation (PDE) problems by enabling nonlocal interactions characterized by data-driven kernels.

Neural Operator Architecture and Theory

NAO uses the attention mechanism to build a double integral operator that enables interactions among spatial tokens, which helps overcome the ill-posedness and rank deficiency typically encountered in inverse PDE problems. The data-dependent kernel extracts global prior information from multiple systems and suggests the exploratory space in the form of a nonlinear kernel map. This addresses two challenges of traditional neural network models: the need for explicit regularization and the domain-specific nature of prior information. Because regularization is encoded in the architecture itself, NAO recasts the attention mechanism from a tool for capturing long-range dependencies into a framework for physics-driven discovery.
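As a rough illustration of the idea above, the following NumPy sketch builds a data-dependent kernel from softmax attention scores over spatial tokens and applies it as a discretized integral operator. It is a minimal sketch under stated assumptions, not the authors' implementation: the token layout (one token per grid point, holding the values of `d` input/output function pairs), the projection matrices `Wq` and `Wk`, and all function names are hypothetical, and the weights are random rather than trained.

```python
import numpy as np

def softmax(z, axis=-1):
    """Numerically stable softmax along the given axis."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def attention_kernel(U, F, Wq, Wk):
    """Build an (N, N) kernel matrix from d function pairs on an N-point grid.

    U, F: (N, d) arrays; row x is the token for grid point x.
    Wq, Wk: (2d, dk) projection matrices (hypothetical, untrained).
    """
    X = np.concatenate([U, F], axis=1)        # (N, 2d) spatial tokens
    Q, K = X @ Wq, X @ Wk                     # (N, dk) each
    scores = Q @ K.T / np.sqrt(Wq.shape[1])   # token-to-token interactions
    return softmax(scores, axis=-1)           # each row sums to 1

def apply_nonlocal(kernel, u, dx):
    """Riemann-sum approximation of f(x) = integral of K(x, y) u(y) dy."""
    return kernel @ u * dx

rng = np.random.default_rng(0)
N, d, dk = 32, 4, 8
x = np.linspace(0.0, 1.0, N, endpoint=False)
dx = 1.0 / N

# Assumed toy data: d sine inputs paired with d cosine outputs.
U = np.stack([np.sin(2 * np.pi * (k + 1) * x) for k in range(d)], axis=1)
F = np.stack([np.cos(2 * np.pi * (k + 1) * x) for k in range(d)], axis=1)
Wq = rng.normal(size=(2 * d, dk))
Wk = rng.normal(size=(2 * d, dk))

kernel = attention_kernel(U, F, Wq, Wk)
f_new = apply_nonlocal(kernel, np.ones(N), dx)
```

In this sketch the kernel is computed from the data pairs rather than stored as fixed weights, so passing a different `U` and `F` yields a different operator without changing `Wq` or `Wk`.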

The theoretical analysis shows that the attention mechanism within NAO gives rise to a data-adaptive reproducing kernel Hilbert space (RKHS), which forms a critical component in resolving the inverse problem. The kernel map constructed through attention serves as an inverse PDE solver, differing from conventional approaches by its independence from input functions and its reliance on the context offered by both functions in the data pairs.
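To make the rank deficiency concrete, here is a small NumPy sketch of a kernel-learning inverse problem with too few data pairs. It is an illustrative toy, not the paper's setup: the periodic 1D grid, the symmetric radial kernel with `R` lags, the choice of single Fourier modes as inputs, and the names `design_matrix` and `gamma_true` are all assumptions. Each single-mode input constrains only one direction of the kernel, so two data pairs give a rank-2 system for eight unknowns, and the minimum-norm least-squares solution fits the data without recovering the kernel.

```python
import numpy as np

N, R = 64, 8                                # grid points, kernel lags to learn
x = np.linspace(0.0, 2 * np.pi, N, endpoint=False)
dx = 2 * np.pi / N
gamma_true = np.exp(-0.5 * np.arange(R))    # hypothetical ground-truth kernel

def design_matrix(u):
    """Column r holds the contribution of kernel value gamma[r] to f."""
    cols = [u * dx]
    cols += [(np.roll(u, r) + np.roll(u, -r)) * dx for r in range(1, R)]
    return np.stack(cols, axis=1)           # (N, R)

# Two data pairs (d = 2): each input is a single Fourier mode.
inputs = [np.sin(x), np.sin(2 * x)]
A = np.concatenate([design_matrix(u) for u in inputs], axis=0)  # (2N, R)
f = A @ gamma_true                          # observed outputs

# Minimum-norm least-squares solution; lstsq also reports the numerical rank.
gamma_hat, _, rank, _ = np.linalg.lstsq(A, f, rcond=None)
fit_error = np.linalg.norm(A @ gamma_hat - f)
kernel_error = np.linalg.norm(gamma_hat - gamma_true)
```

Here `fit_error` is near zero while `kernel_error` is not, which is the sense in which the inverse problem is ill-posed: some form of prior or regularization is needed to select among the kernels that explain the data.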

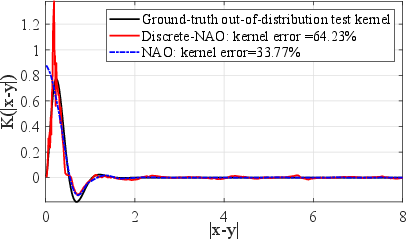

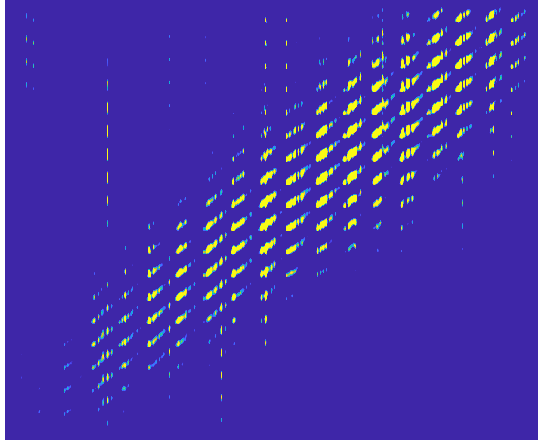

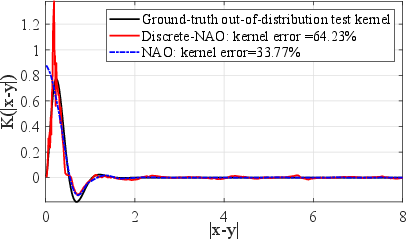

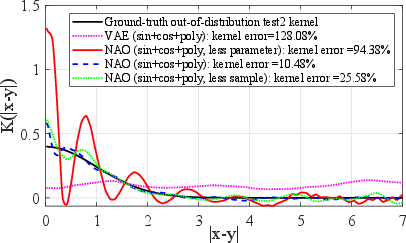

Figure 1: Results on radial kernel learning, when learning the test kernel from a small (d=30) number of data pairs: test on an ID task (left), and test on an OOD task (right).

Experimental Validation

Empirical evaluations demonstrate NAO’s superior performance over baseline neural models in scenarios demanding generalizability to varied data resolutions and system states. It outperforms both classical approaches and other contemporary models like Discrete-NAO and AFNO by providing a kernel map that effectively mitigates the ill-posedness of inverse problems in physical modeling.
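One reason a kernel-based operator can transfer across resolutions is that a continuous kernel can be re-discretized on any grid. The sketch below illustrates this general property of integral operators; it does not reproduce the paper's experiments. The Gaussian kernel `gamma`, the periodic unit interval, and the helper `nonlocal_apply` are assumptions chosen for the illustration.

```python
import numpy as np

def gamma(r, sigma=0.05):
    """Hypothetical smooth radial kernel (Gaussian bump)."""
    return np.exp(-0.5 * (r / sigma) ** 2)

def nonlocal_apply(u_fn, n):
    """Discretize f(x) = integral over [0, 1) of gamma(|x - y|) u(y) dy on n points."""
    x = np.arange(n) / n
    diff = np.abs(x[:, None] - x[None, :])
    dist = np.minimum(diff, 1.0 - diff)     # periodic distance
    return x, gamma(dist) @ u_fn(x) / n     # Riemann sum with dy = 1/n

def u_fn(x):
    return np.sin(2 * np.pi * x)

x_coarse, f_coarse = nonlocal_apply(u_fn, 64)
x_fine, f_fine = nonlocal_apply(u_fn, 128)

# Fine-grid points with even index coincide with the coarse grid.
max_gap = np.max(np.abs(f_fine[::2] - f_coarse))
```

The two discretizations agree at shared grid points up to quadrature error, so the same kernel function describes the operator at both resolutions.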

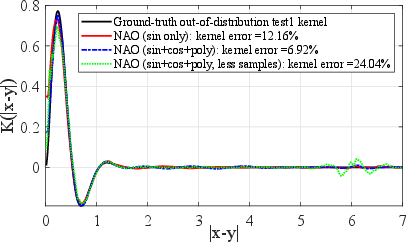

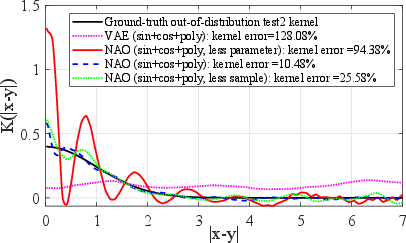

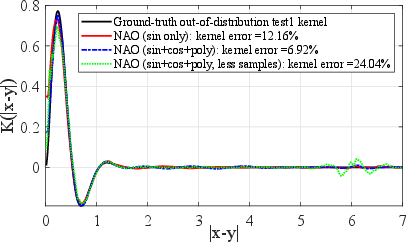

Figure 2: OOD test results on radial kernel learning, with diverse training tasks and d=302. OOD1 (left): true kernel $\gamma(r) = r(11 - r)\exp(-5r)\sin(6r)\,\mathbf{1}_{[0,11]}(r)$.

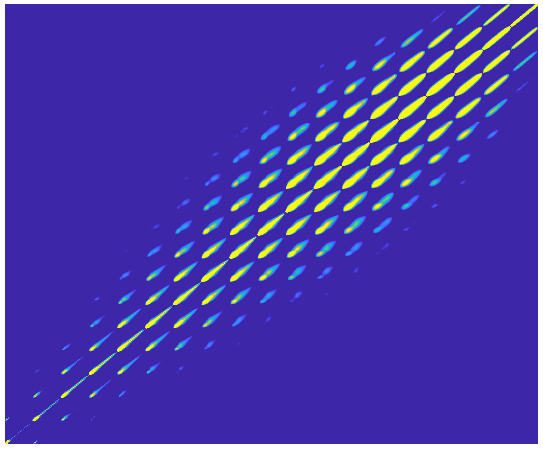
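For reference, the OOD1 test kernel quoted in the Figure 2 caption can be evaluated directly. This is a minimal sketch that reads the trailing factor in the caption as the indicator function of the interval [0, 11]; the function name `gamma_ood1` and the sampling grid are assumptions made for illustration.

```python
import numpy as np

def gamma_ood1(r):
    """Radial kernel r(11 - r) exp(-5r) sin(6r), set to zero outside [0, 11]."""
    r = np.asarray(r, dtype=float)
    inside = (r >= 0.0) & (r <= 11.0)       # assumed indicator of [0, 11]
    values = r * (11.0 - r) * np.exp(-5.0 * r) * np.sin(6.0 * r)
    return np.where(inside, values, 0.0)

# Sample the kernel on a grid that extends slightly past its support.
r = np.linspace(0.0, 12.0, 1201)
g = gamma_ood1(r)
```

The factor exp(-5r) makes the kernel decay quickly, so most of its oscillation is concentrated at small r.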

In particular, NAO was tested on tasks ranging from modeling 2D subsurface flows to learning heterogeneous material responses in Mechanical MNIST benchmarks. In subsurface flow tasks, it consistently achieved lower errors than its discrete counterparts, demonstrating its efficacy as a forward PDE solver. The kernel recovery experiments showed that NAO's learned kernels retain a meaningful physical interpretation, a critical feature for applications in scientific and engineering domains.

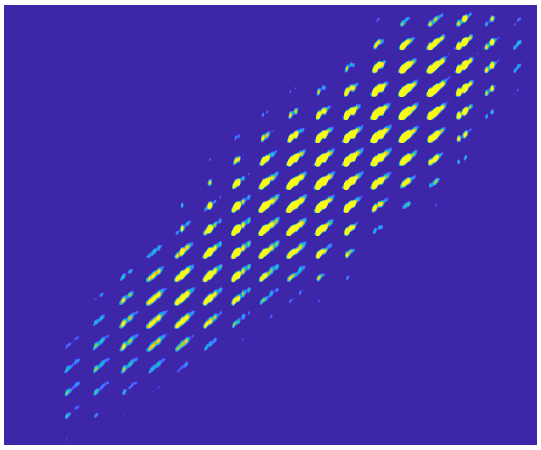

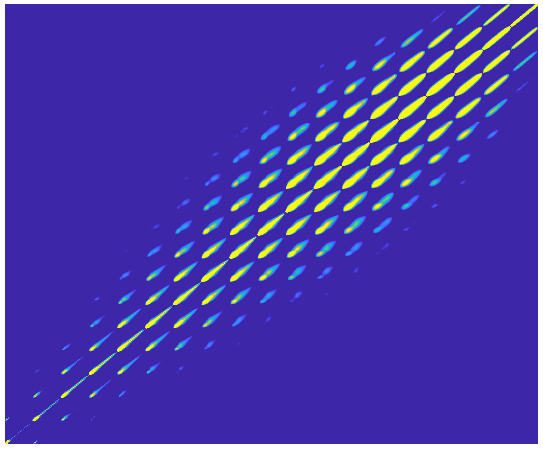

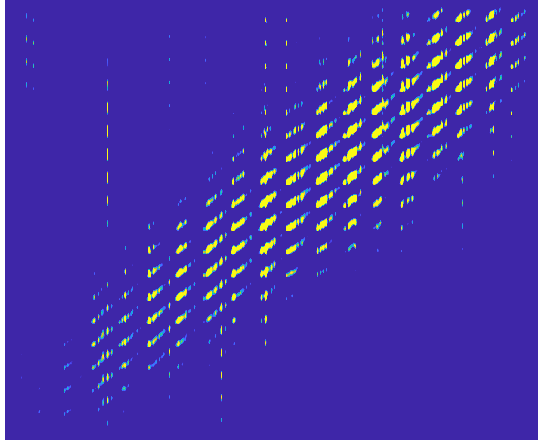

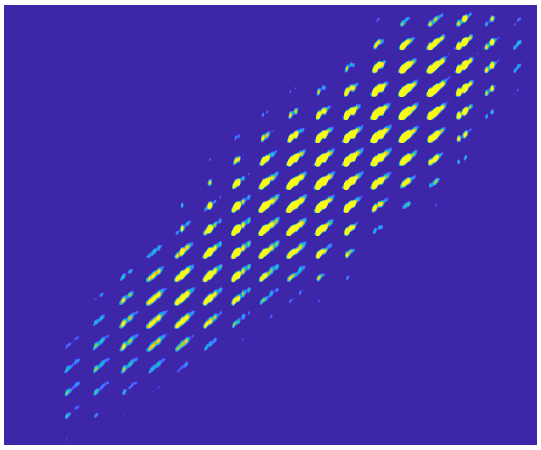

Figure 3: Kernel visualization in experiment 2, where the kernels correspond to the inverse of stiffness matrix: ground truth (left), test kernel from Discrete-NAO (middle), test kernel from NAO (right).

Moreover, in Mechanical MNIST, NAO showed a marked improvement in generalizability and interpretability over baseline models, especially when the data is sparse or out of distribution (OOD), further emphasizing its role as a tool for interpretable physics discovery.

Conclusion

This work proposes a neural operator architecture, the Nonlocal Attention Operator, that bridges the gap between forward and inverse PDE problems. NAO not only addresses ill-posedness in inverse PDE tasks more effectively than existing models but also advances the interpretability of learned physical systems. By establishing a foundation for using attention mechanisms within physics discovery, it lays the groundwork for future exploration of physics-based data-driven modeling. The integration of NAO into broader AI tasks could yield advances in both theoretical and practical aspects of modeling complex systems.